The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST - Posted in GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

The RTX Recap: A Brief Overview of the Turing RTX Platform

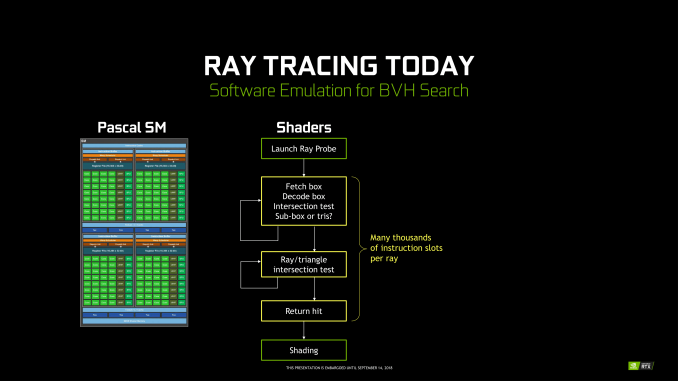

Overall, NVIDIA’s grand vision for real-time, hybridized raytracing graphics means that they needed to make significant architectural investments into future GPUs. The very nature of the operations required for ray tracing means that they don’t map to traditional SIMT execution especially well, and while this doesn’t preclude GPU raytracing via traditional GPU compute, that approach ends up being relatively inefficient. As a result, many of the architectural changes in Turing have gone into solving the raytracing problem, some of them exclusively so.

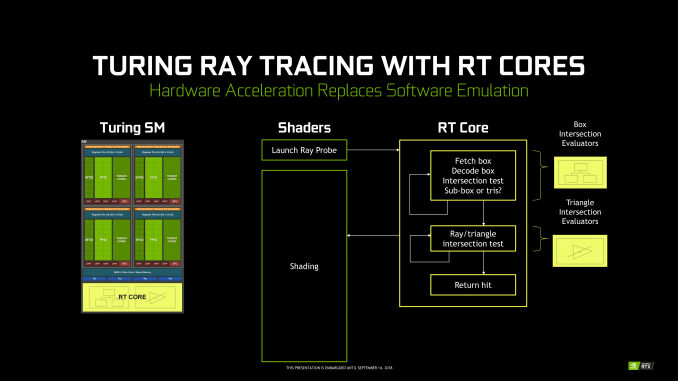

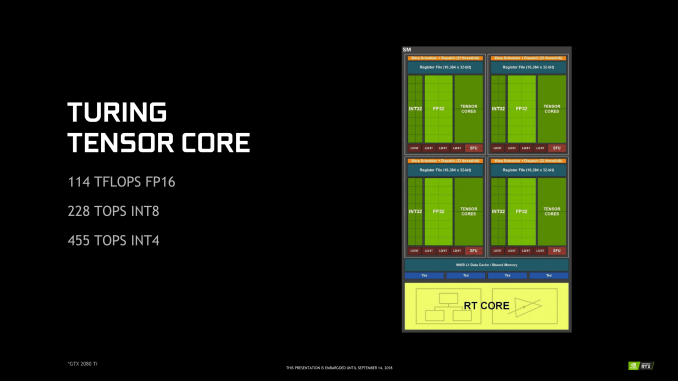

To that end, on the ray tracing front Turing introduces two new kinds of hardware units that were not present on its Pascal predecessor: RT cores and tensor cores. The former do pretty much exactly what it says on the tin, with RT cores accelerating the process of tracing rays and all the new algorithms involved in that. Meanwhile, the tensor cores are technically not related to the raytracing process itself; however, they play a key part in making raytraced rendering viable, along with powering some other features being rolled out with the GeForce RTX series.

Starting with the RT cores, these are perhaps NVIDIA’s biggest innovation, since efficient raytracing is a legitimately hard problem. For that reason, however, they’re also the piece of the puzzle that NVIDIA likes talking about the least. The company isn’t being entirely mum, thankfully, but we really only have a high-level overview of what they do, with the secret sauce being very much secret. Exactly how NVIDIA solved the coherence problems that dog normal raytracing methods, they aren’t saying.

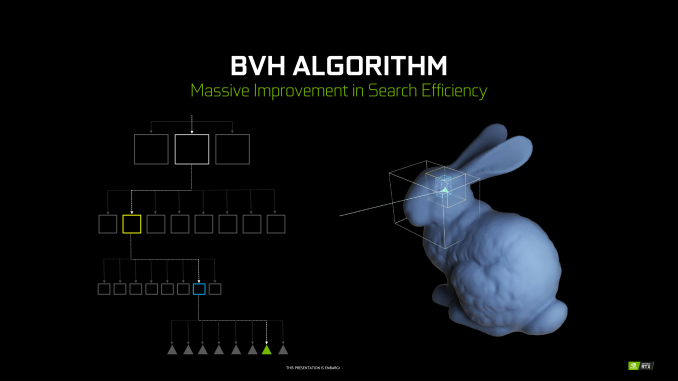

At a high level then, the RT cores can essentially be considered a fixed-function block that is designed specifically to accelerate Bounding Volume Hierarchy (BVH) searches. BVH is a tree-like structure used to store polygon information for raytracing, and it’s used here because it’s an innately efficient means of testing ray intersection. Specifically, by continuously subdividing a scene through ever-smaller bounding boxes, it becomes possible to identify the polygon(s) a ray intersects with in only a fraction of the time it would take to otherwise test all polygons.
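To make the idea concrete, here is a minimal software sketch of a BVH search. It is not NVIDIA's implementation, which is undisclosed; the median-split build, the leaf size of two, and the use of axis-aligned boxes as stand-ins for polygons are all assumptions chosen to keep the example short. It only illustrates why culling whole bounding boxes needs far fewer tests than checking every primitive.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec = Tuple[float, float, float]

@dataclass
class Box:
    lo: Vec
    hi: Vec

    def hit(self, origin: Vec, direction: Vec) -> bool:
        """Slab test: does the ray origin + t * direction (t >= 0) touch this box?"""
        t_near, t_far = 0.0, float("inf")
        for o, d, lo, hi in zip(origin, direction, self.lo, self.hi):
            if d == 0.0:
                if o < lo or o > hi:  # parallel to this slab and outside it
                    return False
                continue
            t0, t1 = sorted(((lo - o) / d, (hi - o) / d))
            t_near, t_far = max(t_near, t0), min(t_far, t1)
            if t_near > t_far:
                return False
        return True

@dataclass
class Node:
    bounds: Box
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    prims: Optional[List[int]] = None  # set on leaves: indices of primitives

def enclose(boxes: List[Box]) -> Box:
    """Smallest axis-aligned box containing all the given boxes."""
    lo = tuple(min(b.lo[a] for b in boxes) for a in range(3))
    hi = tuple(max(b.hi[a] for b in boxes) for a in range(3))
    return Box(lo, hi)

def build(prims: List[Box], ids: List[int], leaf_size: int = 2) -> Node:
    bounds = enclose([prims[i] for i in ids])
    if len(ids) <= leaf_size:
        return Node(bounds, prims=ids)
    # Split at the median centre along the longest axis of the bounds.
    axis = max(range(3), key=lambda a: bounds.hi[a] - bounds.lo[a])
    ids = sorted(ids, key=lambda i: prims[i].lo[axis] + prims[i].hi[axis])
    mid = len(ids) // 2
    return Node(bounds, build(prims, ids[:mid], leaf_size),
                build(prims, ids[mid:], leaf_size))

def traverse(node: Node, prims: List[Box], origin: Vec, direction: Vec,
             stats: dict) -> List[int]:
    stats["box_tests"] += 1
    if not node.bounds.hit(origin, direction):
        return []  # the whole subtree is skipped
    if node.prims is not None:
        stats["prim_tests"] += len(node.prims)
        return [i for i in node.prims if prims[i].hit(origin, direction)]
    return (traverse(node.left, prims, origin, direction, stats)
            + traverse(node.right, prims, origin, direction, stats))

# Hypothetical scene: 64 unit cubes in a row along x; cube i spans x in [2i, 2i + 1].
scene = [Box((2.0 * i, 0.0, 0.0), (2.0 * i + 1.0, 1.0, 1.0)) for i in range(64)]
root = build(scene, list(range(64)))
stats = {"box_tests": 0, "prim_tests": 0}
ray_origin, ray_dir = (10.5, 0.5, -5.0), (0.0, 0.0, 1.0)
hits = traverse(root, scene, ray_origin, ray_dir, stats)
brute_force = [i for i, box in enumerate(scene) if box.hit(ray_origin, ray_dir)]
print(hits, stats, "vs", len(scene), "brute-force tests")
```

The tree finds the same primitive as the brute-force loop, but only walks one root-to-leaf path and rejects every other subtree with a single box test each.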

NVIDIA’s RT cores then implement a hyper-optimized version of this process. What precisely that entails is NVIDIA’s secret sauce – in particular, how NVIDIA came to determine the best BVH variation for hardware acceleration – but in the end the RT cores are designed very specifically to accelerate this process. The end product is a collection of two distinct hardware blocks that constantly iterate through bounding box or polygon checks respectively to test intersection, to the tune of billions of rays per second and many times that number in individual tests. All told, NVIDIA claims that the fastest Turing parts, based on the TU102 GPU, can handle upwards of 10 billion ray intersections per second (10 GigaRays/second), ten times what Pascal can do if it follows the same process using its shaders.
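For a sense of scale, that peak figure can be turned into a rough per-pixel ray budget. The resolutions and the 60 fps target below are illustrative assumptions rather than figures from NVIDIA, and the calculation treats the 10 GigaRays/second claim as fully achievable, which real workloads are unlikely to manage.

```python
PEAK_RAYS_PER_SECOND = 10e9  # NVIDIA's claimed peak for TU102 (10 GigaRays/second)

def rays_per_pixel(width: int, height: int, fps: int,
                   rays_per_second: float = PEAK_RAYS_PER_SECOND) -> float:
    """Upper bound on rays available for each pixel of each frame."""
    return rays_per_second / (width * height * fps)

# Illustrative targets (assumptions): 4K and 1080p at 60 frames per second.
budget_4k60 = rays_per_pixel(3840, 2160, 60)
budget_1080p60 = rays_per_pixel(1920, 1080, 60)
print(f"4K60:    {budget_4k60:.1f} rays per pixel per frame")
print(f"1080p60: {budget_1080p60:.1f} rays per pixel per frame")
```

Even at the claimed peak, 4K at 60 fps leaves only about twenty rays per pixel per frame, and those have to cover every effect a game wants to trace.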

NVIDIA has not disclosed the size of an individual RT core, but they’re thought to be rather large. Turing implements just one RT core per SM, which means that even the massive TU102 GPU only has 72 of the units in its full configuration, and the RTX 2080 Ti ships with 68 of them enabled. Furthermore, because the RT cores are part of the SM, they’re tightly coupled to the SMs in terms of both performance and core counts. As NVIDIA scales down Turing for smaller GPUs by using a smaller number of SMs, the number of RT cores and the resulting raytracing performance scale down with it as well. So NVIDIA always maintains the same ratio of SM resources (though chip designs can vary elsewhere).

Along with developing a means to more efficiently test ray intersections, the other part of the formula for raytracing success in NVIDIA’s book is to eliminate as much of that work as possible. NVIDIA’s RT cores are comparatively fast, but even so, ray intersection testing is still moderately expensive. As a result, NVIDIA has turned to their tensor cores to carry them the rest of the way, allowing a moderate number of rays to still be sufficient for high-quality images.

In a nutshell, raytracing normally requires casting many rays from each and every pixel on a screen. This is necessary because it takes a large number of rays per pixel to generate the “clean” look of a fully rendered image. Conversely, if you test too few rays, you end up with a “noisy” image where there’s significant discontinuity between pixels because there haven’t been enough rays cast to resolve the finer details. But since NVIDIA can’t actually test that many rays in real time, they’re doing the next-best thing and faking it, using neural networks to clean up an image and make it look more detailed than it actually is (or at least, started out at).
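The link between ray count and noise can be shown with a toy Monte Carlo experiment. This is only a sketch under simple assumptions: every pixel has the same hypothetical "true" brightness of 0.3, and each ray is a random sample that either reaches a light or does not. Real renderers are far more involved, but the statistical behaviour is the same in kind.

```python
import random

def render_pixel(true_value: float, rays: int, rng: random.Random) -> float:
    """Average `rays` random samples, each lit with probability `true_value`."""
    lit = sum(1 for _ in range(rays) if rng.random() < true_value)
    return lit / rays

def rms_noise(rays: int, pixels: int = 500, true_value: float = 0.3,
              seed: int = 0) -> float:
    """Root-mean-square error across many pixels that should all look identical."""
    rng = random.Random(seed)
    squared_error = sum(
        (render_pixel(true_value, rays, rng) - true_value) ** 2
        for _ in range(pixels)
    )
    return (squared_error / pixels) ** 0.5

noise = {rays: rms_noise(rays) for rays in (1, 4, 64, 1024)}
for rays, error in noise.items():
    print(f"{rays:5d} rays per pixel -> RMS error {error:.3f}")
```

The error shrinks roughly with the square root of the ray count, so halving the noise costs about four times as many rays, which is why brute force gets expensive so quickly.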

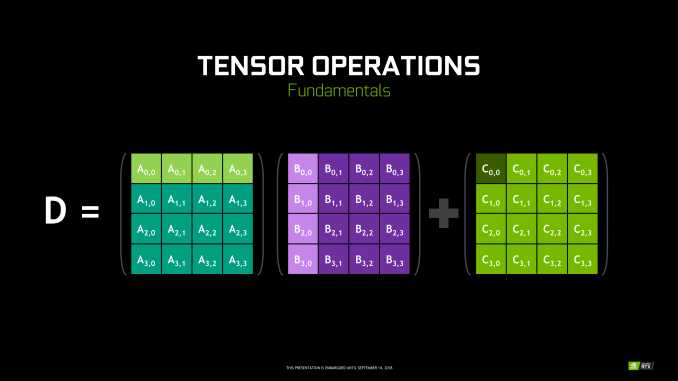

To do this, NVIDIA is tapping their tensor cores. These cores were first introduced in NVIDIA’s server-only Volta architecture, and can be thought of as a CUDA core on steroids. Fundamentally they’re just a much larger collection of ALUs inside a single core, with much of their flexibility stripped away. So instead of getting the highly flexible CUDA core, you end up with a massive matrix multiplication machine that is incredibly optimized for processing thousands of values at once (in what’s called a tensor operation). Turing’s tensor cores, in turn, double down on what Volta started by supporting newer, lower precision methods than the original that in certain cases can deliver even better performance while still offering sufficient accuracy.
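As a rough illustration of what a tensor operation looks like, the sketch below performs a fused matrix multiply-accumulate, D = A × B + C, on small square matrices. The 4×4 tile size follows NVIDIA's public descriptions of Volta rather than anything stated in this article, and the plain Python loops say nothing about how the hardware schedules the work or which precisions it uses.

```python
from typing import List

Matrix = List[List[float]]

def matmul_accumulate(a: Matrix, b: Matrix, c: Matrix) -> Matrix:
    """Return D = A x B + C for square matrices of the same size."""
    n = len(a)
    return [
        [c[i][j] + sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]

# Hypothetical 4x4 inputs: multiplying by the identity and adding ones
# should simply add 1 to every element of A.
a = [[float(4 * row + col + 1) for col in range(4)] for row in range(4)]
identity = [[1.0 if row == col else 0.0 for col in range(4)] for row in range(4)]
ones = [[1.0] * 4 for _ in range(4)]

d = matmul_accumulate(a, identity, ones)
multiply_adds = 4 * 4 * 4  # one multiply-add per (i, j, k) triple
print(d[0], multiply_adds)
```

A single 4×4 tile already involves 64 multiply-adds with a completely fixed data flow, which is the kind of rigid but dense work that suits a stripped-down block of ALUs.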

As for how this applies to ray tracing, the strength of tensor cores is that tensor operations map extremely well to neural network inferencing. This means that NVIDIA can use the cores to run neural networks which will perform additional rendering tasks. In this case, a neural network denoising filter is used to clean up the noisy raytraced image in a fraction of the time (and with a fraction of the resources) it would take to actually test the necessary number of rays.

No Denoising vs. Denoising in Raytracing

The denoising filter itself is essentially an image resizing filter on steroids, and can (usually) produce an image of similar quality to brute force ray tracing by algorithmically guessing what details should be present among the noise. However, getting it to perform well means that it needs to be trained, and thus it’s not a generic solution. Rather, developers need to take part in the process, training a neural network based on high quality, fully rendered images from their game.

Overall there are 8 tensor cores in every SM, so like the RT cores, they are tightly coupled with NVIDIA’s individual processor blocks. Furthermore this means tensor performance scales down with smaller GPUs (smaller SM counts) very well. So NVIDIA always has the same ratio of tensor cores to RT cores to handle what the RT cores coarsely spit out.
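The fixed per-SM ratios make the scaling easy to work out. The sketch below uses the one RT core and eight tensor cores per SM described in this article; the 36-SM configuration is a purely hypothetical smaller GPU used to show the scaling, not a reference to a specific product.

```python
RT_CORES_PER_SM = 1      # from the article
TENSOR_CORES_PER_SM = 8  # from the article

def unit_counts(sm_count: int) -> dict:
    """RT and tensor core totals implied by a given number of SMs."""
    return {
        "sms": sm_count,
        "rt_cores": sm_count * RT_CORES_PER_SM,
        "tensor_cores": sm_count * TENSOR_CORES_PER_SM,
    }

full_tu102 = unit_counts(72)        # full TU102 SM count
hypothetical_half = unit_counts(36)  # illustrative smaller GPU (assumption)

for gpu in (full_tu102, hypothetical_half):
    ratio = gpu["tensor_cores"] // gpu["rt_cores"]
    print(gpu, f"-> {ratio} tensor cores per RT core")
```

Whatever the SM count, the ratio of tensor cores to RT cores stays at eight to one, so denoising throughput shrinks in step with raytracing throughput.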

Deep Learning Super Sampling (DLSS)

Now with all of that said, unlike the RT cores, the tensor cores are not fixed function hardware in a traditional sense. They’re quite rigid in their abilities, but they are programmable nonetheless. And for their part, NVIDIA wants to see just how many different fields and tasks they can apply their extensive neural network and AI hardware to.

Games of course don’t fall under the umbrella of traditional neural network tasks, as these networks lean towards consuming and analyzing images rather than creating them. Nonetheless, along with denoising the output of their RT cores, NVIDIA’s other big gaming use case for their tensor cores is what they’re calling Deep Learning Super Sampling (DLSS).

DLSS follows the same principle as denoising – how can post-processing be used to clean up an image – but rather than removing noise, it’s about restoring detail. Specifically, the goal is to approximate the image quality benefits of anti-aliasing – itself a roundabout way of rendering at a higher resolution – without the high cost of actually doing the work. When all goes right, according to NVIDIA, the result is comparable to an anti-aliased image without the high cost.

Under the hood, the way this works is up to the developers, in part because they’re deciding how much work they want to do with regular rendering versus DLSS upscaling. In the standard mode, DLSS renders at a lower input sample count – typically half as many samples, though this may depend on the game – and then infers a result, which at the target resolution is of similar quality to a Temporal Anti-Aliasing (TAA) result. A DLSS 2X mode also exists, where the input is rendered at the final target resolution and then combined with a larger DLSS network. TAA is arguably not a very high bar to set – it’s also a hack of sorts that seeks to avoid doing real overdrawing in favor of post-processing – however NVIDIA is setting out to resolve some of TAA’s traditional inadequacies with DLSS, particularly blurring.
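As a quick worked example of what the two modes mean for rendering load, the sketch below counts input samples per frame. The 4K target resolution and the function name are illustrative assumptions, and the factor of two is only the typical figure quoted for the standard mode, so real games may differ.

```python
def dlss_input_samples(width: int, height: int, reduction: float = 2.0) -> int:
    """Samples rendered before inference; reduction=1.0 models the DLSS 2X mode."""
    return round(width * height / reduction)

target = (3840, 2160)  # illustrative target resolution (assumption)

standard_mode = dlss_input_samples(*target)               # typical 2x reduction
dlss_2x_mode = dlss_input_samples(*target, reduction=1.0)  # full target resolution

print(f"standard DLSS: {standard_mode:,} samples per frame")
print(f"DLSS 2X:       {dlss_2x_mode:,} samples per frame")
```

In the standard mode the shaders only render about half the samples of the target image, which is where the performance headroom comes from, while DLSS 2X keeps the full rendering cost and spends the tensor cores purely on quality.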

Now it should be noted that DLSS has to be trained per-game; it isn’t a one-size-fits-all solution. This is done in order to apply a unique neural network that’s appropriate for the game at hand. In this case the neural networks are trained using 64x SSAA images, giving the networks a very high quality baseline to work against.

Nonetheless, of NVIDIA’s two major gaming use cases for the tensor cores, DLSS is by far the more easily implemented. Developers need only do some basic work to add NVIDIA’s NGX API calls to a game – essentially adding DLSS as a post-processing stage – and NVIDIA will do the rest as far as neural network training is concerned. So DLSS support will be coming out of the gate very quickly, while raytracing (and especially meaningful raytracing) utilization will take much longer.

In sum, then, the upcoming game support aligns with the following table.

| Planned NVIDIA Turing Feature Support for Games | |||||

| Game | Real Time Raytracing | Deep Learning Supersampling (DLSS) | Turing Advanced Shading | ||

| Ark: Survival Evolved | Yes | ||||

| Assetto Corsa Competizione | Yes | ||||

| Atomic Heart | Yes | Yes | |||

| Battlefield V | Yes | ||||

| Control | Yes | ||||

| Dauntless | Yes | ||||

| Darksiders III | Yes | ||||

| Deliver Us The Moon: Fortuna | Yes | ||||

| Enlisted | Yes | ||||

| Fear The Wolves | Yes | ||||

| Final Fantasy XV | Yes | ||||

| Fractured Lands | Yes | ||||

| Hellblade: Senua's Sacrifice | Yes | ||||

| Hitman 2 | Yes | ||||

| In Death | Yes | ||||

| Islands of Nyne | Yes | ||||

| Justice | Yes | Yes | |||

| JX3 | Yes | Yes | |||

| KINETIK | Yes | ||||

| MechWarrior 5: Mercenaries | Yes | Yes | |||

| Metro Exodus | Yes | ||||

| Outpost Zero | Yes | ||||

| Overkill's The Walking Dead | Yes | ||||

| PlayerUnknown Battlegrounds | Yes | ||||

| ProjectDH | Yes | ||||

| Remnant: From the Ashes | Yes | ||||

| SCUM | Yes | ||||

| Serious Sam 4: Planet Badass | Yes | ||||

| Shadow of the Tomb Raider | Yes | ||||

| Stormdivers | Yes | ||||

| The Forge Arena | Yes | ||||

| We Happy Few | Yes | ||||

| Wolfenstein II | Yes | ||||

337 Comments

Qasar - Wednesday, September 19, 2018

just checked a local store, the lowest priced 2080 card, a gigabyte rtx 2080 is $1080, and thats canadian dollars... the most expensive RTX card ..EVGA RTX 2080 Ti XC ULTRA GAMING 11GB is $1700 !!!! again that's canadian dollars !! to make things worse.. that's PRE ORDER pricing, and have this disclaimer : Please note that the prices of the GeForce RTX cards are subject to change due to FX rate and the possibility of tariffs. We cannot guarantee preorder prices when the stock arrives - prices will be updated as needed as stock become available.

even if i could afford these cards.. i think i would pass.. just WAY to expensive.. id prob grab a 1080 or 1080ti and be done with it... IMO... nvida is being a tad bit greedy just to protect and keep its profit margins.. but, they CAN do this.. cause there is no one else to challenge them...

PopinFRESH007 - Wednesday, September 19, 2018

would you care to share the bill of materials for the tu102 chip? Since you seem to suggest you know the production costs, and therefor know the profit margin which you suggest is a bit greedy.

Qasar - Wednesday, September 19, 2018

popin.. all i am trying to say is nvidia doesnt have to charge the prices they are charging.. but they CAN because there is nothing else out there to provide competition...

tamalero - Thursday, September 20, 2018

Please again explain how the cost of materials is somehow relevant on the price performance argument for consumers?

Chips like R600, Fermi, similars.. were huge.. did it matter? NO, did performance matter? YES.

PopinFRESH007 - Thursday, September 20, 2018

I specifically replied to Qasar's claim "nvida is being a tad bit greedy just to protect and keep its profit margins.. but, they CAN do this" which is baseless unless they have cost information to know what their profit margins are.

Nagorak - Thursday, September 20, 2018

Nvidia is a public company. You can look up their profit margin and it is quite high.

Qasar - Thursday, September 20, 2018

PopinFRESH * sigh * i guess you will never understand the concept of " no competition, we can charge what ever we want, and people will STILL buy it cause it is the only option if you want the best or fastest " it has NOTHING to do with knowing cost info or what a companies profit margins are... but i guess you will never understand this....

just4U - Thursday, September 20, 2018

Ofcourse their being greedy. Since they saw their cards flying off the shelfs at 50% above MSRP earlier this year they know people are willing to pay.. so their pushing the limit. As they normally do.. this isn't new with Nvidia. Not sure why any are defending them.. or getting excessively mad about it. (..shrug)

mapesdhs - Wednesday, September 26, 2018

Effectively, gamers are complaining about themselves. Previous cards sold well at higher prices, so NVIDIA thinks it can push up the pricing further, and reduce product resources at the same time even when the cost is higher. If the cards do sell well then gamers only have themselves to blame, in which case nothing will change until *gamers* stop making it fashionable and cool to have the latest and greatest card. Likewise, if AMD does release something competitive, whether via price, performance or both, then gamers need to buy the damn things instead of just exploiting the lowered NVIDIA pricing as a way of getting a cheaper NVIDIA card. There's no point AMD even being in this market if people don't buy their products even when it does make sense to do so.

BurntMyBacon - Thursday, September 20, 2018

@PopinFRESH007: "would you care to share the bill of materials for the tu102 chip? Since you seem to suggest you know the production costs, and therefor know the profit margin which you suggest is a bit greedy."

You have a valid point. It is hard to establish a profit margin without a bill of materials (among other things). We don't have a bill of materials, but let me establish some knowns so we can better assess.

Typically, most supporting components on a graphics card are pretty similar to previous generation cards. Often times different designs used to do the same function are a cost cutting measure. I'm going to make an assumption that power transistors, capacitors, output connectors, etc. will remain nominally the same cost. So I'll focus on differences. The obvious is the larger GPU. This is not the first chip made on this process (TSMC 12nm) and the process appears to be a half node, so defect rates should be lower and the wafer cost should be similar to TSMC 14nm. On the other hand, the chip is still very large which will likely offset some of that yield gain and reduce the number of chips fabricated per wafer. Pascal was first generation chip produced on a new full node process (TSMC 14nm), but quite a bit smaller, so yields may have been higher and there were more chips fabricated per wafer. Also apparent is the newer GDDR6 memory tech, which will naturally cost more than GDDR5(X) at the moment, but clearly not as much as HBM2. The chips also take more power, so I'd expect a marginal increase for power related circuitry and cooling relative to pascal. I'd expect about the same cost here as for maxwell based chips, given similar power requirements.

From all this, it sounds like graphics cards based on Turing chips will cost more to manufacture than Pascal equivalents. It is probably not unreasonable to suggest that a TU106 may have a similar cost bill of materials to a GP102 with the note that the cost to produce the GP102 has most certainly dropped since introduction.

I'll leave the debate on how greedy or not this is to others.