The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST - Posted in

- Monitors

- Displays

- Asus

- NVIDIA

- G-Sync

- PG27UQ

- ROG Swift PG27UQ

- G-Sync HDR

Display Uniformity and Power Usage

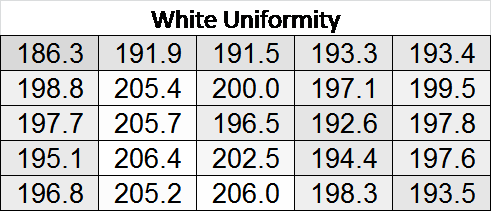

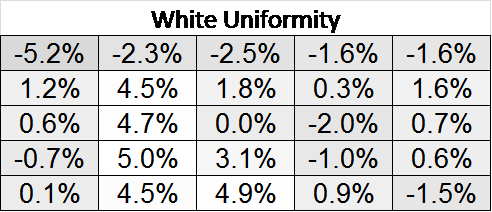

Especially with localized dimming, the PG27UQ's panel uniformity was solid. In the default out-of-the-box configuration (FALD enabled), the maximum local difference of white levels is around 5% of the center brightness.
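As a rough illustration of how a figure like "5% of the center brightness" is derived, the sketch below computes the largest deviation from the center reading across a grid of measurement points. The function name and the 3x3 grid values are hypothetical placeholders, not measurements from this review.

```python
def max_deviation_percent(grid):
    """Largest absolute deviation from the center reading, as a % of center."""
    rows, cols = len(grid), len(grid[0])
    center = grid[rows // 2][cols // 2]
    return max(abs(value - center) / center * 100 for row in grid for value in row)


# Hypothetical white-level readings in cd/m^2.
# These are placeholder values for illustration, NOT data from this review.
sample_white_levels = [
    [196.0, 201.0, 198.0],
    [203.0, 200.0, 197.0],
    [190.0, 199.0, 194.0],
]

if __name__ == "__main__":
    deviation = max_deviation_percent(sample_white_levels)
    print(f"Max deviation from center: {deviation:.1f}%")
```

The same calculation applies to black levels, though small absolute differences there translate into much larger percentages.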

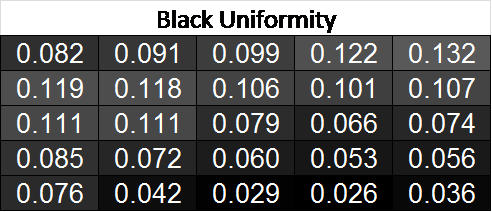

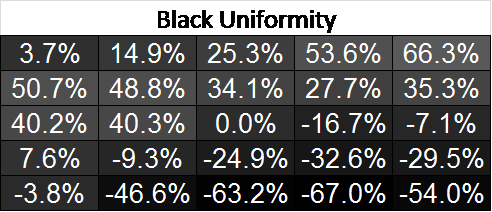

Black levels were more uneven, with a general trend of brighter blacks towards the top and darker blacks towards the bottom.

Color reproduction across the panel, however, is excellent, with differences between parts of the display being virtually imperceptible.

Power Use

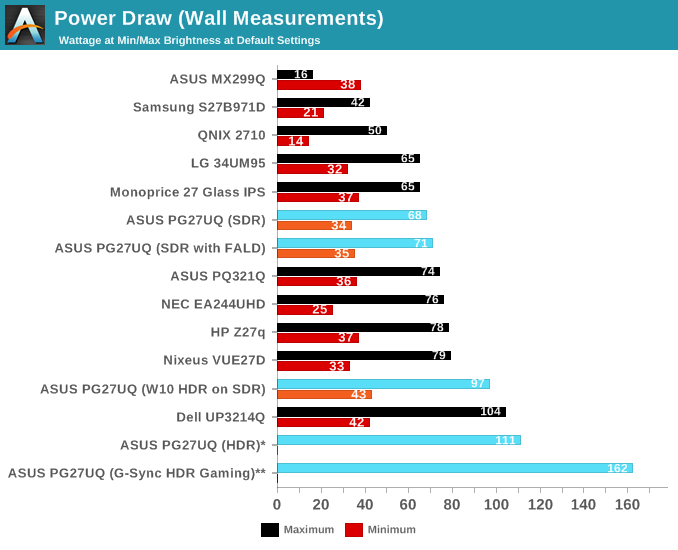

As far as power usage goes, the PG27UQ has been specified for a peak 180W with HDR on. Stand-by was specified at 0.5W, but in practice the monitor often idled for some time around 27W in the power-off mode, before finally going to sub-1W power draw. The fan is on at that time, and it's not exactly clear how this state is governed.

With G-Sync and HDR enabled, power draw during gaming typically peaked at around 150W to 160W, with a maximum of 162W observed. In SDR mode, power consumption is more-or-less in line with typical monitors.

91 Comments


crimsonson - Tuesday, October 2, 2018 - link

Someone can correct me, but AFAIK there is no native 10 bit RGB support in games. A 10-bit panel would at least improve its HDR capabilities.

FreckledTrout - Tuesday, October 2, 2018 - link

The games that say they are HDR should be using 10-bit color as well.

a5cent - Wednesday, October 3, 2018 - link

Any game that supports HDR uses 10 bpp natively. In fact, many games use 10 bpp internally even if they don't support HDR officially.

That's why an HDR monitor must support the HDR10 video signal (that's the only way to get the 10 bpp frame from the GPU to the monitor).

OTOH, a 10 bit panel for gaming typically won't provide a perceptible improvement. In practice, 8bit+FRC is just as good. IMHO it's only for editing HDR still imagery where real 10bit panels provide benefits.

GreenReaper - Thursday, October 4, 2018 - link

I have to wonder if 8-bit+FRC makes sense on the client side for niche situations like this, where the bandwidth is insufficient to have full resolution *and* colour depth *and* refresh rate at once?

You run the risk of banding or flicker, but frankly that's similar for display FRC, and I imagine if the screen was aware of what was happening it might be able to smooth it out. It'd essentially improve the refresh rate at the expense of some accuracy. Which some gamers might well be willing to take. Of course that's all moot if the card can't even play the game at the target refresh rate.

GreenReaper - Thursday, October 4, 2018 - link

By client, of course, I mean card - it would send an 8-bit signal within the HDR colour gamut and the result would be a frequency-interpolated output hopefully similar to that possible now - but by restricting at the graphics card end you use less bandwidth, and hopefully it doesn't take too much power.

a5cent - Thursday, October 4, 2018 - link

"I have to wonder if 8-bit+FRC makes sense on the client side for niche situations like this"

It's an interesting idea, but I don't think it can work.

The core problem is that the monitor then has no way of knowing if in such an FRC'ed image, a bright pixel next to a darker pixel correctly describes the desired content, or if it's just an FRC artifact.

Two neighboring pixels of varying luminance affect everything from how to control the individual LEDs in a FALD backlight, to when and how strongly to overdrive pixels to reduce motion blur. You can't do these things in the same way (or at all) if the luminance delta is merely an FRC artifact.

As a result, the GPU would have to control everything that is currently handled by the monitor's controller + firmware, because only it has access to the original 10 bpp image. That would be counter productive, because then you'd also have to transport all the signaling information (for the monitor's backlighting and pixels) from the GPU to the monitor, which would require far more bandwidth than the 2 bpp you set out to save 😕

What you're thinking about is essentially a compression scheme to save bandwidth. Even if it did work, employing FRC in this way is lossy and nets you, at best, a 20% bandwidth reduction.

However, the DP1.4(a) standard already defines a compression scheme. DSC is lossless and nets you about 30%. That would be the way to do what you're thinking of.

Particularly 4k DP1.4 gaming monitors are in dire need of this. That nVidia and Acer/Asus would implement chroma subsampling 4:2:2 (which is also a lossy compression scheme) rather than DSC is shameful. 😳

I wonder if nVidia's newest $500+ g-sync module is even capable of DSC. I suspect it is not.
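The bandwidth figures in this thread can be sanity-checked with a little arithmetic. The sketch below is an approximation under stated assumptions: it counts active pixels only and ignores blanking overhead, and it uses the DisplayPort 1.4 HBR3 payload rate of 25.92 Gbit/s (4 lanes at 8.1 Gbit/s with 8b/10b encoding). The function and table names are made up for illustration.

```python
# DisplayPort 1.4 (HBR3): 4 lanes x 8.1 Gbit/s, 8b/10b encoding leaves 80% for payload.
DP14_PAYLOAD_GBPS = 4 * 8.1 * 0.8  # about 25.92 Gbit/s

# Average bits per pixel for each signal format.
BITS_PER_PIXEL = {
    "RGB 10 bpc": 30,          # 3 channels x 10 bits
    "RGB 8 bpc": 24,           # 3 channels x 8 bits (what GPU-side FRC would send)
    "YCbCr 4:2:2 10 bpc": 20,  # full-res luma plus half-res chroma
}


def pixel_rate_gbps(width, height, refresh_hz, bits_per_pixel):
    """Active-pixel data rate in Gbit/s. Blanking is ignored (simplifying assumption)."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def report(width=3840, height=2160, refresh_hz=144):
    """Return (format, Gbit/s, fits within DP1.4 payload) for each signal format."""
    rows = []
    for name, bpp in BITS_PER_PIXEL.items():
        rate = pixel_rate_gbps(width, height, refresh_hz, bpp)
        rows.append((name, rate, rate <= DP14_PAYLOAD_GBPS))
    return rows


def saving_vs_rgb10(bits_per_pixel):
    """Fractional bandwidth saved relative to 10 bpc RGB."""
    return 1 - bits_per_pixel / BITS_PER_PIXEL["RGB 10 bpc"]


if __name__ == "__main__":
    for name, rate, fits in report():
        print(f"{name:20s} {rate:6.2f} Gbit/s  fits DP1.4: {fits}")
```

Under these assumptions, dropping from 10 to 8 bits per channel saves 20%, matching the figure above, while 4:2:2 subsampling saves about a third, which is the only one of the three that brings 4K at 144Hz under the DP1.4 limit without DSC.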

Zoolook - Friday, October 5, 2018 - link

DSC is not lossless, it's "visually lossless", which means that most of the time you shouldn't perceive a difference compared to an uncompressed stream.

I'll reserve my judgement until I see some implementations.

Impulses - Tuesday, October 2, 2018 - link

That Asus PA32UC wouldn't get you G-Sync or refresh rates over 60Hz and it's still $975 tho... It sucks that the display market is so fractured and people who use their PCs for gaming as well as content creation can't get anything approaching perfect or even ideal at times.

There's a few 4K 32" displays with G-Sync or Freesync but they don't go past 60-95Hz AFAIK and then you don't get HDR, it's all a compromise, and has been for years due to competing adaptive sync standards, lagging connection standards, a lagging GPU market, etc etc.

TristanSDX - Tuesday, October 2, 2018 - link

Soon there will be new PG27UC, with mini led backlight (10000 diodes vs 384) and with DSC

DanNeely - Tuesday, October 2, 2018 - link

Eventually, but not soon. AUO is the only panel company working on 4k/high refresh/HDR; and they don't have anything with more dimming zones on their public road map (which is nominally about a year out for their production, add a few months to it for monitor makers to package them and get them to retail once they start volume production of panels).