Samsung Kicks Off Mass Production of 8 TB NF1 SSDs with PCIe 3.0 x4 Interface [updated]

by Anton Shilov on June 21, 2018 9:00 AM EST

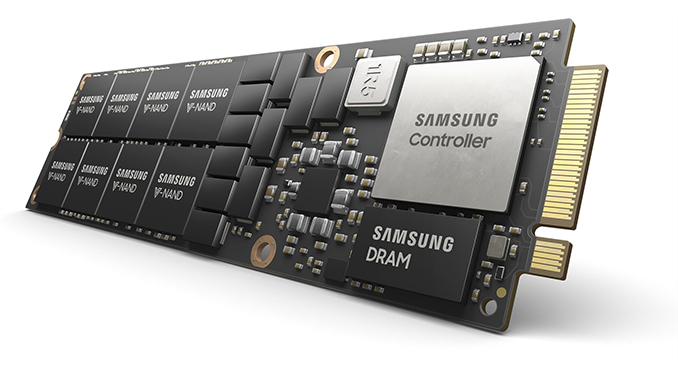

Samsung this week announced that it had started mass production of its new 8 TB NF1 SSDs. Samsung has been demonstrating prototype NF1 SSDs for slightly less than a year now, so it is not surprising that some of its customers are now ready to adopt them. The larger NF1 form factor allows for drives with double the capacity of M.2 SSDs, and they are aimed primarily at data-intensive analytics and virtualization applications that require higher performance and capacity than what M.2 can provide.

Update 6/22: Samsung has updated its statements regarding the NF1 SSDs. The drives are based on the Phoenix controller and do not use a PCIe 4.0 interface; they rely on a more traditional PCIe Gen 3 x4 interface.

Samsung’s NF1 SSDs are based on the company’s 512 GB packages, each comprising a 16-layer stack of 256 Gb TLC V-NAND memory devices, as well as the company’s proprietary controller accompanied by 12 GB of LPDDR4 memory. Prototype NF1 drives used the Phoenix controllers that Samsung already uses for client SSDs, and early on Friday the company confirmed that the commercial NF1 SSDs are indeed PM983 drives powered by the Phoenix controller. From a performance point of view, the NF1 drives deliver sequential read speeds of 3100 MB/s and sequential write speeds of 2000 MB/s. When it comes to random performance, the drives are capable of up to 500K read IOPS and 50K write IOPS. As for endurance, Samsung rates the drives for 1.3 DWPD.

| Samsung NF1 (PM983) SSD Specification | |
|---|---|
| Capacity | 8 TB |
| Controller | Phoenix |
| NAND Flash | 256 Gb TLC V-NAND |
| Form-Factor, Interface | NF1, PCIe 3.0 x4 |
| Sequential Read | 3100 MB/s |
| Sequential Write | 2000 MB/s |
| Random Read | 500K IOPS |
| Random Write | 50K IOPS |
| Pseudo-SLC Caching | unknown, likely not |
| DRAM Buffer | 12 GB LPDDR4 |
| TCG Opal Encryption | No |
| Power Consumption, Active Read | ? W |
| Power Consumption, Active Write | ? W |
| Power Consumption, Idle | ? mW |
| Warranty | 3 years |
| MTBF | 2 million hours |
| TBW | 11388 TB |
| Price | ? |
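The TBW figure in the table appears consistent with the 1.3 DWPD rating applied over the three-year warranty period. The short sketch below works through that arithmetic; the assumption that the rating is computed over exactly the warranty period with 365-day years is ours, not something Samsung states, and the function name is purely illustrative.

```python
def rated_tbw(capacity_tb, dwpd, warranty_years, days_per_year=365):
    """Total terabytes written implied by a drive-writes-per-day rating.

    Assumes (our assumption) that the rating applies over the whole
    warranty period and that a year has 365 days.
    """
    return capacity_tb * dwpd * days_per_year * warranty_years


capacity_tb = 8      # from the spec table
dwpd = 1.3           # from Samsung's endurance rating
warranty_years = 3   # from the spec table

tbw = rated_tbw(capacity_tb, dwpd, warranty_years)
print(f"Implied TBW: {tbw:.0f} TB")                         # Implied TBW: 11388 TB
print(f"Per TB of capacity: {tbw / capacity_tb:.1f} TB")    # Per TB of capacity: 1423.5 TB
```

The result matches the 11388 TB listed above, which works out to roughly 1423.5 TB written per TB of capacity.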

Two interesting points that Samsung mentioned in its press release were that its NF1 SSDs enabled an undisclosed server maker to install 72 such drives in a 2U rack server for a total capacity of 576 TB, and that the drives used a PCIe 4 interface, a claim that was later retracted. All previous public demonstrations of NF1 SSDs were carried out on 1U servers based on Intel’s Xeon processors, and there is also an NF1-compatible server from AIC based on AMD’s EPYC CPU. Samsung’s customer who uses the NF1 drives will likely identify itself in the coming months. In the meantime, the only shipping processor supporting PCIe 4 is the IBM POWER9, whereas the only PCIe 4-supporting switches are available from Microsemi. As for the 2U machine featuring 72 NF1 bays, it has not been publicly announced yet.
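As a quick sanity check on the 576 TB figure, the sketch below multiplies out the drive count and per-drive capacity. It also computes a naive aggregate sequential read rate; that second number is our own back-of-the-envelope upper bound that ignores host, switch, and link limits, not a figure from Samsung.

```python
DRIVES_PER_2U = 72        # drives in the undisclosed 2U server
CAPACITY_TB = 8           # per-drive capacity
SEQ_READ_MB_S = 3100      # per-drive sequential read speed


def raw_capacity_tb(drives, capacity_tb):
    """Raw (unformatted, pre-redundancy) capacity of the chassis in TB."""
    return drives * capacity_tb


def naive_aggregate_read_gb_s(drives, seq_read_mb_s):
    """Sum of per-drive sequential read rates in GB/s.

    A theoretical ceiling only: real systems are limited by the
    PCIe topology, CPU, and network long before this.
    """
    return drives * seq_read_mb_s / 1000


print(raw_capacity_tb(DRIVES_PER_2U, CAPACITY_TB))                 # 576
print(naive_aggregate_read_gb_s(DRIVES_PER_2U, SEQ_READ_MB_S))     # 223.2
```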

Samsung promises to start producing higher-capacity NF1 SSDs later this year. The company also says that JEDEC is expected to formally standardize the NF1 (aka NGSFF) spec this October.

Update 07/05: Samsung has sent out an oddly timed correction some two weeks after the initial announcement, essentially disowning its comments on when NF1 is expected to be standardized. However, the new statement doesn't say that the estimate was incorrect, merely that Samsung shouldn't have made it.

"Samsung in a footnote to its NF1 announcement unintentionally exceeded its jurisdiction in estimating a possible time frame for completion of the JEDEC Next-generation Small Form Factor (NGSFF) standard. We regret the oversight."

Source: Samsung

21 Comments

nevcairiel - Thursday, June 21, 2018

PCIe 4 should effectively double the bandwidth of PCIe 3, i.e. getting close to 8 GB/s on an x4 link. However, the NAND and/or the controller are probably not up for those speeds quite yet.

Which begs the question: why even use a PCIe 4 controller on this if it can't even saturate an x4 PCIe 3 link? Or maybe it uses only a PCIe 4 x2 link?
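The bandwidth comparison in this comment can be checked with a rough calculation. The sketch below uses the published per-lane transfer rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) and the 128b/130b line encoding both generations use; it ignores packet and protocol overhead, so real-world throughput is somewhat lower. The helper name is illustrative only.

```python
# Raw per-lane transfer rate in GT/s for each PCIe generation.
GT_PER_S = {3: 8.0, 4: 16.0}

# PCIe 3.0 and 4.0 both use 128b/130b encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_gb_per_s(gen, lanes):
    """Approximate one-direction link bandwidth in GB/s.

    Accounts for line encoding only, not TLP/DLLP protocol overhead.
    """
    return GT_PER_S[gen] * ENCODING_EFFICIENCY / 8 * lanes


drive_seq_read = 3.1  # GB/s, from the article's 3100 MB/s figure

print(f"PCIe 3.0 x4: {link_gb_per_s(3, 4):.2f} GB/s")   # PCIe 3.0 x4: 3.94 GB/s
print(f"PCIe 4.0 x4: {link_gb_per_s(4, 4):.2f} GB/s")   # PCIe 4.0 x4: 7.88 GB/s
print(f"PCIe 4.0 x2: {link_gb_per_s(4, 2):.2f} GB/s")   # PCIe 4.0 x2: 3.94 GB/s
print(f"Drive uses {drive_seq_read / link_gb_per_s(3, 4):.0%} of a PCIe 3.0 x4 link")
```

Under these assumptions, a PCIe 4.0 x2 link offers the same bandwidth as PCIe 3.0 x4, and the drive's rated sequential read speed sits at roughly 79% of what the PCIe 3.0 x4 link can carry before protocol overhead.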

DanNeely - Thursday, June 21, 2018

At the data center level, just the increased power efficiency could be sufficient justification even if performance is unchanged.

The switch from HDD to SSD servers massively moved the capacity bottleneck in most data centers to being thermally/power limited instead of rack space limited. Anything to reduce power per server will feed directly back into being able to fit more servers into your existing footprint.

CheapSushi - Thursday, June 21, 2018

Might not be 100% accurate info. But I think it's more likely using fewer lanes, like you mention. This is one of the goals with PCIe 5.0 at least, in terms of making x1 lanes enough for mass NVMe storage. So it could be a way of allowing mass storage on 4.0. The PLX switches are expensive, so it could be they're trying to find a balance. Check out SuperMicro for server examples with all M.3/NF1 1U.

lightningz71 - Monday, June 25, 2018

You've hit the nail on the head here. For 72 of these units to be in a server, that's 288 lanes that need to be routed all over the place. Switch to PCIe gen 5, and that's a quarter of the number of lanes and a drastic reduction in power usage. Even with gen4, that's 144 lanes and still a significant power level reduction. For gen5, an EPYC based server wouldn't even need a PCIe multiplexer, assuming that lane bifurcation is granular enough. That's, of course, a pipe dream as doing 1x lane bifurcation from the processor would be an extraordinarily expensive affair from a circuit standpoint.

Realistically, what you'll see is a fat data connection to the processor from a single multiplexer chip that's feeding 2x Gen 4 channels to each slot in the next generation of the product.
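The lane counts in this comment follow from keeping per-drive bandwidth constant while halving the link width with each PCIe generation. A minimal sketch of that arithmetic is below; the 128-lane figure for a single-socket EPYC platform is an assumption added here for the comparison, and the names are illustrative.

```python
DRIVES = 72

# Lanes per drive needed for roughly PCIe 3.0 x4 bandwidth,
# assuming per-lane bandwidth doubles each generation.
LANES_PER_DRIVE = {3: 4, 4: 2, 5: 1}

# Assumption: PCIe lanes exposed by a single-socket EPYC platform.
EPYC_LANES = 128


def total_lanes(drives, gen):
    """Total PCIe lanes needed to attach every drive directly."""
    return drives * LANES_PER_DRIVE[gen]


for gen in (3, 4, 5):
    lanes = total_lanes(DRIVES, gen)
    fits = "fits" if lanes <= EPYC_LANES else "needs a switch"
    print(f"PCIe gen {gen}: {lanes} lanes ({fits} with {EPYC_LANES} CPU lanes)")
# PCIe gen 3: 288 lanes (needs a switch with 128 CPU lanes)
# PCIe gen 4: 144 lanes (needs a switch with 128 CPU lanes)
# PCIe gen 5: 72 lanes (fits with 128 CPU lanes)
```

This reproduces the 288 and 144 figures quoted above, and shows why only the x1 gen 5 case could in principle avoid a switch, subject to the bifurcation caveat the comment raises.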

Dragonstongue - Thursday, June 21, 2018

Seems "nice", but why is the random write IOPS so low compared to many other SSDs that are out there and likely cost a fraction of what this probably will?

The TBW, however, is quite good: 1423.5 TB written per TB of capacity is a "class leader" as far as I can tell, by quite a margin.

Death666Angel - Thursday, June 21, 2018

Probably a limitation of the controller. That is a hell of a lot of NAND chips to manage. And as I read the article, it is more of a "read the data off it and use it to compute stuff" drive. So I would guess it fits the use case.

Kristian Vättö - Thursday, June 21, 2018

Enterprise SSD specs are based on steady-state performance, not 2-second bursts like client specs.

FunBunny2 - Thursday, June 21, 2018

Does the 10X difference in random strike anyone else as regressive?

FullmetalTitan - Thursday, June 21, 2018

Not for the use case these are intended for. These will be for large data sets and heavy database computation; the read:write ratio is heavily skewed.

Gothmoth - Thursday, June 21, 2018

only 3000mb/s with 12GB of ram cache .. did i read that right? seems to be time for PCI 6.0.....