The Samsung Galaxy S9 and S9+ Review: Exynos and Snapdragon at 960fps

by Andrei Frumusanu on March 26, 2018 10:00 AM EST

Camera Architecture & Video Performance

Samsung’s marketing for the S9 is strongly focused on the camera, and indeed that is where we see the most impressive improvements. The Galaxy S9’s new main rear camera sees large changes in the optics while also employing a brand new sensor. The resolution of the new sensor remains at 12MP and the pixel pitch should still be 1.4µm (to be confirmed depending on the exact sensor size). We are talking about a new sensor but Samsung hasn’t disclosed if and what kind of improvements have been made to the pixel array itself.

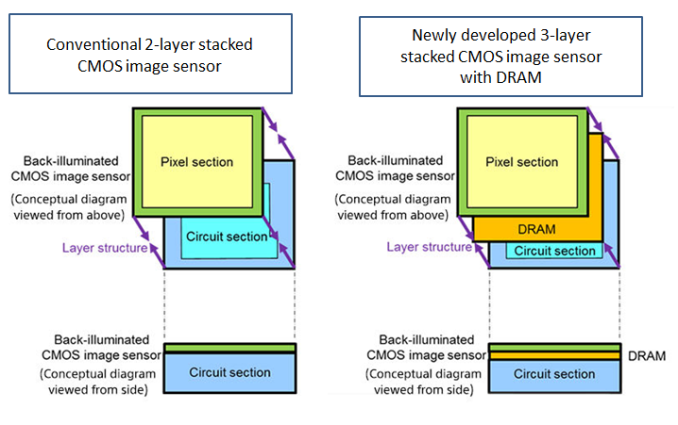

What has changed is the sensor structure. Sony launched this design last year in the Xperia XZ’s, and the Galaxy S9’s now implement tri-stack sensor structures as well.

Traditional two-stack sensors are comprised of the pixel array layer (which is the CMOS image sensor itself), and an image signal processing stack which takes care of various pre-processing tasks before the data is forwarded to the SoC’s camera pipelines. The new tri-stack sensor modules introduce a DRAM layer into the mix which serves as a temporary data buffer for readouts from the CMOS sensor pixel array.

Diagram source: Sony Xperia Blog

As smartphone cameras lack any mechanical shutter, light exposure to the sensor is constant. What this means for traditional sensors, which have to scan the pixel array line-by-line and forward it to the image-processing layer, is that there is a significant time difference between the exposure of the first, upper-left pixel and that of the last, lower-right pixel. This in turn creates the effect of focal plane distortion in fast-moving objects.

The DRAM layer serves as an intermediary buffer. It enables readout of each individual pixel’s ADC value from the CMOS sensor at a much faster rate before forwarding it to the processing layer. While this is not a true global shutter, the far faster readout rate is able to closely mimic one in practice.
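To put the readout argument in numbers, here is a minimal sketch of how rolling-shutter skew scales with readout time. The subject speed and both readout times are illustrative assumptions, not measurements of the S9 or of any specific sensor.

```python
def rolling_shutter_skew_px(speed_px_per_s: float, readout_time_s: float) -> float:
    """Horizontal offset between the first and last row of a moving subject.

    With a line-by-line readout, the last row is sampled `readout_time_s`
    later than the first, so a subject moving sideways appears slanted.
    """
    return speed_px_per_s * readout_time_s


# Hypothetical values, for illustration only.
SPEED_PX_PER_S = 3000.0   # subject crosses 3000 px of the frame per second
SLOW_READOUT_S = 1 / 30   # assumed full-frame readout without a DRAM buffer
FAST_READOUT_S = 1 / 120  # assumed readout into an on-sensor DRAM buffer

slow_skew = rolling_shutter_skew_px(SPEED_PX_PER_S, SLOW_READOUT_S)
fast_skew = rolling_shutter_skew_px(SPEED_PX_PER_S, FAST_READOUT_S)
print(f"skew with slow readout: {slow_skew:.1f} px")
print(f"skew with fast readout: {fast_skew:.1f} px")
```

The skew is simply proportional to the readout time, which is why a faster readout approaches the behaviour of a global shutter without actually being one.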

Samsung uses this for two major advantages: high framerate video recording as well as very fast multi-frame noise reduction.

Super slow-mo capture at 960fps - Apologies for the bad YouTube compression

For the first, Samsung is now able to match Sony’s devices with up to 720p960 high-framerate recording. Other modes available are 1080p240 and 4K60. The S9 allows up to 0.2s of real-time recording in this high-framerate mode, which expands to up to 6s of slow-motion footage in the resulting recording. Samsung also differentiates itself from Sony’s implementation as it uses “AI” for automatic triggering of the high-framerate capture, since it’s able to detect very fast-moving objects. We still have to experiment more with the feature before coming to a verdict. The camera also allows for manual triggering – but for the highest framerate bursts correct timing will be critical in capturing the subject.
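The relationship between capture time and slow-motion length is simple arithmetic, sketched below. The 30fps playback rate is an assumption on my part, as the playback rate isn't stated here.

```python
def slow_motion_duration_s(capture_s: float, capture_fps: float, playback_fps: float) -> float:
    """Length of the slow-motion clip produced by a high-framerate burst."""
    frames_captured = capture_s * capture_fps
    return frames_captured / playback_fps


# 0.2 s at 960 fps, played back at an assumed 30 fps.
burst_frames = 0.2 * 960
clip_length = slow_motion_duration_s(0.2, 960, 30)
print(f"{burst_frames:.0f} frames, {clip_length:.1f} s of slow-motion footage")
```

Under that assumption the burst works out to a little over six seconds of footage, consistent with the figure quoted above.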

The second feature enhanced by the new sensor (as well as the SoC imaging pipelines) is the multi-frame noise reduction. Google’s Pixel devices and HDR+ algorithm were the first to use a software implementation of this feature. The camera captures a series of short-exposure shots and the individual captures are merged into a single image that has less noise. Samsung claims a noise reduction of up to 30% through this method – in theory this will also improve sharpness as there is less detail lost to algorithmically applied noise reduction, something we’ll have to verify in more thorough hands-on testing.
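The principle behind multi-frame noise reduction can be shown with a toy example. This is a minimal sketch using plain per-pixel averaging of perfectly aligned frames with synthetic Gaussian noise; it is not Samsung's or Google's actual pipeline, which also has to align frames and handle motion. The scene value, noise level and frame count are all hypothetical.

```python
import random
import statistics


def capture_frame(scene, noise_sigma, rng):
    """Simulate one short exposure: the true scene plus random sensor noise."""
    return [pixel + rng.gauss(0.0, noise_sigma) for pixel in scene]


def merge_frames(frames):
    """Average the frames pixel by pixel (assumes they are already aligned)."""
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def noise_level(image, scene):
    """Standard deviation of the error against the known noise-free scene."""
    return statistics.pstdev([a - b for a, b in zip(image, scene)])


rng = random.Random(0)         # fixed seed so the run is repeatable
scene = [128.0] * 2000         # hypothetical flat grey patch
frames = [capture_frame(scene, 10.0, rng) for _ in range(8)]

single_noise = noise_level(frames[0], scene)
merged_noise = noise_level(merge_frames(frames), scene)
print(f"single frame noise: {single_noise:.2f}")
print(f"merged frame noise: {merged_noise:.2f}")
```

For independent noise, averaging N frames lowers the noise by roughly a factor of the square root of N, which is why even a handful of short exposures helps noticeably.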

The new sensor is only part of the improvements to the camera as the module has also seen a very large change in its optics. The maximum aperture of the lens has widened from f/1.7 to f/1.5, which by itself allows for roughly 28% more light to fall onto the sensor.

Aperture change at 960fps

An aperture this wide can also lead to overexposure, and it has a very shallow depth of field. For this reason Samsung has introduced, for the first time in a mobile device, an adjustable aperture lens. The S9’s main rear camera is able to switch between a wide f/1.5 aperture and a narrower f/2.4 aperture. The smaller aperture allows for two things: less light in bright conditions, and a deeper depth of field. Arguably the first isn’t a proper reason to switch to a narrower aperture: unless there are issues with the sensor or imaging pipeline, or the camera has problems with overexposure for some reason, the more light the better for the shooter.
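The f-numbers quoted so far can be sanity-checked with the standard relationship that light gathered scales with the inverse square of the f-number, for a fixed focal length. The helper names below are my own.

```python
import math


def light_ratio(f_from: float, f_to: float) -> float:
    """How much more light f_to gathers than f_from at the same focal length."""
    return (f_from / f_to) ** 2


def stops(f_from: float, f_to: float) -> float:
    """The same difference expressed in photographic stops (factors of two)."""
    return math.log2(light_ratio(f_from, f_to))


gain_vs_old_lens = light_ratio(1.7, 1.5)     # previous f/1.7 lens vs new f/1.5
gain_vs_narrow_mode = light_ratio(2.4, 1.5)  # f/2.4 mode vs f/1.5 mode

print(f"f/1.7 to f/1.5: {gain_vs_old_lens:.2f}x ({stops(1.7, 1.5):.2f} stops)")
print(f"f/2.4 to f/1.5: {gain_vs_narrow_mode:.2f}x ({stops(2.4, 1.5):.2f} stops)")
```

The first ratio lands close to the light increase quoted for the new lens, and the second shows that the two aperture modes differ by well over a stop.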

The second effect of a narrower aperture however is a deeper depth of field. This can have a considerable impact on pictures – especially when considering the maximum aperture of f/1.5 which allows for an incredibly shallow depth of field. In theory this could lead to focusing issues in some scene compositions where you want more objects at different distances to be in focus, so being able to switch apertures could be extremely useful as it offsets some of the disadvantages that a wide aperture brings. The S9 only supports switching between f/1.5 and f/2.4 – I suspect that more fine-grained control allowing for a truly variable aperture would vastly increase the complexity of the camera module and the aperture blade actuators.
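To give a feel for the depth-of-field difference, here is a rough sketch using the standard thin-lens hyperfocal formulas. The focal length, circle of confusion and subject distance are illustrative assumptions rather than S9 specifications, so only the relative difference between the two apertures is meaningful.

```python
def hyperfocal_mm(focal_mm: float, f_number: float, coc_mm: float) -> float:
    """Hyperfocal distance: focusing here keeps everything to infinity sharp."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


def depth_of_field_mm(focal_mm: float, f_number: float, coc_mm: float,
                      subject_mm: float) -> float:
    """Distance between the nearest and farthest acceptably sharp points."""
    h = hyperfocal_mm(focal_mm, f_number, coc_mm)
    if subject_mm >= h:
        return float("inf")  # far limit extends to infinity
    near = subject_mm * (h - focal_mm) / (h + subject_mm - 2 * focal_mm)
    far = subject_mm * (h - focal_mm) / (h - subject_mm)
    return far - near


# Hypothetical values, for illustration only.
FOCAL_MM = 4.3      # assumed physical focal length of a phone main camera
COC_MM = 0.004      # assumed circle of confusion for a small sensor
SUBJECT_MM = 500.0  # subject half a metre away

dof_wide = depth_of_field_mm(FOCAL_MM, 1.5, COC_MM, SUBJECT_MM)
dof_narrow = depth_of_field_mm(FOCAL_MM, 2.4, COC_MM, SUBJECT_MM)
print(f"f/1.5: {dof_wide:.0f} mm in focus")
print(f"f/2.4: {dof_narrow:.0f} mm in focus")
```

With these assumed values the narrower aperture keeps a noticeably larger slice of the scene in focus at close range, which is the trade-off described above.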

The Galaxy Note8 introduced dual-cameras for Samsung flagships and the S9 follows suit – sort of. Unfortunately only the bigger Galaxy S9+ employs a secondary camera module equipped with a 2x zoom telephoto lens. The specifications of this module match that of the telephoto camera of the Note8: a 12MP sensor with an f/2.4 aperture lens equipped with OIS. The layout of the cameras does change in comparison to the Note8 as the S9+’s telephoto lens is located between the main camera and the fingerprint sensor in a vertical instead of horizontal arrangement. The software functionality remains the same as that of the Note8.

Video Evaluation

The regular video recording modes of the Galaxy S9 offer a variety of modes reaching up to 4K recording at 60fps. Beyond the addition of an official 4K60 mode, the Galaxy S9 also for the first time offers the option to encode videos in HEVC instead of AVC, which greatly reduces video file sizes.

Depending on the video mode selected, the Galaxy S9 selects a variety of encoding profiles and bit-rates. The quality of the HEVC encodings should in theory be equal to that of the equivalent AVC settings – and in the table below the bit-rate advantage for HEVC is very clear.

| Recording Mode | AVC / H.264 | HEVC / H.265 |
|---|---|---|
| 4K (3840×2160) 60fps | High@L5.1 - 71.5 Mbps | Main@L5.1 - 41.3 Mbps |
| 4K (3840×2160) 30fps | High@L5.1 - 48.4 Mbps | Main@L5.1 - 28.4 Mbps |
| 1080p 60fps | High@L4.2 - 28.4 Mbps | Main@L4 - 16.3 Mbps |
| 1080p 30fps | High@L4 - 14.6 Mbps | Main@L4 - 8.5 Mbps |
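The bit-rates in the table translate directly into file-size savings. The short sketch below computes the relative saving per mode and the approximate size of one minute of footage; it ignores audio and container overhead, so the sizes are estimates only.

```python
# (AVC Mbps, HEVC Mbps) per recording mode, taken from the table.
BITRATES_MBPS = {
    "4K 60fps": (71.5, 41.3),
    "4K 30fps": (48.4, 28.4),
    "1080p 60fps": (28.4, 16.3),
    "1080p 30fps": (14.6, 8.5),
}


def hevc_saving(avc_mbps: float, hevc_mbps: float) -> float:
    """Fraction of the AVC bit-rate that HEVC saves."""
    return 1 - hevc_mbps / avc_mbps


def video_size_mb(bitrate_mbps: float, seconds: float) -> float:
    """Approximate video stream size in megabytes (8 bits per byte)."""
    return bitrate_mbps * seconds / 8


savings = {mode: hevc_saving(avc, hevc) for mode, (avc, hevc) in BITRATES_MBPS.items()}

for mode, (avc, hevc) in BITRATES_MBPS.items():
    print(f"{mode}: HEVC saves {savings[mode]:.1%}, "
          f"one minute is about {video_size_mb(avc, 60):.0f} MB (AVC) "
          f"vs {video_size_mb(hevc, 60):.0f} MB (HEVC)")
```

The saving is remarkably consistent across all four modes, at a little over 40% of the AVC bit-rate.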

I haven’t had sufficient time to test the video encoding quality of the Galaxy S9 variants in-depth, but above are four excerpts in the most popular modes. The phone did very well in terms of stabilisation, focus response and exposure response. YouTube re-encodes the video so it doesn’t quite do it justice, especially at the higher bitrates.

We’ll follow up with a more in-depth video quality article in the future, as I’ve spent more time focusing on still image evaluation for this article.

Panorama Quality Evaluation

First of all I’d like to go over the methodology for the still image evaluation. We’re posting a very large range of comparison devices for this review from Samsung, Google, Huawei, LG and Apple to serve for the best possible evaluation of not only the Galaxy S9 against its predecessor but also against the existing high-end competition. The comparison included a total of 1144 unsorted shots across all devices and scenarios. Each device took several shots and I picked the best take from each. To be fair to each device camera’s processing and exposure behaviour, I also post a variety of capturing modes where warranted, as well as multiple exposure types (auto vs manual spot metering) in scenarios which showed differences.

To view the pictures in their full unedited resolutions you can open them by clicking on the link on the thumbnails of each scenario. To change the thumbnails between the devices, you can click in the button list underneath each scenario thumbnail for the respective device. The button label will also contain a short description of the photo type – either capturing mode or metering mode. There are also associated galleries for each scenario, but due to limitations in our CMS that might not be the best way to browse quick phone-to-phone comparisons.

The Galaxy S9 captures were done on the Exynos variant as I had already finished the full camera comparison before I received a Snapdragon variant. I’m not expecting large differences as Samsung traditionally tunes both variants very closely. I will follow up with a dedicated article if I find notable differences.

[ Galaxy S9 ] - [ Galaxy S8 ] - [ Galaxy S7 ] - [ Pixel 2 XL ] - [ Pixel XL ]

[ P10 ] - [ Mate 9 ] - [ Mate 10 ] - [ G6 ] - [ V30 ]

[ iPhone 7 ] - [ iPhone 8 ] - [ iPhone 8 Plus ] - [ iPhone X ]

Starting off with an evaluation of the panorama modes of each device we see that the Galaxy S9 provides a significant upgrade over the Galaxy S8 as it maintains a wider dynamic range and constant exposure in this very difficult scenario against the overhead sun in the middle of the panorama. It’s especially the colour balance consistency that is very visible against the S8. The Galaxy S9 also manages to capture more detail than the S8, either through the new lens system and sensor or through the new processing which does less sharpening. Samsung goes quite overboard in terms of file size as the S7 to S9 all generate 37-40MB images that I had to recompress without noticeable loss in order to upload onto our CMS.

The S9 doesn’t really have proper competition here as other phones have noticeable drawbacks. The iPhones, while providing an image with more contrast, also have to make do with heavier processing that loses out on details. They also lack in dynamic range compared to the Galaxy S9 and partly the S8 as features in the dark part of the valley are essentially not picked up at all. The LG V30 does well and posts better colour temperature than the G6 – but both seem to underexpose the scene a bit too much. Among Huawei’s devices the Mate 10 does a very good job in terms of dynamic range but again like LG it ends up with a rather darker image than I would have preferred.

One of these devices is not like the others, and that’s the Pixel 2 XL, which does an extreme amount of HDR processing on the shot. Google here tries to extract the maximum amount of detail, and while it looks intriguing in the thumbnail, it looks quite fake when looking closer up. It’s also visible that this isn’t a true high dynamic range shot from the sensor as the camera doesn’t pick up details such as the stairs in the dark part in the middle of the valley.

190 Comments


generalako - Monday, March 26, 2018 - link

Android has had color management since Oreo, so get your facts straight. As for being able to device color modes, that's a different thing entirely, and is not and was not Samsung's way of dealing with color management, as you claim. That's just a made-up lie from your side. Allowing people to choose different color modes is actually a beneficial thing to have. With iOS, the target is to have color accurate images. So whatever images you see, they strictly follow sRGB or P3 gamuts. But on TouchWiz, Pixel UI, etc., people actually have the opportunity to choose more saturated colors, if that is to their liking. So they in essence provide an alternative in an area where Apple doesn't.

Also, it needs to be stated that the S9 has the most color accurate display of any phone out there, as DisplayMate so very clearly noted and tested.

id4andrei - Monday, March 26, 2018 - link

I'm not saying that in its respective color profile the display is not good. I am talking about the OS. You the user are not supposed to choose color profiles. On ios you could have two images side by side, each targeting a different color profile, and the OS will display them correctly. In Android, you must manually switch profiles to match a photo while the other photo will look way off.

generalako - Monday, March 26, 2018 - link

Except it isn't. The difference lies in the display drivers. Even Anandtech mention it in this article, about the black clipping, that Samsung ought to be able to be as good as well. Why? Because it's the same panel technology. So clearly the clipping isn't a result of hardware limitation, as is the case with LG's OLED panel in the V30 and Pixel 2 XL.

DanNeely - Monday, March 26, 2018 - link

The big cores are about 3x the size of the small ones - "an A75+L2 core coming in at 1.57mm² and the A55+L2 coming in at ~0.53mm²" - I think you might be getting confused because the big core cluster wraps around the small ones in a backwards L shape, with 2 cores at the same height as the small ones and the second pair below.

tipoo - Monday, March 26, 2018 - link

Rodger, was just going off what TechInsights had highlighted.

tipoo - Monday, March 26, 2018 - link

Doh, I see now. The bottom line I was looking at is a SoC feature, not a white highlight box, I missed the end of the L.

Commodus - Monday, March 26, 2018 - link

It's still fascinating to me that Qualcomm and Samsung alike seem to be losing ground to Apple -- aside from some of the graphics tests, the A11 fares better overall in cross-platform benchmarks. Used to be that there was some degree of leapfrogging between QC/Samsung and Apple, but the S845 and E9810 are really just catching up.

goatfajitas - Monday, March 26, 2018 - link

Ogling over benchmarks and putting much weight into them is sooooooo 2006 ;)

Quantumz0d - Wednesday, March 28, 2018 - link

True, Most of the talented developers at XDA nowadays focus more on the UX, Although there were a few long time back who had both but since Android changed a lot and the SoCs have increased in complexity, SD80x platform had bins and voltage control was easy but with the SD820 platform the voltage planes have increased even though can still be customized but need significant skill to micro optimize unlike the OMAP and the 800 and others.

Apple is just banking on faux peak figures and OS knit with their tight integration optimizing for limited space of UI on iOS, they certainly have benefits but the downsides massively outweigh these advantages about the walled garden.

peevee - Friday, April 6, 2018 - link

"Apple is just banking on faux peak figures"

They are not faux. If anything, the long-term testing in cooled environment is "faux", because nobody uses their smartphones as compute farms. When you start an app or open a webpage, the process runs for seconds at most, so that is what matters most in the CPU/memory/flash department. Only sustained performance which matters is games, on the GPU side of things.