Intel Optane SSD DC P4800X 750GB Hands-On Review

by Billy Tallis on November 9, 2017 12:00 PM ESTMixed Read/Write Performance

Workloads consisting of a mix of reads and writes can be particularly challenging for flash based SSDs. When a write operation interrupts a string of reads, it will block access to at least one flash chip for a period of time that is substantially longer than a read operation takes. This hurts the latency of any read operations that were waiting on that chip, and with enough write operations throughput can be severely impacted. If the write command triggers an erase operation on one or more flash chips, the traffic jam is many times worse.

The occasional read interrupting a string of write commands doesn't necessarily cause much of a backlog, because writes are usually buffered by the controller anyways. But depending on how much unwritten data the controller is willing to buffer and for how long, a burst of reads could force the drive to begin flushing outstanding writes before they've all been coalesced into optimal sized writes.

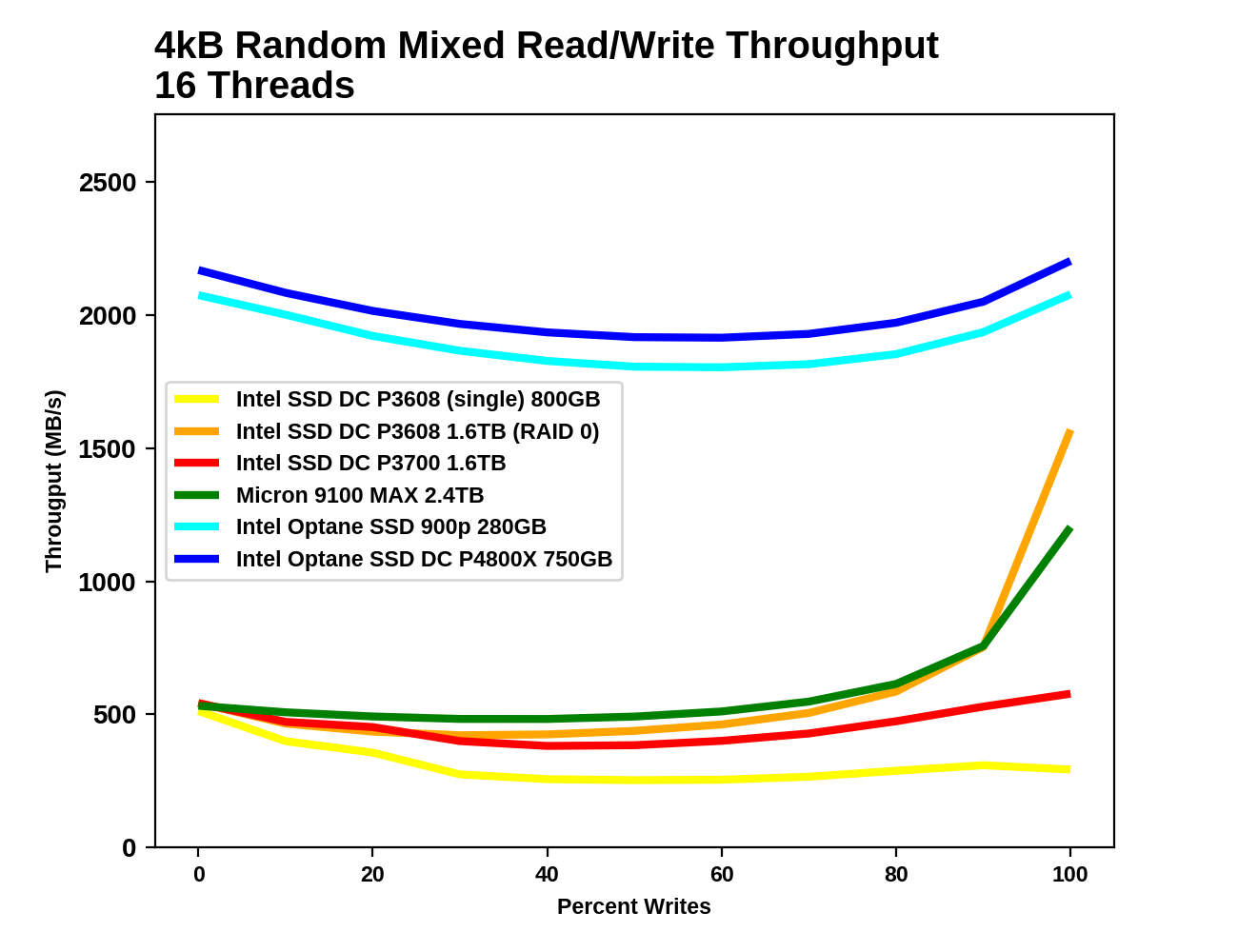

Our first mixed workload test is an extension of what Intel describes in their specifications for throughput of mixed workloads. A total of 16 threads are used, each performing a mix of random reads and random writes at a queue depth of 1. Instead of just testing a 70% read mixture, the full range from pure reads to pure writes is tested at 10% increments.

|

|||||||||

| Vertical Axis units: | IOPS | MB/s | |||||||

The Intel Optane SSD DC P4800X is slightly faster than the Optane SSD 900p throughout this test, but either is far faster than the flash-based SSDs. Performance from the Optane SSDs isn't entirely flat across the test, but the minor decline in the middle is nothing to complain about. The Intel P3608 and Micron 9100 both show strong increases near the end of the test due to caching and combining writes.

|

|||||||||

| Mean | Median | 99th Percentile | 99.999th Percentile | ||||||

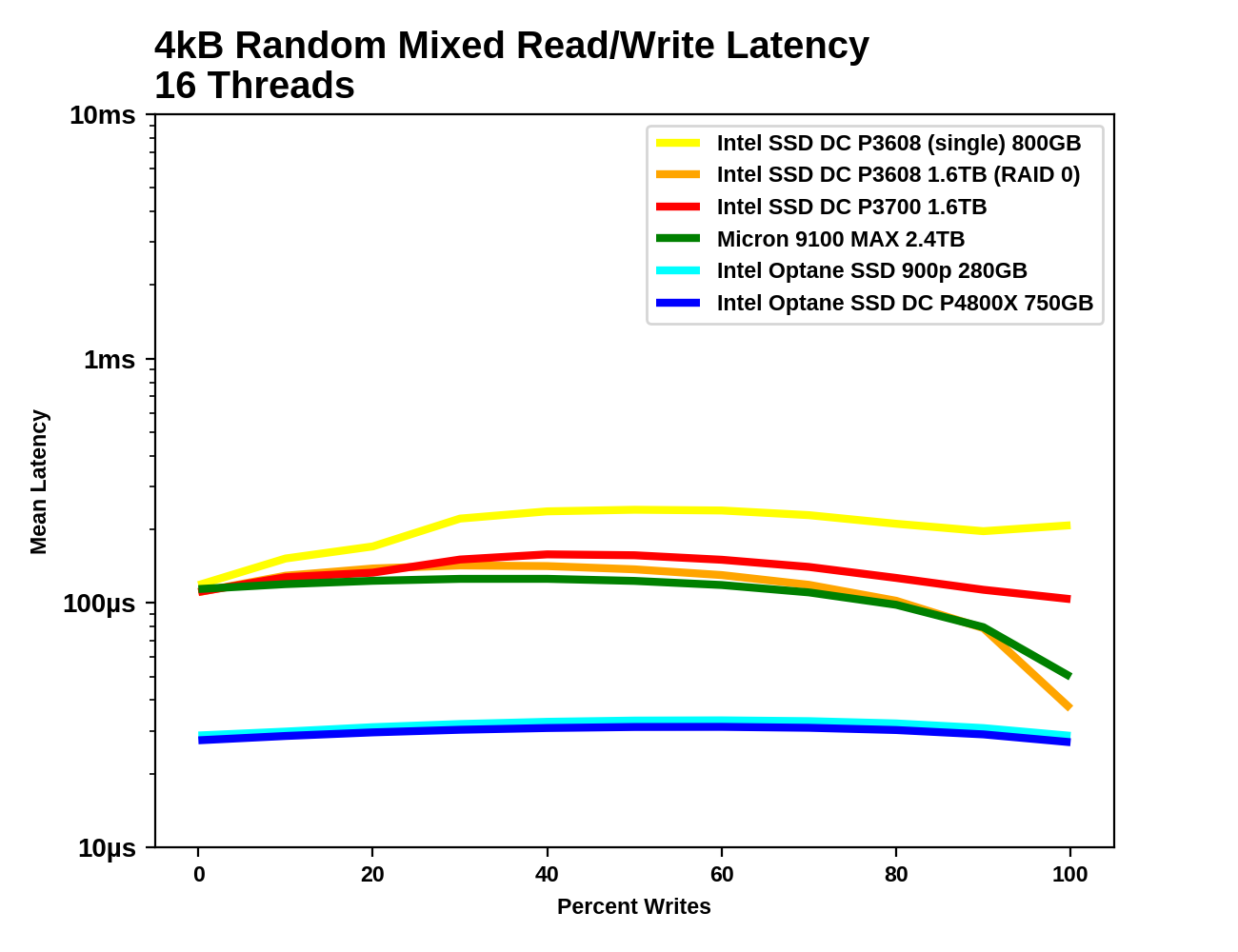

The mean latency graphs are simply the reciprocal of the throughput graphs above, but the latency percentile graphs reveal a bit more. The median latency of all of the flash SSDs drops significantly once the workload consists of more writes than reads, because the median operation is now a cacheable write instead of an uncacheable read. A graph of the median write latency would likely show writes to be competitive on the flash SSDs even during the read-heavy portion of the test.

The 99th percentile latency chart shows that the flash SSDs have much better QoS on pure read or write workloads than on mixed workloads, but they still cannot approach the stable low latency of the Optane SSDs.

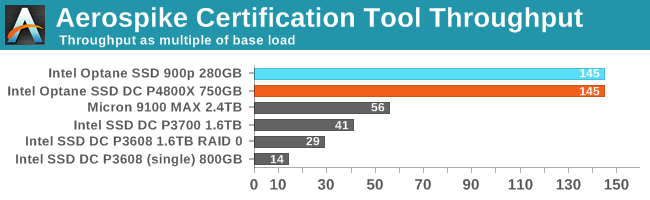

Aerospike Certification Tool

Aerospike is a high-performance NoSQL database designed for use with solid state storage. The developers of Aerospike provide the Aerospike Certification Tool (ACT), a benchmark that emulates the typical storage workload generated by the Aerospike database. This workload consists of a mix of large-block 128kB reads and writes, and small 1.5kB reads. When the ACT was initially released back in the early days of SATA SSDs, the baseline workload was defined to consist of 2000 reads per second and 1000 writes per second. A drive is considered to pass the test if it meets the following latency criteria:

- fewer than 5% of transactions exceed 1ms

- fewer than 1% of transactions exceed 8ms

- fewer than 0.1% of transactions exceed 64ms

Drives can be scored based on the highest throughput they can sustain while satisfying the latency QoS requirements. Scores are normalized relative to the baseline 1x workload, so a score of 50 indicates 100,000 reads per second and 50,000 writes per second. We used the default settings for queue and thread counts and did not manually constrain the benchmark to a single NUMA node, so this test produced a total of 64 threads sharing a total of 32 CPU cores split across two sockets.

The usual runtime for ACT is 24 hours, which makes determining a drive's throughput limit a long process. In order to have results in time for this review, much shorter ACT runtimes were used. Fortunately, none of these SSDs take anywhere near 24h to reach steady state. Once the drives were in steady state, a series of 5-minute ACT runs was used to estimate the drive's throughput limit, and then ACT was run on each drive for two hours to ensure performance remained stable under sustained load.

| ACT Transaction Latency | |||

| Drive | % over 1ms | % over 2ms | |

| Intel Optane SSD DC P4800X 750GB | 0.82 | 0.16 | |

| Intel Optane SSD 900p 280GB | 1.53 | 0.36 | |

| Micron 9100 MAX 2.4TB | 4.94 | 0.44 | |

| Intel SSD DC P3700 1.6TB | 4.64 | 2.22 | |

| Intel SSD DC P3608 (single controller) 800GB | 4.51 | 2.29 | |

When held to a specific QoS standard, the two Optane SSDs deliver more than twice the throughput than any of the flash-based SSDs. More significantly, even at their throughput limit, they are well below the QoS limits: the CPU is actually the bottleneck at that rate, leading to overall transaction times that are far higher than the actual drive I/O time. Somewhat higher throughput could be achieved by tweaking the thread and queue counts in the ACT configuration. Meanwhile, the flash SSDs are all close to the 5% limit for 1ms transactions, but are so far under the limit for longer latencies that I've left those numbers out of the above table.

58 Comments

View All Comments

AlaskanDruid - Thursday, November 9, 2017 - link

Ok. what am I missing? What is the use of these when you can have one in a m.2 form which takes up MUCH less room?I know I'm missing something, but what is it?

Drumsticks - Thursday, November 9, 2017 - link

The drives dissipate 12-18W, which is 2-3x more than flash based SSDs. I don't think the m.2 form factor would make heat dissipation easy, unless you scale the design back down to the 32-120GB range.Billy Tallis - Thursday, November 9, 2017 - link

More than just capacity, you'd almost certainly have to scale down performance by a lot. It's possible to do better than the current PCIe x2 32GB module, but a 7 channel controller would be hard to fit physically, and it would have to run slower to not melt. I think a ~3 channel M.2 drive is plausible, but I'm not sure its write speeds would be high enough.DanNeely - Thursday, November 9, 2017 - link

it looks like you could physically do 7 (or even 8) channels on a dual sided 22110 m.2 module with the current package size. The thermals would be a real challenge though; and are why I really wish U.2 would've gained at least a little traction in the consumer market.Billy Tallis - Thursday, November 9, 2017 - link

I was thinking more along the lines of a 22x80mm card. I agree that 7 3D XPoint packages is probably possible on 22x110, but I'm not sure how dense the controller's BGA pad can get.DanNeely - Thursday, November 9, 2017 - link

If you went 2 sided you could fit 6 on a 2280 card. With a bare bottom they're just short on enough room for a 3rd package on top; move some of that misc stuff underneath and you'd free up the PCB area needed.Rwbeckman - Thursday, November 9, 2017 - link

This is the datacenter model, good for releasing higher capacity sooner with its size. Plus rackmount cooling design will help. U.2. Will be for hot swap, the card will not. The M.2 enthusiast consumer model is the 900PSamus - Thursday, November 9, 2017 - link

XPoint technology is just one of the reasons Intel released the "ruler" form factor for future SSD's. I suspect a mobile variant will replace m2 in the future.https://www.anandtech.com/show/11702/intel-introdu...

CheapSushi - Thursday, November 9, 2017 - link

Or just use the new M.3 format (wider) that Samsung came out with.Drumsticks - Thursday, November 9, 2017 - link

Great review Billy, thank you!Optane as a gen 1 product is pretty fantastic. I can't wait to see how the rest of the market will compete, and what Intel and Micron (where are their drives?) can do for round two.