The Intel Core i9-7980XE and Core i9-7960X CPU Review Part 1: Workstation

by Ian Cutress on September 25, 2017 3:01 AM EST

Benchmarking Performance: CPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze every little bit of performance out. Sometimes rendering programs end up being heavily memory dependent as well - when you have that many threads flying about with a ton of data, having low latency memory can be key to everything. Here we take a few of the usual rendering packages under Windows 10, as well as a few new interesting benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

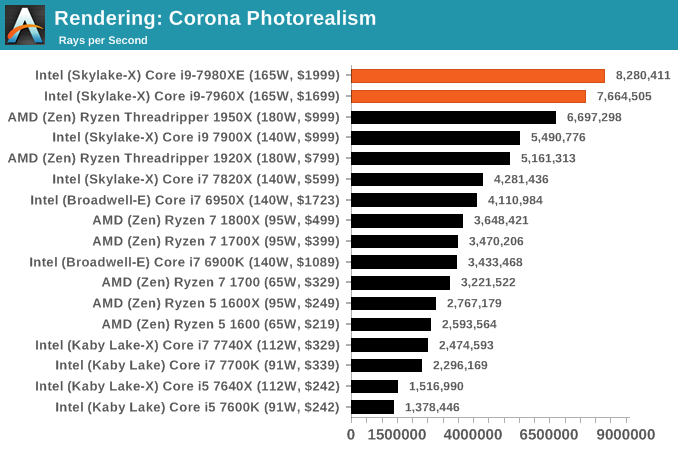

Corona 1.3: link

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple - shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and offers results in terms of time and rays per second. The official benchmark tables list user-submitted results in terms of time, but I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up being very staggered based on thread count.
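To illustrate how the two metrics relate, here is a minimal sketch that converts a render time into rays per second. It assumes the fixed scene traces the same total number of rays on every run; the `TOTAL_RAYS` value and the render times are hypothetical placeholders, not figures from Corona or from our results.

```python
def rays_per_second(total_rays, render_seconds):
    """Convert a render time into a throughput figure (higher is better)."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return total_rays / render_seconds


# Hypothetical ray budget for the fixed scene; not a number published by Corona.
TOTAL_RAYS = 600_000_000

# Placeholder render times in seconds, not measured results.
for label, seconds in [("chip A", 60.0), ("chip B", 120.0)]:
    mrays = rays_per_second(TOTAL_RAYS, seconds) / 1e6
    print(f"{label}: {mrays:.1f} Mrays/s")
```

Under that assumption, halving the render time doubles the rays-per-second score, which is why the two metrics rank chips identically but the throughput figure reads more naturally on a higher-is-better chart.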

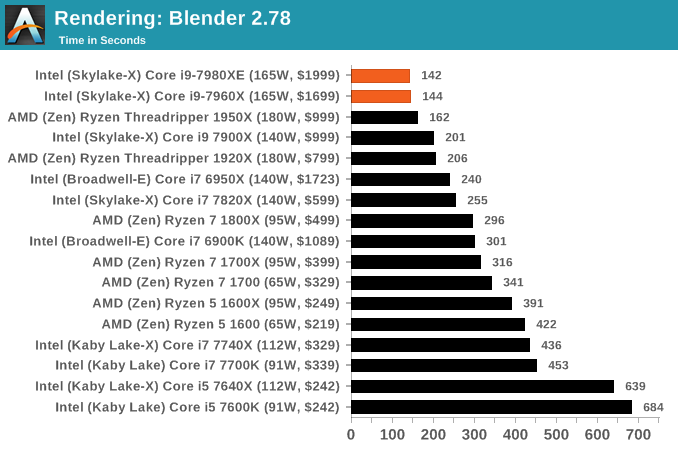

Blender 2.78: link

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. Because it is one of the bigger open source tools out there, both AMD and Intel work actively to help improve the codebase, for better or for worse on their own/each other's microarchitecture.

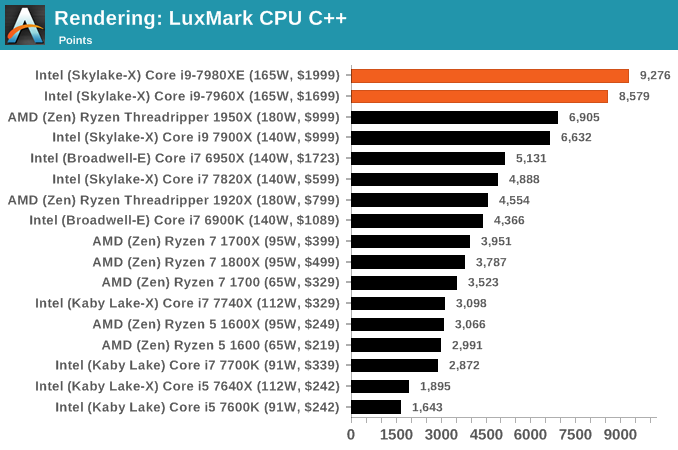

LuxMark v3.1: Link

As a synthetic, LuxMark might come across as somewhat arbitrary as a renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from seeing the comparison in each coding mode for cores and IPC, we also get to see the difference in performance moving from a C++ based code-stack to an OpenCL one with a CPU as the main host.

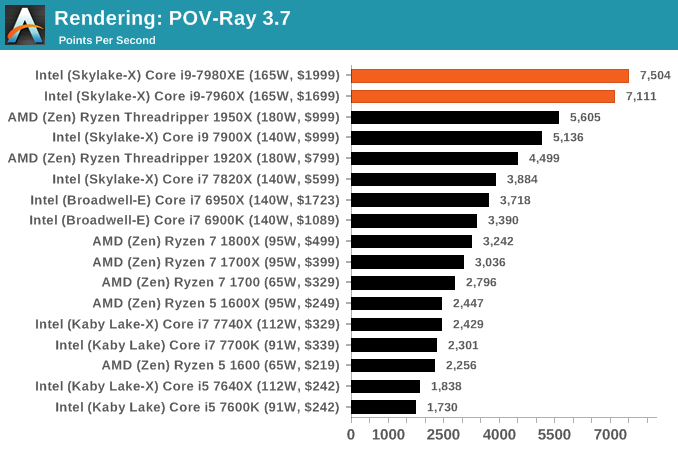

POV-Ray 3.7.1b4: link

A regular benchmark in most suites, POV-Ray is another ray tracer, and one that has been around for many years. It just so happens that during the run up to AMD's Ryzen launch, the code base started to get active again with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that was happening, but given time we will see where the POV-Ray code ends up and adjust in due course.

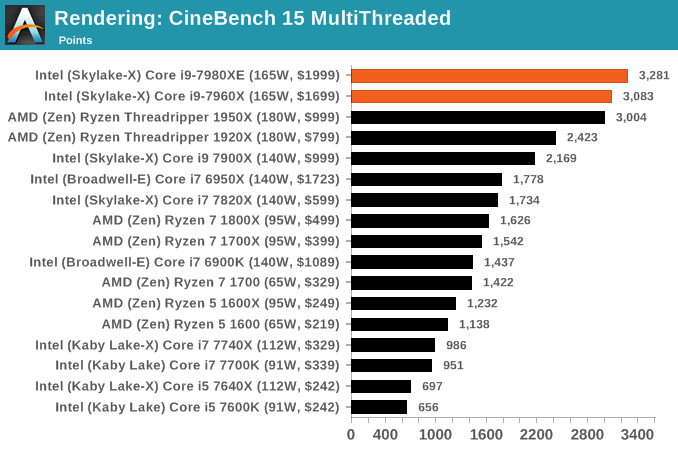

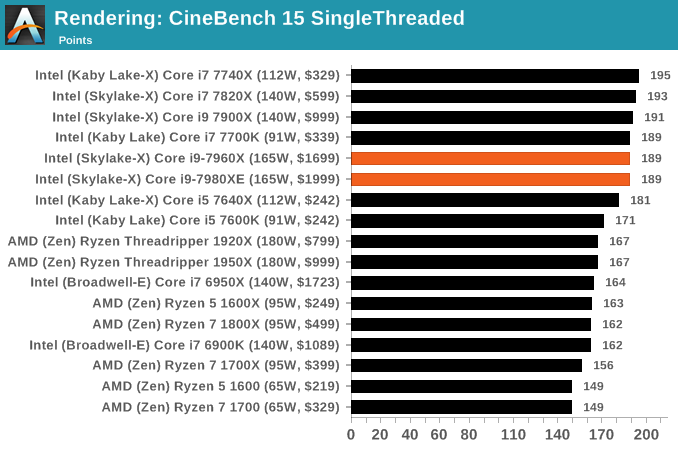

Cinebench R15: link

The latest version of CineBench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency give performance in ST, whereas having good scaling and many cores is where the MT test wins out.
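One rough way to put a number on "good scaling" is to compare the MT score against the ST score multiplied by the core count. The sketch below is an illustration only: the function name and the scores are hypothetical, and it ignores the fact that all-core clocks are usually lower than single-core turbo.

```python
def mt_scaling_efficiency(st_score, mt_score, cores):
    """Ratio of the measured MT score to ideal linear scaling of the ST score."""
    if st_score <= 0 or cores <= 0:
        raise ValueError("st_score and cores must be positive")
    return mt_score / (st_score * cores)


# Placeholder scores for an imaginary 16-core part, not measured results.
efficiency = mt_scaling_efficiency(st_score=100.0, mt_score=1600.0, cores=16)
print(f"scaling efficiency: {efficiency:.2f}")
```

A value near 1.0 means the MT result is close to what perfect per-core scaling would give. Values above 1.0 are possible when simultaneous multithreading adds throughput beyond one thread per core.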

152 Comments

mapesdhs - Tuesday, September 26, 2017 - link

In that case, using Intel's MO, TR would have 68. What Intel is doing here is very misleading.

iwod - Monday, September 25, 2017 - link

If we factor in the price of the whole system, rather then just CPU, ( AMD's MB tends to be cheaper ), then AMD is doing pretty well here. I am looking forward to next years 12nm Zen+.

peevee - Monday, September 25, 2017 - link

From the whole line, only 7820X makes sense from price/performance standpoint.

boogerlad - Monday, September 25, 2017 - link

Can an IPC comparison be done between this and Skylake-s? Skylake-x LCC lost in some cases to skylake, but is it due to lack of l3 cache or is it because the l3 cache is slower?

IGTrading - Monday, September 25, 2017 - link

There will never be an IPC comparison of Intel's new processors, because all it would do is showcase how Intel's IPC actually went down from Broadwell and further down from KabyLake.

Intel's IPC is a downtrend affair and this is not really good for click and internet traffic.

Even worse : it would probably upset Intel's PR and that website will surely not be receiving any early review samples.

rocky12345 - Monday, September 25, 2017 - link

Great review thank you. This is how a proper review is done. Those benchmarks we seen of the 18 core i9 last week were a complete joke since the guy had the chip over clocked to 4.2GHz on all core which really inflated the scores vs a stock Threadripper 16/32 CPU. Which was very unrealistic from a cooling stand point for the end users.

This review had stock for stock and we got to see how both CPU camps performed out of the box states. I was a bit surprised the mighty 18 core CPU did not win more of the benches and when it did it was not by very much most of the time. So a 1K CPU vs a 2K CPU and the mighty 18 core did not perform like it was worth 1K more than the AMD 1950x or the 1920x for that matter. Yes the mighty i9 was a bit faster but not $1000 more faster that is for sure.

Notmyusualid - Thursday, September 28, 2017 - link

I too am interested to see 'out of the box performance' also.

But if you think ANYONE would buy this and not overclock - you'd have to be out of your mind.

There are people out there running 4.5GHz on all cores, if you look for it.

And what is with all this 'unrealistic cooling' I keep hearing about? You fit the cooling that fits your CPU. My 14C/28T CPU runs 162W 24/7 running BOINC, and is attached to a 480mm 4-fan all copper radiator, and hand on my heart, I don't think has ever exceeded 42C, and sits at 38C mostly.

If I had this 7980XE, all I'd have to do is increase pump speed I expect.

wiyosaya - Monday, September 25, 2017 - link

Personally, I think the comments about people that spend $10K on licenses having the money to go for the $2K part are not necessarily correct. Companies will spend that much on a license because they really do not have any other options. The high end Intel part in some benchmarks gets 30 to may be 50 percent more performance on a select few benchmarks. I am not going to debate that that kind of performance improvement is significant even though it is limited to a few benchmarks; however, to me that kind of increased performance comes at an extreme price premium, and companies that do their research on the capabilities of each platform vs price are not, IMO, likely to throw away money on a part just for bragging rights. IMO, a better place to spend that extra money would be on RAM.

HStewart - Monday, September 25, 2017 - link

In my last job, they spent over $100k for software version system.

In workstation/server world they are looking for reliability, this typically means Xeon.

Gaming computers are different, usually kids want them and have less money, also they are always need to latest and greatest and not caring about reliability - new Graphics card comes out they replace it. AMD is focusing on that market - which includes Xbox One and PS 4

For me I looking for something I depend on it and know it will be around for a while. Not something that slap multiple dies together to claim their bragging rights for more core.

Competition is good, because it keeps Intel on it feat, I think if AMD did not purchase ATI they would be no competition for Intel at all in x86 market. But it not smart also - would anybody be serious about placing AMD Graphics Card on Intel CPU.

wolfemane - Tuesday, September 26, 2017 - link

Hate to burst your foreign bubble but companies are cheap in terms of staying within budgets. Specially up and coming corporations. I'll use the company I work for as an example. Fairly large print shop with 5 locations along the US West coast that's been in existence since the early 70's. About 400 employees in total. Server, pcs, and general hardware only sees an upgrade cycle once every 8 years (not all at once, it's spread out). Computer hardware is a big deal in this industry, and the head of IT for my company Has done pretty well with this kind of hardware life cycle. First off, macs rule here for preprocessing, we will never see a Windows based pc for anything more than accessing the Internet. But when it comes to our servers, it's running some very old xeons.

As soon as the new fiscal year starts, we are moving to an epyc based server farm. They've already set up and established their offsite client side servers with epyc servers and IT absolutely loves them.

But why did I bring up macs? The company has a set budget for IT and this and the next fiscal year had budget for company wide upgrades. By saving money on the back end we were able to purchase top end graphic stations for all 5 locations (something like 30 new machines). Something they wouldn't have been able to do to get the same layout with Intel. We are very much looking forward to our new servers next year.

I'd say AMD is doing more than keeping Intel on their feet, Intel got a swift kick in the a$$ this year and are scrambling.