The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more poignant benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio due to technical reasons that we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

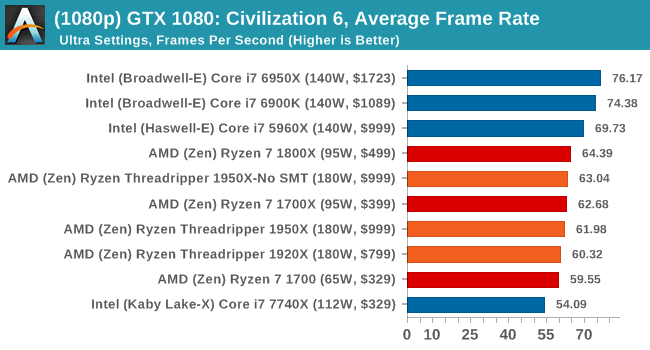

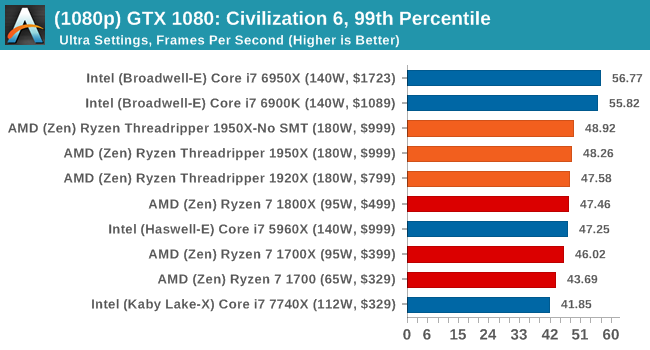

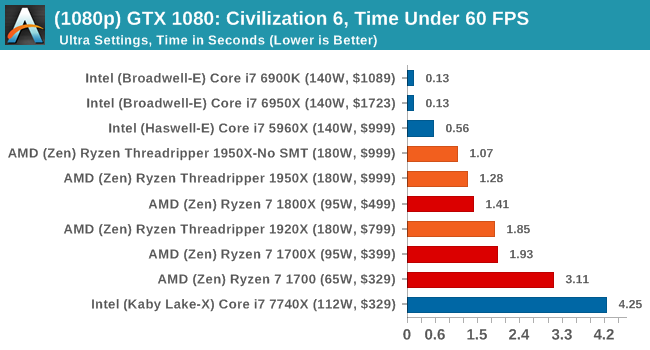

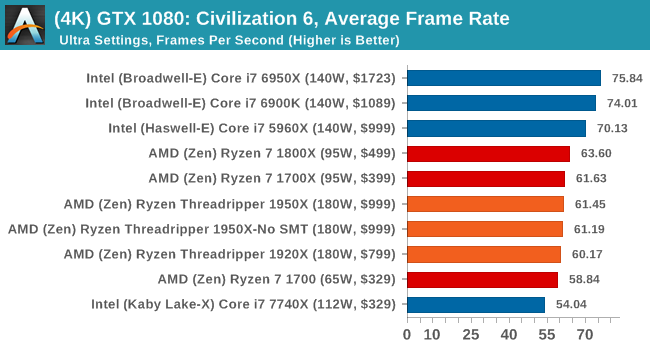

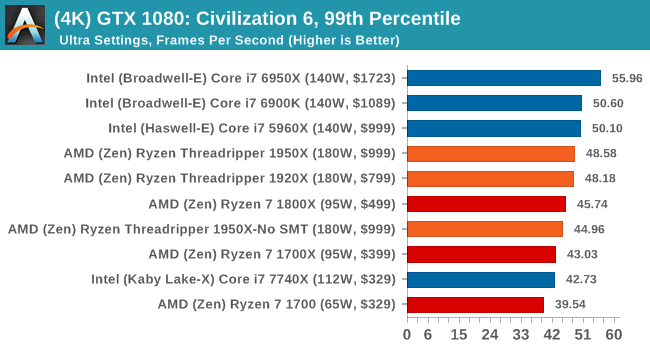

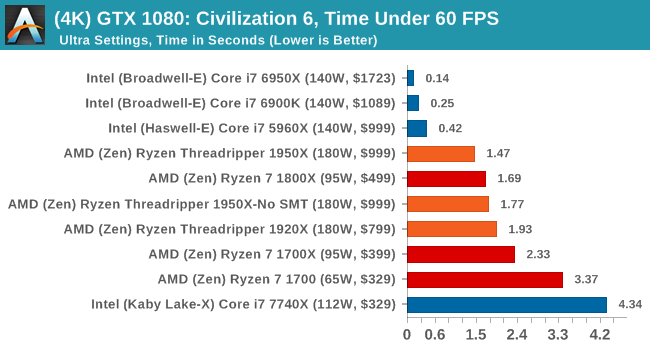

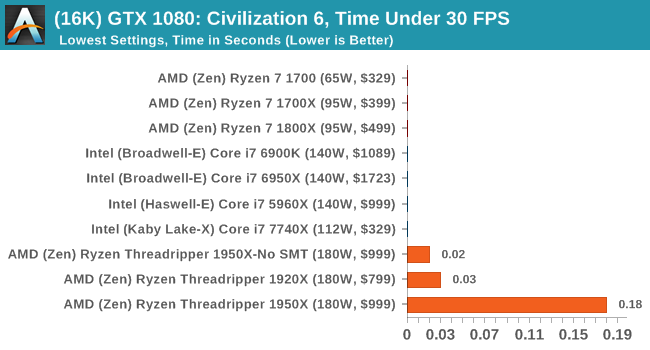

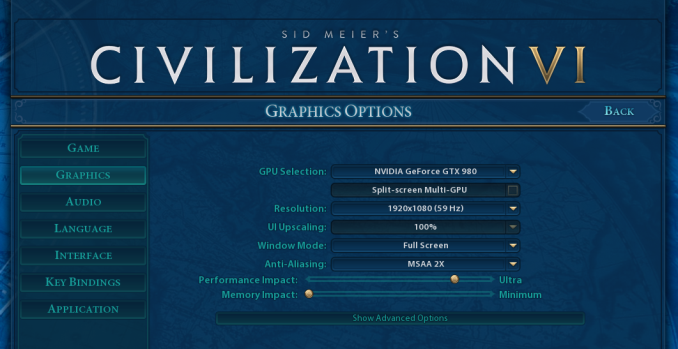

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark in position four for performance (ultra) and 0 on memory, with MSAA set to 2x.

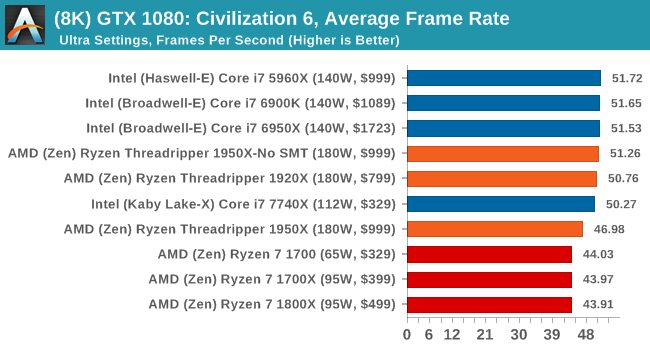

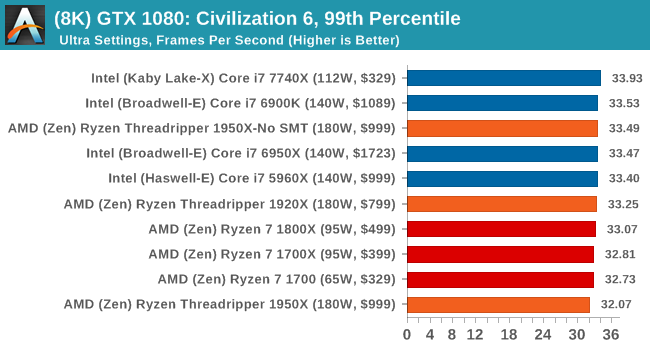

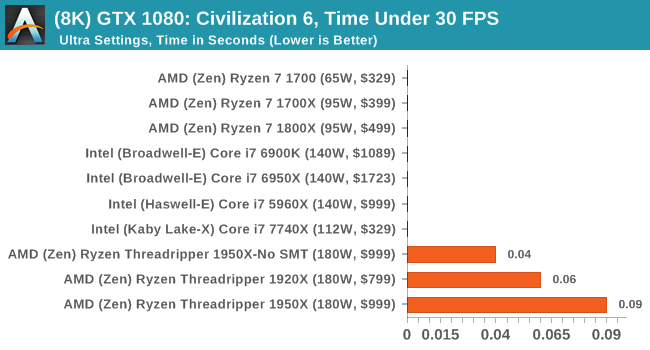

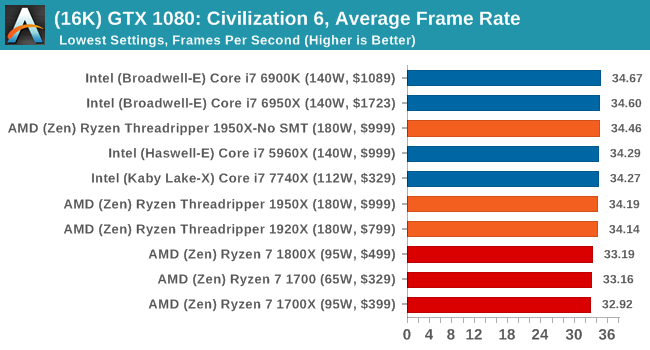

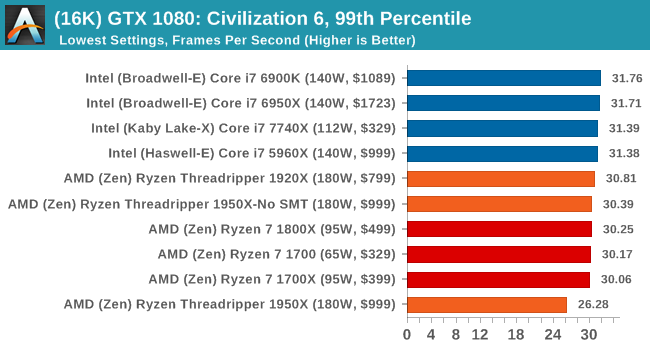

For reviews where we include 8K and 16K benchmarks on our GTX 1080 (Civ6 allows us to benchmark extreme resolutions on any monitor), we run the 8K tests similarly to the 4K tests, but the 16K tests are set to the lowest option for Performance.
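As a rough summary, the per-resolution settings described above can be written down as a small lookup table. This is only a sketch: the class and field names are hypothetical, the 8K run is assumed to use exactly the 4K settings, and the 16K run is assumed to change only the Performance slider, since the text does not spell out the rest.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Civ6Settings:
    """Hypothetical container for the three in-game sliders."""
    msaa: int                # MSAA sample count (2 means 2x)
    performance_impact: int  # detail slider, 0 (lowest) to 5 (extreme)
    memory_impact: int       # texture size slider, 0 (lowest) to 5 (extreme)


# Settings stated in the text for 1080p and 4K.
BASE = Civ6Settings(msaa=2, performance_impact=4, memory_impact=0)

SETTINGS = {
    "1080p": BASE,
    "4K": BASE,
    # Assumption: "similar to the 4K tests" is taken as identical settings.
    "8K": BASE,
    # Assumption: only Performance drops to its lowest option at 16K.
    "16K": replace(BASE, performance_impact=0),
}

for resolution, settings in SETTINGS.items():
    print(resolution, settings)
```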

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

8K

16K

ASUS GTX 1060 Strix 6G Performance

1080p

4K

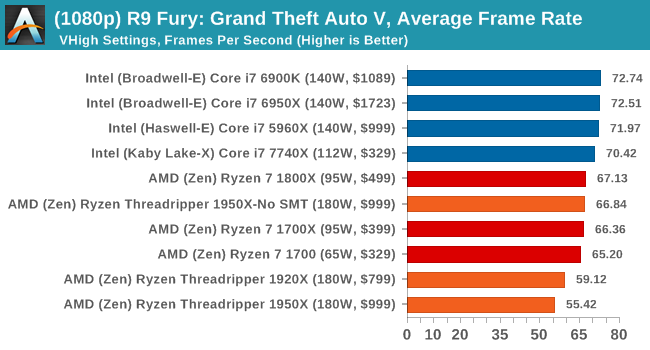

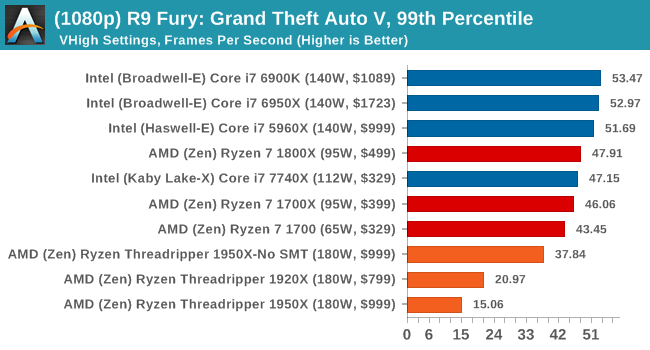

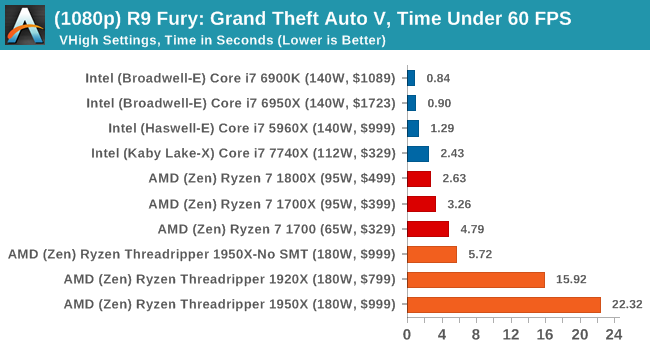

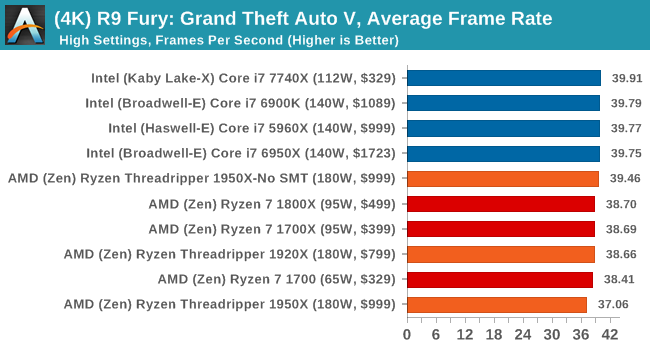

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

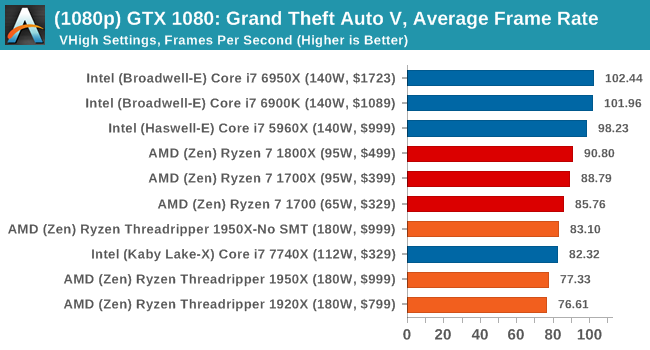

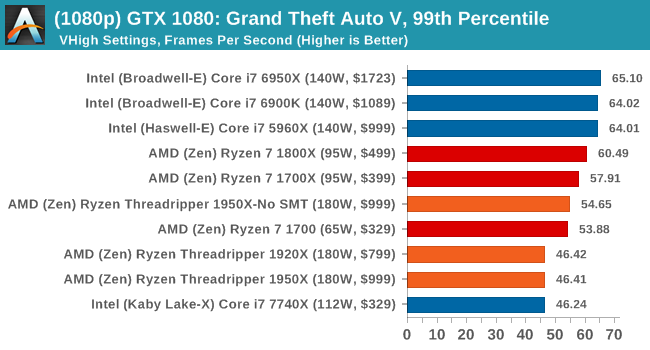

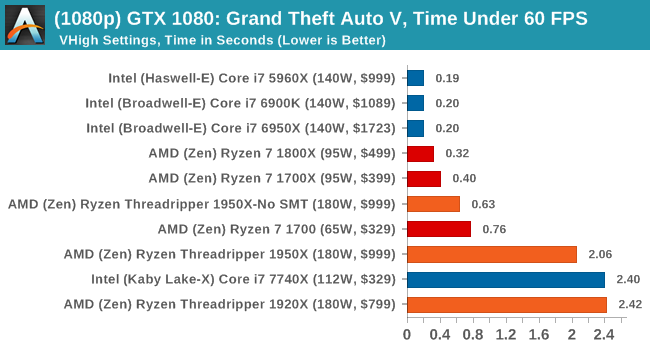

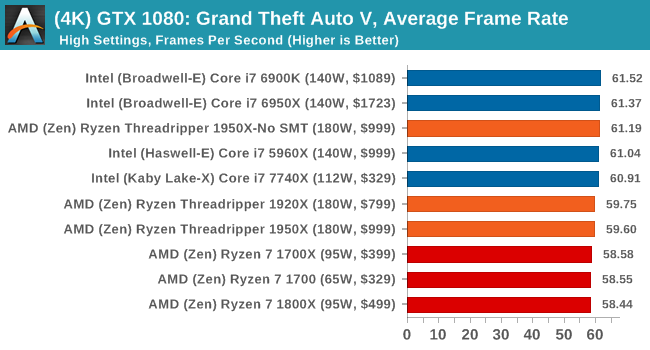

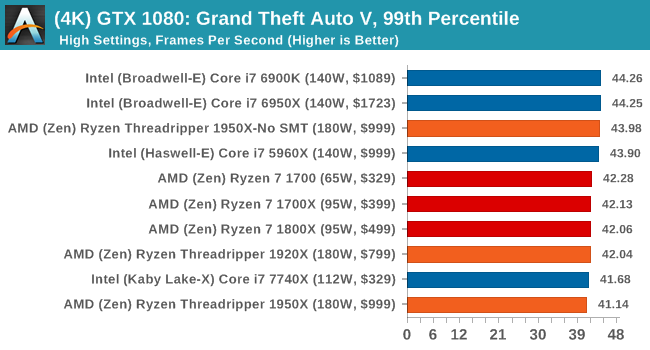

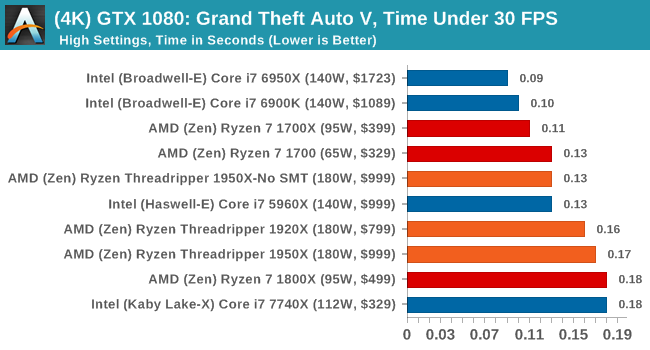

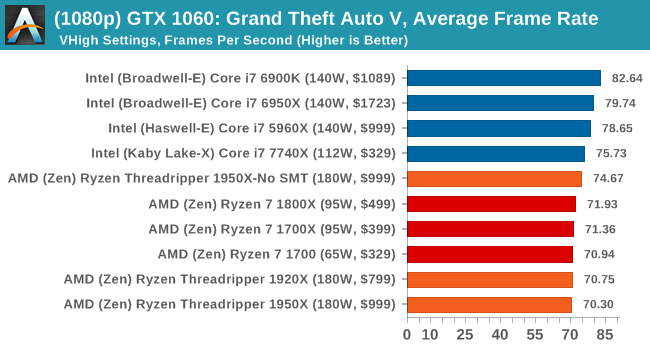

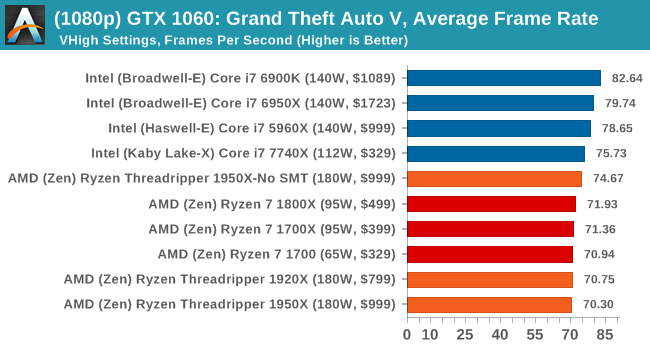

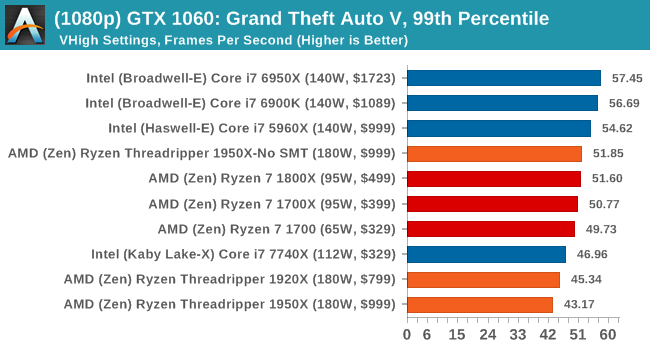

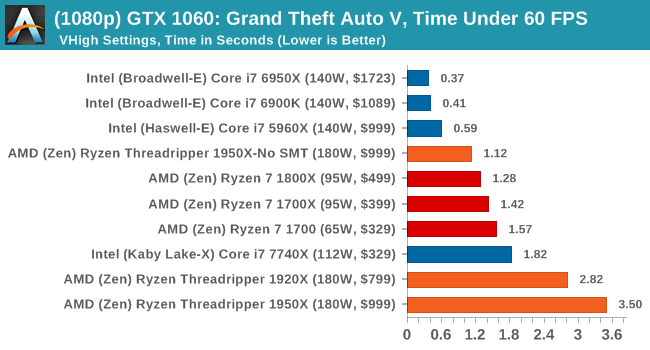

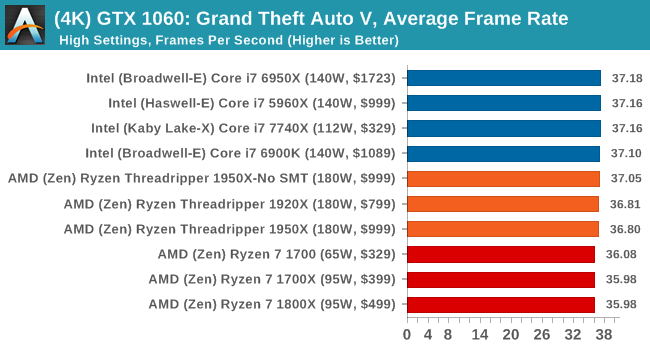

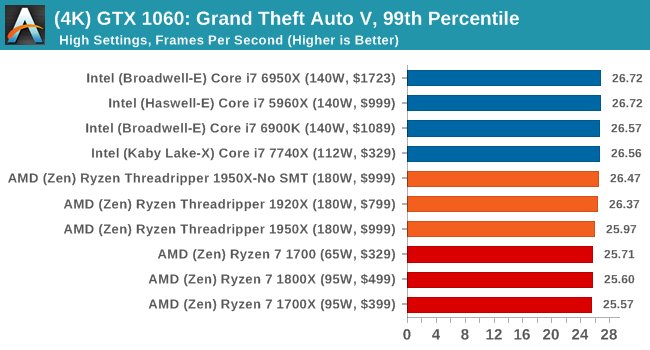

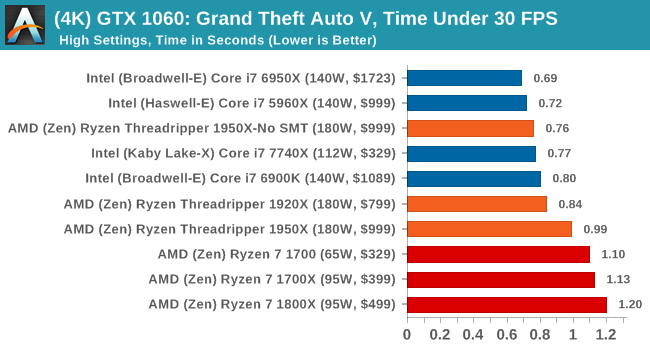

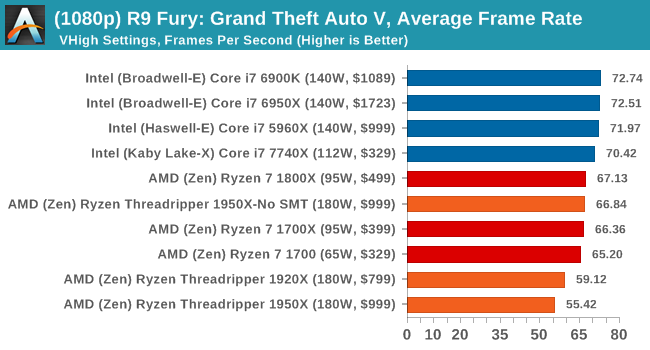

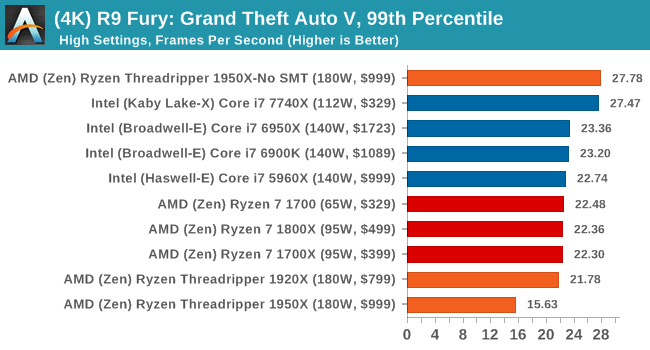

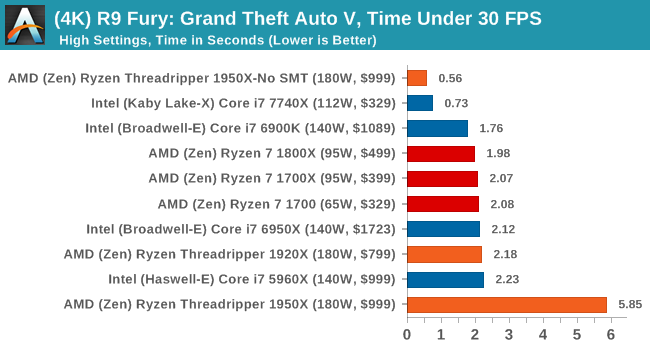

On the whole, the Threadripper CPUs perform as well as Ryzen does on most of the tests, although the Time Under analysis always seems to look worse for Threadripper.
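For readers unfamiliar with a Time Under style of metric, the idea can be sketched as follows. This is an illustration under stated assumptions, not our actual tooling: it assumes the input is a list of per-frame render times in milliseconds, and that "time under" means the total time spent in frames slower than a given frame rate threshold. The function names are hypothetical.

```python
from typing import Sequence


def time_under_fps(frame_times_ms: Sequence[float], fps_threshold: float) -> float:
    """Return the seconds spent in frames slower than fps_threshold.

    Assumption: a frame counts as "under" the threshold when its render
    time is longer than 1000 / fps_threshold milliseconds.
    """
    if fps_threshold <= 0:
        raise ValueError("fps_threshold must be positive")
    limit_ms = 1000.0 / fps_threshold
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    return slow_ms / 1000.0


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Return the average frame rate over the whole run."""
    total_ms = sum(frame_times_ms)
    if total_ms <= 0:
        raise ValueError("frame times must sum to a positive duration")
    return len(frame_times_ms) * 1000.0 / total_ms


# Made-up frame times purely for illustration, not measured data.
sample = [10.0, 10.0, 40.0, 20.0]
print(average_fps(sample))
print(time_under_fps(sample, 30.0))
print(time_under_fps(sample, 60.0))
```

A sketch like this also shows why two CPUs with similar averages can look different on a Time Under graph: a handful of long frames barely moves the average but adds directly to the time spent below the threshold.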

347 Comments

drajitshnew - Thursday, August 10, 2017 - link

You have written that "This socket is identical (but not interchangeable) to the SP3 socket used for EPYC,". Please, clarify.

I was under the impression that if you drop an epyc in a threadripper board, it would disable 4 memory channels & 64 PCIe lanes as those will simply not be wired up.

Deshi! - Friday, August 11, 2017 - link

No, AMD have stated that won't work. It's probably not hardware incompatible, but they probably put microcode on the CPUs so that if it doesn't detect it's a Ryzen CPU it doesn't work. There might also be differences in how the cores are wired up on the fabric since it's 2 cores instead of 4. Remember, Threadripper has only 2 physical dies that are active. On Epyc all processors are 4 dies with cores on each die disabled right down to the 8 core part (2 enabled on each physical die).

Deshi! - Friday, August 11, 2017 - link

Wish there was an edit function..... but to add to that, if you pop in an Epyc processor, it might go looking for those extra lanes and memory busses that don't exist on Threadripper boards, hence cause it not to function.

pinellaspete - Thursday, August 10, 2017 - link

This is the second article where you've tried to start an acronym called SHED (Super High End Desktop) in referring to AMD Threadripper systems. You also say that Intel systems are HEDT (High End Desktop) when in all reality both AMD and Intel are HEDT. It is just that Intel has been keeping the core count low on consumer systems for so long you think that anything over a 10 core system is unusual.

AMD is actually producing a HEDT CPU for $1000 and not inflating the price of a HEDT CPU and bleeding their customers like Intel was doing with the i7-6950X CPU for $1750. HEDT CPUs should cost about $1000 and performance should increase with every generation for the same price, not relentlessly jacking the price as Intel has done.

HEDT should be increasing in performance every generation and you prove yourself to be Intel biased when something finally comes along that beats Intel's butt. Just because it beats Intel you want to put it into a different category so it doesn't look like Intel fares as bad. If we start a new category of computers called SHED what comes next in a few years? SDHED? Super Duper High End Desktop?

Deshi! - Friday, August 11, 2017 - link

There's a good reason for that. Intel is not just inflating the cost because they want to. It literally costs them much more to produce their chips because of the monolithic die approach vs AMD's modular approach. AMD's yields are much better than Intel's in the higher core counts. Intel will not be able to match AMD's prices and still make significant profit unless they also adopt the same approach.

fanofanand - Tuesday, August 15, 2017 - link

"HEDT CPUs should cost about $1000"

That's not how free markets work. Companies will price any given product at their maximum profit. If they can sell 10 @ $2000 or 100 at $1000 and it costs them $500 to produce, they would make $15,000 selling 10 and $50,000 selling 100 of them. Intel isn't filled with idiots, they priced their chips at whatever they thought would bring the maximum profits. The best way for the consumer to protest prices that we believe are higher than the "right" price is to not buy them. The companies will be forced to reduce their prices to find the market equilibrium. Stop complaining about Intel's gouging, vote with your wallet and buy AMD. Or don't, it's up to you.

Stiggy930 - Thursday, August 10, 2017 - link

Honestly, the review is somewhat disappointing. For a pro-sumer product, there is no MySQL/PostgreSQL benchmark. No compilation test under Linux environment. Really?

name99 - Friday, August 11, 2017 - link

"In an ideal world, all software would be NUMA-aware, eliminating any concerns over the matter."

Why? This is an idiotic statement, like saying that in an ideal world all software would be aware of cache topology. In an actual ideal world, the OS would handle page or task migration between NUMA nodes transparently enough that almost no app would even notice NUMA, and even in a non-ideal world, how much does it actually matter?

Given the way the tech world tends to work ("OMG, by using DRAM that's overclocked by 300MHz you can increase your Cinebench score by .5% !!! This is the most important fact in the history of the universe!!!") my suspicion, until proven otherwise, is that the amount of software for which this actually matters is pretty much negligible and it's not worth worrying about.

cheshirster - Friday, August 11, 2017 - link

Anandtech's power and compiling tests are completely out of line with other reviewers' results.

Still hiding poor Skylake-X gaming results.

Most of the tests are completely out of that 16-core CPU target workloads.

2400 memory used for tests.

Absolutely zero perf/watt and price/perf analysis.

Intel bias is over the roof here.

Looks like I'm done with Anandtech.

Hurr Durr - Friday, August 11, 2017 - link

Here`s your pity comment.