The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Ashes of the Singularity Escalation

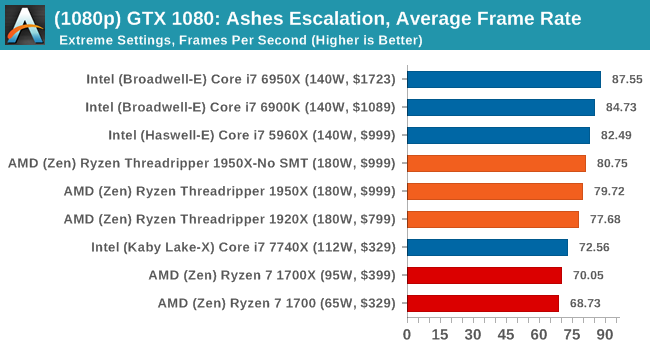

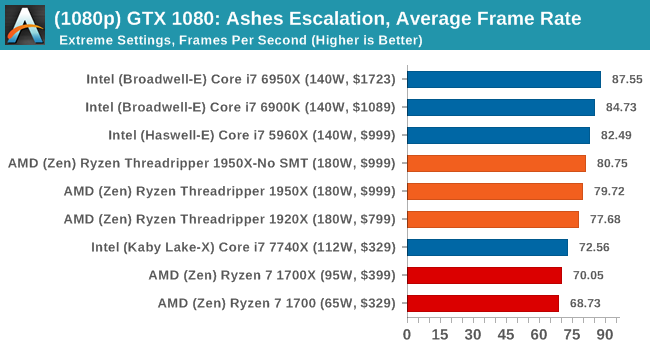

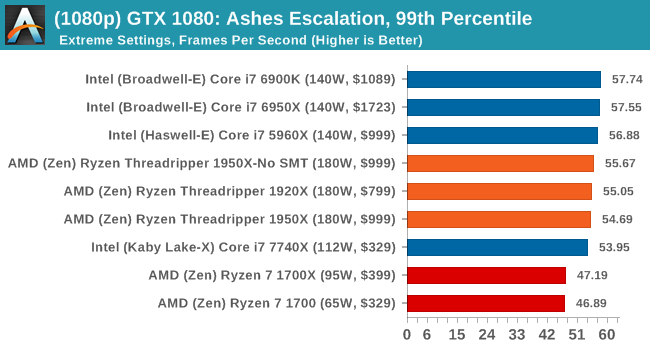

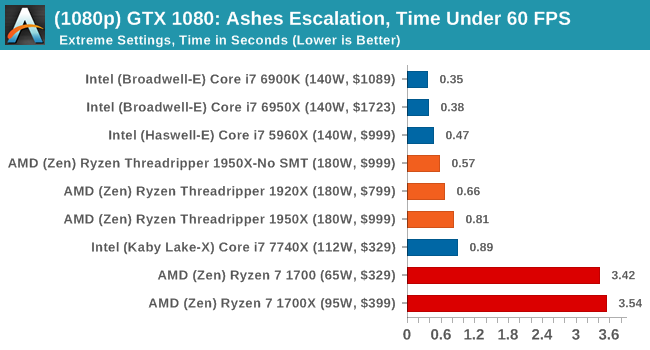

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively explore as many of DirectX12's features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to work with substantial unit depth and effects. Other RTS titles had to rely on combined draw calls to achieve this, which made some combined unit structures ultimately very rigid.

Stardock clearly understands the importance of an in-game benchmark, ensuring that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run a fixed v2.11 version of the game due to some peculiarities of the splash screen added after the merger with the standalone Escalation expansion, and have an automated tool to call the benchmark on the command line. (Prior to v2.11, the benchmark also supported 8K/16K testing; however, v2.11 has odd behavior which nukes this.)

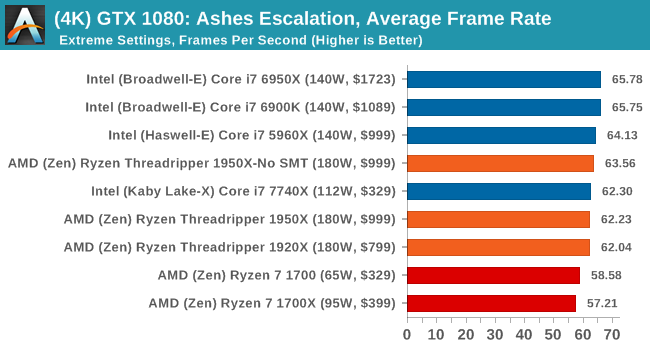

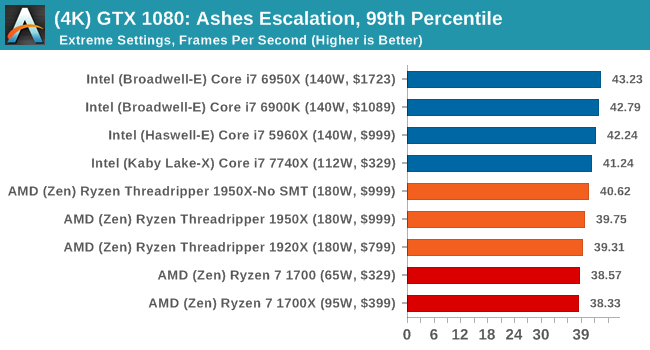

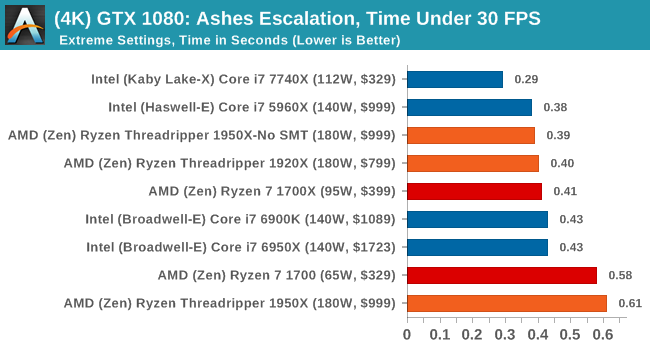

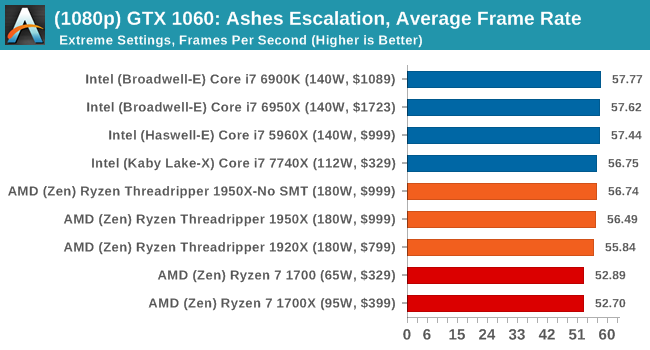

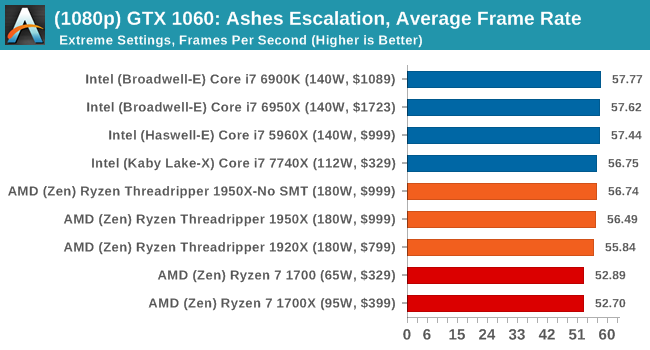

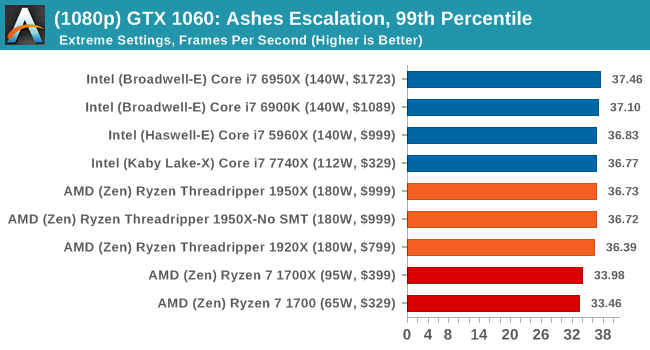

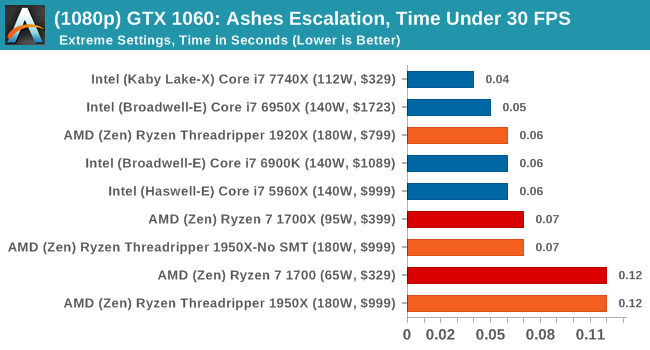

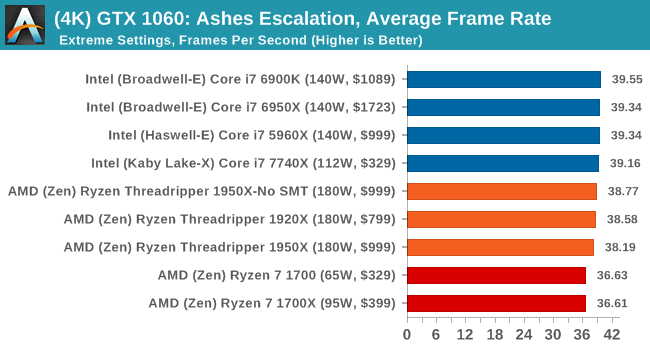

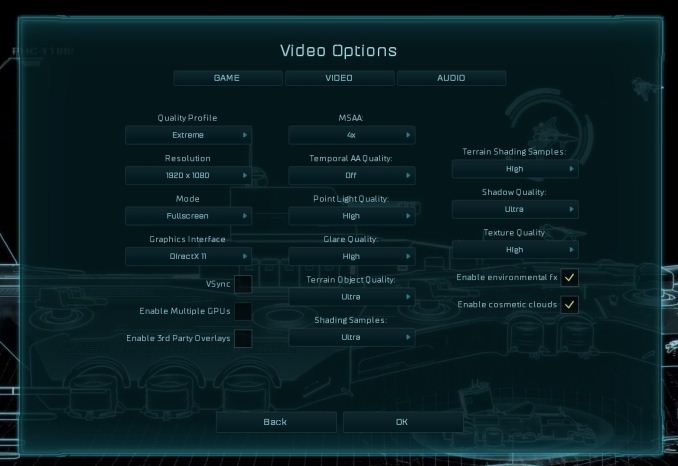

At both 1920x1080 and 4K resolutions, we run the same settings. Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at Extreme settings, and take the frame-time output for our average, percentile, and Time Under analysis.
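As a rough illustration of how a frame-time log can be reduced to those three kinds of numbers, here is a minimal Python sketch. It is not the tool used for this review: the function name `summarize_frame_times`, the nearest-rank percentile method, and the 60/30 FPS thresholds are all assumptions made for the example, and the input is assumed to be a plain list of per-frame render times in milliseconds.

```python
def summarize_frame_times(frame_times_ms, percentile=99, thresholds_fps=(60, 30)):
    """Reduce per-frame render times (ms) to average FPS, a percentile FPS,
    and the time spent on frames slower than each FPS threshold.

    Hypothetical helper for illustration; the definitions used here
    (nearest-rank percentile, summed slow-frame time) are assumptions.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average FPS: frames rendered divided by total elapsed seconds.
    avg_fps = 1000.0 * n / total_ms

    # Nearest-rank percentile of frame time, using integer ceiling division
    # to avoid floating-point rounding in the rank calculation.
    ordered = sorted(frame_times_ms)
    rank = max(1, -(-percentile * n // 100))
    pct_frame_ms = ordered[rank - 1]
    pct_fps = 1000.0 / pct_frame_ms

    # Time under: total seconds spent rendering frames that took longer
    # than the frame-time budget implied by each FPS threshold.
    time_under_s = {}
    for fps in thresholds_fps:
        budget_ms = 1000.0 / fps
        slow_ms = sum(t for t in frame_times_ms if t > budget_ms)
        time_under_s[fps] = slow_ms / 1000.0

    return {
        "avg_fps": avg_fps,
        "percentile_fps": pct_fps,
        "time_under_s": time_under_s,
    }


if __name__ == "__main__":
    # Synthetic data: 98 fast frames plus two slow ones.
    sample = [10.0] * 98 + [20.0, 40.0]
    print(summarize_frame_times(sample))
```

The percentile is computed on frame times rather than on instantaneous FPS so that a high percentile corresponds to the slowest frames, which is the usual reason for reporting it alongside the average.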

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

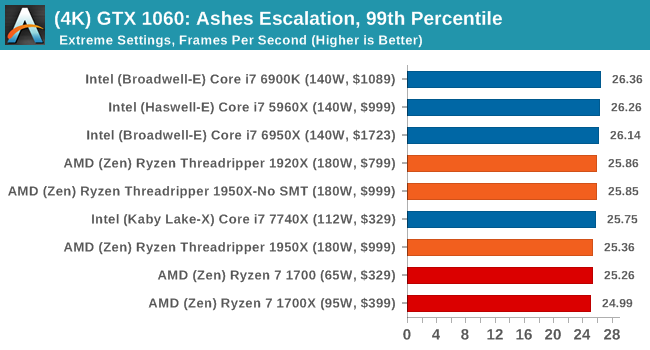

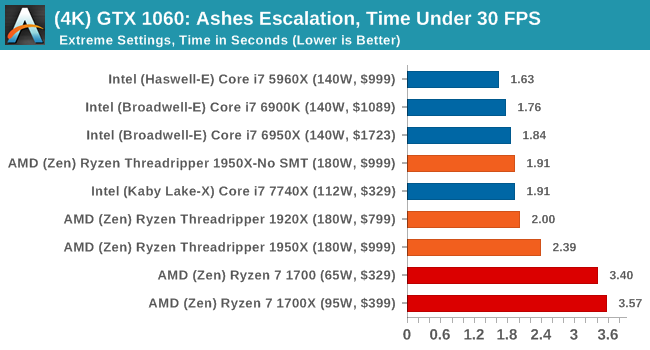

ASUS GTX 1060 Strix 6G Performance

1080p

4K

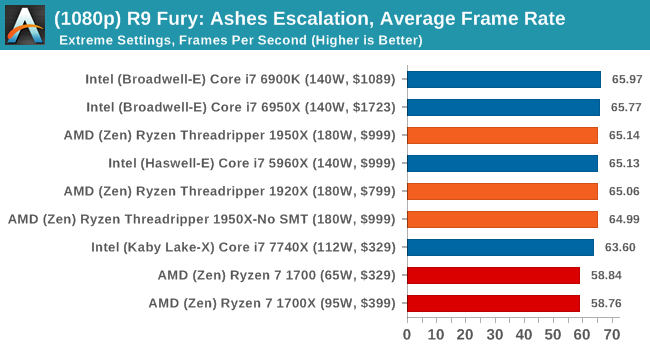

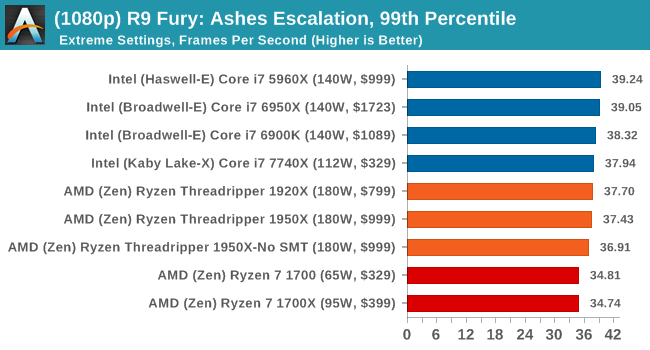

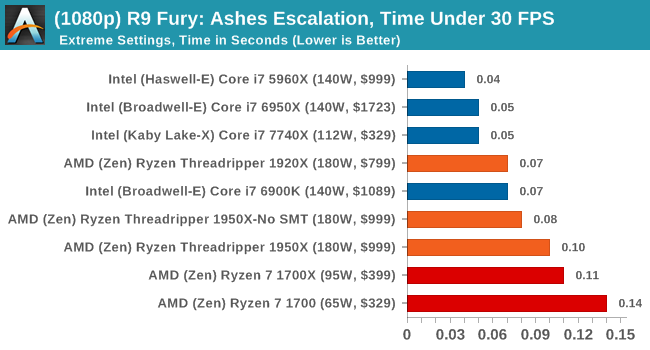

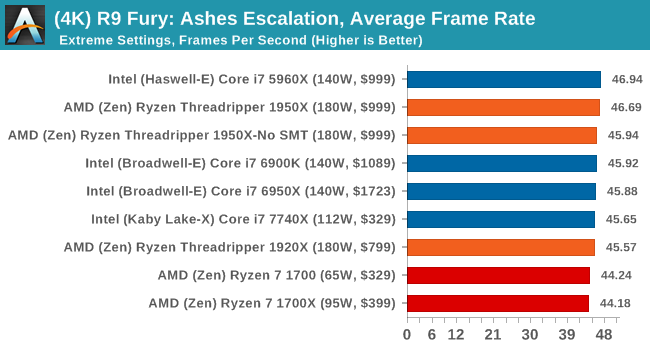

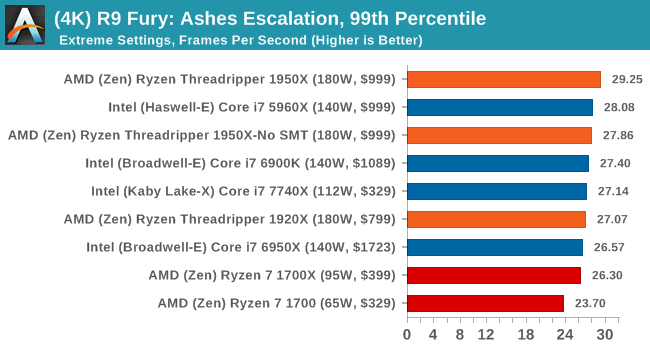

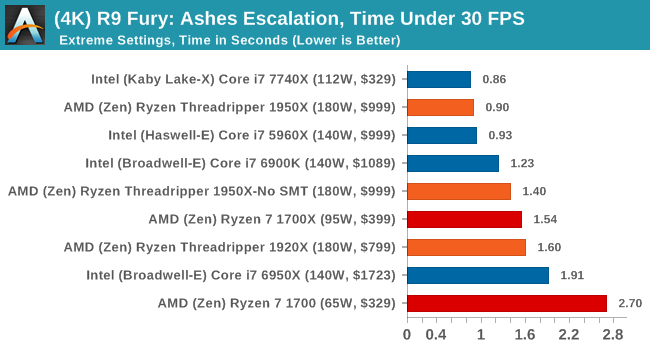

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

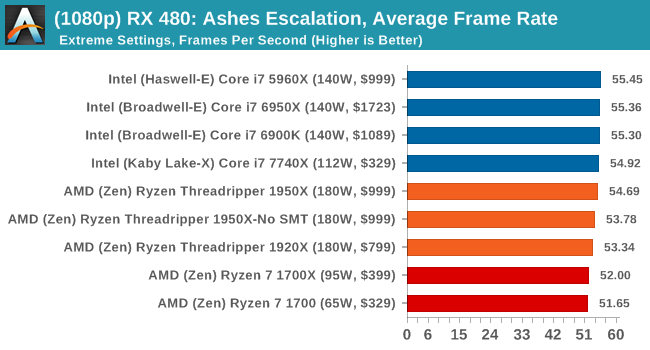

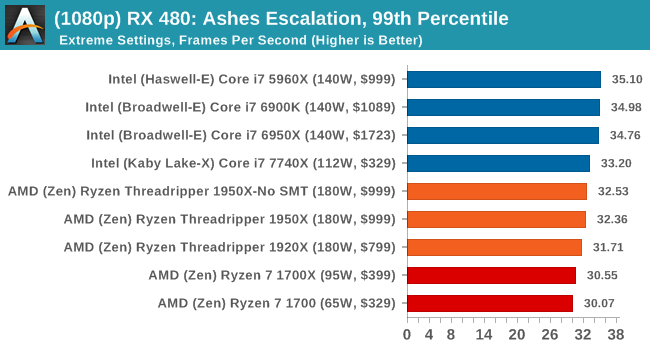

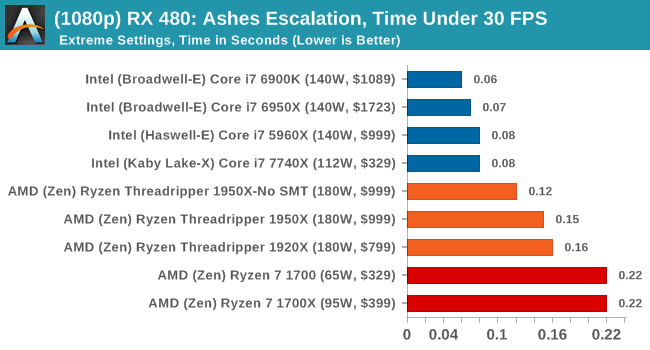

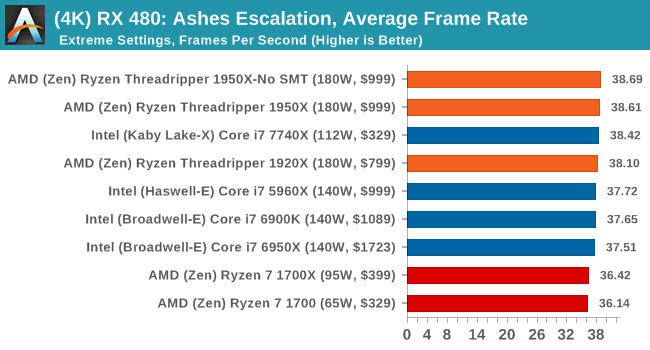

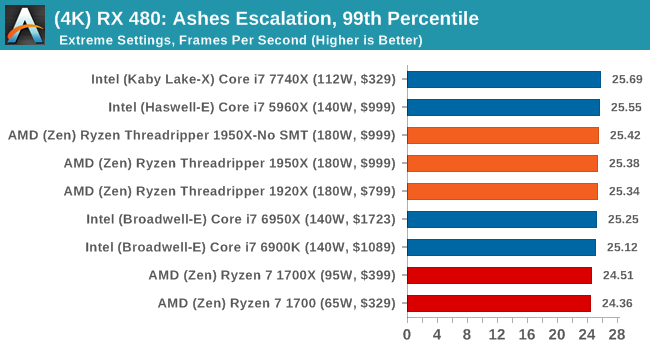

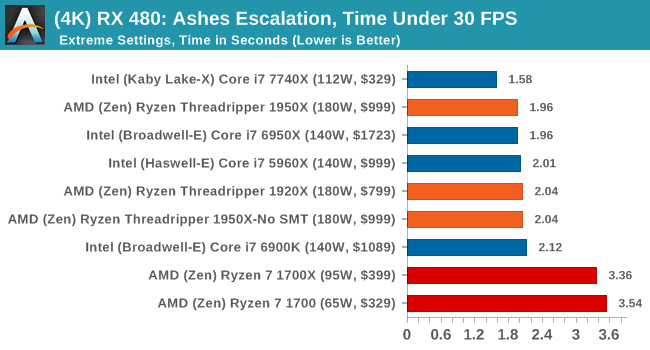

Sapphire Nitro RX 480 8G Performance

1080p

4K

AMD gets in the mix a lot with these tests, and in a number of cases pulls ahead of the Ryzen chips in the Time Under analysis.

347 Comments

BOBOSTRUMF - Friday, August 11, 2017 - link

Actually Intel's 140 can consume more than 210 if You want the top unrestricted performance limited. Read tomshardware review

Filiprino - Thursday, August 10, 2017 - link

How comes WinRAR is faster with the 10 core Broadwell than with the 10 core Skylake?

What did they change on Cinebench going from 10 to 11.5? Threadripper is the faster CPU in Cinebench 10, but in the newer one it is not. Then again Cinebench 15 shows TR as the faster CPU. Is this benchmark reliable?

How comes Chromium compilation is so slow? Others have pointed out they get much better scaling (linear speedup). That makes sense because compilation basically consists in launching isolated processes (compiler instances). Is this related with the segfaulting problem under GNU/Linux systems?

For encoding I would start to use FFmpeg when benchmarking so many cores. In my brain lies a memory of FFmpeg being faster than Handbrake for the same number of cores. Maybe the GUI loop interrupts the process in a performance-unfriendly way. Too much overhead. HPC workloads can suffer even from the network driver having too many interrupts (hence, Linux tickless configuration).

I have read SYSMARK Results and I find strange that TR media results are slower than data, being TR slower than Intel in media and faster than Intel in data. Isn't SYSMARK from BAPCo (http://www.pcworld.com/article/3023373/hardware/am... You already point it out on the article, sorry.

How comes R9 Fury in Shadow of Mordor has AMD and Intel CPUs running consistently at two different frame rates (~95 vs ~103)?

The same but with the GTX 1080. Both cases happen regardless of the Intel architecture (Haswell, Broadwell and Skylake all have the same FPS value).

What happens with NVIDIA driver on Rocket League? Bad caching algorithm (TR has more cores/threads -> more cache available to store GPU command data)? You say you had issues but, what are your thoughts?

How comes GTA V has those Under 60 and 30 FPS graphs knowing that the game is available for PS4 and XBox One (it has been already optimized for two CCX CPU, at least there is a version for that case)? Nevertheless, with NVIDIA cards, 2 seconds out of 90 is not that much.

What I can think is that all these benchmarks are programmed using threading libraries from the "good old times" due to bad scaling. And in some cases there is architecture-specific targeted code. I also include in my conception the small dataset being used. I also would not make a case out of a benchmark programmed with code having false sharing (¡:O!)

Currently for gaming, it seems that the easiest way is to have a Virtual Machine with PCIe passthrough pinned to one of the MCM dies.

As a suggestion to Anandtech, I would like to see more free (libre) software being used to measure CPU performance, compiling the benchmarks from source against the target CPU architecture. Something like Phoronix. Maybe you could use PTS (Phoronix Test Suite).

Filiprino - Thursday, August 10, 2017 - link

Positive things: ThreadRipper is under its TDP consumption. Intel is more power hungry. The Intel 16-core might go through the roof in power consumption.

Good gaming performance. Intel is generally better, but TR still offers a beefy CPU for that too, losing a few frames only.

Strong rendering performance.

Strong video encoding performance.

When you talk about IPC, it would be useful to measure it with profiling tools, not just getting "points", "milliseconds" and "seconds".

Seeing how these benchmarks do not scale by much beyond 10 cores you might realize software has to get better.

Chad - Thursday, August 10, 2017 - link

Second ffmpeg test (pretty please!)

mapesdhs - Thursday, August 10, 2017 - link

Ian, a query about the CPU Legacy Tests: why do you reckon does the 1920X beat both 1950X and 1950X-G for CB 11.5 MT, yet the latter win out for CB 10 MT? Is there a max-thread limit in V11.5? Filiprino asked much the same above.

"...and so losing half the threads in Game Mode might actually be a detriment to a workstation implementation."

Isn't that the whole point though? For most workstation tasks, don't use Game Mode. There will be exceptions of course, but in general...

Btw, where's C-ray? ;)

Ian.

Da W - Thursday, August 10, 2017 - link

ALL OF YOU COMPLAINERS: START A TECH REVIEW WEBSITE YOURSELVES AND STFU!

hansmuff - Thursday, August 10, 2017 - link

Don't read the comments. Also, a lot of the "complaints" are read by Ryan and he actually addresses them and his articles improve as a result of criticism. He's never been bad, but you can see an ascension in quality over time, along with his partaking in critical commentary.

IOW, we don't really need a referee.

hansmuff - Thursday, August 10, 2017 - link

And of course I mean Ian, not Ryan.

mapesdhs - Friday, August 11, 2017 - link

It is great that he replies at all, and does so to quite a lot of the posts too.

Kepe - Thursday, August 10, 2017 - link

Wait a second, according to AMD and all the other articles about the 1950X and Game Mode, game mode disables all the physical cores of one of the CPU clusters and leaves SMT on, so you get 8 cores and 16 threads. It doesn't just turn off SMT for a 16 core / 16 thread setup.

AMD's info here: https://community.amd.com/community/gaming/blog/20...