The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Silicon, Glue, & NUMA Too

In the Ryzen family, AMD designed an 8 core silicon die known as a Zeppelin die. This consisted of two core complexes (CCX) of four cores each, with each CCX having access to 8 MB of L3 cache. The Zeppelin die had access to two DRAM channels, and was fixed with 16 PCIe lanes for add-in cards. With Threadripper, AMD has doubled up the silicon.

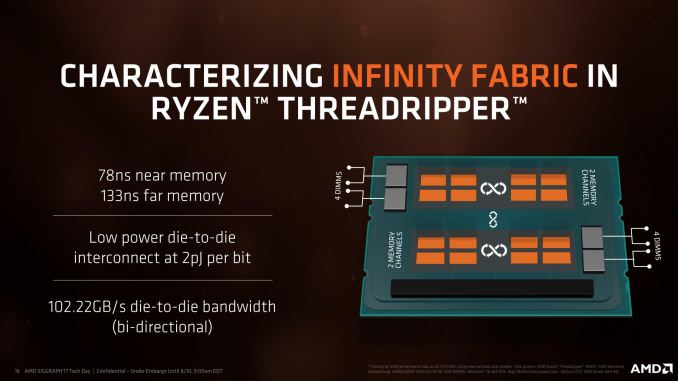

If you were to delid a Threadripper CPU, you would actually see four silicon dies, similar to what an EPYC processor would have, making Threadripper a Multi-Chip Module (MCM) design. Two of these are reinforcing spacers – empty silicon with no use other than to help distribute the weight of the cooler and assist in cooling. The other two dies (in opposite corners for thermal performance and routing) are basically the same Zeppelin dies as Ryzen, containing eight cores each and having access to two memory channels each. They communicate through Infinity Fabric, which AMD lists as 102 GB/s die-to-die bandwidth (full duplex bidirectional), along with 78 ns to reach the near memory (DRAM connected to the same die) and 133 ns to reach the far memory (DRAM on another die). We confirmed those numbers on DDR4-2400 memory, and measured 65 ns and 108 ns respectively using DDR4-3200.

Despite this AMD slide showing two silicon dies, there are four units of silicon in the package. Only two of the dies are active, so AMD has 'simplified' the diagram.

By comparison, EPYC lists die-to-die bandwidth as 42.6 GB/s at DDR4-2667. This is because EPYC runs fabric links to three dies internally and one die externally (on the next socket), which uses up all the links available. Each die in Threadripper only has to communicate with one other die, so it has more flexibility. To that extent, we’re under the impression that Threadripper is using two of these links at 10.4 GT/s, based on the following arithmetic:

- Die to Die for EPYC is quoted as 42.6 GB/s at DDR4-2667

- Die to Die for Threadripper is quoted as 102.2 GB/s at DDR4-3200

- 42.6 GB/s * 2 links * 3200/2667 = 102.2 GB/s

- 42.6 GB/s * 3 links * 3200/2667 at 8.0 GT/s = 115.8 GB/s (too high)

- 42.6 GB/s * 3 links * 3200/2667 at 6.4 GT/s = 92.6 GB/s (too low)
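The two-link estimate in the list above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic: the constant and function names are ours, and it assumes that fabric bandwidth scales linearly with the DRAM data rate, which is the same assumption the list makes.

```python
# Figures quoted in the text above.
EPYC_LINK_GBPS = 42.6   # EPYC die-to-die bandwidth per link, quoted at DDR4-2667
EPYC_DDR_RATE = 2667    # MT/s
TR_DDR_RATE = 3200      # MT/s
TR_QUOTED_GBPS = 102.2  # quoted Threadripper die-to-die bandwidth


def scaled_bandwidth(links: int, ddr_rate: int = TR_DDR_RATE) -> float:
    """Scale the per-link EPYC figure by link count and memory speed.

    Assumes bandwidth scales linearly with the DRAM data rate.
    """
    return EPYC_LINK_GBPS * links * ddr_rate / EPYC_DDR_RATE


two_links = scaled_bandwidth(2)
print(f"2 links at DDR4-{TR_DDR_RATE}: {two_links:.1f} GB/s "
      f"(quoted: {TR_QUOTED_GBPS} GB/s)")
```

Two links at the higher memory speed land on the quoted figure to one decimal place, which is why the two-link explanation looks the most plausible.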

This configuration for AMD is essentially what the industry calls a NUMA configuration: non-uniform memory access. If left as is, it means that code cannot rely on a regular (and low) latency between requesting something from DRAM and receiving it. This can be an issue for high-performance code, which is why some software is designed to be NUMA-aware, so that it can intelligently pin the memory it needs to the closest DRAM controller, lowering potential bandwidth but prioritizing latency.

NUMA is nothing new in the x86 space. Once CPUs began shipping with on-die memory controllers rather than using an off-die memory controller in the Northbridge, NUMA became an inherent part of multi-socket systems. In this respect AMD was the leader here right from the start, as they beat Intel to on-die memory controllers for x86 CPUs by years. So AMD has been working with NUMA for years, and similarly NUMA has been the state of affairs for Intel's multi-socket server systems for almost a decade.

What's new with Threadripper however is that NUMA has never been a consumer concern. MCM consumer CPUs have been few and far between, and we'd have to go all the way back to the Core 2 Quad family to find a CPU with cores on multiple dies, which was a design that predates on-die memory controllers for Intel. So with Threadripper, this is the very first time that consumers – even high-end consumers – have been exposed to NUMA.

But more importantly, consumer software has been similarly unexposed to NUMA, so almost no software is able to take its idiosyncrasies into account. The good news is that while NUMA changes the rules of the game a bit, it doesn't break software. NUMA-aware OSes do the heavy lifting here, helping unaware software by keeping threads and memory accesses together on the same NUMA node in order to ensure classic performance characteristics. The downside to this is that, much like an overprotective parent, the OS is going to discourage unaware software from using other NUMA nodes. In the case of Threadripper, that means discouraging applications from using the other die and its 8 cores.

At a hardware level, Threadripper is natively two NUMA nodes

In an ideal world, all software would be NUMA-aware, eliminating any concerns over the matter. From a practical perspective however, software is slow to change and it seems unlikely that NUMA-style CPUs are going to become common in the future. Furthermore NUMA can be tricky to program for, especially in the case of workloads/algorithms that inherently struggle with "far" cores and memory. So the quirks of NUMA are never going to completely go away, and instead AMD has taken it upon themselves to manage the matter.

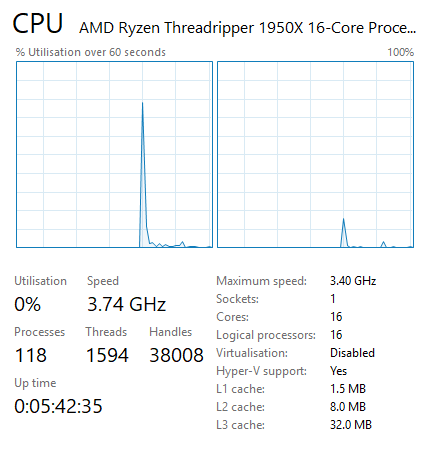

AMD has implemented BIOS switches and software switches in order to better support and control the NUMAness of Threadripper. By default, Threadripper actually hides its NUMA architecture. AMD instead runs Threadripper in a UMA configuration: a uniform memory access system where data can be placed in any DRAM and the return latency is variable (e.g. ~100 ns, averaging between 78 ns and 133 ns), in exchange for a high peak bandwidth. By presenting the CPU to the OS as a monolithic, single-domain design, memory bandwidth is maximized and all applications (NUMA-aware and not) see all 16 cores as part of the same CPU. So for applications that are not NUMA-aware – and consequently would have been discouraged by the OS in NUMA mode – this maximizes the number of cores/threads they can use and the memory bandwidth they can use.
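As a rough illustration of where a figure near 100 ns comes from, the sketch below weights the quoted near and far latencies by the fraction of accesses that hit the local die. The even 50/50 split used for UMA mode is our assumption for illustration, not a measured access pattern, and the function name is ours.

```python
NEAR_NS = 78.0   # quoted latency to DRAM attached to the same die
FAR_NS = 133.0   # quoted latency to DRAM attached to the other die


def expected_latency_ns(near_fraction: float) -> float:
    """Average latency when `near_fraction` of accesses hit near memory."""
    if not 0.0 <= near_fraction <= 1.0:
        raise ValueError("near_fraction must be between 0 and 1")
    return near_fraction * NEAR_NS + (1.0 - near_fraction) * FAR_NS


# Assumption: UMA mode spreads accesses evenly over both dies.
uma_estimate = expected_latency_ns(0.5)
# Best case for NUMA mode: every access stays on the local die.
numa_best_case = expected_latency_ns(1.0)

print(f"UMA estimate:   {uma_estimate:.1f} ns")
print(f"NUMA best case: {numa_best_case:.1f} ns")
```

The even split gives a midpoint a little above 100 ns, in the same region as the approximate figure quoted above.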

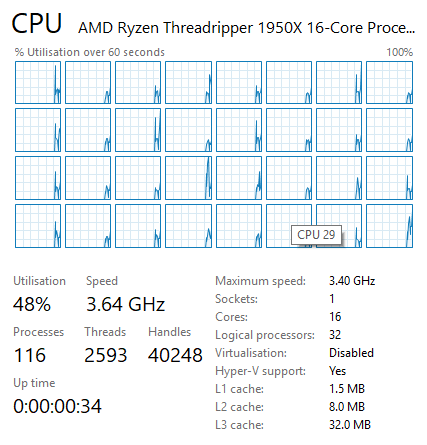

All 32 threads are exposed as part of a single monolithic CPU

The drawback to UMA mode is that because it's hiding how Threadripper really works, it doesn't allow the OS and applications to make fully informed decisions for themselves, and consequently they may not make the best decisions. Latency-sensitive NUMA-unaware applications that fare poorly with high core/memory latencies can struggle here if they use cores and memory attached to the other die. This is why AMD also allows Threadripper to be configured for NUMA mode, exposing its full design to the OS and resulting in separate NUMA domains for the two dies. This tells the OS to keep applications pinned to one die when possible, as previously discussed. This mode is vital for some software and some games, and we’ve tested it in this review.
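For readers curious what "pinned to one die" looks like from software, here is a minimal Linux-only sketch. It reads the CPU list that the kernel publishes for each NUMA node under `/sys/devices/system/node/` and restricts the current process to one node with `os.sched_setaffinity`. The helper names are ours, and this only pins threads: it relies on the kernel's usual preference for allocating memory near the running CPU rather than setting a memory policy explicitly.

```python
import os
from pathlib import Path


def parse_cpulist(text: str) -> set[int]:
    """Parse a Linux cpulist string such as '0-7,16-23' into CPU ids."""
    cpus: set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            start, end = part.split("-")
            cpus.update(range(int(start), int(end) + 1))
        else:
            cpus.add(int(part))
    return cpus


def node_cpus(node: int) -> set[int]:
    """Read the CPUs that belong to one NUMA node from sysfs (Linux only)."""
    path = Path(f"/sys/devices/system/node/node{node}/cpulist")
    return parse_cpulist(path.read_text())


def pin_to_node(node: int) -> set[int]:
    """Restrict the current process to the CPUs of a single NUMA node."""
    cpus = node_cpus(node)
    os.sched_setaffinity(0, cpus)  # pid 0 means the calling process
    return cpus
```

On a system running in NUMA mode, calling `pin_to_node(0)` would keep the process on the first node's cores. In UMA mode the OS sees a single node, so there is nothing to choose between.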

Overall, using a multi-silicon design has positives and negatives. The negatives end up being variable memory latency, variable core-to-core latency, and often redundancy in on-die units that don’t need to be repeated. As a result, AMD uses 400 mm²+ of silicon to achieve this, which can increase costs at the manufacturing level. By contrast, the positives are in silicon design and overall yields: being able to design a single piece of silicon and repeat it, rather than design several different floor plans which multiplies up the design costs, and having the (largely) fixed number of wafer defects spread out over many more smaller dies.
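The yield argument can be illustrated with the textbook Poisson yield model, in which the chance that a die has no defects falls off exponentially with its area. This is a generic sketch rather than AMD's own model: the defect density and both die areas below are illustrative assumptions, not figures from AMD or any foundry.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies expected to be defect-free under a Poisson model."""
    area_cm2 = die_area_mm2 / 100.0
    return math.exp(-defects_per_cm2 * area_cm2)


DEFECT_DENSITY = 0.2    # defects per cm^2 (illustrative assumption)
SMALL_DIE_MM2 = 200.0   # hypothetical small die
LARGE_DIE_MM2 = 400.0   # hypothetical monolithic die of twice the area

small = poisson_yield(SMALL_DIE_MM2, DEFECT_DENSITY)
large = poisson_yield(LARGE_DIE_MM2, DEFECT_DENSITY)

print(f"Small die yield: {small:.1%}")
print(f"Large die yield: {large:.1%}")
```

Under these assumptions a defect on the wafer scraps a small die rather than a large one, so a greater share of the silicon ends up usable, and good small dies can then be paired up in a package.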

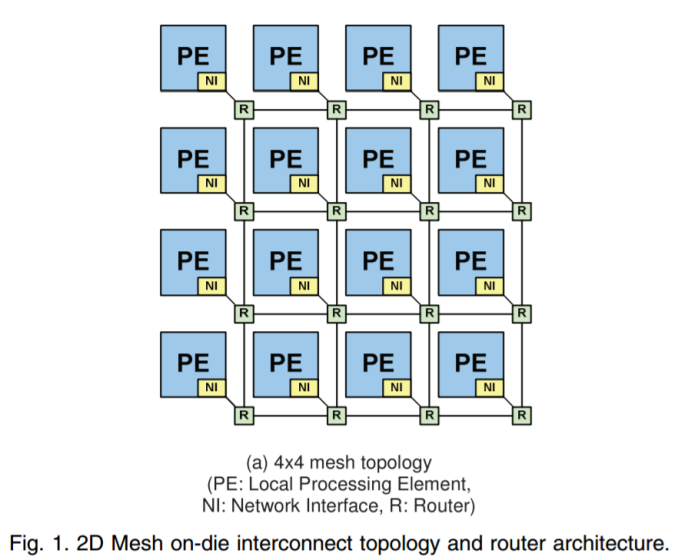

By contrast, Intel uses a single monolithic die for its Skylake-X processors: the LCC die up to 10-core and HCC die from 12-core up to 18-core. These use a rectangular grid of cores (3x4 and 5x4 respectively), with two of the segments reserved for the memory controllers. In order to communicate between the cores, Intel uses a networking mesh, which determines which direction the data needs to travel (up, down, left, right, or accepted into the core). We covered Intel’s MOdular Decoupled Crossbar (MoDe-X) methodology in our Skylake-X review, but the underlying concept is consistency. This mesh runs at 2.4 GHz nominally. Prior to Skylake-X, Intel implemented a ring topology, such that data would have to travel around the ring of cores to get to where it needed to go.
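To make the mesh-versus-ring comparison concrete, the sketch below counts hops on a generic 2D mesh and on a ring, and picks the next direction using simple dimension-ordered routing. This is a textbook illustration, not Intel's actual routing logic. The coordinate convention (rows increase downward), the bidirectional ring and all function names are our assumptions.

```python
def mesh_hops(src: tuple[int, int], dst: tuple[int, int]) -> int:
    """Hops between two (row, col) stops on a 2D mesh (Manhattan distance)."""
    return abs(src[0] - dst[0]) + abs(src[1] - dst[1])


def ring_hops(src: int, dst: int, stops: int) -> int:
    """Hops between two stops on a bidirectional ring, going the short way."""
    distance = abs(src - dst) % stops
    return min(distance, stops - distance)


def next_direction(cur: tuple[int, int], dst: tuple[int, int]) -> str:
    """Dimension-ordered routing: fix the column first, then the row."""
    if cur[1] != dst[1]:
        return "right" if dst[1] > cur[1] else "left"
    if cur[0] != dst[0]:
        return "down" if dst[0] > cur[0] else "up"
    return "accept"  # the data has arrived at its destination stop


# Worst cases for a 5x4 grid versus a ring with the same 20 stops.
worst_mesh = mesh_hops((0, 0), (4, 3))
worst_ring = ring_hops(0, 10, 20)
print(f"Worst-case mesh hops: {worst_mesh}")
print(f"Worst-case ring hops: {worst_ring}")
```

The point of the comparison is that the longest trip across a grid grows more slowly than the longest trip around a ring as more stops are added.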

With reference to glue, or glue-logic, we’re referring to the fabric of each processor. For AMD that’s the Infinity Fabric, which has to travel within the silicon die or out to the other silicon die, and for Intel that’s the internal MoDe-X mesh. Elmer’s never looked so complicated.

347 Comments

NikosD - Sunday, August 13, 2017 - link

Well, reading the whole review today - 13/08/2017 - I can see that the reviewer did something more evil than not using DDR4-3200 to give us performance numbers.

He used DDR4-2400, as he clearly states in the configuration table, filling up the performance tables BUT in the power consumption page he added DDR4-3200 results (!) just to inform us that DDR4-3200 consumes 13W more, without providing any performance numbers for that memory speed (!!)

The only thing left for the reviewer is to tell us in which department of Intel works exactly, because in the first pages he wanted to test TR against a 2P Intel system as Skylake-X has only 10C/20T but Intel didn't allow him.

Ask for your Intel department to permit it next time.

Zingam - Sunday, August 13, 2017 - link

Yeah! You make a great point! Too much emphasis on gaming all the time! These processors aren't GPUs after all! Most people who buy PCs don't play games at all. Even I as a game developer would like to see more real world tests, especially compilation and data-crunching tests that are typical for game content creation and development workloads. Even I as a game developer spend 99% of my time in front of the computer not playing any games.

pm9819 - Friday, August 18, 2017 - link

So Intel made AMD release the underpowered overheating Bulldozer cpu's? Did Intel also make them sell there US and EU based fabs so they'll be wholly dependant on the Chinese to make their chips? Did Intel also make them buy a equally struggling graphics card company? Truth is AMD lost all the mind and market share they had because of bad corporate decision and uncompetitive cpu designs post Thunderbird. It's no one's fault but there own that it took seven years to produce a competitive replacement. Was Intel suppose to wait till they caught up? And Intel was a monopoly long before AMD started producing competitive cpu's.

You can keep blaming Intel for AMD's screw ups but those of us with common sense and the ability to read know the fault lays with AMD's management.

ddriver - Thursday, August 10, 2017 - link

You are not sampled because of your divine objectivity Ian, you are sampled because you review for a site that is still somewhat popular for its former glory. You can deny it all you want, and understandable, as it is part of your job, but AT is heavily biased towards the rich american boys - intel, apple, nvidia... You are definitely subtle enough for the dumdums, but for better or worse, we are not all dumdums yet.

But hey, it is not all that bad, after all, nowadays there are scores of websites running reviews, so people have a base for comparison, and extrapolate objective results for themselves.

ddriver - Thursday, August 10, 2017 - link

And some bits of constructive criticism - it would be nicer if those reviews featured more workloads people actually use in practice. Too much synthetics, too much short running tests, too much tests with software that is like "wtf is it and who in the world is using it".

For example rendering - very few people in the industry actually use corona or blender, blender is used for modelling and texturing a lot, but not really for rendering. Neither is luxmark. Neither is povray, neither is CB.

Most people who render stuff nowadays use 3d max and vray, so testing this will actually be indicative of actual, practical perforamnce to more people than all those other tests combined.

Also, people want render times, not scores. That's very poor indication of actual performance that you will get, because many of those tests are short, so the CPU doesn't operate in the same mode it will operate if it sweats under continuous work.

Another rendering test that would benefit prosumers is after effects. A lot of people use after effects, all the time.

You also don't have a DAW test, something like cubase or studio one.

A lot of the target market for HEDT is also interested in multiphysics, for example ansys or comsol.

The compilation test you run, as already mentioned several times by different people, is not the most adequate either.

Basically, this review has very low informational value for people who are actually likely to purchase TR.

mapesdhs - Thursday, August 10, 2017 - link

AE would definitely be a good test for TR, it's something that can hammer an entire system, unlike games which only stress certain elements. I've seen AE renders grab 40GB RAM in seconds. A guy at Sony told me some of their renders can gobble 500GB of data just for a single frame, imposing astonishing I/O demands on their SAN and render nodes. Someone at a London movie company told me they use a 10GB/sec SAN to handle this sort of thing, and the issues surrounding memory access vs. cache vs. cores are very important, eg. their render management sw can disable cores where some types of render benefit from a larger slice of mem bw per core.

There are all sorts of tasks which impose heavy I/O loads while also needing varying degrees of main CPU power. Some gobble enormous amounts of RAM, like ANSYS, though I don't know if that's still used.

I'd be interested to know how threaded Sparks in Flame/Smoke behave with TR, though I guess that won't happen unless Autodesk/HP sort out the platform support.

Ian.

Zingam - Sunday, August 13, 2017 - link

Good points!

Notmyusualid - Sunday, August 13, 2017 - link

...only he WAS sampled. Read the review.

bongey - Thursday, August 10, 2017 - link

You don't have to be paid by Intel, but this is just a bad review.

Gothmoth - Thursday, August 10, 2017 - link

where is smoke there is fire.

there are clear indications that anandtech is a bit biased.