The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Ashes of the Singularity Escalation

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively explore as many of DirectX12's features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to work with substantial unit depth and effects, where other RTS titles had to rely on combined draw calls to achieve the same thing, making some combined unit structures ultimately very rigid.

Stardock clearly understands the importance of an in-game benchmark, ensuring that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run a fixed v2.11 version of the game due to some peculiarities of the splash screen added after the merger with the standalone Escalation expansion, and have an automated tool to call the benchmark on the command line. (Prior to v2.11, the benchmark also supported 8K/16K testing, however v2.11 has odd behavior which nukes this.)

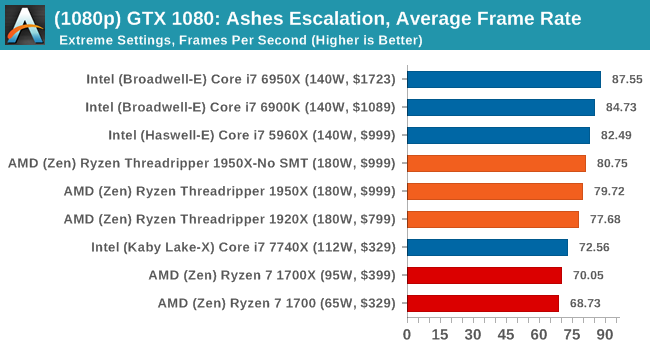

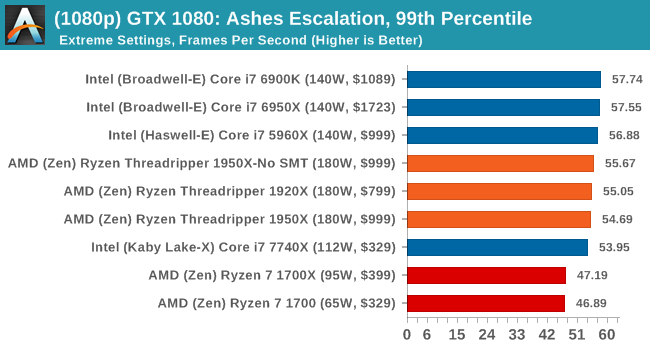

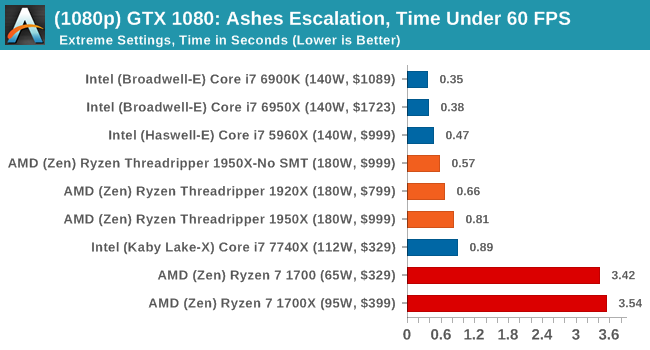

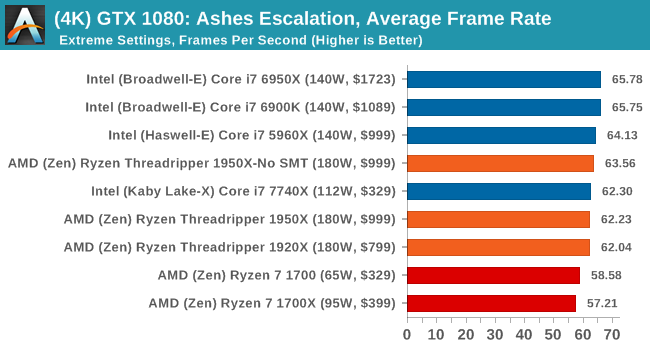

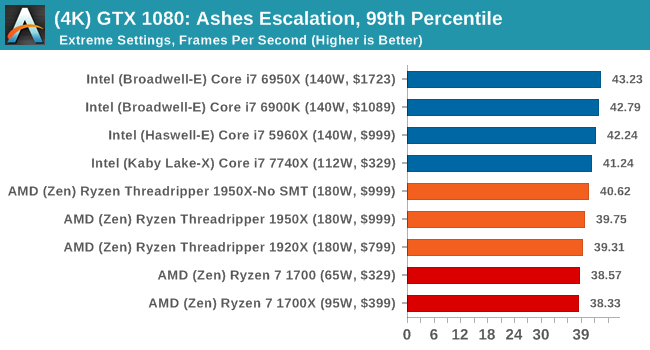

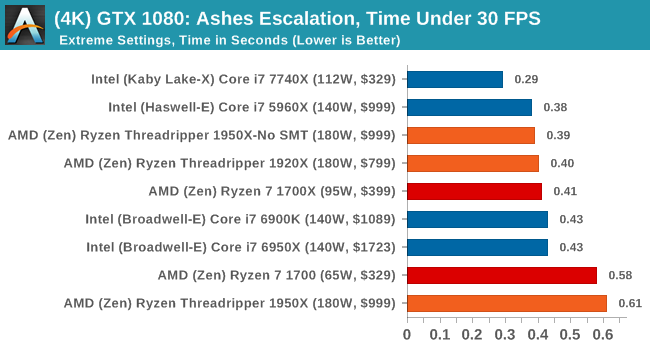

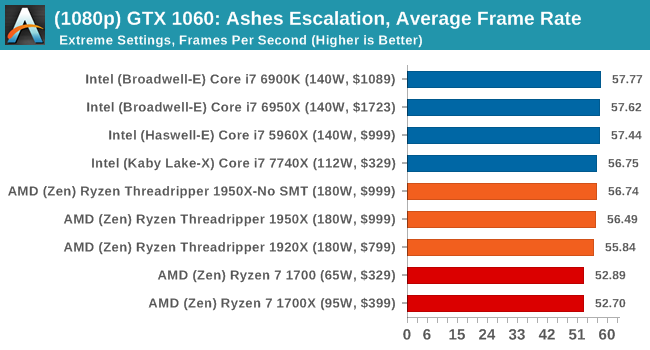

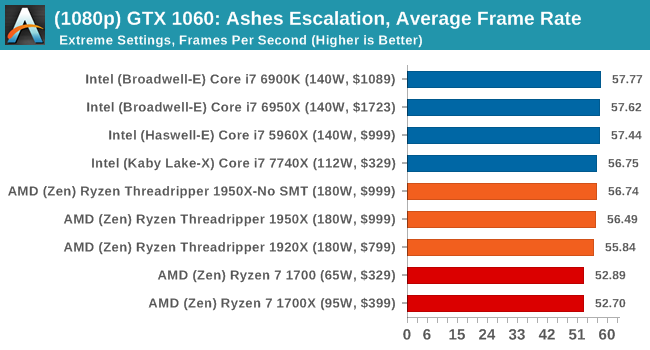

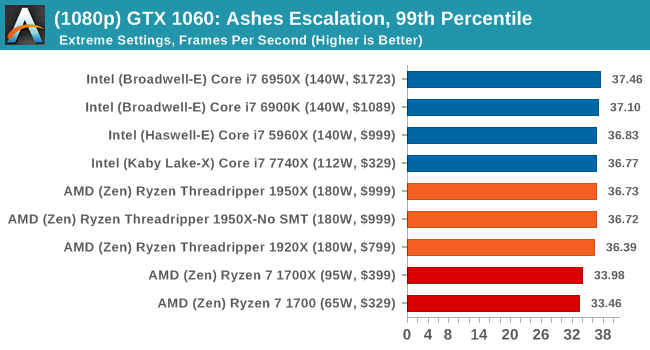

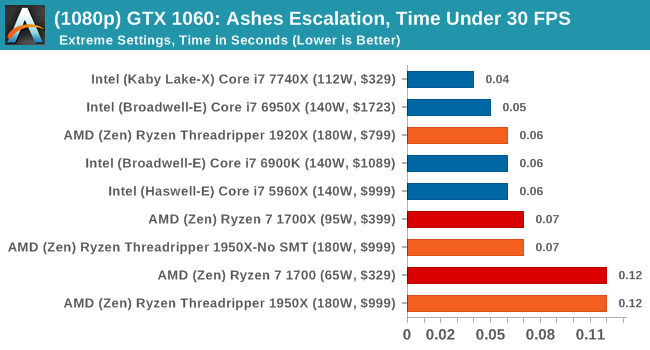

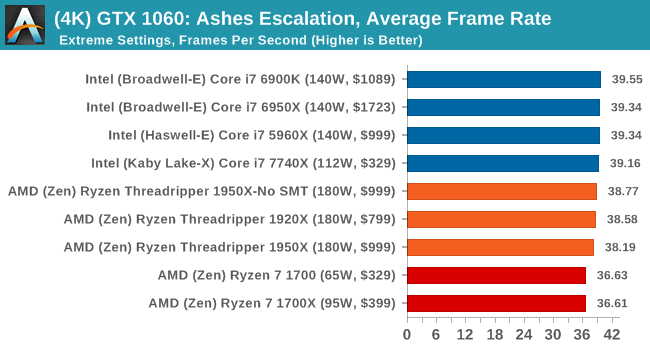

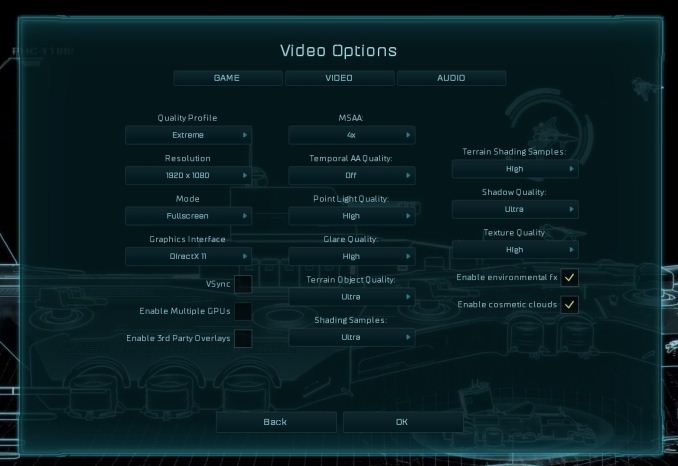

At both 1920x1080 and 4K resolutions, we run the same settings. Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at Extreme settings, and take the frame-time output for our average, percentile, and time under analysis.
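To make the three metrics concrete, here is a minimal Python sketch of how an average, a percentile, and a "time under" figure could be derived from a list of per-frame times. It is not the tooling used for this review: the function name `summarize_frame_times`, the 99th percentile, the 60 FPS threshold, and the nearest-rank percentile method are all assumptions chosen for illustration.

```python
import math


def summarize_frame_times(frame_times_ms, fps_threshold=60.0, percentile=99.0):
    """Summarize per-frame render times given in milliseconds.

    All defaults (60 FPS threshold, 99th percentile) are illustrative
    assumptions, not values taken from the review.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average FPS: frames rendered divided by elapsed seconds.
    average_fps = n / (total_ms / 1000.0)

    # Nearest-rank percentile: the frame time that `percentile` percent
    # of frames are at or below, converted to an equivalent frame rate.
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(percentile * n / 100.0))
    percentile_fps = 1000.0 / ordered[rank - 1]

    # Time under: total seconds spent in frames slower than the threshold.
    limit_ms = 1000.0 / fps_threshold
    time_under_s = sum(t for t in frame_times_ms if t > limit_ms) / 1000.0

    return {
        "average_fps": average_fps,
        "percentile_fps": percentile_fps,
        "time_under_s": time_under_s,
    }


if __name__ == "__main__":
    # Hypothetical run: 98 fast frames plus two slow ones.
    sample = [10.0] * 98 + [20.0, 40.0]
    print(summarize_frame_times(sample))
```

Summing frame durations for the time-under figure, rather than counting slow frames, weights long stalls by how long they actually lasted, which is closer to what a player perceives.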

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

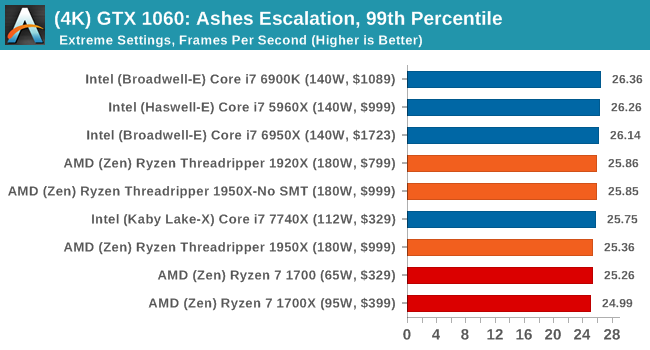

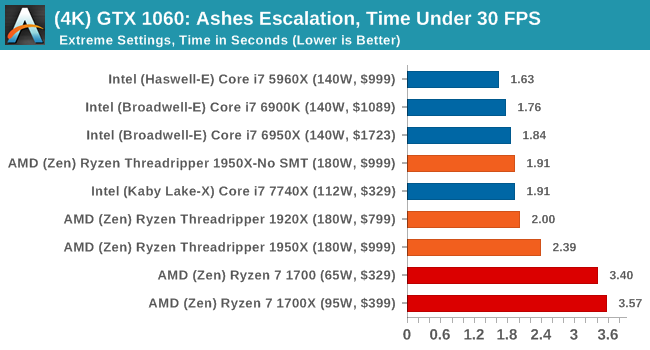

ASUS GTX 1060 Strix 6G Performance

1080p

4K

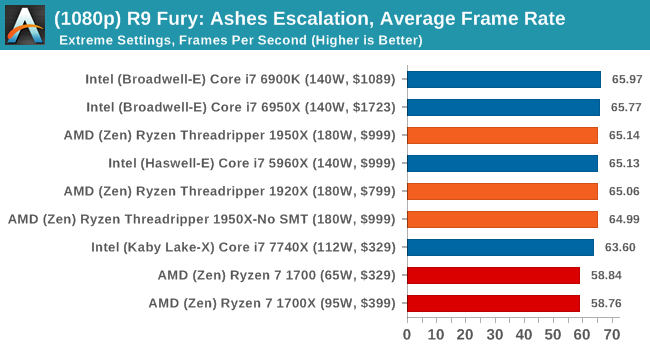

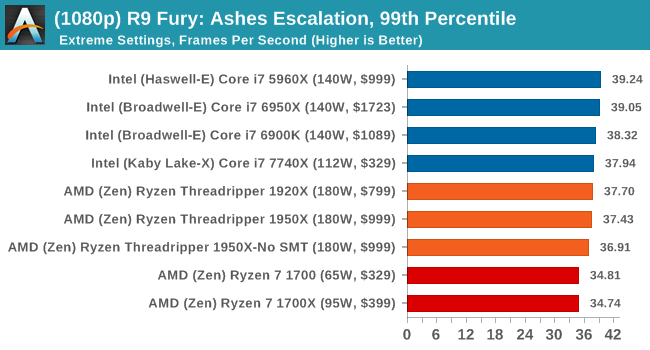

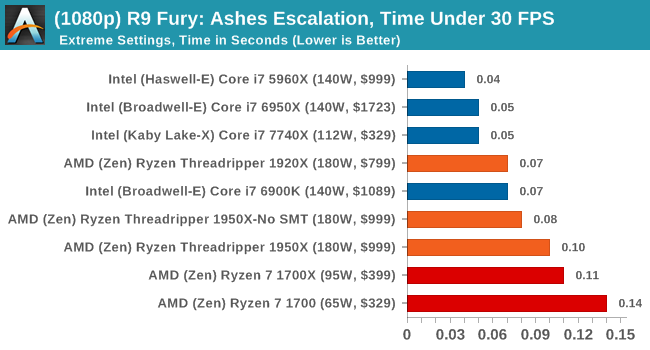

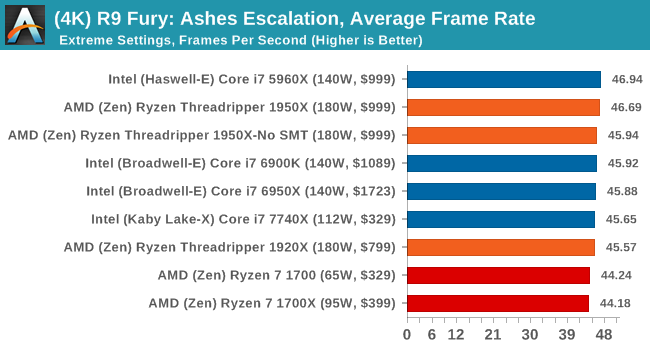

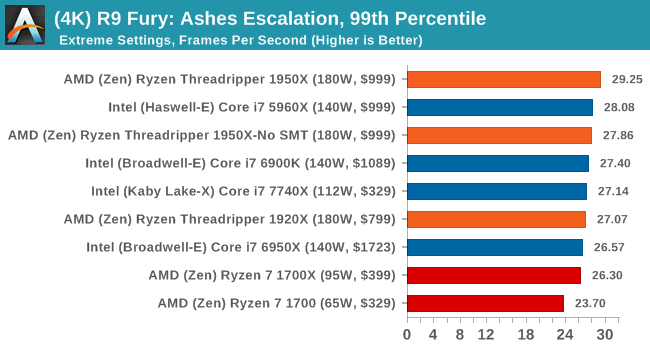

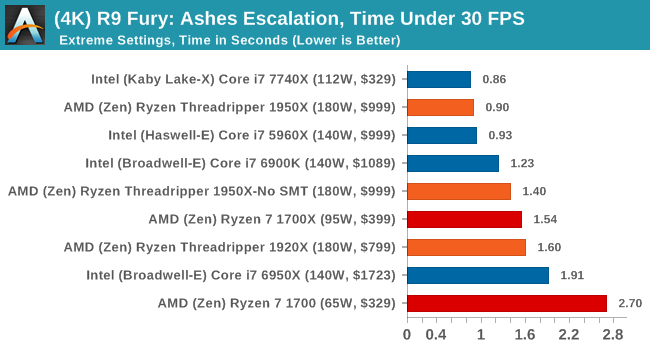

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

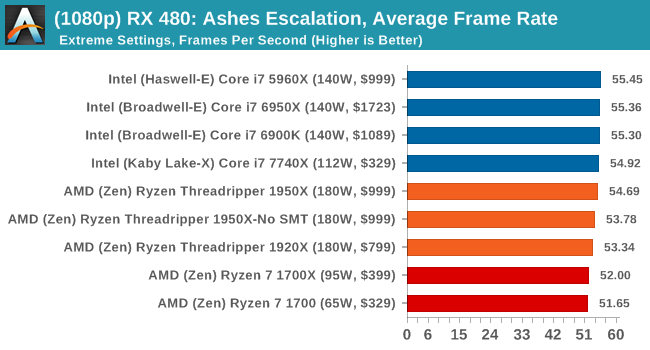

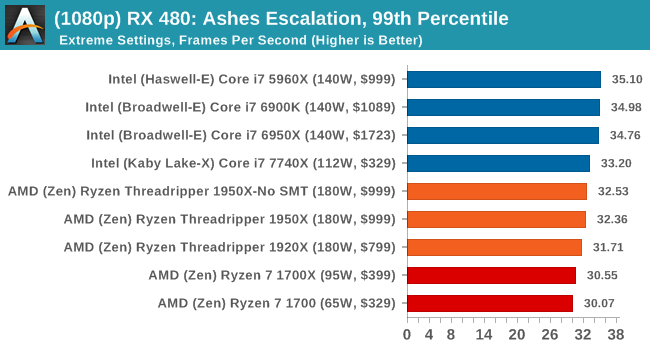

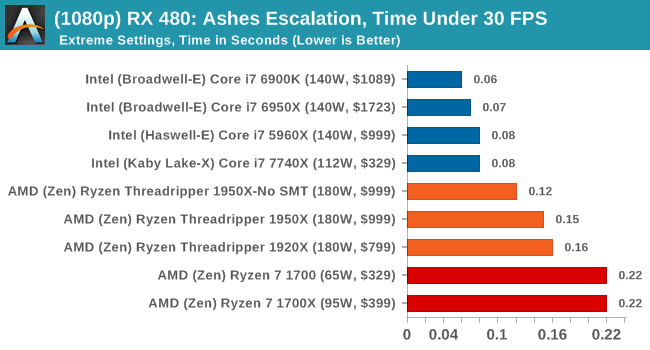

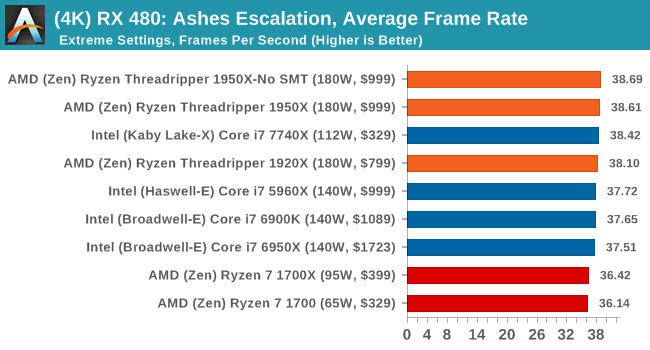

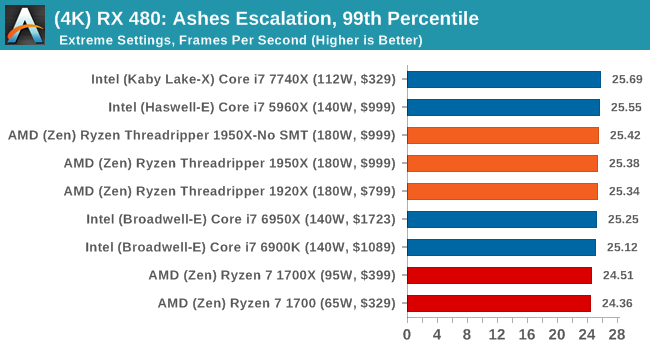

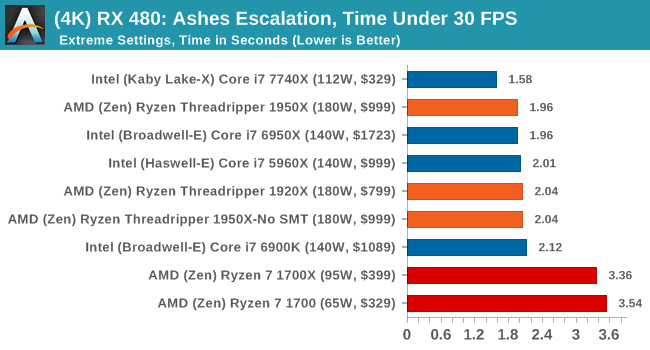

Sapphire Nitro RX 480 8G Performance

1080p

4K

Threadripper gets in the mix a lot with these tests, and in a number of cases pulls ahead of the Ryzen chips in the Time Under analysis.

347 Comments


Makaveli - Thursday, August 10, 2017

Some professional work from home. Kinda of a silly question.

mapesdhs - Thursday, August 10, 2017

Yeah, I just inferred that'd be the case.

prisonerX - Friday, August 11, 2017

"640K should be enough for anyone"

peevee - Thursday, August 10, 2017

I had a lot of hope for Threadripper as a development machine... but when 16 core TR loses so bad to 10-core 7900x or even 8-core 7820x in compilation, there is something seriously wrong with the picture. Too much emphasis on FP performance nobody at home needs all that much (except in games where it is provided by GPU and not CPU anyway)? Maybe AT tests are wrong, say, they have failed to specify /m for MsBuild?

peevee - Thursday, August 10, 2017

Well, there is a good chance that the optimal config for the test would be SMT on (obviously) and NUMA on.

peevee - Thursday, August 10, 2017

Hiding the fact that the CPU is NUMA both from the OS and from software is a very bad idea. Thread migration out of a core is a disaster all but itself, but thread migration to different memory and especially L3 cache (as big as it is) should never be attempted.

peevee - Thursday, August 10, 2017

Basically, at this point I would take 7820x over TR1950X for every task, with similar MT performance in vast majority of tasks not offloadable to a GPU, better mixed-load performance and much better ST performance. And would save $400 and electricity costs in the process.

BOBOSTRUMF - Friday, August 11, 2017

Take your Intel, I'm with the ThreadRipper

Lolimaster - Friday, August 11, 2017

X299 + cpu consumes and produces way more heat than TR, and that's a fact, anand is anand, if you're happy for you blu placebo site, good for ya.

Notmyusualid - Saturday, August 12, 2017

@ peevee

I'm sort-of eyeing-up the 7900X myself. But I have the feeling the Mrs. will sh1t if I buy any more new toys, and my 13 Nvidia GPUs.... :)