The Intel Skylake-X Review: Core i9 7900X, i7 7820X and i7 7800X Tested

by Ian Cutress on June 19, 2017 9:01 AM EST

The New Core-to-Core Communication Paradigm

Within the last week, Intel made a surprising announcement about the capabilities of its upcoming Skylake-SP processors, ahead of any official launch, and its relevance extends to the Skylake-X chips as well. In a multi-core design, how cores communicate is vital, whether they are sharing data directly, accessing each other’s cache via snooping, or having to go out to main memory.

In previous generations, Intel has championed its ring bus strategy. Pretty much every commercial multi-core chip Intel has released uses some form of ring bus, and it boils down to a single communication ring to which all the cores are connected. If data needs to go to a certain core, it travels along the ring until it reaches that core. The idea is relatively simple, and has worked quite well. It does mean, however, that there is a range of core-to-core latencies, depending on how far away the target core is.

Imagine connecting to a network of computers, but you could only connect to the two computers either side of you. If you needed to speak to a computer that was 5 nodes away, the data would have to travel through each of the nodes to get there. Welcome to the ring bus. The speed of this ring bus was typically denoted by the ‘uncore’ frequency.
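To put numbers on the analogy, here is a minimal sketch of hop counting on a ring. It assumes a bidirectional ring where data takes the shorter direction; the function names and the 12-stop ring are hypothetical choices for illustration, not Intel's implementation.

```python
def ring_hops(src: int, dst: int, n_stops: int) -> int:
    """Minimum number of hops between two stops on a bidirectional ring."""
    clockwise = (dst - src) % n_stops
    # Data can travel either way round, so take the shorter direction.
    return min(clockwise, n_stops - clockwise)


def worst_case_hops(n_stops: int) -> int:
    """Largest minimum hop count between any two stops on the ring."""
    return n_stops // 2


if __name__ == "__main__":
    n = 12  # hypothetical ring with 12 stops
    for dst in (1, 5, 6, 11):
        print(f"stop 0 -> stop {dst}: {ring_hops(0, dst, n)} hops")
```

The worst case grows linearly with the number of stops, which is why the range of core-to-core latencies widens as cores are added to a single ring.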

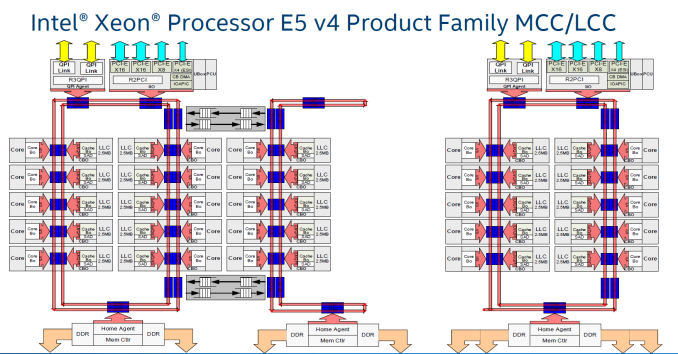

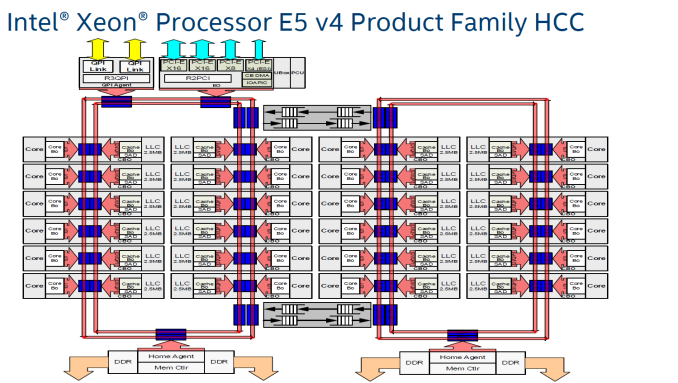

With Intel’s three-die program on its enterprise chips, each die used a different variant of the ring bus topology. On Broadwell-E, the 10-core LCC design used a single ring bus for all 10 cores and the DRAM controller/PCIe root complex, whereas the HCC and XCC designs both had dual ring buses that used routers to talk to each other. Talking to a core on the other ring bus obviously added latency, and this was sometimes considered a ‘non-uniform memory access’ (NUMA) environment on chip, with extra latency if you needed data from memory on the other ring bus. (Typically NUMA is applied to dual socket platforms, where the DRAM on the other chip has a large delay.) Intel emphasized that the Broadwell-E design was memory location aware, and tried to keep relevant data local to the core it was needed on.

With the larger XCC CPUs for Broadwell-E, the ring buses become quite cumbersome: you end up with one ring bus servicing 12 cores, and another ring bus servicing 12 cores (due to the layout with other elements of the chip).

As more and more cores get added, what is the solution? More ring buses? How about a 2D array of ring buses? Or even a mesh or a network?

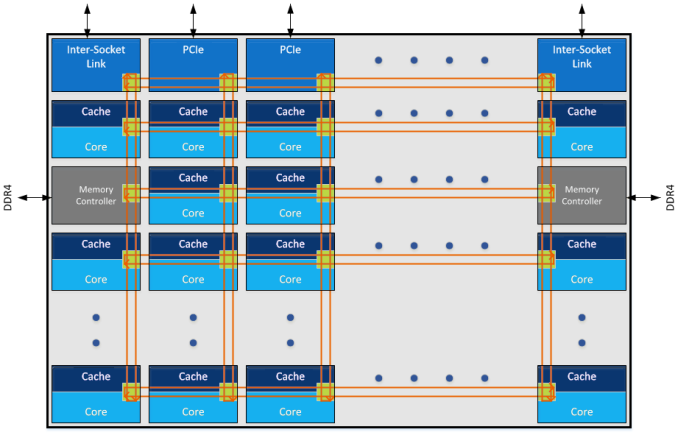

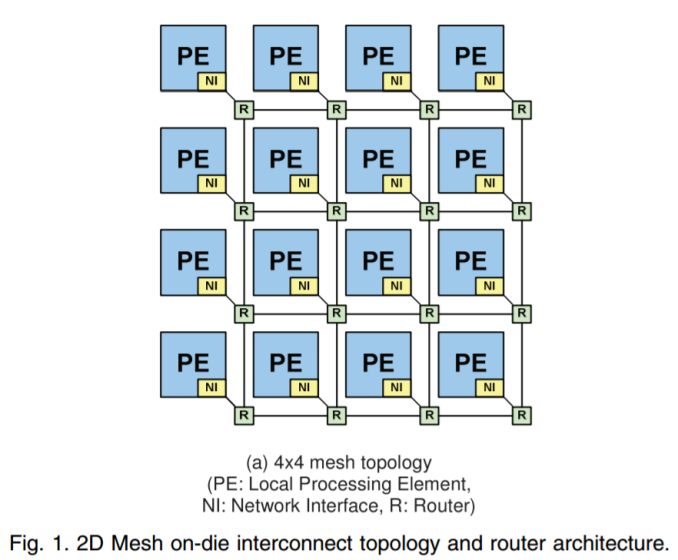

The announcement that Intel made in the past week was that Skylake-SP processors will use a mesh topology, whereby each core in the design is connected to the ones above, below, and to either side. Earlier in the week I mentioned on Twitter that a mesh in this context, or as drawn above, is just an array of ring buses in a 2D arrangement, although new information has come to light.
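As a rough way to see why a mesh scales better than one large ring, the sketch below compares average hop counts. It is an illustration under stated assumptions only: a hypothetical single 24-stop bidirectional ring versus a hypothetical 6x4 mesh, counting hops rather than real latency, and ignoring non-core stops such as memory controllers.

```python
from itertools import product


def mesh_hops(a, b):
    """Hops between two (x, y) nodes when moving only along rows and columns."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def average_mesh_hops(cols, rows):
    """Average hop count over all ordered pairs of distinct nodes in a mesh."""
    nodes = list(product(range(cols), range(rows)))
    pairs = [(a, b) for a in nodes for b in nodes if a != b]
    return sum(mesh_hops(a, b) for a, b in pairs) / len(pairs)


def average_ring_hops(n_stops):
    """Average hop count from one stop to every other stop on a bidirectional ring."""
    total = sum(min(d, n_stops - d) for d in range(1, n_stops))
    return total / (n_stops - 1)


if __name__ == "__main__":
    print(f"24-stop ring: {average_ring_hops(24):.2f} hops on average")
    print(f"6x4 mesh:     {average_mesh_hops(6, 4):.2f} hops on average")
```

Hop count is only a proxy: per-hop latency, clock speed and congestion all differ between real designs.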

There are several things to talk about regarding the mesh, so in no particular order:

Mesh Me Up

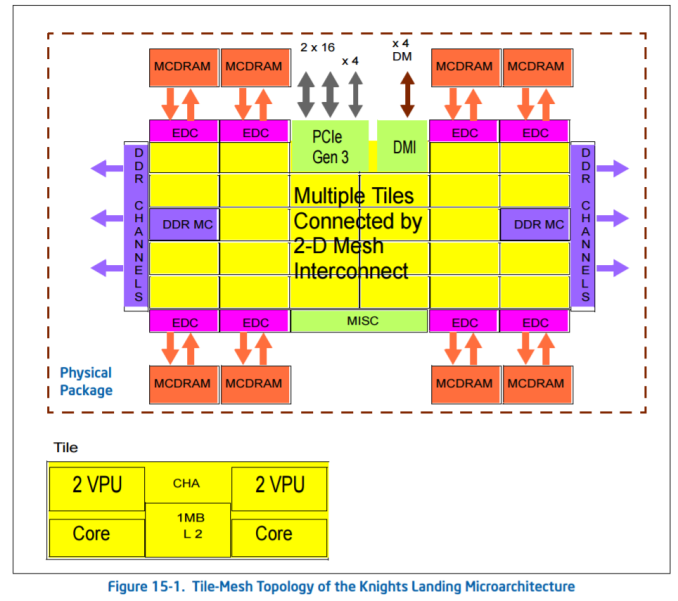

Intel already sells chips with mesh connectivity: the latest Xeon Phi Knights Landing many-core processors. These massive (~646 mm²) chips have 72 cores arranged as 36 pairs, six memory channels and 16GB of MCDRAM, and using a ring bus (or a succession of ring buses) is probably not feasible.

For KNL, Intel calls the mesh the ‘untile’. Taken from the KNL optimization guide:

‘At each mesh stop, there is a connection to the tile and a tag directory that identifies which L2 cache (if any) holds a particular cache line. [In Knights Landing] there is no shared L3 cache within a physical package, [so] memory accesses that miss in the tile must go over the mesh to the tag directory to identify any cached copies in another tile. Cache coherence uses the MESIF protocol. If the cache line is not cached in another tile, then a request goes to memory.’
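The lookup sequence in that quote can be sketched as a toy model. This is only an illustration: the `TagDirectory` class and `service_l2_miss` function are hypothetical names, the directory tracks a single holder per cache line, and the MESIF states are ignored entirely.

```python
MEMORY = "memory"


class TagDirectory:
    """Toy directory: maps a cache-line address to the tile whose L2 holds it."""

    def __init__(self):
        self._holder = {}

    def record(self, line_addr, tile_id):
        self._holder[line_addr] = tile_id

    def lookup(self, line_addr):
        # Returns None when no tile is known to hold the line.
        return self._holder.get(line_addr)


def service_l2_miss(directory, requesting_tile, line_addr):
    """Return where the line is fetched from after missing in the requesting tile."""
    holder = directory.lookup(line_addr)
    if holder is not None and holder != requesting_tile:
        source = f"tile {holder}"  # cached copy found in another tile
    else:
        source = MEMORY  # not cached elsewhere, so the request goes to memory
    # Simplification: the requester becomes the only tracked holder.
    directory.record(line_addr, requesting_tile)
    return source


if __name__ == "__main__":
    directory = TagDirectory()
    print(service_l2_miss(directory, 0, 0x1000))
    print(service_l2_miss(directory, 3, 0x1000))
```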

Knights Landing can run in a variety of mesh modes. Because it lacks an L3 but has an intermediary MCDRAM layer, certain modes related to the memory hierarchy help with different types of code. It is not known if Skylake-SP will have the same feature set.

When distributing cores along a mesh, another way to think about it is as a big routing environment. There are two ways to do this: each core could talk to a central router, which then sends information on as needed, or each core could act as a networking point itself, forwarding packets in the direction they need to go. The first method, commonly known as a crossbar, is easier to implement but can be bottlenecked very easily, and can be power hungry if the whole crossbar needs to be enabled all the time. The second method, where every core is a router node, looks like what we have already in the image Intel provided.

The announcement was made officially by Dr Akhilesh Kumar, one of Skylake-SP’s CPU architects. He has been at Intel for a number of years, and digging back through publications with his name on them turned up a very interesting paper on how to implement a router-like mesh in a multi-core environment. It was published in March 2014, which is about the right time for when Skylake-SP’s mesh was being developed. We haven’t had confirmation from Intel that this is what Skylake-SP’s mesh is actually like, though it is worth covering what is in the paper.

For anyone interested, the paper is called MoDe-X: Microarchitecture of a Layout-Aware Modular Decoupled Crossbar for On-Chip Interconnects, IEEE Transactions on Computers, Vol. 63, No. 3, March 2014 P622.

MoDe-X: Is This Skylake-SP’s Mesh?

As mentioned above, the easiest way to build a mesh/router is with a crossbar. When the design instead uses each core as a node in the mesh, the result is essentially a ‘mini’ decoupled crossbar in each core, acting as the director for data coming in and out of the core. With each core in control of its own actions, the design becomes modular. Put these elements together, and you have a MOdular DEcoupled Crossbar, or MoDe-X.

Each core has an integrated network interface and a router, with each router connected to the four routers around it (or 2 routers for corners and 3 routers for edges). A data packet can come in from one of five directions: north, south, east, west, or from the core the router is attached to. The data packet then needs to be sent in one of five directions again: north, south, east, west, or to the core the router is attached to. If each core is essentially a node on a 2D map with an x-coordinate and a y-coordinate, then when a packet needs to go to (0,0), the router can send it on its way, as it knows which nodes are nearest to it. There are algorithms designed to reduce congestion, such as only moving in the x-direction until the x-coordinate is correct and then moving in the y-direction, but a basic system will have buffers and queues and will know how busy the local network is.
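The x-then-y rule described above is commonly called dimension-ordered (XY) routing. The following is a minimal sketch of that rule only, assuming that east increases x and north increases y; the function names are hypothetical, and buffering, queues and congestion handling are left out. Nothing here is confirmed as what Skylake-SP does.

```python
# Coordinate change for each output port (assumed orientation).
STEP = {"E": (1, 0), "W": (-1, 0), "N": (0, 1), "S": (0, -1)}


def next_port(current, dest):
    """Pick the output port at one router: fix x first, then y, then deliver locally."""
    cx, cy = current
    dx, dy = dest
    if cx != dx:
        return "E" if dx > cx else "W"
    if cy != dy:
        return "N" if dy > cy else "S"
    return "L"  # packet has arrived at the router's own core


def route(src, dest):
    """List every node a packet visits, from source to destination."""
    path = [src]
    node = src
    while True:
        port = next_port(node, dest)
        if port == "L":
            return path
        sx, sy = STEP[port]
        node = (node[0] + sx, node[1] + sy)
        path.append(node)


if __name__ == "__main__":
    print(route((3, 2), (0, 0)))
```

Each router only needs its own coordinates and the destination to make the decision, which is what lets every node act independently.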

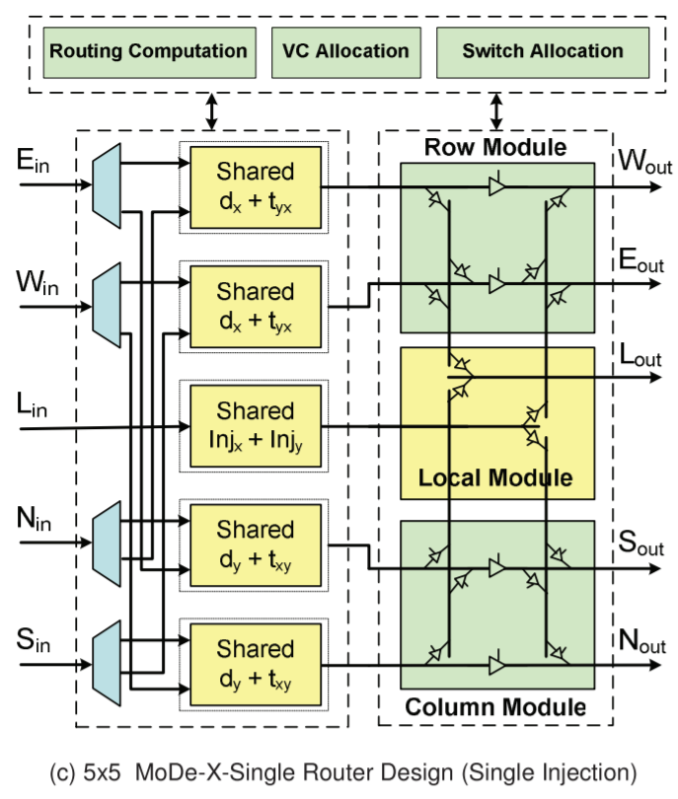

The default way to design the router is to take the data from every input, decide where to send it, then send it. The MoDe-X design does it a little differently, and essentially splits the directions up into three groups: one group is north/south, one group is east/west, and the local core is the third group. The following diagram is a bit complex, but we’ll go through it:

On the left are the five inputs: E, W, L (local), N, S.

On the right are the five outputs: W, E, L, S, N.

The first column of ‘shared’ boxes determines where the data wants to go: does it need to traverse a row, traverse a column, or stay local? From each input, there are only two arrows, going to either the top pair or the bottom pair of ‘shared’ boxes. Depending on contention and allocation, the data is then sent to the second column: if it needs to change columns it can go to either Sout or Nout, and if it needs to change rows it can go to either Wout or Eout. In both cases the data could also go to the local node.

In the research paper, there is a six-input design which modifies the local input in order to make the wiring in the second half of the design easier (and it lowers power), but the image above is a fair representation of the idea. There are other additions in the paper as well, with layout aware arrangements (how exactly you put it together in silicon) as well as feeder wires such that if data needs to continue in the same direction it can do so with less effort.

The paper is very dense (it took me a while to get to grips with it), but worth a read if you are interested. I found it easier to think of it as a router rather than an on-chip mesh. As mentioned, this is a 2014 paper, and it shows simulation data on an 8x8 grid of cores, where this design is both lower power and smaller in area than a generic mesh design or competing designs. There were some areas where the generic design had marginally better latency, but the power conservation numbers (due to the wiring reduction and an aggressive power gating scheme that disables certain parts for up to 99% less power use) make it a more attractive option (especially in multi-core CPUs). Intel was doing simulation work in early 2014 (which means 2013), and the paper mentions that they were in the process of putting these ideas into silicon. It wouldn’t be too far-fetched to consider that this is what we are seeing today.

264 Comments


mat9v - Tuesday, June 20, 2017 - link

To play it safe, invest in the Core i9-7900X today.

To play it safe and get a big GPU, save $400 and invest in the Core i7-7820X today.

Then the conclusion should have been - wait for fixed platform. I'm not even suggesting choosing Ryzen as it performs slower but encouraging buying flawed (for now) platform?

mat9v - Tuesday, June 20, 2017 - link

Please then correct tables on 1st page comparing Ryzen and 7820X and 7800X to state that Intel has 24 lines as they leave 24 for PCIEx slots and 4 is reserved for DMI 3.0

If you strip Ryzen lines to only show those available for PCIEx do so for Intel too.

Ryan Smith - Wednesday, June 21, 2017 - link

The tables are correct. The i7 7800 series have 28 PCIe lanes from the CPU for general use, and another 4 DMI lanes for the chipset.

PeterCordes - Tuesday, June 20, 2017 - link

Nice article, thanks for the details on the microarchitectural changes, especially to execution units and cache. This explains memory bandwidth vs. working-set size results I observed a couple months ago on Google Compute Engine's Skylake-Xeon VMs with ~55MB of L3: The L2-L3 transition was well beyond 256kB. I had assumed Intel wouldn't use a different L3 cache design for SKX vs. SKL, but large L2 doesn't make much sense with an inclusive L3 of 2 or 2.5MB per core.

Anyway, some corrections for page 3: The allocation queue (IDQ) in Skylake-S is always 64 uops, with or without HT. For example, I looked at the `lsd.uops` performance counter in a loop with 97 uops on my i7-6700k. For 97 billion counts of uops_issued.any, I got exactly 0 counts of lsd.uops, with the system otherwise idle. (And I looked at cpu_clk_unhalted.one_thread_active to make sure it was really operating in non-HT mode the majority of the time it was executing.) Also, IIRC, Intel's optimization manual explicitly states that the IDQ is always 64 entries in Skylake.

The scheduler (aka RS or Reservation Station) is 97 unfused-domain uops in Skylake, up from 60 in Haswell. The 180int / 168fp numbers you give are the int / fp register-file sizes. They are sized more like the ROB (224 fused-domain uops, up from 192 in Haswell), not the scheduler, since like the ROB, they have to hold onto values until retirement, not just until execution. See also http://blog.stuffedcow.net/2013/05/measuring-rob-c... for when the PRF size vs. the ROB is the limit on the out-of-order window. See also http://www.realworldtech.com/haswell-cpu/6/ for a nice block diagram of the whole pipeline.

SKL-S DIVPS *latency* is 11 cycles, not 3. The *throughput* is one per 3 cycles for 128-bit vectors, or one per 5 cycles for 256b vectors, according to Agner Fog's table. I forget if I've tested that myself. So are you saying that SKL-SP has one per 5 cycle throughput for 128-bit vectors? What's the throughput for 256b and 512b vectors?

-----

It's really confusing the way you keep saying "AVX unit" or "AVX-512 unit" when I think you mean "512b FMA unit". It sounds like vector-integer, shuffle, and pretty much everything other than FMA will have true 512b execution units. If that's correct, then video codecs like x264/x265 should run the same on LCC vs. HCC silicon (other than differences in mesh interconnect latency), because they're integer-only, not using any vector-FP multiply/add/FMA.

-------

> This should allow programmers to separate control flow from data flow...

SIMD conditional operations without AVX512 are already done branchlessly (I think that's what you mean by separate from control-flow) by masking the input and/or output. e.g. to conditionally add some elements of a vector, AND the input with a vector of all-one or all-zero elements (as produced by CMPPS or PCMPEQD, for example). Adding all-zeros is a no-op (the additive identity).

Mask registers and support for doing it as part of another operation makes it much more efficient, potentially making it a win to vectorize things that otherwise wouldn't be. But it's not a new capability; you can do the same thing with boolean vectors and SSE/AVX VBLENDVPS.
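The masking trick can be modeled in a few lines. This is a scalar Python sketch of the idea, not real SIMD code: lists stand in for vector registers, lanes are assumed to be 32-bit integers, and `compare_eq` and `masked_add` are hypothetical helper names.

```python
LANE_MASK = 0xFFFFFFFF  # assume 32-bit integer lanes


def compare_eq(a, b):
    """Per-lane compare: all-ones where lanes are equal, all-zeros otherwise."""
    # -(True) is -1, which becomes 0xFFFFFFFF after masking; -(False) is 0.
    return [-(x == y) & LANE_MASK for x, y in zip(a, b)]


def masked_add(acc, values, mask):
    """Add values into acc only where the mask lane is all-ones."""
    # ANDing with an all-zero lane turns the addend into 0, the additive identity,
    # so no per-element branch is needed.
    return [(x + (v & m)) & LANE_MASK for x, v, m in zip(acc, values, mask)]


if __name__ == "__main__":
    keys = [1, 2, 1, 3]
    mask = compare_eq(keys, [1, 1, 1, 1])
    print(masked_add([10, 20, 30, 40], [1, 2, 3, 4], mask))
```

Every lane executes the same AND and ADD; only the mask contents differ, which is the sense in which the condition is separated from control flow.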

PeterCordes - Tuesday, June 20, 2017 - link

Speed Shift / Hardware P-State is not Windows-specific, but this article kind of reads as if it is.

Your article doesn't mention any other OSes, so nothing it says is actually wrong: I'm sure it did require Intel's collaboration with MS to get support into Win10. The bullet-point in the image that says "Collaboration between Intel and Microsoft specifically for W10 + Skylake" may be going too far, though. That definitely implies that it only works on Win10, which is incorrect.

Linux has supported it for a while. "HWP enabled" in your kernel log means the kernel has handed off P-state selection to the hardware. (Since Linux is open-source, Intel contributed most of the code for this through the regular channels, like they do for lots of other drivers.)

dmesg | grep intel_pstate

[ 1.040265] intel_pstate: Intel P-state driver initializing

[ 1.040924] intel_pstate: HWP enabled

The hardware exposes a knob that controls the tradeoff between power and performance, called Energy Performance Preference or EPP. Len Brown@Intel's Linux patch notes give a pretty good description of it (and how it's different from a similar knob for controlling turbo usage in previous uarches), as well as describing how to use it from Linux. https://patchwork.kernel.org/patch/9723427/.

# CPU features related to HWP, on an i7-6700k running Linux 4.11 on bare metal

fgrep -m1 flags /proc/cpuinfo | grep -o 'hwp[_a-z]*'

hwp

hwp_notify

hwp_act_window

hwp_epp

I find the simplest way to see what speed your cores are running is to just `grep MHz /proc/cpuinfo`. (It does accurately reflect the current situation; Linux finds out what the hardware is actually doing).
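The same check can be scripted. Below is a minimal sketch that pulls the `cpu MHz` lines out of `/proc/cpuinfo`; it assumes the x86 Linux layout of that file, and the function names are hypothetical.

```python
def parse_core_mhz(cpuinfo_text):
    """Return the 'cpu MHz' value for each logical CPU found in /proc/cpuinfo text."""
    speeds = []
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "cpu MHz":
            speeds.append(float(value))
    return speeds


def read_core_mhz(path="/proc/cpuinfo"):
    """Read the current per-CPU clock speeds from a Linux system."""
    with open(path) as f:
        return parse_core_mhz(f.read())


if __name__ == "__main__":
    for cpu, mhz in enumerate(read_core_mhz()):
        print(f"cpu{cpu}: {mhz:.0f} MHz")
```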

IDK about OS X support, but I assume Apple has got it sorted out by now, almost 2 years after SKL launch.

Arbie - Wednesday, June 21, 2017 - link

There are folks for whom every last compute cycle really matters to their job. They have to buy the technical best. If that's Intel, so be it.

For those dealing more with 'want' than 'need', a lot of this debate misses an important fact. The only reason Intel is suddenly vomiting cores, defecating feature sizes, and pre-announcing more lakes than Wisconsin is... AMD. Despite its chronic financial weakness that company has, incredibly, come from waaaay behind and given us real competition again. In this ultra-high stakes investment game, can they do that twice? Maybe not. And Intel has shown us what to expect if they have no competitor. In this limited-supplier market it's not just about who has the hottest product - it's also about whom we should reward with our money, and about keeping vital players in the game.

I suggest - if you can, buy AMD. They have earned our support and it's in our best interests to do so. I've always gone with Intel but have lately come to see this bigger picture. It motivated me to buy an 1800X and I will also buy Vega.

Rabnor - Wednesday, June 21, 2017 - link

To play it safe and get a big GPU, save $400 and invest in the Core i7-7820X today.

You have to spend that $400+ on a good motherboard & aio cooler.

Are you sold by Intel, anandtech?

Synviks - Thursday, June 22, 2017 - link

For some extra comparison: running Cinebench R15 on my 14c 2.7ghz Haswell Xeon, with turbo to 3ghz on all cores, my score is 2010.

Pretty impressive performance gain if they can shave off 4 cores and end up with higher performance.

Pri - Thursday, June 22, 2017 - link

On the first page you wrote this:

Similarly, the 6-core Core i7-7820X at $599 goes up against the 8-core $499 Ryzen 7 1800X.

The Core i7 7820X was mistakenly written as a 6-core processor when it is in fact an 8-core processor.

Kind Regards.

Gigabytes - Thursday, June 22, 2017 - link

Okay, here is what I learned from this article. Gaming performance sucks and you will be able to cook a pizza inside your case. Did I miss anything?

Oh, one thing missing.

Play it SMART and wait to see the Ripper in action before you buy your new Intel toaster oven.