The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST- Posted in

- CPUs

- Intel

- Kaby Lake

- X299

- Basin Falls

- Kaby Lake-X

- i7-7740X

- i5-7640X

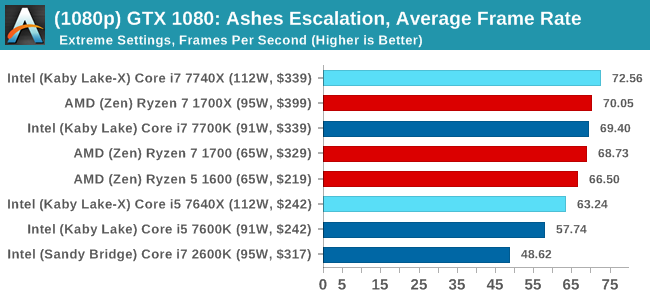

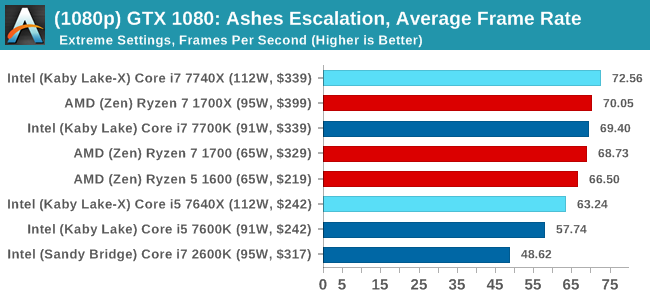

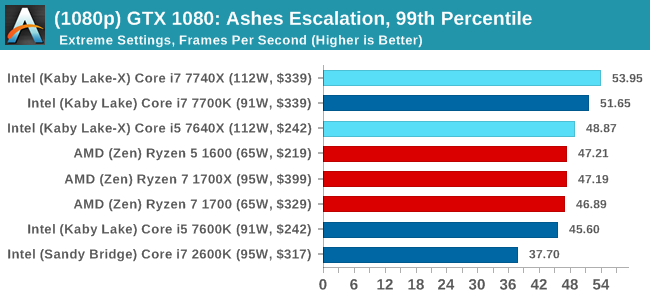

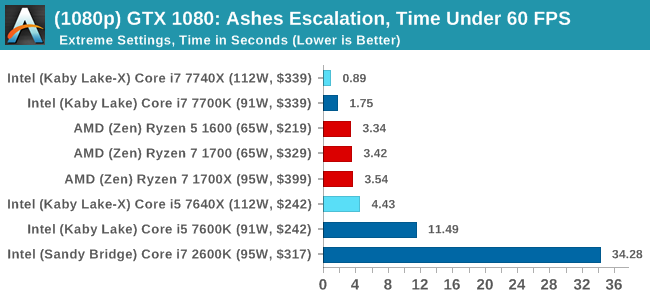

Ashes of the Singularity: Escalation

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many of DirectX12's features as it possibly could. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to work with substantial unit depth and effects that other RTS titles could only achieve by combining draw calls, which made some combined unit structures very rigid.

Stardock clearly understands the importance of an in-game benchmark, and ensured that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run a fixed v2.11 version of the game due to some peculiarities of the splash screen added after the merger with the standalone Escalation expansion, and have an automated tool to call the benchmark on the command line. (Prior to v2.11, the benchmark also supported 8K/16K testing; however, v2.11 has odd behavior which nukes this.)

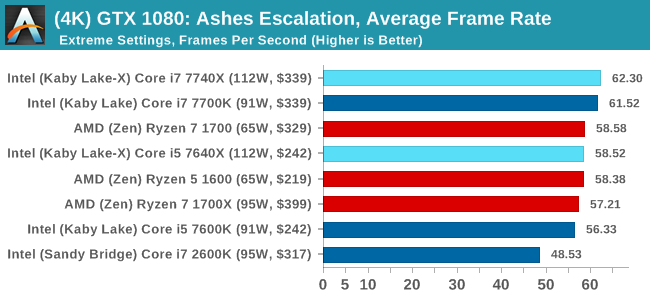

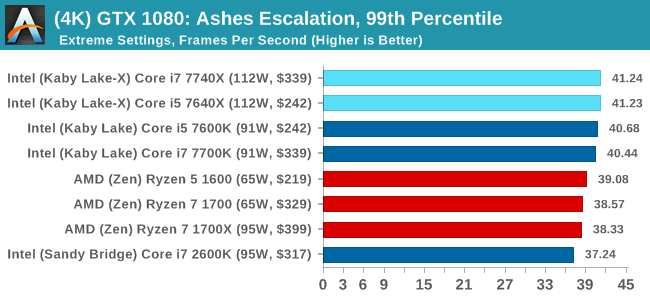

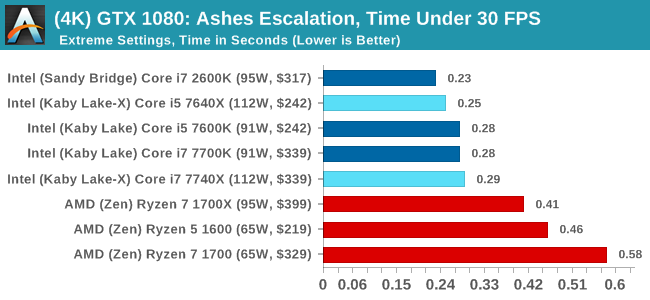

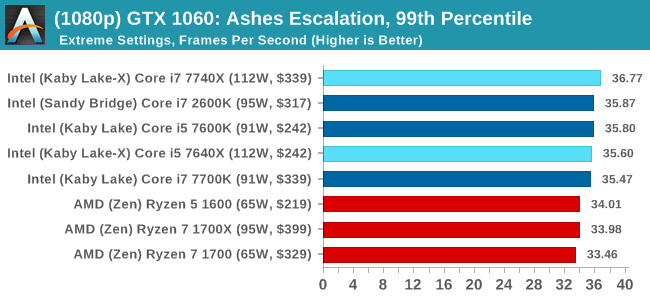

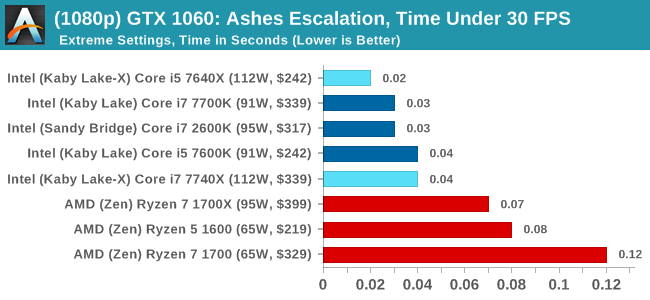

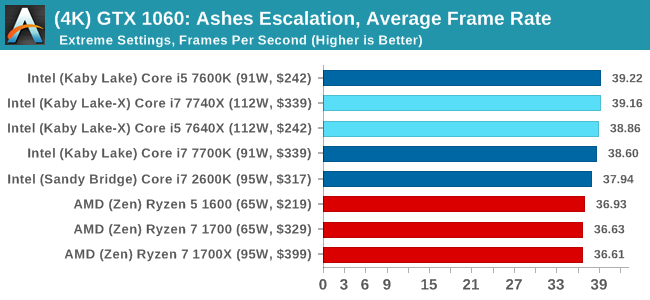

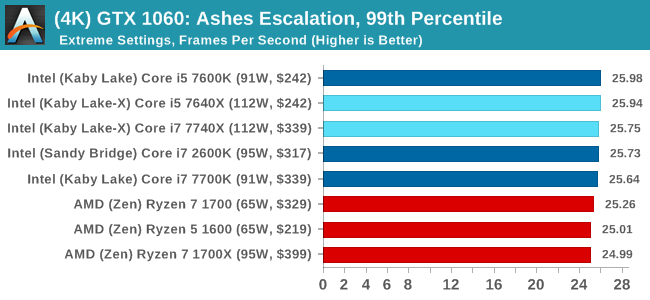

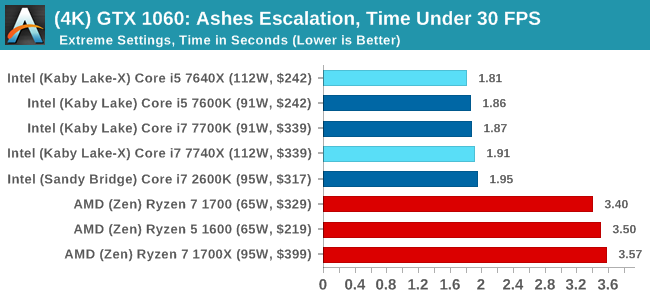

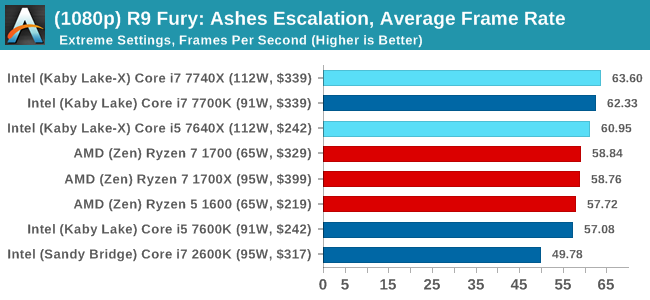

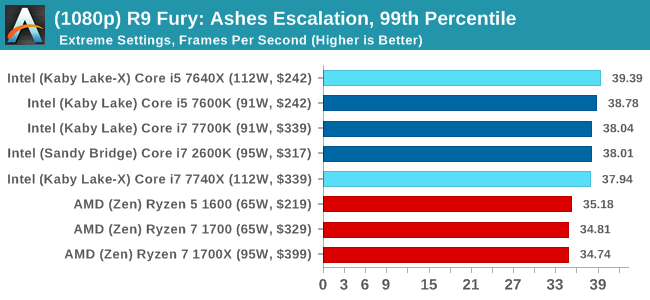

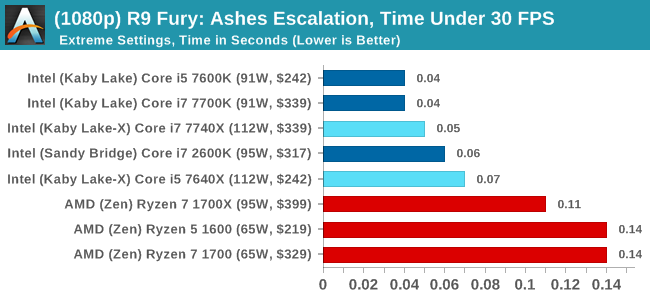

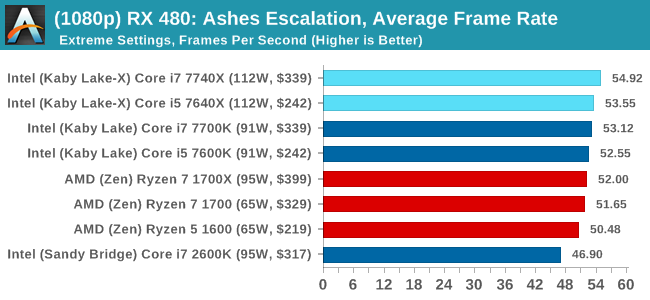

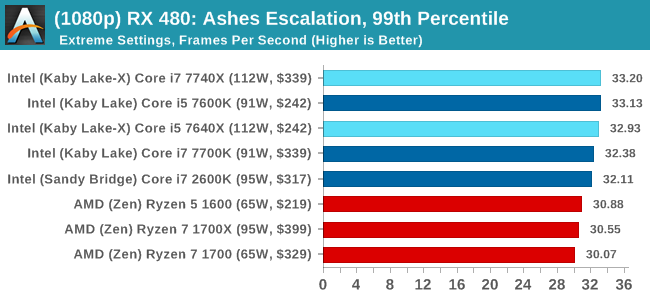

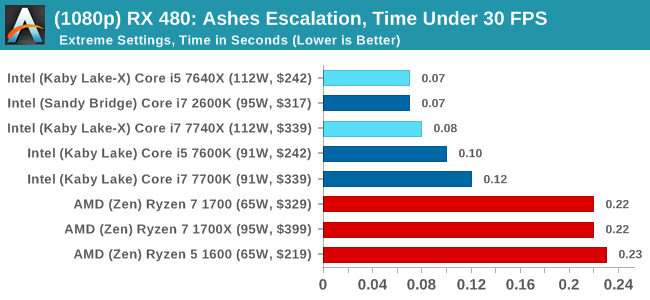

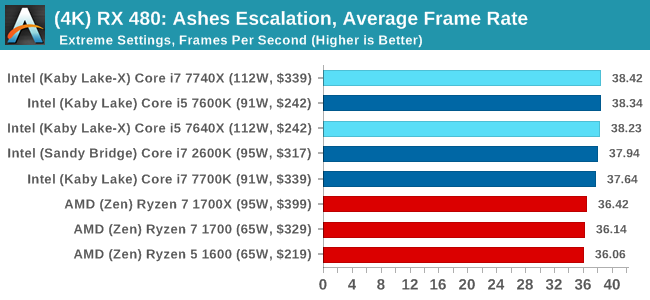

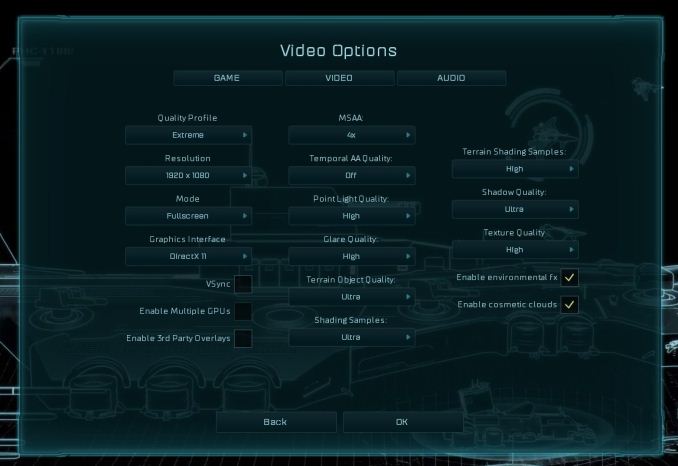

At both 1920x1080 and 4K resolutions, we run the same settings. Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at Extreme settings, and take the frame-time output for our average, percentile, and time under analysis.
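As a rough illustration of how the three metrics can be derived from a list of frame times, here is a minimal Python sketch. It is not the tooling used for this review: the function name `summarize_frame_times`, the nearest-rank percentile method, the 60 FPS threshold, and the reading of 'time under' as total time spent in frames slower than that threshold are all assumptions made for the example.

```python
def summarize_frame_times(frame_times_ms, threshold_fps=60.0):
    """Summarize per-frame render times given in milliseconds.

    Hypothetical helper for illustration only; the metric definitions
    below are assumptions, not the review's actual analysis scripts.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by elapsed seconds.
    average_fps = n / (total_ms / 1000.0)

    # 99th percentile frame time by nearest rank: 99% of frames finished
    # in this time or less. Integer ceiling avoids floating-point rounding.
    ordered = sorted(frame_times_ms)
    rank = -(-99 * n // 100)  # ceil(0.99 * n)
    p99_ms = ordered[rank - 1]
    p99_fps = 1000.0 / p99_ms

    # 'Time under' (assumed definition): total time spent in frames that
    # took longer than the frame budget implied by threshold_fps.
    budget_ms = 1000.0 / threshold_fps
    time_under_ms = sum(t for t in frame_times_ms if t > budget_ms)

    return {
        "average_fps": average_fps,
        "p99_fps": p99_fps,
        "time_under_ms": time_under_ms,
    }
```

With 99 frames at 10 ms and a single 50 ms spike, the average stays near 96 FPS while the spike shows up only in the time under figure, which is why the percentile and time under graphs are reported alongside the average.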

For all our results, we show the average frame rate at 1080p first. Mouse over the other graphs underneath to see 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6GB Performance

1080p

4K

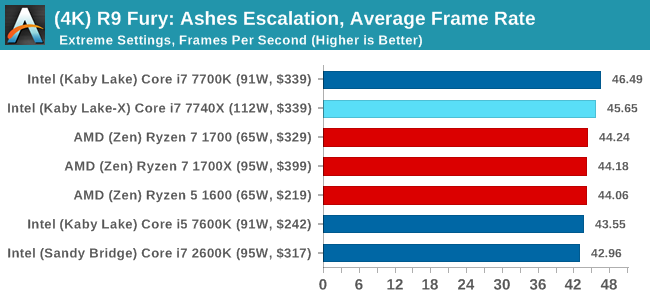

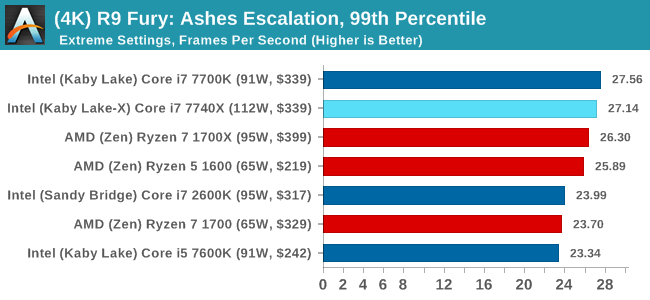

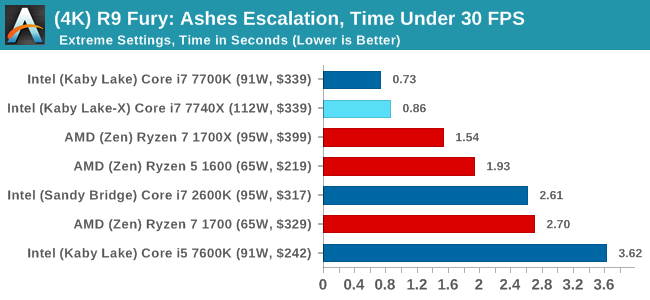

Sapphire R9 Fury 4GB Performance

1080p

4K

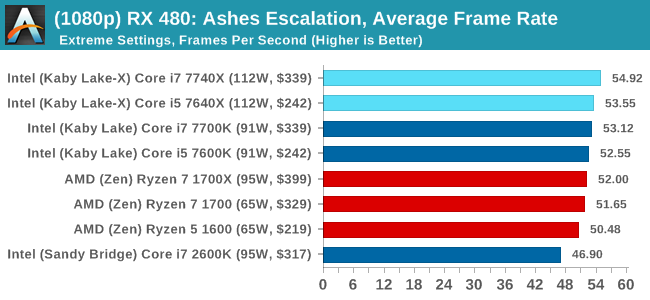

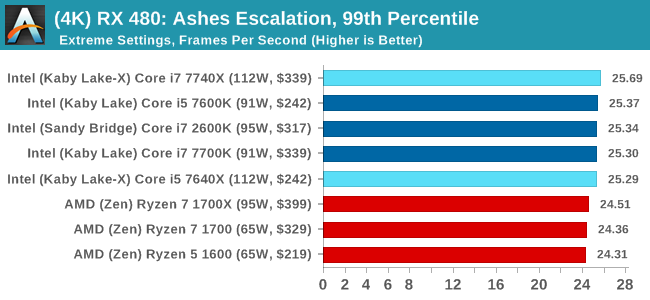

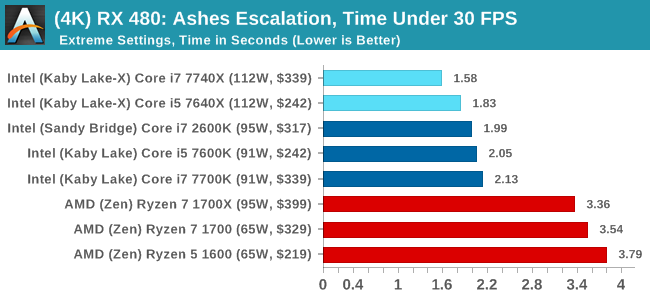

Sapphire RX 480 8GB Performance

1080p

4K

Ashes Conclusion

Pretty much across the board, no matter the GPU or the resolution, Intel gets the win here. This is most noticeable in the time under analysis, although AMD seems to do better when the faster cards are running at the lower resolution. That's nothing to brag about though.

176 Comments


iwod - Monday, July 24, 2017 - link

Intel has 10nm and 7nm by 2020 / 2021. Core Count is basically a solved problem, limited only by price. What we need is a substantial breakthrough in single thread performance. May be there are new material that could bring us 10+Ghz. But those aren't even on the 5 years roadmap.

mapesdhs - Monday, July 24, 2017 - link

That's more down to better sw tech, which alas lags way behind. It needs skills that are largely not taught in current educational establishments.

wolfemane - Monday, July 24, 2017 - link

Under Handbrake testing, just above the first graph you state:

"Low Quality/Resolution H264: He we transcode a 640x266 H264 rip of a 2 hour film, and change the encoding from Main profile to High profile, using the very-fast preset."

I think you mean to say "HERE we transcode..."

Great article overall. Thank you!

Ian Cutress - Monday, July 24, 2017 - link

Thanks, corrected :)

wolfemane - Monday, July 24, 2017 - link

I wish your team would finally add in an edit button to comments! :)

On the last graph ENCODING: Handbrake HEVC (4k) you don't list the 1800x, but it is present in the previous two graphs @ LQ and HQ. Was there an issue with the 1800x preventing 4k testing? Quite interested in it's results if you have them.

Ian Cutress - Monday, July 24, 2017 - link

When I first did the HEVC testing for the Ryzen 7 review, there was a slight issue in it running and halfway through I had to change the script because the automation sometimes dropped a result (like the 1800X which I didn't notice until I was 2-3 CPUs down the line). I need to put the 1800X back on anyway for AGESA 1006, which will be in an upcoming article.

IanHagen - Monday, July 24, 2017 - link

One thing that caught my eye for a while is how compile tests using GCC or clang show much better results on Ryzen compared to using Microsoft's VS compiler. Phoronix tests clearly shows that. Thus, I cannot really believe yet on Ian's recurring explanation of Ryzen suffering from its victim L3 cache. After all, the 1800X beats the 7700K by a sizable margin when compiling the Linux kernel.

Isn't Ryzen relatively poor performance compiling Chromium due to idiosyncrasies of the VS compiler?

Ian Cutress - Monday, July 24, 2017 - link

The VS compiler seems to love L3 cache, then. The 1800X does have 2x threads and 2x cores over the 7700K, accounting for the difference. We saw a -17% drop going from SKL-S with its fully inclusive L3 to SKL-SP with a victim L3, clock for clock.

Chromium was the best candidate for a scripted, consistent compile workflow I could roll into our new suite (and runs on Windows). Always open for suggestions that come with an ELI5.

ddriver - Monday, July 24, 2017 - link

So we are married to chromium, because it only compiles with msvc on windows?

Or maybe because it is a shitty implementation that for some reason stacks well with intel's offerings?

Pardon my ignorance, I've only been a multi-platform software developer for 8 years, but people who compile stuff a lot usually don't compile chromium all day.

I'd say go GCC or Clang, because those are quality community drive open source compilers that target a variety of platforms, unlike msvc. I mean if you really want to illustrate the usefulness of CPUs for software developers, which at this point is rather doubtful...

Ian Cutress - Monday, July 24, 2017 - link

Again, find me something I can rope into my benchmark suite with an ELI5 guide and I try and find time to look into it. The Chromium test took the best part of 2-3 days to get in a position where it was scripted and repeatable and fit with our workflow - any other options I examined weren't even close. I'm not a computer programmer by day either, hence the ELI5 - just years old knowledge of using Commodore BASIC, batch files, and some C/C++/CUDA in VS.