The NVIDIA GeForce GTX 1080 Ti Founder's Edition Review: Bigger Pascal for Better Performance

by Ryan Smith on March 9, 2017 9:00 AM EST

Second Generation GDDR5X: More Memory Bandwidth

One of the more unusual aspects of the Pascal architecture is the number of different memory technologies NVIDIA can support. At the datacenter level, NVIDIA has a full HBM2 memory controller, which they use for the GP100 GPU. Meanwhile for consumer and workstation cards, NVIDIA equips GP102/104 with a more traditional memory controller that supports both GDDR5 and its more exotic cousin: GDDR5X.

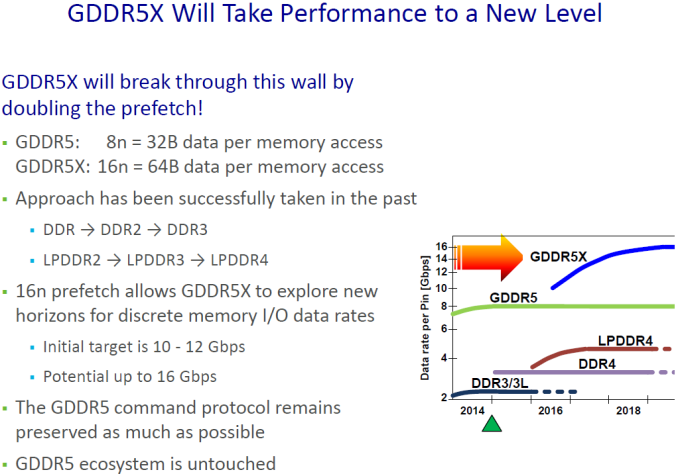

A half-generation upgrade of sorts, GDDR5X was developed by Micron to further improve memory bandwidth over GDDR5. It does so through a combination of a faster memory bus and wider memory operations that read and write more data from DRAM per clock. And though it’s not without its own costs, such as designing new memory controllers and boards that can accommodate the tighter requirements of the GDDR5X memory bus, GDDR5X offers a step in performance between the relatively cheap and slow GDDR5 and the relatively fast and expensive HBM2.

With rival AMD opting to focus on HBM2 and GDDR5 for Vega and Polaris respectively, NVIDIA has ended up being the only PC GPU vendor to adopt GDDR5X. The payoff for NVIDIA, besides the immediate benefits of GDDR5X, is that they can ship with memory configurations that AMD cannot. Meanwhile for Micron, NVIDIA is a very reliable and consistent customer for their GDDR5X chips.

When Micron initially announced GDDR5X, they laid out a plan to start at 10Gbps and ramp to 12Gbps (and beyond). Now just under a year after the launch of the GTX 1080 and the first generation of GDDR5X memory, Micron is back with their second generation of memory, which of course is being used to feed the GTX 1080 Ti. And NVIDIA, for their part, is very eager to talk about what this means for them.

With Micron’s second generation GDDR5X, NVIDIA is now able to equip their cards with 11Gbps memory. This is a 10% year-over-year improvement, and a not-insignificant change given that memory speeds increase at a fraction of the rate of GPU throughput. Coupled with GP102’s wider memory bus – which sees 11 of 12 lanes enabled for the GTX 1080 Ti – NVIDIA is able to offer just over 480GB/sec of memory bandwidth with this card, a 50% improvement over the GTX 1080.
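For readers who want to check the arithmetic, here is a minimal sketch of how these bandwidth figures fall out of the per-pin data rate and the bus width. It assumes that each of the 12 memory lanes on GP102 is 32 bits wide (so 11 enabled lanes give a 352-bit bus) and that the GTX 1080 pairs 10Gbps memory with a 256-bit bus; the function and variable names are purely illustrative.

```python
def memory_bandwidth_gb_per_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/sec.

    data_rate_gbps: per-pin data rate in gigabits per second.
    bus_width_bits: total width of the memory bus in bits.
    Dividing by 8 converts gigabits to gigabytes.
    """
    return data_rate_gbps * bus_width_bits / 8


# Assumption: each GP102 memory lane is 32 bits wide, with 11 of 12 enabled.
BITS_PER_LANE = 32
GTX_1080_TI_BUS_BITS = 11 * BITS_PER_LANE  # 352-bit bus

# Assumption: GTX 1080 uses 10Gbps GDDR5X on a 256-bit bus.
GTX_1080_BUS_BITS = 256

GTX_1080_TI_GBPS = memory_bandwidth_gb_per_s(11.0, GTX_1080_TI_BUS_BITS)
GTX_1080_GBPS = memory_bandwidth_gb_per_s(10.0, GTX_1080_BUS_BITS)
UPLIFT = GTX_1080_TI_GBPS / GTX_1080_GBPS

if __name__ == "__main__":
    print(f"GTX 1080 Ti: {GTX_1080_TI_GBPS:.0f} GB/sec")
    print(f"GTX 1080:    {GTX_1080_GBPS:.0f} GB/sec")
    print(f"Uplift:      {(UPLIFT - 1) * 100:.0f}%")
```

Under those assumptions the GTX 1080 Ti works out to 484GB/sec against 320GB/sec for the GTX 1080, which is where the "just over 480GB/sec" and roughly 50% figures come from. About 10 points of that gain come from the faster memory and the rest from the wider bus.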

For NVIDIA, this is something they’ve been eagerly awaiting. Pascal’s memory controller was designed for higher GDDR5X memory speeds from the start, but the memory itself needed to catch up. As one NVIDIA engineer put it to me, “We [NVIDIA] have it easy, we only have to design the memory controller. It’s Micron that has it hard, they have to actually make memory that can run at those speeds!”

Micron for their part has continued to work on GDDR5X after its launch, and even with what I’ve been hearing was a more-challenging-than-anticipated launch last year, both Micron and NVIDIA seem to be very happy with what Micron has been able to accomplish with their second generation GDDR5X memory.

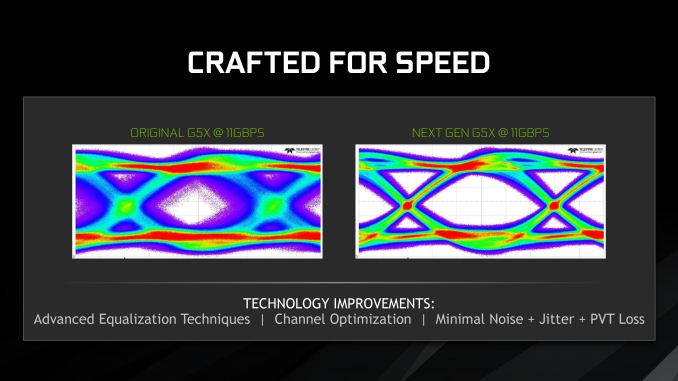

As demonstrated in eye diagrams provided by NVIDIA, Micron’s second generation memory coupled with NVIDIA’s memory controller is producing a very clean eye at 11Gbps, whereas the first generation memory (which was admittedly never specced for 11Gbps) would produce a very noisy eye. Consequently NVIDIA and their partners can finally push past 10Gbps for the GTX 1080 Ti and the forthcoming factory-overclocked GTX 1080 and GTX 1060 cards.

Under the hood, the big developments here were largely on Micron’s side. The company continued to optimize their metal layers for GDDR5X, and combined with improved test coverage were able to make a lot of progress over the first generation of memory. This in turn is coupled with improvements in equalization and noise reduction, resulting in the clean eye we see above.

Longer-term here, GDDR6 is on the horizon. But before then, Micron is still working on further improvements to GDDR5X. Micron’s original goal was to hit 12Gbps with this memory technology, and while they’re not there quite yet, I wouldn’t be too surprised to be having this conversation once again for 12Gbps memory within the next year.

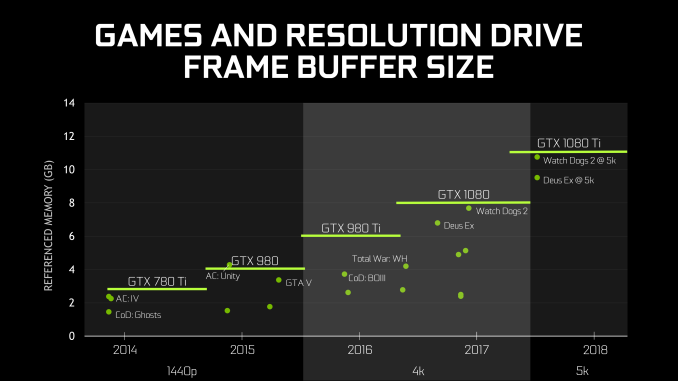

Finally, speaking of memory, it’s worth noting that NVIDIA also dedicated a portion of their GTX 1080 Ti presentation to discussing memory capacity. To be honest, I get the impression that NVIDIA feels like they need to rationalize equipping the GTX 1080 Ti with 11GB of memory, beyond the obvious conclusions that it is cheaper than equipping the card with 12GB and it better differentiates the GTX 1080 Ti from the Titan X Pascal.

In any case, NVIDIA believes that based on historical trends, 11GB will be sufficient for 5K gaming in 2018 and possibly beyond. Traditionally NVIDIA has not been especially generous on memory – cards like the 3GB GTX 780 Ti and 2GB GTX 770 felt the pinch a bit early – so going with a less-than-full memory bus doesn’t improve things there. On the other hand with the prevalence of multiplatform games these days, one of the biggest drivers in memory consumption was that the consoles had 8GB of RAM each; and with 11GB, the GTX 1080 Ti is well ahead of the consoles in this regard.

161 Comments

ddriver - Thursday, March 9, 2017 - link

It is kinda both, although I wouldn't really call it a job, because that's when you are employed by someone else to do what he says. More like it's my work and hobby. Building a super computer on the budget out of consumer grade hardware turned out very rewarding in every possible aspect.

Zingam - Friday, March 10, 2017 - link

This is something I'd like to do. Not necessarily with GPUs but I have no idea how to make any money to pay the bill yet. I've only started thinking about it recently.

eddman - Thursday, March 9, 2017 - link

"nvidia will pay off most game developers to sandbag"

AMD, nvidia, etc. might work with developers to optimize a game for their hardware.

Suggesting that they would pay developers to deliberately not optimize a game for the competition or even make it perform worse is conspiracy theories made up on the internet.

Not to mention it is illegal. No one would dare do it in this day and age when everything leaks eventually.

DanNeely - Thursday, March 9, 2017 - link

Something that blatant would be illegal. What nVidia does do is to offer a bunch of blobs that do various effects simulations/etc that can save developers a huge amount of time vs coding their own versions but which run much faster on their own hardware than nominally equivalent AMD cards. I'm not even going to accuse them of deliberately gimping AMD (or Intel) performance, only having a single code path that is optimized for the best results on their hardware will be sub-optimal on anything else. And because Gameworks is offered up as blobs (or source with "can't show it to AMD" NDA restrictions) AMD can't look at the code to suggest improvements to the developers or to fix things after the fact with driver optimizations.

eddman - Thursday, March 9, 2017 - link

True, but most of these effects are CPU-only, and fortunately the ones that run on the GPU can be turned off in the options.

Still, I agree that vendor specific, source-locked GPU effects are not helping the industry as a whole.

ddriver - Thursday, March 9, 2017 - link

Have you noticed anyone touching nvidia lately? They are in bed with the world's most evil bstards. Nobody can touch them. Their practice is they offer assistance on exclusive terms, all this aims to lock in developers into their infrastructure, or the very least on the implied condition they don't break a sweat optimizing for radeons.

I have very close friends working at AAA game studios and I know first hand. It all goes without saying. And nobody talks about it, not if they'd like to keep their job, or be able to get a good job in the industry in general.

nvidia pretty much do the same intel was found guilty of on every continent. But it is kinda less illegal, because it doesn't involve discounts, so they cannot really pin bribery on them, in case that anyone would dare challenge them.

amd is actually very competitive hardware wise, but failing at their business model, they don't have the money to resist nvidia's hold on the market. I run custom software at a level as professional as it gets, and amd gpus totally destroy nvidian at the same or even higher price point. Well, I haven't been able to do a comparison lately, as I have migrated my software stack to OpenCL2, which nvidia deliberately do not implement to prop up their cuda, but couple of years back I was able to do direct comparisons, and as mentioned above, nvidia offered 2 to 3 times worse value than amd. And nothing has really changed in that aspect, architecturally amd continue to offer superior compute performance, even if their DP rates have been significantly slashed in order to stay competitive with nvidia silicon.

A quick example:

~2500$ buys you either a:

fire pro with 32 gigs of memory and 2.6 tflops FP64 perf and top notch CL support

quadro with 8 gigs of memory and 0.13 tflops FP64 perf and CL support years behind

Better compute features, 4 times more memory and 20 times better compute performance at the same price. And yet the quadro outsells the firepro. Amazing, ain't it?

It is true that 3rd party cad software still runs a tad better on a quadro, for the reasons and nvidian practices outlined above, but even then, the firepro is still fast enough to do the job, while completely annihilating quadros in compute. Which is why at this year's end I will be buying amd gpus by the dozens rather than nvidia ones.

eddman - Friday, March 10, 2017 - link

So you're saying nvidia constantly engages in illegal activities with developers?

I don't see what pro cards and software have to do with geforce and games. There is no API lock-in for games.

thehemi - Friday, March 10, 2017 - link

> "And nobody talks about it, not if they'd like to keep their job"

Haha we're not scared of NVIDIA, they are just awesome. I'm in AAA for over a decade, they almost bought my first company and worked closely with my next three so I know them very well. Nobody is "scared" of NVIDIA. NVIDIA have their devrel down. They are much more helpful with optimizations, free hardware, support, etc. Try asking AMD for the same and they treat you like you're a peasant. When NVIDIA give us next-generation graphics cards for all our developers for free, we tend to use them. When NVIDIA sends their best graphics engineers onsite to HELP us optimize for free, we tend to take them up on their offers. Don't think I haven't tried getting the same out of AMD, they just don't run the company that way, and that's their choice.

And if you're really high up, their dev-rel includes $30,000 nights out that end up at the strip club. NVIDIA have given me some of the best memories of my life, they've handed me a next generation graphics card at GDC because I joked that I wanted one, they've funded our studio when it hit a rough patch and tried to justify it with a vendor promotion on stage at CES with our title. I don't think that was profitable for them, but the good-will they instilled definitely has been.

I should probably write a "Secret diaries of..." blog about my experiences, but the bottom line is they never did anything but offer help that was much appreciated.

Actually, heh, The worst thing they did, was turn on physx support by default for a game we made with them for benchmarks back when they bought Ageia. My game engine was used for their launch demo, and the review sites (including here I think) found out that if you turned a setting off to software mode, Intel chips doing software physics were faster than NVIDIA physics accelerated mode. Still not illegal, and still not afraid of keeping my job, since I've made it pretty obvious who I am to the right people.

ddriver - Friday, March 10, 2017 - link

Well, for you it might be the carrot, but for others it is the stick. Not all devs are as willing to leave their products unoptimized in exchange for a carrot as you are. Nor do they need nvidia to hold them by the hand and walk them through everything that is remotely complex in order to be productive.

In reality both companies treat you like a peasant, the difference is that nvidia has the resources to make you into a peasant they can use, while to poor old amd you are just a peasant they don't have the resources to pamper. Try this if you dare - instead of being a lazy grateful slob take the time and effort to optimize your engine to take the most of amd hardware and brag about that marvelous achievement, and see if nvidia's pampering will continue.

It is still technically a bribe - helping someone to do something for free that ends up putting them at an unfair advantage. It is practically the same thing as giving you the money to hire someone who is actually competent to do what you evidently cannot be bothered with or are unable to do. They still pay the people who do that for you, which would be the same thing if you paid them with money nvidia gave you for it. And you are so grateful for that assistance, that you won't even be bothered to optimize your software for that vile amd, who don't rush to offer to do your job for you like noble, caring nvidia does.

ddriver - Friday, March 10, 2017 - link

It is actually a little sad to see developers so cheap. nvidia took you to see strippers once and now you can't get your tongue out their ass :)

but it is understandable, as a developer there is a very high chance it was the first pussy you've seen in real life :D