AMD Announces Radeon Instinct: GPU Accelerators for Deep Learning, Coming In 2017

by Ryan Smith on December 12, 2016 9:00 AM EST

Posted in: GPUs, AMD, Radeon, Fiji, Machine Learning, Polaris, Vega, Neural Networks, AMD Instinct

MIOpen: The Radeon Instinct Software Stack

While solid hardware is the necessary starting point for building a deep learning product platform, as AMD has learned the hard way over the years, it takes more than good hardware to break into the HPC market. Software is just as important as hardware (if not more so), as software developers want to get as close to plug-and-play as possible. For this reason, frameworks and libraries that do a lot of the heavy lifting for developers are critical. Case in point: along with the Tesla hardware, the other secret ingredient in NVIDIA’s deep learning stable has been cuDNN and their other libraries, which have moved most of the effort of implementing deep learning systems off of software developers and on to NVIDIA. This is the kind of ecosystem AMD needs to be able to build for Radeon Instinct to crack the market.

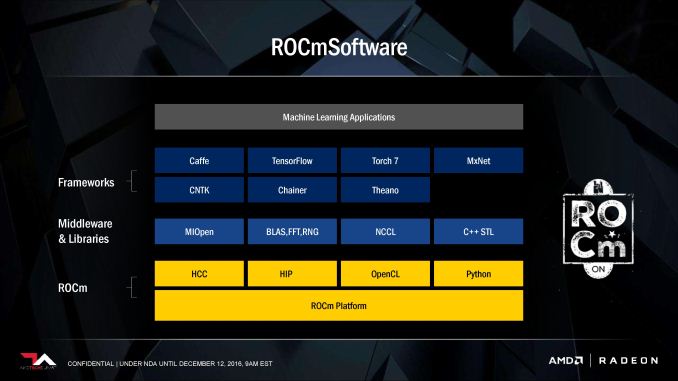

The good news for AMD is that they’re already partially there with ROCm, which lays the groundwork for their software stack. They now have the low-level tools, such as stable programming languages and good compilers, needed to build further libraries and frameworks. The Radeon Instinct software stack, then, is all about building on top of ROCm.

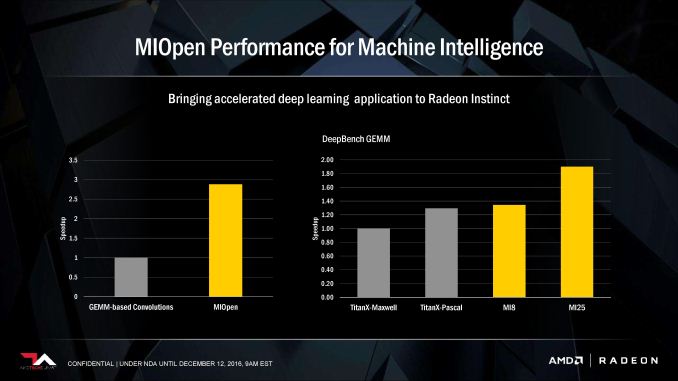

The cornerstone of AMD’s efforts here (and their answer to cuDNN) is MIOpen, a high performance deep learning library for Radeon Instinct. AMD’s performance slides should be taken with a suitably large grain of salt when it comes to competitive comparisons, but they nonetheless hammer the point home that the company has been focused on putting together a powerful library to support their cards. The library will be responsible for providing optimized routines for basic neural network functions such as convolution, normalization, and activation functions.
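To make those three categories of routine concrete, here is a minimal pure-Python sketch of what each one computes. This is not MIOpen's API: the function names (`conv1d`, `normalize`, `relu`) and the sample values are hypothetical, and a real library would run tuned GPU kernels over multi-dimensional tensors rather than looping over Python lists.

```python
import math

def conv1d(signal, kernel):
    """Valid-mode 1D convolution in the neural network sense
    (a sliding dot product; the kernel is not flipped)."""
    out_len = len(signal) - len(kernel) + 1
    return [
        sum(signal[i + j] * kernel[j] for j in range(len(kernel)))
        for i in range(out_len)
    ]

def normalize(values, eps=1e-5):
    """Shift values to zero mean and scale them to roughly unit variance.
    eps guards against division by zero when all values are equal."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]

def relu(values):
    """Rectified linear activation: negative inputs become zero."""
    return [max(0.0, v) for v in values]

# Illustrative input only: a short signal and a two-tap difference kernel.
features = conv1d([1.0, 3.0, 2.0, 5.0], [1.0, -1.0])
activations = relu(normalize(features))

print(features)  # [-2.0, 1.0, -3.0]
```

Chaining the three calls mirrors, in miniature, how a framework such as Caffe or TensorFlow would hand each layer's work to a library of this kind instead of implementing it itself.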

Meanwhile, built on top of MIOpen will be updated versions of the major deep learning frameworks, including Caffe, Torch 7, and TensorFlow. It’s these common frameworks that deep learning applications are actually built against, and as a result AMD has been lending their support to the developers of these frameworks to get MIOpen/AMD-optimized paths added to them. All of this can sound a bit mundane to outsiders, but its importance cannot be overstated; it’s the low-level work that is necessary for AMD to turn the Instinct hardware into a complete ecosystem.

Alongside their direct library and framework support, when it comes to the Instinct software stack, expect to see AMD once again hammer the benefits of being open source. AMD has staked the entire ROCm platform on this philosophy, so it’s to be expected. Nonetheless, it’s an interesting point of contrast to NVIDIA’s largely closed ecosystem. AMD believes that deep learning developers are looking for a more open software stack than what NVIDIA has provided – that being closed has limited developers’ ability to make full use of NVIDIA’s platform – so this will be AMD’s opportunity to put that to the test.

Instinct Servers

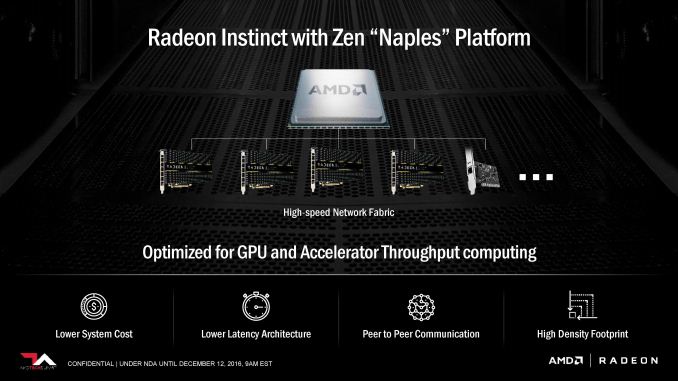

Finally, along with creating a full hardware and software ecosystem for the Instinct product family, AMD also has their eye on the bigger picture, going beyond individual cards and out to servers and whole racks. As a manufacturer of both GPUs and CPUs, AMD is in a rare position to be able to offer a complete hardware platform, and as a result the company is keen to take advantage of the synergy between CPU and GPU to offer something that their rivals cannot.

The basis for this effort is AMD’s upcoming Naples platform, the server platform based on Zen. Besides offering a potentially massive performance increase over AMD’s now well-outdated Bulldozer server platform, Naples lets AMD flex their muscle in heterogeneous applications, tapping into their previous experience with HSA. This is a bit more forward looking – Naples doesn’t have an official launch date yet – but AMD is optimistic about their ability to own large-scale deployments, providing both the CPU and the GPUs in large deep learning installations.

Going a bit off the beaten path here, perhaps the most interesting aspect of Naples as it intersects with Radeon Instinct comes down to PCIe lanes. All signs point to Naples offering at least 64 PCIe lanes per CPU; this is an important metric because it means there are enough lanes to give up to 4 Instinct cards a full, dedicated PCIe x16 link to the host CPU and the other cards. Intel’s Xeon platform only offers 40 PCIe lanes per CPU, which means a quad-card configuration has to sacrifice bandwidth or latency in one of three ways: mixing x8 and x16 slots, using two Xeon CPUs, or building in a high-end PCIe switch to route together 4 x16 slots. Ultimately, for installations focusing on GPU-heavy workloads, this gives AMD a distinct advantage, since it means they can drive 4 Instinct cards off of a single CPU, making it a cheaper option than the aforementioned Xeon configurations.
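The lane arithmetic behind that comparison can be checked with a short sketch. The lane counts (64 for Naples, 40 for Xeon) are the figures quoted above; the function names are hypothetical, and the sketch makes the simplifying assumption that every CPU lane is available to GPUs, with none reserved for storage or networking.

```python
LANES_PER_X16_LINK = 16

def full_x16_links(cpu_lanes: int) -> int:
    """How many cards can each get a dedicated x16 link from one CPU."""
    return cpu_lanes // LANES_PER_X16_LINK

def widest_uniform_link(cpu_lanes: int, cards: int) -> int:
    """Widest standard PCIe link width that every card can get
    without a switch; returns 0 if even x1 per card does not fit."""
    for width in (16, 8, 4, 2, 1):
        if width * cards <= cpu_lanes:
            return width
    return 0

naples_lanes = 64  # "at least 64" per the article, so this is a lower bound
xeon_lanes = 40

print(full_x16_links(naples_lanes))         # 4
print(full_x16_links(xeon_lanes))           # 2
print(widest_uniform_link(xeon_lanes, 4))   # 8
```

Under these assumptions a single 40-lane CPU tops out at two full x16 links, so four cards either drop to x8 each or use a mix such as one x16 plus three x8 links (16 + 24 = 40), which is the trade-off described above.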

In any case, AMD has already lined up partners to show off Naples server configurations for the Radeon Instinct. SuperMicro and Inventec are both showcasing server/rack designs for anywhere between 3 and 120 Instinct MI25 cards. The largest systems will of course involve off-system networking and more complex networking fabrics, and while AMD isn’t saying too much on the subject at this time, it’s clear that they’re being mindful of what they need to support truly massive clusters of cards.

Closing Thoughts

Wrapping things up, while today’s Radeon Instinct revelations are closer to a teaser than a fully fleshed out product announcement, it’s nonetheless clear that AMD is about to embark on their most aggressive move in the GPU server space since their first FireStream cards almost a decade ago. Making gains in the server space has long been one of the keys to AMD’s success, both for CPUs and GPUs, and with the Radeon Instinct hardware and overarching initiative, AMD has laid out a critical plan for how to get there.

Not that any of this will come easy for AMD. Breaking back into the server market is a recurring theme for them, and their struggles there are why it’s recurring. NVIDIA is already several steps ahead of AMD in the deep learning GPU market, so AMD needs to be quick to catch up. The good news for AMD here is that unlike the broader GPU server market, the deep learning market is still young, so AMD has the opportunity to act before anyone gets too entrenched. It’s still early enough in the game that AMD believes that if they can flip just a few large customers – the Googles and Facebooks of the world – they can make up for lost time and capture a significant chunk of the deep learning market.

With that said, as the Radeon Instinct products are not set to ship until H1 of 2017, a lot can change, both inside and outside of AMD. The company has laid down what looks to be a solid plan, but now they need to show that they can follow through on it, executing on both hardware and software on schedule, and handling the inevitable curveball. If they can do that, then the deep learning market may very well be that server GPU success that the company has spent much of the past decade looking for.

39 Comments

TheinsanegamerN - Tuesday, December 13, 2016 - link

the kind of servers these cards aim for should be able to handle the load. And odds are many will simply be buying new servers with new hardware, rather than buying new cards and putting them in old servers.

Michael Bay - Monday, December 12, 2016 - link

I'd like to know if AMD is sharing PR team with Seagate, or vice versa. Oh those product names.

Yojimbo - Monday, December 12, 2016 - link

This is probably going to force NVIDIA to rethink the current strategic product segmentation they've implemented by withholding packed FP16 support from the Titan X cards. These announced products from AMD don't really compete with the P100, I think, but they are appealing for anyone thinking of training neural networks on the Titan X or scaled out servers using the Titan X. The Volta-based iteration of the Titan X may need to include FP16 support, which may then force that support onto the Volta-based P40 and P4 replacements, as well.

These AMD products are well too late to affect the Pascal generation cards, though. It takes a long time for a new product to be qualified for a large server and I'm guessing the middleware and framework support isn't really there either, and isn't likely to be up to snuff for a while.

p1esk - Monday, December 12, 2016 - link

Amen, brother. This separation of training/inference to run on different hardware pissed me off. I hope Nvidia gets a little bit of competition.

On the other hand, maybe we will find a way to train networks with 8 bits precision, after all it's highly unlikely our biological neurons/synapses are that precise.

Threska - Sunday, January 1, 2017 - link

Analog is a different beast.

Holliday75 - Monday, December 12, 2016 - link

My portfolio likes this news and I am thrilled to see the lack of comments asking how many display ports this card has.

BenSkywalker - Monday, December 12, 2016 - link

What segment are they shooting for with these parts?

The MI6 which is implied to be an inference targeted device has one quarter the performance at three times the power draw of the P4 for INT8, this doesn't look bad, it looks like an embarrassment to the industry. ~8.5% of the performance per watt of parts that have been shipping for a while now for a product we don't even have a launch date for?

The MI8 doesn't have enough memory to do any data heavy workloads, it is too big and way too power hungry for its performance for inference, what exactly is this part any good for?

MI25 without memory amounts, bandwidth and some useful performance numbers(TOPS) it's hard to gauge where this is going to fall. Maybe this could be useful as an entry level device if priced really cheap?

Their software stack, well, AMD has a justly earned reputation of being a third tier, at best, software development house. The only hope they have is relying on the community, their problem is going to be despite the borderline vulgar levels of misinformation and propaganda to the contrary, this *IS* an established market with massive resources already devoted to it, and AMD is coming very late to the game and are going to try and woo resources that have been working with the competition for years already?

Comments on high levels of bandwidth for large scale deployment are kind of quaint. Why are you comparing the AMD solutions to those of Intel for high end usage? The high end for this market is using Power/nVidia with NVLink and measuring bandwidth in TB/s, the segment you are talking about is, at best, mid tier.

What's worse, from a useful information perspective, is your comments that AMD making their own CPUs is rare in this market. In terms of volume the most popular use case for deep learning is going to be paired with ARM processors for the next decade at least- a market that has many players already and nVidia is quickly pushing them out of the segment. The only real viable competition at this point seems likely to come from Intel and their upcoming Xeon Phi parts, which appear to be likely to ship roughly when AMD would be shipping these parts.

Pretty much, everyone that matters in deep learning makes CPUs.

p1esk - Monday, December 12, 2016 - link

*Pretty much, everyone that matters in deep learning makes CPUs.*

Sorry, what?

BenSkywalker - Monday, December 12, 2016 - link

Intel, nVidia, IBM and Qualcomm currently represent all of the major players- I know there are a bunch of FPGA and DSPs on the drawing board, but out of actual shipping solutions, the players all make their own CPUs.

Obviously I'm talking about the hardware manufacturer side.

Yojimbo - Monday, December 12, 2016 - link

It'll be interesting to see what Graphcore's offering is like.