AMD Announces Radeon Instinct: GPU Accelerators for Deep Learning, Coming In 2017

by Ryan Smith on December 12, 2016 9:00 AM EST

Posted in: GPUs, AMD, Radeon, Fiji, Machine Learning, Polaris, Vega, Neural Networks, AMD Instinct

With the launch of their Polaris family of GPUs earlier this year, much of AMD’s public focus in this space has been on the consumer side of matters. However, now that the consumer launch is behind them, AMD’s attention has been freed to focus on what comes next for their GPU families both present and future: the high-performance computing market. To that end, today AMD is taking the wraps off of their latest combined hardware and software initiative for the server market: Radeon Instinct. Aimed directly at the young-but-quickly-growing deep learning/machine learning/neural networking market, AMD is looking to grab a significant piece of what is potentially a very large and profitable market for the GPU vendors.

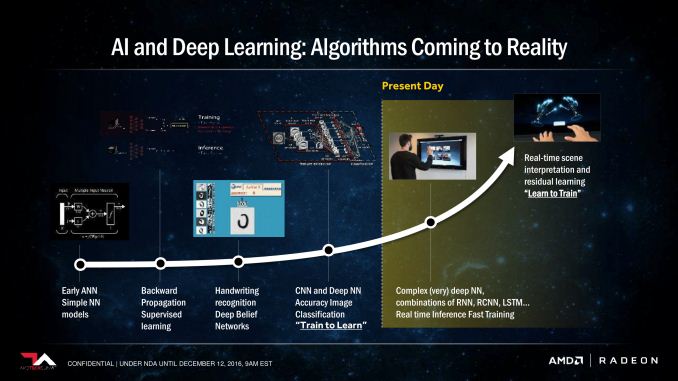

Broadly speaking, while the market for HPC GPU products has been slower to evolve than first anticipated, it has at last started to arrive in the last couple of years. Early efforts to port neural networking models to GPUs combined with their significant year-after-year performance growth have not only created a viable GPU market for deep learning, but one that is rapidly growing as well. It’s not quite what anyone had in mind a decade ago when the earliest work with GPU computing began, but then rarely are the killer apps for new technologies immediately obvious.

At present the market for deep learning is still young (and its uses somewhat poorly defined), but as the software around it matures, there is increasing consensus on the utility of applying neural networks to analyze large amounts of data. Be it visual tasks like facial recognition in photos or speech recognition in videos, GPUs are finally making neural networks dense enough – and therefore powerful enough – to do meaningful work. This is why major technology industry players from Google to Baidu are either investigating or making use of deep learning technologies, with many smaller players using them to address more mundane needs.

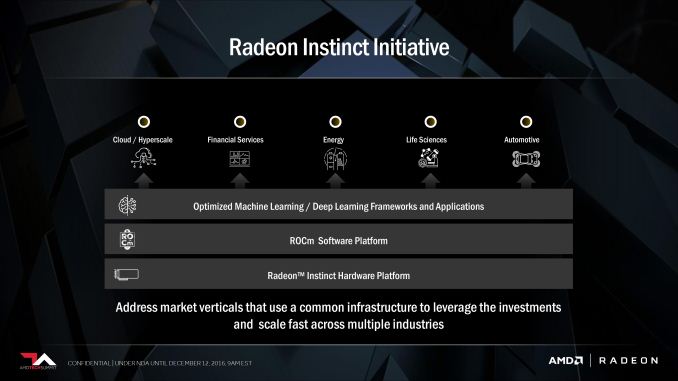

Getting to the meat of AMD’s announcement today then, deep learning has the potential to be a very profitable market for the GPU manufacturer, and as a result the company has put together a plan for the next year to break into that market. That plan is the Radeon Instinct initiative, a combination of hardware (Instinct) and an optimized software stack to serve the deep learning market. Rival NVIDIA is of course already heavily invested in the deep learning market – all but staking the Tesla P100 on it – and it has paid off handsomely for the company as their datacenter revenues have exploded.

As is too often the case for AMD, they approach the deep learning market as the outsider looking in. AMD has struggled for years in the HPC GPU market, and their fortunes have only very recently improved as the Radeon Open Compute Platform (ROCm) has started paying dividends in the last few months. The good news for AMD here is that by starting on ROCm in 2015, they’ve already laid a good part of the groundwork needed to tackle the deep learning market, and while reaching parity with NVIDIA in the wider HPC market is still some time off, the deep learning market is far newer, and NVIDIA is much less entrenched. If AMD plays their cards right and isn’t caught off guard by NVIDIA, they believe they could capture a significant portion of the market within a generation.

(As an aside, this is the kind of quick, agile action that AMD hasn’t even been able to plan for in the past. If AMD is successful here, I think it will prove the value of creating the semi-autonomous Radeon Technologies Group under Raja Koduri.)

39 Comments

TheinsanegamerN - Tuesday, December 13, 2016 - link

The kind of servers these cards aim for should be able to handle the load. And odds are many will simply be buying new servers with new hardware, rather than buying new cards and putting them in old servers.

Michael Bay - Monday, December 12, 2016 - link

I'd like to know if AMD is sharing a PR team with Seagate, or vice versa. Oh those product names.

Yojimbo - Monday, December 12, 2016 - link

This is probably going to force NVIDIA to rethink the current strategic product segmentation they've implemented by withholding packed FP16 support from the Titan X cards. These announced products from AMD don't really compete with the P100, I think, but they are appealing for anyone thinking of training neural networks on the Titan X or scaled out servers using the Titan X. The Volta-based iteration of the Titan X may need to include FP16 support, which may then force that support onto the Volta-based P40 and P4 replacements, as well.

These AMD products are far too late to affect the Pascal generation cards, though. It takes a long time for a new product to be qualified for a large server and I'm guessing the middleware and framework support isn't really there either, and isn't likely to be up to snuff for a while.

p1esk - Monday, December 12, 2016 - link

Amen, brother. This separation of training/inference to run on different hardware pissed me off. I hope Nvidia gets a little bit of competition.

On the other hand, maybe we will find a way to train networks with 8-bit precision; after all, it's highly unlikely our biological neurons/synapses are that precise.

Threska - Sunday, January 1, 2017 - link

Analog is a different beast.

Holliday75 - Monday, December 12, 2016 - link

My portfolio likes this news and I am thrilled to see the lack of comments asking how many display ports this card has.

BenSkywalker - Monday, December 12, 2016 - link

What segment are they shooting for with these parts?

The MI6, which is implied to be an inference-targeted device, has one quarter the performance at three times the power draw of the P4 for INT8. This doesn't look bad, it looks like an embarrassment to the industry. ~8.5% of the performance per watt of parts that have been shipping for a while now, for a product we don't even have a launch date for?

The MI8 doesn't have enough memory to do any data-heavy workloads, and it is too big and way too power hungry for its performance for inference. What exactly is this part any good for?

As for the MI25, without memory amounts, bandwidth, and some useful performance numbers (TOPS), it's hard to gauge where this is going to fall. Maybe this could be useful as an entry level device if priced really cheap?

Their software stack, well, AMD has a justly earned reputation of being a third tier, at best, software development house. The only hope they have is relying on the community. Their problem is going to be that, despite the borderline vulgar levels of misinformation and propaganda to the contrary, this *IS* an established market with massive resources already devoted to it, and AMD is coming very late to the game and is going to try to woo resources that have been working with the competition for years already?

Comments on high levels of bandwidth for large scale deployment are kind of quaint. Why are you comparing the AMD solutions to those of Intel for high-end usage? The high end for this market is using Power/nVidia with NVLink and measuring bandwidth in TB/s; the segment you are talking about is, at best, mid tier.

What's worse, from a useful information perspective, are your comments that AMD making their own CPUs is rare in this market. In terms of volume, the most popular use case for deep learning is going to be paired with ARM processors for the next decade at least, a market that has many players already and where nVidia is quickly pushing them out of the segment. The only real viable competition at this point seems likely to come from Intel and their upcoming Xeon Phi parts, which appear likely to ship roughly when AMD would be shipping these parts.

Pretty much, everyone that matters in deep learning makes CPUs.

p1esk - Monday, December 12, 2016 - link

*Pretty much, everyone that matters in deep learning makes CPUs.*

Sorry, what?

BenSkywalker - Monday, December 12, 2016 - link

Intel, nVidia, IBM and Qualcomm currently represent all of the major players. I know there are a bunch of FPGAs and DSPs on the drawing board, but out of actual shipping solutions, the players all make their own CPUs.

Obviously I'm talking about the hardware manufacturer side.

Yojimbo - Monday, December 12, 2016 - link

It'll be interesting to see what Graphcore's offering is like.