AMD Announces Radeon Instinct: GPU Accelerators for Deep Learning, Coming In 2017

by Ryan Smith on December 12, 2016 9:00 AM EST

Posted in: GPUs, AMD, Radeon, Fiji, Machine Learning, Polaris, Vega, Neural Networks, AMD Instinct

With the launch of their Polaris family of GPUs earlier this year, much of AMD’s public focus in this space has been on the consumer side of matters. However, now that the consumer launch is behind them, AMD’s attention has been freed to focus on what comes next for their GPU families both present and future, and that is the high-performance computing market. To that end, today AMD is taking the wraps off of their latest combined hardware and software initiative for the server market: Radeon Instinct. Aimed directly at the young but quickly growing deep learning/machine learning/neural networking market, Radeon Instinct is AMD’s bid to grab a significant piece of what is potentially a very large and profitable market for the GPU vendors.

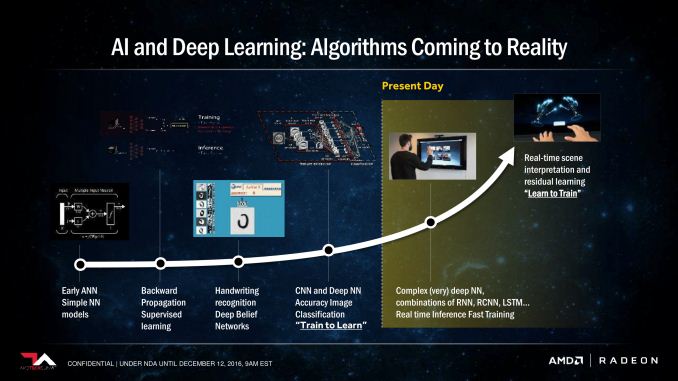

Broadly speaking, while the market for HPC GPU products has been slower to evolve than first anticipated, it has at last started to arrive in the last couple of years. Early efforts to port neural networking models to GPUs, combined with the GPUs’ significant year-after-year performance growth, have not only created a viable GPU market for deep learning, but one that is rapidly growing as well. It’s not quite what anyone had in mind a decade ago when the earliest work with GPU computing began, but then the killer apps for new technologies are rarely immediately obvious.

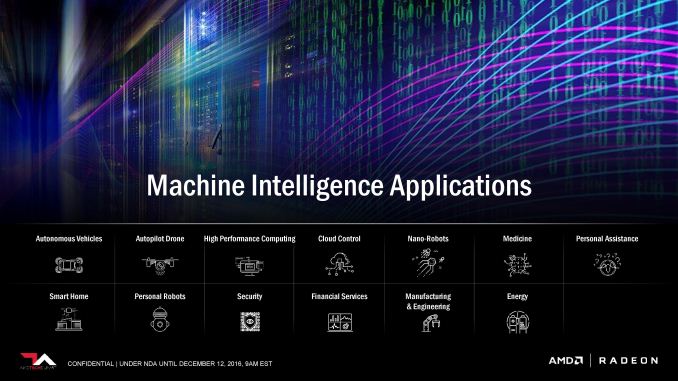

At present the market for deep learning is still young (and its uses somewhat poorly defined), but as the software around it matures, there is increasing consensus on the utility of applying neural networks to analyze large amounts of data. Be it visual tasks like facial recognition in photos or speech recognition in videos, GPUs are finally making neural networks dense enough – and therefore powerful enough – to do meaningful work. This is why major technology industry players from Google to Baidu are either investigating or making use of deep learning technologies, with many smaller players using them to address more mundane needs.

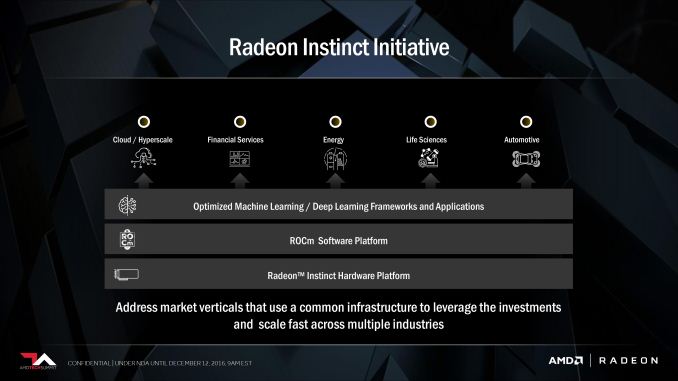

Getting to the meat of AMD’s announcement today then, deep learning has the potential to be a very profitable market for the GPU manufacturer, and as a result the company has put together a plan for the next year to break into that market. That plan is the Radeon Instinct initiative, a combination of hardware (Instinct) and an optimized software stack to serve the deep learning market. Rival NVIDIA is of course already heavily invested in the deep learning market – all but staking the Tesla P100 on it – and it has paid off handsomely for the company as their datacenter revenues have exploded.

As is too often the case for AMD, they approach the deep learning market as the outsider looking in. AMD has struggled for years in the HPC GPU market, and their fortunes have only very recently improved as the Radeon Open Compute Platform (ROCm) has started paying dividends in the last few months. The good news for AMD here is that by starting on ROCm in 2015, they’ve already laid a good part of the groundwork needed to tackle the deep learning market, and while reaching parity with NVIDIA in the wider HPC market is still some time off, the deep learning market is far newer, and NVIDIA is much less entrenched. If AMD plays their cards right and isn’t caught off guard by NVIDIA, they believe they could capture a significant portion of the market within a generation.

(As an aside, this is the kind of quick, agile action that AMD hasn’t even been able to plan for in the past. If AMD is successful here, I think it will prove the value in creating the semi-autonomous Radeon Technologies Group under Raja Koduri.)

39 Comments


CoD511 - Saturday, December 31, 2016 - link

Well, what I find shocking is the P4 with a 5.5TFLOP rating at 50w/75w versions as the rated maximum power, not even using TDP to obfuscate the numbers. It's right near the output of the 1070 but the power numbers are just, what? If that's true and it may well be considering they're available products, I wonder how they've got that set up to draw so little power yet output so much or process at such speed.

jjj - Monday, December 12, 2016 - link

The math doesn't work like that at all.

Additionally, we don't know the die size and the GPU die is not the only thing using power on a GPU AiB.

What we do know is that it gets more at 300W rated TDP than Nvidia's P100.

ddriver - Monday, December 12, 2016 - link

"The math doesn't work like that at all." - neither do flamboyant statements devoid of substantiation. The numbers are exactly where I'd expect them to be based on rough estimates on the cost of implementing a more fine-grained execution engine.

RussianSensation - Monday, December 12, 2016 - link

Almost everyone has gotten this wrong this generation. It's not 2 node jumps because the 14nm GloFo and 16nm TSMC are really "20nm equivalent nodes." The 14nm/16nm at GloFo and TSMC are more marketing than a true representation.

"Bottom line, lithographically, both 16nm and 14nm FinFET processes are still effectively offering a 20nm technology with double-patterning of lower-level metals and no triple or quad patterning."

https://www.semiwiki.com/forum/content/1789-16nm-f...

Intel's 14nm is far superior to the 14nm/16nm FinFET nodes offered by GloFo and TSMC at the moment.

abufrejoval - Monday, December 19, 2016 - link

Which is *exactly* why I find the rumor that AMD is licensing its GPUs to Chipzilla to replace Intel iGPUs so scary: With that Intel would be able to produce true HSA APUs with HBM2 and/or EDRAM which nobody else can match.

Intel has given away more than 50% of silicon real-estate for years for free to starve off Nvidia and AMD (isn't that illegal silicon dumping?) and now they could be ripping the crown jewels off a starving AMD to crash NVidia where Knights Lansing failed.

AMD having on-par CPU technology now is only going to pull some punch, when it's accompanied with a powerful GPU part in their APUs that Intel can't match and NVidia can't deliver.

They license that to Intel, they are left with nothing to compete with.

Perhaps Intel lured AMD by offering their foundries for dGPU, which would allow ATI to make a temporary return. I can't see Intel feeding snakes at their fab-bosom (or producing "Zenselves").

At this point in the silicon end game, technology becomes a side show to politics and it's horribly fascinating to watch.

cheshirster - Sunday, January 8, 2017 - link

If Apple is a customer everything is possible.

lobz - Tuesday, December 13, 2016 - link

ddriver......do you have any idea what else is going on under the hood of that surprisingly big card? =}

there could be a lot of things accumulating that add up to <300W, which is still lower then the P100's 10,6 TF @ 300W =}

hoohoo - Wednesday, December 14, 2016 - link

You're being hyperbolic.

24.00 W/TF for Vega.

21.34 W/TF for Fiji.

12% higher power use for Vega. That's not really gutted.

Haawser - Monday, December 12, 2016 - link

PCIe P100 = 18.7TF of 16bit in 250W

PCie Vega = ~24TF of 16bit in 300W

In terms of perf/W Vega might get ~22% more perf for ~20% more power. So essentially they should be near as darnit the same. Except AMD will probably be cheaper, and because each card is more powerful, you'll be able to pack more compute into a given amount of rack space. Which is what the people who run multi-million $ HPC research machines will *really* be interested in, because that's kind of their job.
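(The arithmetic in the comment above is easy to check. The short Python sketch below uses only the two figures quoted there, 18.7 TF in 250 W for the PCIe P100 and roughly 24 TF in 300 W for Vega; the Vega number is the commenter's estimate rather than a confirmed specification, and the dictionary and function names are made up purely for illustration.)

```python
# Figures as quoted in the comment; the Vega values are the commenter's
# estimate, not a confirmed specification.
P100_PCIE = {"fp16_tflops": 18.7, "watts": 250.0}
VEGA_EST = {"fp16_tflops": 24.0, "watts": 300.0}


def perf_per_watt(card):
    """Return FP16 TFLOPS per watt for a card described as a dict."""
    return card["fp16_tflops"] / card["watts"]


def percent_more(new, old):
    """Return how much larger `new` is than `old`, in percent."""
    return (new / old - 1.0) * 100.0


perf_gain = percent_more(VEGA_EST["fp16_tflops"], P100_PCIE["fp16_tflops"])
power_gain = percent_more(VEGA_EST["watts"], P100_PCIE["watts"])
efficiency_gain = percent_more(perf_per_watt(VEGA_EST), perf_per_watt(P100_PCIE))

print(f"FP16 throughput: {perf_gain:.1f}% more")
print(f"Board power:     {power_gain:.1f}% more")
print(f"Perf per watt:   {efficiency_gain:.1f}% better")
```

(Taken at face value, the quoted figures work out to roughly 28% more FP16 throughput for 20% more power, which is somewhat more than the ~22% given in the comment, and to about a 7% edge in perf/W. That is still close enough to support the "near as darnit the same" conclusion.)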

Ktracho - Monday, December 12, 2016 - link

Not all servers can handle providing 300 W to add in cards, so even if they are announced as 300 W cards, they may be limited to something closer to 250 W in actual deployments.