The Intel SSD 600p (512GB) Review

by Billy Tallis on November 22, 2016 10:30 AM EST

Performance Consistency

Our performance consistency test explores the extent to which a drive can reliably sustain performance during a long-duration random write test. Specifications for consumer drives typically list peak performance numbers only attainable in ideal conditions. Worst-case performance can be drastically different: over the course of a long test, drives can run out of spare area, have to start performing garbage collection, and sometimes even reach power or thermal limits.

In addition to an overall decline in performance, a long test can show patterns in how performance varies on shorter timescales. Some drives will exhibit very little variance in performance from second to second, while others will show massive drops in performance during each garbage collection cycle but otherwise maintain good performance, and others show constantly wide variance. If a drive periodically slows to hard drive levels of performance, it may feel slow to use even if its overall average performance is very high.

To maximally stress the drive's controller and force it to perform garbage collection and wear leveling, this test conducts 4kB random writes with a queue depth of 32. The drive is filled before the start of the test, and the test duration is one hour. Any spare area will be exhausted early in the test and by the end of the hour even the largest drives with the most overprovisioning will have reached a steady state. We use the last 400 seconds of the test to score the drive both on steady-state average writes per second and on consistency, which is that average divided by the standard deviation.
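As a rough illustration of the two scores described above, the sketch below computes a steady-state average and a consistency figure from a list of per-second IOPS samples. It is only a sketch under stated assumptions: the function name `steady_state_scores`, the one-sample-per-second logging, the use of the population standard deviation, and both example traces are hypothetical and are not taken from the actual test harness.

```python
from statistics import mean, pstdev


def steady_state_scores(iops_per_second, window=400):
    """Return (average IOPS, consistency) over the last `window` samples.

    Assumption: one IOPS sample per second, so window=400 covers the
    last 400 seconds of the run. Consistency is average / standard
    deviation; the population standard deviation is an assumed choice.
    """
    tail = iops_per_second[-window:]
    avg = mean(tail)
    sd = pstdev(tail)
    # A perfectly flat trace has zero deviation; report it as infinitely consistent.
    consistency = avg / sd if sd > 0 else float("inf")
    return avg, consistency


# Two made-up 600-second traces with the same average of 10,000 IOPS.
steady = [9_900, 10_100] * 300                             # small second-to-second wobble
sawtooth = [2_000, 6_000, 10_000, 14_000, 18_000] * 120    # repeated deep drops

for name, trace in (("steady", steady), ("sawtooth", sawtooth)):
    avg, consistency = steady_state_scores(trace)
    print(f"{name}: average={avg:.0f} IOPS, consistency={consistency:.2f}")
```

Both hypothetical traces score the same on average IOPS, but the sawtooth trace gets a far lower consistency figure, which is why the two scores are reported separately.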

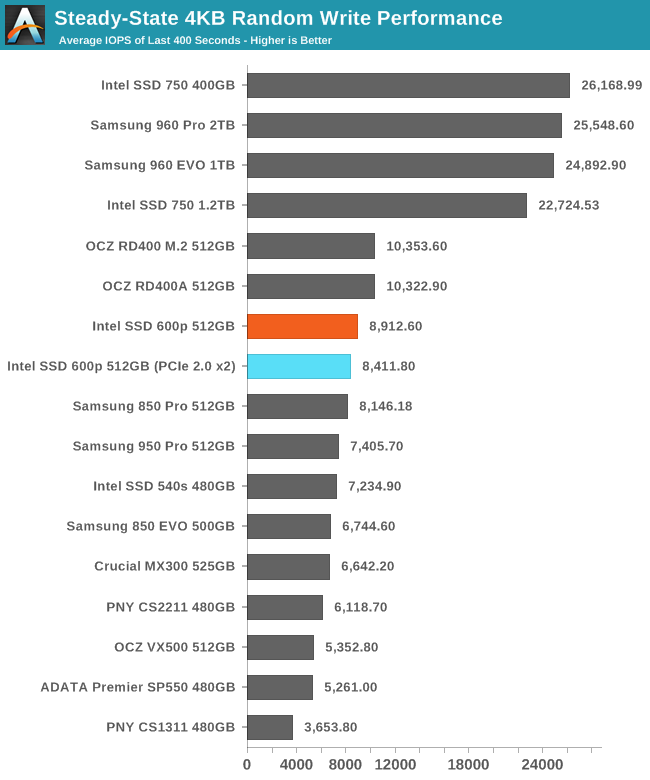

The Intel 600p's steady state random write performance is reasonably fast, especially for a TLC SSD. The 600p is faster than all of the SATA SSDs in this collection. The Intel 750 and Samsung 960s are in an entirely different league, but the OCZ RD400 is only slightly ahead of the 600p.

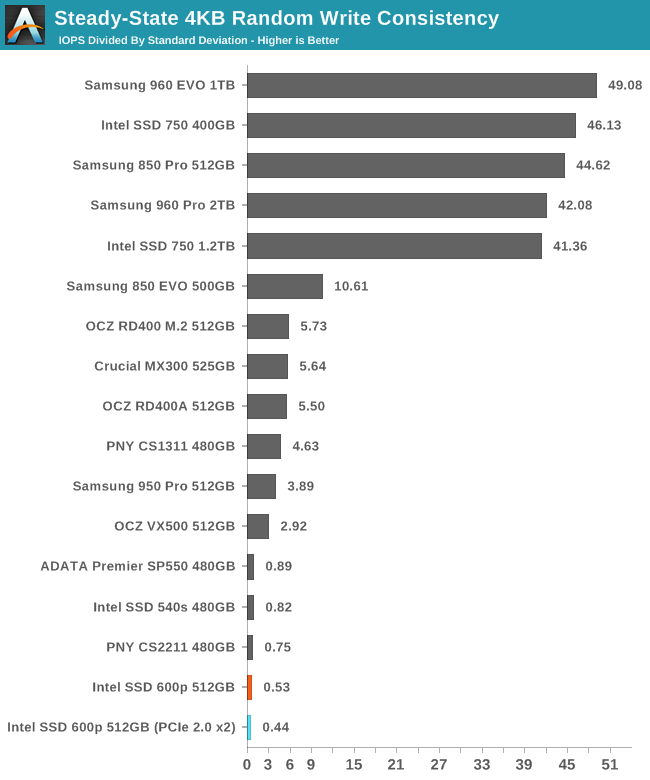

Despite a decently high average performance, the 600p has a very low consistency score, indicating that even after reaching steady state, the performance varies widely and the average does not tell the whole story.

Graph views: Default, 25% Over-Provisioning

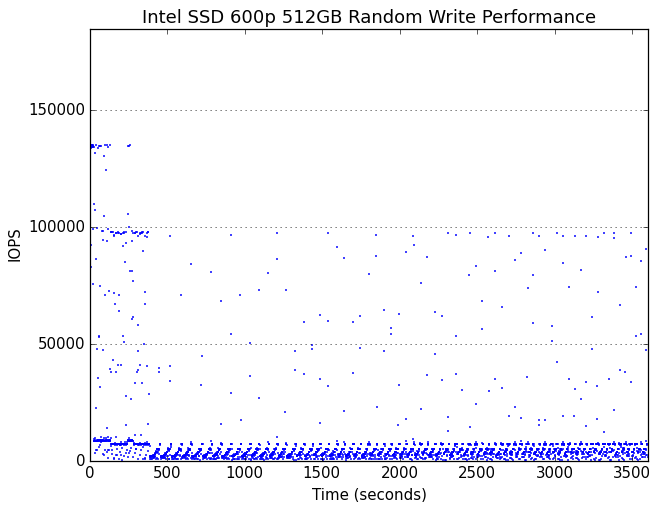

Very early in the test, the 600p begins showing cyclic drops in performance due to garbage collection. Several minutes into the hour-long test, the drive runs out of spare area and reaches steady state.

Graph views: Default, 25% Over-Provisioning

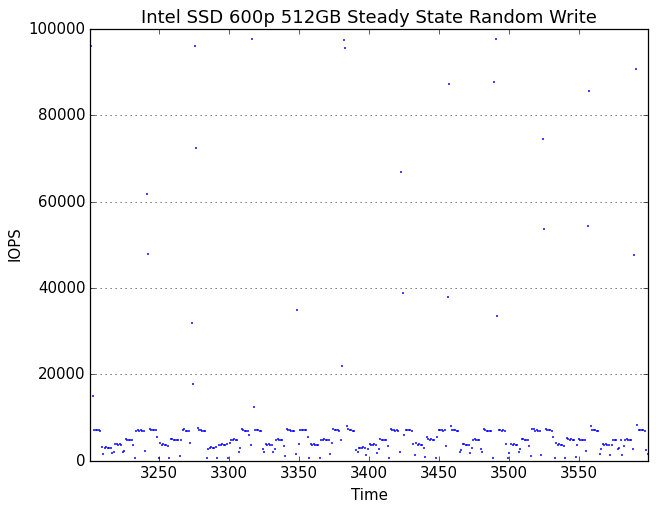

In its steady state, the 600p spends most of the time tracing out a sawtooth curve of performance that has a reasonable average but is constantly dropping down to very low performance levels. Oddly, there are also brief moments of unhindered performance where the drive spikes to exceptionally high performance of up to 100k IOPS, but these are short and infrequent enough to have little impact on the average performance. It would appear that the 600p occasionally frees up some SLC cache, which then immediately gets used up and kicks off another round of garbage collection.

With extra overprovisioning, the 600p's garbage collection cycles don't drag performance down as far, making the periodicity less obvious.

63 Comments


Samus - Wednesday, November 23, 2016 - link

Multicast helped but when you are saturating the backbone of the switch with 60Gbps of traffic it only slightly improves transfer. With light traffic we were getting 170-190MB/sec transfer rate but with a full image battery it was 120MB/sec. Granted with Unicast it never cracked 110MB/sec under any condition.

ddriver - Wednesday, November 23, 2016 - link

Multicast would be UDP, so it would have less overhead, which is why you are seeing better bandwidth utilization. Point is with multicast you could push the same bandwidth to all clients simultaneously, whereas without multicast you'd be limited by the medium and switching capacity on top of the TCP/IP overhead.

Assuming dual 10Gbit gives you the full theoretical 2500 MB/s, if you have 100 MB/s to each client, that means you will be able to serve no more than 25 clients. Whereas with multicast you'd be able to push those 170-190 MB/s to any number of clients, tens, hundreds, thousands or even millions, and by daisy chaining simple gigabit routers you make sure you don't run out of switching capacity. Of course, assuming you want to send the same identical data to all of them.

BrokenCrayons - Wednesday, November 23, 2016 - link

"Also, he doesn't really have "his specific application", he just spat a bunch of nonsense he believed would be cool :D"

Technical sites are great places to speculate about what-ifs of computer technology with like minded people. It's absolutely okay to disagree with someone's opinion, but I don't think you're doing so in a way that projects your thoughts as calm, rational, or constructive. It seems as though idle speculation on a very insignificant matter is treated as a threat worthy of attack in your mind. I'm not sure why that's the case, but I don't think it's necessary. I try to tell my children to keep things in perspective and not to make a mountain out of a problem if it's not necessary. It's something that helps them get along in their lives now that they're more independent of their system of parental checks and balances. Maybe stopping for a few moments to consider whether or not the thing that's upsetting you and making you feel mad inside is a good idea. It could put some of these reader comments into a different, more lucid perspective.

ddriver - Tuesday, November 22, 2016 - link

Oh and obviously, he meant "image" as in pictures, not image as in os images LOL, that was made quite obvious by the "media" part.

tinman44 - Monday, November 28, 2016 - link

The 960 EVO is only a little bit more expensive for consistent, high performance compared to the 600p. Any hardware implementation where more than a few people are using the same drive should justify getting something worthwhile, like a 960 Pro or real enterprise SSD, but the 960 EVO comes very close to the performance of those high-end parts for a lot less money.

ddriver: compare perf consistency of the 600p and the 960 EVO, you don't want the 600p.

vFunct - Wednesday, November 23, 2016 - link

> There is already a product that's unbeatable for media storage - an 8tb ultrastar he8. As ssd for media storage - that makes no sense, and a 100 of those only makes a 100 times less sense :D

You've never served an image gallery, have you?

You know it takes 5-10 ms to serve a single random long-tail image from an HDD. And a single image gallery on a page might need to serve dozens (or hundreds) of them, taking up to 1 second of drive time.

Do you want to tie up an entire hard drive for one second, when you have hundreds of people accessing your galleries per second?

Hard drives are terrible for image serving on the web, because of their access times.

ddriver - Wednesday, November 23, 2016 - link

You probably don't know, but it won't really matter, because you will be bottlenecked by network bandwidth. hdd access times would be completely masked off. Also, there is caching, which is how the internet ran just fine before ssds became mainstream.

You will not be losing any service time waiting for the hdd, you will be only limited by your internet bandwidth. Which means that regardless of the number of images, the client will receive the entire data set only 5-10 msec slower compared to an ssd. And regardless of how many clients you may have connected, you will always be limited by your bandwidth.

Any sane server implementation won't read the entire gallery, which may be hundreds of megabytes, in one burst before it services another client. So no single client will ever block the hdd for a second. Practically every contemporary hdd has ncq, which means the device will deliver other requests while your network is busy delivering data. Servers buffer data, so say you have two clients requesting 2 different galleries at the same time, the server will read the first image for the first client and begin sending it, and then read the first image for the second client and begin sending it. The hdd will actually be idling quite a lot waiting, because your connection bandwidth will be vastly exceeded by the drive's performance. And regardless of how many clients you may have, that will not put any more strain on the hdd, as your network bandwidth will remain the same bottleneck. If people end up waiting too long, it won't be the hdd but the network connection.

But thanks for once again proving you don't have a clue, not that it wasn't obvious from your very first post ;)

vFunct - Friday, November 25, 2016 - link

> You probably don't know, but it won't really matter, because you will be bottlenecked by network bandwidth. hdd access times would be completely masked off. Also, there is caching, which is how the internet ran just fine before ssds became mainstream.

ddriver, just stop. You literally have no idea what you're talking about.

Image galleries aren't hundreds of megabytes. Who the hell would actually send out that much data at once? No image gallery sends out full high-res images at once. Instead, they might be 50 mid-size thumbnails of 20kb each that you scroll through on your mobile device, and send high-res images later when you zoom in. This is like literally every single e-commerce shopping site in the world.

Maybe you could take an internship at a startup to gain some experience in the field? But right now, I recommend you never, ever speak in public ever again, because you don't know anything at all about web serving.

close - Wednesday, November 23, 2016 - link

@ddriver, I really didn't expect you to laugh at other people's ideas for new hardware given your "thoroughly documented" 5.25" hard drive brain-fart.

ddriver - Wednesday, November 23, 2016 - link

Nobody cares what clueless troll wannabes like you expect, you are entirely irrelevant.