Micron Announces QuantX Branding For 3D XPoint Memory (UPDATED)

by Billy Tallis on August 9, 2016 10:45 AM EST - Posted in

- SSDs

- Micron

- 3D XPoint

- Flash Memory Summit

- QuantX

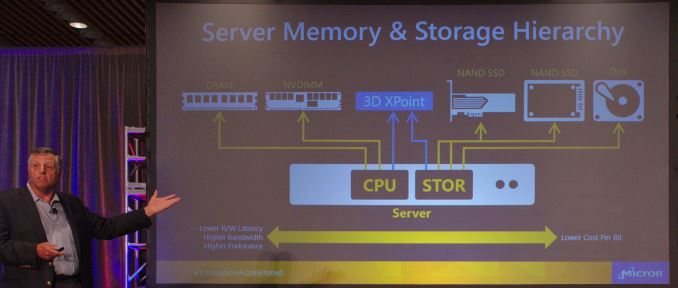

In a keynote speech later this morning at Flash Memory Summit, Micron will be unveiling the branding and logo that their products based on 3D XPoint memory will be using. The new QuantX brand is Micron's counterpart to Intel's Optane brand and will be used for the NVMe storage products that we will hear more about later this year. 3D XPoint memory was jointly announced by Intel and Micron shortly before last year's Flash Memory Summit, and we analyzed the details that were available at the time. Since then there has been very little new official information but much speculation. We do know that the initial products will be NVMe SSDs rather than NVDIMMs or other memory bus attached devices.

In the meantime, Micron VP Darren Thomas goes on stage at 11:30 AM PT, and we'll update with any further information.

UPDATE:

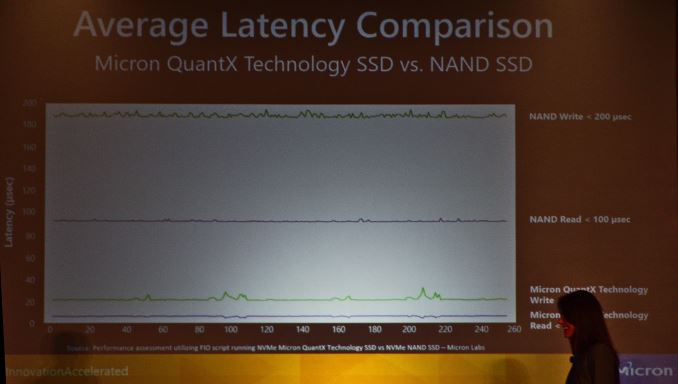

Micron's keynote reiterated their strategy of positioning 3D XPoint, and thus QuantX products, in between NAND flash and DRAM, with the advantages of 3D XPoint relative to each highlighted (while the disadvantages went unmentioned). But then they moved on to showing some meaningful performance graphs from actual benchmarks of QuantX drives.

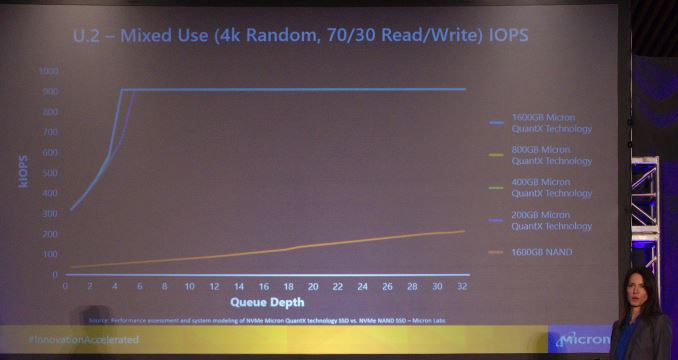

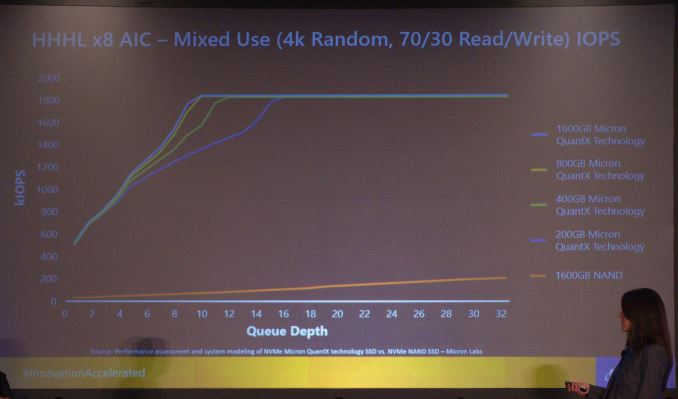

The factor of ten improvement in both read and write latency for NVMe drives is great, but perhaps the more impressive results are the graphs showing queue depth scaling. In a comparison of U.2 NVMe drives, Micron showed performance of QuantX drives ranging from 200GB to 1600GB, relative to a 1600GB NAND flash NVMe SSD. The QuantX drives saturated the PCIe x4 link with a 70/30 mix of random reads and random writes at queue depths of just 4-6, while the NAND SSD got nowhere close to saturating the link with random accesses. The next comparison was of PCIe x8 add-in cards, where the link was saturated at queue depths between 10 and 16, depending on the capacity of the QuantX drive.

This great performance at low queue depths will make it relatively easy for a wide variety of workloads to benefit from the raw performance 3D XPoint memory offers. By contrast, the biggest and fastest PCIe SSDs based on NAND flash often require careful planning on the software side to achieve full utilization.
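To make the link between latency and queue depth concrete, here is a rough back-of-the-envelope sketch using Little's law (requests in flight = throughput × latency). Everything numeric in it is an assumption for illustration rather than a figure from Micron's slides: it assumes a PCIe 3.0 link at 8 GT/s per lane with 128b/130b encoding, 4 KiB transfers, no protocol overhead, and two hypothetical per-request latencies that differ by a factor of ten. It is not meant to reproduce the queue depths Micron showed.

```python
# Illustrative estimate only. The link generation, transfer size, and latencies
# are assumptions, not measurements from Micron's presentation.

def link_bandwidth_bytes_per_s(lanes: int, gt_per_s: float = 8.0) -> float:
    """Approximate raw PCIe 3.0 bandwidth: 8 GT/s per lane, 128b/130b encoding.

    Packet and protocol overhead are ignored, so real throughput is lower.
    """
    return lanes * gt_per_s * 1e9 * (128 / 130) / 8


def queue_depth_to_saturate(lanes: int, io_bytes: int, latency_s: float) -> float:
    """Little's law: requests in flight = throughput (IO/s) * latency (s)."""
    iops_at_saturation = link_bandwidth_bytes_per_s(lanes) / io_bytes
    return iops_at_saturation * latency_s


if __name__ == "__main__":
    # Hypothetical latencies chosen only to show a factor-of-ten gap.
    examples = (("hypothetical low-latency drive", 10), ("hypothetical NAND drive", 100))
    for label, latency_us in examples:
        qd = queue_depth_to_saturate(lanes=4, io_bytes=4096, latency_s=latency_us * 1e-6)
        print(f"{label}: ~{qd:.0f} outstanding 4 KiB requests to fill a PCIe 3.0 x4 link")
```

Under these assumptions the required queue depth scales linearly with latency, so a drive with one tenth the latency needs one tenth as many requests in flight to reach the same throughput.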

Source: Micron

52 Comments


beginner99 - Wednesday, August 10, 2016 - link

True. We want edram/l4 caches. Why no skylake-c intel WTF? Only hope is kabylake-x will have it but not holding my breath. We want faster RAM and we want faster storage. I mean, L4 cache plus 3D XPoint as a cache between RAM and storage could make SSDs obsolete for consumers.

JoeyJoJo123 - Wednesday, August 10, 2016 - link

>We want faster RAM

Doesn't make a huge difference in most applications, and particularly not games (unless you're using an integrated graphics package that includes no DRAM).

>and we want faster storage

Faster storage is making a smaller and smaller impact as it gets faster through the generations. Improving read/write access over HDDs by 70% was a big thing for SSDs and the way they affect the general snappiness and speed of a system. Improving it by another 70% has much less of a noticeable impact than before.

Eventually those big improvements are going to be affecting it by a few dozen nanoseconds, if anything, which is hardly a big deal.

wumpus - Friday, August 12, 2016 - link

You can't have "faster RAM/faster Storage", but your suggestion seems about right. I'd rather have HBM[2/3] connected DRAM as a cache and use this "other stuff" (similar to memristors, but not quite it) as primary storage. eDRAM is bigger than it should be and tends to disappoint.

Can you get lower latency DRAM? I remember back in the dawn of time (especially for 3D graphics before GDDR[n] took over) there was stuff called MDRAM. Basically it was DRAM stored in smaller squares that had lower latency. I wonder if they could fill the HBM DRAM caches with low-latency DRAM (not really required but it should work better). Otherwise it would mainly be for high-thread count CPUs (and software) and iffy elsewhere.

Eden-K121D - Tuesday, August 9, 2016 - link

Let's see how it compares with NAND on price and performance. The SM 961 sure looks sweet at this time for me

MrSpadge - Tuesday, August 9, 2016 - link

The performance is significantly better (otherwise they would not introduce it to the market), but it will require new controllers and probably faster interconnects to fully reveal its potential. For this simple reason, expect higher prices than for NAND, especially initially. The middle between NAND and DRAM seems like a fair guess.

saratoga4 - Tuesday, August 9, 2016 - link

FWIW, Intel has been planning NVMe with their custom Xpoint controllers from the beginning, so that shouldn't be a problem. I don't think NVMe will be too big a problem either (at least initially); the main advantage Intel seems to be pushing is latency, and NVMe interface latency is an order of magnitude lower than the NAND delay, so they've got at least a factor of 10 improvement.

frenchy_2001 - Tuesday, August 9, 2016 - link

Xpoint slots between NAND and DRAM. It's:

+ faster than NAND (about 50% slower than DRAM, so 10s if not 100s times faster than NAND)

+ has much better write endurance than NAND (100ks if not millions...)

+ uses direct addressing like DRAM (due to the endurance)

- it's less dense than NAND (but much denser than DRAM)

- hence its cost will be between NAND and DRAM

Its first function will be as an additional level of storage, between DRAM and NAND (PCIe bus, NVMe protocol). They had talked about replacing DRAM in some cases (NVDIMMs), but this seems pushed back.

p1esk - Tuesday, August 9, 2016 - link

Did you write this after reading the marketing slides, or the actual real-world test results?

Xanavi - Tuesday, August 9, 2016 - link

It's more dense than NAND, and it is bit addressable because of the CROSS POINT design

Maxx Hoo - Tuesday, August 9, 2016 - link

Don't miss new requirements. Great surprise in latency performance. Will help IoT-enabled apps.