Assessing IBM's POWER8, Part 2: Server Applications on OpenPOWER

by Johan De Gelas on September 15, 2016 8:01 AM EST

How serious are IBM's OpenPOWER efforts? It is a question we got a lot after publishing our reviews of the POWER8 CPU and POWER8 servers. Despite the fact that IBM has launched several scale out servers, many people still do not see IBM as a challenger for Intel's midrange Xeon E5 business. Those doubts are understandable if you consider that Linux based systems are only 10% of IBM's Power systems sales. And some of those Linux based systems are high-end scale up servers. Although the available financial info gives us a very murky view, nobody doubts that the Linux based scale out servers are only a tiny part of IBM's revenue. Hence the doubts whether IBM is really serious about the much less profitable midrange server market.

At the same time, IBM's strongholds – mainframes, high-end servers and the associated lucrative software and consulting services – are in a slow but steady decline. Many years ago IBM put into motion plans to turn the ship around, by focusing on Cloud services (SoftLayer & Bluemix), Watson (Cognitive applications, AI & Analytics) and ... OpenPOWER. If you pay close attention to what the company has been saying, there is little doubt: these three initiatives will make or break IBM's future. And cloud services will be based upon scale out servers.

The story of Watson, the poster child of the new innovative IBM, is even more telling. Watson, probably the most advanced natural language processing software on the planet, started its career on typical IBM Big Iron: a cluster of 90 POWER 750 servers, containing 2880 POWER7 cores and 16 TB of RAM. We were even surprised that this AI marvel was on the market for no more than 3 million dollars. The next generation for Watson cloud services will be (is?) based upon OpenPOWER hardware: a scale out dual POWER8 server with NVIDIA's K80 GPU accelerator as the basic building block. An OpenPOWER based Watson service is simply much cheaper and faster, even in IBM's own datacenters. But there is more to it than just IBM's internal affairs.

Google: "a simple config change"

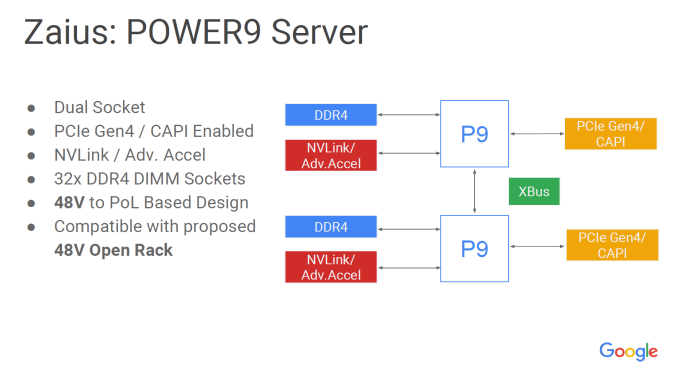

Google seems to be quite enthusiastic about OpenPOWER. Google has always experimented with different architectures, and has told the world more than once that it was playing around with ARM and POWER based servers. However, the plans with OpenPOWER hardware are very tangible and require large investments: Google is actively developing a POWER9 server with Rackspace.

"Zaius, the OpenPOWER POWER9 server of Google and Rackspace"

Google has also publicly stated that "For most Googlers, enabling POWER is a config change". This far transcends the typical "we are experimenting with that stuff in the labs".

So no wonder that IBM is giving this currently low revenue but very promising project such a high priority. And the pace increases: IBM just announced even more "OpenPOWER based" servers. The scale out server family is expanding quickly...

49 Comments


nils_ - Monday, September 26, 2016 - link

Isn't the limit slightly lower than 32 GiB? At some point the JVM switches to 64 bit pointers, which means you'll lose a lot of the available heap to larger pointers. I think you might want to lower your settings. I'm curious, what kind of GC times are you seeing with your heap size? I don't currently have access to Java running on non virtualised hardware so I would like to know if the overhead is significant (mostly running Elasticsearch here).

CajunArson - Thursday, September 15, 2016 - link

All in all the Power chip isn't terrible but the power consumption coupled with the sheer amount of tuning that is required just to get it competitive with the Xeons isn't too encouraging. You could spend far less time tuning the Xeons and still have higher performance or go ahead with tuning to get even more performance out of those Xeons.

On top of the fact that this isn't a supposedly "high end" model, the higher end power parts cost more and will burn through even more power, and that's an expense that needs to be considered for the types of real-world applications that use these servers.

dgingeri - Thursday, September 15, 2016 - link

That ad on the last page that claims lower equipment cost of course compares that to an HP DL380, the most overpriced Xeon E5 system out right now. (I know because I shopped them.) Comparing it to a comparable Dell R730 would show less expense, better support, and better expansion options.

Morawka - Thursday, September 15, 2016 - link

you mean a company made a slide that uses the most extreme edge cases to make their product look good?!?! Shocking /s

Gondalf - Thursday, September 15, 2016 - link

Something is wrong in these power consumption data. The platform idles at 221W and under full load only 260W?? the cpu is vanished?? Power 8 at over 3GHz has an active power of only 40W??

1) the idle value is wrong or 2) the under load value is wrong. All this is not consistent with IBM TDP official values.

IMO the energy consumption page of the article has to be rewritten.

JohanAnandtech - Thursday, September 15, 2016 - link

We have double checked those numbers. It is probably an indication that many of the power saving features do not work well under Linux right now.

BTW, just to give you an idea: running c-ray (floating point) caused the consumption to go to 361W.

Kevin G - Thursday, September 15, 2016 - link

I presume that c-ray uses the 256 bit vector unit on POWER8?

Also have you done any energy consumption testing that takes advantage of the hardware decimal unit?

mapesdhs - Thursday, September 15, 2016 - link

C-ray isn't that smart. :D It's a very simple code, brute force basically, and the smaller dataset can easily fit in a modern cache (actually the middling size test probably does too on CPUs like these). Hmm, I suppose it's possible one could optimise the compilation a bit to help, but I doubt anything except a full rewrite could make decent use of any vector tech, and I don't want to allow changes to the code, that would make comparisons to all other test results null. Compiler optimisations are ok, but not multi-pass optimisations that feed back info about the target data into the initial compile, that's cheating IMO (some people have done this to obtain what look like really silly run times, but I don't include them on my main C-ray page).

Ian.

Gondalf - Tuesday, September 20, 2016 - link

Ummm so in short the software used doesn't stress the cpu at all, not even the hot caches near the memory banks. We need a bench with a high memory utilization and a balanced mix between integer and FP, more in line with real world utilization.

I don't know if this test is enough to say POWER8 is power/perf competitive with Haswell in 22nm.

In fact POWER market share is definitely at its historic minimum and 14nm Broadwell is pretty young, so this disaster is not its fault.

jesperfrimann - Wednesday, September 21, 2016 - link

If you have an OPAL (Bare Metal system that cannot run POWERVM) then all the powersavings features are off by default AFAIR.

Try to have a look at:

https://public.dhe.ibm.com/common/ssi/ecm/po/en/po...

Many of the features do have a performance impact, ranging from negative over neutral to positive for a single one.

But again, I think your comparison with 'vanilla' software stacks is relevant. This is what people would see out of the box with an existing software stack.

It is 101% relevant to do that comparison as this is the market that IBM is trying to break into with these servers.

But what could be fun to see is some tests where all the Bells and Whistles are utilized, as many have written here: use of Hardware supported Decimal Floating Point, the Vector Execution unit, the ability to do hardware assisted Memory Compression etc. etc.

// Jesper