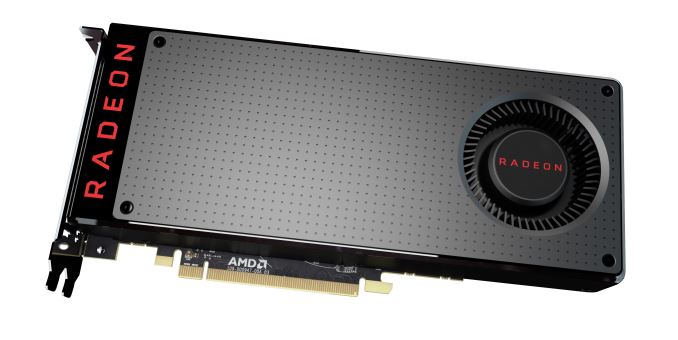

The AMD Radeon RX 480 Preview: Polaris Makes Its Mainstream Mark

by Ryan Smith on June 29, 2016 9:00 AM EST

First Thoughts

As we bring our first look at AMD’s new architecture to a close, it’s exciting to see the field shape up for the FinFET generation. More than four years after the last great node transition, we are once again making a very welcome jump to a new manufacturing process, and with it comes AMD’s Polaris.

AMD learned a lot from the 28nm generation – more often than not the hard way – and they have put those lessons to good use in Polaris. Polaris’s power efficiency has been greatly increased thanks to a combination of GlobalFoundries’ 14nm FinFET process and AMD’s own design choices, and as a result, compared to AMD’s last-generation parts, Polaris makes significant strides where it needs to. This goes not just for energy efficiency, but for overall performance/resource efficiency as well.

Because AMD is launching with a mainstream part first, they don’t get to claim to be charting any new territory on absolute performance. But by being the first vendor to address the mainstream market with a FinFET-based GPU, AMD gets the honor of redefining the price, performance, and power expectations of this market. And the end result is better performance – sometimes remarkably so – for this high-volume market.

Relative to last-generation mainstream cards like the GTX 960 or the Radeon R9 380, the Radeon RX 480 delivers performance gains of anywhere from 45% to 70%, depending on the card, the games, and the memory configuration. As the mainstream market was last refreshed less than 18 months ago, the RX 480 generally isn’t enough to justify an upgrade for owners of those cards. However, if we extend the window out to cards 2+ years old, such as the Radeon R9 280 and GeForce GTX 760, then we have a generational update and then some. AMD Pitcairn users (Radeon HD 7800, R9 270) should be especially pleased with the progress AMD has made from one mainstream GPU to the next.

Looking at the overall performance picture, averaged across all of our games, the RX 480 lands a couple of percent ahead of NVIDIA’s popular GTX 970, and similarly ahead of AMD’s own Radeon R9 390, which is consistent with our performance expectations based on AMD’s earlier hints. The RX 480 can’t touch the GTX 1070, which is some 50% faster, but then the 1070 is 67% more expensive as well.

Given the 970/390 similarities, from a price perspective this means that 970/390-class performance has come down in price by around $90 since those cards launched: from $329 to $239 for the more powerful RX 480 8GB, or $199 for the 4GB cards. Compared to AMD’s own R9 390, power consumption is down immensely, in essence offering Hawaii-like performance at around half the power. However, against the GTX 970 power consumption is more of a mixed bag – it’s closer than I would have expected under Crysis 3 – and this is something we’ll address further in our full review.

Finally, when it comes to the two different memory capacities of the RX 480, for the moment I’m leaning strongly towards the 8GB card. Though the $40 price increase represents a 20% price premium, history has shown that when mainstream cards launch at multiple capacities, the smaller capacity cards tend to struggle far sooner than their larger counterparts. In that respect the 8GB RX 480 is far more likely to remain useful a couple of years down the road, making it a better long-term investment.
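The price arithmetic in the last few paragraphs is easy to check. The short Python sketch that follows reproduces it using only the rounded figures quoted in this article ($329 launch price for the GTX 970/R9 390 class, $239 and $199 for the RX 480 8GB and 4GB, and the ~50% performance and ~67% price gaps to the GTX 1070). The variable names are illustrative, and the performance-per-dollar ratio is derived from those rounded percentages, not from any additional measured data.

```python
# Prices quoted in the article (USD).
gtx970_r9_390_launch = 329
rx480_8gb = 239
rx480_4gb = 199

# How far 970/390-class performance has fallen in price.
price_drop = gtx970_r9_390_launch - rx480_8gb

# Premium for the 8GB card over the 4GB card, in dollars and percent.
premium_usd = rx480_8gb - rx480_4gb
premium_pct = 100 * premium_usd / rx480_4gb

# Relative value vs GTX 1070, using the article's rounded figures:
# the 1070 is ~50% faster and ~67% more expensive than the RX 480.
perf_ratio = 1.50
price_ratio = 1.67
rx480_value_advantage = price_ratio / perf_ratio

print(f"970/390-class price drop: ${price_drop}")
print(f"8GB premium: ${premium_usd} ({premium_pct:.1f}%)")
print(f"RX 480 perf-per-dollar vs GTX 1070: {rx480_value_advantage:.2f}x")
```

Dividing the price ratio by the performance ratio gives roughly 1.11, so by these rounded figures the RX 480 offers about 11% more performance per dollar than the GTX 1070, even though it is much slower in absolute terms.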

Wrapping things up then, today’s launch of the Radeon RX 480 puts AMD in a good position. They have the mainstream market to themselves, and RX 480 is a strong showing for their new Polaris architecture. AMD will have to fend off NVIDIA at some point, but for now they can sit back and enjoy another successful launch.

Meanwhile, we’ll be back in a few days with our full review of the RX 480, so be sure to stay tuned.

449 Comments


Flunk - Thursday, June 30, 2016

The CrossFire reviews I've read have said the GTX 1070 is faster on average than RX 480 CrossFire; maybe you should go read those reviews.

Murloc - Tuesday, July 5, 2016

Comparing CrossFire/SLI to a single GPU is really useless. Multi-GPU means lots of heat, noise, power consumption, driver and game support issues, and performance that is most certainly not doubled in many games. Most people want ONE video card and they're going to get the one with the best bang for the buck.

R0H1T - Wednesday, June 29, 2016

For $200 I'll take this over the massive cash grab, i.e. the FE, obviously!

Wreckage - Wednesday, June 29, 2016

Going down with the ship, eh? It took AMD 2 years to compete with the 970. I guess we will have to wait until 2018 to see what they have to go against the 1070.

looncraz - Wednesday, June 29, 2016

Two years to compete with the 970?

The 970's only advantage over AMD's similarly priced GPUs was power consumption. That advantage is now gone - and AMD is charging much less for that level of performance.

The RX480 is a solid GPU for mainstream 1080p gamers - i.e. the majority of the market. In fact, right now, it's the best GPU to buy under $300 by any metric (other than the cooler).

Better performance, better power consumption, more memory, more affordable, more up-to-date, etc...

stereopticon - Wednesday, June 29, 2016

Are you kidding me?! Better power consumption?! It's about the same as the 970... it used something like 13 fewer watts while running Crysis 3... If the GTX 1060 ends up being as good as this card for under $300 while consuming fewer watts, I have no idea what AMD is gonna do. I was hoping for this to have a little more power (more along the lines of the 980) to go inside my secondary rig... but we will see how the 1060 performs. I still believe this is a good card for the money... but the hype was definitely far greater than the actual outcome...

adamaxis - Wednesday, June 29, 2016

Nvidia measures power consumption by average draw. AMD measures by max. These cards are not even remotely equal.

dragonsqrrl - Wednesday, June 29, 2016

"Nvidia measures power consumption by average draw. AMD measures by max."

That's completely false.

CiccioB - Friday, July 1, 2016

Didn't you know that when using AMD HW the watt meter switches to "maximum mode", while when the probes are applied to Nvidia HW it switches to "average mode"? Ah, ignorance, what a funny thing it is.

dragonsqrrl - Friday, July 8, 2016

@CiccioB No I didn't, source? Are you suggesting that the presence of AMD or Nvidia hardware in a system has some influence over the metering hardware used to measure power consumption? What about total system power consumption from the wall?

At least in relation to advertised TDP, which is what my original comment was referring to, I know that what adamaxis said about avg and max power consumption is false.