The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Feeding Pascal: GDDR5X

An ongoing problem for every generation of GPUs is the matter of memory bandwidth. As graphics is an embarrassingly parallel problem, it scales out relatively well with additional ALUs, and consequently with Moore’s Law. Each successive generation of GPUs is wider and more highly clocked, consuming more data than ever before.

The problem for GPUs is that while their performance tracks Moore’s Law well, the same cannot be said for DRAM. To be sure, DRAM has gotten faster over the years as well, but it hasn’t improved at nearly the same pace as GPUs, and physical limitations ensure that this will continue to be the case. So with every generation, GPU vendors need to be craftier and craftier about how they get more memory bandwidth, and in turn how they use that memory bandwidth.

To help address this problem, Pascal brings to the table two new memory-centric features. The first of these is support for the newer GDDR5X memory standard, which addresses the memory bandwidth problem from the supply side.

By this point GDDR5 has been with us for a surprisingly long time (AMD first implemented it on the Radeon HD 4870 in 2008), and it has been taken to higher clockspeeds than originally intended. Today’s GeForce GTX 1070 and Radeon RX 480 cards ship with 8Gbps GDDR5, a faster transfer rate than the originally envisioned limit of 7Gbps. That GPU manufacturers and DRAM makers have been able to push GDDR5 so high is a testament to their abilities, but at the same time the technology is clearly reaching its apex (at least for reasonable levels of power consumption).

As a result there has been a great deal of interest in the memory technologies that would succeed GDDR5. At the high end, last year AMD became the first vendor to implement version 1 of High Bandwidth Memory, a technology that is a significant departure from traditional DRAM and uses an ultra-wide 4096-bit memory bus to provide enormous amounts of bandwidth. Not to be outdone, NVIDIA has adopted HBM2 for their HPC-centric GP100 GPU, using it to deliver 720GB/sec of bandwidth for Pascal P100.

While on a technical level HBM is truly fantastic next-generation technology, using cutting-edge techniques throughout (from TSV die-stacking to silicon interposers that connect the DRAM stacks to the processor), its downside is that all of this next-generation technology is still expensive to implement. Precise figures aren’t publicly available, but the silicon interposer is more expensive than a relatively simple PCB, and connecting DRAM dies through TSVs and stacking them is more complex than laying down BGA DRAM packages on a PCB. For NVIDIA, a more cost-effective solution was desired for GP104.

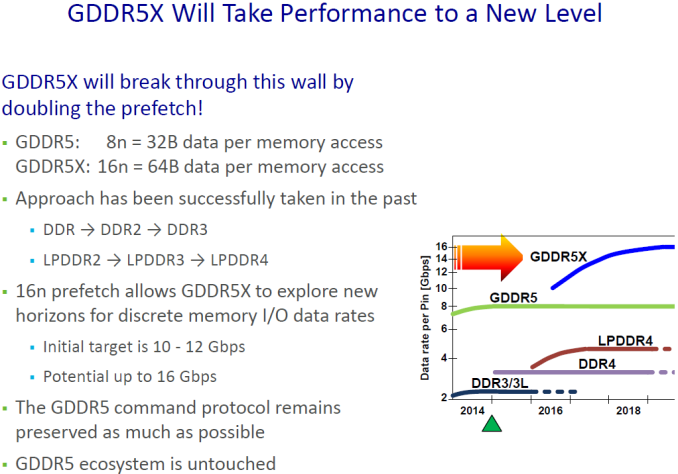

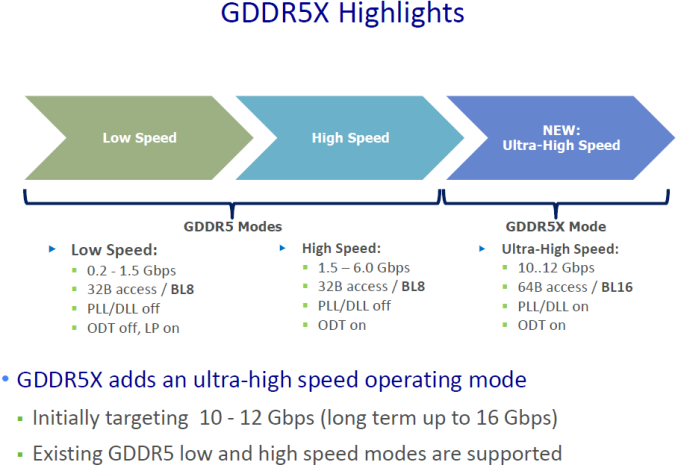

That solution came from Micron and the JEDEC in the form of GDDR5X. A sort of half-generation extension of traditional GDDR5, GDDR5X further increases the amount of memory bandwidth available from GDDR5 through a faster memory bus coupled with wider memory operations that read and write more data from DRAM per clock. And though it’s not without its own costs, such as designing new memory controllers and boards that can accommodate the tighter requirements of the GDDR5X memory bus, GDDR5X offers a step in performance between relatively cheap and slow GDDR5 and relatively fast and expensive HBM2.

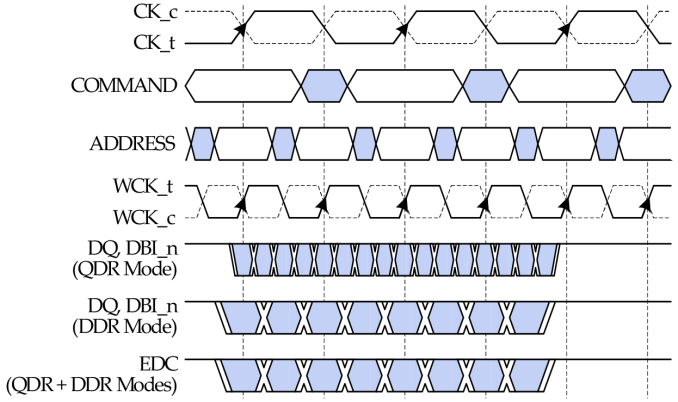

Relative to GDDR5, the significant breakthrough on GDDR5X is the implementation of Quad Data Rate (QDR) signaling on the memory bus. Whereas GDDR5’s memory bus would transfer data twice per write clock (WCK) via DDR, GDDR5X extends this to four transfers per clock. All other things held equal, this allows GDDR5X to transfer twice as much data per clock as GDDR5.
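To make the DDR/QDR distinction concrete, here is a minimal Python sketch that derives a per-pin data rate from the write clock (WCK) and the number of transfers per clock. The helper names are hypothetical, and the WCK frequencies are illustrative values chosen to land on the data rates discussed in this article, not figures supplied by NVIDIA or Micron.

```python
# Minimal sketch: per-pin data rate = WCK frequency x transfers per WCK cycle.
# Helper names and WCK values are illustrative assumptions.

DDR = 2  # GDDR5: two transfers per WCK cycle
QDR = 4  # GDDR5X: four transfers per WCK cycle


def per_pin_rate_gbps(wck_ghz: float, transfers_per_clock: int) -> float:
    """Data rate of a single pin, in Gbps."""
    return wck_ghz * transfers_per_clock


def required_wck_ghz(rate_gbps: float, transfers_per_clock: int) -> float:
    """Write clock needed to hit a target per-pin data rate, in GHz."""
    return rate_gbps / transfers_per_clock


# All other things held equal (same 2.5 GHz WCK), QDR moves twice the data of DDR.
ddr_rate = per_pin_rate_gbps(2.5, DDR)  # 5.0 Gbps
qdr_rate = per_pin_rate_gbps(2.5, QDR)  # 10.0 Gbps

# Put another way: 8Gbps GDDR5 needs a 4 GHz WCK, while 10Gbps GDDR5X needs only 2.5 GHz.
gddr5_wck = required_wck_ghz(8, DDR)    # 4.0
gddr5x_wck = required_wck_ghz(10, QDR)  # 2.5

print(f"DDR @ 2.5 GHz: {ddr_rate} Gbps, QDR @ 2.5 GHz: {qdr_rate} Gbps")
print(f"WCK for 8Gbps GDDR5: {gddr5_wck} GHz, for 10Gbps GDDR5X: {gddr5x_wck} GHz")
```

The second pair of numbers is the practical payoff: QDR lets GDDR5X reach a higher data rate with a slower clock than GDDR5 needs for a lower one.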

QDR itself is not a new innovation (Intel implemented a quad-pumped bus 15 years ago for the Pentium 4 with AGTL+), but this is the first time it has been implemented in a common JEDEC memory standard. The history of PC memory standards is itself quite a tale, and I suspect that the fact we’re only seeing a form of QDR now is related to patents. But regardless, here we are.

Going hand-in-hand with the improved transfer rate of the GDDR5X memory bus, GDDR5X also once again increases the size of read/write operations, as the core clockspeed of GDDR5X chips is only a fraction of the bus speed. GDDR5X uses a 16n prefetch, which is twice the size of GDDR5’s 8n prefetch. Across a chip’s 32-bit interface this translates to 64B reads/writes (16 × 32 bits), meaning that GDDR5X memory chips are actually fetching (or writing) data in blocks of 64 bytes, and then transmitting it over multiple cycles of the memory bus. As discussed earlier, this change in the prefetch size is why the memory controller organization of GP104 is 8x32b instead of 4x64b like GM204, as each memory controller can now read and write 64B segments of data via a single memory channel.
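The prefetch arithmetic can be sketched in a few lines of Python. This assumes each chip exposes a 32-bit interface, and reading GM204’s 4x64b layout as two 32-bit GDDR5 chips per controller is our illustrative interpretation; the helper names are hypothetical.

```python
# Minimal sketch: bytes per DRAM access = prefetch depth (n) x interface width / 8.
# Assumes x32 chips (32-bit interface per chip); helper names are hypothetical.

GDDR5_PREFETCH = 8    # 8n prefetch
GDDR5X_PREFETCH = 16  # 16n prefetch
CHIP_WIDTH_BITS = 32


def access_bytes(prefetch_n: int, width_bits: int) -> int:
    """Bytes delivered by one access across a channel of the given width."""
    return prefetch_n * width_bits // 8


gddr5_chip = access_bytes(GDDR5_PREFETCH, CHIP_WIDTH_BITS)    # 32 bytes
gddr5x_chip = access_bytes(GDDR5X_PREFETCH, CHIP_WIDTH_BITS)  # 64 bytes

# GM204-style: a 64-bit controller pairs two 32-bit GDDR5 chips to reach 64B.
gm204_channel = access_bytes(GDDR5_PREFETCH, 2 * CHIP_WIDTH_BITS)
# GP104-style: one 32-bit GDDR5X channel reaches 64B on its own.
gp104_channel = access_bytes(GDDR5X_PREFETCH, CHIP_WIDTH_BITS)

# Both organizations span the same 256-bit bus: 4 x 64b == 8 x 32b.
total_gm204_bits = 4 * 2 * CHIP_WIDTH_BITS
total_gp104_bits = 8 * CHIP_WIDTH_BITS

print(gddr5_chip, gddr5x_chip, gm204_channel, gp104_channel)
print(total_gm204_bits, total_gp104_bits)
```

Both layouts keep the same 64B access size and the same total bus width; doubling the prefetch simply lets GP104 get there with narrower, more numerous channels.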

Overall GDDR5X is planned to offer enough bandwidth for at least the next couple of years. The current sole supplier of GDDR5X, Micron, is initially developing GDDR5X at speeds of 10 to 12Gbps, and the JEDEC has been talking about taking that to 14Gbps. Longer term, Micron thinks the technology can hit 16Gbps, which would be a true doubling of GDDR5’s current top speed of 8Gbps. With that said, even with a larger 384-bit memory bus (à la GM200) this would only slightly surpass the bandwidth HBM2 offers today, reinforcing the fact that GDDR5X will fill the gap between traditional GDDR5 and HBM2.
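The “only slightly surpass” comparison is simple arithmetic: aggregate bandwidth is the per-pin rate times the bus width, divided by eight to convert bits to bytes. A minimal Python sketch, where the 16Gbps/384-bit card is hypothetical and the 720GB/sec HBM2 figure is the P100 number quoted above:

```python
# Minimal sketch: aggregate bandwidth (GB/s) = per-pin rate (Gbps) x bus width (bits) / 8.

def bus_bandwidth_gbs(rate_gbps: float, bus_width_bits: int) -> float:
    return rate_gbps * bus_width_bits / 8


HBM2_P100_GBS = 720.0  # HBM2 figure quoted earlier in this article

gtx1080 = bus_bandwidth_gbs(10, 256)     # 10Gbps GDDR5X on a 256-bit bus
future_384 = bus_bandwidth_gbs(16, 384)  # hypothetical 16Gbps card on a 384-bit bus
margin = future_384 / HBM2_P100_GBS - 1

print(f"GTX 1080: {gtx1080:.0f} GB/s")
print(f"16Gbps on 384-bit: {future_384:.0f} GB/s ({margin:.1%} above HBM2 on P100)")
```

Even the most optimistic GDDR5X configuration lands at 768GB/s, only a few percent above what first-generation HBM2 already delivers.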

Meanwhile when it comes to power consumption and power efficiency, GDDR5X will turn back the clock, at least a bit. Thanks in large part to a lower operating voltage of 1.35V, circuit design changes, and a smaller manufacturing node for the DRAM itself, 10Gbps GDDR5X only requires as much power as 7Gbps GDDR5. This means that relative to GTX 980, GTX 1080’s faster GDDR5X is essentially “free” from a power perspective, not consuming any more power than before, according to NVIDIA.

That said, while this gets NVIDIA more bandwidth for the same power (43% more, in fact), it also puts NVIDIA’s memory power consumption right back where it was with GTX 980. GDDR5X can scale higher in frequency, but doing so will almost certainly further increase power consumption. As a result they are still going to have to carefully work around growing memory power consumption if they continue down the GDDR5X path for future, faster cards.
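The 43% figure falls straight out of the data rates, since GTX 980 and GTX 1080 both use a 256-bit bus. The sketch below takes NVIDIA’s claim that 10Gbps GDDR5X draws about as much power as 7Gbps GDDR5 at face value, so the bandwidth ratio doubles as a rough bandwidth-per-watt ratio.

```python
# Minimal sketch: GTX 980 vs GTX 1080 memory bandwidth, both on a 256-bit bus.
# Assumes NVIDIA's claim that 10Gbps GDDR5X draws about as much power as 7Gbps GDDR5,
# so the bandwidth ratio is also (roughly) the bandwidth-per-watt ratio.

BUS_WIDTH_BITS = 256

gtx980_gbs = 7 * BUS_WIDTH_BITS / 8    # 7Gbps GDDR5   -> 224 GB/s
gtx1080_gbs = 10 * BUS_WIDTH_BITS / 8  # 10Gbps GDDR5X -> 320 GB/s

gain = gtx1080_gbs / gtx980_gbs - 1
print(f"{gtx980_gbs:.0f} -> {gtx1080_gbs:.0f} GB/s: {gain:.0%} more bandwidth")
```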

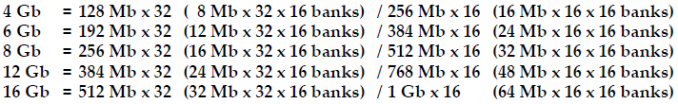

On a final specification note, GDDR5X also introduces non-power-of-two memory chip capacities such as 12Gb. These aren’t being used for GTX 1080 – which uses 8Gb chips – but I wouldn’t be surprised if we see these used down the line. The atypical sizing would allow NVIDIA to offer additional memory capacities without resorting to asymmetrical memory configurations as is currently the case, all the while avoiding the bandwidth limitations that can result from that.
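To illustrate why non-power-of-two densities help, the sketch below computes total capacity for a symmetric layout with one 32-bit chip per 32-bit channel (no clamshell mode). These layout choices are simplifying assumptions for illustration. On a 256-bit bus like GP104’s, power-of-two densities jump from 8GB straight to 16GB, while 12Gb chips would slot 12GB in between without mixing chip densities across channels.

```python
# Minimal sketch: total capacity with one x32 chip per 32-bit channel (no clamshell).
# Assumption: symmetric layout, every channel carrying the same chip density.

def capacity_gb(bus_width_bits: int, chip_density_gbit: int, chip_width_bits: int = 32) -> float:
    chips = bus_width_bits // chip_width_bits
    return chips * chip_density_gbit / 8  # gigabits -> gigabytes


for density in (8, 12, 16):
    print(f"{density}Gb chips on a 256-bit bus: {capacity_gb(256, density):.0f} GB")
```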

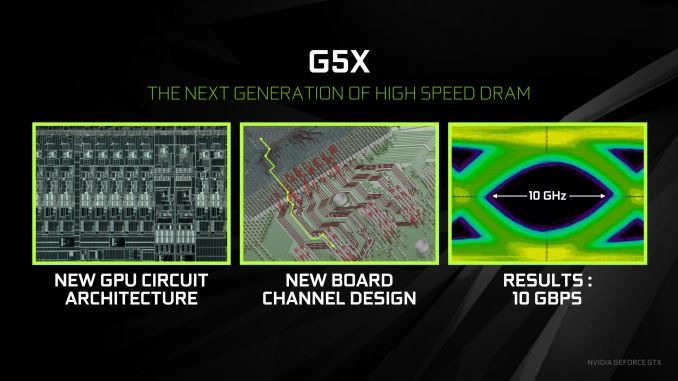

Moving on to implementation details, GP104 brings with it a new memory controller design to support GDDR5X. As intended with the specification, this controller design is backwards compatible with traditional GDDR5, and will allow NVIDIA to support both memory standards. At this point NVIDIA hasn’t talked about what kinds of memory speeds their new controller can ultimately hit, but the cropped signal analysis diagram published in their slide deck shows a very tight eye. Given the fact that NVIDIA’s new memory controller can operate at 8Gbps in GDDR5 mode, I would be surprised if we don’t see at least 12Gbps GDDR5X by the tail-end of Pascal’s lifecycle.

But perhaps the bigger challenge is on the board side of matters, where NVIDIA and their partners needed to develop PCBs capable of handling the tighter signaling requirements of the GDDR5X memory bus. At this point video cards are moving 10Gbps/pin over a non-differential bus, which is itself a significant accomplishment. And keep in mind that in the long run, the JEDEC and Micron want to push this higher still.
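One way to see why the signaling requirements tighten is to compute the unit interval, the window each bit occupies on a pin, which is simply the reciprocal of the per-pin data rate. This sketch is a simplification under that one assumption; real link budgets also account for jitter, noise, and other margins.

```python
# Minimal sketch: the time each bit occupies on a pin (the unit interval) is 1 / data rate.
# This ignores jitter, encoding, and other real-world margins.

def unit_interval_ps(rate_gbps: float) -> float:
    return 1000.0 / rate_gbps  # 1 / (rate x 1e9 bit/s), expressed in picoseconds


for rate in (7, 8, 10, 16):
    print(f"{rate}Gbps: {unit_interval_ps(rate):.1f} ps per bit")
```

At 10Gbps each bit gets just 100 ps on a single-ended trace, and at Micron’s long-term 16Gbps target that shrinks to 62.5 ps.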

To that end it somewhat undersells the whole process to just say that GDDR5X required “tighter signaling requirements”, but it’s an apt description. There is no single technology in place on the physical trace side to make this happen; it’s just a lot of precise, intensive work to ensure that the traces and the junctions between the PCB, the chip, and the die all retain the required signal integrity. With a 256-bit wide bus we’re not looking at something too wide compared to the 384 and 512-bit buses used on larger GPUs, so the task is somewhat simpler in that respect, but it’s still quite a bit of effort to minimize the crosstalk and other phenomena that degrade the signal, and which GDDR5X has little tolerance for.

As it stands I suspect we have not yet seen the full ramifications of the tighter bus requirements, and we probably won’t for cards that use the reference board or the memory design lifted from the reference board. For stability reasons, data buses are usually overengineered, and it’s likely the GDDR5X memory itself that’s holding back overclocking. Things will likely get more interesting if and when GDDR5X filters its way down to cheaper cards, where keeping costs in check and eking out higher margins becomes more important. Alternatively, as NVIDIA’s partners get more comfortable with the tech and its requirements, it’ll be interesting to see where we end up with the ultra-high-end overclocking cards (the Kingpins, Lightnings, Matrices, etc.) and whether all of the major partners can keep up in that race.

200 Comments


grrrgrrr - Wednesday, July 20, 2016

Solid review! Some nice architecture introductions.

euskalzabe - Wednesday, July 20, 2016

The HDR discussion of this review was super interesting, but as always, there's one key piece of information missing: WHEN are we going to see HDR monitors that take advantage of these new GPU abilities?

I myself am stuck at 1080p IPS because more resolution doesn't entice me, and there's nothing better than IPS. I'm waiting for HDR to buy my next monitor, but at 5 years old my Dell ST2220T is getting long in the teeth...

ajlueke - Wednesday, July 20, 2016

Thanks for the review, Ryan.

I think the results are quite interesting, and the games chosen really help show the advantages and limitations of the different architectures. When you compare the GTX 1080 to its price predecessor, the 980 Ti, you are getting an almost universal ~25%-30% increase in performance.

Against rival AMD's R9 Fury X, it is more of a mixed bag. As the resolution increases, the bandwidth provided by the HBM memory on the Fury X really narrows the gap, sometimes trimming the margin to less than 10%, specifically in games optimized more for DX12 ("Hitman", "AotS"). But in other games, specifically "Rise of the Tomb Raider", which boasts extremely high-res textures, the 4GB memory size on the Fury X starts to limit its performance in a big way. On average, there is again a ~25%-30% performance increase, with much higher game-to-game variability.

This data lets a little bit of air out of the argument I hear a lot that AMD makes more "future proof" cards. While many Nvidia 900 series users may have to upgrade as more and more games switch to DX12-based programming, AMD Fury users will be in the same boat as those same games come with higher and higher res textures, due to the smaller amount of memory on board.

While Pascal still doesn't show the jump in DX12 versus DX11 that AMD's GPUs enjoy, it does at least show an increase or at least remain at parity.

So what you have is a card that wins in every single game tested, at every resolution over the price predecessors from both companies, all while consuming less power. That is a win pretty much any way you slice it. But there are elements of Nvidia’s strategy and the card I personally find disappointing.

I understand Nvidia wants to keep features specific to the higher margin professional cards, but avoiding HBM2 altogether in the consumer space seems to be a missed opportunity. I am a huge fan of the mini ITX gaming machines. And the Fury Nano, at the $450 price point, is a great card. With an NVMe motherboard and NAS storage, the need for drive bays in the case is eliminated, and the Fury Nano, at only 6”, leads to some great forward-thinking, tiny designs. I was hoping to see an explosion of cases that cut out the need for supporting 10-11” cards and tons of drive bays if both Nvidia and AMD put out GPUs in the Nano space, but it seems not to be. HBM2 seems destined to remain on professional cards, as Nvidia won’t take the risk of adding it to a consumer Titan or GTX 1080 Ti card and potentially again cannibalize the higher margin professional card market. Now case makers don’t really have the same incentive to build smaller cases if the Fury Nano will still be the only card at that size. It’s just unfortunate that it had to happen because Nvidia decided HBM2 was something they could slap on a pro card and sell for thousands extra.

What is also disappointing about Pascal stems from the GTX 1080 vs GTX 1070 data Ryan has shown. The GTX 1070 drops off far more than one would expect based on CUDA core numbers as the resolution increases. The GDDR5 memory versus the GDDR5X is probably at fault here, leading me to believe that Pascal can gain even further if the memory bandwidth is increased more, again with HBM2. So not only does the card limit you to the current mini-ITX monstrosities (I’m looking at you, Bulldog) by avoiding HBM2, it also very likely is costing us performance.

Now for the rank speculation. The data does present some interesting scenarios for the future. With the Fury X able to approach the GTX 1080 at high resolutions, most specifically in DX12-optimized games, it seems extremely likely that the Vega GPU will be able to surpass the GTX 1080, especially if the greatest limitation (4GB of HBM) is removed with the supposed 8GB of HBM2 and games move more and more to DX12. I imagine when it launches it will be the 4K card to get, as the Fury X already acquits itself very well there. For me personally, I will have to wait for the Vega Nano to realize my Mini-ITX dreams, unless of course AMD doesn’t make another Nano edition card and the dream is dead. A possibility I dare not think about.

eddman - Wednesday, July 20, 2016

The gap getting narrower at higher resolutions probably has more to do with chips' designs rather than bandwidth. After all, Fury is the big GCN chip optimized for high resolutions. Even though GP104 does well, it's still the middle Pascal chip.

P.S. Please separate the paragraphs. It's a pain, reading your comment.

Eidigean - Wednesday, July 20, 2016

The GTX 1070 is really just a way for Nvidia to sell GP104s that didn't pass all of their tests. Don't expect them to put expensive memory on a card where they're only looking to make their money back. Keeping the card cost down, hoping it sells, is more important to them.

If there's a defect anywhere within one of the GPCs, the entire GPC is disabled and the chip is sold at a discount instead of being thrown out. I would not buy a 1070, which is really just a crippled 1080.

I'll be buying a 1080 for my 2560x1600 desktop, and an EVGA 1060 for my Mini-ITX build; which has a limited power supply.

mikael.skytter - Wednesday, July 20, 2016

Thanks Ryan! Much appreciated.

chrisp_6@yahoo.com - Wednesday, July 20, 2016

Very good review. One minor comment to the article writers - do a final check on grammer - granted we are technical folks, but it was noticeable especially on the final words page.

madwolfa - Wednesday, July 20, 2016

It's "grammar", though. :)

Eden-K121D - Thursday, July 21, 2016

Oh the irony

chrisp_6@yahoo.com - Thursday, July 21, 2016

oh snap, that is some funny stuff right there