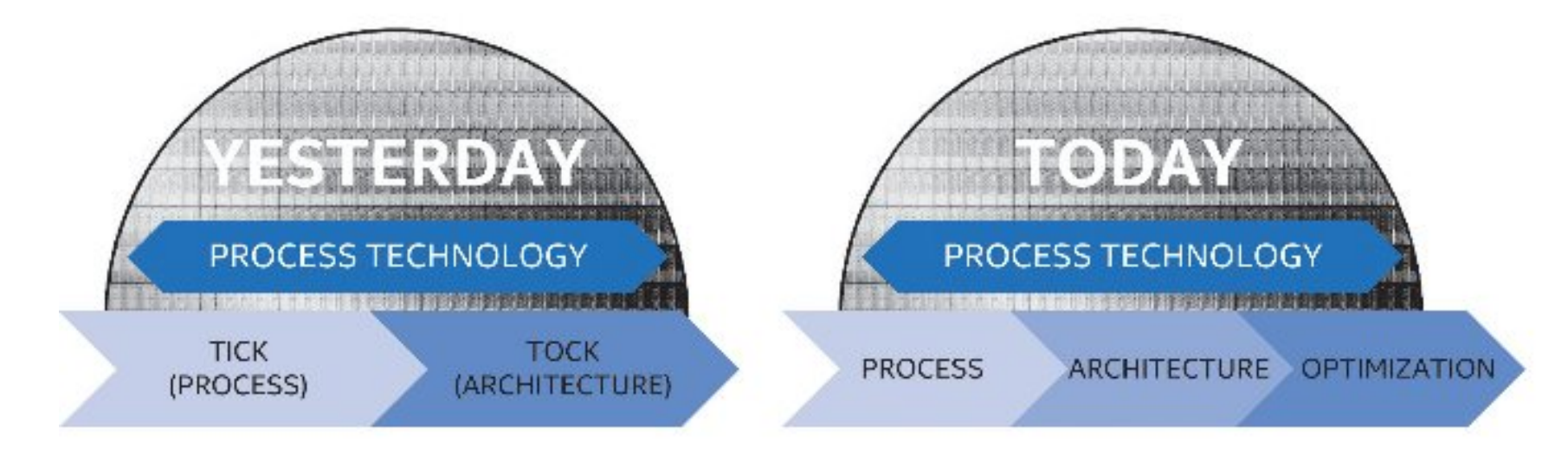

Intel’s ‘Tick-Tock’ Seemingly Dead, Becomes ‘Process-Architecture-Optimization’

by Ian Cutress on March 22, 2016 6:45 PM EST

As reported at The Motley Fool, Intel’s latest 10-K / annual report filing would seem to suggest that the ‘Tick-Tock’ strategy of introducing a new lithographic process node in one product cycle (a ‘tick’) and then an upgraded microarchitecture the next product cycle (a ‘tock’) is going to fall by the wayside for the next two lithographic nodes at a minimum, to be replaced with a three-element cycle known as ‘Process-Architecture-Optimization’.

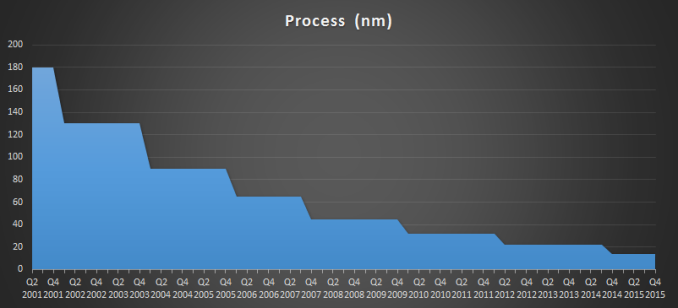

Intel’s Tick-Tock strategy has been the bedrock of their microprocessor dominance over the last decade. Throughout that time, every other year Intel would upgrade their fabrication plants to produce processors with a smaller feature size, improving die area and power consumption and adding slight optimizations to the microarchitecture, and in the years between the upgrades would launch a new set of processors based on a wholly new (sometimes paradigm-shifting) microarchitecture for large performance upgrades. However, due to the difficulty of implementing a ‘tick’ as process node sizes shrink and complexity grows, as previously reported with 14nm and the introduction of Kaby Lake, Intel’s latest filing would suggest that 10nm will follow a similar pattern to 14nm by introducing a third stage to the cadence.

From Intel's report: As part of our R&D efforts, we plan to introduce a new Intel Core microarchitecture for desktops, notebooks (including Ultrabook devices and 2 in 1 systems), and Intel Xeon processors on a regular cadence. We expect to lengthen the amount of time we will utilize our 14nm and our next generation 10nm process technologies, further optimizing our products and process technologies while meeting the yearly market cadence for product introductions.

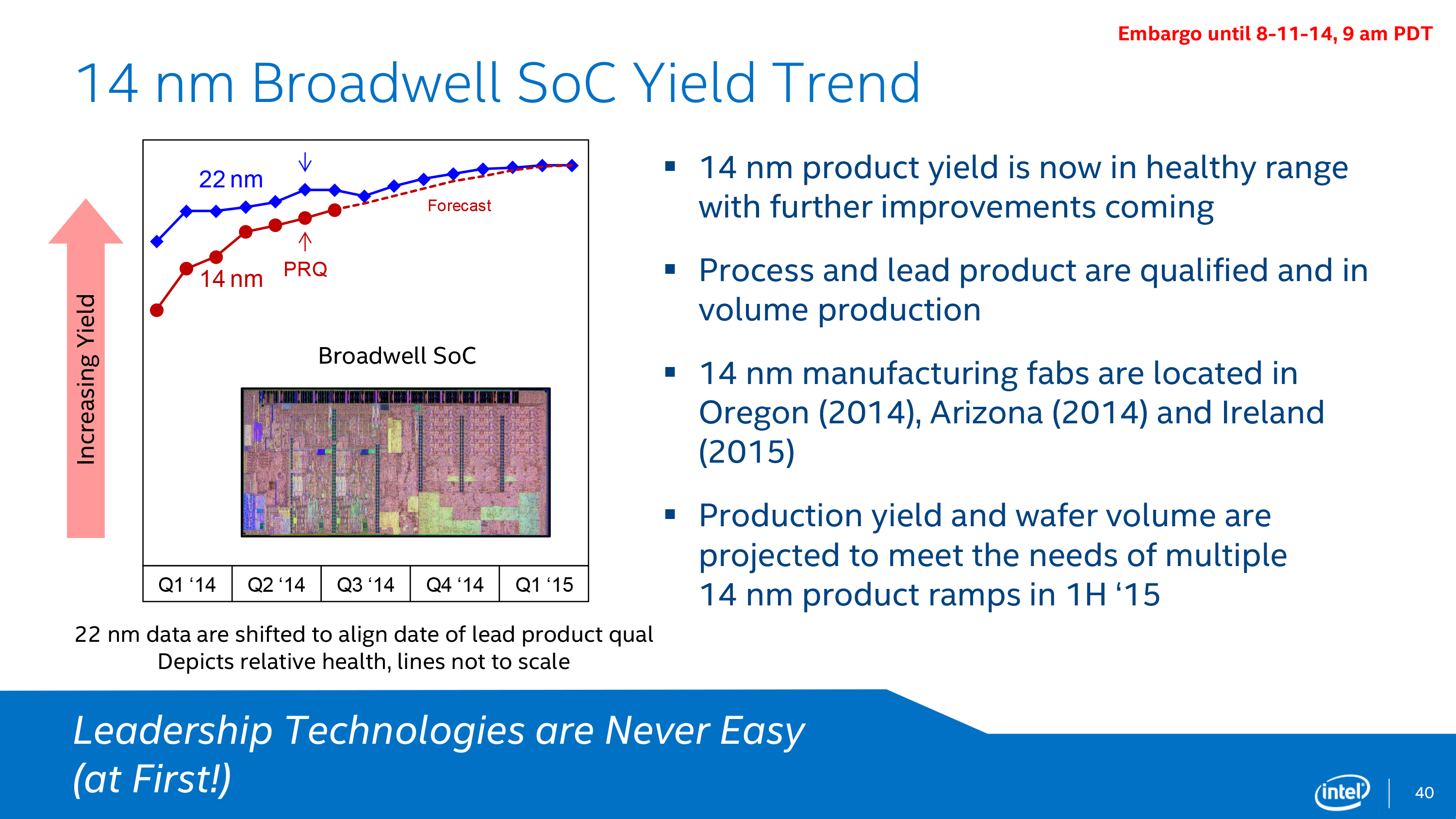

The new PAO or ‘Process-Architecture-Optimization’ model is a direct result of the complexity of developing and implementing new lithographic nodes (Intel has even entered into a new five-year agreement with ASML to develop new extreme ultraviolet lithographic techniques), but with new process nodes also typically comes a period in which yields have to become high enough to remain financially viable in the long term. It has been well documented that Intel’s 14nm node, using the latest generation FinFET technology, took longer than expected to reach maturity compared to 22nm. As a result, product launches were stretched out, and within a three-year cycle Intel was starting to produce only two new generations of products.

Intel’s fabs in Ireland, Arizona, and Oregon are currently producing wafers on the 14nm node, with Israel joining Arizona and Oregon on the 22nm node. Intel also has agreements in place for third-party companies (such as Rockchip) to manufacture Intel’s parts for certain regional activity. As well as looking forward to 10nm, Intel’s filing also states that projects are in the works to move from 300mm wafers to 450mm wafers, reducing cost, although it does not put a time frame on it.

The manufacturing lead Intel has had over the past few years over rivals such as Samsung, TSMC and Global Foundries has put them in a commanding position in both home computing and enterprise. One could argue that by elongating the next two process nodes, Intel might lose some of that advantage, especially as other companies start hitting their stride. However, the research gap is still there: Intel introduced 14nm back in August 2014, and has since released parts upwards of 400mm², whereas Samsung 14nm / TSMC 16nm had to wait until the launch of the iPhone to see 100mm² parts on the shelves, with Global Foundries still to launch their 14nm parts into products. While this relates to density, both power and performance are still considered to be on Intel’s side, especially for larger dies.

Intel's Current Process Over Time

On the product side of things, Intel’s strategy of keeping the same microarchitecture for two generations allows its business customers to guarantee the lifetime of the halo platform, and maintain consistency with CPU sockets in both consumer and enterprise. Moving to a three-stage cycle has cast some uncertainty over this, depending on how much ‘optimization’ will go into the PAO stage: whether it will be microarchitectural, bring better voltage and thermal qualities, be graphics focused, or even keep the same socket/chipset. This has a knock-on effect for Intel’s motherboard partners, who have relied on the updated socket and chipset every two generations for a spike in revenue.

Suggested Reading:

EUV Lithography Makes Good Progress, Still Not Ready for Prime Time

Tick Tock on the Rocks: Intel Delays 10nm, adds 3rd Gen 14nm

The Intel Skylake Mobile and Desktop Launch with Microarchitecture Analysis

Source: Intel 10-K (via The Motley Fool)

98 Comments

Murloc - Wednesday, March 23, 2016

saving electricity is often not a good reason considering how much you can spend on new parts.

ImSpartacus - Tuesday, March 22, 2016

Yeah, I have a Haswell machine and I have no use for USB3 (let alone 3.1). Stuff like CPU perf, RAM speed and PCIe bandwidth only matter when you have more GPU perf than any reasonable gamer would pursue.

maximumGPU - Wednesday, March 23, 2016

isn't SB PCIE 2.0?

extide - Thursday, March 24, 2016

Yeah, but SB-E supports 3.0

MrPoletski - Wednesday, March 23, 2016

My i7-920 is still going strong.

bigboxes - Wednesday, March 23, 2016

In my HTPC

Samus - Monday, March 28, 2016

Sandy bridge was the last generation where Intel really nailed it. It capitalized on and simplified Nehalem's complex QPI platform, introduced competitive ondie graphics and still remains competitive if not downright current in performance. It's been bland ever since.

Ivy bridge did almost nothing for sandy bridge but shrink the node which came with its own compromises, and Haswell is the most controversial launch in Intel's recent history, from the FIVR to broadwell mostly MIA to botched chipset launches such as the early stepping of 80 series that couldn't run the refresh cpu's to the 90 series existing to be compatible with broadwell which never materialized, then broken promises of skylake compatibility. A lot of people who upgraded from even the X58 first gen Core to Haswell have heavy regret to this day. All they really got was a slightly lower power bill.

melgross - Tuesday, March 22, 2016

I've been saying this for years. Intel, and others have seen slipping introductions for new process technology for some time.

32nm delayed for 3 months.

22nm delayed for 6 months.

14nm delayed for 12 months.

And with 14nm, we've seen Intel come out with simpler chips first, which is something they've never done before.

Now, 10nm was supposed to come out in late 2016. Then it was delayed to early 2017, then late 2017. Anyone want to bet against the idea that as we move to late 2016, and early 2017, it will slip to early 2018?

When we look at the cumulative delays so far, 10nm is already a year late.

After that, we have the serious problem of 7nm. It's already been stated in various chip publications that fin-fet doesn't work for 7nm, because the spacing will be too close, among other problems. But the three other solutions for that problem haven't been working either. No one knows if they will work, and there's no other possible solution known at this time. While everyone doesn't doubt that 7nm will get working, the time scale is in real question.

As for 5nm, many chip experts still think it may not be possible.

extide - Tuesday, March 22, 2016

What parts are 400mm^2? Until Broadwell-EP starts shipping, or Knights Landing, I think the biggest thing on 14nm is Xeon D. Or are some of those shipping already?

wicketr - Tuesday, March 22, 2016

If AMD were a serious competitor (as well as a couple other vendors), and Intel only had a 30-50% market share of desktop/laptop CPUs, I wonder if they'd be "optimizing" (aka slowing down research) as opposed to continuing on at a relentless pace that they once were.

It seems that since they basically have a monopoly now, they are not nearly as interested in making their CPUs generationally faster. Moore's law has basically ended. And CPUs are becoming mere optimizations of their former self.