Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

DirectX 12 Single-GPU Performance

We’ll start things off with a look at single-GPU performance. For this, we’ve grabbed a collection of RTG and NVIDIA GPUs covering the entire DX12 generation, from GCN 1.0 and Kepler to GCN 1.2 and Maxwell. This will give us a good idea of how the game performs across a wide span of GPU performance levels, and of how (if at all) the various GPU generational changes play a role.

Meanwhile, unless otherwise noted, we’re using Ashes’ High quality setting, which turns up a number of graphical features and also utilizes 2x MSAA. It’s also worth mentioning that while Ashes does allow async shading to be toggled, the option is on by default and can only be turned off in the game’s INI file.

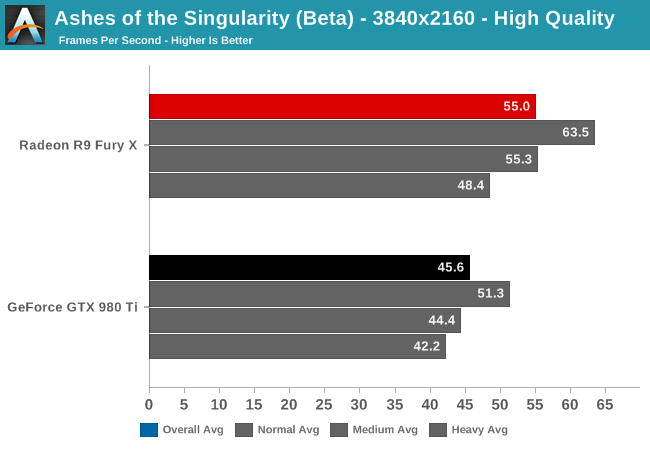

Starting at 4K, we have the GeForce GTX 980 Ti and Radeon R9 Fury X. On the latest beta the Fury X has a strong lead over the normally faster GTX 980 Ti, beating it by 20% and coming close to hitting 60fps.

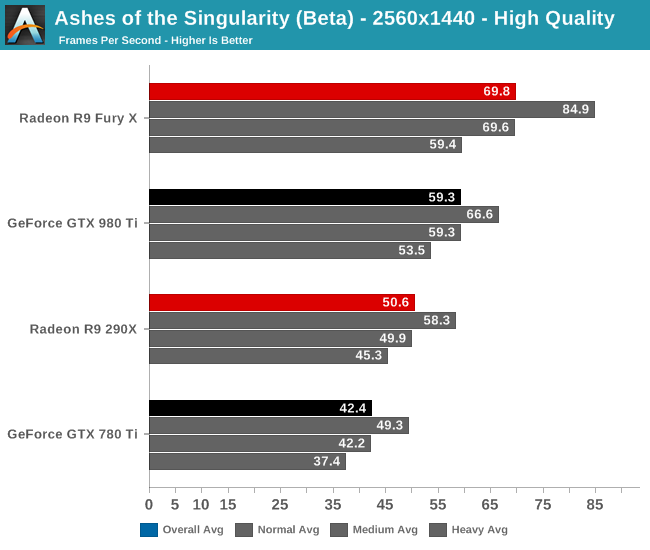

When we drop down to 1440p and introduce the last generation’s flagship video cards, the GeForce GTX 780 Ti and Radeon R9 290X, the story is much the same. The Fury X continues to hold a 10fps lead over the GTX 980 Ti, which works out to an 18% advantage. Similarly, the R9 290X has an 8fps lead over the 780 Ti, translating into a 19% performance lead. This is a significant turnabout from where we normally see these cards, as the 780 Ti traditionally holds a lead over the 290X.
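As a rough sanity check on how the absolute and percentage leads quoted above relate, the short Python sketch below backs out the average framerates they imply. The helper name `implied_framerates` is hypothetical, and because the quoted leads are rounded, the results are only approximations of what the chart shows, not measured values.

```python
def implied_framerates(lead_fps, lead_pct):
    """Back out both average framerates from an absolute and a relative lead.

    Assumes the percentage lead is measured against the slower card:
    lead_pct / 100 = lead_fps / slower.
    """
    slower = lead_fps / (lead_pct / 100.0)
    faster = slower + lead_fps
    return slower, faster


# Leads as quoted in the text (rounded, so the outputs are estimates only).
for label, lead_fps, lead_pct in [
    ("Fury X vs. GTX 980 Ti", 10, 18),
    ("R9 290X vs. GTX 780 Ti", 8, 19),
]:
    slower, faster = implied_framerates(lead_fps, lead_pct)
    print(f"{label}: roughly {slower:.0f} fps vs. {faster:.0f} fps")
```

The same relationship explains why a smaller absolute gap (8fps) can be a larger percentage lead (19%): the older cards are running at lower framerates to begin with.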

Meanwhile, looking at the average framerates with different batch count intensities, there admittedly isn’t much remarkable here. All cards take roughly the same performance hit as batch counts increase.

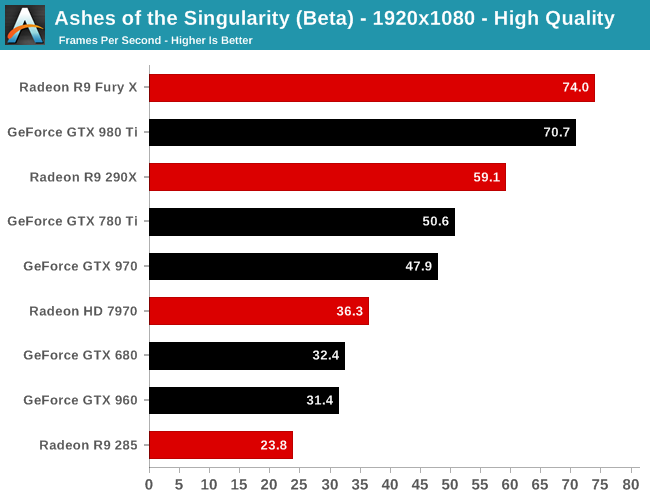

Finally, at 1080p with our full lineup of cards, we can see that RTG’s lead in this latest beta is nearly absolute. The 2012 flagship battle between the 7970 and the GTX 680 puts the 7970 in the lead by 12%, or just shy of 4fps. Elsewhere the GTX 980 Ti does close on the Fury X, but RTG’s current-gen flagship remains in the lead.

The one outlier here is the Radeon R9 285, which is the only 2GB RTG card in our collection. At this point we suspect it’s VRAM limited, but confirming that would require further investigation.

153 Comments

Dug - Sunday, February 28, 2016 - link

Very bad would indicate that it would be unplayable. I'm playing fine at over 60fps on nvidia. Maybe I should trade it in for an R9 to get 63fps?

C3PC - Wednesday, March 16, 2016 - link

Not really. This is a beta for a game that is heavily embedded with AMD tech, so the way the game handles it would favor AMD's implementation; it could go the other way for a game designed around Nvidia's implementation.

Also, calling this bad performance of DX12? Maybe you should clarify that this is an implementation of 12_0 and not 12_1. I highly doubt AMD will fare as well as Nvidia under such circumstances.

jsntech - Wednesday, February 24, 2016 - link

For Nvidia to perform the same or worse between DX11 and DX12 seems like a pretty big thing to be addressed with just an 'optimization', especially compared to AMD's results. I guess we'll see when it's out of beta!

Senti - Wednesday, February 24, 2016 - link

Well, being low-level, DX12 leaves much less to the driver, so there should be fewer miraculous fps gains from driver optimization than in DX11.

mabellon - Wednesday, February 24, 2016 - link

Something I have been wondering about this game is whether the DX11 vs DX12 comparison is really valid. The game apparently pushes higher draw calls and takes advantage of DX12. But when running in DX11 mode, is it still trying to push all those unique draw calls or is it optimized like most DX11 games and using draw call instancing? (Sorry not a game dev, so I don't fully grok the particulars).

Basically, if the game was designed and optimized for DX11 it might perform well but not have the visual fidelity of so many draw calls (unique unit visuals). So the real difference should have been visual quality. Instead I get the impression that the game was designed to push DX12 and then when in DX11 mode stresses the draw call limitations, overemphasizing the apparent gains. Am I wrong?

Currently it seems that porting a game from DX11 to DX12 nets up to a 50% improvement in framerates. The reality is more nuanced, in that existing games are clearly working around draw call limitations and thus won't see something quite so dramatic. Thoughts?

ImSpartacus - Wednesday, February 24, 2016 - link

I think you're probably right.

DX12 doesn't simply improve performance and nothing else. So the massive performance improvements probably aren't entirely fair.

extide - Wednesday, February 24, 2016 - link

It would have to use fewer draw calls for DX11, as hitting DX11 with the high number of calls you can do in DX12 would make it fall flat on its face. I am sure the DX11 path is well optimised for DX11, I mean while this game is a big showcase for DX12, most people who play it will probably be on DX11 ...

Denithor - Thursday, February 25, 2016 - link

People with nVidia cards will be playing in DX11 mode. People with AMD cards, even those three generations old, will be fine in DX12 mode with better eye candy and simultaneously better FPS.

Friendly0Fire - Wednesday, February 24, 2016 - link

There's definitely quite a bit of that. Looking at the benchmark, a lot of the graphics design seems aimed at causing more draw calls (long-lasting smoke consisting of lots of unique particles, lots of small geometric details, etc.). While I'm absolutely convinced that DX12 will give better performance than DX11 in the long run and that the gap will be fairly large, I think this benchmark is definitely designed to overemphasize just how great DX12 is.

jardows2 - Wednesday, February 24, 2016 - link

I would like to see how much of an impact DX12 makes on the CPU side in a released game. Do you get better performance from multiple cores, or is it irrelevant? Speculation is that DX12 could change the normal paradigm for judging gaming performance on CPUs.