Examining Soft Machines' Architecture: An Element of VISC to Improving IPC

by Ian Cutress on February 12, 2016 8:00 AM EST- Posted in

- CPUs

- Arm

- x86

- Architecture

- Soft Machines

- IPC

But 16FF+ Silicon Exists

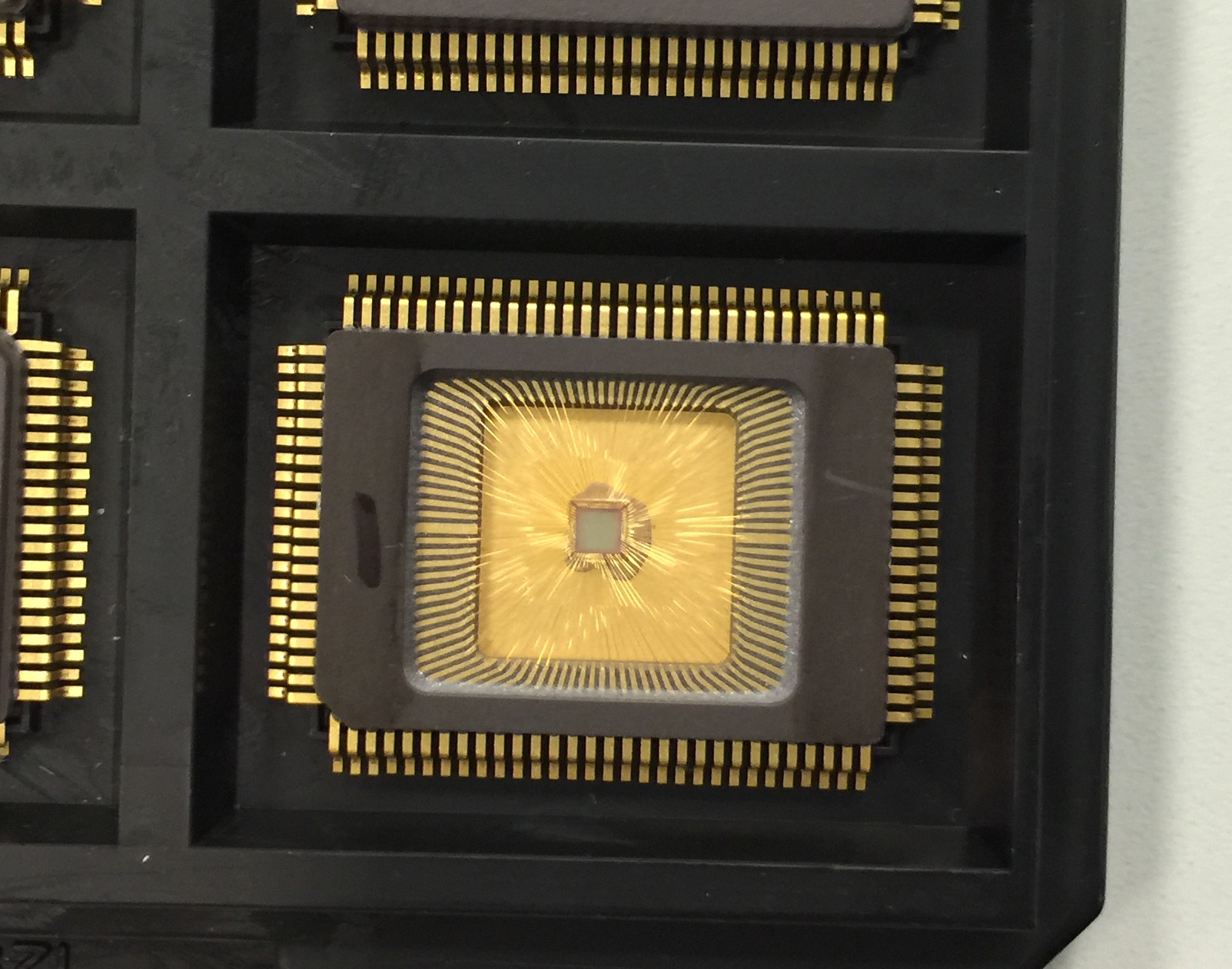

One of the salient points of our talk with Soft Machines was the fact that silicon talks louder than simulations. Their CTO was very honest and said this before I even had the chance to. The 28nm design was shown in 2014 and data was provided, but no 16FF+ design had since been made public. Soft Machines were happy enough to share with us that they do have the core design for 16nm at HQ being examined:

16nm Silicon of a Shasta design

This is literally a test chip of cores rather than a full SoC, and they are currently running the correlation data between simulation and silicon. We were told that the design errors that the 28nm silicon had, such as cache flushing properly, were fixed. The new silicon also includes power plane management, although customers are welcome to use their own power plane adjustments.

The goal, according to Soft Machines' numbers, is to provide a Shasta core on an optimized 16nm FF+ process at 2GHz at around 2W. Their goal includes scaling the design from SoC to server, meaning that there is the goal to reach a range of 0.5W per core up to 5W per core. Because there’s only one 16FF+ part-SoC early run currently at their headquarters it remains to be seen if that is possible, and requires a partner or investor to get their hands dirty with the technology first.

Before someone jumps up and says "is platform XYZ going to use VISC?", it should be fairly obvious from most public roadmaps covering the next 1-2 years that major platforms will not be using VISC. What we see on public roadmaps is a mix of ARM and x86, and the fact that VISC is a different ISA under the hood (which can run native VISC code without translation) means that there has to be an ecosystem change. Soft Machines, with their announcement last week, is at this time principally fishing for clients, investors, and potentially something more.

The big thing about why this design has got a lot of attention in the media and between analysts is because of the potential. Being able to have many light-weight cores that can share resources between threads would be a major milestone in semiconductor design and the next point in the CISC/RISC lineage. It epitomizes the idea of having all the hardware working on a task no matter what it is, such that you can have many slower power efficient cores working on a single task or one inefficient high power but fast core. If you can spare the die area and have a good ISA translation layer, this opens up some of the power budget in a power limited device. A lot of discussion on laptops or smartphones is all about the power, although Soft Machines believes this can impact servers just as easily.

Arguably one could state that future processors will have to do something like VISC in order to get better IPC – when a thread needs a large wide core, then a VISC design can be one when needed. Technically we already have semiconductor designs that work very well on prepared data – vector calculations and graphics are handled by lots of small, simple cores in their thousands. But these only work with consistent data and when the same calculation on all the data points is needed; with a VISC design, the code can be complex with dependencies and the virtual cores will shrink/expand as needed. A lot of questions surrounding the translation layer are to be expected, and if it can be as water-tight as possible when other ISAs are passed through (ARM to VISC, x86 to VISC) and also take advantage of compiler benefits as to SMI’s claims.

As it stands the design promises a lot, but because we really need to see the proper silicon implementation, it might be hard to visualize until a company in the technology ecosystem decides to make that step. It would be an interesting differentiation point for sure, but it requires investment to reach utility in mass production. That makes a number of analysts wary and conservative with good reasons, especially with the assumptions made on that data graph.

Soft Machines has invited us to their offices next time I’m in the Bay Area, which I will probably take them up on.

Sources:

Soft Machines

Microprocessor Report

2014 Linley Conference Video

2015 Linley Conference Video

97 Comments

View All Comments

ddriver - Saturday, February 13, 2016 - link

All abbreviations are capitalized, not just acronyms, idiot. Whether it is an acronym or not depends on how it is pronounced.erple2 - Saturday, March 12, 2016 - link

Enough people confuse initialisms and acronym that it probably doesn't matter anymore.FunBunny2 - Friday, February 12, 2016 - link

If SMI wants this to be believed, then just publish a paper (in a peer reviewed journal) showing how VISC invalidates Amdahl's Law. This is, after all, what they're really claiming.willis936 - Friday, February 12, 2016 - link

Could you explain how they're claiming to invalidate Amdahl's law ?FunBunny2 - Friday, February 12, 2016 - link

as I read the piece, SMI is implying/claiming performance improvement in running serial code in a parallel fashion, and Amdahl says you can't do that. if, OTOH, the claim is that VISC is able to suss out parallel execution in superficially serial code, then that process has to be proven to exist algorithmically. as the piece goes to some length to describe, based on what's been provided by SMI, it much like smoke and mirrors.Arnulf - Friday, February 12, 2016 - link

I read their claims as an expansion of superscalar design. Nothing new here and certainly nothing breaking any "laws". It still cannot magically make non-parallelizable code run faster than it normally would.Samus - Monday, February 15, 2016 - link

If their decoder can break up serial code and run it through different cores optimized to do different things better, this would theoretically complete the code faster because their will be no pipeline penalty.Personally. I think we have better odds of seeing a quantum processor before this type of thing taking off, though. That is to say, no time soon.

gamerk2 - Friday, February 12, 2016 - link

Kinda. There will still be a limited performance simply because some operations can not be made parallel under any circumstances, but Soft Machines is really taking ILP to the extreme here.xthetenth - Friday, February 12, 2016 - link

No it really isn't and you're profoundly misunderstanding Amdahl's law. All that says is how much an improvement to a portion of a workload's execution speed will affect the workload as a whole's execution speed. Meanwhile what they're doing is trying to extract parallelism from single threads, which means that they're speeding up a greater fraction of the code. Funnily enough, you can use Amdahl's law to predict when this method (shrinking the non-improved section to allow higher maximum speed) is more effective than things like clocking higher.I suspect what you're doing is confusing the law with an explanation/common use of the law because it is very popular to use it to show there's a limit on the gains that can be made by parallel processors.

Drumsticks - Friday, February 12, 2016 - link

It doesn't really invalidate Amdahl's Law. Serial code still can't be run on multiple cores. As I understand it, it only allows extracting more ILP using an ultra ultra wide design when possible.