Who Controls the User Experience? AMD’s Carrizo Thoroughly Tested

by Ian Cutress on February 4, 2016 8:00 AM EST

AMD’s Industry Problem

A significant number of small form factor and portable devices have been sold since the start of the century - this includes smartphones, tablets, laptops, mini-PCs and custom embedded designs. Each of these markets is separated by numerous facets: price, performance, mobility, industrial design, application, power consumption, battery life, style, marketing and regional influences. At the heart of all these applications is the CPU that takes input, performs logic, and provides output dependent on both the nature of the device and the interactions made. Both the markets for the devices and the effort placed into manufacturing the processors are large and complicated. As a result we have several multi-national and worldwide companies hiring hundreds or thousands of engineers and investing billions of dollars each year into processor development, design, fabrication and implementation. These companies, either by developing their own intellectual property (IP) or by licensing and then modifying other IP, aim to make their own unique products with elements that differentiate them from everyone else. The goal is then to distribute and sell, so their products end up in billions of devices worldwide.

The market for these devices is worth several hundred billion dollars every year, and thus to say competition is fierce is somewhat of an understatement. There are several layers between designing a processor and the final product, namely marketing the processor, building a relationship with an original equipment manufacturer (OEM) to create a platform in which the processor is applicable, finding an entity that will sell the platform under their name, and then having the resources (distribution, marketing) at the end of the chain to get the devices into the hands of the end user (or enterprise client). This level of chain complexity is not unique to the technology industry and is a fairly well established route for many industries, although some take a more direct approach and keep each stage in house, designing the IP and device before distribution (Samsung smartphones) or handling distribution internally (Tesla Motors).

In all the industries that use semiconductors however, the fate of the processor, especially in terms of perception and integration, is often a result of what happens at the end of the line. If a user, in this case either an end user or a corporate client investing millions into a platform, tries multiple products with the same processor but has a bad experience, they will typically relate the negativity and ultimately their purchase decision towards both the device manufacturer and the manufacturer of the processor. Thus it tends to be in the best interest of all parties concerned that they develop devices suitable for the end user in question and avoid negative feedback in order to develop market share, recoup investment in research and design, and then generate a profit for the company, the shareholders, and potential future platforms. Unfortunately, with many industries suffering a race-to-the-bottom, cheap designs often win due to budgetary constraints, which then provides a bad user experience, giving a negative feedback loop until the technology moves from ‘bearable’ to ‘suitable’.

Enter Carrizo

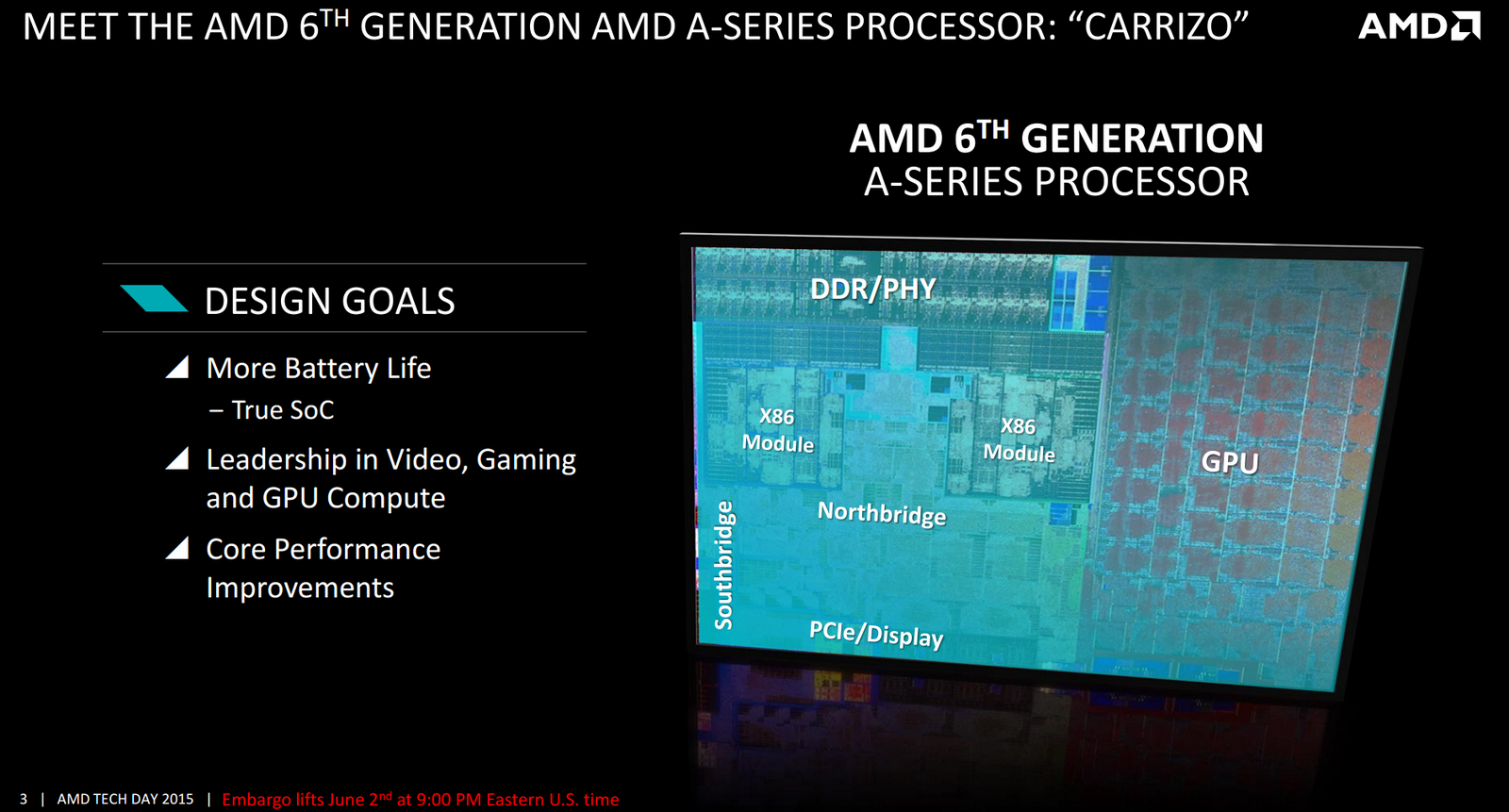

One such platform, released in 2015, is AMD’s Carrizo APU (accelerated processing unit). The Carrizo design is the fourth generation of the Bulldozer architecture, originally released in 2011. The base design of the microarchitecture is different to the classical design of a processor - at a high level, rather than one core having one logic pipeline sharing one scheduler, one integer calculation port and one floating point calculation port resulting in one thread per core, we get a compute module with two logic pipelines sharing two schedulers, two integer calculation ports and only one floating point pipeline for two threads per module (although the concept of a module has been migrated to that of a dual core segment). With the idea that the floating point pipeline is being used infrequently in modern software and compilers, sharing one between two aims to save die area, cost, and additional optimizations therein.

The deeper reasons for this design lie in typical operating system dynamics - the majority of logic operations involving non-mathematical interpretations are integer based, and thus an optimization of the classical core design can result in the resources and die area that would normally be used for a standard core design to be focused on other more critical operations. This is not new, as we have had IP blocks in both the desktop and mobile space that have shared silicon resources, such as video decode codecs sharing pipelines, or hybrid memory controllers covering two memory types, to save die area but enable both features in the market at once.

While interesting in the initial concept, the launch of Bulldozer was muted due to its single threaded performance compared to that of AMD’s previous generation product as well as AMD’s direct competitor, Intel, whose products could ultimately process a higher number of instructions per clock per thread. This was countered by AMD offering more cores for the same die area, improving multithreaded performance for high workload throughput, but other issues plagued the launch. AMD also ran at higher frequencies to narrow the performance deficit, and the voltage required to maintain those frequencies resulted in higher power consumption compared to the competition. This was a problem for AMD as Intel started to pull ahead on processor manufacturing technology, taking advantage of lower operating voltages, especially in mobile devices.

Also, AMD had an issue with operating system support. Due to the shared resource module design of the processor, Microsoft Windows 7 (the latest at the time) had trouble distinguishing between modules and threads, often failing to allocate resources to the most suitable module at runtime. In some situations, it would cause two threads to run on a single core, with the other cores being idle. This latter issue was fixed via an optional update and in future versions of Microsoft Windows but still resulted in multiple modules being on 'active duty', affecting power consumption.
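The scheduling trade-off described above can be illustrated with a minimal sketch. It is a toy model and not how the Windows scheduler is actually implemented: the function names, the fixed two cores per module, and the two placement policies are all assumptions made for illustration.

```python
from typing import List

CORES_PER_MODULE = 2  # assumption: each module exposes two cores sharing one FP unit


def place_threads(num_threads: int, num_modules: int, module_aware: bool) -> List[int]:
    """Return the module index each thread lands on (hypothetical toy policy)."""
    if num_threads > num_modules * CORES_PER_MODULE:
        raise ValueError("more threads than available cores")
    placement = []
    for t in range(num_threads):
        if module_aware:
            # Spread: use one core in every module before doubling up,
            # so threads avoid contending for a shared floating point unit.
            module = t % num_modules
        else:
            # Naive: fill cores in numeric order, so the first two threads
            # share module 0 while the remaining modules sit idle.
            module = t // CORES_PER_MODULE
        placement.append(module)
    return placement


def active_modules(placement: List[int]) -> int:
    """Count how many distinct modules must stay powered for this placement."""
    return len(set(placement))


if __name__ == "__main__":
    for aware in (False, True):
        p = place_threads(2, 2, module_aware=aware)
        print(f"module_aware={aware}: placement={p}, active modules={active_modules(p)}")
```

In this model the naive policy keeps only one module awake for two threads but forces them to share resources, while the module-aware policy avoids sharing at the cost of keeping both modules active, which mirrors the power consumption side effect noted in the text.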

As a result, despite the innovative design, AMD’s level of success was determined by the ecosystem, which was rather unforgiving in both the short and long term. The obvious example is in platforms where power consumption is directly related to battery life, and maintaining a level of performance required for those platforms is always a balance in managing battery concerns. Ultimately the price of the platform is also a consideration, and along with historical trends from AMD, in order to function in this space as a viable alternative, AMD had to use aggressive pricing and adjust the platform’s focus, potentially reducing profit margins, affecting future developments and shareholder return, and subsequently investment.

175 Comments

MonkeyPaw - Friday, February 5, 2016 - link

The cat cores exist to compete with Atom-level SOCs. Intel takes the Atom design from phones and tablets all the way up to Celeron and Pentium laptops. It makes some business sense due to low cost chips, but if the OEM puts them in a design and asks too much of the SOC, then there you have a bad experience. Such SOCs should not be found in anything bigger than a $300 11" notebook. For 13" and up, the bigger cores should be employed.

michael2k - Friday, February 5, 2016 - link

The cat cores can't compete with Atom level SoC because they don't operate at low enough power levels (ie, 2W to 6W). The cat cores may have been designed to compete with Atom performance and Atom priced parts, but they were poorly suited for mobile designs at launch.

Intel999 - Sunday, February 7, 2016 - link

AMD hasn't updated the cat cores in over three years! It is a dead channel to them. They had a bit of a problem competing in the tablet market against a competitor that was willing to dump over $4 billion pushing inferior Bay Trail chips. Take a plane to China and you can still find a lot of those Bay Trail chips sitting in warehouses, as once users had the misfortune of using tablets being run by them the reviews destroyed any chance that those tablets ever had at being sales successes. AMD was forced to stop funding R&D on cat cores as they were in no position to be selling them at negative $5.

In the time that AMD has stopped development on the cat cores Intel has improved their low end offerings, but still not enough to compete with ARM offerings that have improved as well. And now tablets are dropping at similar rates to laptops so it is actually a good thing for AMD that they suspended research on the cat cores. Sorta dodged a bullet.

At least they still get decent volume out of them through Sony and Microsoft gaming platforms.

testbug00 - Friday, February 5, 2016 - link

If the cat cores didn't exist AMD likely would have died as we know it a few years ago.

BillyONeal - Friday, February 5, 2016 - link

The "cat cores" are why AMD is not yet bankrupt; it let them get design wins in the PS4 and XBox One which kept the company afloat.

mrdude - Friday, February 5, 2016 - link

YoY Q4 earnings showed a 42% decline in revenue for computing and graphics with less than 2bn in revenue for full-year 2015 and $502m operating loss. You couldn't be more correct. The console wins aren't just keeping the company afloat, they practically define it entirely.

Lolimaster - Friday, February 5, 2016 - link

In that case simply remove the OEM's altogether and sell it at AMD's store or selected physical/online stores.

TheinsanegamerN - Thursday, February 11, 2016 - link

10/10 would pay for an "AMD" branded laptop that does APUs correctly.

Hrobertgar - Friday, February 5, 2016 - link

Since you are talking about user experience, AMD is not the only company with a bad user experience. I purchased an Alienware 15" R2 laptop on Cyber Monday and it is horrible, and support is horrible. I compare my user experience to a Commodore 64 using a cassette drive - it's that bad (I suspect you are old enough to appreciate cassette drives). It arrived in a non-bootable configuration. It cannot stream Netflix to my 2005 Sony over an HDMI cable unless I use Chrome - took Netflix help to solve that (I took a cell-phone pic of a single Edge browser straddling the two monitors - the native monitor half streaming video and the Sony half dark after passing over the HDMI cable. It only occurs with Netflix). On 50% of bootups it gives me a memory change error despite even the battery being screwed in. On 10% of bootups it fails to recognize the HDD. Once it refused to shut down and required holding the power button for 10 secs. Lately it claims the power brick is incompatible on about 10% of bootups. Yes, I downloaded all latest drivers, BIOS, chipset, etc. Customer Service has hung up on me once, deleted my review once, and repeatedly asked for my service tag after I already gave it to them. Some of the Netflix issue is probably Microsoft's issue - certainly MS App was an epic fail, but much of even that must be Dell's issue. I realize it is probably difficult to spot many of these things given the timeframe of the testing you do, and the Netflix issue in particular is bizarre. I am starting to think a Lenovo might not be so bad.

tynopik - Friday, February 5, 2016 - link

"put of their hands"