The Apple iOS 9 Review

by Brandon Chester on September 16, 2015 8:00 AM EST - Posted in

- Smartphones

- Apple

- Mobile

- Tablets

- iOS 9

A New Way To Navigate Between Apps

iOS 9 has a couple of new features that Apple collectively calls Deep Linking. Users are undoubtedly familiar with the scenario where they receive a link to a YouTube video, a tweet, or something else related to a service that does have a native iOS app. Unfortunately, clicking these links will usually bring you to a web page in mobile Safari instead of the appropriate application, which isn’t a very good experience. Android tackled this issue long ago with Intents, but even with the improvements to inter-app communication in iOS 8 there wasn’t a way for developers to easily and seamlessly implement such a feature. With iOS 9 they finally can.

Deep Linking builds on the same foundation as Handoff from the web, which was introduced in iOS 8, and implementing it is fairly straightforward. The developer’s web server needs to host a file called apple-app-site-association. This file contains a small amount of JSON that indicates which sections or subdomains of the website should be directed to an on-device application rather than Safari. To ensure security, the association file needs to be signed with an SSL certificate. Once the developer has done this and listed the domain in the app’s Associated Domains entitlement, iOS will take care of opening the appropriate application on the device when a link to that site is clicked.
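As a rough illustration, the Python sketch below builds an association file in the layout Apple documented for iOS 9 (an `applinks` object with an empty `apps` array and a `details` array of `appID`/`paths` entries) and approximates how a clicked URL's path is checked against it. The Team ID, bundle identifier, and paths are hypothetical, the signing step is not shown, and `fnmatchcase` only approximates Apple's wildcard rules.

```python
import json
from fnmatch import fnmatchcase

# Hypothetical Team ID + bundle ID; a real appID uses the developer's own values.
APP_ID = "ABCDE12345.com.example.videos"

association = {
    "applinks": {
        "apps": [],  # present but empty in the iOS 9 layout
        "details": [
            {
                "appID": APP_ID,
                # Patterns are checked in order and the first match decides.
                # A "NOT " prefix keeps matching paths in Safari.
                "paths": ["NOT /videos/private/*", "/videos/*"],
            }
        ],
    }
}


def opens_in_app(path: str, patterns: list[str]) -> bool:
    """Approximate the in-order evaluation of a 'paths' array."""
    for pattern in patterns:
        excluded = pattern.startswith("NOT ")
        glob = pattern[len("NOT "):] if excluded else pattern
        if fnmatchcase(path, glob):
            return not excluded
    return False  # nothing matched, so the link opens in Safari


if __name__ == "__main__":
    # This JSON is what the web server would host (signed) for the domain.
    print(json.dumps(association, indent=2))
    patterns = association["applinks"]["details"][0]["paths"]
    for p in ["/videos/42", "/videos/private/7", "/about"]:
        print(p, "->", "app" if opens_in_app(p, patterns) else "Safari")
```

Listing the `NOT` pattern first matters in this sketch: if `/videos/*` came first, private video links would also open in the app.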

The aspect of the improved navigation between applications that is most relevant to users right now is the back link that appears in the status bar when you move from one app to another. In the past, opening a link from within an application would either rip you out of that application and take you to Safari, or open the link in a mini web browser of sorts inside the application. The new back link is designed to allow users to quickly return to the application they were working in from the application that a link took them to.

Right now, the primary use for this is returning from Safari when you’re taken to it from inside an application. Once developers implement deep linking, this feature will become even more significant, because clicking a Twitter link in the Google Hangouts application will simply slide the Twitter app in on top for you to see, and when you’re done you can click the back link to return to Hangouts.

It’s important not to confuse this new feature with a back button in the same sense as the one in Android or Windows Phone. iOS still has the exact same architecture for app navigation, with buttons to go back and forward located within each application rather than a system-wide button. The new back link is more of a passage to return to your task after a momentary detour into another application.

As for the UX, I think this is basically the only way that Apple could implement it. I’m not a fan of the fact that it removes the signal strength indicator, but anywhere else would have intruded on the open application which would cause serious problems. I initially wondered if it would have been better to just use the swipe from the left gesture to go back, but this wouldn’t be obvious and risks conflating the back link with the back buttons in apps. It looks like having the link block part of the status bar is going to be the solution until someone imaginative can come up with something better.

Reducing Input Lag

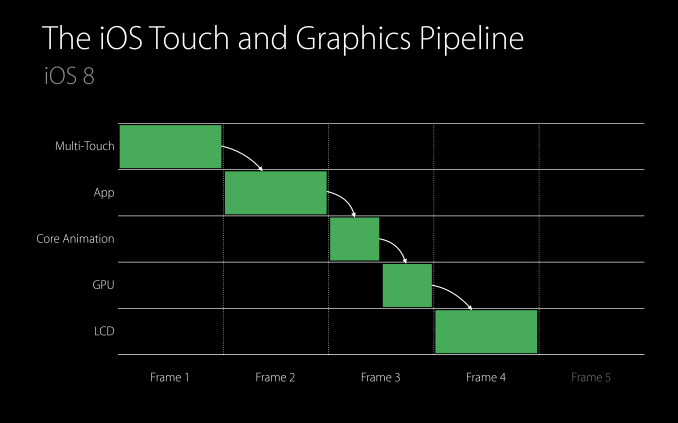

iOS is fairly good at minimizing input lag, but there has always been a certain amount of delay that you couldn't get around no matter how quick your application is. Part of this comes from how frequently the capacitive touch sensor scans for input. The scan rate usually matches the display's 60Hz refresh rate, which puts the time between scans at about 16.7ms. Devices like the iPad Air 2 and upcoming iPad Pro scan at 120Hz for finger input, which drops this time to about 8.3ms. On the software side you have the steps and processes of the iOS touch and graphics pipeline, which is where Apple has made improvements to reduce touch latency on all devices in iOS 9.
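The scan intervals above are simply the reciprocal of the scan rate. A trivial Python check (the helper name is illustrative only):

```python
def scan_interval_ms(rate_hz: float) -> float:
    """Time between successive touch scans, in milliseconds."""
    return 1000.0 / rate_hz


if __name__ == "__main__":
    for rate in (60, 120):
        print(f"{rate:>3} Hz -> {scan_interval_ms(rate):.1f} ms between scans")
```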

The above image is a visual representation of the iOS touch and graphics pipeline. As you can see, with a typical application you're looking at about four frames of latency. Apple includes the display frame in their definition of latency, which I disagree with because you'll see the results of your input at the beginning of that frame, but for the purposes of this discussion I'll use their definition.

In this theoretical case, it just so happens that the time for updating the state of the application is exactly one frame long. One would think that decreasing the time the app takes to update its state would reduce input latency by allowing Core Animation to start translating the application's views into GPU commands, to be passed to the GPU for rendering, sooner. Unfortunately, this has not been the case on iOS in the past. No matter how well optimized an app was, Core Animation would only begin doing work at the beginning of the next display frame. This was because an application can update state several times during a single frame, and these updates are batched together to be rendered on screen at once.

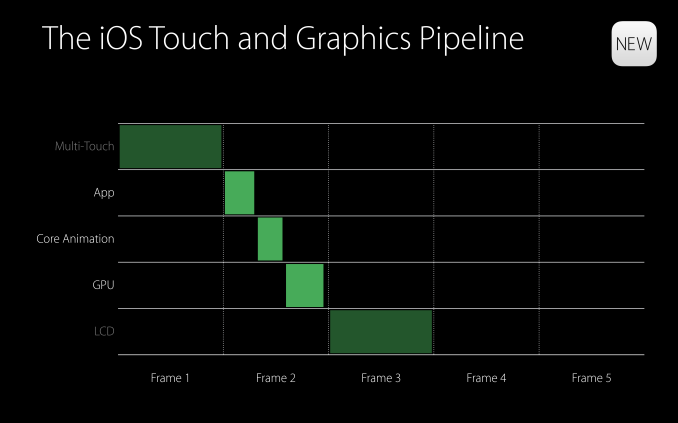

In iOS 9, Apple has removed the requirement that Core Animation wait until the beginning of the next frame to start working. As a result, an optimized application can take care of updating state, having Core Animation issue GPU commands, and drawing the next frame all within the time span of a single display frame. This cuts the touch latency down to three frames from the previous four, while applications with complicated views that require more than a single frame for Core Animation and GPU rendering can drop from five frames of latency to four.
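The frame counts above can be reproduced with a deliberately simplified Python model. It assumes one frame for the touch to be scanned and delivered, hypothetical stage durations, GPU work that follows Core Animation immediately, and a result that appears from the next frame boundary onward. None of this is taken from Apple's diagrams beyond the totals quoted in the text.

```python
import math

# Assumption: one frame for the touch to be scanned and handed to the app.
INPUT_FRAMES = 1.0


def next_vsync(t: float) -> int:
    """First display-frame boundary at or after time t (in frames)."""
    return math.ceil(t - 1e-9)  # small tolerance absorbs float rounding


def latency_frames(app: float, ca_and_gpu: float, wait_for_vsync: bool) -> float:
    """Touch-to-display latency in frames, counting the display frame.

    app: time for the app to update its state (frames, hypothetical)
    ca_and_gpu: Core Animation issuing GPU commands plus GPU rendering
    wait_for_vsync: True models iOS 8, where Core Animation only starts at
        the next frame boundary; False models iOS 9, where it starts at once.
    """
    ca_start = next_vsync(app) if wait_for_vsync else app
    render_done = ca_start + ca_and_gpu
    display_start = next_vsync(render_done)  # shown from the next boundary
    return INPUT_FRAMES + display_start + 1.0  # +1 for the display frame


if __name__ == "__main__":
    cases = {"optimized app": (0.3, 0.6), "complex views": (0.3, 1.5)}
    for name, (app, rest) in cases.items():
        ios8 = latency_frames(app, rest, wait_for_vsync=True)
        ios9 = latency_frames(app, rest, wait_for_vsync=False)
        print(f"{name}: iOS 8 = {ios8:g} frames, iOS 9 = {ios9:g} frames")
```

Setting `app=1.0` (the theoretical case in the first diagram) gives four frames in both modes, which matches the earlier point: removing the wait only helps apps that finish updating state before the frame ends.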

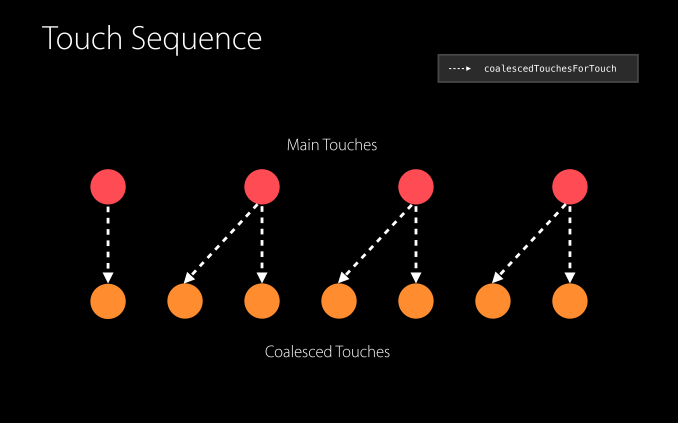

Apple has also made improvements at the actual touch input level. As I mentioned earlier, the iPad Air 2 and iPad Pro scan for finger input at 120Hz, which can shave off an additional half frame of latency by allowing applications to begin doing work midway through a frame. In addition to reducing latency, the additional touch input information can be used by developers to improve the accuracy of how applications respond to user input. For example, a drawing application can more accurately draw a line that matches exactly where the user swiped their finger, as it now has twice as many points to sample. Apple calls these additional touches coalesced touches, and they do require developers to add a bit of code to take advantage of them. However, the ability to begin updating an app in the middle of a frame is something that happens automatically on devices with 120Hz input.
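The difference coalesced touches make for a drawing app can be modeled in a few lines. In UIKit the extra samples come from `-[UIEvent coalescedTouchesForTouch:]`; the Python below does not call UIKit, but uses a hypothetical `FrameEvent` stand-in and assumes two 120Hz digitizer samples arrive per 60Hz frame.

```python
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass
class FrameEvent:
    """Hypothetical stand-in for one touch event delivered per 60 Hz frame."""
    latest: Point            # the single touch an app would read by default
    coalesced: list[Point]   # every digitizer sample since the last event


def make_events(samples: list[Point], per_frame: int = 2) -> list[FrameEvent]:
    """Group 120 Hz samples into 60 Hz events (two samples per frame)."""
    events = []
    for i in range(0, len(samples), per_frame):
        chunk = samples[i:i + per_frame]
        events.append(FrameEvent(latest=chunk[-1], coalesced=list(chunk)))
    return events


def stroke_latest_only(events: list[FrameEvent]) -> list[Point]:
    """Draw through one point per frame, ignoring the in-between sample."""
    return [e.latest for e in events]


def stroke_with_coalesced(events: list[FrameEvent]) -> list[Point]:
    """Draw through every sample, as a coalesced-touch-aware app would."""
    points: list[Point] = []
    for e in events:
        points.extend(e.coalesced)
    return points


if __name__ == "__main__":
    # Hypothetical stroke: a finger tracing y = x^2, sampled 8 times at 120 Hz.
    samples = [(x / 8, (x / 8) ** 2) for x in range(1, 9)]
    events = make_events(samples)
    print(len(stroke_latest_only(events)), "points without coalesced touches")
    print(len(stroke_with_coalesced(events)), "points with coalesced touches")
```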

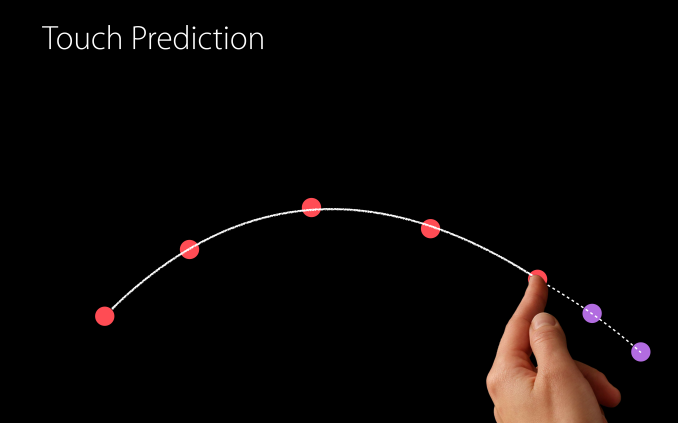

The last feature Apple is implementing in iOS 9 to reduce input latency is predictive touch. Put simply, this is information that developers can access to get some idea of where the user's finger is moving on the display. This allows them to update views in advance using the estimated information, and once actual information about the user's movement has been received they can undo the predicted changes, apply the actual changes, and also apply new predictions based on the user's latest movements. Because Apple provides predicted touches for one frame into the future, this technique can reduce apparent input latency by another frame. Combined with the improvements to the input pipeline, this drops latency as low as two frames on most devices, and on the iPad Air 2 the effective touch latency can now be as low as 1.5 frames, which is an enormous improvement over the four frames of latency that iOS 8 provided as a bare minimum.
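The undo, apply, re-predict cycle described above is mostly bookkeeping. A minimal Python sketch, assuming the app keeps confirmed and predicted points in separate lists: in UIKit the predictions would come from `-[UIEvent predictedTouchesForTouch:]`, while here a hypothetical `extrapolate` helper (plain linear extrapolation, not Apple's predictor) stands in for it.

```python
Point = tuple[float, float]


class Stroke:
    """Keeps confirmed touch points apart from throwaway predicted ones."""

    def __init__(self) -> None:
        self.confirmed: list[Point] = []
        self.predicted: list[Point] = []

    def update(self, actual: list[Point], predicted: list[Point]) -> None:
        self.predicted.clear()            # undo last frame's guesses
        self.confirmed.extend(actual)     # apply what the finger really did
        self.predicted.extend(predicted)  # draw ahead using the new guesses

    def points_to_draw(self) -> list[Point]:
        return self.confirmed + self.predicted


def extrapolate(points: list[Point], steps: int = 1) -> list[Point]:
    """Hypothetical stand-in predictor: repeat the last step of motion."""
    if len(points) < 2:
        return []
    (x0, y0), (x1, y1) = points[-2], points[-1]
    dx, dy = x1 - x0, y1 - y0
    return [(x1 + dx * k, y1 + dy * k) for k in range(1, steps + 1)]


if __name__ == "__main__":
    stroke = Stroke()
    # Two frames' worth of real samples for a hypothetical stroke.
    for actual in ([(0.0, 0.0), (1.0, 1.0)], [(2.0, 1.5)]):
        guess = extrapolate(stroke.confirmed + actual)
        stroke.update(actual, guess)
        print(stroke.points_to_draw())
```

Keeping the predicted points in their own list is what makes the "undo" step cheap: the stale guess drawn in the first frame simply disappears when the second frame's real sample arrives.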

App Thinning

Apple gets a lot of criticism for shipping only 16GB of NAND in the starting tier for most iOS devices. I think this criticism is warranted, but it is true that Apple is putting effort into making those devices more comfortable to use in iOS 9. The most obvious improvements are with their cloud services, which allow users to store data like photos in iCloud while keeping downscaled 3.15MP copies on their devices. Unfortunately, iCloud still only offers 5GB of storage for free, which really needs to be increased significantly, but Apple has increased the storage of its $0.99 monthly tier from 20GB to 50GB, dropped the price for 200GB from $3.99 to $2.99 monthly, and reduced the 1TB tier price to $9.99, while eliminating the 500GB option that previously existed at that price.

In addition to iCloud, iOS 9 comes with a number of optimizations to reduce the space taken up by applications. Apple collectively refers to these improvements as app thinning, and it has three main aspects.

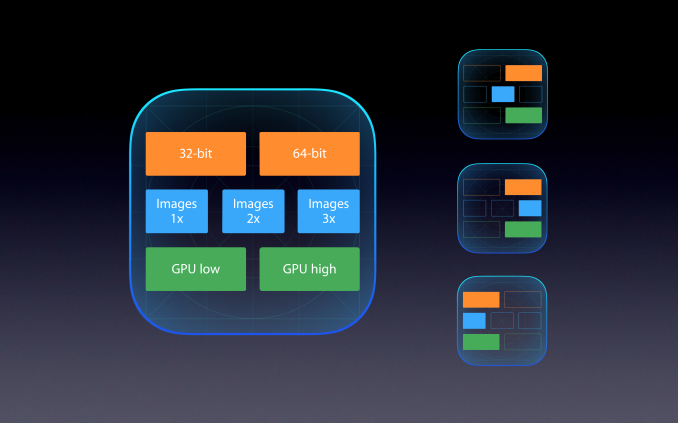

The first aspect of app thinning in iOS 9 is called app slicing. This refers to something that honestly should have been implemented five years ago: only including the assets that a device needs rather than shipping a package with assets for all devices. For example, current applications will come with 1x, 2x, and 3x scale bitmaps in order to work across every iOS device. Anyone who owned an iPad 2 may have noticed that app sizes inflated significantly after the release of the iPad 3, and this is because when apps were updated you needed to store all the 2x resolution bitmaps even though they were completely irrelevant to you. With the introduction of ARMv8 devices this problem has become even more significant, as app packages now include both 32-bit and 64-bit binaries. GPU shaders for different generations of PowerVR GPUs also contribute to package bloat.

App slicing means that applications will only include the assets they require to work on the device they are downloaded onto. The analogy Apple is using is that an application only needs a single slice of the assets that a developer has made. What's great about app slicing is that any application already using the default Xcode asset catalogs will automatically be compatible, and the App Store will handle everything else. Developers using custom data formats will need to make an asset catalog and opt into app slicing.
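Conceptually, slicing is a filter over the variants in a universal package, keyed on the device's traits. The Python sketch below models that with hypothetical variant names and sizes. The real selection happens on Apple's side from asset-catalog and build metadata, and covers more traits (such as GPU family) than the two modeled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One file variant in a universal package (names/sizes hypothetical)."""
    name: str
    size_mb: int
    scale: int = 0     # 1, 2 or 3 for bitmaps; 0 means "any scale"
    arch: str = "any"  # "armv7", "arm64" or "any"


@dataclass(frozen=True)
class Device:
    scale: int
    arch: str


def app_slice(device: Device, variants: list[Variant]) -> list[Variant]:
    """Keep only the variants this device can actually use."""
    return [
        v for v in variants
        if v.scale in (0, device.scale) and v.arch in ("any", device.arch)
    ]


def total_mb(variants: list[Variant]) -> int:
    return sum(v.size_mb for v in variants)


UNIVERSAL = [
    Variant("binary-armv7", 20, arch="armv7"),
    Variant("binary-arm64", 22, arch="arm64"),
    Variant("images@1x", 2, scale=1),
    Variant("images@2x", 6, scale=2),
    Variant("images@3x", 12, scale=3),
    Variant("shared-data", 10),
]

if __name__ == "__main__":
    devices = {
        "iPad 2 (1x, 32-bit)": Device(scale=1, arch="armv7"),
        "iPhone 6 Plus (3x, 64-bit)": Device(scale=3, arch="arm64"),
    }
    full = total_mb(UNIVERSAL)
    for label, device in devices.items():
        size = total_mb(app_slice(device, UNIVERSAL))
        print(f"{label}: {size} MB instead of {full} MB "
              f"({1 - size / full:.0%} smaller)")
```

The sizes are only there to make the filter concrete; they are not meant to reproduce Apple's own savings figures.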

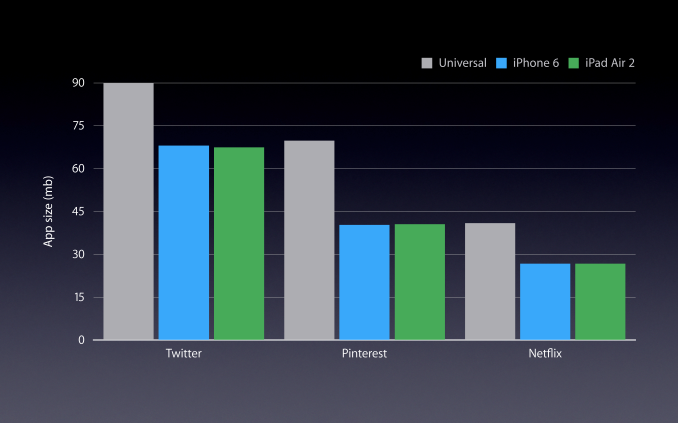

Apple's statistics show a significant reduction in app size when app thinning is used. Savings are typically in the range of 20-40%, but this will obviously depend on the application. I'm not trying to criticize what is really a good feature, but it's hard to believe that this is just now being implemented. It's likely that the introduction of the iPhone 6 Plus increased the need for this, as apps now need to include 3x assets, whereas previously having 1x assets in addition to the 2x ones wasn't a huge deal when most devices were retina and the 1x assets weren't very large.

The next aspect of app thinning is on-demand resources. This feature is fairly self-explanatory. Developers can specify files to be downloaded only when they're needed, and removed from local storage when they aren't in use. This can be useful for games with many levels, or for storing tutorial levels that most users will never end up using.
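The lifecycle described here (fetch on first use, keep while in use, allow purging once idle) can be modeled as below. On iOS the app-facing API is `NSBundleResourceRequest`, which works in terms of string tags assigned in Xcode. The Python `OnDemandStore` class, its method names, the sizes, and the oldest-first purge policy are all hypothetical; the real system decides on its own when to purge.

```python
class OnDemandStore:
    """Toy model of tag-based on-demand resources (not Apple's implementation)."""

    def __init__(self, catalog: dict[str, int], capacity_mb: int) -> None:
        self.catalog = catalog            # tag -> size in MB, hosted remotely
        self.capacity_mb = capacity_mb    # space this app may use on device
        self.local: dict[str, int] = {}   # tags currently on the device
        self.in_use: set[str] = set()

    def used_mb(self) -> int:
        return sum(self.local.values())

    def begin_accessing(self, tag: str) -> None:
        if tag not in self.local:
            self._make_room(self.catalog[tag])
            self.local[tag] = self.catalog[tag]  # "download" the tag's files
        self.in_use.add(tag)

    def end_accessing(self, tag: str) -> None:
        self.in_use.discard(tag)  # stays on disk but may now be purged

    def _make_room(self, needed_mb: int) -> None:
        # Purge idle tags, oldest download first, until the new tag fits.
        for tag in list(self.local):
            if self.used_mb() + needed_mb <= self.capacity_mb:
                return
            if tag not in self.in_use:
                del self.local[tag]
        if self.used_mb() + needed_mb > self.capacity_mb:
            raise RuntimeError("not enough space for this tag")


if __name__ == "__main__":
    store = OnDemandStore({"tutorial": 40, "level1": 60, "level2": 60}, 130)
    store.begin_accessing("tutorial")
    store.end_accessing("tutorial")   # tutorial finished, now purgeable
    store.begin_accessing("level1")
    store.begin_accessing("level2")   # needs room, so the tutorial is purged
    print(sorted(store.local), store.used_mb(), "MB on device")
```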

The third and final aspect of app thinning is bitcode. Bitcode is an intermediate representation of an application's binary, which allows the App Store to recompile and optimize an application for a specific device before it gets sent to the user. This means that the application will always be compiled using the latest compiler optimizations and can take advantage of optimizations specific to future processors. While I've mainly focused on how app thinning relates to trimming the space of applications, bitcode is targeted more toward thinning apps in the sense that they're always as fast as they can be.

Much like the situation with RAM on iOS devices, my favored solution to users having issues with storage is to start giving them more storage. With the iPhone 6s and 6s Plus still starting at 16GB it looks like the physical storage situation on iOS will remain unchanged for some time. At the very least, Apple is making an effort to come up with software improvements that will free up space on devices. This is still not an optimal fix, and even better would be implementing these changes and also giving users more storage, but any improvements are certainly welcome. Implementing them in ways that don't require much hassle on the part of developers is something they will certainly appreciate as well.

227 Comments

ama3654 - Wednesday, September 16, 2015

"In my view, the addition of multitasking just puts the iPad experience even farther ahead of other tablets. Obviously Windows has a similar implementation, but the unfortunate truth is that the Windows tablet market is almost non-existent at this point outside of the Surface lineup." I wonder why Samsung TouchWiz was not mentioned there, as it has a much better multitasking multi-split implementation together with the S-Pen, and Samsung tablets represent a majority of Android tablets.

Morawka - Wednesday, September 16, 2015

The Windows tablet market is the Surface market. Surface is a billion dollar a year business now, and Apple is obviously taking it personally because Surface was able to grab so much attention. It is a huge threat to the iPad because of its versatility. It makes iPads look like $600 Facebook/email machines when you have competitors running full-blown Photoshop and Illustrator in a similar form factor. Display out, USB drive support, SD camera card support, a file system so people can download and move files around between any machine: these are all things iOS can't do in its current form, while Android and Windows can and will take the whole prosumer market.

Think of what it will look like in 5-6 years when Intel Core i7s are running at 5W TDP and can do without a fan. Apple devices are about to hit a brick wall in performance improvements because new nodes are 2-3 years away. I would say that this is the last generation that will claim a 90% year-over-year performance gain. Apple so far has been lucky and has been getting a new node every year for the past 3 years.

Next year iPads/iPhones will maybe get 10-15% gains in CPU/GPU unless they make the silicon really big, which has lower yields. Meanwhile the Intel Surface will have Skylake and Kaby Lake, and Nvidia might be able to do something incredible once it finally gets access to 16nm FF on their 5W K lineup.

jmnugent - Wednesday, September 16, 2015

It's humorous how you believe chip/hardware advancements will benefit only one company (Microsoft). As if Apple, a company with such a respected history of hardware design and innovation, will just let itself fall behind on chip design. Hilarious.

kspirit - Wednesday, September 16, 2015

They'll still use iOS on the iPad and not OS X, so... yeah, Microsoft wins out on usability, unless Apple puts out a full-on laptop replacement. Until then your comment makes no sense.

Matthmaroo - Wednesday, September 16, 2015

Man, you have totally missed his point. Chip design and OS choice are totally different.

OCedHrt - Thursday, September 17, 2015

Considering that OS X runs on x86 and iOS doesn't, chip design and OS choice are not totally different. Of course they can port iOS to x86, but they have their work cut out for them.

JeremyInNZ - Friday, September 18, 2015

Porting iOS to x86 is simple, considering both OS X and iOS run on Darwin. In fact I suspect the iOS simulator that comes with Xcode is running natively on x86.

beggerking@yahoo.com - Tuesday, October 13, 2015

Trust me, it's neither simple nor efficient to emulate RISC on CISC or vice versa. Not happening. Period.

Kalpesh78 - Friday, September 25, 2015

As usual, Apple will be late to that party as well.

xype - Saturday, September 26, 2015

If you think Apple doesn’t have iOS compiling and running on x86, I have a bridge to sell you. Big, red one, in San Francisco. You should read up on OS X, its transition to x86, and where iOS came from.