The NVIDIA GeForce GTX 980 Ti Review

by Ryan Smith on May 31, 2015 6:00 PM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance, of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason (or sufficiently good performance) to ignore the noise.

As the GM200 flagship card, GTX Titan X gets the pick of the litter as far as GM200 GPUs go. GTX Titan X needs fully functional GM200 GPUs, and even then only those good enough to meet NVIDIA’s power requirements. GTX 980 Ti, on the other hand, is a cut-down/salvage card and gets second pick. So we expect its chips to be just a bit worse: they will have either functional units that came out of the fab damaged, or functional units that were turned off for power reasons.

GeForce GTX Titan X/980 Ti/980 Voltages

| GTX Titan X Boost Voltage | GTX 980 Ti Boost Voltage | GTX 980 Boost Voltage |
| --- | --- | --- |
| 1.162V | 1.187V | 1.225V |

Looking at voltages, we can see just that in our samples. GTX 980 Ti has a slightly higher boost voltage, 1.187V, than our GTX Titan X at 1.162V. NVIDIA sometimes bins its second-tier cards for lower voltage, but this isn’t something we’re seeing here. Nor is there necessarily a need to bin in such a manner, since the 250W TDP is unchanged from GTX Titan X.

GeForce GTX 980 Ti Average Clockspeeds

| Game | GTX 980 Ti | GTX Titan X |
| --- | --- | --- |
| Max Boost Clock | 1202MHz | 1215MHz |
| Battlefield 4 | 1139MHz | 1088MHz |
| Crysis 3 | 1177MHz | 1113MHz |
| Mordor | 1151MHz | 1126MHz |
| Civilization: BE | 1101MHz | 1088MHz |
| Dragon Age | 1189MHz | 1189MHz |
| Talos Principle | 1177MHz | 1126MHz |
| Far Cry 4 | 1139MHz | 1101MHz |
| Total War: Attila | 1139MHz | 1088MHz |
| GRID Autosport | 1164MHz | 1151MHz |
| Grand Theft Auto V | 1189MHz | 1189MHz |

The far more interesting story here is GTX 980 Ti’s clockspeeds. As we have pointed out time and time again, GTX 980 Ti’s gaming performance trails GTX Titan X by just a few percent, despite the fact that GTX 980 Ti is down 2 SMMs (22 versus 24) and has identical official clockspeeds. On paper that works out to a 9% performance difference, which we’re not seeing in the real world. So what’s going on?
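With the official clocks matching, the on-paper gap reduces to the ratio of SMM counts, assuming (as a simplification) that shader throughput scales linearly with the number of SMMs:

```latex
\frac{\text{SMM}_{\text{GTX Titan X}}}{\text{SMM}_{\text{GTX 980 Ti}}} = \frac{24}{22} \approx 1.091
```

That is roughly a 9% lead for GTX Titan X, or equivalently an 8.3% deficit for GTX 980 Ti (22/24 ≈ 0.917).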

The answer is that what GTX 980 Ti lacks in SMMs, it makes up for in clockspeeds. The card’s average clockspeeds are frequently two or more bins ahead of GTX Titan X’s, topping out at a 64MHz advantage under Crysis 3. All of this comes despite GTX 980 Ti having a lower maximum boost clock than GTX Titan X: it tops out one bin lower, at 1202MHz to GTX Titan X’s 1215MHz.
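To make the bin arithmetic concrete, the short Python sketch below recomputes each game’s clockspeed gap from the average-clockspeed table. It assumes one boost bin is roughly 13MHz, inferred from the 1215MHz to 1202MHz difference being described as a single bin; the steps between adjacent values in the table vary between 12MHz and 13MHz, so the bin counts are rounded estimates. The names `AVG_CLOCKS`, `BIN_MHZ`, and `clock_deltas` are illustrative only.

```python
# Average clockspeeds (MHz) from the table: (GTX 980 Ti, GTX Titan X)
AVG_CLOCKS = {
    "Battlefield 4": (1139, 1088),
    "Crysis 3": (1177, 1113),
    "Mordor": (1151, 1126),
    "Civilization: BE": (1101, 1088),
    "Dragon Age": (1189, 1189),
    "Talos Principle": (1177, 1126),
    "Far Cry 4": (1139, 1101),
    "Total War: Attila": (1139, 1088),
    "GRID Autosport": (1164, 1151),
    "Grand Theft Auto V": (1189, 1189),
}

# Assumption: one boost bin is ~13MHz (the 1215MHz vs 1202MHz max boost gap).
BIN_MHZ = 13


def clock_deltas(clocks):
    """Return {game: (delta_mhz, approx_bins)} for GTX 980 Ti minus GTX Titan X."""
    result = {}
    for game, (ti, titan) in clocks.items():
        delta = ti - titan
        result[game] = (delta, round(delta / BIN_MHZ))
    return result


if __name__ == "__main__":
    deltas = clock_deltas(AVG_CLOCKS)
    # List games from the largest clockspeed advantage to the smallest.
    for game, (mhz, bins) in sorted(deltas.items(), key=lambda kv: -kv[1][0]):
        print(f"{game:20s} +{mhz:3d}MHz (~{bins} bins)")
    biggest = max(deltas, key=lambda g: deltas[g][0])
    print("Largest gap:", biggest, deltas[biggest][0], "MHz")
```

Run as-is, it ranks Crysis 3 first at +64MHz (about five bins), with six of the ten games two or more bins apart.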

Ultimately, the higher clockspeeds are a result of the additional power and thermal headroom GTX 980 Ti picks up from halving the number of VRAM chips and disabling two SMMs. With those components no longer consuming power or generating heat, and with the TDP still at 250W, GTX 980 Ti can spend its power savings on boosting just a bit higher. This in turn compresses the performance gap between the two cards beyond what the specs suggest. Couple that with the fact that performance doesn't scale linearly with SMM count or clockspeed (you rarely lose the full theoretical amount of performance when shedding frequency or functional units), and the result is that GTX 980 Ti trails GTX Titan X by an average of just 3%.
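As a rough check using only the averages in the clockspeed table, the sketch below assumes (our simplification, not a measured relationship) that throughput is proportional to SMM count times average clockspeed. Under that assumption, GTX 980 Ti's higher clocks cut GTX Titan X's theoretical lead from about 9.1% to about 6.2%; the remaining distance to the measured 3% is consistent with performance not scaling linearly. The helper names are hypothetical.

```python
# Per-game average clockspeeds (MHz) from the table, same game order:
# BF4, Crysis 3, Mordor, Civ: BE, Dragon Age, Talos, Far Cry 4, Attila, GRID, GTA V
GTX_980_TI = [1139, 1177, 1151, 1101, 1189, 1177, 1139, 1139, 1164, 1189]
GTX_TITAN_X = [1088, 1113, 1126, 1088, 1189, 1126, 1101, 1088, 1151, 1189]

SMM_980_TI = 22  # 2 SMMs fewer than GTX Titan X
SMM_TITAN_X = 24


def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


def titan_x_advantage(clocks_ti, clocks_titan):
    """Theoretical GTX Titan X lead over GTX 980 Ti, under the simplifying
    assumption that throughput = SMM count x average clockspeed."""
    ti = SMM_980_TI * mean(clocks_ti)
    titan = SMM_TITAN_X * mean(clocks_titan)
    return titan / ti - 1


if __name__ == "__main__":
    print(f"Mean clocks: {mean(GTX_980_TI):.1f}MHz vs {mean(GTX_TITAN_X):.1f}MHz")
    print(f"Titan X lead at equal clocks:    {SMM_TITAN_X / SMM_980_TI - 1:.1%}")
    print(f"Titan X lead at measured clocks: {titan_x_advantage(GTX_980_TI, GTX_TITAN_X):.1%}")
```

The averages come out to 1156.5MHz for GTX 980 Ti and 1125.9MHz for GTX Titan X, roughly a 2.7% clockspeed edge for the cut-down card.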

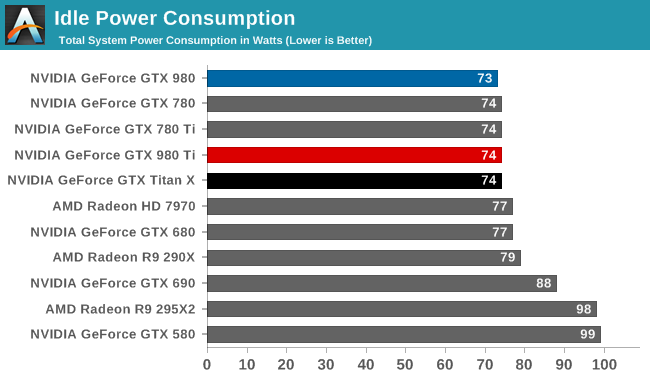

Starting off with idle power consumption, there's nothing new to report here. GTX 980 Ti performs just like GTX Titan X at 74W, which is second only to GTX 980, and then only by a single watt.

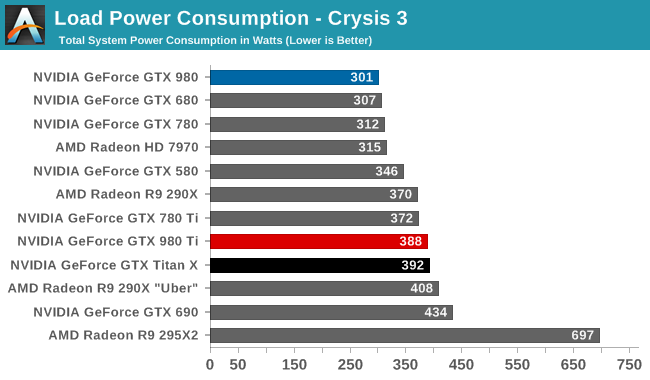

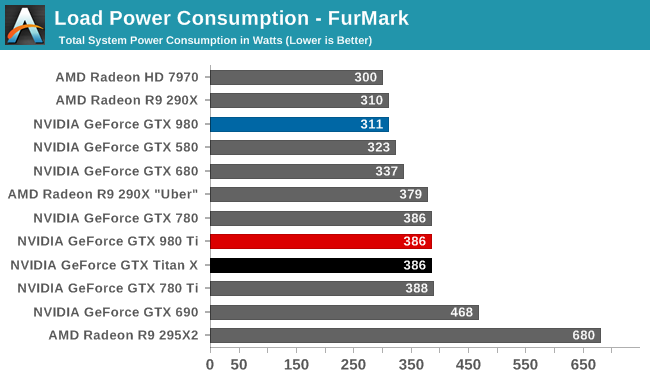

Meanwhile, load power consumption is also practically identical to GTX Titan X’s. With the same GPU on the same board operating at the same TDP, GTX 980 Ti ends up right where we expect it, next to GTX Titan X. GTX Titan X did very well as far as energy efficiency is concerned, setting a new bar for 250W cards, and GTX 980 Ti in turn does just as well.

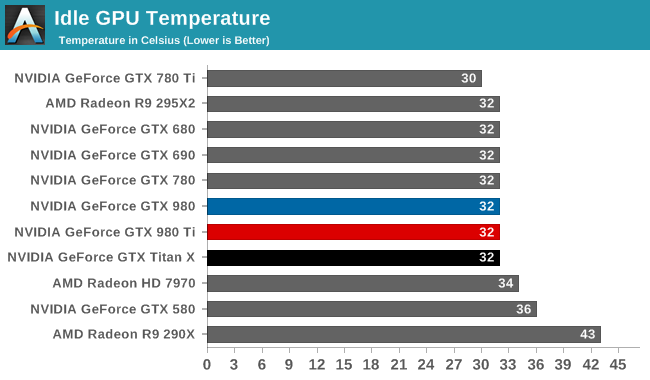

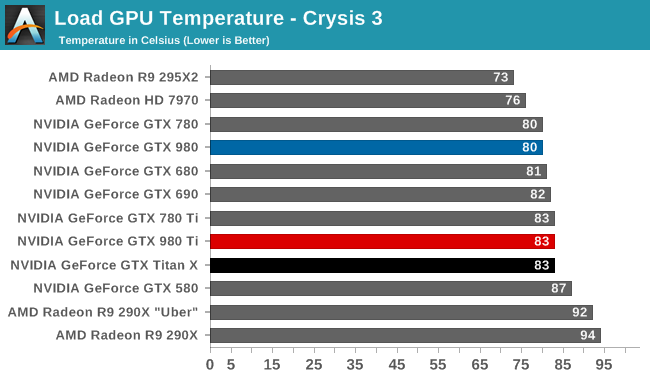

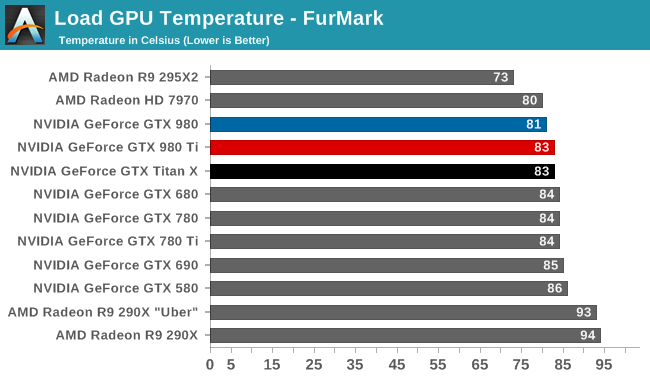

As was the case with power consumption, video card temperatures are similarly unchanged. NVIDIA’s metal cooler does a great job here, keeping temperatures low at idle while NVIDIA’s GPU Boost mechanism keeps temperatures from exceeding 83°C under full load.
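To illustrate the general idea of a temperature target, here is a deliberately simplified, hypothetical governor written in Python. It is not NVIDIA’s GPU Boost algorithm, whose internals are not described here. It assumes the card steps down one bin whenever the GPU is above 83°C and steps back up once it is more than an assumed 2°C below that target, moving only among the clockspeeds that appear in the table (1088MHz to the 1202MHz maximum boost clock). `BOOST_BINS_MHZ`, `HYSTERESIS_C`, and `next_bin` are illustrative names, not a real API.

```python
# Hypothetical, simplified temperature-target governor for illustration only.
# This is NOT NVIDIA's GPU Boost algorithm; thresholds and structure are assumed.

# Boost bins (MHz) that appear in the clockspeed table, lowest to highest.
# Real cards can drop below these; the list is limited to observed values.
BOOST_BINS_MHZ = [1088, 1101, 1113, 1126, 1139, 1151, 1164, 1177, 1189, 1202]

TEMP_TARGET_C = 83.0  # full-load ceiling cited in the text
HYSTERESIS_C = 2.0    # assumed margin below target before stepping back up


def next_bin(index: int, temp_c: float) -> int:
    """Return the bin index for the next control step at the given GPU temperature."""
    if temp_c > TEMP_TARGET_C:
        # Over target: drop one bin, but not below the lowest listed bin.
        return max(0, index - 1)
    if temp_c < TEMP_TARGET_C - HYSTERESIS_C:
        # Clear headroom: climb one bin, capped at the maximum boost clock.
        return min(len(BOOST_BINS_MHZ) - 1, index + 1)
    # Within the hysteresis band: hold the current bin.
    return index


if __name__ == "__main__":
    idx = len(BOOST_BINS_MHZ) - 1  # start at the 1202MHz maximum boost clock
    for temp in [78.0, 82.0, 84.0, 85.0, 83.0, 80.0]:
        idx = next_bin(idx, temp)
        print(f"{temp:.0f}C -> {BOOST_BINS_MHZ[idx]}MHz")
```

Run as-is, the trace holds 1202MHz while the GPU is cool, drops two bins to 1177MHz as it climbs past 83°C, and recovers one bin to 1189MHz once it cools to 80°C. The hysteresis band keeps the toy model from flipping between bins on every small temperature change.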

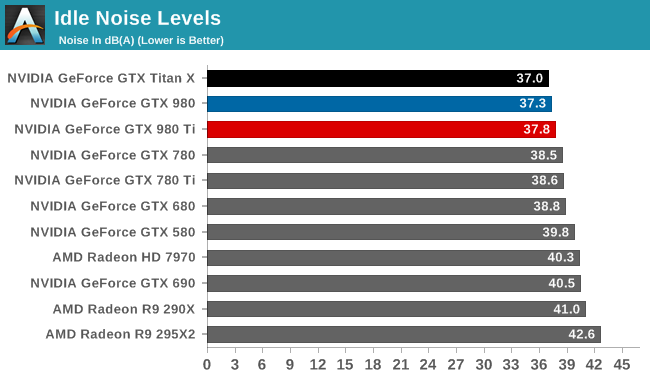

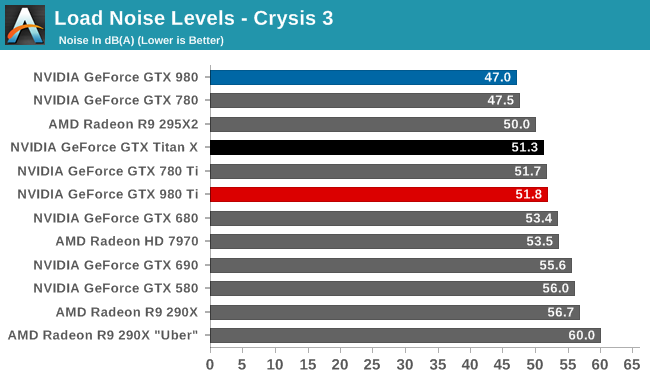

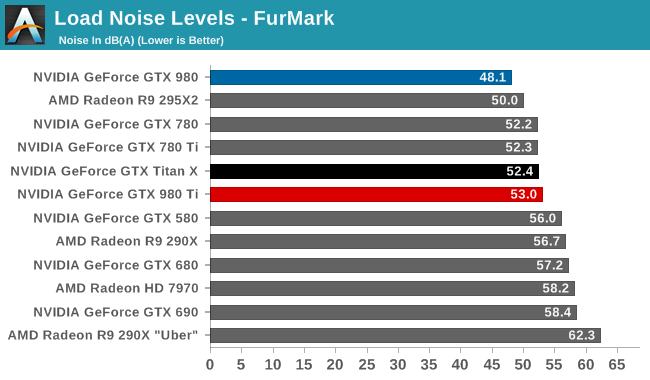

Finally, for noise, the situation is much the same. Though not expected, it’s also not all that surprising that GTX 980 Ti ends up doing a hair worse than GTX Titan X here. NVIDIA has not changed the fan curves or TDP, so this ultimately comes down to manufacturing variability in NVIDIA’s metal cooler, with our GTX 980 Ti faring ever so slightly worse than the Titan. That said, it's still right at the sweet spot for noise versus power consumption, dissipating 250W at no more than 53dB and once again proving the mettle of NVIDIA's metal cooler.

290 Comments


ComputerGuy2006 - Sunday, May 31, 2015

Well at $500 this would be 'acceptable', but paying this much for 28nm in mid 2015?

SirMaster - Sunday, May 31, 2015

Why do people care about the nm? If the performance is good isn't that what really matters?

Galaxy366 - Sunday, May 31, 2015

I think the reason people talk about nm is because a smaller nm means more graphical power and less usage.

ComputerGuy2006 - Sunday, May 31, 2015

Yeah, we also 'skipped' a generation, so it will be even a bigger bang... And with how old the 28nm is, it should be more mature process with better yields, so these prices look even more out of control.

Kevin G - Monday, June 1, 2015

Even with a mature process, producing a 601 mm^2 chip isn't going to be easy. The only larger chips I've heard of are ultra high end server processors (18 core Haswell-EX, IBM POWER8 etc.) which typically go for several grand a piece.

chizow - Monday, June 1, 2015

Heh, I guess you don't normally shop in this price range or haven't been paying very close attention. $650 is getting back to Nvidia's typical flagship pricing (8800GTX, GTX 280), they dropped it to $500 for the 480/580 due to economic circumstances and the need to regain marketshare from AMD, but raised it back to $650-700 with the 780/780Ti.

In terms of actual performance gains, the actual performance increases are certainly justified. You could just as easily be paying the same price or more for 28nm parts that aren't any faster (stay tuned for AMD's rebranded chips in the upcoming month).

extide - Monday, June 1, 2015

AMD will launch the HBM card on 400 series. 300 series is an OEM only series. ... just like ... wait for it .... nVidia's 300 series. WOW talk about unprecedented!

chizow - Monday, June 1, 2015

AMD already used that excuse...for the...wait for it...8000 series. Which is now the...wait for it....R9 300 OEM series (confirmed) and Rx 300 Desktop series (soon to underwhelm).

NvidiaWins - Thursday, June 18, 2015

RIGHT! AMD has been backpedaling for the last 3 years!

Morawka - Monday, June 1, 2015

980 was $549 at release.. So was the 780

Nvidia is charging $650 for the first few weeks, but when AMD's card drops, you'll see the 980 Ti get discounted down to $500.

Just wait for AMD's release and the price will have to drop.