Intel Broadwell Architecture Preview: A Glimpse into Core M

by Ryan Smith on August 11, 2014 12:01 PM ESTBroadwell GPU Architecture

While Intel’s tick products are relatively conservative on the CPU side, the same cannot be said for the GPU side. Although the same general tick-tock rules apply to GPUs as well as they do CPUs – the bigger architectural changes are on the tock – the embarrassingly parallel nature of graphics coupled with the density improvements from newer process nodes means that even in a tick Intel’s GPU improvements are going to be substantial. And Broadwell will be no exception.

From a high level overview, Broadwell’s GPU is a continuation of the Intel Gen7 architecture first pioneered in Ivy Bridge and further refined for Gen7.5 in Haswell. While there are some important underlying changes that we’ll get to in a moment, at a fundamental level this is still the same GPU architecture that we’ve seen from Intel for the last two generations, just with more features, more polish, and more optimizations than ever before.

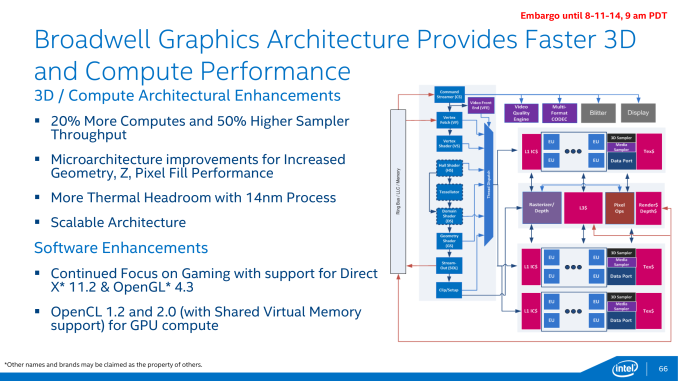

In terms of functionality Broadwell’s GPU has been upgraded to support the latest and greatest graphics APIs, an important milestone for Intel as this means their iGPU is now at feature parity with iGPUs and dGPUs from AMD and NVIDIA. With support for Direct3D feature level 11_2 and Intel’s previous commitment to Direct3D 12, Intel no longer trails AMD and NVIDIA in base features; in fact with FL 11_2 support they’re even technically ahead of NVIDIA’s FL 11_0 Kepler and Maxwell architectures. FL 11_2 is a rather minor update in the long run, but support for it means that Intel now supports tiled resources and pre-compiled shader headers.

Meanwhile on the compute front, Intel has confirmed that Broadwell’s GPU will offer support for OpenCL 2.0, including OpenCL’s shared virtual memory. OpenCL 2.0 will bring with it several improvements that allow GPUs to be more robust compute devices, and though Intel doesn’t have a programming paradigm comparable to AMD’s HSA, SVM none the less affords Intel and OpenCL programmers the chance to better leverage Broadwell’s CPU and GPU together by directly sharing complex data structures rather than copying them around.

Digging deeper however quickly exposes that Intel hasn’t left their GPU architecture entirely alone. Broadwell-Y, like Haswell-Y before it, implements a single slice configuration of Intel’s GPU architecture. However the composition of a slice will be changing for Broadwell, and this will have a significant impact on the balance between various execution units.

| Low Level Architecture Comparison | |||||||||||||||||

| AMD GCN | NVIDIA Maxwell | Intel Gen7.5 Graphics | Intel Gen8 Graphics | ||||||||||||||

| Building Block | GCN Compute Unit | Maxwell SMM | Sub-Slice | Sub-Slice | |||||||||||||

| Shader Building Block | 16-wide Vector SIMD | 32-wide Vector SIMD | 2 x 4-wide Vector SIMD | 2 x 4-wide Vector SIMD | |||||||||||||

| Smallest Implementation | 4 SIMDs | 4 SIMDs | 10 SIMDs | 8 SIMDs | |||||||||||||

| Smallest Implementation (ALUs) | 64 | 128 | 80 | 64 | |||||||||||||

In Haswell-Y Intel used a GT2 configuration, which was composed of a single slice that in turn contained 2 sub-slices. In Intel’s GPU architecture the sub-slice is the smallest functional building block of the GPU, containing the EUs (shaders) along with caches and texture/data/media samplers. Each EU in turn was composed of 2 4-wide vector SIMDs, with 10 EUs per sub-slice.

For Broadwell Intel is not changing the fundamental GPU architecture, but they are rebalancing the number of EUs per sub-slice and increasing the number of sub-slices overall. As compared to Haswell, Broadwell’s sub-slices will contain 8 EUs per sub-slice, with a complete slice now containing 3 sub-slices. Taken altogether this means that whereas Haswell-Y was a 2x10EU GPU, Broadwell-Y will be a 3x8EU GPU.

The ramifications of this is that not only is the total number of EUs increased by 20% from 20 to 24, but Intel has greatly increased the ratio of L1 cache and samplers relative to EUs. There is now 25% more sampling throughput per EU, with a total increase in sampler throughput (at identical clockspeeds) of 50%. By PC GPU standards increases in the ratio of samplers to EUs is very rare, with most designs decreasing that ratio over the years. The fact that Intel is increasing this ratio is a strong sign that Haswell’s balance may have been suboptimal for modern workloads, lacking enough sampler throughput to keep up with its shaders.

Moving on, along with the sub-slices front end and common slice are also receiving their own improvements. The common slice – responsible for housing the ROPs, rasterizer, and a port for the L3 cache – is receiving some microarchitecture improvements to further increase pixel and Z fill rates. Meanwhile the front end’s geometry units are also being beefed up to increase geometry throughput at that end.

Much like overall CPU performance, Intel isn’t talking about overall GPU performance at this time. Between the 20% increase in shading resources and 50% increase in sampling resources Broadwell’s GPU should deliver some strong performance gains, though it seems unlikely that it will be on the order of a full generational gain (e.g. catching up to Haswell GT3). What Intel is doing however is reiterating the benefits of their 14nm process in this case, noting that because 14nm significantly reduces GPU power consumption it will allow for more thermal headroom, which should further improve both burst and sustained GPU performance in TDP-limited scenarios relative to Haswell.

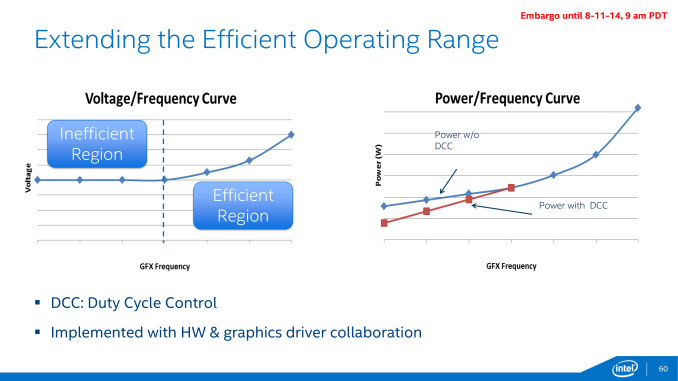

14nm isn’t the only technique Intel has to optimize power consumption on Broadwell’s GPU, which brings us to Broadwell’s final GPU technology improvement: Duty Cycle Control. While Intel has been able to clamp down on GPU idle power consumption over the years, they are increasingly fighting the laws of physics in extracting more idle power gains. At this point Intel can significantly scale down the frequency and operating voltage of their GPU, but past a point this offers diminishing returns. Transistors require a minimum voltage to operate – the threshold voltage – which means that after a certain point Intel can no longer scale down their voltage (and hence idle power consumption) further.

Intel’s solution to this problem is both a bit brute force and a bit genius, and is definitely unlike anything else we’ve seen on PC GPUs thus far. Since Intel can’t reduce their idle voltage they are going to start outright turning off the GPU instead; the process of duty cycling. By putting the GPU on a duty cycle Intel can run the GPU for just a fraction of the time – down to 12.5% of the time – which gets around the threshold voltage issue entirely.

This duty cycling is transparent to applications and the end user, with the display controller decoupled from the GPU clock domain and always staying online so that attached displays are always being fed regardless of what the GPU itself is doing. Control of the duty cycle is then handled through a combination of the GPU hardware and Intel’s graphics drivers, so both components will play a part in establishing the cycle.

Because today’s preview is Broadwell-Y centric, it’s unclear whether GPU duty cycle control is just a Broadwell-Y feature or whether it will be enabled in additional Broadwell products. Like many of Intel’s announced optimizations for Broadwell, duty cycle control is especially important for the TDP and battery life constrained Y SKU, but ultimately all mobile SKUs would stand to benefit from this feature. So it will be interesting to see just how widely it is enabled.

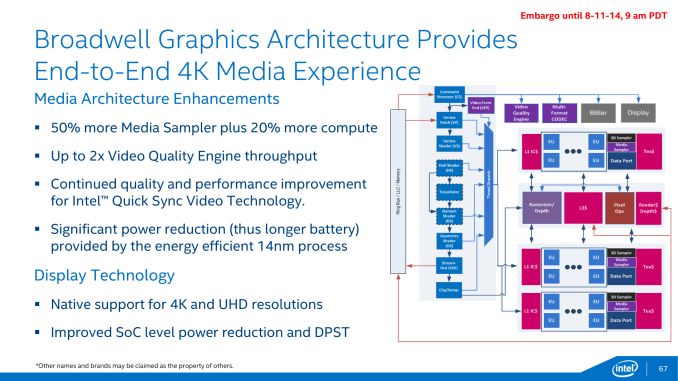

Moving on, last but not least in our GPU discussion, Intel is also upgrading their GPU’s media capabilities for Broadwell. The aforementioned increase in sub-slices and the resulting increase in samplers will have a direct impact on the GPU’s video processing capabilities – the Video Quality Engine and QuickSync – further increasing the throughput of each of them, up to 2x in the case of the video engine. Intel is also promising quality improvements in QuickSync, though they haven’t specified whether this is from technical improvements to the encoder or having more GPU resources to work with.

Broadwell’s video decode capabilities will also be increasing compared to Haswell. On top of Intel’s existing codec support, Broadwell will be implementing a hybrid H.265 decoder, allowing Broadwell to decode the next-generation video codec in hardware, but not with the same degree of power efficiency as H.264 today. In this hybrid setup Intel will be utilizing both portions of their fixed function video decoder and executing decoding steps on their shaders in order to offer complete H.265 decoding. The use of the shaders for part of the decoding process is less power efficient than doing everything in fixed function hardware but it’s better than the even less optimal CPU.

The use of a hybrid approach is essentially a stop-gap solution to the problem – the lead time on the finalization of H.265 would leave little time to develop a fixed function encoder for anyone with a long product cycle like Intel – and we expect that future generation products will have a full fixed function decoder. In the meantime Intel will be in the company of other GPU manufacturers such as NVIDIA, who is using a similar hybrid approach for H.265 on their Maxwell architecture.

Finally, Broadwell’s display controller will be receiving an update of its own. Broadwell is too soon for HDMI 2.0 or DisplayPort 2.0 – it will support HDMI 1.4 and DP 1.2/eDP 1.3a respectively – but the Y SKU in particular is getting native support for 4K. This is admittedly something of a backport since Haswell already supports 4K displays, but in Haswell’s case that feature was not available on Haswell-Y, so this is the first time native 4K support has come to a Y series SKU. This means that Broadwell-Y will be able to drive 4K displays, whether that means a 4K display in the device itself, or a 4K display hooked up internally (with an overall limit of 2 displays on Broadwell-Y). Don’t expect Broadwell-Y to have the performance necessary to do intensive rendering at this resolution, but for desktop work and video playback this should be enough.

158 Comments

View All Comments

crispbp04 - Monday, August 11, 2014 - link

Intel has something impressive in the works with Broadwell (at least on paper). I can't wait to get a Broadwell based Surface Pro. Assuming that Microsoft improves an already impressive hardware design from the sp3, the Broadwell iteration will likely be my next computer purchase.frostyfiredude - Monday, August 11, 2014 - link

I have a feeling SP4 will be fundamentally the same design as SP3 save for minor tweaks and improvements. SP3 was clearly designed for a processor with the kind of power profile Broadwell is set to deliver rather than the current Haswell profile. It will be interesting to see which set of SKUs Microsoft will put in the SP4, Core M or Broadwell ULT. Core M has a number of obvious benefits for power and area efficiency, but will it be powerful enough for their market with some features reduced from Haswell and Boradwell ULT.MonkeyPaw - Monday, August 11, 2014 - link

Pure speculation, but I think Intel might already be giving MS premium bins of Haswell for SP3, because SP3 is the only device to date to actually show off the ability to run premium Intel CPUs in a tablet format. Sure, MBA looks great, but SP3 took it to the next level.That said, I doubt that MS will use Core M in SP4, for the same reason we don't have Haswell-Y in SP3 (at least at the high end). It will probably be a step back in processing power to use one.

frostyfiredude - Tuesday, August 12, 2014 - link

Do we know what wattage the Broadwell ULT and Core M chips will be targeting? 15W TDP is clearly too high for the SP3 to handle so moving all SP4 chips to 11.5W like the current Haswell Y looks quite plausible at the moment, it just seems to be a matter of which version of Broadwell will have the 11.5W TDP.Samus - Wednesday, August 13, 2014 - link

15W is only a problem in SP3 to people who use it like a high performance computer (24x7 full load applications) but for general purpose use it barely warms up. We have people running Lightroom 8 hours a day on these things and like the Surface 2's (which I still have) they never got "hot" or "loud".That said, someone in the office infected their SP3 with some malware a few weeks ago (they literally owned the tablet not even 24 hours) and when they handed it to me, it was VERY hot with the fans whirling. Some 800kb task was using 100% of their CPU doing who knows what...at first I thought it was Cryptolocker but it turned out to be retrying a network connection. This was an i5 model, however, and it didn't seem to be throttling. The i3 will presumable run cooler, even at the same TDP.

What people need to keep in mind is these are mobile devices.

IntelUser2000 - Wednesday, August 13, 2014 - link

Broadwell ULT: 15WCore M(previously Broadwell-Y): 4.5W

vlad0 - Friday, August 15, 2014 - link

Isn't the core i3 version of the sp3 based on a Y series chip ?bebimbap - Monday, August 11, 2014 - link

agreed, Broadwell, and skylake will be vast improvements to PCs in general. Intel's Broadwell-Y announcement is all about "small, cool, efficient" while the recent FX-9590 seems more about "big, hot, gluttony" similar to the David vs Goliath story, the interesting part was the small one besting the big one. Ironically Intel is the bigger company. Hopefully AMD's new A1100 pans out as I don't want another Comcast, Microsoft, De Beers or Luxottica.wurizen - Monday, August 11, 2014 - link

well, if amd was as agressive as intel in shrinking dies or whathaveyou, then an AMD FX chip will probably be toe-to-toe to an intel i7-4930k or whatever the 6-core enthusiast intel chip is labeled. and not even die shrinks, but, also aggressive in producing a $500 cpu. imagine that. and you'd probably see an a10-7850k performance in a laptop by now. but, AMD seems content is sitting back and letting the other company do all the work, creating a path. as long as AMD doesn't completely die out, it's fine. we just need an alternative and AMD is the only one. so, go AMD. don't worry about broadwell. build it and we will come. be a niche. convert future x99 users to a future AMD product. and start from there.StevoLincolnite - Monday, August 11, 2014 - link

Except AMD can't be aggressive at shrinking dies.For one, die-shrinks costs money... For fab contracts, man-hours, research and possibly buying technology from other companies such as IBM.

AMD can't aggressively shrink dies anyway, they are at the mercy of fabrication companies like TSMC and Global Foundries, so what they can produce is limited to what they provide.

Intel has always been ahead of the industry in fabrication, the only way AMD can beat Intel is through something ground breaking (Like moving away from silicon?) or if Intel drops the ball, like they did with Netburst.

Or, AMD buys a fab company who is far ahead of Intel, which simply isn't going to happen.

Otherwise they can only compete on price and using an older more mature fabrication process allows them to do just that as the chips are much cheaper to produce, they just need to provide "Good enough" performance to mostly stay relevant, which the FX doesn't really do.