The Impact of Disruptive Technologies on the Professional Storage Market

by Johan De Gelas on August 5, 2013 9:00 AM EST. Posted in IT Computing, SSDs, Enterprise, Enterprise SSDs

Nutanix: No More SAN

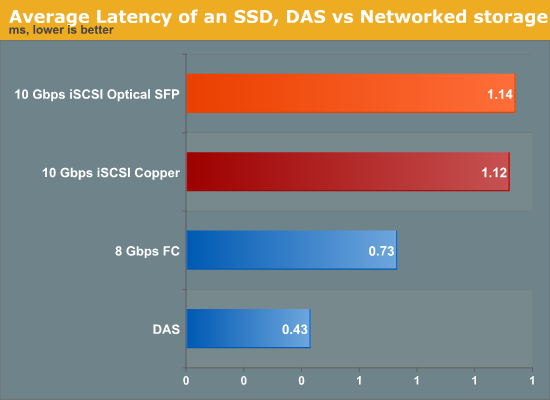

It is no secret that although a SAN offers all the virtues of centralized data, network storage carries a bandwidth and latency penalty. By attaching a flash array directly to a system (DAS), we can measure the extra latency that a SAN (Storage Area Network) adds. It amounts to between 0.3 and 0.8 ms, depending on whether you use Fibre Channel or iSCSI over copper wires.

So the minimal latency was 50% to 100% higher in a lightly loaded SAN than when the same SSD was running inside the server. And that is only the minimal latency: it can quickly grow to several milliseconds when the network load goes up.
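
To make the relationship between the absolute penalty (0.3 to 0.8 ms) and the relative one (50% to 100%) concrete, here is a minimal sketch. The local latencies used below (0.6 ms and 0.8 ms) are hypothetical values chosen only so that the arithmetic lines up with the quoted percentages; they are not measurements from the article.

```python
def relative_overhead_pct(local_ms: float, network_penalty_ms: float) -> float:
    """Percentage by which latency rises when a network penalty is added
    on top of the latency of the same device attached locally."""
    return network_penalty_ms / local_ms * 100


# (local latency, network penalty) pairs in milliseconds.
# The local latencies are assumptions for illustration only.
scenarios = [(0.6, 0.3), (0.8, 0.8)]

for local_ms, penalty_ms in scenarios:
    pct = relative_overhead_pct(local_ms, penalty_ms)
    print(f"local {local_ms} ms + {penalty_ms} ms penalty = {pct:.0f}% higher")
```

The same function shows why the penalty matters most for flash: the lower the local latency, the larger the share of total latency that the network contributes.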

Nutanix believes that virtualized servers should use local storage, clustered together in a virtual storage pool. Each of the virtual machines connects to a storage VM. That storage VM is typically an iSCSI target inside a VM, also called a VSA or Virtual Storage Appliance. The VSAs on the server nodes are clustered together by the Nutanix Distributed File System (NDFS). NDFS makes sure that if one node dies, the other nodes are still able to access the files they need to run.

The VSA also leverages the latest flash technology. The most accessed data resides on Fusion-IO or Intel S3700 SSDs, depending on the Nutanix node model. The “colder” (not frequently accessed) data is automatically moved to the terabyte-class SATA disks. It's basically another level of caching, only with larger data caches than we see in the desktop world.
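
The hot/cold placement described above can be illustrated with a toy two-tier store. This is a sketch of the general idea only, not Nutanix's actual algorithm: the class name `TieredStore`, the access-count policy, and the promote-on-read behavior are all assumptions made for illustration.

```python
from collections import Counter


class TieredStore:
    """Toy two-tier store: a small 'flash' tier for hot blocks and a
    large 'disk' tier for everything else (hypothetical policy)."""

    def __init__(self, flash_capacity: int):
        self.flash_capacity = flash_capacity
        self.flash = {}         # block_id -> data (hot tier)
        self.disk = {}          # block_id -> data (cold tier)
        self.hits = Counter()   # read count per block

    def write(self, block_id, data):
        # Update in place if the block is already hot; otherwise new data
        # lands on the cold tier and is promoted once it proves hot.
        if block_id in self.flash:
            self.flash[block_id] = data
        else:
            self.disk[block_id] = data

    def read(self, block_id):
        self.hits[block_id] += 1
        if block_id in self.flash:
            return self.flash[block_id]
        data = self.disk[block_id]
        self._maybe_promote(block_id)
        return data

    def _maybe_promote(self, block_id):
        # Free space on flash: promote immediately.
        if len(self.flash) < self.flash_capacity:
            self.flash[block_id] = self.disk.pop(block_id)
            return
        # Flash is full: swap with the least-read hot block, but only if
        # the candidate has been read more often than that block.
        coldest = min(self.flash, key=lambda b: self.hits[b])
        if self.hits[block_id] > self.hits[coldest]:
            self.disk[coldest] = self.flash.pop(coldest)
            self.flash[block_id] = self.disk.pop(block_id)


store = TieredStore(flash_capacity=1)
store.write("a", "A")
store.write("b", "B")
for _ in range(3):
    store.read("a")      # "a" becomes hot and is promoted to flash
for _ in range(4):
    store.read("b")      # "b" eventually out-reads "a" and replaces it
```

A real tiering engine would also age out old access counts and move data in the background; the sketch leaves that out to keep the promotion logic visible.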

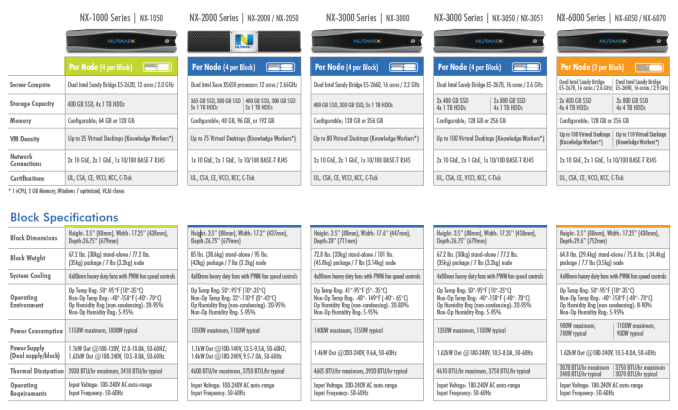

Using what seems to be a Supermicro Twin or Twin² chassis, even an entry-level four-node Nutanix NX-3050 should support up to 400 virtual desktops with a power consumption of about 1.1 kW. Compare this with a SAN, where a single midrange array typically needs 700W on its own, and you probably need several expansion modules before you can even think about supporting 400 virtual desktops.
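
As a quick back-of-the-envelope check of those figures, the sketch below divides the quoted power numbers by the 400 desktops. All inputs come from the paragraph above; note that the SAN figure covers a single midrange array only, without expansion modules or the servers that would run the desktops, so it understates the traditional setup.

```python
def per_desktop_watts(total_watts: float, desktops: int) -> float:
    """Average power budget per virtual desktop, in watts."""
    return total_watts / desktops


DESKTOPS = 400
NODES = 4
NUTANIX_CLUSTER_WATTS = 1100   # "about 1.1 kW" for the four-node NX-3050
SAN_ARRAY_WATTS = 700          # one midrange array, storage only

nutanix_w = per_desktop_watts(NUTANIX_CLUSTER_WATTS, DESKTOPS)
san_storage_only_w = per_desktop_watts(SAN_ARRAY_WATTS, DESKTOPS)

print(f"Nutanix, compute + storage: {nutanix_w:.2f} W per desktop")
print(f"SAN array alone (no servers): {san_storage_only_w:.2f} W per desktop")
print(f"Desktops per node: {DESKTOPS // NODES}")
```

The SAN array alone already consumes well over half of the per-desktop budget of the complete Nutanix cluster, before a single server has been added.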

Unfortunately, we cannot verify the claims of Nutanix right now, but our experience tells us that from a power and performance point of view it will be very hard for the typical "server plus SAN infrastructure" to beat the much simpler “integrate everything inside a dense server” platform. The only disadvantage is that the number of DIMM slots inside such server nodes is limited. That is why even the largest Nutanix hosts do not support more than 256GB of memory per node, which might be a limitation in some virtualization environments.

Starting at $22,000 per node, the Nutanix nodes are hardly cheap, but since you don’t need a SAN, the total investment is a lot lower than with the traditional approach, especially for virtual desktops. Nutanix seems to have convinced quite a few people: it claims to be the fastest growing IT infrastructure startup ever, with an $80 million annual run rate. Now the company just needs to prove it has the reliability and support infrastructure to win over additional customers.


60 Comments


Jammrock - Monday, August 5, 2013 - link

Great write up, Johan.

The Fusion-IO ioDrive Octal was designed for the NSA. These babies are probably why they could spy on the entire Internet without ever running low on storage IO. Unsurprisingly that bit about the Octal being designed for the US government is no longer on their site :)

Seemone - Monday, August 5, 2013 - link

I find the lack of ZFS disturbing.

Guspaz - Monday, August 5, 2013 - link

Yeah, you could probably get pretty far throwing a bunch of drives into a well configured ZFS box (striped raidz2/3? Mirrored stripes? Balance performance versus redundancy and take your pick) and throwing some enterprise SSDs in front of the array as SLOG and/or L2ARC drives.

In fact, if you don't want to completely DIY, as many enterprises don't, there are companies selling enterprise solutions doing exactly this. Nexenta, for example (who also happen to be one of the lead developers behind modern opensource ZFS), sell enterprise software solutions for this. There are other companies that sell hardware solutions based on this and other software.

blak0137 - Monday, August 5, 2013 - link

Another option for this would be to go directly to Oracle with their ZFS Storage Appliances. This gives companies the very valuable benefit of having hardware and software support from the same entity. They also tend to undercut the entrenched storage vendors on price as well.

davegraham - Tuesday, August 6, 2013 - link

*cough* it may be undercut on the front end but maintenance is a typical Oracle "grab you by the chestnuts" type thing.

Frallan - Wednesday, August 7, 2013 - link

More like "grab you by the chestnuts - pull until they rip loose and shove em up where they don't belong" - type of thing...

davegraham - Wednesday, August 7, 2013 - link

I was being nice. ;)

equals42 - Saturday, August 17, 2013 - link

And perhaps lock you into Larry's platform so he can extract his tribute for Oracle software? I think I've paid for a week of vacation on Ellison's Hawaiian island.

Everybody gets their money to appease shareholders somehow. Either maintenance, software, hardware or whatever.

Brutalizer - Monday, August 5, 2013 - link

Discs have grown bigger, but not faster. Also, they are neither safer nor more resilient to data corruption. Large amounts of data will have data corruption. The more data, the more corruption. NetApp has some studies on this. You need new solutions that are designed from the ground up to combat data corruption. Research papers show that ntfs, ext, etc and hardware raid are vulnerable to data corruption. Research papers also show that ZFS does protect against data corruption. You can find all the papers in the Wikipedia article on ZFS, including papers from NetApp.

Guspaz - Monday, August 5, 2013 - link

It's worth pointing out, though, that enterprise use of ZFS should always use ECC RAM and disk controllers that properly report when data has actually been written to the disk. For home use, neither is really required.