The Great Equalizer 3: How Fast is Your Smartphone/Tablet in PC GPU Terms

by Anand Lal Shimpi on April 4, 2013 1:00 AM EST - Posted in

- Tablets

- Smartphones

- Mobile

- GPUs

- SoCs

GL/DXBenchmark 2.7 & Final Words

While the 3DMark tests were all run at 720p, the GL/DXBenchmark tests run at 1080p, which is 2.25x the pixel count. GL/DXBenchmark 2.7 gives us a mixture of low level and simulated game benchmarks; the former isn't something 3DMark offers across all platforms today. The game simulation tests are also far more strenuous, which should do a better job of putting all of this in perspective. The other benefit of moving to Kishonti's test is the ability to compare against iOS and Windows RT as well. There will be a 3DMark release for both of those platforms this quarter; we just don't have final software yet.
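The 2.25x figure follows directly from the two resolutions. A quick check, assuming the standard 1280x720 and 1920x1080 frame dimensions for 720p and 1080p:

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels rendered per frame at a given resolution."""
    return width * height


# Standard 720p and 1080p frame dimensions
p720 = pixel_count(1280, 720)    # resolution used for the 3DMark runs
p1080 = pixel_count(1920, 1080)  # resolution used for GL/DXBenchmark 2.7

ratio = p1080 / p720
print(f"720p: {p720:,} px, 1080p: {p1080:,} px, ratio: {ratio:.2f}x")
# prints "720p: 921,600 px, 1080p: 2,073,600 px, ratio: 2.25x"
```

Every frame in the GL/DXBenchmark runs therefore asks each GPU to shade 2.25x as many pixels as it did in 3DMark.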

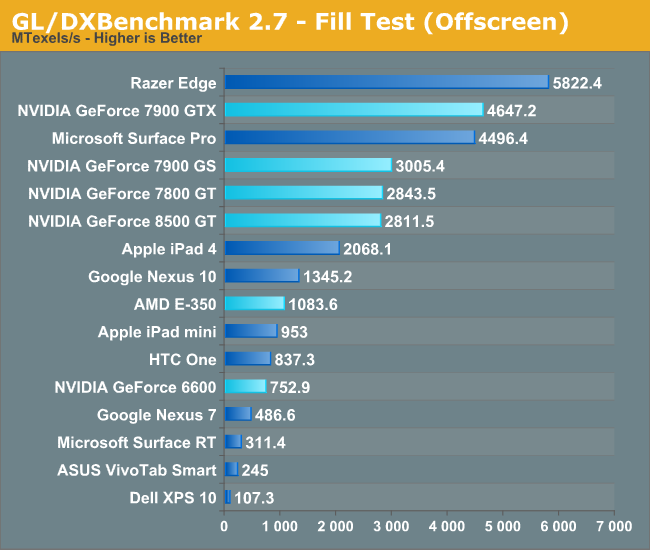

We'll start with the low level tests, beginning with Kishonti's fill rate benchmark:

Looking at raw pixel pushing power, everything after Apple's A5 seems to have displaced NVIDIA's GeForce 6600. NVIDIA's Tegra 3 doesn't appear to be quite up to snuff with the NV4x class of hardware here, despite similarities in the architectures. Both ARM's Mali-T604 (Nexus 10) and ImgTec's PowerVR SGX 554MP4 (iPad 4) do extremely well here. Both deliver higher fill rate than AMD's Radeon HD 6310, and in the case of the iPad 4, the SoC is capable of delivering midrange desktop GPU class performance from 2004-2005.
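For context, peak fill rate is usually estimated as the number of pixel (or texture) units multiplied by the core clock; a fill rate benchmark like Kishonti's then shows how much of that peak a GPU actually sustains. A minimal sketch of the estimate; the unit counts and clocks below are hypothetical and do not describe any GPU in the chart:

```python
def theoretical_fill_rate(units: int, core_clock_mhz: float) -> float:
    """Peak fill rate in megapixels (or megatexels) per second.

    Assumes every unit retires one pixel/texel per clock. Real hardware
    rarely sustains this, so measured results land below the peak.
    """
    return units * core_clock_mhz


# Hypothetical GPUs, for illustration only
gpu_a = theoretical_fill_rate(units=4, core_clock_mhz=300)  # 1200 MPix/s
gpu_b = theoretical_fill_rate(units=8, core_clock_mhz=250)  # 2000 MPix/s
print(f"GPU B peak is {gpu_b / gpu_a:.2f}x GPU A")
```

Note that a wider, lower clocked design can still come out ahead, which is one way small mobile GPUs can match older desktop parts on this metric.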

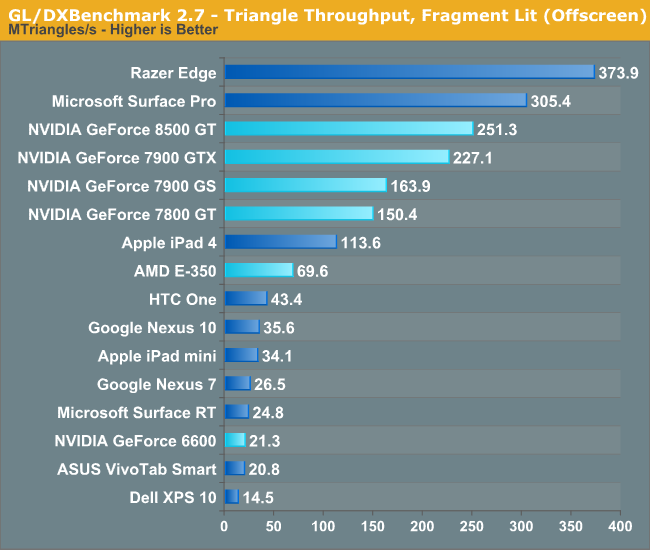

Next we'll look at raw triangle throughput. The vertex shader bound test from 3DMark did some funny stuff to the old G7x based architectures, but GL/DXBenchmark 2.7 seems to be a bit kinder:

Here the 8500 GT clearly benefits from its unified architecture: it can direct all of its compute resources toward the task at hand, giving it better performance than the 7900 GTX. The G7x and NV4x based architectures, unfortunately, have limited vertex shader hardware and suffer as a result. That being said, most of the higher end G7x parts are still a bit too much for the current crop of ultra mobile GPUs. The midrange NV4x hardware, however, isn't. The GeForce 6600 delivers triangle throughput just south of the two Tegra 3 based devices (Surface RT, Nexus 7).
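The unified-versus-split argument can be illustrated with a toy model: in a vertex-bound test, only units that can run vertex work contribute, so pixel shader units on a split design sit idle. All unit counts, clocks, and per-unit rates below are hypothetical and are not a simulation of the 8500 GT or 7900 GTX:

```python
def vertex_throughput(vertex_capable_units: int,
                      verts_per_unit_per_clock: float,
                      clock_mhz: float) -> float:
    """Peak vertices per second (in millions) when only
    vertex_capable_units can process vertex work."""
    return vertex_capable_units * verts_per_unit_per_clock * clock_mhz


# Hypothetical split design: 8 vertex units + 24 pixel units (32 total).
# In a vertex-bound test the 24 pixel units cannot help.
split = vertex_throughput(vertex_capable_units=8,
                          verts_per_unit_per_clock=0.25, clock_mhz=500)

# Hypothetical unified design: only 16 units total, but all can run vertex work.
unified = vertex_throughput(vertex_capable_units=16,
                            verts_per_unit_per_clock=0.25, clock_mhz=500)

print(f"split: {split:.0f} Mverts/s, unified: {unified:.0f} Mverts/s")
```

Despite having half as many total units, the unified design in this model doubles vertex throughput, which mirrors the pattern in the chart.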

Apple's iPad 4 even delivers better performance here than the Radeon HD 6310 (E-350).

ARM's Mali-T604 doesn't do very well in this test, but none of ARM's Mali architectures have been particularly impressive in the triangle throughput tests.

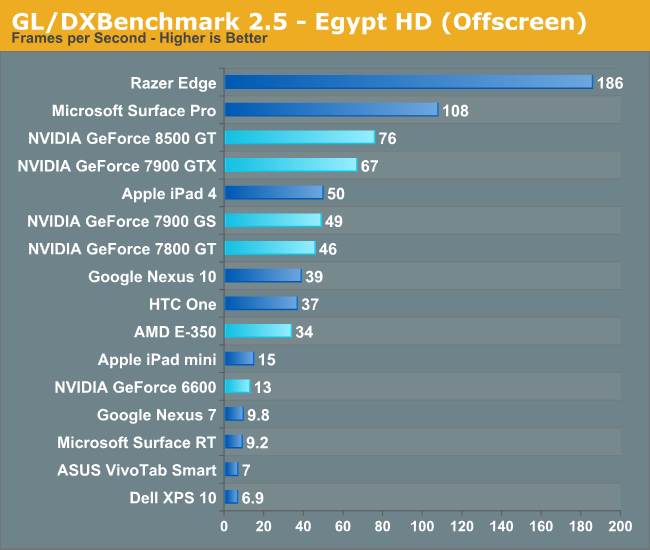

With the low level tests out of the way, it's time to look at the two game scenes. We'll start with the less complex of the two, Egypt HD:

Now we have what we've been looking for. The iPad 4 delivers performance similar to the GeForce 7900 GS and 7800 GT, which by extension means it should be able to outperform a 6800 Ultra in this test. The vanilla GeForce 6600 remains faster than NVIDIA's Tegra 3, which is a bit disappointing for that part. The good news is that Tegra 4 should land somewhere around high-end NV4x/upper-mid-range G7x performance in this sort of workload. Again we're seeing Intel's HD 4000 do remarkably well here. I do have to caution anyone looking to extrapolate game performance from these charts: at best we know how well these GPUs stack up in these benchmarks, and until we get true cross-platform games we can't really be sure of anything.

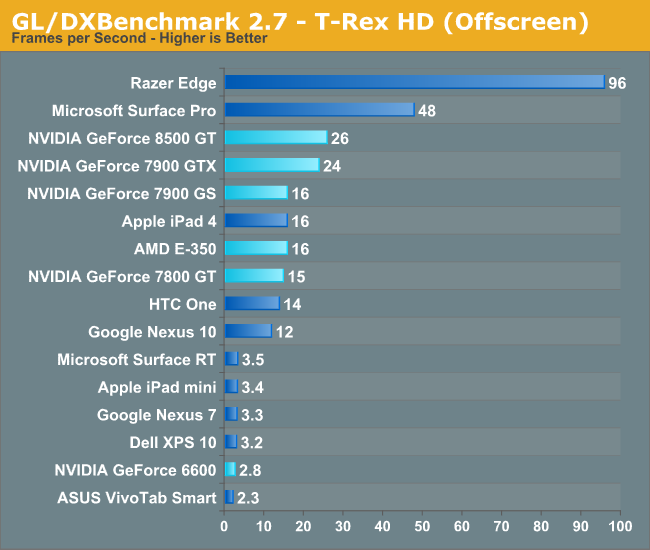

For our last trick, we'll turn to the insanely heavy T-Rex HD benchmark. This test is supposed to tide the mobile market over until the next wave of OpenGL ES 3.0 based GPUs takes over, at which point GL/DXBenchmark 3.0 will step in and keep everyone's ego in check.

T-Rex HD puts the iPad 4 (PowerVR SGX 554MP4) squarely in the class of the 7800 GT and 7900 GS. The similarity in performance between the 7800 GT and 7900 GS suggests that T-Rex HD is relatively insensitive to the (relatively speaking) absurd amounts of memory bandwidth these discrete cards offer. Given that all of the ARM platforms below the iPad 4 have less than 12.8GB/s of memory bandwidth (and those are the platforms these benchmarks were designed for), a lack of appreciation for the 256-bit memory interfaces on some of the discrete cards is understandable. Here the 7900 GTX shows a 50% increase in performance over the 7900 GS. Given the 62.5% advantage the GTX holds in raw pixel shader performance, that gain makes sense.
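One way to check that the 50% gain "makes sense" is to compare it to the 62.5% shader advantage as ratios rather than percentages. Using only the two figures quoted above:

```python
# Figures quoted in the T-Rex HD discussion
perf_gain = 0.50          # 7900 GTX frame rate advantage over the 7900 GS
shader_advantage = 0.625  # GTX's raw pixel shader throughput advantage over the GS

# Achieved speedup divided by the speedup shader throughput alone would predict
scaling_efficiency = (1 + perf_gain) / (1 + shader_advantage)
print(f"{scaling_efficiency:.1%}")  # prints "92.3%"
```

The GTX realizes roughly 92% of the speedup its extra shader throughput would predict, which is consistent with T-Rex HD being largely shader-bound on these cards rather than bandwidth-bound.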

The 8500 GT's leading performance here is likely due to a combination of factors: newer drivers and a unified shader architecture that lines up better with what the benchmark is optimized to run on, among others. It's still remarkable how well the iPad 4's A6X SoC does here, as does Qualcomm's Snapdragon 600/Adreno 320. The latter is even more impressive given that it's constrained to the power envelope of a large smartphone and not a tablet. The fact that we're this close with such portable hardware is seriously amazing.

At the end of the day I'd say it's safe to assume the current crop of high-end ultra mobile devices can deliver GPU performance similar to that of mid to high-end GPUs from 2006. The caveat is that we have to be talking about performance in workloads that don't have the same memory bandwidth demands as the games from that same era. While compute power has definitely kept up (as has memory capacity), memory bandwidth is nowhere near as good as it was on even low end to mainstream cards from that time period. For these ultra mobile devices to really shine as gaming devices, it will take a combination of further increasing compute and significantly enhancing memory bandwidth. Apple (and now companies like Samsung as well) has been steadily increasing memory bandwidth on its mobile SoCs for the past few generations, but it will need to do more. I suspect the mobile SoC vendors will take a page from the console folks and/or Intel and begin looking at embedded/stacked DRAM options over the coming years to address this problem.
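The bandwidth gap is easiest to see as a per-pixel budget. Peak bandwidth is the bus width in bytes times the transfer rate; dividing that by the pixels drawn per second gives the average memory traffic each pixel can consume per frame. A minimal sketch; the bus widths, data rates, and the 1080p/30 fps target are illustrative assumptions, not measured specs of any device in this article:

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mtps: float) -> float:
    """Peak memory bandwidth in GB/s (decimal units):
    bytes moved per transfer times millions of transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mtps / 1000  # MB/s -> GB/s


def bytes_per_pixel_per_frame(bandwidth_gbs: float, width: int,
                              height: int, fps: float) -> float:
    """Average bytes of memory traffic available per pixel per frame."""
    pixels_per_second = width * height * fps
    return bandwidth_gbs * 1e9 / pixels_per_second


# Hypothetical memory configurations, for illustration only
mobile = peak_bandwidth_gbs(bus_width_bits=128, data_rate_mtps=800)     # 12.8 GB/s
discrete = peak_bandwidth_gbs(bus_width_bits=256, data_rate_mtps=1000)  # 32.0 GB/s

for name, bw in (("mobile", mobile), ("discrete", discrete)):
    budget = bytes_per_pixel_per_frame(bw, 1920, 1080, fps=30)
    print(f"{name}: {bw:.1f} GB/s -> {budget:.0f} bytes/pixel/frame at 1080p30")
```

Every texture fetch, framebuffer write, and blend has to fit inside that budget, which is why doubling the bus width matters as much as adding shader units.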

128 Comments


ChronoReverse - Thursday, April 4, 2013

Very interesting article. I've been wondering where the current phone GPUs stood compared to desktop GPUs.

krumme - Thursday, April 4, 2013

+1

Anand sticking to the subject and diving into the details while at the same time giving perspective is great work!

I don't know if I buy the convergence thinking on the technical side, because from here it looks like people are just buying more smartphones and far fewer desktops. The convergence is there a little bit, but I want to see the battle on the field before it gets really interesting. Atom is not yet ready for phones and Bobcat is not ready for tablets. When they get there, where will ARM be?

I put my money on ARM :)

kyuu - Thursday, April 4, 2013

If Atom is ready for tablets, then Bobcat is more than ready. The Z-60 may only have one design win (in the Vizio Tablet PC), but it should deliver comparable (if not somewhat superior) CPU performance with much, much better GPU performance.

zeo - Tuesday, April 16, 2013

Uh, no... The Hondo Z-60 is basically just an update to the Desna, which itself was derived from the AMD Fusion/Brazos Ontario C-50. While it is true that Bobcats are superior to ATOM processors at equivalent clock speeds, the problem is that AMD has to deal with higher power consumption, which generates more heat and in turn forces them to lower the max clock speed... especially if they want to offer anywhere near competitive run times.

So the Bobcat cores in the Z-60 only run at 1GHz, while Clover Trail ATOM runs at 1.8GHz (Clover Trail+ even goes up to 2GHz for the Z2580, though that version is only for Android devices). The difference in processor efficiency is overcome by just a few hundred MHz of clock speed.

Meaning you actually get more CPU performance from Clover Trail than you would from a Hondo... However, where AMD holds dominance over Intel is in graphics performance, and while Clover Trail does provide about 3x better performance than the previous GMA 3150 (back in the netbook days of Pine Trail ATOM), it still offers only about a third of Hondo's graphics performance.

The only other problem is that Hondo only slightly improves power consumption compared to the previous Desna, down to about 4.79W max TDP, though that is at least nearly half of the original C-50's 9W...

However, keep in mind Clover Trail is a 1.7W part and all models are fanless, but Hondo models will continue to require fans.

AMD also doesn't offer anything like Intel's advanced S0ix power management, which allows ARM-like, extremely low mW idle states and enables features like always connected standby.

So the main reason to get a Hondo tablet is that it'll likely offer better Linux support, which is presently virtually non-existent for Intel's current 32nm SoC ATOMs, and better graphics performance if you want to play some low end but still pretty good games.

It's the upcoming 28nm Temash that you should keep an eye out for. It will be AMD's first SoC that can go fanless in its dual core version, and while the quad core version will need a fan, it will offer a Turbo docking feature that lets it go into a much higher 14W max TDP power mode that will provide near Ultrabook level performance... Though the dock will require an additional battery and additional fans to support the feature.

Intel won't be able to counter Temash until their 22nm Bay Trail update comes out, though that'll be just months later as Bay Trail is due to start shipping around September of this year and may be in products in time for the holiday shopping season.

Speedfriend - Thursday, April 4, 2013

Atom is not yet ready for phones? It is in several phones already, where it delivers strong performance from both a CPU and a power consumption perspective. Its weak point is the GPU from Imagination. In two years' time, ARM will be a distant memory in high-end tablets and possibly high-end smartphones too, even more so if we get advances in battery technology.

krumme - Thursday, April 4, 2013

Well, Atom is in several phones that do not sell in any meaningful numbers. Sure, there will be x86 in high-end tablets, and Jaguar will make sure that happens this year, but will those tablets matter? There are ARM servers too. Do they matter?

Right now tons of cheap 40nm A9 products are being sold, and consumers are just about to get 28nm quad-core A7 parts at 2mm² for the CPU portion. And they are ready for cheap, slim phones with Google Play and acceptable graphics performance for Temple Run 2.

Wilco1 - Thursday, April 4, 2013

Also note that despite Anand making the odd "Given that most of the ARM based CPU competitors tend to be a bit slower than Atom" claim, the Atom 2760 in the Vivo Tab Smart consistently scores the worst on both the CPU and GPU tests. Even Surface RT with its low-clocked A9s beats it. That means Atom is not even tablet-ready...

kyuu - Thursday, April 4, 2013

The Atom scores worse in 3DMark's physics test, yes. But every other CPU benchmark I've seen has favored Clover Trail over any A9-based ARM SoC. A15 can give the Atom a run for its money, though.

Wilco1 - Thursday, April 4, 2013

Well, I haven't seen Atom beat an A9 at similar frequencies except perhaps in SunSpider (a browser test, not a CPU test). On native CPU benchmarks like Geekbench, Atom is well behind A9 even when you compare 2 cores/4 threads with 2 cores/2 threads.

kyuu - Friday, April 5, 2013

At similar frequencies? What does that matter? If Atom can run at 1.8GHz while still being more power efficient than Tegra 3 at 1.3GHz, then that's called -- advantage: Atom. Did you read the reviews of Clover Trail when it came out?

http://www.anandtech.com/show/6522/the-clover-trai...

http://www.anandtech.com/show/6529/busting-the-x86...