AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM EST

Final Words

As GPUs have increased in complexity, the refresh cycle has continued to lengthen. 6 month cycles have largely given way to 1 year cycles, and even then it can be 2+ years between architecture refreshes. This is not only a product of the rate of hardware development, but a product of the need to give developers time to breathe and to absorb information about new architectures.

The primary purpose of the AMD Fusion Developer Summit and the announcement of the AMD Graphics Core Next is to give developers even more time to breathe by extending the refresh window backwards as well as forwards. It can take months to years to deliver a program, so the sooner an architecture is introduced the sooner a few brave developers can begin working on programs utilizing it; the alternative is that it may take years after the launch of a new architecture before programs come along that can fully exploit the new architecture. One only needs to take a look at the gaming market to see how that plays out.

Because of this need to inform developers of the hardware well in advance, while we’ve had a chance to see the fundamentals of GCN, products using it are still some time off. At no point has AMD specified when a GPU using GCN will appear, so it’s very much a guessing game. What we know for a fact is that Trinity – the 2012 Bulldozer APU – will not use GCN; it will be based on Cayman’s VLIW4 architecture. Because Trinity will be VLIW4, it’s likely-to-certain that AMD will have midrange and low-end video cards using VLIW4 because of the importance they place on being able to Crossfire with the APU. Does this mean AMD will do another split launch, with high-end parts using one architecture while everything else is a generation behind? It’s possible, but we wouldn’t make any bets at this point in time. Certainly it looks like it will be 2013 before GCN has a chance to become a top-to-bottom architecture, so the question is what the top discrete GPU will be for AMD by the start of 2012.

Moving on, it’s interesting that GCN effectively affirms most of NVIDIA’s architectural changes with Fermi. GCN is all about creating a GPU good for graphics and good for computing purposes; unified addressing, C++ capabilities, ECC, etc. were all features NVIDIA introduced with Fermi more than a year ago to bring about their own compute architecture. I don’t believe there’s ever been a question whether NVIDIA was “right”, but the question has been whether it’s time to devote so much engineering effort and die space to technologies that benefit compute as opposed to putting in more graphics units. With NVIDIA and now AMD doing compute-optimized GPUs, clearly the time is quickly approaching if it’s not already here.

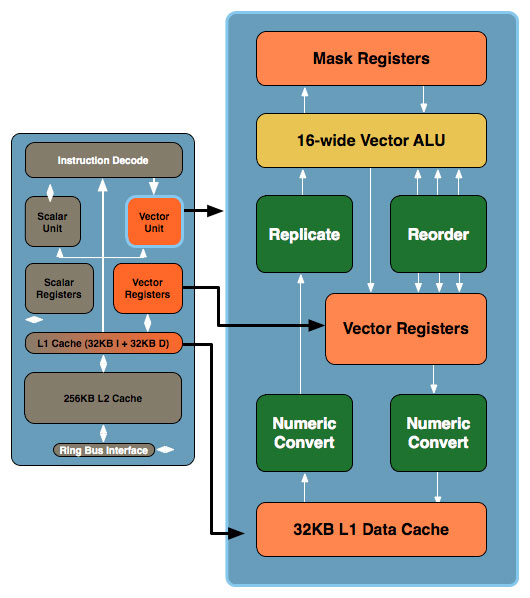

Larrabee As It Was: Scalar + 16-Wide Vector

I can’t help but also make a comparison to Intel’s aborted Larrabee Prime architecture here. There are some very interesting similarities between Larrabee and GCN, primarily in the dual vector/scalar design and in the use of a 16-wide vector ALU. Processing 16 elements at once is an incredibly common occurrence in GPUs – it even shows up in Fermi, which processes half a warp (16 threads) a clock. There are still a million differences between all of these architectures, but there’s definitely a degree of convergence occurring. Previously NVIDIA and AMD converged around VLIW in the days of the graphical GPU, and now we’re converging at a new point for the compute GPU.
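To put rough numbers on the 16-wide comparison, here is a minimal sketch of how many clocks it takes to push one thread group through a 16-lane vector unit. The helper `issue_cycles` is a hypothetical name used for illustration. The 32-thread warp follows from the half-warp figure above. The 64 work-item GCN wavefront is an assumption that is not stated in this section.

```python
import math


def issue_cycles(group_size: int, simd_width: int) -> int:
    """Clocks needed to issue one thread group across a SIMD of the given width."""
    return math.ceil(group_size / simd_width)


SIMD_WIDTH = 16     # 16-wide vector ALU, as described in the text
FERMI_WARP = 32     # half a warp is 16 threads, so a full warp is 32
GCN_WAVEFRONT = 64  # assumption: wavefront size is not stated in this section

fermi_cycles = issue_cycles(FERMI_WARP, SIMD_WIDTH)
gcn_cycles = issue_cycles(GCN_WAVEFRONT, SIMD_WIDTH)

print(f"Fermi warp over {SIMD_WIDTH} lanes: {fermi_cycles} clocks")
print(f"GCN wavefront over {SIMD_WIDTH} lanes: {gcn_cycles} clocks")
```

This only models issue width. It ignores scheduling, latency hiding, and clock-domain differences between the architectures.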

Finally, while we’ve talked about the GCN architecture in great detail, we haven’t talked about how to program it. Of course there’s OpenCL, but with GCN there’s going to be so much more. Next week we will be taking a look at AMD’s Fusion System Architecture, a high-level abstraction layer that will make GPU programming even more CPU-like, an advancement necessary to bring forth the kind of heterogeneous computing AMD is shooting for. We will also be taking a look at Microsoft’s C++ Accelerated Massive Parallelism (AMP), a C++ extension to bridge the gap between current and future architectures by allowing developers to program for GPUs in C++ even if the GPU doesn’t fully support the C++ feature set.

It’s clear that 2011 is shaping up to be a big year for GPUs, and we’re not even halfway through. So stay tuned, there’s much more to come.

83 Comments

StormyParis - Friday, June 17, 2011

Thank you for a very enlightening write up. Comments and questions:

1- please add a comma in there somewhere. I had to read the sentence 4 times to understand it (page 1): "VLIW designs will never achieve perfect efficiency in this regard, but the farther off real world utilization is the weaker the benefits of VLIW."

2- When, if ever, will we vile users see any benefits? I get the feeling that most apps are still not optimized well, if at all, for multicore/threading. Come to think of it, most don't even use most of the x86 extensions more recent than SSE2. Now we're talking of yet another x86 extension, that is not only AMD-specific, but very task-specific. Apart from a handful of labs doing GPU computing, and the usual Photoshop filters... I'm doubtful?

MonkeyPaw - Friday, June 17, 2011

I'm not an expert in this sort of design, but is AMD setting up this architecture to replace the x86 ALU? Bulldozer is already running 2 ALUs for every 1 FPU, which is promoting ALU-heavy software design. It may take a few revisions to meld them (or phase one out), but it certainly seems like that's a heterogeneous CPU in the end.

marc1000 - Friday, June 17, 2011

There is a slide (in the Llano article, I believe) where AMD points this out. Yes, they want to completely merge them, and the ALU would be one of these merging points.

A5 - Friday, June 17, 2011

I think it'll be quite a while before the monolithic cores dissolve into the heterogeneous architectures, mostly depending on how fine-grained the power gating can get. When it gets to the point where the CPU can selectively turn off components inside a given SIMD unit, I think we'll see someone go "Wait a minute, then why do we even have this big core anymore?" and it'll go away. 2018ish, maybe?

jamescox - Monday, June 20, 2011

ALU is generally used to refer to a very simple unit that performs arithmetic, logic, and possibly bit shift operations on integers, not floating point values. The units labeled ALU in the GPU diagrams in the article may support some integer operations, but they mainly process 32-bit floating point values, and (IMO) should not be labeled as "ALUs". FPU would probably be more accurate, but I do not know what operations these units support and whether they include a native integer ALU or just convert integers to FP.

I don't know what you would mean by ALU-heavy software design. Bulldozer has two integer execution cores per module. Each core is composed of 2 ALUs and 2 AGUs, not shared. It also has 2 128-bit floating point (FMA) units per module shared between the two threads. This isn't really much different than an Intel hyper-threaded core. Intel has, I believe, 3 ALUs, 3 AGUs, and 2 FPUs per core, which are shared between 2 threads. AMD's version of multi-threading just doesn't share as much hardware between threads, which may be better than Intel's HT (2-2-1 AMD vs 1.5-1.5-1 Intel ALU-AGU-FPU per thread). Intel's version would allow a single thread to use all of the execution resources at once, if there is no competing thread. Sharing the FPU makes a lot of sense, since most code that runs on CPUs only uses the FPU intermittently. If the code uses FP more than intermittently, then it would be a candidate for vectorization, and execution on the GPU instead.

While AMD's next generation graphics hardware may be able to execute more general code compiled from a wider range of languages, it is not an x86 processor, and it cannot replace the CPU. If you look at the diagram, it has a single scalar unit to handle non-vector code in each compute unit. It also has 64 units in the 4 vector arrays of each CU. If you actually tried to compile and run the kind of branch-heavy integer code that CPUs have to deal with on a CU, then it would probably run entirely and very, very slowly on that single scalar unit.

MrSpadge - Wednesday, June 22, 2011

I think you've got the right idea with this being melted into a Bulldozer-like design. However, it wouldn't replace the x86 ALUs, which are highly optimized for high clock speed and low-latency execution, as well as excellent handling of branches etc.

No, it would rather replace or supplement a fat FPU shared between many "cores" (which, by then, would basically mean ALUs + scheduling). Most tasks which require massive FP number crunching can be executed well in parallel and therefore are suitable for execution on a GPU core. The question is just how to bond them together so that the software guys can actually use them.

MrS

Deleted - Thursday, December 22, 2011

Basically, what we have here is a math coprocessor. Back in the day, Intel's x86 processors were very good (relatively speaking) at integer math, but choked on floating point math. So Intel created the 8087 to handle the floating point calculations while the CPU handled the integer calculations (obviously this wasn't exclusive to Intel, but I'm generalizing). Eventually, the floating point unit was merged onto the CPU, and programs began using them interchangeably.

What we have today is very similar. CPUs, even with their advanced FPUs, are nowhere near as powerful as the massively parallel monstrosities we use for graphics. Eventually, they will be merged onto the CPU, and used as readily for general floating point processing tasks as FPUs are currently.

And this is the point of Fusion: to fully replace the aging floating point unit with an IGP.

A5 - Friday, June 17, 2011

The benefits to home or enthusiast users of heterogeneous CPUs are still several years off. We need market penetration of hardware along with fundamental changes in software development models and smarter compilers.

nedwards - Tuesday, January 28, 2014

Smarter programmers would help! Let me rephrase that. Programmers thinking in a parallel mindset would help!

Beenthere - Friday, June 17, 2011

If AMD delivers in a timely manner they will have a bright future. This looks like a huge technological transition and I understand the need to get developers onboard now, but it also tips AMD's hand to Intel, who will steal any ideas that they can.

Unfortunately we are still waiting for most applications to be written for 64-bit use, so I'm not holding out much hope for an expeditious migration on a complex technological transition, though it does appear that AMD has been working on this for some time and may be able to do a better job of executing with Trinity and future products. Time will tell, but I hope AMD delivers on time, and then they will definitely get my dime - all of them.