NVIDIA's GeForce GT 430: The Next HTPC King?

by Ryan Smith & Ganesh T S on October 11, 2010 9:00 AM EST

Compute Performance & Synthetics

While the GT 430 isn’t meant to be a computing monster and you won’t see NVIDIA presenting it as such, it’s still a member of the Fermi family and possesses the family’s compute capabilities. This includes the Fermi cache structure, along with the 48 CUDA core SM that was introduced with GF104/GTX 460. This also means that its performance varies more than that of past-generation NVIDIA cards; because the SM needs to extract ILP to keep all of its cores busy, the card performs somewhere between a 64 CUDA core card and a 96 CUDA core card depending on the application.
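The 64-to-96 range can be reproduced with a little arithmetic. The sketch below is an illustration rather than NVIDIA’s own model: it assumes the GT 430’s 96 CUDA cores are split across two 48-core SMs, and that with no ILP to exploit only two thirds of each SM’s cores are kept busy, which is the fraction the 64-core lower bound implies. The function and parameter names are hypothetical.

```python
def effective_cores(sm_count, cores_per_sm, ilp_hit_rate):
    """Estimate how many CUDA cores do useful work on a GF104-style SM.

    Assumption: 2/3 of each SM's cores are always fed, and the remaining
    1/3 are only used when independent instructions are available
    (ilp_hit_rate between 0.0 and 1.0).
    """
    if not 0.0 <= ilp_hit_rate <= 1.0:
        raise ValueError("ilp_hit_rate must be between 0 and 1")
    guaranteed = cores_per_sm * 2 // 3          # 32 of 48 cores
    ilp_dependent = cores_per_sm - guaranteed   # the remaining 16
    return sm_count * (guaranteed + ilp_dependent * ilp_hit_rate)


worst = effective_cores(2, 48, 0.0)  # no ILP found
best = effective_cores(2, 48, 1.0)   # ILP found every cycle
print(worst, best)
```

Real workloads land somewhere between the two extremes, which is why the card’s standing shifts from one application to the next.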

Meanwhile, because it is based on the GF104 SM, the GT 430 is FP64 capable at 1/12th its FP32 rate (~20 GFLOPS FP64), a first for a card of this class.
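As a rough check on the ~20 GFLOPS figure, the sketch below works through the peak-throughput arithmetic. The 1400 MHz shader clock is an assumption taken from the card’s reference specification, not from this page, and the helper name is hypothetical.

```python
def peak_gflops(cuda_cores, shader_clock_mhz, flops_per_core_per_clock=2):
    """Theoretical peak: cores x clock x FLOPs per clock (an FMA counts as 2)."""
    return cuda_cores * shader_clock_mhz * flops_per_core_per_clock / 1000.0


# Assumption: 96 CUDA cores at a 1400 MHz shader clock.
fp32 = peak_gflops(96, 1400)
# FP64 runs at 1/12th the FP32 rate, per the text above.
fp64 = fp32 / 12
print(f"FP32: {fp32:.1f} GFLOPS, FP64: {fp64:.1f} GFLOPS")
```

Under those assumptions the FP64 peak comes out slightly above 20 GFLOPS, in line with the figure quoted above.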

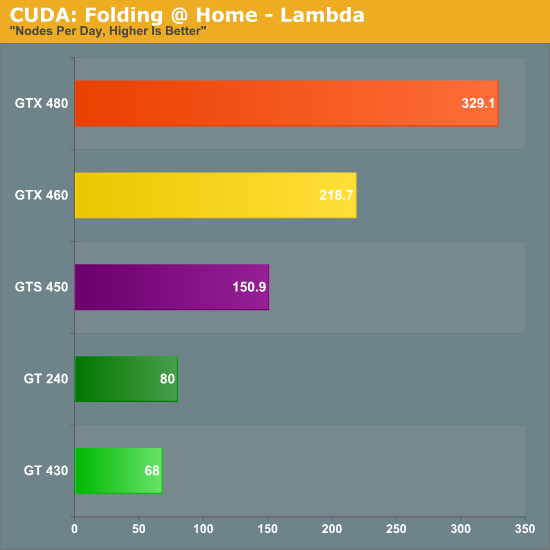

For our look at compute performance we’ll turn to our trusty benchmark copy of Folding @ Home. We’ve also included the GT 240, a last-generation card that has 96 CUDA cores just like the GT 430. This affords us an interesting opportunity to see the performance of Fermi compared to GT200 with the same number of CUDA cores in play, although GT 430 has a clockspeed advantage here that gives it a higher level of performance in theory.

The results are interesting, but also a bit distressing. GT 430’s performance is quite a bit lower than the GTS 450’s, but this is expected. GT 240, however, manages to pull ahead by nearly 17%, which is quite likely a manifestation of Fermi’s more variable performance. This makes the GT 220 comparison all the more appropriate, since if Fermi’s CUDA cores are weaker on average then GT 430 can’t hope to keep pace with GT 240.
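To put a number on “weaker on average”, the sketch below normalizes the Folding @ Home gap by core count and shader clock. The shader clocks (1400 MHz for GT 430, 1340 MHz for GT 240) are assumed reference values not given on this page, the function name is hypothetical, and the model deliberately ignores memory bandwidth and other architectural differences.

```python
def relative_per_core_efficiency(perf_ratio, cores_a, clock_a_mhz,
                                 cores_b, clock_b_mhz):
    """Per-core, per-clock throughput of card A relative to card B.

    perf_ratio is the measured performance of A divided by that of B.
    """
    theoretical_ratio = (cores_a * clock_a_mhz) / (cores_b * clock_b_mhz)
    return perf_ratio / theoretical_ratio


# GT 240 leads by ~17%, so GT 430 delivers about 1/1.17 of its performance.
gt430_vs_gt240 = 1 / 1.17
eff = relative_per_core_efficiency(gt430_vs_gt240, 96, 1400, 96, 1340)
print(f"GT 430 per-core efficiency relative to GT 240: {eff:.2f}")
```

Under these assumptions each GT 430 core does a little over 0.8 times the work of a GT 240 core per clock in this one benchmark; it is a single data point, not a general measure of the architecture.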

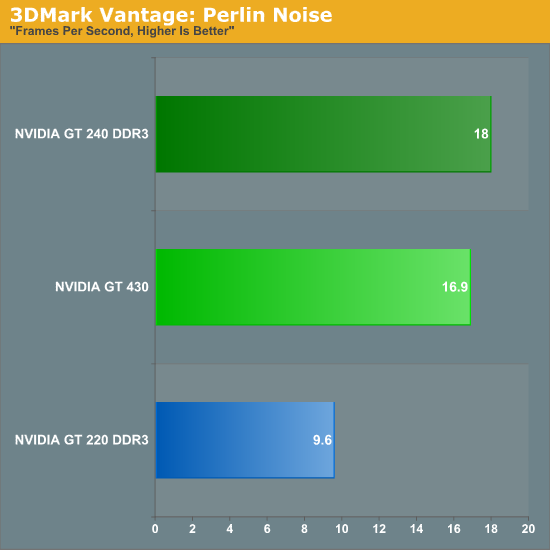

To take a second look at CUDA core performance, we’ve also busted 3DMark Vantage out of the vault. As we’ve mentioned before we’re not huge fans of synthetic tests like 3DMark since they encourage non-useful driver optimizations for the benchmark instead of real games, but the purely synthetic tests do serve a useful purpose when trying to get to the bottom of certain performance situations.

We’ll start with the Perlin Noise test, which is supposed to be computationally bound, similar to Folding @ Home.

Once more we see the GT 430 come in behind the GT 240, even though the GT 430 has the theoretical advantage due to clockspeed. The loss isn’t nearly as great as it was under Folding @ Home, but this lends more credence to the theory that Fermi shaders are less efficient than GT21x CUDA cores. As a card for development, GT 430 still has a number of advantages such as the aforementioned FP64 support and C++ support in CUDA, but if we were trying to use it as a workhorse card it looks like it wouldn’t be able to keep up with GT 240. Based on our gaming results earlier, this would seem to carry over to shader-bound games, too.

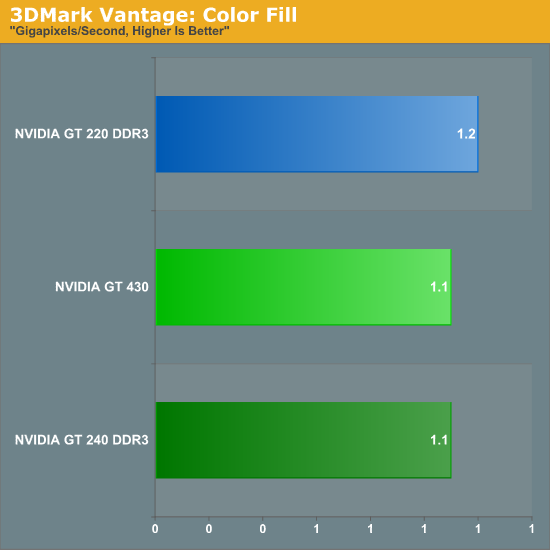

Moving on, we also used this opportunity to look at 3DMark Vantage’s color fill test, which is a ROP-bound test. With only 4 ROPs on the GT 430, this is the perfect synthetic test for seeing if having fewer ROPs really is an issue when we’re comparing GT 430 to older cards.

And the final verdict? A not very useful yes and no. GT 220 and GT 240 both have 8 ROPs, with GT 220 having the clockspeed advantage. This is why GT 220 ends up coming out ahead of GT 240 here by less than 100 MPixels/sec. But on the other hand, GT 430 has a clockspeed advantage of its own while possessing half the ROPs. The end result is that GT 430 is effectively tied with these previous-generation cards, which is actually quite a remarkable feat for having half the ROPs.

NVIDIA worked on making the Fermi ROPs more efficient and it has paid off by letting them use 4 ROPs to do what took 8 in the last generation. With this data in hand, NVIDIA’s position that 4 ROPs is enough is much more defensible, as they’re at least delivering last-generation ROP performance on a die not much larger than GT216 (GT 220). This doesn’t provide enough additional data to clarify whether the ROPs alone are the biggest culprit in the GT 430’s poor gaming performance, but it does mean that we can’t rule out less efficient shaders either.
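The “4 ROPs doing the work of 8” observation can be framed against paper specifications. The sketch below assumes reference core clocks of 700 MHz (GT 430), 625 MHz (GT 220), and 550 MHz (GT 240), none of which are stated on this page, and uses the simplest possible model of one pixel per ROP per clock; the names are hypothetical, and the model ignores memory bandwidth, which can also limit a fill test.

```python
def peak_fill_mpixels(rops, core_clock_mhz):
    """Theoretical color fill rate: one pixel per ROP per core clock."""
    return rops * core_clock_mhz


# ROP counts are from the text; core clocks (MHz) are assumed reference values.
cards = {
    "GT 430": (4, 700),
    "GT 220": (8, 625),
    "GT 240": (8, 550),
}
peaks = {name: peak_fill_mpixels(*spec) for name, spec in cards.items()}
for name, peak in peaks.items():
    print(f"{name}: {peak} MPixels/sec on paper")

# If measured results are roughly tied, each GT 430 ROP must be doing this
# many times more work per clock than a GT 220 ROP.
implied_gain = peaks["GT 220"] / peaks["GT 430"]
print(f"Implied per-ROP gain vs GT 220: {implied_gain:.2f}x")
```

On paper the GT 430 should trail both older cards by a wide margin, so an effective tie in the measured test implies a per-ROP gain approaching 2x under this simple model.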

Do note, however, that while Fermi ROPs are more efficient than GT21x ROPs, it’s only a saving grace when doing comparisons to past-generation architectures. GT 430 still only has ¼ the ROP power of the GTS 450, which definitely hurts the card compared to its more expensive sibling.

120 Comments

n9ntje - Monday, October 11, 2010 - link

Sad to see Nvidia doesn't live up to expectations, while they want us to believe that they have a perfect HTPC card, it isn't.

To most people, image quality counts. 3D is still a niche.

IceDread - Monday, October 11, 2010 - link

Yeap, it's always best if the competition is even, gives us the best prices.

medi01 - Monday, October 11, 2010 - link

I am afraid market is too slow to react to nVidia having worse products, AMD has nowhere near market share that it deserves to have.

We can't expect one player to dominate all the time. So when the underdog creates superior products, it should benefit from it. But this is not the case in GPU market, unfortunately, as nVidia still keeps much bigger market share, than AMD.

dnd728 - Monday, October 11, 2010 - link

I've tried quite a few ATI/AMD cards over the years, including the latest 5000 series, and to date not a single one of them worked right, i.e. without keep crashing Windows.

It could be one reason.

electroju - Monday, October 11, 2010 - link

I agree and I have also used ATI and AMD graphics over the years. AMD graphics writes the worst software or drivers from a reputable company. I go with nVidia because I care for reliability and stability. I do not mind spending money on nVidia graphics because the money goes towards software development. The cost of AMD graphics is too low to provide enough for software development.

Zoomer - Monday, October 11, 2010 - link

I have personally found nvidia cards to have inferior hardware quality. This was very evident from the time when quality dacs for vga mattered, and nvidia cards absolutely sucked at that. Further suboptimal decisions made their cards meh.

Software wise, I thought nvidia's software quality peaked around the time of the detonators.

AmdInside - Monday, October 11, 2010 - link

DACs depended on the maker of the card. Quadro NVS cards which were made by NVIDIA were regarded as having excellent 2D image quality over analog display. Sadly a lot of NVIDIA partners used cheap DACs on some of their cards.

mentatstrategy - Wednesday, October 13, 2010 - link

Nvidia Fanboi: I have used ati cards and they suck!

ATI Fanboi: I have used nvidia cards and they suck!

heflys - Monday, October 11, 2010 - link

Hmmm....Haven't had a problem with ATi/AMD drivers thus far.

duploxxx - Friday, October 15, 2010 - link

perhaps you need to read a bit more and see how many 1000's have recently been affected by this awesome nvidia reliability and stability when they all had to throw away their graphic cards and laptops.