Intel's Sandy Bridge Architecture Exposed

by Anand Lal Shimpi on September 14, 2010 4:10 AM EST - Posted in CPUs, Intel, Sandy Bridge

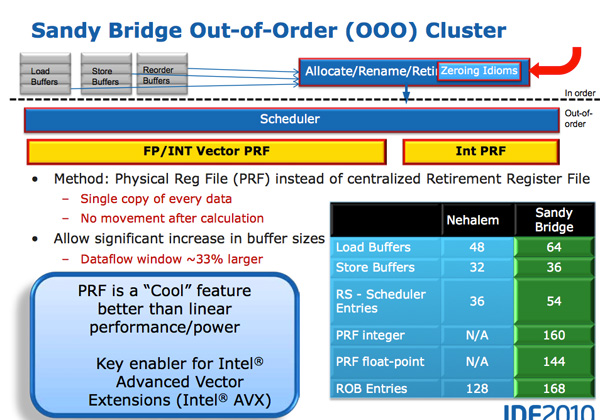

A Physical Register File

Just as AMD announced for its Bobcat and Bulldozer architectures, Intel moves to a physical register file in Sandy Bridge. In Core 2 and Nehalem, every micro-op carried a copy of every operand it needed. This meant the out-of-order execution hardware (scheduler, reorder buffer, and associated queues) had to be much larger, since it had to hold the micro-ops as well as their data. Back in the Core Duo days that was 80 bits of data; when Intel implemented SSE, the burden grew to 128 bits. With AVX, however, each instruction can now carry 256-bit operands, and growing the scheduling and reordering hardware enough to feed the AVX execution units Intel wanted would have cost too much.

A physical register file stores micro-op operands in the register file itself; as a micro-op travels down the out-of-order engine, it carries only pointers to its operands, not the data. This significantly reduces the power consumed by the out-of-order hardware (moving large amounts of data around a chip costs a lot of power) and also reduces die area further down the pipeline. Intel spends those die savings on a larger out-of-order window.
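To make the pointer-versus-data distinction concrete, here is a minimal Python sketch of register renaming under a toy model: a rename table maps architectural registers such as `ymm0` to entries in a shared physical register file, and each in-flight micro-op holds only entry indices. The class and function names, and the register file size of 144 entries, are hypothetical illustration choices, not Intel's actual design or numbers.

```python
from dataclasses import dataclass, field

OPERAND_BITS = 256   # AVX operand width
PREG_COUNT = 144     # hypothetical number of physical registers
POINTER_BITS = (PREG_COUNT - 1).bit_length()  # bits needed to name one entry


@dataclass
class RenameTable:
    """Toy register-renaming model (hypothetical, not Intel's design)."""
    free: list = field(default_factory=lambda: list(range(PREG_COUNT)))
    rat: dict = field(default_factory=dict)  # architectural reg -> physical index
    prf: dict = field(default_factory=dict)  # physical index -> stored value

    def write(self, arch_reg, value):
        """Allocate a fresh physical register and record a value in it."""
        preg = self.free.pop(0)
        self.prf[preg] = value
        self.rat[arch_reg] = preg
        return preg

    def rename_uop(self, dst, srcs):
        """Return the micro-op as the OoO engine would carry it: indices only."""
        src_pregs = [self.rat[s] for s in srcs]  # look up current mappings
        dst_preg = self.free.pop(0)              # destination gets a new entry
        self.rat[dst] = dst_preg
        return {"dst": dst_preg, "srcs": src_pregs}


def payload_bits(num_sources, carries_data):
    """Operand payload one in-flight micro-op must hold, in bits."""
    per_operand = OPERAND_BITS if carries_data else POINTER_BITS
    return num_sources * per_operand
```

With 144 entries each pointer needs only 8 bits, so a two-source AVX micro-op carries 16 bits of operand references (`payload_bits(2, False)`) instead of 512 bits of operand data (`payload_bits(2, True)`).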

The die area savings are key as they enable one of Sandy Bridge’s major innovations: AVX performance.

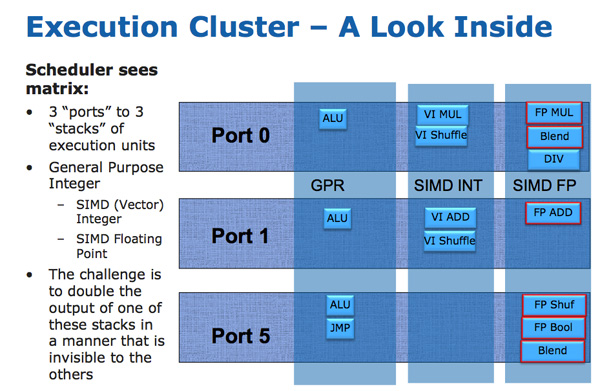

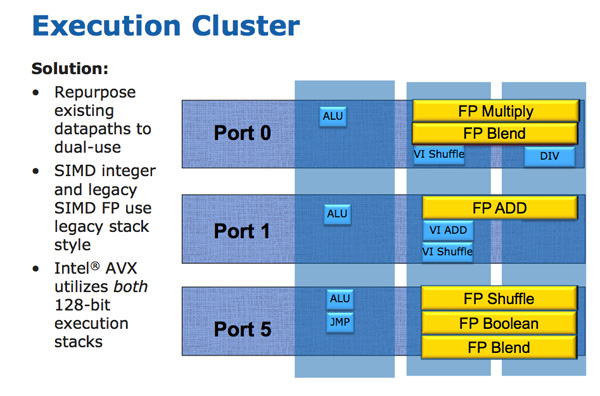

AVX instructions support 256-bit operands, which, as you might guess, can eat up quite a bit of die area. The move to a physical register file let Intel enlarge its out-of-order buffers enough to properly feed a higher-throughput floating-point engine. Intel clearly believes in AVX: it extended all of its SIMD units to 256 bits wide, and it did so at minimal die cost. Nehalem has three execution ports and three stacks of execution units.

Sandy Bridge allows 256-bit AVX instructions to borrow 128 bits of the integer SIMD datapath. This minimizes the die-area impact of AVX on the execution units while doubling FP throughput: you get two 256-bit AVX operations per clock (plus one 256-bit AVX load).
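The doubling claim can be checked with simple arithmetic. This sketch assumes the two vector operations per clock are one FP add and one FP multiply (no fused multiply-add), and counts each per-lane add or multiply as one FLOP; the function name is hypothetical.

```python
def flops_per_cycle(vector_bits, element_bits, ops_per_cycle=2):
    """Peak FLOPs per core per clock: SIMD lanes times vector ops issued.

    Assumes ops_per_cycle independent vector ops (here one add and one
    multiply), each performing one floating-point operation per lane.
    """
    lanes = vector_bits // element_bits
    return lanes * ops_per_cycle


# Nehalem: 128-bit SSE add + multiply; Sandy Bridge: 256-bit AVX add + multiply.
for name, width in (("Nehalem", 128), ("Sandy Bridge", 256)):
    sp = flops_per_cycle(width, 32)  # single precision (32-bit floats)
    dp = flops_per_cycle(width, 64)  # double precision (64-bit floats)
    print(f"{name}: {sp} SP / {dp} DP FLOPs per clock per core")
```

This prints 8 SP / 4 DP for Nehalem and 16 SP / 8 DP for Sandy Bridge: widening the vectors from 128 to 256 bits doubles the lanes, and with two operations per clock that doubles peak per-clock FP throughput.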

Granted, you can’t mix 256-bit AVX and 128-bit integer SSE ops, but remember that SNB now has larger buffers to help extract more instruction-level parallelism (ILP).

The upper 128 bits of the execution hardware and datapaths are power gated, so standard 128-bit SSE operations incur no additional power penalty from Intel’s 256-bit expansion.

AMD sees AVX support in a different light than Intel. Bulldozer features two 128-bit SSE paths that can be combined for 256-bit AVX operations. Compared to an 8-core Bulldozer, a 4-core Sandy Bridge has twice the 256-bit AVX throughput. Whether this becomes an issue going forward depends on how heavily applications use AVX.
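The 2x figure follows from counting 256-bit datapaths per chip. This sketch assumes Bulldozer's eight cores are organized as four two-core modules that each share one floating-point unit (the text above only mentions the two 128-bit paths), and it ignores clock speed and FMA; the helper name is hypothetical.

```python
def avx256_ops_per_clock(fp_units, pipes_per_unit, pipe_bits):
    """256-bit AVX ops a chip can start per clock (simplified throughput model).

    A 256-bit op needs 256 bits of datapath, so 128-bit pipes must be paired.
    """
    pipes_per_op = max(1, 256 // pipe_bits)
    return fp_units * (pipes_per_unit // pipes_per_op)


# Assumption: a 4-core Sandy Bridge has one FP cluster per core with two
# 256-bit-capable pipes (two 256-bit AVX operations per clock per core).
snb = avx256_ops_per_clock(fp_units=4, pipes_per_unit=2, pipe_bits=256)

# Assumption: an 8-core Bulldozer is four two-core modules, each sharing one
# FP unit whose two 128-bit pipes pair up to execute one 256-bit op.
bulldozer = avx256_ops_per_clock(fp_units=4, pipes_per_unit=2, pipe_bits=128)

print(snb, bulldozer, snb / bulldozer)  # 8 4 2.0
```

The design point is visible in the model: Intel gets 256-bit width by borrowing the integer SIMD datapath inside each core, while Bulldozer gets it by ganging its two 128-bit pipes, which halves how many 256-bit operations each shared FP unit can start.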

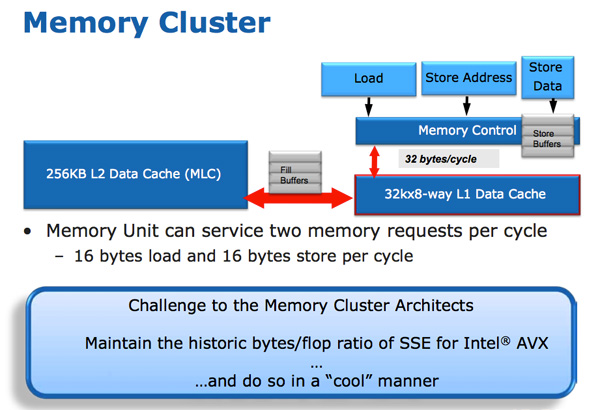

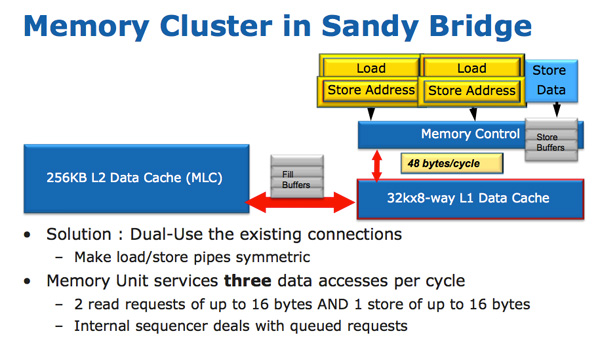

The improvements to Sandy Bridge’s FP performance increase the demands on the load/store units. Nehalem/Westmere had three load/store ports: load, store address, and store data.

In SNB, the load and store-address ports are now symmetric, so each port can service either a load or a store address. This doubles load bandwidth, which matters because Intel also doubled peak floating-point performance in Sandy Bridge.
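A toy dispatch model shows why symmetric ports double load bandwidth for load-heavy code while leaving an even mix of loads and store addresses unchanged. It assumes each port accepts at most one memory micro-op per clock and picks the oldest one it can handle; the store-data port, cache banking, and real scheduling rules are left out, and all names are hypothetical.

```python
def cycles_to_dispatch(ops, ports):
    """Count clocks needed to send every memory micro-op to a port.

    ops:   sequence of "load" or "sta" (store address) micro-ops, oldest first.
    ports: list of sets; each set names the op kinds that port accepts.
    Each clock, every port takes the oldest pending op it can handle.
    """
    pending = list(ops)
    cycles = 0
    while pending:
        cycles += 1
        dispatched = 0
        for accepts in ports:
            for i, op in enumerate(pending):
                if op in accepts:
                    del pending[i]
                    dispatched += 1
                    break
        if dispatched == 0:
            raise ValueError("some op kind is accepted by no port")
    return cycles


# Nehalem/Westmere-style: one load port and one store-address port.
NEHALEM_PORTS = [{"load"}, {"sta"}]
# Sandy Bridge-style: two symmetric ports, each taking a load or a store address.
SANDY_BRIDGE_PORTS = [{"load", "sta"}, {"load", "sta"}]
```

Eight back-to-back loads take eight clocks with a single load port but four with two symmetric ports, while four loads interleaved with four store addresses take four clocks either way.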

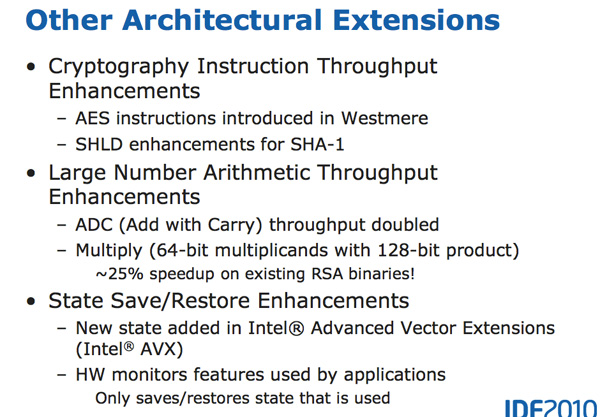

Sandy Bridge also brings some integer execution improvements, although they are more limited. Add-with-carry (ADC) instruction throughput is doubled, while large multiplies (64 × 64 bits) see a roughly 25% speedup.
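ADC matters because multi-precision arithmetic (big integers, cryptography) is built from chains of 64-bit adds in which each step consumes the previous step's carry, and wide multiplies produce a 128-bit result from two 64-bit inputs. This Python sketch only models that dataflow, not the hardware speedups; the function names and the little-endian limb layout are assumptions for illustration.

```python
MASK64 = (1 << 64) - 1


def add_with_carry(a, b, carry_in):
    """One 64-bit ADC step: returns (sum mod 2**64, carry_out)."""
    total = a + b + carry_in
    return total & MASK64, total >> 64


def bignum_add(x_limbs, y_limbs):
    """Add two equal-length little-endian lists of 64-bit limbs.

    Carries are chained limb to limb, the way an ADD followed by ADCs would.
    Returns (result_limbs, final_carry).
    """
    result, carry = [], 0
    for a, b in zip(x_limbs, y_limbs):
        s, carry = add_with_carry(a, b, carry)
        result.append(s)
    return result, carry


def mul_64x64(a, b):
    """Full 64 x 64 -> 128-bit product split into (low, high) 64-bit halves."""
    p = a * b
    return p & MASK64, p >> 64
```

In compiled code the first limb would typically use ADD and each later limb ADC; the full-width multiply corresponds to the x86 `MUL` instruction, which returns the 128-bit product split across RDX:RAX.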

62 Comments


beginner99 - Tuesday, September 14, 2010

AMD's been talking about Fusion forever, but I can't shake the feeling that this Intel implementation will be much more "fused" than AMD's will be. AMD barely has CPU turbo, so adding a combined CPU/GPU turbo all at once? Maybe they can pull it off, but experience makes me doubt it very much.

BTW, if it takes something like 3mm^2 for a super-fast video encoder, I have to ask myself: why wasn't this done before?

duploxxx - Tuesday, September 14, 2010

First or not doesn't really matter. Who says AMD needs GPU turbo? If Llano really has a 400 SP GPU, it will beat any Intel GPU, with or without turbo.

Judging by the first results in the AnandTech review, which seems to use a GT2 part, it doesn't stand a chance at all.

Core i5 is really castrated by the lack of HT. This is exactly where Llano will compete, with a bit less CPU power.

B3an - Tuesday, September 14, 2010

Even if the GPU in AMD's Llano is faster, Intel's GPU is finally decent and good enough for most people. More importantly, more people will care about CPU performance, because most users don't play games and this GPU can easily handle HD video. And i'm sure SB will be faster than anything AMD has. Then throw in the AVX and I'd say Intel clearly has the better option for the vast majority of people; it just comes down to price now.

B3an - Tuesday, September 14, 2010

Sorry, I didn't mean AVX; I meant the hardware-accelerated video encoding.

bitcrazed - Tuesday, September 14, 2010

But it's not just about raw power; it's about power per dollar.

If you've got $500 to spend on a mobo and CPU, where do you spend it? On a slower Intel platform or on a faster AMD platform?

If AMD get their pricing right, they could turn this into a no-brainer decision, greatly increasing their sales.

duploxxx - Tuesday, September 14, 2010

Now here comes the issue with the real fanboys: "And i'm sure SB will be faster than anything AMD has."

Price is exactly where AMD has the better option. It's the "known brand name" that keeps people buying the same thing without knowing better... yeah, let's buy a Pentium.

takeulo - Wednesday, September 15, 2010

hahahahah yeah, I agree AMD is the better option overall. If I had a high budget I'd go for Insane, I mean Intel, but since I'm only "poor" and can't afford it, I'll stick with AMD and get my money's worth.

Sorry for my bad English XD

MySchizoBuddy - Monday, December 20, 2010

How do you know Intel's GPU has reached a good-enough state? Do you have benchmarks to support your hypothesis? They have been trying to reach this state for as long as I can remember. Your good-enough state might be very different from somebody else's.

bindesh - Tuesday, September 20, 2011

All your doubts will be cleared after watching this video and related ones: http://www.youtube.com/watch?v=XqBk0uHrxII&fea...

I have 3 AMDs and 1 Intel. Believe me, for the price of an AMD CPU I can only get a Celeron from Intel, which cannot run NFS Shift or TimeShift. On the other hand, with an AMD Athlon I completed Devil May Cry 4 at a decent speed. And the laptop costs 24K: a Toshiba C650, psg xxxxx18 model. It has a 360 GB SSD and an ATI 4200HD.

Can you get such price and performance with Intel?

The best part is that I'm running it at an 800MHz CPU speed, with performance much, much greater than my friend's 55K Intel dual-core laptop.

vlado08 - Tuesday, September 14, 2010

Still no word on 23.976 FPS playback?