The Best Server CPUs part 2: the Intel "Nehalem" Xeon X5570

by Johan De Gelas on March 30, 2009 3:00 PM EST - Posted in IT Computing

Virtualization (ESX 3.5 Update 2/3)

More than 50% of servers are bought to be virtualized. Virtualization is thus the killer application and the most important benchmark available. VMware is by far the market leader, with about 80% of the market. However, we once again encountered serious issues getting ESX installed and running on the newest platform. ASUS told us we need ESX 3.5 Update 4, which we do not yet have in the labs. We are doing all we can to make sure that our long-awaited hypervisor comparison will be online in April, so stay tuned. Since we have not been able to carry out our own virtualization benchmarking, we turn to VMware's VMmark.

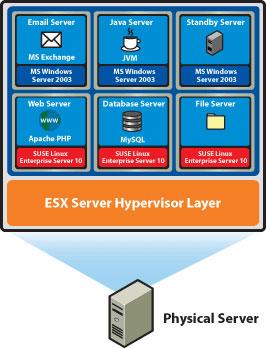

VMware VMmark is a consolidation benchmark. Several virtual machines performing different tasks are grouped together into a tile. A VMmark tile consists of:

- MS Exchange VM

- Java App VM

- Idle VM

- Apache web server VM

- MySQL database VM

- SAMBA fileserver VM

The first three run on a Windows 2003 guest OS and the last three on SUSE SLES 10.

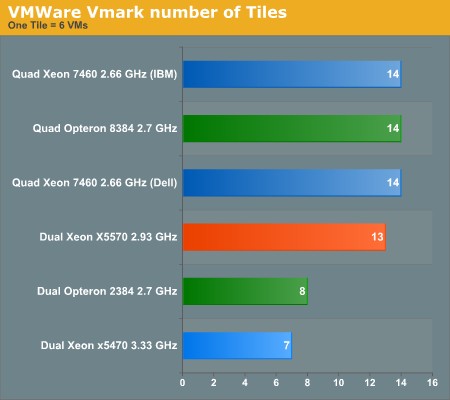

Let us first see how many tiles (six VMs per tile) each server can support:

The newest Xeon is shattering records again: with 13 tiles (in 72GB) it can consolidate by far the most VMs in a dual-socket server. It is already dangerously close to the quad-socket servers with up to 128GB of RAM. It is important to note that once you use more than one DIMM per channel, the maximum DDR3 speed is 1066MHz. Once you fill up all slots (three DIMMs per channel, nine DIMMs per CPU), the DDR3 memory runs at 800MHz. Intel's official validation results can be found here.

Nevertheless, the performance impact of lower DDR3 speeds is not large enough to offset the advantage of three DIMMs per channel: up to 18 DIMMs in a dual-socket configuration is a record. Until now, AMD's latest Opteron held the record with eight DIMMs per CPU, or a maximum of 16 per dual-socket server. AMD supports up to three DIMMs per channel at 800MHz. Once you use four DIMMs per channel (eight per CPU), the clock speed falls back to 533MHz. Memory capacity is therefore a reason, besides pure performance, why Intel can support 13 tiles or 78 light VMs per server: Intel used 72GB of DDR3 at 800MHz. AMD is stuck at eight tiles for the moment: the dual Opteron servers get 64GB (at 533MHz) at the most.
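The capacity versus speed trade-off can be worked through with a short sketch. It only encodes the configurations named in the text; the 4GB DIMM size is an assumption inferred from 72GB across 18 slots (and 64GB across 16), and the table and function names are purely illustrative.

```python
# DIMMs per channel -> memory speed in MHz, limited to the cases named in the text.
XEON_5500 = {"channels_per_cpu": 3, "speed_mhz": {2: 1066, 3: 800}}
OPTERON = {"channels_per_cpu": 2, "speed_mhz": {3: 800, 4: 533}}

VMS_PER_TILE = 6  # one VMmark tile consolidates six VMs


def memory_config(platform, sockets, dimms_per_channel, dimm_gb=4):
    """Return (DIMM count, capacity in GB, speed in MHz) for a server.

    dimm_gb=4 is an assumption: the text gives only total capacities.
    """
    speeds = platform["speed_mhz"]
    if dimms_per_channel not in speeds:
        raise ValueError("configuration not covered by the text")
    dimms = sockets * platform["channels_per_cpu"] * dimms_per_channel
    return dimms, dimms * dimm_gb, speeds[dimms_per_channel]


xeon = memory_config(XEON_5500, sockets=2, dimms_per_channel=3)
opteron = memory_config(OPTERON, sockets=2, dimms_per_channel=4)
print("Dual Xeon X5570:", xeon)               # (18, 72, 800)
print("Dual Opteron:", opteron)               # (16, 64, 533)
print("VMs at 13 tiles:", 13 * VMS_PER_TILE)  # 78
```

Under these assumptions the fully populated Xeon ends up with 8GB more memory than the Opteron while still running its DIMMs at a higher clock.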

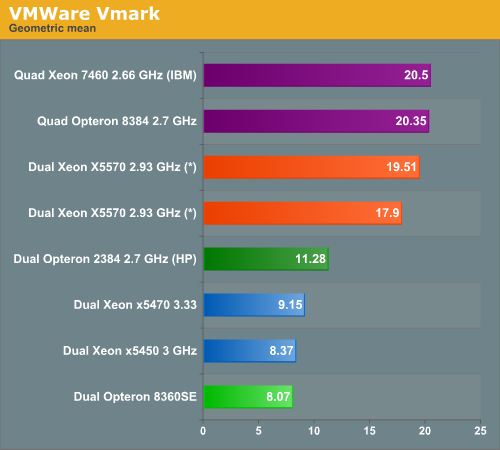

After a benchmark run, the workload metrics for each tile are computed and aggregated into a score for that tile. This aggregation is performed by first normalizing the different performance metrics such as MB/second and database commits/second with respect to a reference system. Then, a geometric mean of the normalized scores is computed as the final score for the tile. The resulting per-tile scores are then summed to create the final metric.
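The aggregation just described can be sketched in a few lines. This is a minimal illustration, not VMware's harness: the metric names, the reference values and the measured values are all hypothetical, and we assume the idle VM contributes no metric of its own.

```python
import math


def tile_score(measured, reference):
    """Geometric mean of one tile's metrics, each normalized to the reference."""
    ratios = [measured[name] / reference[name] for name in reference]
    return math.prod(ratios) ** (1.0 / len(ratios))


def vmmark_score(tiles, reference):
    """Final metric: the sum of the per-tile scores."""
    return sum(tile_score(tile, reference) for tile in tiles)


# Hypothetical reference-system results (not real VMmark reference values).
reference = {
    "mail_actions_per_min": 100.0,
    "web_mb_per_s": 50.0,
    "db_commits_per_s": 200.0,
}

# Two hypothetical tiles measured on the system under test.
tiles = [
    {"mail_actions_per_min": 150.0, "web_mb_per_s": 60.0, "db_commits_per_s": 260.0},
    {"mail_actions_per_min": 140.0, "web_mb_per_s": 55.0, "db_commits_per_s": 250.0},
]

print(f"VMmark-style score: {vmmark_score(tiles, reference):.2f} @ {len(tiles)} tiles")
```

Because the per-tile scores are summed, a server can raise its final score either by running each tile faster or by sustaining more tiles, which is why the tile count is always reported next to the score.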

(*) preliminary benchmark data

World switch times from VM to hypervisor have been reduced to 40% of those of Clovertown (Xeon 53xx), and EPT is good for a 27% performance increase. Add a massive amount of memory bandwidth, and we understand why the Nehalem EP shines in this benchmark. The scores for the Xeon X5570 are, however, preliminary: we have seen scores range from 17.9 to 19.51, but always with 13 tiles. The ESX version was not an official release ("VMware ESX Build 140815"); it will probably morph into ESX 3.5 Update 4. AMD's results might also get a bit better with ESX 3.5 Update 4, so take the results with a grain of salt, but they give a good first idea. There is little doubt that the newest Xeon is also the champion in virtualization.
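To put the preliminary range in perspective, the scores can be divided by the tile count. The per-tile average below is our own illustrative view, not an official VMmark metric, and `per_tile` is a hypothetical helper.

```python
def per_tile(score, tiles):
    """Average score contributed by each tile."""
    return score / tiles


TILES = 13
low, high = 17.9, 19.51  # preliminary Xeon X5570 scores quoted above

print(f"{per_tile(low, TILES):.2f} to {per_tile(high, TILES):.2f} per tile")  # 1.38 to 1.50
print(f"spread between runs: {(high - low) / low:.1%}")                       # 9.0%
```

A spread of roughly 9% between runs at the same tile count is one more reason to treat these numbers as a first indication only.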

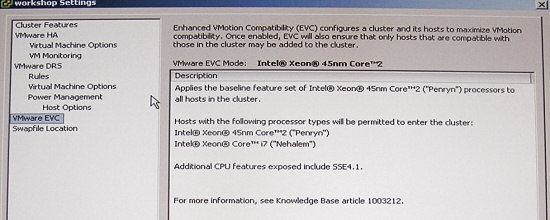

Both AMD and Intel emphasize that you can "vmotion" across several generations. AMD demonstrated that it is possible to migrate from the hex-core Istanbul to the quad-core Barcelona, while Intel demonstrated vmotion between "Harpertown" and "Nehalem".

It will be interesting to see how far you can go with this in practice. In theory you can go from Woodcrest to Nehalem. It is funny to see that Intel (and AMD to a lesser degree) have to clean up the mess they made with the incredibly chaotic SIMD ISA extensions: from MMX to more SSE extensions than we care to remember.

44 Comments

rkchary - Tuesday, June 16, 2009

We've a customer who is interested in upgrading to Nehalem. He's running on Windows with Oracle database for SAP Enterprise Portals.

Could you kindly let us know your recommendations please?

The approximate concurrent users would be around 3000 Portal users.

Keenly looking forward for your response and if you could state any instances of Nehalem installed in SAP environment for production usage, that would be a great deal of help.

Regards,

Chary

Adun - Thursday, April 9, 2009

Hello,

I understand the PHP not-enough-threads explanation as to why Dual X5570 doesn't scale up.

But, can anyone please explain why when you add another AMD Opteron 2384 the increase is from 42.9 to 63.9, while when you add another Xeon X5570 there isn't such an increase?

Thank you for the article,

Adun.

stimudent - Thursday, April 2, 2009

Was it really too much effort to clean off the processor before posting a picture of it? Or were they trying to show that it was used, tested?

LizVD - Friday, April 3, 2009

Would you perhaps like us to draw a smiley face on it as well? ;-)

GazzaF - Wednesday, April 1, 2009

Well done on an excellent review using as many real-world tests as possible. The VMWare test is a real eye opener and shows how the 55xx can match double the number of CPUs from the last generation of Xeons *AND* crucially save $$$$ on licensing from Windows and MS SQL and other per-socket licensed software, plus the power saving which is again a financial saving if you hire rack space in a datacentre.

I eagerly await your own in-house VM tests. Please consider also testing using Windows 2008 Hyper-V which I think doesn't have the 55xx optimisations that the latest release of VMWare has (and might not have until R2?).

Thanks for the time you put in to running the endless tests. The results make a brilliant business case for anyone wanting to upgrade their servers. You must have had the chips a good week before Intel officially launched them. :-) I do feel sorry for AMD though. I'm sure they have plenty of motivation to come back with a vengeance like they did a few years ago.

JohanAnandtech - Thursday, April 2, 2009

Thanks! Good to hear from another professional. I believe the current Hyper-V R2 beta already has some form of support for EPT.

Our virtualization testing is well under way. I'll give an update soon on our blog page.

Lifted - Wednesday, April 1, 2009

You mention octal servers from Sun and HP for VM's, but does anybody really use these systems for VM's? I can't imagine why anybody would, since you are paying a serious premium for 8 sockets vs. 2 x 4 socket servers, or even 4 x 2 socket servers. Then the redundancy options are much lower when running only a few 8 socket servers vs many 2 or 4 socket servers when utilizing v-motion, and the expansion options are obviously far less w/ NIC's and HBA's. From what I've seen, most 8 socket systems are for DB's.

Veteran - Wednesday, April 1, 2009

What i mentioned after reading the review is there are very few benches on benchmarks a little bit favored by AMD.

For example, only 1 3DSmax test (so unusefull) at least 2 are needed

Only 1 virtualization benchmark, which is really a shame....

Virtualization is becoming so important and you guys only throw in one test?

Besides that, the review feels a bit biased towards intel, but i will check some other reviews of the xeon 5570

duploxxx - Wednesday, April 1, 2009

Virtualization benchmark come from the official Vmmark scores.

However there is something real strange going on in the results...

| Vendor | System | ESX version | VMmark version | Score | Sockets | Total cores | Total threads | Date |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| HP | HP ProLiant DL370 G6 | VMware ESX Build #148783 | VMmark v1.1 | 23.96 @ 16 tiles | 2 | 8 | 16 | 03/30/09 |
| Dell | Dell PowerEdge R710 | VMware ESX Build #150817 | VMmark v1.1 | 23.55 @ 16 tiles | 2 | 8 | 16 | 03/30/09 |
| Inspur | Inspur NF5280 | VMware ESX Build #148592 | VMmark v1.1 | 23.45 @ 17 tiles | 2 | 8 | 16 | 03/30/09 |
| Intel | Intel Supermicro 6026-NTR+ | VMware ESX v3.5.0 Update 4 | VMmark v1.1 | 14.22 @ 10 tiles | 2 | 8 | 16 | 03/30/09 |

So let's see: all the pre-release builds of ESX 3.5 Update 4 get a really high score of 16 tiles, almost as much as a 4S Shanghai, while the VMware performance team themselves stated that we should never see the HT core as a real CPU in VMware (even with the new code for HT). Yet the benchmark shows a high performance increase, and not, as AnandTech is stating, due to the more available memory and its bandwidth: those VMmarks are not memory starved. Now look at the official Intel benchmark with ESX Update 4: it provides 10 tiles and a healthy increase, which from a technical point of view seems much more realistic. All the other marketing stuff like switching time etc. is nice, but then again it is within the same line as the current Shanghai.

JohanAnandtech - Wednesday, April 1, 2009

What kind of tests are you looking for? The techreport guys have a lot of HPC tests, we are focusing on the business apps.

"very few benches on benchmarks a little bit favored by AMD."

That is a really weird statement. First of all, what is a test favored by AMD?

Secondly, this new kind of testing with OLTP/OLAP workloads was introduced in the Shanghai review. And it really showed IMHO that there was a completely wrong perception about Harpertown vs Shanghai, because Shanghai won in the tests that mattered the most to the market, while many tests (including Intel's) were emphasizing purely CPU-intensive stuff like Blackscholes, rendering and HPC tests. But that is a very small percentage of the market, and that created the impression that Intel was on average faster, but that was absolutely not the case.

"Only 1 virtualization benchmark, which is really a shame..."

Repeat that again in a few weeks :-). We have just successfully concluded our testing on Nehalem.

Personally I am a bit shocked about the "not enough tests" :-). Because any professional knows how hard these OLTP/OLAP tests are to set up and how much time they take. But they might not appeal to the enthusiast, I am not sure.