ATI Radeon HD 4350 and 4550: Great HTPC Solutions

by Derek Wilson on September 30, 2008 12:45 AM EST - Posted in GPUs

The Benefits Over Integrated Graphics

For our comparison to integrated graphics, we looked at two games: Crysis and Oblivion. These games tend to cover the spectrum fairly well from DX9 to DX10, and they tell the same story: integrated graphics suck.

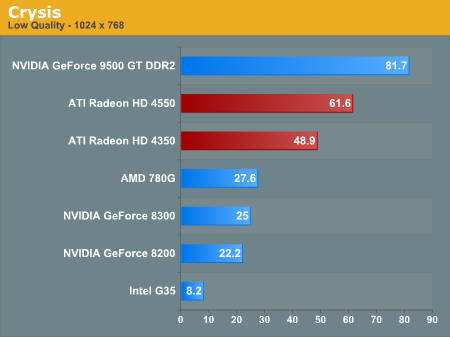

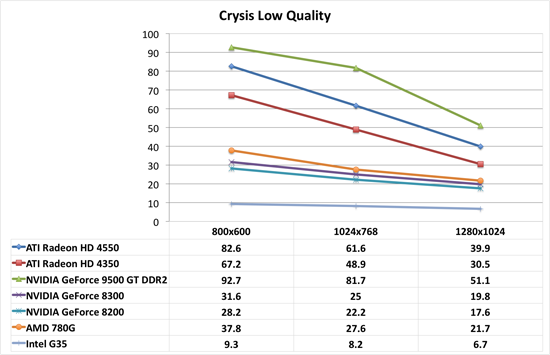

First up is Crysis. For integrated graphics, we needed to test everything at the absolute lowest settings, and even that was painful. It is too bad we couldn't test at 640x480, as that might have given some of this hardware a chance at playability. But in the tests we did run, none of our integrated solutions were really playable at 1024x768, and only the AMD 780G managed anything useful at 800x600. By contrast, the 4350 and the 4550 were both very playable at these very low quality settings. Pushing up to 1280x1024 was less successful, but the 4550 still held on to playable framerates at that resolution.

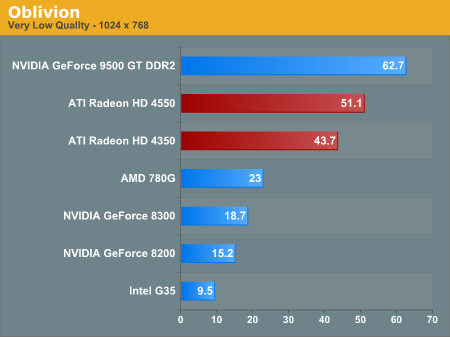

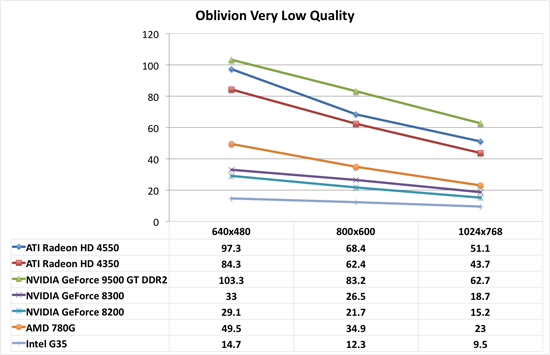

As for Oblivion, we see nearly the same behavior as with Crysis. There is a huge performance gap between integrated graphics and even the lowest-end add-in cards we are testing today. And these Oblivion settings are insanely ugly. We would never recommend playing at very low settings; it's a horrendous experience.

As usual, Intel's integrated graphics are the biggest joke of the bunch. But that's not any sort of feather in AMD's or NVIDIA's cap: Intel's G35 is just really horrible hardware for 3D.

So, with the benefit over integrated graphics well established, how do these parts compare to the slightly higher price bracket right next door? Let's take a look at how they compare to NVIDIA's 9500 GT DDR2 and AMD's 4670.

55 Comments


ThermoMonkey - Wednesday, October 8, 2008

Like many other readers, I was interested in this article because of its HTPC title. We all know the article wasn't written for the HTPC audience. Oh wait, at the very end he says "This hardware is currently where it's at for HTPCs. Both the 4550 and 4350 support 8-channel LPCM over HDMI." This only added to my confusion. First, what exactly provides this 8-channel LPCM support?

Isn't that provided by an SPDIF connection from the sound card?

From what I understand, this ATI card gets that SPDIF connection through the PCI bus, connecting the graphics card to the motherboard's sound card.

Now, if anyone knows anything about the nVidia 9500GT card, they would know that it has an SPDIF input to pass audio through the HDMI. Isn't that 8-channel LPCM??? And isn't that 8-channel LPCM totally dependent on the sound card's capabilities???

I know there isn't a graphics card out there that generates and provides audio; they can only pass it through. I would prefer the digital pass-through not be on the PCI bus, because maybe I don't like low-quality motherboard sound and want to send the SPDIF signal from my high-quality standalone sound card to my graphics card's HDMI output.

Again, correct me if I'm wrong.

Nil Einne - Thursday, February 5, 2009

You're wrong, as has already been mentioned, BTW. Most (all?) ATI cards of the 3xxx and 4xxx lines have an audio chip (usually Realtek, I believe) for outputting digital audio over HDMI (only). Nvidia cards don't have an audio chip, although in some cases it's possible to connect audio from the mobo (or sound card) via an internal S/PDIF connector (basically a two-pin cable), and you are then able to output audio over HDMI. However, I'm not aware of how widespread this is among Nvidia cards, nor what the limitations are (it will obviously depend on your mobo/sound card, but there will likely be additional limitations). AFAIK, it is not possible to get HDMI audio otherwise. While in theory you could send it over the PCI Express bus, this isn't done, as far as I'm aware. This is mentioned in a number of places besides here, BTW.

Nil Einne - Thursday, February 5, 2009

BTW, 'low quality motherboard sound' makes no sense. We're talking about a purely digital path here.

puddnhead - Friday, October 3, 2008

The headline "perfect HTPC cards" caught my eye, and I thought, "Great, I'm really starting to feel the limitations of the integrated X1250 graphics of my 690G chipset for HD video playback; let me see what picking up one of these cards will do for me." Then I read the review and... huh? You don't even LOOK at HTPC tasks like HD video playback! Just cookie-cutter tests of the same old fps in standard games. LOL, who is building a low-power, quiet HTPC to play games and only play games? You know what HTPC means, right?

I don't understand the point of this review, it makes a claim in the title and then there is zilch in the actual text to support it. Huh?

JonnyDough - Friday, October 3, 2008

"Using a $1450 processor, $240 mobo, $300 RAM and $400 PSU to test a $40 GPU is asinine. That does no service to the HTPC end user." Agreed. For gaming, rather than comparing it only to modern GPUs, how about comparing it to my old X1650XT? It's HDCP-enabled, although it lacks HDMI and sound. I had originally bought it for use in an HTPC, although it never got there. How would one of these cards perform with my Socket 939 2.0GHz Athlon X2 and 2GB of RAM? THAT is what I would make an HTPC out of. Forget buying a new processor JUST for an HTPC. Why bother when I can get a PS3?

Donkeyshins - Thursday, October 2, 2008

When are we going to start seeing these cards, especially the HD4550 Passive, at retailers? So far the Egg doesn't have anything, and a casual web search isn't turning up much either. Thanks!

Syclone - Wednesday, October 1, 2008

Unfortunately, these still don't seem to support lossless codecs that require protected-path audio (Dolby TrueHD/DTS-HD MA). So the highest-quality audio from Blu-ray will be passed at lower quality.

7Enigma - Wednesday, October 1, 2008

I think the author may be in hiding after this article... but here's a hint, in case the previous 41 comments didn't sink in: make sure your article title is backed up, even remotely, by the tests in the article itself.

Claiming something is great for one purpose, but testing it for irrelevant purposes, does not a good article make.

I really would like to hear the reasoning behind how this card was tested.

TA152H - Tuesday, September 30, 2008

I'm a little surprised there were no tests with the AMD IGP and these cards together. Individually, they don't perform so well, but did AnandTech forget that AMD now allows its IGPs to work with discrete cards, so you can get the benefit of both? Assuming even a 30% boost, the 4550 would change pretty considerably in terms of what it can and can't do.

Also, some people with older systems might be inclined to pop one of these in to run Vista. If these come in AGP, I'll surely buy a few; they are absolutely excellent cards for an incredibly low price. Sites like this can whine about what it isn't, but what it is will sell extremely well. The price is right, it will run Vista adequately, it offloads work from the processor for playback, and it is silent (the 4550, anyway). It's going to sell really well, especially to people with AMD IGPs who want them to work together. Again, it's a pity AnandTech didn't have the sense to try this out and see if it was worthwhile.