RAID Primer: What's in a number?

by Dave Robinet on September 7, 2007 12:00 PM EST - Posted in Storage

RAID 5

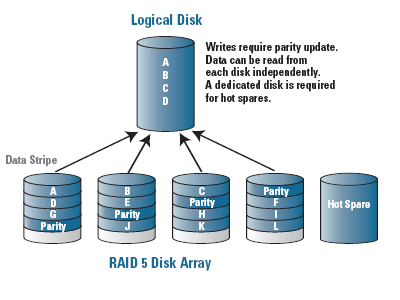

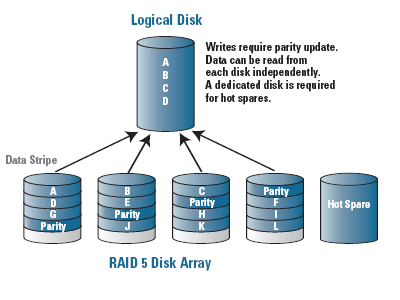

To balance performance against data redundancy, RAID 5 not only stripes data across multiple disks, but also writes a "parity" block for each stripe, which allows the array to recover from a single failed drive in the set. This parity block is staggered from one drive to the next, so that for any given stripe each drive holds either a portion of the data or the parity block from which missing data can be reconstructed. In this way, the array gains some of the performance benefits of striping data among multiple disks, while being able to stay online after the failure of a single disk in the array.
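As a rough illustration of how a parity block lets the array survive a lost drive, the sketch below assumes the conventional approach of computing parity as a bytewise XOR of the data blocks in a stripe. The block contents and the helper name `xor_blocks` are made up for the example and do not come from any particular controller.

```python
from functools import reduce

def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

# Three data blocks of one stripe (hypothetical contents), one per data disk.
data = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_blocks(data)

# Simulate losing the second disk: XOR the surviving data blocks with the
# parity block to get the missing block back.
survivors = [data[0], data[2], parity]
rebuilt = xor_blocks(survivors)
assert rebuilt == data[1]
```

Because XOR is its own inverse, the same routine serves both to create the parity block and to rebuild any single missing block.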

Rather than using a dedicated parity drive as in the less-popular RAID levels 3 and 4, RAID 5 distributes parity across all of the drives. This spreads drive accesses more evenly, resulting in improved write performance and a more even disk wear pattern than with a dedicated parity drive.
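The staggering can be pictured with a small layout sketch. The rotation rule used here (parity starts on the last disk and moves one disk to the left with each stripe) is only one possible arrangement, chosen for illustration; real controllers may rotate parity differently. The function names are hypothetical.

```python
def parity_disk(stripe, num_disks):
    """Index of the disk holding parity for a stripe (one possible rotation)."""
    return (num_disks - 1 - stripe) % num_disks

def layout(num_stripes, num_disks):
    """Return one row per stripe, marking each disk as data (D) or parity (P)."""
    rows = []
    for stripe in range(num_stripes):
        p = parity_disk(stripe, num_disks)
        rows.append(["P" if disk == p else "D" for disk in range(num_disks)])
    return rows

for row in layout(4, 4):
    print(" ".join(row))
# D D D P
# D D P D
# D P D D
# P D D D
```

Over any run of four stripes in this four-disk example, each disk holds parity exactly once, so no single drive absorbs all of the parity writes.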

In its optimal form, RAID 5 provides substantially faster read performance than a single drive or RAID 1 configuration, but write performance suffers due to the need to write (and sometimes recalculate) the parity information for the majority of writes performed. While this write performance is still faster than a single disk configuration in most cases, the true performance benefit of a RAID 5 array is in its read ability.
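To see where the write penalty comes from, consider a write that changes only one data block in a stripe. Assuming XOR parity, the controller can read the old data and old parity, then derive the new parity without touching the other disks, but that still means two reads and two writes for a single logical write. The helper names below are hypothetical.

```python
def xor_bytes(a, b):
    """Bytewise XOR of two equal-length blocks."""
    return bytes(x ^ y for x, y in zip(a, b))

def updated_parity(old_parity, old_data, new_data):
    """Parity after overwriting one block: P' = P xor D_old xor D_new."""
    return xor_bytes(xor_bytes(old_parity, old_data), new_data)

# A three-block stripe with made-up contents and its parity block.
stripe = [b"\x10\x20", b"\x0f\x0f", b"\xaa\x55"]
parity = xor_bytes(xor_bytes(stripe[0], stripe[1]), stripe[2])

# Overwrite the middle block and update parity from the old values only.
new_block = b"\xff\x00"
new_parity = updated_parity(parity, stripe[1], new_block)

# The shortcut matches a full recalculation over the whole stripe.
full = xor_bytes(xor_bytes(stripe[0], new_block), stripe[2])
assert new_parity == full
```

A controller with a large cache can often gather enough writes to fill a whole stripe and compute parity once, which is one reason cached controllers hide much of this penalty.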

It should be noted that the read performance of a RAID 5 array improves as the number of disks in the array increases. Adding disks, however, also raises the odds of a disk failure somewhere in the array, simply because there are more disks that can fail, and each failure brings degraded performance during the rebuild. It also increases the likelihood of the entire array being unrecoverable if a second disk in the array fails before the first failed disk is replaced. In the image shown, the array contains a "hot spare" disk - in the event of a disk failure, this "hot spare" disk would be brought into the array to replace the failed disk immediately.
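The effect of array size on failure odds can be estimated with a back-of-the-envelope calculation. The sketch below assumes that disks fail independently and that each has the same chance of failing over the period of interest; the 3% figure is purely a placeholder, not a measured failure rate.

```python
def p_any_failure(num_disks, p_disk):
    """Chance that at least one of num_disks independent disks fails."""
    return 1 - (1 - p_disk) ** num_disks

# Hypothetical per-disk failure probability over some period (an assumption).
P_DISK = 0.03

for n in (3, 5, 8, 12):
    print(f"{n:2d} disks: {p_any_failure(n, P_DISK):.1%} chance of at least one failure")
```

Real drives in the same chassis tend to share age, batch, and operating conditions, so failures are often less independent than this simple model assumes.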

RAID 5 finds a comfortable home in most "read often, write infrequently" server applications which require long periods of uptime, such as web servers, file/print servers, and database servers. Dedicated RAID 5 controllers that include large amounts of RAM can negate much of the write performance penalty, but such setups are quite a bit more costly. Note also that simplified RAID 5 controllers exist that require the CPU to perform the parity calculations, which can result in write performance that is lower than a single drive.

Pros:

RAID 6 attempts to address the most glaring of the RAID 5 issues: The comparatively large window in which the array is in a dangerous state due to a failed disk.

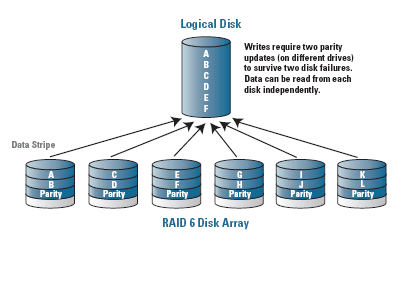

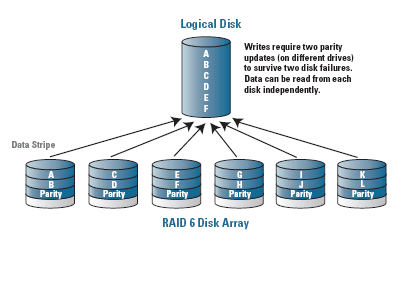

As in RAID 5, RAID 6 staggers its parity information across multiple drives. Its major difference, however, is that it writes two parity blocks for every stripe of data, which means that the array is capable of remaining accessible to the users even after having sustained two simultaneous drive failures. The advantage of a RAID 6 array versus a RAID 5 array with a hot spare risk is that no rebuilding is necessary to bring the last disk into the array in the event of a failure. In this sense, performance is more or less guaranteed after a single disk failure under RAID 6, whereas a significant performance hit occurs during the rebuilding required under RAID 5.

RAID 6's parity scheme is not simply multiple copies of the same parity information, but rather two different means of calculating parity information for the same data. This results in a much higher computing overhead than the already-intensive RAID 5 scheme, and a resulting increase in controller/CPU usage. This requirement for the second parity calculation and write, however, further adversely impacts the write performance of a RAID 6 array versus a RAID 5 solution.

RAID 6 is an excellent choice for both extremely mission-critical applications and in instances where large numbers of disks are intended to be used in the array to improve read performance. Because of this (and the poor write performance without special hardware), RAID 6 support is typically only included in high-end, expensive controller cards.

Pros:

In an effort to strike a balance between performance and data redundancy, RAID 5 not only stripes data across multiple disks, but also writes a "parity" block among those disks which allows the array to recover from a single failed drive in the set. This parity block is staggered from one drive to the next, resulting in each drive having either a portion of the data that is trying to be read, or the parity block which allows the data to be reconstructed. In this way, the array gains some performance benefits in having the data striped among multiple disks, while being able to stay online after the failure of a single disk in the array.

Rather than having a dedicated drive for parity as in the less-popular RAID levels 3 and 4, the parity sharing of RAID 5 allows for a more distributed drive access pattern, resulting in improved write performance and a more even disk wear pattern than with a dedicated parity drive.

In its optimal form, RAID 5 provides substantially faster read performance than a single drive or RAID 1 configuration, but write performance suffers due to the need to write (and sometimes recalculate) the parity information for the majority of writes performed. While this write performance is still faster than a single disk configuration in most cases, the true performance benefit of a RAID 5 array is in its read ability.

It should be noted that the read performance of a RAID 5 array improves as the number of disks in the array increases. This increase in disks, however, increases the odds of a disk failure in the array due to the law of averages, which results in a performance degradation during rebuilding operations. It also increases the likelihood of the entire array being unrecoverable if a second disk in the array fails before the first failed disk is replaced. In the image shown, the array contains a "hot spare" disk - in the event of a disk failure, this "hot spare" disk would be brought into the array to replace the failed disk immediately.

RAID 5 finds a comfortable home in most "read often, write infrequently" server applications which require long periods of uptime, such as web servers, file/print servers, and database servers. Dedicated RAID 5 controllers that include large amounts of RAM can negate much of the write performance penalty, but such setups are quite a bit more costly. Note also that simplified RAID 5 controllers exist that require the CPU to perform the parity calculations, which can result in write performance that is lower than a single drive.

Pros:

- Good usable amount of data (capacity is the sum of all but one drive in the set)

- Fault-tolerant - can survive one disk failure without impact to users

- Strong read performance

- Write performance (without a large controller cache) is substantially below that of RAID 0

- Expensive (either in terms of controller cost or CPU usage) due to parity calculations

RAID 6 attempts to address the most glaring of the RAID 5 issues: The comparatively large window in which the array is in a dangerous state due to a failed disk.

As in RAID 5, RAID 6 staggers its parity information across multiple drives. Its major difference, however, is that it writes two parity blocks for every stripe of data, which means that the array is capable of remaining accessible to the users even after having sustained two simultaneous drive failures. The advantage of a RAID 6 array versus a RAID 5 array with a hot spare disk is that no rebuilding is necessary to bring the last disk into the array in the event of a failure. In this sense, performance is more or less guaranteed after a single disk failure under RAID 6, whereas a significant performance hit occurs during the rebuilding required under RAID 5.

RAID 6's parity scheme is not simply multiple copies of the same parity information, but rather two different means of calculating parity information for the same data. This results in a much higher computing overhead than the already-intensive RAID 5 scheme, and a resulting increase in controller/CPU usage. The second parity calculation and write also further reduce the write performance of a RAID 6 array compared with a RAID 5 solution.
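The text above does not say which two calculations are used. One widely used scheme, assumed here for illustration, keeps the ordinary XOR parity (often called P) and adds a second block (Q) in which each data block is first multiplied by a different power of 2 in the finite field GF(2^8) before being XORed in. The sketch shows that any two lost data blocks can then be solved for. The function names and sample data are hypothetical, and a real implementation would use lookup tables or SIMD rather than byte loops.

```python
# Exponent/log tables for GF(2^8) with polynomial x^8 + x^4 + x^3 + x^2 + 1 (0x11d).
EXP = [0] * 510
LOG = [0] * 256
value = 1
for i in range(255):
    EXP[i] = value
    LOG[value] = i
    value <<= 1
    if value & 0x100:
        value ^= 0x11d
for i in range(255, 510):
    EXP[i] = EXP[i - 255]

def gf_mul(a, b):
    """Multiply two field elements."""
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]

def gf_div(a, b):
    """Divide a by a non-zero field element b."""
    if a == 0:
        return 0
    return EXP[(LOG[a] - LOG[b]) % 255]

def pq_parity(blocks):
    """Return (P, Q): P is plain XOR, Q weights block i by 2**i in the field."""
    length = len(blocks[0])
    p, q = bytearray(length), bytearray(length)
    for i, block in enumerate(blocks):
        coeff = EXP[i]
        for j, byte in enumerate(block):
            p[j] ^= byte
            q[j] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)

def recover_two(blocks, x, y, p, q):
    """Rebuild missing data blocks x and y from the survivors plus P and Q."""
    length = len(p)
    zero = bytes(length)
    # Parity of the surviving blocks, with the missing ones treated as zeros.
    partial = [zero if i in (x, y) else b for i, b in enumerate(blocks)]
    p_part, q_part = pq_parity(partial)
    gx, gy = EXP[x], EXP[y]
    dx, dy = bytearray(length), bytearray(length)
    for j in range(length):
        a = p[j] ^ p_part[j]   # equals Dx xor Dy
        b = q[j] ^ q_part[j]   # equals gx*Dx xor gy*Dy
        dx[j] = gf_div(b ^ gf_mul(gy, a), gx ^ gy)
        dy[j] = a ^ dx[j]
    return bytes(dx), bytes(dy)

data = [b"RAID", b"six!", b"demo", b"data"]
p, q = pq_parity(data)
damaged = [data[0], None, data[2], None]   # two data disks lost
assert recover_two(damaged, 1, 3, p, q) == (data[1], data[3])
```

The field multiplications in Q are what make this noticeably more expensive than the single XOR pass that RAID 5 needs.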

RAID 6 is an excellent choice both for extremely mission-critical applications and for arrays in which large numbers of disks are used to improve read performance. Because of this (and the poor write performance without special hardware), RAID 6 support is typically only included in high-end, expensive controller cards.

Pros:

- Fair usable amount of data (sum of all but two drives in the set)

- Provides more comfortable levels of redundancy for very large array sizes (8+ disks)

- Strong read performance

Cons:

- Expensive (in computing power, controller cost, and additional "wasted" disks)

- Write performance is generally very poor compared to other RAID solutions
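The capacity figures quoted in the lists for RAID 5 and RAID 6 reduce to simple arithmetic. The sketch below assumes all drives in the set are the same size; the function name and the 8 x 500 GB example are illustrative only.

```python
def usable_capacity(level, num_disks, disk_size_gb):
    """Usable capacity in GB for a RAID 5 or RAID 6 set of equal-sized disks."""
    parity_disks = {5: 1, 6: 2}[level]
    min_disks = parity_disks + 2   # 3 disks for RAID 5, 4 for RAID 6
    if num_disks < min_disks:
        raise ValueError(f"RAID {level} needs at least {min_disks} disks")
    return (num_disks - parity_disks) * disk_size_gb

# Eight 500 GB drives (hypothetical array).
print(usable_capacity(5, 8, 500))  # 3500
print(usable_capacity(6, 8, 500))  # 3000
```

The fixed cost of one or two drives' worth of parity matters less as the array grows, which is part of why RAID 6 is usually suggested for larger sets.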

41 Comments

ShadowFlash - Monday, March 2, 2009

RAID 10 not as fault tolerant as RAID 5 ??? unlikely...RAID 5 is used when capacity cannot be sacrificed for the increased data protection of RAID 10. Yes, RAID 0+1 is horrible, and should be avoided as mentioned in other posts. RAID 10 sets will absolutely rebuild faster than a RAID 5 in almost all situations. With the dirt cheap pricing of modern large capacity drives, I can think of almost no situation where RAID 5 is preferable to RAID 10. The flaw is in the way hard drives die, and parity. I was going to type out a long explanation, but this link covers it well.

http://miracleas.com/BAARF/RAID5_versus_RAID10.txt

I strongly urge any home user not to use RAID 5 (or any other parity form of RAID). RAID 5 is antiquated and left over from the days when cost vs capacity was a major concern. RAID 10 also doesn't require as expensive of a controller card.

And remember if you do insist on RAID 5 to never use it as a system disk. The parity overhead from the many small writes an OS performs is far too great a penalty.

I'm not trying to start a fight, just trying to educate on the flaws of parity.

Codesmith - Sunday, September 9, 2007

The drives in my 2 drive RAID 1 array are 100% readable as normal drives by any SATA controller.

With any other RAID configuration you are dependent on remembering the proper settings, performing the rebuild properly and, most importantly, finding a compatible controller.

Until the manufacturers decide to standardize, the system you have in place to protect your data could have you waiting days to access your data.

I am planning to add a RAID 5/6 array for home theater usage, but the business documents are staying on the RAID 1 array.

Anonymous Freak - Saturday, September 8, 2007

That's my acronym for it. It also describes my desire for it.

RAID = Redundant Array of Independent Disks.

AIDS = Array of Independent Disks, Striped.

"RAID" 0 has very few legitimate uses. If you value the data stored at all, and have any care at all about uptime, it's inappropriate. If all you want is an ultra-fast 'scratch' disk, it is appropriate. Before ultra-large drives, I used a RAID-0 of 9 GB, 10k RPM SCSI drives as my capture and edit partition for video editing, and that's about it. Once the editing was done, I wrote the finished file back out to DV tape, and transcoded to something more manageable for computer use, and storage on my main ATA hard drive.

MadAd - Saturday, September 8, 2007

[quote]"Higher quality RAID 1 controllers can outperform single drive implementations by making both drives active for read operations. This can in theory reduce file access times (requests are sent to whichever drive is closer to the desired data) as well as potentially doubling data throughput on reads"[/quote]

It's not the best place to post here I know, but as a home user with a 1tb 0+1 pata array on a promise fastrack (on a budget) I was thinking of looking on ebay for a reliable replacement controller with the above characteristics, but dont know what series cards are both inexpensive for a second user now and fit an x32 pci.

Thanks a lot

tynopik - Saturday, September 8, 2007

> but as a home user with a 1tb 0+1 pata array on a promise fastrack (on a budget) I was thinking of looking on ebay for a reliable replacement controller with the above characteristics, but dont know what series cards are both inexpensive for a second user now and fit an x32 pci.

saying you currently have a 0+1 array i assume you have at least 4 drives, probably 4 500gb drives

since 0+1 provides the speed of raid0 with the mirroring of raid1 i'm not sure what you're looking for. if you went for a straight raid1 solution your system would see 2 500gb volumes instead of 1 1tb volume.

and not sure what you mean by x32 pci, just a regular pci slot? if you're talking about PCIe they only go to x16 and can't say i'm aware of any 'reasonable' card that uses more than x8

MadAd - Sunday, September 9, 2007

urm....no

4x250 and im wondering what enterprise class controller is cheap on ebay that uses pata drives, an x32 pci slot

(not pcie, see- http://en.wikipedia.org/wiki/Peripheral_Component_...

and performs as quoted from the article, because my promise controller is good but still a home class controller. Just i dont know the enterprise segment at all and I thought some of these guys would.

tynopik - Sunday, September 9, 2007

> 4x250

then you aren't using raid0+1, just raid0

> (not pcie, see- http://en.wikipedia.org/wiki/Peripheral_Component_...

it's the x32 that is confusing

if you search that page you will see x32 doesn't show up anywhere on it

i'm going to assume you just mean 32-bit PCI which is standard which is what practically every motherboard manufactured today has at least one of

but still i can't answer your question about which PCI (no need to say 32-bit, it's assumed) raid controllers support IDE drives with enhanced read speed, sorry

MadAd - Sunday, September 9, 2007

http://en.wikipedia.org/wiki/Peripheral_Component_...

Zak - Saturday, September 8, 2007

I gave up on RAID "for protection" a long time ago. I tried everything from software RAID to on-board 1 and 5, and to $300 cards with controllers and on-board RAM and 5 hard drives. It is not worth the hassle for home use or even small business, period. I absolutely agree with that article from Pudget computers. I had more problems due to raid controllers acting up than hard drive failures. RAID will not protect you against directory corruption, accidental deletion and infections - things that happen A LOT more often than hard drive failures. RAID adds a level of complexity that involves extra maintenance and extra cost.

My current solution is two external FW or USB drives. I run two redundant Retrospect backups every 12 hours, one right after another that backs up my storage drive plus one at night that mirrors the drive to another. It's probably overkill but I'll take it over any RAID5 any time: three separate drives, three separate file systems - total protection against file deletion, directory corruption and infections (the external drives are dismounted between backups). I do the same on Macs and PCs.

I still may use RAID0 for scratch and system disks for speed, but my files are kept on a separate single drive that gets triple backup love.

Zak

Sudder - Saturday, September 8, 2007

Hi,

can anybody point me in the right direction?

I want to switch from backing up my porn (lets call it data ;-) ) on DVD to saving my data on HDs (since cost/gig aren't that far apart anymore and blu-ray will IMHO not catch up fast enough in regards of cost/gig to be a good alternative for me).

But since losing 1 HD (which can always happen) puts one back a couple of 100gigs at once I want some redundancy.

Going for RAID 5 (I'm not willing to spend the extra money on RAID 6) has the huge disadvantage (for _my_ scenario) that if I lose 2 HDs (which also might happen since I plan to store the discs "offline" most of the time) _all_ my data is gone.

So I'm looking for a solution which stores my data in a "normal" way on the discs + one extra disk with the parity (somewhat like RAID 3 but without the striping).

I don't care about read/write speed too much, I just want the redundancy and the cost effectiveness of RAID 5 (RAID 1 would also be too expensive for me) but without the danger of losing all if more than 1 disc is gone*. Also, if I just want to read some data this way it should be sufficient to plug in just one disc instead of the whole array with RAID 5.

So, does anyone know if such a solution is already implemented somewhere? (it also should be able to calculate the parity "on the fly" so that if I change one single file on one of the discs I don't have to wait until the parity of the whole array is recalculated but just for the corresponding sectors that have actually changed)

* this solution isn't that much better than RAID 5 with small arrays, but the more discs there are in the array, the more data will survive if 2 (or even more) discs die - with RAID 5 all is lost (and going for multiple 3 disc RAID 5 arrays isn't very cost effective)