Quad Core Intel Xeon 53xx Clovertown

by Johan De Gelas on December 27, 2006 5:00 AM EST - Posted in IT Computing

Quad Core Choices

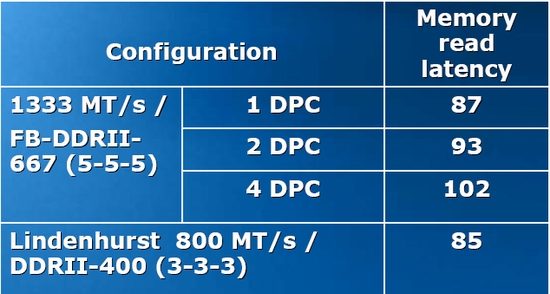

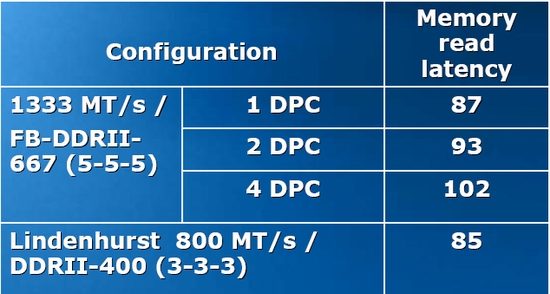

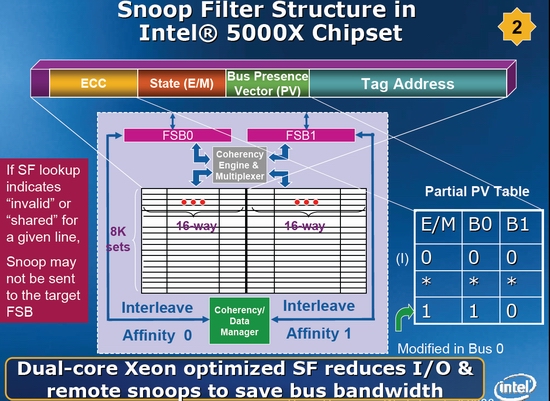

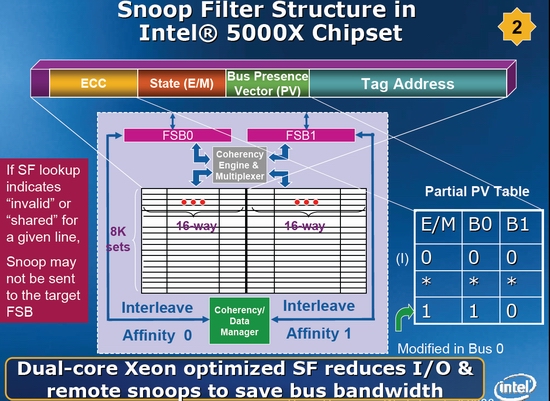

True, a "dual dual core" is not really a quad core in the way AMD's upcoming quad core Barcelona chip is. However, from an economic point of view it makes a lot of sense: not only is there the marketing advantage of being the "first quad core" processor, but - according to Intel - using two dual cores gives you 20% higher die yields and 12% lower manufacturing costs compared to a simulated but similar quad core design. Economics isn't the only perspective, of course, and from a technical point of view there are some drawbacks.

Per core bandwidth is one of them, but frankly it receives too much attention. Only HPC applications really benefit from high bandwidth. Let us give you one example. We tested the Xeon E5345 with two and four channels of FB-DIMMs, so basically we tested the CPU with about 8.5GB/s and 17GB/s of memory bandwidth. The result was that 3dsmax didn't care (1% difference) and that even the memory intensive benchmark SPECjbb2005 showed only an 8% difference. Intel's own benchmarks support this: when you increase bandwidth by 25% using a 1333 MHz FSB instead of a 1066 MHz one, the TPC score is about 9% higher running on the fastest Clovertown. That performance boost would be a lot smaller for database applications that do not use 3TB of data. Thus, memory bandwidth is overrated for most applications, and thus for most IT professionals. Memory latency on the other hand can be a critical factor.
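The bandwidth figures quoted here follow from simple arithmetic. The sketch below is only a back-of-the-envelope check, not how the numbers were measured. It assumes each FB-DIMM channel is backed by DDR2-533 with a 64-bit (8-byte) data path, an assumption chosen because it reproduces the 8.5GB/s and 17GB/s figures. The helper names are hypothetical.

```python
# Back-of-the-envelope bandwidth arithmetic (illustrative sketch).
# Assumption: each channel moves 8 bytes per transfer at 533 MT/s (DDR2-533).

BYTES_PER_TRANSFER = 8  # assumed 64-bit data path per channel


def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10^9 bytes)."""
    return channels * mega_transfers_per_s * 1e6 * BYTES_PER_TRANSFER / 1e9


def gain_ratio(perf_gain_pct: float, bandwidth_gain_pct: float) -> float:
    """Share of an extra-bandwidth percentage that shows up as performance."""
    return perf_gain_pct / bandwidth_gain_pct


two_ch = peak_bandwidth_gbs(2, 533)      # about 8.5 GB/s
four_ch = peak_bandwidth_gbs(4, 533)     # about 17 GB/s
fsb_gain_pct = (1333 / 1066 - 1) * 100   # about 25% more FSB bandwidth

print(f"2 channels: {two_ch:.1f} GB/s, 4 channels: {four_ch:.1f} GB/s")
print(f"FSB 1066 -> 1333: +{fsb_gain_pct:.0f}% bandwidth")

# Doubling memory bandwidth (+100%) gave 3dsmax +1% and SPECjbb2005 +8%.
print(f"3dsmax: {gain_ratio(1, 100):.2f}, SPECjbb2005: {gain_ratio(8, 100):.2f}")
# +25% FSB bandwidth gave about +9% on the TPC score.
print(f"TPC: {gain_ratio(9, fsb_gain_pct):.2f}")
```

A ratio close to 1 would mean the workload is bandwidth bound. All three ratios are well below that, which is the point the paragraph makes.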

Unfortunately for Intel, that is exactly the Achilles heel of its current server platform. The numbers below are expressed in clock cycles, except the last column, where we measure in nanoseconds. All measurements were done with the latency test of CPU-Z.

CPU-Z Memory Latency

| CPU | L1 (cycles) | L2 (cycles) | L3 (cycles) | Min mem (cycles) | Max mem (cycles) | Absolute latency (ns) |
|---|---|---|---|---|---|---|
| Dual DC Xeon 5160 3.0 | 3 | 14 | N/A | 69 | 380 | 127 |
| Dual DC Xeon 5060 3.73 | 4 | 30 | N/A | 200 | 504 | 135 |
| Dual Quad Xeon E5345 2.33 | 3 | 14 | N/A | 80 | 280 | 120 |
| Quad DC Xeon 7130M 3.2 | 4 | 29 | 109 | 245 | 624 | 195 |
| Quad Opteron 880 2.4 | 3 | 12 | N/A | 84 | 228 | 95 |
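The nanosecond column can be cross-checked against the cycle counts. The sketch below assumes that the absolute latency is the "max mem" cycle count divided by the clock speed in GHz. That is our reading of the table rather than something CPU-Z documents here, and the function name is hypothetical.

```python
# Convert a latency in clock cycles to nanoseconds: ns = cycles / clock (GHz).
# Inputs are the "max mem" cycle counts and clock speeds from the table.


def cycles_to_ns(cycles: float, clock_ghz: float) -> float:
    """One cycle at f GHz lasts 1/f ns, so n cycles take n/f ns."""
    return cycles / clock_ghz


systems = {
    "Xeon 5160": (380, 3.0),
    "Xeon 5060": (504, 3.73),
    "Xeon E5345": (280, 2.33),
    "Xeon 7130M": (624, 3.2),
    "Opteron 880": (228, 2.4),
}

for name, (cycles, ghz) in systems.items():
    print(f"{name}: {cycles_to_ns(cycles, ghz):.0f} ns")
```

The rounded results match the last column (127, 135, 120, 195 and 95 ns), which also shows why cycle counts alone are misleading: the 3.73 GHz Xeon 5060 needs far more cycles than the E5345, yet the gap in nanoseconds is much smaller.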

Anand's memory latency tests measured a 70 ns latency on a Core 2 using DDR2-667 and a desktop chipset, and a 100 ns latency for the same CPU with a server chipset and 667 MHz FB-DIMMs. Let us add about 10 ns of latency for using buffered instead of unbuffered DIMMs and for using the 5000p chipset instead of Intel's desktop chipset. Based on those assumptions, a theoretical Xeon DP should be able to access 667 MHz DDR2 DIMMs in about 80 ns. So using FB-DIMMs results in 25% more latency compared to DDR2, which is considerable. Intel is well aware of this as you can see from the slide below.
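Spelled out, the estimate is just two steps. The snippet below restates the figures from the paragraph; the 10 ns overhead is the assumption made in the text, not a measurement, and the variable names are ours.

```python
# Latency penalty estimate for FB-DIMM, using the figures quoted in the text.
desktop_ddr2_ns = 70       # Core 2, DDR2-667, desktop chipset (measured)
server_overhead_ns = 10    # assumed cost of buffered DIMMs plus server chipset
fbdimm_measured_ns = 100   # same CPU, server chipset, 667 MHz FB-DIMM (measured)

# Step 1: what a Xeon DP with plain buffered DDR2 would be expected to reach.
theoretical_ddr2_server_ns = desktop_ddr2_ns + server_overhead_ns

# Step 2: how much slower the measured FB-DIMM latency is than that estimate.
penalty_pct = (fbdimm_measured_ns / theoretical_ddr2_server_ns - 1) * 100

print(f"Theoretical DDR2 Xeon DP: {theoretical_ddr2_server_ns} ns")
print(f"FB-DIMM penalty: {penalty_pct:.0f}%")
```

Note that the 25% figure depends directly on the assumed 10 ns overhead. A larger overhead would shrink the penalty attributed to FB-DIMM itself.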

An older chipset (Lindenhurst) with slower memory (DDR2-400) is capable of offering lower latency than a sparkling new one. FB-DIMM offers a lot of advantages, such as dramatically higher bandwidth per pin and higher capacity. FB-DIMM is a huge step forward from the motherboard designer's point of view. However, with 25% higher latency and 3-6 Watts more power consumption per DIMM, it remains to be seen whether it is really a step forward for the server buyer.

How about bandwidth? Our bandwidth tests couldn't find any real advantage for FB-DIMM. The 533 MHz DDR2 chips delivered about 3.7GB/s (via SSE2), and about 2.7GB/s in "normal" conditions (non-SSE compiled). Compared to DDR400 on the Opteron (4.5GB/s max, 3.5GB/s in "normal" conditions), this is nothing spectacular. Of course, we tested with STREAM and ScienceMark, and these are single threaded numbers. Right now we don't have the right multithreaded benchmarking tools to really compare the bandwidth of complex NUMA systems such as our HP DL585 or the DIB (Dual Independent Bus) of the Xeon system.

There are much bigger technical challenges than bandwidth. Two Xeon 53xx CPUs have a total of four L2 caches, which all must remain consistent. That results in quite a bit of cache coherency traffic that has to pass over the FSB to the chipset, and from the chipset to the other independent FSB. To avoid making the four cache controllers listen to (snoop) all those messages, Intel implemented a "snoop filter", a sort of cache that keeps track of the coherency state info of all cache lines mapped. The snoop filter tries to prevent unnecessary cache coherency traffic from being sent to the other independent bus.
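To make the idea more concrete, here is a toy model of a snoop filter between two independent buses. It is a deliberately simplified sketch and not a description of Intel's implementation: the class, its methods and the unlimited-capacity dictionary are all hypothetical, and a real filter has a finite number of entries, has to evict them, and tracks richer coherency state.

```python
# Toy model of a snoop filter sitting between two independent buses.
# Illustrative only: names and data structure are hypothetical and far
# simpler than any real chipset (no capacity limit, no evictions).


class SnoopFilter:
    def __init__(self, buses=(0, 1)):
        self.buses = tuple(buses)
        self.holders = {}          # cache line address -> bus ids that may cache it
        self.snoops_sent = 0       # snoops that had to cross to another bus
        self.snoops_filtered = 0   # snoops the filter made unnecessary

    def access(self, bus, line, write=False):
        """Record an access from `bus` and return the buses that must be snooped."""
        holders = self.holders.setdefault(line, set())
        # Only buses that may hold the line need to see the request.
        targets = [b for b in self.buses if b != bus and b in holders]
        self.snoops_sent += len(targets)
        self.snoops_filtered += len(self.buses) - 1 - len(targets)
        if write:
            holders.clear()        # a write invalidates all other copies
        holders.add(bus)
        return targets


sf = SnoopFilter()
print(sf.access(0, 0x1000))              # line is new: nothing to snoop
print(sf.access(1, 0x1000))              # bus 0 may hold it: snoop bus 0
print(sf.access(1, 0x1000, write=True))  # write: snoop bus 0, then invalidate it
print(sf.access(1, 0x1000))              # bus 1 is now the only holder
print(sf.snoops_sent, sf.snoops_filtered)
```

Without the filter, every one of these four requests would have been forwarded to the other bus. In this toy run only two of them are.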

The impact that cache coherency has on performance is not something only academics discuss; it is a real issue. Intel's successor to the 5000p chipset, codenamed "Seaburg", will feature a larger and more intelligent snoop filter (SF) and is expected to deliver 5% higher performance in bandwidth/FP intensive applications (measured in LS-DYNA, Fluent and SPECfp). Seaburg's larger SF will be split up into four sets instead of Blackford's two, which allows it to keep track of each separate L2 cache more efficiently.

To quantify the delay that a "snooping" CPU encounters when it tries to get up-to-date data from another CPU's cache, take a look at the numbers below. We have used Cache2Cache before, and you can find more info here. Cache2Cache measures the propagation time from a store by one processor to a load by the other processor. The results that we publish are approximately twice the propagation time.

Cache2Cache Latency

| Cache coherency ping-pong (ns) | Xeon E5345 | Xeon DP 5160 | Xeon DP 5060 | Xeon 7130 | Opteron 880 |
|---|---|---|---|---|---|
| Same die, same package | 59 | 53 | 201 | 111 | 134 |
| Different die, same package | 154 | N/A | N/A | N/A | |
| Different die, different socket | 225 | 237 | 265 | 348 | 169-188 |
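Since the published figures are approximately twice the propagation time, halving them gives a rough one-way estimate. The snippet below does that for the "different die, different socket" row. It is only an approximation under that stated factor of two, and the function name is ours.

```python
# Published Cache2Cache numbers are roughly a round trip (ping-pong),
# so halving them gives a rough one-way propagation estimate.


def one_way_ns(ping_pong_ns: float) -> float:
    """Approximate store-to-load propagation time from a ping-pong figure."""
    return ping_pong_ns / 2


cross_socket_ns = {
    "Xeon E5345": 225,
    "Xeon DP 5160": 237,
    "Xeon DP 5060": 265,
    "Xeon 7130": 348,
}

for cpu, ping_pong in cross_socket_ns.items():
    print(f"{cpu}: ~{one_way_ns(ping_pong):.1f} ns one way")

# The Opteron 880 is reported as a range rather than a single value.
opteron_low, opteron_high = one_way_ns(169), one_way_ns(188)
print(f"Opteron 880: ~{opteron_low:.1f} to {opteron_high:.1f} ns one way")
```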

The Xeons based on the Core architecture (E5345 and 5160) can keep cache coherency latency between the two cores on a die to a minimum thanks to the shared L2 cache. When exchanging cache coherency information from one die to another, the Opteron does have an advantage: exchanging data goes 7 to 25% quicker. Note that the "real cache coherency latency" when running a real world workload is probably quite a bit higher for the Xeons. When the FSB has to transfer a lot of data to the memory, the FSB will be "less available" for cache coherency traffic.

The Opteron platform can handle its cache coherency traffic via the HyperTransport links, while most of the data is transferred by the onboard memory controller to the local memory. Unless there is a lot of traffic to remote memory, the Opteron doesn't have to send the cache coherency traffic the same way as the data being processed.

So when we look at our benchmarking numbers, it is good to remember that cache coherency traffic and high latency accesses to the memory might slow our multiprocessing systems down.

15 Comments

Antinomy - Wednesday, March 7, 2007

A great review, very interesting. But there are a few things to mention. There is a mistake in the results of the Cinebench test: in the overall table the uni Clovertown system got 1272 points, but in the next (per core performance) table it got 1169. The result was swapped with that of the Xeon 7130. And a comment about the scalability extrapolation: the scalability of the 2.33 Clovertown vs the 3.0 dual Woodcrest can hardly be compared due to the different organization of the systems. These motherboards have two independent FSBs, which means that the two Woodcrests are provided with twice the peak memory bandwidth. This cannot fail to influence the result. Also, the 4 channel memory mode provides a 5% increase in real bandwidth versus 2 channel, so we can't say that these applications do not suffer from a lack of memory bandwidth.

It would be interesting to see a test of a uni Woodcrest system and a test of a Woodcrest-based system (both uni and dual) at the same frequency as Clovertown. Kentsfield/Conroe systems (even though they aren't server parts) would also be nice to look at because of their more efficient usage of memory bandwidth and FSB.

afuruhed - Thursday, December 28, 2006

We are getting more Clovertowns. There is a chart at [pantor.com](http://www.pantor.com/software.html) that indicates that some applications benefit a lot. [The FIX protocol](http://en.wikipedia.org/wiki/FIX_protocol) is a technical specification for electronic communication of trade-related messages (financial markets).

henriks - Thursday, December 28, 2006

Agree with other responses - good article!

Some comments on the jbb results page:

You state that JRockit is (only) available for x86-64 and Itanium. x86 and Sparc should be added to this list.

The JRockit configuration you're using enables a single-spaced GC. In that configuration, performance is tied to heap size (larger heap means fewer GC events). Increasing the heap size to 3 GB - as for the Sun benchmark results - would increase performance slightly but in particular give much better scalability when you increase the number of warehouses to large numbers.

It looks like you have not enabled large pages in the OS. Doing this would give a large performance boost and help scalability regardless of chip or JVM vendor.

Astute readers may note that your results are lower than the published results on www.spec.org. Apart from OS and possibly BIOS tuning, the reason is that the most recent results are using a newer JRockit version (not yet available for public download). This new version improves performance on this benchmark by 20-30% on x86 chips - Intel *and* AMD - with the largest positive effect on high-bin chips from the respective vendors. The effect on other Java applications varies from zero to a lot.

Cheers!

Henrik, JRockit team

dropadrop - Wednesday, December 27, 2006

Considering how much we just paid for some DL585's compared to DL380's I think the performance is pretty impressive. There is still something the DL380's (and most other two socket servers) can't do, and that is hosting 64GB or more RAM.

I mainly take care of VMware servers, and there the amount of memory becomes a bottleneck long before the processors, at least in most setups. I don't think I'd have a lot of use for octal processors unless I got a minimum of 32GB of RAM, probably 64.

rowcroft - Thursday, December 28, 2006

I've run into the same challenge when planning for the quads. My take is that I'm getting dual quads for half the price of quad dual cores. With ESX 3's HA functionality I can group the host servers and get the 32GB of RAM with double the cores and have host based redundancy for critical VMs.

mino - Thursday, December 28, 2006

There is another thing the DL380 lacks: no drop-in analog to Barcelona on the horizon...

Justin Case - Wednesday, December 27, 2006

Finally a good article at AT, written by someone who knows what he's talking about. Meaningful benchmarks, meaningful comments, and conclusions that make sense. If only some Johanness could rub off on other AT writers...

hans007 - Wednesday, December 27, 2006

I think an alternative to, say, a dual dual core AMD, even as a server or workstation, is a quad core socket 775 CPU. I know the lower 3xxx series Xeons are made for this (and are exactly the same as Core 2 Duo), so you could do a comparison of 2 AMD dual cores vs a single 775 quad with ECC DDR2 etc.

mino - Thursday, December 28, 2006

Check QuadFX vs. Kentsfield reviews.

With ECC both results will be a bit lower but the comparison remains.

A small hint: NO ONE tested QuadFX as a DB server against Kentsfield....

Guess what: Quad FX is cheaper and would rule the roost on server-like tasks.

ltcommanderdata - Wednesday, December 27, 2006

Well it's nice to finally see a review of the 5145, although I was hoping for more detailed power consumption numbers. The performance benchmarks were very detailed though, which was great.

Thought I would point out a few errors I noticed as I was flipping through. First on page 2, in the Cache2Cache Latency chart the 201 for the Xeon DP 5060 that is placed in the "Same die, same package" row should be in the "Different die, same package" row. Dempsey uses a dual die approach like Presler and Clovertown as opposed to a single die approach like Smithfield and Paxville DP. And on the last page in the conclusion, you mentioned Clarksboro having "four DIBs", which implies 8 FSBs. I believe that should read two DIBs or really a Quad Independent Bus (QIB) since I'm pretty sure it only has 4 FSBs. (On a side note, Intel slides showed those 4 FSBs clocked at 1066MHz, which is really disappointing. Hopefully, now that Clovertown turns out to come in 1333MHz versions instead of only the 1066MHz versions that were first announced, Tigerton (and therefore Clarksboro), which is based on Clovertown, will also have 1333MHz versions.)