NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST. Posted in GPUs

Texture Filtering Image Quality

Texture filtering is always a hot topic when a new GPU is introduced. For the past few years, every new architecture has had a new take on where and how to optimize texture filtering. The community is also very polarized and people can get really fired up about how this company or that is performing an optimization that degrades the user's experience.

The problem is that all 3D graphics is an optimization problem. If GPUs were built to render every detail of every scene without any optimization, we would be looking at seconds per frame rather than frames per second. Despite this, looking at the highest quality texture filtering available is a great place from which to start working our way down to what most people will use.

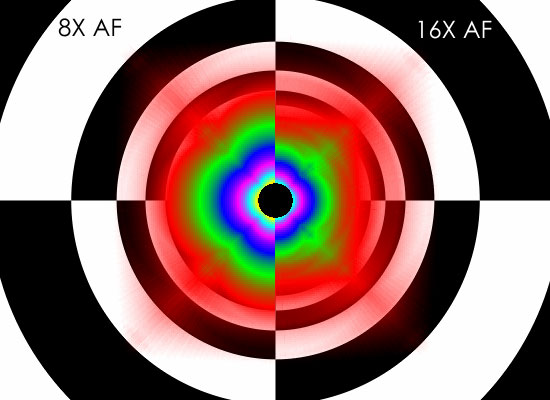

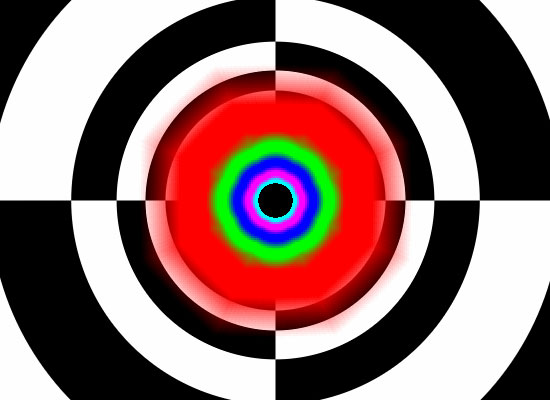

The good news is that G80 completely eliminates angle dependent anisotropic filtering. Finally we have a return to GeForce FX quality anisotropic filtering. When stacked up against R580 High Quality AF with no optimizations enabled on either side (High Quality mode for NVIDIA, Catalyst AI Disabled for ATI), G80 definitely shines. We can see that at 8xAF (left), NVIDIA's new architecture is able to more accurately filter textures based on distance from and angle to the viewer. On the right, we see ATI's angle independent 16xAF degrade in quality to a point where different texture stages start bleeding into one another in undesirable ways.
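
To make "angle dependent" a little more concrete, here is a rough sketch of how an anisotropic sample count and mip LOD can be derived from a pixel's texture-coordinate derivatives, following the formulas in the OpenGL anisotropic filtering extension. The `angle_dependent_cap` function is purely hypothetical: it only illustrates the idea of hardware lowering the maximum anisotropy for surfaces rotated away from multiples of 45 degrees, and it is not how any particular GPU is known to work.

```python
import math

def anisotropic_footprint(dudx, dvdx, dudy, dvdy, max_aniso=16.0):
    """Return (taps, lod) for one pixel, given texel-space derivatives."""
    px = math.hypot(dudx, dvdx)  # footprint length along screen x, in texels
    py = math.hypot(dudy, dvdy)  # footprint length along screen y, in texels
    p_max = max(px, py, 1e-8)
    p_min = max(min(px, py), 1e-8)
    ratio = p_max / p_min
    # Number of samples taken along the long axis, limited by the AF setting.
    taps = max(1, min(math.ceil(ratio), math.floor(max_aniso)))
    # The more samples we take, the sharper the mip level we can afford.
    lod = math.log2(p_max / taps)
    return taps, lod

def angle_dependent_cap(roll_deg, max_aniso=16.0, worst_case=2.0):
    """Hypothetical cap: full AF at multiples of 45 degrees, least in between."""
    d = abs((roll_deg % 45.0) - 22.5)  # 22.5 at multiples of 45, 0 halfway
    return worst_case + (max_aniso - worst_case) * (d / 22.5)

if __name__ == "__main__":
    # An 8:1 footprint, first with the full 16x limit, then with the
    # hypothetical cap for a surface rolled 22.5 degrees.
    print(anisotropic_footprint(1.0, 0.0, 0.0, 8.0))
    print(anisotropic_footprint(1.0, 0.0, 0.0, 8.0, angle_dependent_cap(22.5)))
```

Under these assumptions, the same 8:1 footprint is sampled from mip level 0 with the full limit but from mip level 2 when the cap drops to 2x, which is the kind of angle-specific blurring that shows up as the "rose" pattern in AF test tools.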

ATI G80


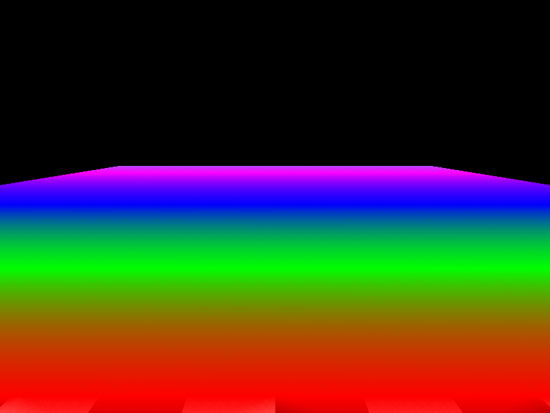

Oddly enough, ATI's 16xAF is more likely to cause shimmering with the High Quality AF box checked than without. Even when looking at an object like a flat floor, we can see the issue pop up in the D3DAFTester. NVIDIA has been battling shimmering issues due to some of their optimizations over the past year or so, but these issues could be avoided through driver settings. There isn't really a way to "fix" ATI's 16x high quality AF issue.

ATI Normal Quality AF ATI High Quality AF


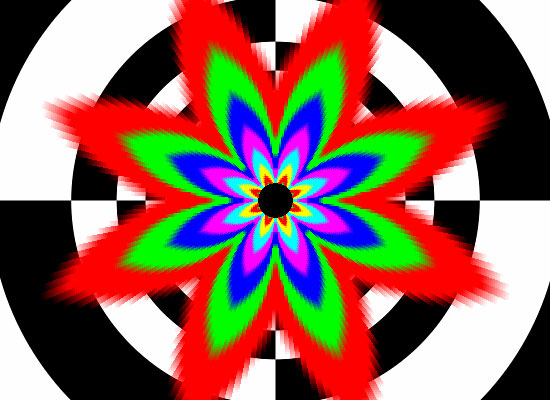

But, we would rather have angle independent AF than not, so for the rest of this review, we will enable High Quality AF on ATI hardware. This will give us a more fair comparison to G80, even if we still aren't really looking at two bowls of apples. G70 is not able to enable angle independent AF, so we'll be stuck with the rose pattern we've been so familiar with over the past few years.

There is still the question of how much impact optimization has on texture filtering. With G70, disabling optimizations resulted in more trilinear filtering being done, and thus a potential performance decrease. The visual result is minimal in most cases, as trilinear filtering is only really necessary to blur the transition between mipmap levels on a surface.
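
As a rough illustration of what "more trilinear filtering" means, the sketch below computes the blend weights between two adjacent mipmap levels. The first function is standard trilinear filtering; the second is a hypothetical reduced variant that only blends in a narrow band around the mip transition and falls back to plain bilinear elsewhere. The `band` parameter and the exact shape of the reduced blend are our assumptions for illustration, not a description of what either vendor's driver actually does.

```python
import math

def trilinear_weights(lod):
    """Full trilinear: blend mip floor(lod) and the next level by the LOD fraction."""
    base = math.floor(lod)
    frac = lod - base
    return base, 1.0 - frac, frac

def reduced_trilinear_weights(lod, band=0.25):
    """Hypothetical optimization: blend only near the mip transition (frac = 0.5)."""
    base = math.floor(lod)
    frac = lod - base
    lo, hi = 0.5 - band / 2.0, 0.5 + band / 2.0
    # Outside the band the weight clamps to 0 or 1, i.e. a single bilinear lookup.
    t = min(max((frac - lo) / (hi - lo), 0.0), 1.0)
    return base, 1.0 - t, t

if __name__ == "__main__":
    for lod in (2.0, 2.25, 2.5, 2.75):
        print(lod, trilinear_weights(lod), reduced_trilinear_weights(lod))
```

Whenever one of the two weights is zero, only one mip level needs to be fetched, which is where the performance savings would come from in a scheme like this.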

G70 Normal Quality AF G70 High Quality AF


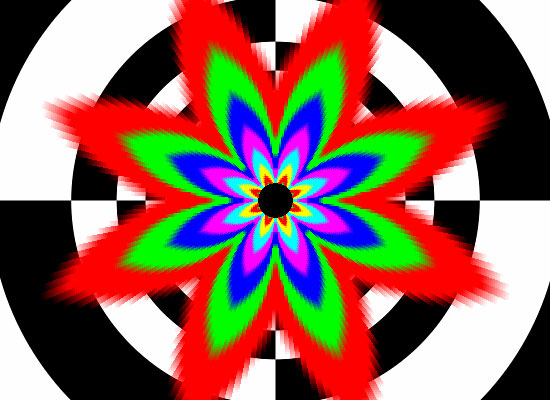

On G80, we see a similar effect when comparing default quality to high quality. Of course, with angle independent anisotropic filtering, we will have to worry less about shimmering, period, so optimizations shouldn't cause any issues here. Default quality does show a difference in the amount of trilinear filtering being applied, but this does not negatively impact visual quality in practice.

G80 Normal Quality AF G80 High Quality AF


111 Comments


Nightmare225 - Sunday, November 26, 2006 - link

Are the FPS posted in this article, Minimum FPS, Average FPS, or Maximum? Thanks!

multiblitz - Monday, November 20, 2006 - link

I have always enjoyed your reviews a lot, as they included the video capabilities for an HTPC on previous cards. Unfortunately, that was not the case this time. Hopefully there will be a second part covering this as well? If so, it would be nice to make a comparison on picture quality against the filters of ffdshow as well, as NVIDIA is now also supporting postprocessing filters...

DerekWilson - Tuesday, November 21, 2006 - link

What we know right now is that 8800 gets a 128 out of 130 on HQV tests. We haven't quite put together an HTPC look at 8800, but this is a possibility for the future.

epsil0n - Sunday, November 19, 2006 - link

I do not agree with this: "It isn't surprising to see that NVIDIA's implementation of a unified shader is based on taking a pixel shader quad pipeline, and breaking up the vector units into 4 scalar units. Now, rather than 4 pixel quads, we see 16 SPs per "quad" or block of stream processors. Each block of 16 SPs shares 4 texture address units, 8 texture filter units, and an L1 cache."

If I understood correctly, this sentence says that given 4 pixels, the number of SPs involved in the computation is 16. This assumes that each component of the pixel shader is computed horizontally over 16 SPs (4 pixels x 4 rgba = 16 SPs). But are you sure?

I didn't find other articles on the web that speculate about this. From reading other articles, my understanding is that a shader is computed by one and only one SP. Each vector instruction inside the shader is "mapped" to a sequence of scalar operations (a dot product between two vectors is mapped to 4 MUL/ADD operations). As a consequence, in this scenario 4 pixels are computed by only 4 SPs.

DerekWilson - Tuesday, November 21, 2006 - link

Honestly, NVIDIA wouldn't give us this level of detail. We certainly pressed them about how vertices and pixels map to SPs, but the answer we got was always something about how the hardware is able to dynamically schedule the SPs optimally according to what needs to be done. They can get away with being obscure about how they actually process the data because it could happen either way and provide the same effect to the developer and gamer alike.

Scheduling the simultaneous processing of one vec4 MAD operation on 4 quads (16 pixels) over 4 groups of 4 SPs will take 4 clock cycles (in terms of throughput). Processing the same 16 pixels on 16 SPs will also take 4 clock cycles.

But there are reasons to believe that things happen the way we described. Loading components of 16 different "threads" (verts, pixels or whatever) would likely be harder on the cache than loading all 4 components of 4 different threads. We could see them schedule multiple ops from 4 threads to fill up each block of shaders -- like computing 4 consecutive scalar operations for 4 threads on 16 SPs.

At the same time, it might be easier to maximize SP utilization if 16 threads were processed on one block of SPs every clock.

I think the answer to this question is that NVIDIA knows, they didn't tell us, and all we can do is give it our best guess.
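
The throughput arithmetic in this exchange can be checked with a small sketch. It assumes each SP retires one scalar MAD per clock and ignores latency, texture fetches and scheduling overhead; the two mapping names are labels invented here for the two possibilities discussed above, not NVIDIA terminology.

```python
from math import ceil

def dp4_scalar(a, b):
    """A 4-component dot product issued as four consecutive scalar MADs."""
    acc = 0.0
    for x, y in zip(a, b):
        acc = x * y + acc  # one scalar multiply-add
    return acc

def cycles(pixels, components, sps, mapping):
    """Clocks needed for one vector MAD on `pixels` pixels (throughput only)."""
    if mapping == "pixel_per_sp":
        # Each SP owns one pixel and works through its components one per clock.
        return ceil(pixels / sps) * components
    if mapping == "component_per_sp":
        # Each group of `components` SPs finishes one whole pixel per clock.
        pixels_per_clock = sps // components
        return ceil(pixels / pixels_per_clock)
    raise ValueError(f"unknown mapping: {mapping}")

if __name__ == "__main__":
    for mapping in ("pixel_per_sp", "component_per_sp"):
        print(mapping, cycles(pixels=16, components=4, sps=16, mapping=mapping))
```

Both mappings come out to 4 clocks for 16 pixels on a block of 16 SPs, which is why the difference would be invisible to developers and gamers from raw throughput alone.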

xtknight - Thursday, November 16, 2006 - link

This has been AT's best article in a while. Tons of great, concise info.

I have a question about the gamma corrected AA. This would be detrimental if you've already calibrated your display, correct (assuming the game heeds the calibration)? Do you know what gamma correction factor the cards use for 'gamma corrected AA'?

DerekWilson - Monday, November 20, 2006 - link

I don't know if they dynamically adjust gamma correction based on the monitor (that would be nice though) ... if they don't, they likely adjusted for a gamma of either 2.2 or 2.5, or something in between.

Also, thanks :-) There was a lot more we wanted to pack in, but I'm glad to see that we did a good job with what we were able to include.

Thanks,

Derek Wilson
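
For readers wondering what gamma corrected AA changes, here is a minimal sketch of resolving the sub-samples of one edge pixel with and without gamma correction. It assumes a pure power-law curve with a gamma of 2.2, which is only one of the values guessed at above; the actual curve and factor used by the hardware are not something we can confirm.

```python
def resolve_naive(samples):
    """Average the stored (gamma-encoded) sample values directly."""
    return sum(samples) / len(samples)

def resolve_gamma_corrected(samples, gamma=2.2):
    """Average in linear light, then re-encode. The gamma value is an assumption."""
    linear = [s ** gamma for s in samples]   # decode to linear intensity
    avg = sum(linear) / len(linear)          # blend where light adds up physically
    return avg ** (1.0 / gamma)              # encode back for the display

if __name__ == "__main__":
    # A 4x AA edge pixel: two samples on a white polygon, two on black.
    edge = [1.0, 1.0, 0.0, 0.0]
    print(resolve_naive(edge))            # 0.5
    print(resolve_gamma_corrected(edge))  # brighter than 0.5
```

The gamma corrected result is stored as a brighter value so that the pixel appears half as bright as white on a display that follows the assumed curve. On a display with a different response, the edge gradient would be off by a corresponding amount.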

bjacobson - Sunday, November 12, 2006 - link

This comment is unrelated, but could you implement some system where after rating a comment, on reload the page goes back to the comment I was just at? Otherwise I rate something halfway down and then have to spend several seconds finding where I just was. Just a little nuisance.

Thanks for the great article, fun read.

neo229 - Friday, November 10, 2006 - link

This is a very suspect quote. A card that requires two PCIe power connectors is going to dissipate a lot of heat. More heat means there must be a faster, louder fan or more substantial and costly heat sink. The extra costs associated with providing a truly quiet card mean that the bulk of manufacturers go with the loud fan option.

DerekWilson - Friday, November 10, 2006 - link

If manufacturers go with the NVIDIA reference design, then we will see a nice large heatsink with a huge quiet fan. Really, it does move a lot of air without making a lot of noise ... Are there any devices we can get to measure the airflow of a cooling solution?

We are also seeing some designs using water cooling and there's even one with a thermo-electric (peltier) cooler on it. Manufacturers are going to great lengths to keep this thing running cool without generating much noise.

None of the 8 retail cards we are testing right now generate nearly the noise of the X1950 XTX ... We are working on a retail roundup right now, and we'll absolutely have noise numbers for all of these cards at load.