AMD Threadripper Pro Review: An Upgrade Over Regular Threadripper?

by Dr. Ian Cutress on July 14, 2021 9:00 AM EST

Posted in: CPUs, AMD, ThreadRipper, Threadripper Pro, 3995WX

Power Consumption

The nature of reporting processor power consumption has become, in part, a dystopian nightmare. Historically, the peak power consumption of a processor, as purchased, was given by its Thermal Design Power (TDP, or PL1). For many markets, such as embedded processors, that TDP value still signifies the peak power consumption. For the processors we test at AnandTech, whether desktop, notebook, or enterprise, this is not always the case.

Modern high performance processors implement a feature called Turbo. This allows, usually for a limited time, a processor to go beyond its rated frequency. Exactly how far the processor goes depends on a few factors, such as the Turbo Power Limit (PL2), whether the peak frequency is hard coded, the thermals, and the power delivery. Turbo can sometimes be very aggressive, allowing power values 2.5x above the rated TDP.
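To make the interplay between PL1, PL2 and the limited turbo time concrete, here is a minimal Python sketch of one commonly described budgeting scheme: the processor may draw up to PL2 while a moving average of package power stays below PL1, and falls back to PL1 once that average catches up. This is a simplified illustration, not any vendor's exact algorithm. The function name `simulate_turbo`, the time constant `tau`, and the example values (125 W, 250 W, 56 s) are assumptions chosen for illustration only.

```python
def simulate_turbo(pl1, pl2, tau, duration, dt=1.0):
    """Return a list of (time_s, package_power_w) samples.

    Simplified model (an assumption, not a vendor specification):
    an exponentially weighted moving average of power is tracked,
    and the chip may draw pl2 only while that average is below pl1.
    """
    avg = 0.0            # moving-average power, starting from idle
    alpha = dt / tau     # weight of each new sample in the average
    steps = int(duration / dt)
    trace = []
    for i in range(steps):
        # Turbo is allowed only while the averaged power is under PL1.
        power = pl2 if avg < pl1 else pl1
        avg += alpha * (power - avg)
        trace.append((i * dt, power))
    return trace


if __name__ == "__main__":
    # Hypothetical limits: PL1 = 125 W, PL2 = 250 W, tau = 56 s.
    trace = simulate_turbo(pl1=125.0, pl2=250.0, tau=56.0, duration=120)
    turbo_seconds = sum(1 for _, p in trace if p == 250.0)
    print(f"time spent at PL2: {turbo_seconds} s of {len(trace)} s")
```

In this model a larger `tau` or a larger gap between PL1 and the idle starting point lengthens the turbo window, which is one reason the same chip can behave very differently from one motherboard to the next.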

AMD and Intel have different definitions for TDP, but broadly speaking they are applied in the same way. The difference comes down to turbo modes, turbo limits, turbo budgets, and how the processors manage that power balance. These topics are 10000-12000 word articles in their own right, and we’ve got a few articles worth reading on the topic.

- Why Intel Processors Draw More Power Than Expected: TDP and Turbo Explained

- Talking TDP, Turbo and Overclocking: An Interview with Intel Fellow Guy Therien

- Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics

- Intel’s TDP Shenanigans Hurts Everyone

In simple terms, processor manufacturers only ever guarantee two values which are tied together - when all cores are running at base frequency, the processor should be running at or below the TDP rating. All turbo modes and power modes above that are not covered by warranty. Intel kind of screwed this up with the Tiger Lake launch in September 2020, by refusing to define a TDP rating for its new processors, instead going for a range. Obfuscation like this is a frustrating endeavor for press and end-users alike.

However, for our tests in this review, we measure the power consumption of the processor in a variety of different scenarios. These include full peak AVX workflows, a loaded rendered test, and others as appropriate. These tests are done as comparative models. We also note the peak power recorded in any of our tests.

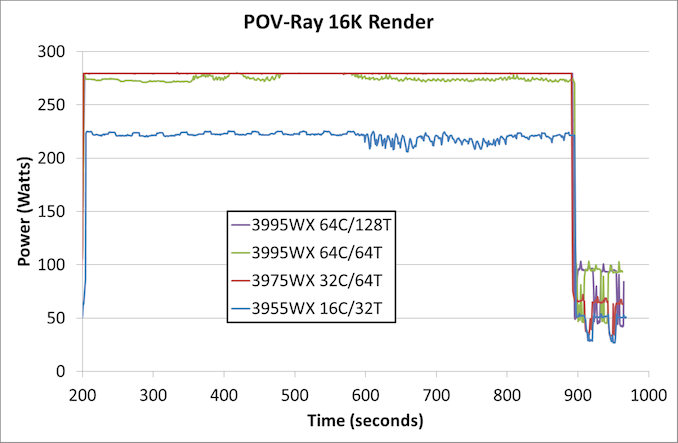

First up is our loaded rendered test, designed to peak out at max power.

In this test the 3995WX with only 64 threads actually uses slightly less power, given that one thread per core doesn’t keep everything active. Despite this, the 64C/64T benchmark result is ~16000 points, compared to ~12600 points when all 128 threads are enabled. Also in this chart we see that the 3955WX with only sixteen cores hovers around the 212W mark.
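As a quick check on the size of that gap, the relative gain of the 64C/64T run over the 128-thread run can be worked out from the two approximate scores quoted above. This is only a back-of-the-envelope sketch; the helper name `relative_gain` is hypothetical, and the inputs are the rounded figures from the text rather than exact benchmark results.

```python
def relative_gain(new, baseline):
    """Fractional improvement of `new` over `baseline`."""
    return (new - baseline) / baseline


# Approximate scores quoted in the text (rounded values).
score_64t = 16000    # 3995WX, one thread per core
score_128t = 12600   # 3995WX, all 128 threads enabled

gain = relative_gain(score_64t, score_128t)
print(f"64C/64T scores about {gain:.0%} higher than 64C/128T")
```

With these rounded inputs the one-thread-per-core configuration comes out roughly a quarter ahead, while drawing slightly less power.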

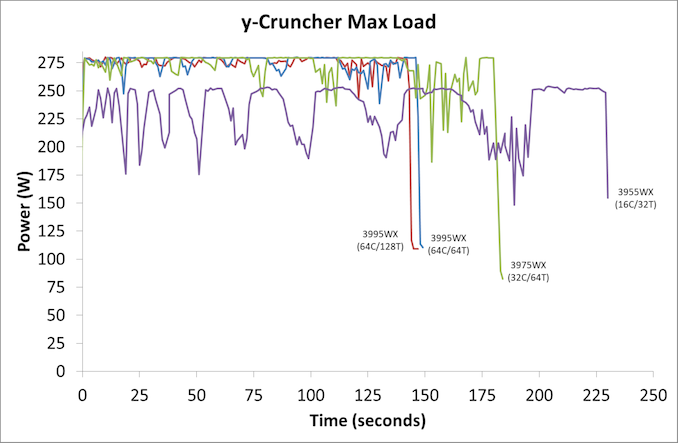

The second test is from y-Cruncher, which is our AVX2/AVX512 workload. This also has some memory requirements, which can lead to periodic cycling on systems that have less memory bandwidth per core.

Both of the 3995WX configurations perform similarly, while the 3975WX has more variability as it requests data from memory, causing the cores to idle slightly. The 3955WX peaks around 250W this time.

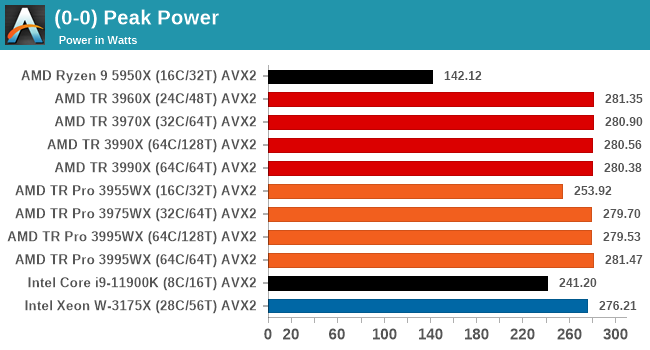

For peak power, we report the highest value observed from any of our benchmark tests.

As with most AMD processors, there is a total package power tracking value, and for Threadripper Pro that is the same as the TDP at 280 W. I have included the AVX2 values here for the Intel processors, however at AVX512 these will turbo to 296 W (i9-11900K) and 291 W (W-3175X).
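To put those peak numbers in context, the ratio of peak power to rated TDP can be computed directly. The sketch below uses the peak values quoted above; the Intel TDP ratings (125 W for the i9-11900K and 255 W for the W-3175X) are not stated in this article and are included as assumptions based on the publicly listed specifications. The helper name `peak_to_tdp` is hypothetical.

```python
# Peak package power in watts, as quoted in the text.
peak_w = {
    "Threadripper Pro": 280,   # package power tracking limit
    "Core i9-11900K": 296,     # AVX512 turbo
    "Xeon W-3175X": 291,       # AVX512 turbo
}

# Rated TDP in watts. The 280 W figure is from the text; the two
# Intel values are assumptions taken from public spec listings.
tdp_w = {
    "Threadripper Pro": 280,
    "Core i9-11900K": 125,
    "Xeon W-3175X": 255,
}


def peak_to_tdp(peak, tdp):
    """Return the peak-power-to-TDP ratio for each processor."""
    return {name: peak[name] / tdp[name] for name in peak}


for name, ratio in peak_to_tdp(peak_w, tdp_w).items():
    print(f"{name}: {ratio:.2f}x TDP")
```

Under these assumptions Threadripper Pro sits at its rated TDP, while the i9-11900K peaks at well over twice its rating, which is the kind of aggressive turbo behaviour described earlier.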

98 Comments

Mikewind Dale - Wednesday, July 14, 2021 - link

I have a ThreadRipper Pro 3955WX, and I discovered something interesting about the memory bandwidth.

Originally, I bought 4x64 GB ECC RDIMM because I thought 256 GB might be enough, and I wanted to leave some empty RAM slots to populate with 128 GB RDIMMs if those ever became cost-effective. (Right now, 128 GB RDIMMs are about triple the price of 64 GB.)

CPU-Z and AIDA64 reported "quad" channel memory, and AIDA64's memory benchmarks showed reasonable memory performance.

But I discovered that 256 GB wasn't enough for my application, so I bought 2 more 64 GB RDIMMs.

At this point, I had 6 DIMMs populated. CPU-Z and AIDA64 both reported "hexa" channel memory, but AIDA64's memory benchmarks showed that my memory performance was about 2/3 that of a Ryzen.

So I bought 2 more RDIMMs again, for a total of 8. Now, my memory benchmark in AIDA64 is much closer to expected.

So the moral of the story is: you can populate 4 DIMMs, or you can populate 8, but don't dare populate 6. Populating precisely 6 DIMMs will absolutely cripple your memory performance, whereas 4 DIMMs still have acceptable performance.

kobblestown - Wednesday, July 14, 2021 - link

The 3955 probably has only 2 CCDs and is therefore limited to 4 DDR channels throughput. It seems that each IF link has the throughput of 2 DDR channels and this makes sense.

You should keep in mind that the IO die has in effect 4 dual channel controllers and you may have populated them suboptimally. If you have two dual channel controllers fully populated and two half populated (instead of a third fully populated and the fourth one staying empty) you'll have skewed results. Also, there was some noise about Milan working better with 6 channel configurations so it may be something specific to Rome chips.

Rudde - Wednesday, July 14, 2021 - link

Server providers had requested for 6 channel memory support for server processors and that was implemented in Milan.

McFig - Wednesday, July 14, 2021 - link

What kobblestown is suggesting is that maybe Mikewind Dale could have gotten the 6 RDIMMs working by moving one of them so that each pair is fully populated.

Mikewind Dale - Wednesday, July 14, 2021 - link

McFig, there are only 8 slots, so I'm not sure how I could have moved the 6 DIMMs among the 8 slots to ensure that each pair is populated.

1_rick - Wednesday, July 14, 2021 - link

He probably means "each of 3 pairs fully populated".

DougMcC - Wednesday, July 14, 2021 - link

I think the question is whether 3/3 is better than 4/2

kobblestown - Friday, July 16, 2021 - link

Heya! Sorry for the nebulous formulation. In terms of the number of DIMMs per memory controller, I suggest having 2+2+2+0 instead of 2+1+2+1. One needs to figure out what this means for any particular MB. But as DougMcC suggests, that would probably mean having 4 DIMMs on one side of the CPU and 2 on the other, rather than having 3 DIMMs on each side. The latter is bound to be suboptimal. Whether the former offers an improvement is something that I would be very interested to know, but it could be that Rome has some shortcoming in this area which is addressed in Milan.

Again, dual CCD configurations are limited to 4 channel bandwidth but it's still worth it to have all channels populated so you don't get bitten by badly handled asymmetry and the IO does not fight (too much) with the cores for the bandwidth.

kobblestown - Friday, July 16, 2021 - link

BTW, one should also check the memory interleaving options in the UEFI. Maybe the way the IO die aggregates the memory channels can be tweaked to achieve the expected performance even with 6 DIMMs. Or maybe that's only achievable with Milan.

Mikewind Dale - Friday, July 16, 2021 - link

Ahhh, I see what you mean. Thanks. Well, I have 8 DIMMs now, and I don't want to mess with my system any more. Maybe Anandtech can test this.