Supermicro SuperServer E302-9D Review: A Fanless 10G pfSense Powerhouse

by Ganesh T S on July 28, 2020 3:00 PM EST- Posted in

- Networking

- Intel

- Supermicro

- 10GBase-T

- Xeon-D

- SFP+

- 10GbE

- ASpeed

- Skylake-D

Packet Generation Options - A Quantitative Comparison

Determining the packet processing speed of a firewall / router largely obviates the need to look at the transport protocol (TCP or UDP). To this end, packet generators are commonly used to measure the performance of routers, switches, and firewalls. Traditional bandwidth measurement at higher levels in the network stack makes more sense for client devices running end-user applications. There are many commercial packet-generating hardware appliances and applications used in the industry from vendors such as Ixia and Spirent. For software developers and homelab enthusiasts, and even for many hardware developers, PC software such as TRex and Ostinato fits the bill. While these software tools have a bit of a learning curve, there are also simple command-line applications that can deliver quick performance measurement results.

FreeBSD supports a framework for fast packet I/O in netmap. It allows applications to access interface devices without going through the host stack (assuming support from the device driver). Packet generators taking advantage of this framework can generate packets at line rate even for reasonably small packet sizes. The netmap source also includes pkt-gen, a sample packet generator application that utilizes the netmap framework. The open-source community has also created a number of applications utilizing netmap and pkt-gen, allowing for easier interactive testing as well as easy automation of common scenarios. One such application is ipgen, which also includes a built-in option to benchmark packet generation.

iPerf is a popular network performance measurement tool. It outputs easy-to-understand bandwidth numbers that are particularly relevant to end users of client devices. iPerf3 includes a length parameter that allows control over the UDP datagram size, allowing the simulation of packet generation similar to pkt-gen and ipgen.

In the rest of this section, we benchmark each of these options on various machines in our testbed under different conditions. This includes the diminutive Compulab fitlet-XA10-LAN with four gigabit LAN ports. It is an attractive x86-64 system for embedded networking applications requiring multiple network ports. While it is not in the same class as the other server systems being tested in this section, it does provide context to folks adopting these types of systems for packet generation / testbed applications.

iPerf3

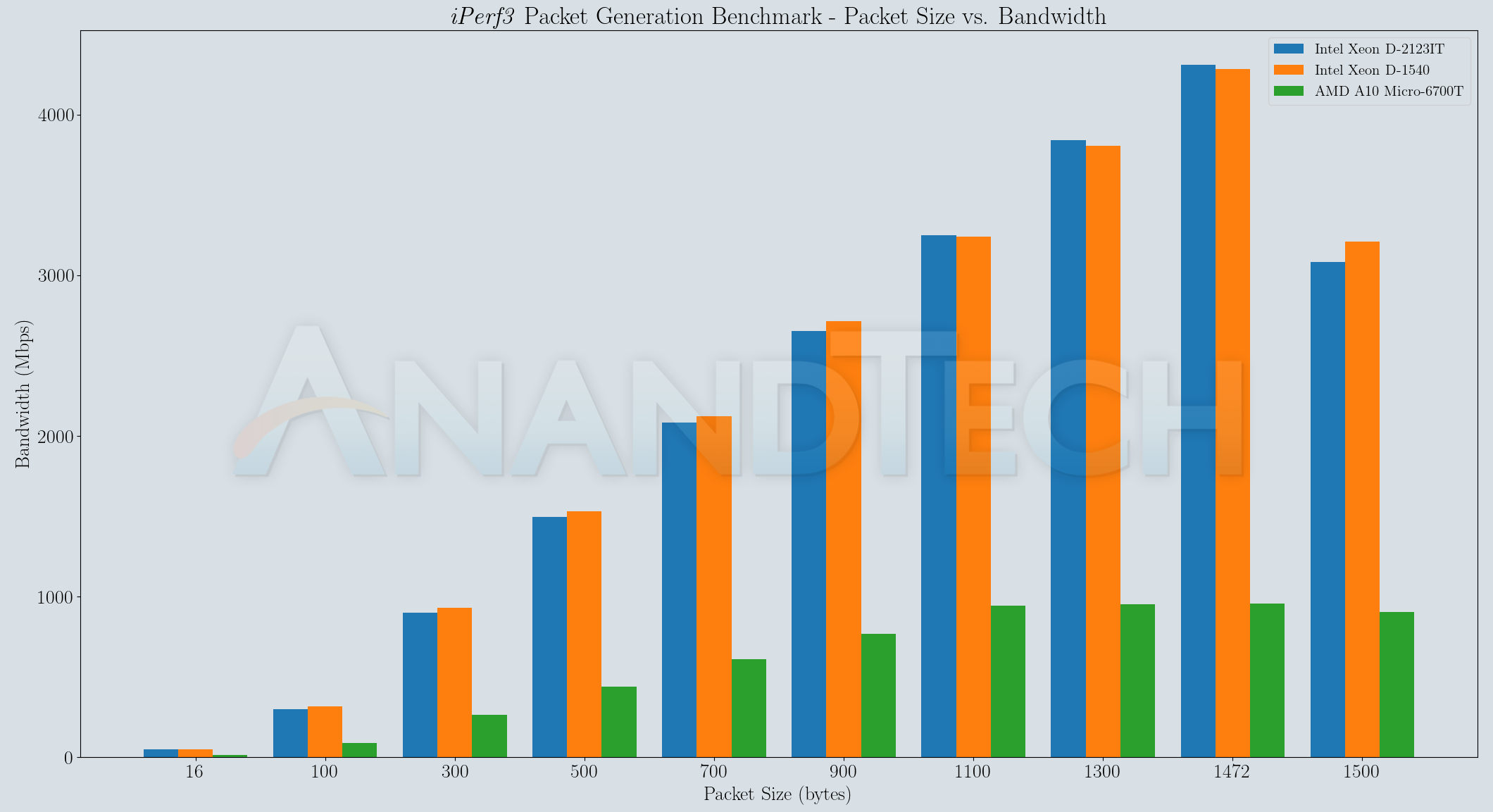

The iPerf3 benchmarking tool is used to get a quick idea of the networking capabilities of end-user devices. In its most common usage avatar, various options such as the TCP window size / UDP packet length are left at default. The ability to alter the latter does provide an avenue to explore the packet generation capabilities of iPerf. Though iPerf allows the length parameter to be set to very high values for the UDP datagram size (up to the maximum theoretical value of around 64K), going above the MTU results in fragmentation.

`iperf3 -u -c ${ServerIP} -t ${RunDuration} -O 5 -f m -b 10G --length ${pktsize} 2>&1`

As part of our testing, the source was configured to send UDP datagrams of various lengths ranging from 16 bytes to 1500 bytes across the DUT in router mode, as shown in the testing script extract above.
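A sweep of this kind can be sketched as a simple loop around the command shown above. The server address, run duration, and the exact list of datagram lengths below are hypothetical placeholders, since the article only states the 16 to 1500 byte range; the `DRY_RUN` switch and the `iperf_cmd` helper are likewise illustrative names rather than part of the original script.

```sh
#!/bin/sh
# Minimal sketch of a datagram-length sweep around the iperf3 command above.
# ServerIP, RunDuration and PKT_SIZES are assumed placeholder values.
ServerIP=${ServerIP:-192.0.2.10}
RunDuration=${RunDuration:-30}
PKT_SIZES="16 64 128 256 512 1024 1472 1500"
DRY_RUN=${DRY_RUN:-1}   # 1 = only print the commands, 0 = run them

# iperf_cmd LENGTH -> prints the iperf3 command line for one datagram length
iperf_cmd() {
    echo "iperf3 -u -c ${ServerIP} -t ${RunDuration} -O 5 -f m -b 10G --length $1"
}

for pktsize in $PKT_SIZES; do
    cmd=$(iperf_cmd "$pktsize")
    if [ "$DRY_RUN" = 1 ]; then
        echo "$cmd"
    else
        $cmd 2>&1
    fi
done
```

By default the sketch only prints the commands; setting `DRY_RUN=0` would run them against a listening iperf3 server.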

The bandwidth drop when going from 1472 to 1500 for the datagram length is explained by fragmentation. Protocol overheads tag more bytes on top of the length parameter passed to iPerf3, and that exceeds the minimum configured MTU in the network path. Packet generators are expected to saturate the link bandwidth for all but the smallest packet sizes. The results above suggest that usage of iPerf3 for this purpose is not advisable.
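The arithmetic behind the 1472-byte threshold can be sketched as follows. This assumes IPv4 without options (20-byte header), an 8-byte UDP header, and a path MTU of 1500 bytes; the MTU value and the `needs_fragmentation` helper are assumptions for illustration, not something the article states explicitly.

```sh
#!/bin/sh
# Sketch: does a UDP payload of a given length fit in a single IPv4 packet?
# Assumes an IPv4 header without options and a standard UDP header.
IP_HDR=20
UDP_HDR=8

# needs_fragmentation PAYLOAD_LEN MTU -> prints "yes" or "no"
needs_fragmentation() {
    payload=$1
    mtu=$2
    if [ $((payload + IP_HDR + UDP_HDR)) -gt "$mtu" ]; then
        echo yes
    else
        echo no
    fi
}

# Assumed path MTU of 1500 bytes
for len in 16 64 512 1472 1500; do
    echo "length=$len fragmented=$(needs_fragmentation "$len" 1500)"
done
```

Under these assumptions, a 1472-byte datagram fills a 1500-byte packet exactly, while a 1500-byte datagram needs 1528 bytes and has to be split.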

ipgen

The ipgen tool is considered next because it has a built-in benchmark mode. This mode doesn't actually place the generated packets on the network interface - rather it is a pure test of the CPU and the memory subsystem's capability to generate raw packets of different sizes. Multiple instances of the packet generator running simultaneously need to be bound to different cores in order to obtain the best performance.

`timeout 10s cpuset -l $cpuset ipgen -X -s $pktsize 2>&1`

The ipgen benchmark involves generating packets of various sizes for 10 seconds each. The first set involves the generation of a single stream, the second involves two simultaneous streams, and so on up to four simultaneous streams. On systems where the physical core count differs from the logical core count, each process is bound to a distinct physical core. The average packet generation rate across all enabled streams (measured in million packets per second - Mpps) is presented in the graph below.

The generator must be able to output 1.488 Mpps on a 1G interface and 14.88 Mpps on a 10G interface in order to maintain wire speeds when minimum-sized packets are considered. Considering the network interfaces on the machines in the above graphs, the CPUs are suitably equipped for the presented best-case scenario where no attempt is made to dump out the generated packet contents or drive them on to a network interface. Enabling such activities is bound to introduce some performance penalties.
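These wire-speed figures follow from Ethernet framing. As a rough sketch: a minimum-sized frame is 64 bytes, and each frame also occupies 20 further bytes on the wire (7-byte preamble, 1-byte start-of-frame delimiter, 12-byte inter-frame gap). The `line_rate_pps` helper name is made up for this illustration.

```sh
#!/bin/sh
# Sketch: maximum frames per second on an Ethernet link for a given frame size.
# Per-frame wire overhead: 7 B preamble + 1 B SFD + 12 B inter-frame gap.
WIRE_OVERHEAD=20

# line_rate_pps LINK_BITS_PER_SEC FRAME_BYTES -> prints packets per second
line_rate_pps() {
    link_bps=$1
    frame=$2
    wire_bits=$(( (frame + WIRE_OVERHEAD) * 8 ))
    echo $(( link_bps / wire_bits ))
}

line_rate_pps 1000000000 64    # 1G link, 64-byte frames: 1488095 pps
line_rate_pps 10000000000 64   # 10G link, 64-byte frames: 14880952 pps
```

The results correspond to the 1.488 Mpps and 14.88 Mpps targets quoted above.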

pkt-gen

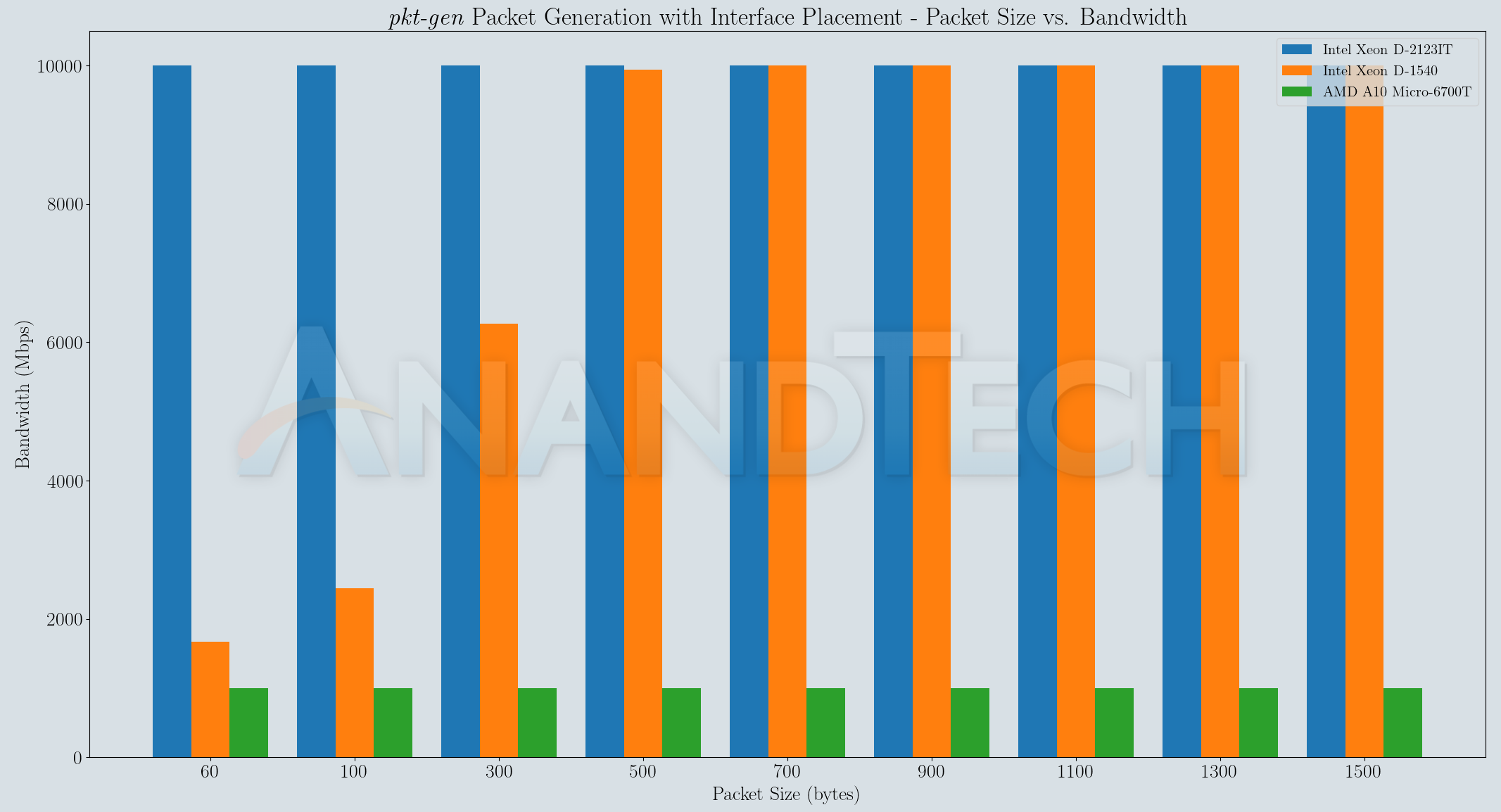

The pkt-gen benchmark described here adds a practical layer to the benchmark mode seen in the previous sub-section. The generated packets are driven on the network interface to the external device (in this case, the E302-9D pfSense firewall) which is configured to drop them. The line-rate often acts as the limiting factor for large frame sizes.

`timeout ${RunDuration}s /usr/obj/usr/src/amd64.amd64/tools/tools/netmap/pkt-gen -i ${IntfName} -l ${pktsize} -s ${SrcIP} -d ${DestIP} -D ${DestMAC} -f tx -N -B 2>&1`

With the network interface as the limiting factor, benchmark numbers are presented only for a single stream. As expected, the CPU speed and cache organization play a major role in this task, with the 5019D-4C-FN8TP (equipped with an actively cooled 2.2 GHz Intel Xeon D-2123IT) being able to generate packets at the line-rate even for minimum-sized packets.

Based on the above results, it is clear why the pkt-gen tool is adopted widely as a reliable packet generator for performance verification. It may not offer the flexibility and additional features needed for other purposes (fulfilled by offerings such as TRex and Ostinato), but it suffices for a majority of the testing we set out to do. Tools such as ipgen and iPerf3 are still used in a few sections, but, as we shall see further down, pkt-gen is able to stress the DUT the best without being bottlenecked by the stimulus generators.

34 Comments


eastcoast_pete - Tuesday, July 28, 2020 - link

Thanks, interesting review! Might be (partially) my ignorance of the design process, but wouldn't it be better from a thermal perspective to use the case, especially the top part of the housing directly as heat sink? The current setup transfers the heat to the inside space of the unit and then relies on passive convection or radiation to dispose of the heat. Not surprised that it gets really toasty in there.

DanNeely - Tuesday, July 28, 2020 - link

From a thermal standpoint yes - if everything is assembled perfectly. With that design though, you'd need to attach the heat sink to the CPU via screws from below, and remove/reattach it from the CPU every time you open the case up. This setup allows the heatsink to be semi-permanently attached to the CPU like in a conventional install.

You're also mistaken about it relying on passive heat transfer; the top of the case has some large thermal pads that will make contact with the tops of the heat sinks. (They're the white stuff on the inside of the lid in the first gallery photo; made slightly confusing by the lid being rotated 180 from the mobo.) Because of the larger contact area and lower peak heat concentration levels, thermal pads are much less finicky about being pulled apart and slapped together than the TIM between a chip and the heatsink base.

Lindegren - Tuesday, July 28, 2020 - link

Could be solved by having the CPU on the opposite side of the board

close - Wednesday, July 29, 2020 - link

Lower power designs do that quite often. The MoBo is flipped so it faces down, the CPU is on the back side of the MoBo (top side of the system) covered by a thick, finned panel to serve as passive radiator. They probably wanted to save on designing a MoBo with the CPU on the other side.

eastcoast_pete - Tuesday, July 28, 2020 - link

Appreciate the comment on the rotated case; those thermal pads looked oddly out of place. But, as Lindegren's comment pointed out, having the CPU on the opposite side of this, after all, custom MB, one could have the main heat source (SoC/CPU) facing "up", and all others facing "down".

For maybe irrational reasons, I just don't like VRMs, SSDs and similar getting so toasty in an always-on piece of networking equipment.

YB1064 - Wednesday, July 29, 2020 - link

Crazy expensive price!

Valantar - Wednesday, July 29, 2020 - link

I think you got tricked by the use of a shot of the motherboard with a standard server heatsink. Look at the teardown shots; this version of the motherboard is paired with a passive heat transfer block with heat pipes which connects directly to the top chassis. No convection involved inside of the chassis. Should be reasonably efficient, though of course the top of the chassis doesn't have that many or that large fins. A layer of heat pipes running across it on the inside would probably have helped.

herozeros - Tuesday, July 28, 2020 - link

Neat review! I was hoping you could offer an opinion on why they elected to not include a SKU without quickassist? So many great router scenarios with some juicy 10G ports, but bottlenecks if you’re trafficing in resource intensive IPSec connections, no? Thanks!

herozeros - Tuesday, July 28, 2020 - link

Me English are bad, should read “a SKU without Quickassist”

GreenReaper - Tuesday, July 28, 2020 - link

The MSRP of the D-2123IT is $213. All D-2100 CPUs with QAT are >$500:

https://www.servethehome.com/intel-xeon-d-2100-ser...

https://ark.intel.com/content/www/us/en/ark/produc...

And the cheapest of those has a lower all-core turbo, which might bite for consistency.

It's also the only one with just four cores. Thanks to this it's the only one that hits a 60W TDP.

Bear in mind internals are already pushing 90C, in what is presumably a reasonably cool location.

The closest (at 235% the cost) is the 8-core D-2145NT (65W, 1.9Ghz base, 2.5Ghz all-core turbo).

Sure, it *could* do more processing, but for most use-cases it won't be better and may be worse. To be sure it wasn't slower, you'd want to step up to D-2146NT; but now it's 80W (and 301% the cost). And the memory is *still* slower in that case (2133 vs 2400). Basically you're looking at rack-mount, or at the very least some kind of active cooling solution - or something that's not running on Intel.

Power is a big deal here. I use a quad-core D-1521 as a CPU for a relatively large DB-driven site, and it hits ~40W of its 45W TDP. For that you get 2.7Ghz all-core, although it's theoretically 2.4-2.7Ghz. The D-1541 with twice the cores only gets ~60% of the performance, because it's _actually_ limited by power. So I don't doubt TDP scaling indicates a real difference in usage.

A lower CPU price also gives SuperMicro significant latitude for profit - or for a big bulk discount.