The Kingston DC500 Series Enterprise SATA SSDs Review: Making a Name In a Commodity Market

by Billy Tallis on June 25, 2019 8:00 AM EST

Posted in: SSDs, Storage, Kingston, SATA, Enterprise, Phison, Enterprise SSDs, 3D TLC, PS3112-S12

QD1 Random Read Performance

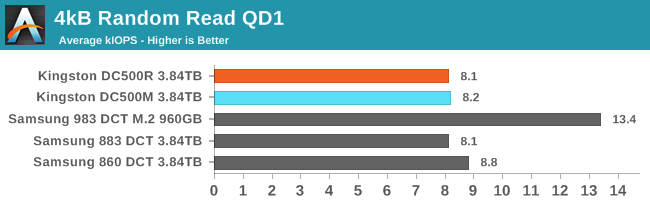

Drive throughput with a queue depth of one is usually not advertised, but almost every latency or consistency metric reported on a spec sheet is measured at QD1 and usually for 4kB transfers. When the drive only has one command to work on at a time, there's nothing to get in the way of it offering its best-case access latency. Performance at such light loads is absolutely not what most of these drives are made for, but they have to make it through the easy tests before we move on to the more realistic challenges.

Chart views: Power Efficiency in kIOPS/W, Average Power in W

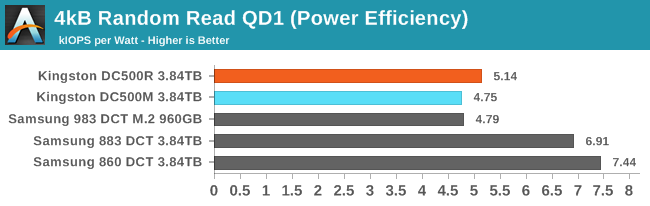

The Kingston DC500 SSDs offer similar QD1 random read throughput to Samsung's current SATA SSDs, but the Kingston drives require 35-45% more power. Samsung's most recent SATA controller platform has provided a remarkable improvement to power efficiency for both client and enterprise drives, while the new Phison S12DC controller leaves the Kingston drives with a much higher baseline for power consumption. However, the Samsung entry-level NVMe drive has even higher power draw, so despite its lower latency it is only as efficient as the Kingston drives at this light workload.
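
The efficiency penalty implied by that power gap can be worked out directly. The sketch below is illustrative arithmetic only: it assumes throughput is exactly equal between the two drives (the text says only "similar"), and the function names are ours rather than anything from the test suite.

```python
def kiops_per_watt(iops: float, watts: float) -> float:
    """Power efficiency in the chart's unit: thousands of IOPS per watt."""
    return iops / 1000.0 / watts


def relative_efficiency(extra_power_fraction: float) -> float:
    """Efficiency relative to a drive with the same throughput but lower power.

    Assumption: throughput is identical, so efficiency scales as 1 / power.
    """
    return 1.0 / (1.0 + extra_power_fraction)


# The review quotes 35-45% more power at similar QD1 random read throughput.
for extra in (0.35, 0.45):
    share = relative_efficiency(extra)
    print(f"{extra:.0%} more power -> {share:.0%} of the efficiency")
```

Under that equal-throughput assumption, drawing 35-45% more power leaves a drive with roughly 69-74% of the other drive's kIOPS/W.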

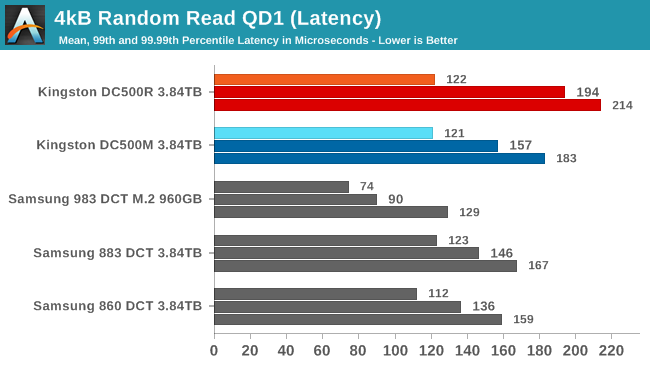

The Kingston DC500M has slightly better QoS than the DC500R for QD1 random reads, but both of the Samsung SATA drives are better still. The NVMe drive is better in all three latency metrics, but the 99th percentile latency has the most significant improvement over the SATA drives.
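
For readers who want to reproduce this kind of QoS summary from their own latency logs, here is a minimal sketch. It assumes the three metrics are the mean, 99th percentile and 99.99th percentile, and it uses the nearest-rank percentile definition, which may differ from whatever the benchmark tool actually uses. The sample data is synthetic and not taken from any drive in this review.

```python
import math
import random


def percentile(sorted_samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it. Expects an already sorted sequence."""
    rank = math.ceil(pct * len(sorted_samples) / 100)
    return sorted_samples[max(rank, 1) - 1]


def latency_summary(samples_us):
    """Mean, 99th and 99.99th percentile of per-command latencies (microseconds)."""
    ordered = sorted(samples_us)
    return {
        "mean": sum(ordered) / len(ordered),
        "p99": percentile(ordered, 99),
        "p99.99": percentile(ordered, 99.99),
    }


# Synthetic workload (hypothetical numbers): mostly fast reads plus rare stalls.
rng = random.Random(0)
samples = [rng.gauss(115, 5) for _ in range(100_000)]
samples += [rng.uniform(500, 2000) for _ in range(50)]
print(latency_summary(samples))
```

The tail percentiles are dominated by the handful of slow commands, which is why two drives with nearly identical mean latency can still be separated clearly on the 99th and 99.99th percentile scores.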

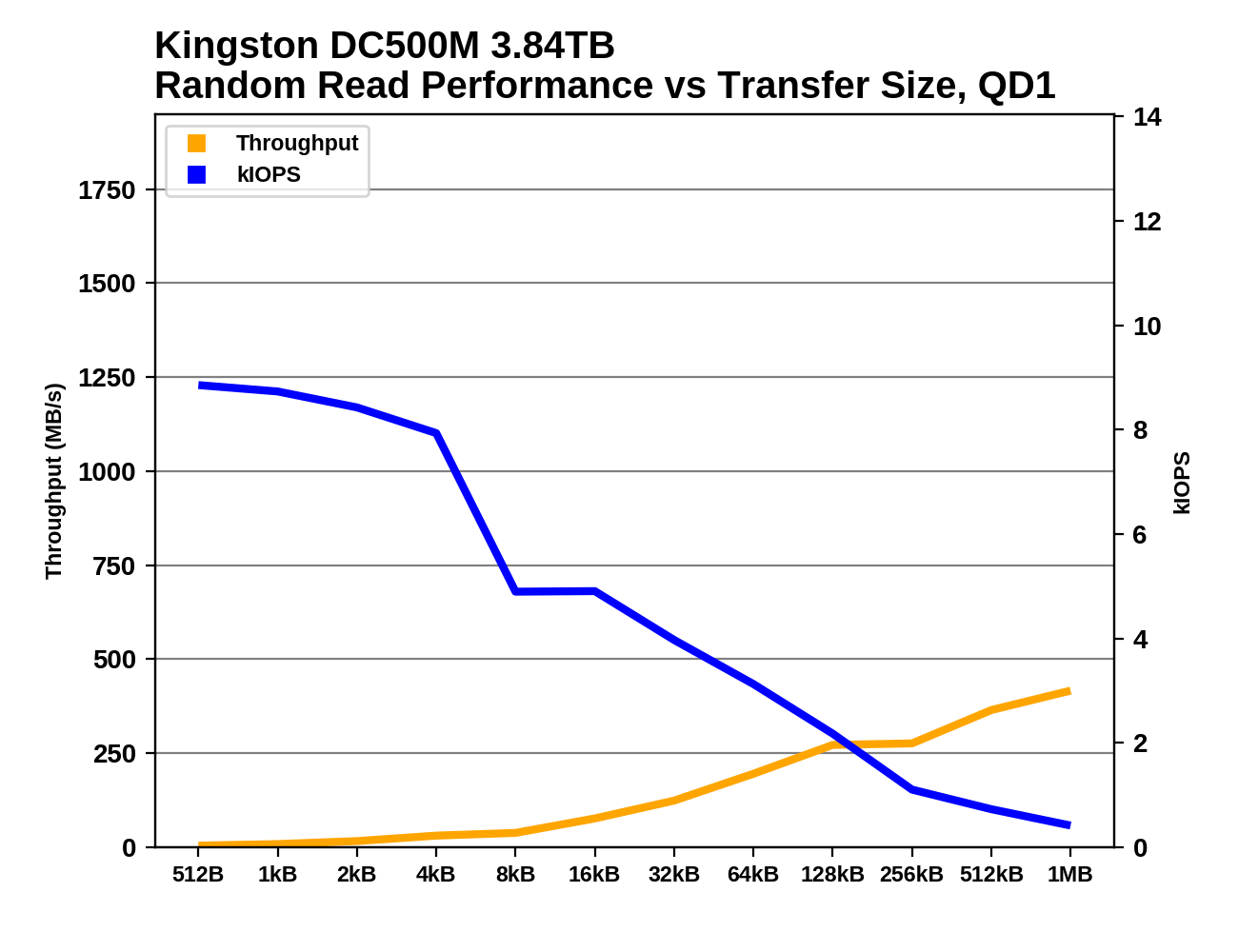

The Kingston drives offer 8-9k IOPS for QD1 random reads of 4kB or smaller blocks, but jumping up to 8kB blocks cuts IOPS in half, leaving bandwidth unchanged. After that, increasing block size does bring steady throughput improvements, but even at 1MB, reading at QD1 isn't enough to saturate the SATA link.
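
The relationship between IOPS, block size and bandwidth is simple arithmetic, sketched below. The 8,500 IOPS figure is a hypothetical midpoint of the 8-9k range, "4kB" is assumed to mean 4096 bytes, and the latency conversion relies on the fact that at QD1 only one command is ever in flight.

```python
def bandwidth_mb_s(iops: float, block_bytes: int) -> float:
    """Throughput in decimal MB/s for a given IOPS rate and block size."""
    return iops * block_bytes / 1e6


def qd1_latency_us(iops: float) -> float:
    """Mean per-command latency in microseconds at queue depth 1.

    With a single command in flight, each I/O must finish before the next
    starts, so mean latency is approximately 1 / IOPS.
    """
    return 1e6 / iops


iops_4k = 8500          # hypothetical midpoint of the 8-9k IOPS range
iops_8k = iops_4k / 2   # the review notes IOPS halve when moving to 8kB blocks

print(bandwidth_mb_s(iops_4k, 4096))   # 4kB blocks
print(bandwidth_mb_s(iops_8k, 8192))   # 8kB blocks: same bandwidth
print(qd1_latency_us(iops_4k))         # implied mean latency per read
```

Halving IOPS while doubling block size leaves bandwidth at about 35 MB/s in this example, and 8,500 IOPS at QD1 corresponds to a mean latency of roughly 118 microseconds.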

QD1 Random Write Performance

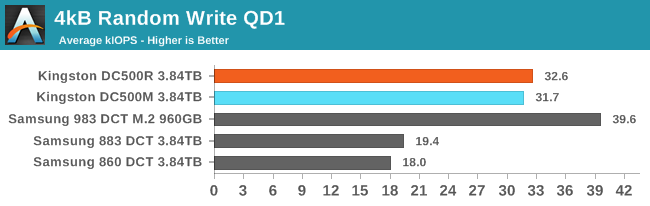

The steady-state QD1 random write throughput of the DC500s is pretty good, especially for the DC500R, which is only rated for 28k IOPS regardless of queue depth. At higher queue depths, the Samsung 883 DCT is supposed to reach the speeds the DC500s are providing here, but then the DC500M should also be much faster. The entry-level NVMe drive outpaces all the SATA drives, despite having a quarter of the capacity.

Chart views: Power Efficiency in kIOPS/W, Average Power in W

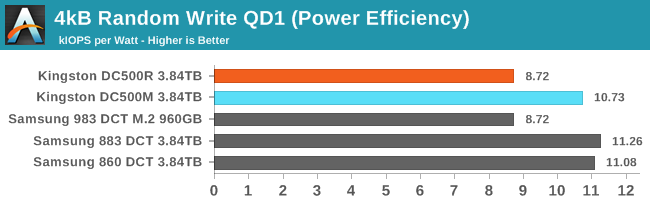

The good random write throughput of the Kingston DC500s comes with a proportional cost in power consumption, leaving them with comparable efficiency to the Samsung drives. The DC500M was fractionally slower than the DC500R but uses much less power, probably due to the extra spare area allowing the -M to have a much easier time with background garbage collection.

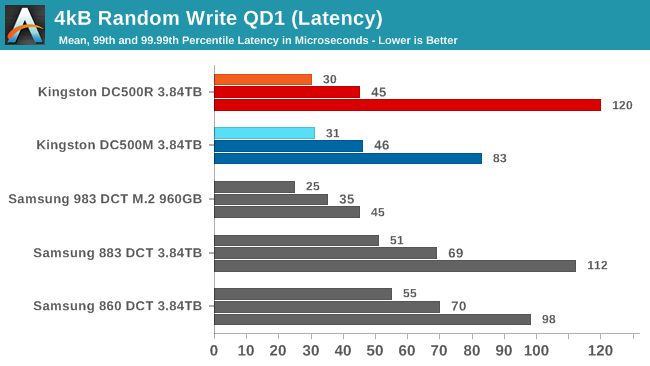

The latency statistics for the DC500R and DC500M only differ meaningfully for the 99.99th percentile score, where the -R is predictably worse off—but not too much worse off than the Samsung drives. Overall, the Kingston drives have competitive QoS with the Samsung SATA drives during this test.

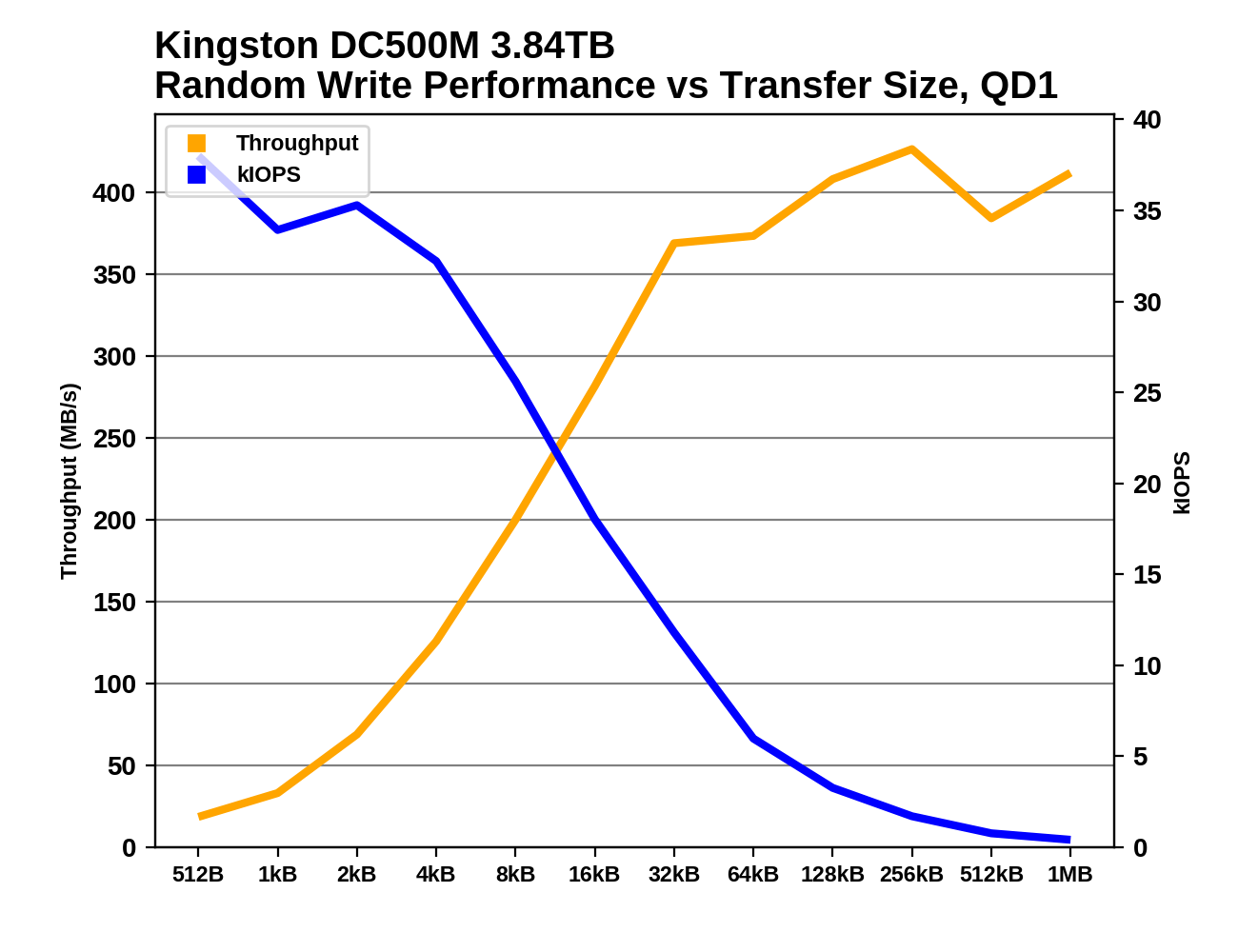

The Kingston DC500R has oddly poor random write performance for 1kB blocks, but otherwise both Kingston drives do quite well across the range of block sizes, with a clear IOPS advantage over the Samsung SATA drives for small block size random writes and better throughput once the drives have saturated somewhere around 8-32kB.

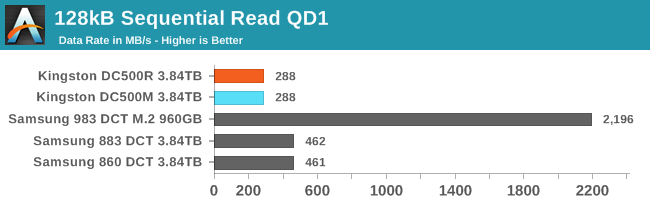

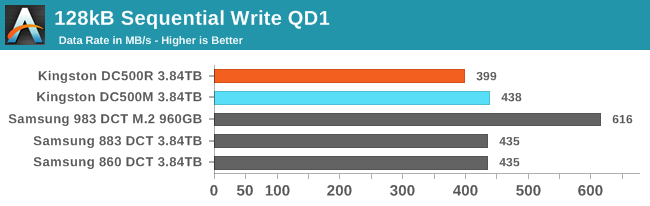

QD1 Sequential Read Performance

Chart views: Power Efficiency in MB/s/W, Average Power in W

The Kingston DC500s clearly aren't saturating the SATA link when performing 128kB sequential reads at QD1, while the Samsung drives are fairly close. We've noticed in our consumer SSD reviews that Phison-based drives often require a moderately high queue depth (or block sizes above 128kB) in order to start delivering good sequential performance, and that seems to have carried over to the S12DC platform. This disappointing performance really hurts the power efficiency scores for this test, especially considering that the DC500s are drawing a bit more power than they're supposed to for reads.
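
To put "saturating the SATA link" in numbers, the sketch below uses a deliberately simplified model in which each QD1 command must complete before the next is issued, so throughput is just block size divided by per-command time. The 600 MB/s ceiling is the 6 Gb/s SATA line rate after 8b/10b encoding; real drives top out somewhat lower because of protocol overhead. The 400 µs service time is a hypothetical value, not a measurement from this review, and a drive that prefetches ahead of the host can beat this model.

```python
SATA_LINE_RATE_BPS = 6_000_000_000  # SATA 6 Gb/s raw line rate


def sata_payload_ceiling_mb_s() -> float:
    """Upper bound on payload bandwidth after 8b/10b encoding (10 line bits
    carry 8 data bits). Ignores framing and command overhead."""
    payload_bits_per_s = SATA_LINE_RATE_BPS * 8 // 10
    return payload_bits_per_s // 8 / 1e6


def qd1_throughput_mb_s(block_bytes: int, service_time_us: float) -> float:
    """Simplified QD1 model: one command at a time, no overlap between them.
    Bytes per microsecond is numerically equal to decimal MB/s."""
    return block_bytes / service_time_us


def service_time_budget_us(block_bytes: int, target_mb_s: float) -> float:
    """Longest per-command time that still sustains target_mb_s at QD1."""
    return block_bytes / target_mb_s


block = 128 * 1024  # 128kB, assumed here to mean 131072 bytes
ceiling = sata_payload_ceiling_mb_s()
print(ceiling)                                  # encoded-link ceiling in MB/s
print(service_time_budget_us(block, ceiling))   # time budget per 128kB read
print(qd1_throughput_mb_s(block, 400.0))        # hypothetical 400 us per read
```

In this model a drive has a little under 220 microseconds to complete each 128kB read if it is to approach the link ceiling at QD1, which is why larger blocks or deeper queues help a controller that cannot turn commands around that quickly.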

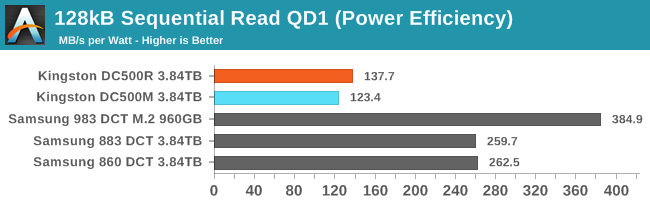

Performing sequential reads with small block operations isn't particularly useful, but the Samsung drives are much better at it. They start getting close to saturating the SATA link with block sizes around 64kB, while the Kingston drives still haven't quite caught up when the block size reaches 1MB—showing again that they really need queue depths above 1 to deliver the expected sequential read performance.

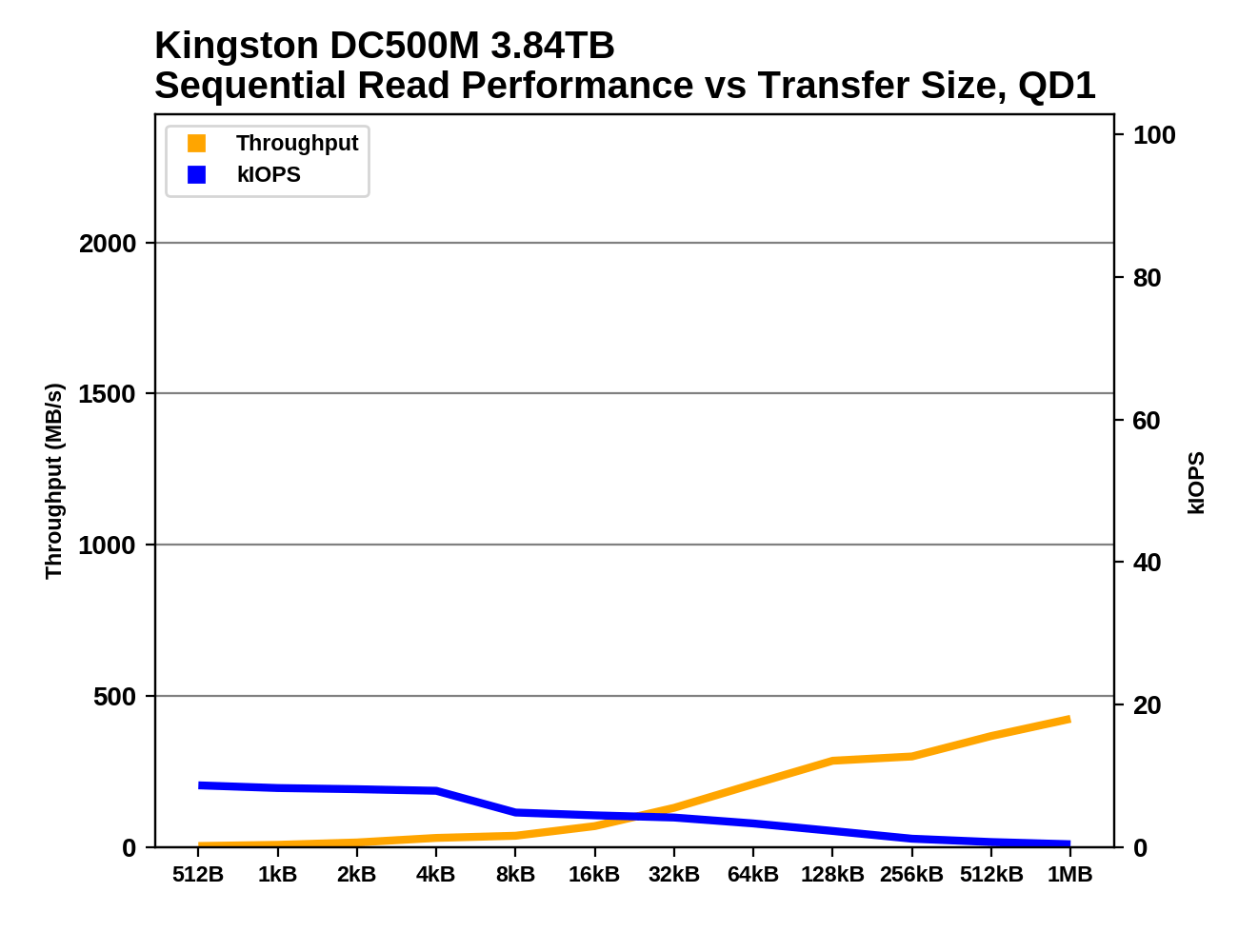

QD1 Sequential Write Performance

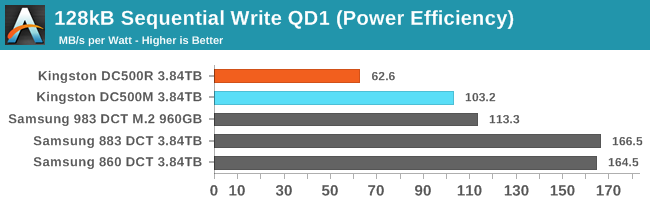

Chart views: Power Efficiency in MB/s/W, Average Power in W

Sequential writes at QD1 aren't a problem for the Kingston drives the way reads were: the DC500M is a hair faster than the Samsung SATA SSDs and the DC500R is less than 10% slower. However, Samsung again comes out way ahead on power efficiency, and the DC500R exceeds its specified power draw for writes by 30%.

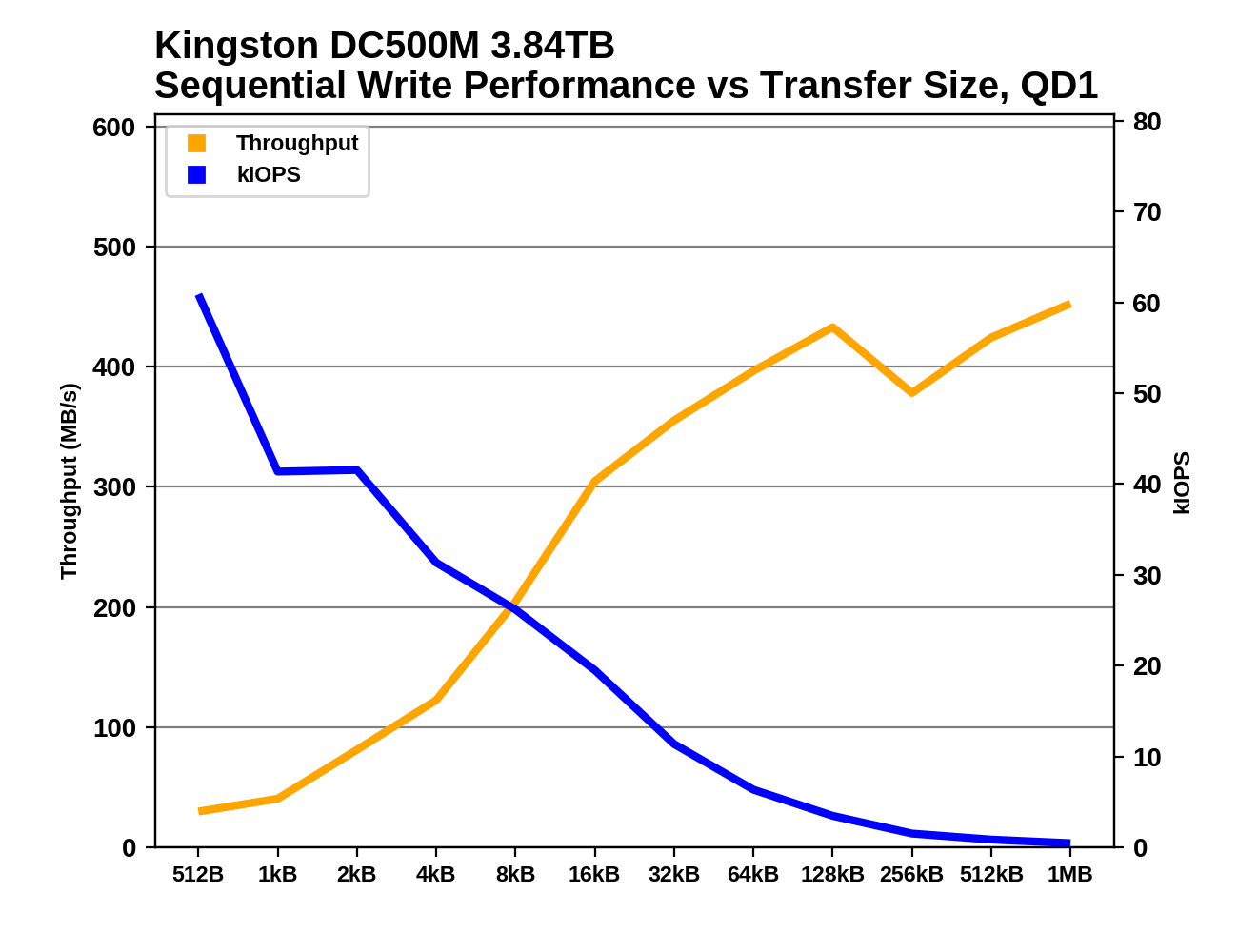

The DC500M and the Samsung 883 DCT are fairly evenly matched for sequential write performance across the range of block sizes, except that the Kingston is clearly faster for 512-byte writes (which in practice are basically never sequential). The DC500R differs from the -M by hitting a throughput limit sooner than the rest of the drives, and that limit is a bit lower in this test than in the 128kB sequential write test above.

28 Comments

Umer - Tuesday, June 25, 2019 - link

I know it may not be a huge deal to many, but Kingston, as a brand, left a really sour taste in my mouth after V300 fiasco since I bought those SSDs in a bulk for a new build back then.

Death666Angel - Tuesday, June 25, 2019 - link

Let's put it this way: they have to be quite a bit cheaper than the nearest, known competitor (Crucial, Corsair, Adata, Samsung, Intel...) to be considered as a purchase by me.

mharris127 - Thursday, July 25, 2019 - link

I don't expect Kingston to be any less expensive than ADATA as Kingston serves the mid price market and ADATA the low priced one. Samsung is a supposed premium product with a premium price tag to match. I haven't used a Kingston SSD but have some of their other products and haven't had a problem with any of them that wasn't caused by me. As far as picking my next SSD, I have had one ADATA SSD fail, they replaced it once I filled out some paperwork, they sent an RMA and I sent the defective drive back to them, the second one and a third one I bought a couple of months ago are working fine so far. My Crucial SSDs work fine. I have a Team Group SSD that works wonderfully after a year of service. I think my money is on either Crucial or Team Group the next time I buy that product.

Notmyusualid - Tuesday, June 25, 2019 - link

...wasn't it OCZ that released the 'worst known' SSD?

I had almost forgotten about those days.

I believe the only customers that got any value out of them where those on PERC and other known RAID controllers, which were not writing < 128kB blocks - and I wasn't one of them. I RMA'd & insta-sold the return, and bought X25M.

What a 'mare that was.

Dragonstongue - Tuesday, June 25, 2019 - link

Sandforce controller was ahead of it's time, not in the most positive ways all the time either...I had an Agility 3 60gb, used for just over 2 years for my system, mom used now an additional over 2.5 years, however it was either starting to have issues, or the way mom was using caused it to "forget" things now and then.

I fixed with a crucial mx100 or 200 (forget LOL) that still has over 90% life either way, the Agility 3 was "warning" though still showed as over 75% life left (christmas '18-19) .. def massive speed up by swapping to more modern as well as doing some cleaning for it..

SSD have come A LONG way in a short amount of time, sadly the producers of them via memory/controller/flash are the problem bad drivers, poor performance when should not, not work in every system when should etc.

thomasg - Tuesday, June 25, 2019 - link

Interestingly, I still have one of the OCZs with the first pre-production SandForce, the Vertex Limited 100 GB, which has been running for many years at high throughput and many many Terabytes of Writes.

Still works perfectly.

I'm not sure I remember correctly, but I think the major issues started showing up for the production SandForce model that was used later on.

Chloiber - Tuesday, June 25, 2019 - link

I still have a Supertalent Ultadrive MX 32GB - not working properly anymore, but I don't even remember how many firmware updates I put that one through :)

They just had really bad, buggy firmwares throughout.

leexgx - Wednesday, June 26, 2019 - link

main problem with sandforce is the compressed layer and the nand layer was not ever managed correctly with made trim ineffective and GC was having to be used on new writes resulting in high access times and half speed writes after 1 full drive of writes , you note did not have to be filled.

if it was a 240gb ssd all you had to do to slow it down permanently was write over time 240-300gb(due to compression to get an actual full 1DWP) of data only way to reset it was secure erase (unsure if that was ever fixed on the SandForce SF3000 , seagate enterprise SSDs and ironwolf nas SSD)

other issue which was more or less fixed was rare BSOD (more or less 2 systems i managed did not like them) or well the drive eating it self and becoming a 0Mb drive (extremely rare but did happen) the 0mb bug i think was fixed if you owned a intel drive but the BSOD fix was limited success

Gunbuster - Tuesday, June 25, 2019 - link

Indeed. Not going to support a company with a track record of shady practices.

kpxgq - Tuesday, June 25, 2019 - link

The V300 fiasco is nothing compared to the Crucial V4 fisaco... quite possible the worst SSD drives ever made right along with the early OCZ Vertex drives. Over half the ones I bought for a project just completely stopped working a month in. I bought them trusting the Crucial brand name alone.