The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Meet The EVGA GeForce GTX 1660 Ti XC Black GAMING

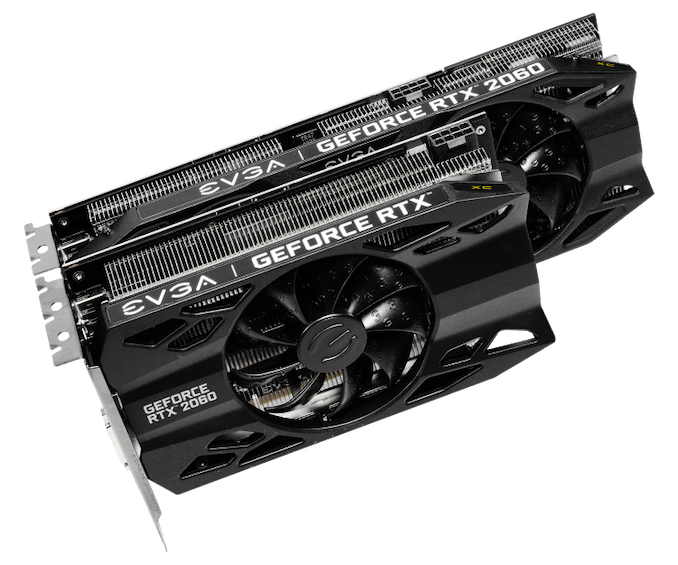

As a pure virtual launch, the release of the GeForce GTX 1660 Ti does not bring any Founders Edition model, and so everything is in the hands of NVIDIA’s add-in board partners. For today, we look at EVGA’s GeForce GTX 1660 Ti XC Black, a 2.75-slot single-fan card with reference clocks and a slightly increased TDP of 130W.

GeForce GTX 1660 Ti Card Comparison

| | GTX 1660 Ti Ref Spec | EVGA GTX 1660 Ti XC Black GAMING |
|---|---|---|
| Base Clock | 1500MHz | 1500MHz |
| Boost Clock | 1770MHz | 1770MHz |
| Memory Clock | 12Gbps GDDR6 | 12Gbps GDDR6 |
| VRAM | 6GB | 6GB |
| TDP | 120W | 130W |
| Length | N/A | 7.48" |
| Width | N/A | 2.75-Slot |
| Cooler Type | N/A | Open Air |
| Price | $279 | $279 |

Seeing as the GTX 1660 Ti is intended to replace the GTX 1060 6GB, EVGA’s cooler and card design is new and improved compared to their Pascal cards. The design was first introduced with the RTX 20-series, when EVGA rolled out the iCX2 cooling design and the new “XC” card branding to complement their existing SC and Gaming series. As we’ve seen before, the iCX platform comprises a medley of features, and some of the core technology is used even when the full iCX suite isn’t. For one, EVGA reworked their cooler design with hydraulic dynamic bearing (HDB) fans, which offer lower noise and a longer lifespan than sleeve and ball bearing types, and these are present on the EVGA GTX 1660 Ti XC Black.

In general, the card essentially shares the design of the RTX 2060 XC, complete with those new raised EVGA ‘E’s on the fans, intended to improve slipstream. The single-fan RTX 2060 XC was paired with a thinner dual-fan XC Ultra variant, and in the same vein the GTX 1660 Ti XC Black is a one-fan design that essentially occupies three slots due to the thick heatsink and correspondingly taller fan hub. Being so short, though, makes the size a natural fit for mini-ITX form factors.

As one of the cards lower down the RTX 20 and now GTX 16 series stack, the GTX 1660 Ti XC Black also lacks LEDs and zero-dB fan capability, where the fans turn off completely at low idle temperatures. The former is an eternal matter of taste, as opposed to the practicality of the latter, but both tend to be perks of premium models and/or higher-end GPUs. Putting price aside for the moment, the reference-clocked GTX 1660 Ti and RTX 2060 XC Black editions are the more mainstream variants anyhow.

Otherwise, the GTX 1660 Ti XC Black unsurprisingly lacks a USB-C/VirtualLink output, offering up the mainstream-friendly 1x DisplayPort/1x HDMI/1x DVI setup. Although the TU116 GPU still supports VirtualLink, the decision to implement it is up to partners; the feature is less applicable for cards further down the stack, which are more sensitive to cost and less likely to be used for VR. Additionally, the 30W USB-C controller power budget could be a significant amount relative to the overall TDP.

And on the topic of power, the GTX 1660 Ti XC Black’s power limit is actually capped at the default 130W, though theoretically the card’s single 8-pin PCIe power connector could supply 150W on its own.
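The power figures above lend themselves to a quick back-of-the-envelope check. The Python sketch below uses only the wattages quoted in this review, plus one outside figure that is labeled as an assumption: the 75W that the PCI Express specification allows a x16 slot to deliver. The function and variable names are purely illustrative.

```python
# Wattages quoted in the review
CARD_POWER_LIMIT_W = 130   # EVGA GTX 1660 Ti XC Black power limit
USB_C_BUDGET_W = 30        # USB-C/VirtualLink controller power budget
EIGHT_PIN_W = 150          # what a single 8-pin PCIe connector can supply

# Assumption (not stated in the review): a PCIe x16 slot can supply up to 75W
PCIE_SLOT_W = 75


def share(part_w, total_w):
    """Return part_w as a fraction of total_w."""
    return part_w / total_w


def headroom(limit_w, *sources_w):
    """Return how many watts the power sources could deliver beyond the limit."""
    return sum(sources_w) - limit_w


# USB-C budget expressed relative to the card's power limit
usb_c_share = share(USB_C_BUDGET_W, CARD_POWER_LIMIT_W)

# Unused delivery capacity from the 8-pin alone, and from 8-pin plus slot
connector_headroom = headroom(CARD_POWER_LIMIT_W, EIGHT_PIN_W)
total_headroom = headroom(CARD_POWER_LIMIT_W, EIGHT_PIN_W, PCIE_SLOT_W)

print(f"USB-C budget relative to power limit: {usb_c_share:.0%}")
print(f"Headroom from 8-pin alone: {connector_headroom}W")
print(f"Headroom from 8-pin plus slot: {total_headroom}W")
```

Under those assumptions, the USB-C budget works out to roughly 23% of the card’s 130W limit, while the 8-pin connector on its own could deliver 20W more than the card is permitted to draw.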

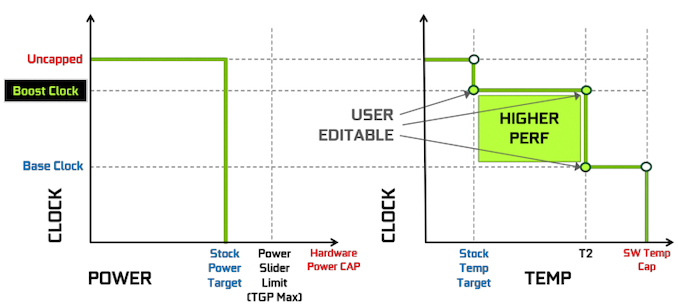

The rest of the GPU-tweaking knobs are there for your overclocking needs, and for EVGA this goes hand-in-hand with Precision, their overclocking utility. For NVIDIA’s Turing cards, EVGA released Precision X1, which allows modifying the voltage-frequency curve and scanning for auto-overclocking as part of Turing’s GPU Boost 4. Of course, NVIDIA’s restriction on actual overvolting is still in place, and for Turing there is a cap at 1.068V.

157 Comments


C'DaleRider - Friday, February 22, 2019 - link

Good read. Thx.

Opencg - Saturday, February 23, 2019 - link

gtx at rtx prices. not really a fan of that graph at the end. I mean 1080 ti were about 500 about half a year ago. the perf/dollar is surely less than -7% more like -30%. as well due to the 36% perf gain quoted being inflated as hell. double the price and +20% perf is not -7% anand

eddman - Saturday, February 23, 2019 - link

They are comparing them based on their launch MSRP, which is fair.

Actually, it seems they used the cut price of $500 for 1080 instead of the $600 launch MSRP. The perf/$ increases by ~15% if we use the latter, although it's still a pathetic generational improvement, considering 1080's perf/$ was ~55% better than 980.

close - Saturday, February 23, 2019 - link

In all fairness when comparing products from 2 different generations that are both still on the market you should compare on both launch price and current price. The purpose is to know which is the better choice these days. To know the historical launch prices and trends between generation is good for conformity but very few readers care about it for more than curiosity and theoretical comparisons.

jjj - Friday, February 22, 2019 - link

The 1060 has been in retail for 2.5 years so the perf gains offered here a lot less than what both Nvidia and AMD need to offer.

They are pushing prices up and up but that's not a long term strategy.

Then again, Nvidia doesn't care much about this market, they are shifting to server, auto and cloud gaming. In 5 years from now, they can afford to sell nothing in PC, unlike both AMD and Intel.

jjj - Friday, February 22, 2019 - link

A small correction here, there is no perf gain here at all, in terms of perf per dollar.

D. Lister - Friday, February 22, 2019 - link

Did you actually read the article before commenting on it? It is right there, on the last page - 21% increase in performance/dollar, which added with the very decent gain in performance/watt would suggest the company is anything but just sitting on their laurels. Unlike another company, which has been brute-forcing an architecture that is more than a decade old, and squandering their intellectual resources to design budget chips for consoles. :P

shabby - Friday, February 22, 2019 - link

We didn't wait 2.5 years for such a meager performance increase. Architecture performance increases were much higher before Turing, Nvidia is milking us, can't you see?

Smell This - Friday, February 22, 2019 - link

DING !

I know it's my own bias, but branding looks like a typical, on-going 'bait-and-switch' scam whereby nVidia moves their goal posts by whim -- and adds yet another $100 in retail price (for the last 2 generations?). For those fans who spent beeg-buckeroos on a GTX 1070 (or even a 1060 6GB), it's The Way You Meant to Be 'Ewed-Scrayed.

haukionkannel - Saturday, February 23, 2019 - link

Do you remember how much cpus used to improve From generation to generation... 3-5%...

That was when there was no competition. Now when there is competition we see 15% increase between generations or less. Well come to the future of GPUs. 3-5 % of increase between generations if there is not competition. Maybe 15 or less if there is competition. The good point is that you can keep the same gpu 6 year and you have no need to upgrade and lose money.