Intel Developer Forum Spring 2004 - Wrapup

by Derek Wilson on February 23, 2004 8:44 PM EST - Posted in Trade Shows

In a very convenient twist of fate, one of the designers of the Tumwater and Lindenhurst reference boards happens to be a reader and forum member here (and over at ArsTechnica) who goes by KalTorak. We talked about such cool things as the weight of those nifty cooling solutions in the picture (they are about a pound each and need to be anchored to the chassis since the motherboard can't support them) and just why we couldn't crack open the other systems to take a look at the boards within.

Apparently there were quite a few boards on display at IDF that shouldn't have been, as Intel didn't want to disclose the maximum amount of RAM or the number of PCI Express slots available on Tumwater and Lindenhurst. That's why we couldn't take a look inside the systems running any of the cool PCI Express graphics demos either.

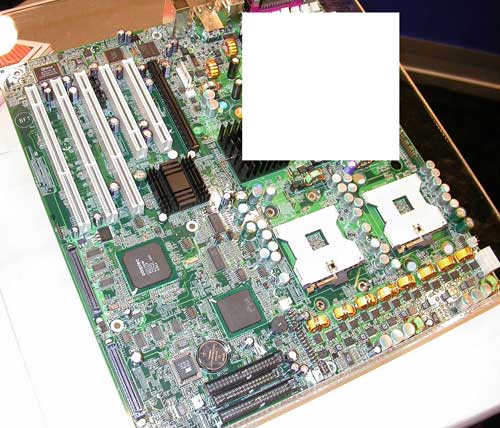

We did manage to get some pictures of a server board that we've edited to keep everyone out of trouble. Take a look:

We saw another Lindenhurst board over at the Corsair booth showing off their DDR2 modules. It had a little contraband material on it as well, but that's been taken care of here too.

Most of the rest of the show was Itanium and server hardware, or how everyone had an example of (insert hardware here) with a PCI Express interface. The only thing left to tackle on the Technology Showcase is the difference between ATI's and NVIDIA's PCI Express solutions.

9 Comments


TrogdorJW - Tuesday, February 24, 2004

Ugh... IPS was supposed to be IPC. IPS has been proposed as an alternative to MHz as a processor speed measurement (Instructions Per Second = IPC * MHz), but figuring out the *average* number of instructions per clock is likely to bring up a whole new set of problems.
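The relationship in the comment above can be written out as a small sketch. The IPC and clock figures here are made-up illustrative values, not measurements of any real processor, and `instructions_per_second` is a hypothetical helper name.

```python
def instructions_per_second(ipc: float, clock_mhz: float) -> float:
    """IPS = IPC * clock rate, with the clock given in MHz."""
    return ipc * clock_mhz * 1_000_000


# Hypothetical chips: the numbers are chosen only to illustrate the formula.
high_clock = instructions_per_second(ipc=1.0, clock_mhz=3200)
high_ipc = instructions_per_second(ipc=1.5, clock_mhz=2200)

# A lower-clocked chip can still come out ahead if its average IPC is higher.
print(high_ipc > high_clock)
```

The hard part, as the comment says, is the `ipc` input: the average varies with the workload, so a single IPS figure hides that dependence.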

TrogdorJW - Tuesday, February 24, 2004

The AMD people will probably love this quote:

"We still need to answer the question of how we are going to get from here to there. As surprising as it may seem, Intel's answer isn't to push for ever increasing frequencies. With some nifty charts and graphs, Pat showed us that we wouldn't be able to rely on increases in clock frequency giving us the same increases in performance as we have had in the past. The graphs showed the power density of Intel processors approaching that of the sun if it remains on its current trend, as well as a graph showing that the faster a processor, the more cycles it wastes waiting for data from memory (since memory latency hasn't decreased at the same rate as clock speed has increased). Also, as chips are fabbed with smaller and smaller processes, increasing clock speeds will lead to problems with moving data across around a chip in less than one clock cycle (because of interconnect RC delays)."

Of course, this is nothing new. Intel has been pursuing clock speed with P4 and parallelism with P-M and Itanium. In an ideal world, you would have Pentium M/Athlon IPS with P4 clock speeds. Anyway, it looks like programmers (WOOHOO - THAT'S ME!) are going to become more important than ever in the future processor wars. Writing software to properly take advantage of multiple threads is still an enormously difficult task.

Then again, if game developers for example would give up on the "pissing contest" of benchmarks and code their games to just run at a constant 100 FPS max, it might be less of an issue. If CPUs get fast enough that they can run well over 100 fps on games, then they could stop being "Real Time Priority" processes.

It really irks me that most games suck up 100% of the processor power. If I could get by with 30% processor usage and let the rest be multi-tasked out to other threads while maintaining a good frame rate, why should the game not do so? This is especially annoying on games that aren't real-time, like the turn-based strategy games.
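The frame cap suggested above could be sketched roughly as follows. This is a minimal illustration, not how any particular game engine does it; `run_capped`, `render_frame`, and the injectable `clock` and `sleep` parameters are hypothetical names chosen for the example.

```python
import time


def run_capped(render_frame, target_fps=100, frames=10,
               clock=time.perf_counter, sleep=time.sleep):
    """Render `frames` frames, sleeping away whatever is left of each
    frame's time budget instead of spinning at 100% CPU.

    Returns the total time handed back to the OS, in seconds.
    """
    frame_budget = 1.0 / target_fps
    slept = 0.0
    for _ in range(frames):
        start = clock()
        render_frame()
        remaining = frame_budget - (clock() - start)
        if remaining > 0:
            # The frame finished early: yield the CPU to other processes.
            sleep(remaining)
            slept += remaining
    return slept


if __name__ == "__main__":
    # A trivial stand-in for real rendering work.
    idle = run_capped(lambda: sum(range(1000)), target_fps=100, frames=5)
    print(f"slept for {idle:.3f} s over 5 frames")
```

If a frame takes longer than its budget, the loop simply moves on without sleeping, so the cap only limits the maximum frame rate.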

TrogdorJW - Tuesday, February 24, 2004

"As for an example of synthesis, we were shown a demo of realtime raytracing. Visualization being the infinitely parallelizable problem that it is, this demo was a software renderer running on a cluster of 23 dual 2.2GHz Xeon processors. The world will be a beautiful place when we can pack this kind of power into a GPU and call it a day."Heheheh.... I like that. It's a real-time raytracing demo! Woohoo! I've heard people talk about raytracing being a future addition to graphics cards. If you assume that the GPU with specialized hardware could do raytracing ten times faster than the software on the Xeons, we'll still need 5 GHz graphics chips to pull it off. Or two chips running at 2.5 GHz? Still, the thought of being able to play a game with Toy Story quality graphics is pretty cool. Can't wait for 2010!

Shuxclams - Tuesday, February 24, 2004

Oops, no comment before. Am I seeing things or do I see a southbridge, northbridge and memory controller?

SHUX

Shuxclams - Tuesday, February 24, 2004

HammerFan - Tuesday, February 24, 2004

Intel probably won't use an onboard mem controller for a long time... I've heard that their first experiences with them weren't good. Also, the northbridges are way too big to not have a mem controller on board.

*new topic*

That BTX case looks wacky to me...why such a big heatsink for the CPU?

*new topic*

I have the same question Cygni had: Are there any CTs in these pictures, or are there none out-and-about yet?

Ecmaster76 - Tuesday, February 24, 2004

I counted eight DIMMs on the first board and either six or eight on the second one. Dual core memory controller? If so it would help Intel keep the Xeon from being spanked by Opteron as they scale.

capodeloscapos - Tuesday, February 24, 2004

Quote: "It is possible that future games (and possibly games ported by lazy console developers) may want to use the CPU and main memory a great deal and therefore benefit from PCI Express"

cough!, Halo, Cough!, Colin McRae 3, cough!...

:)

Cygni - Tuesday, February 24, 2004

I like the attempt to hide the number of DIMM slots... but I think it's still pretty easy to tell how many are there, because of the top of the slots still showing, as well as a little of the bottom of the last slot.

So, is Intel trying to hide that Lindenhurst is 64bit (XeonCE) compatible, or am I off base here?