Western Digital My Passport SSD Mini-Review

by Ganesh T S on June 28, 2017 8:00 AM EST - Posted in

- Storage

- SSDs

- Western Digital

- DAS

- USB 3.1

AnandTech DAS Suite and Performance Consistency

This section looks at how the My Passport SSD behaves when subject to real-world workloads.

robocopy and PCMark 8 Storage Bench

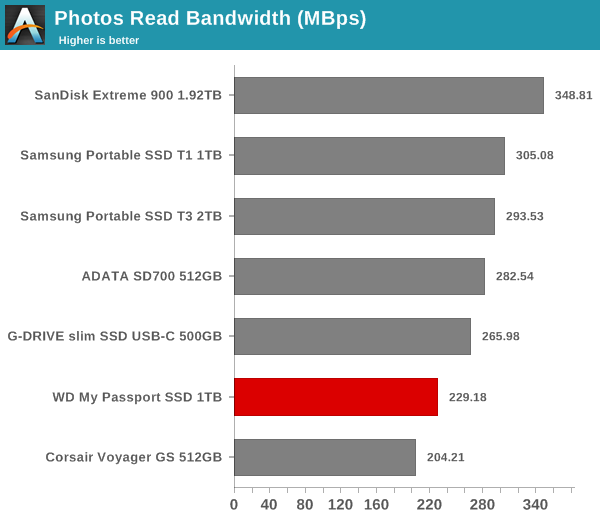

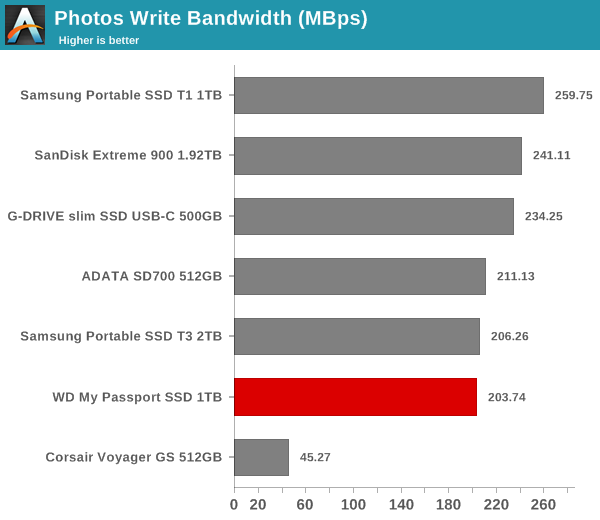

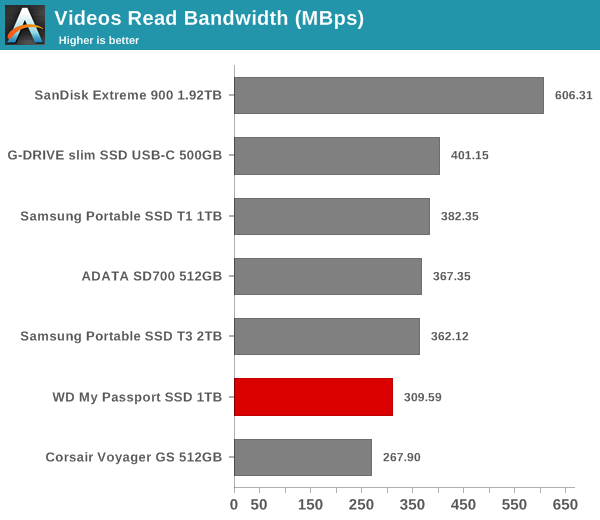

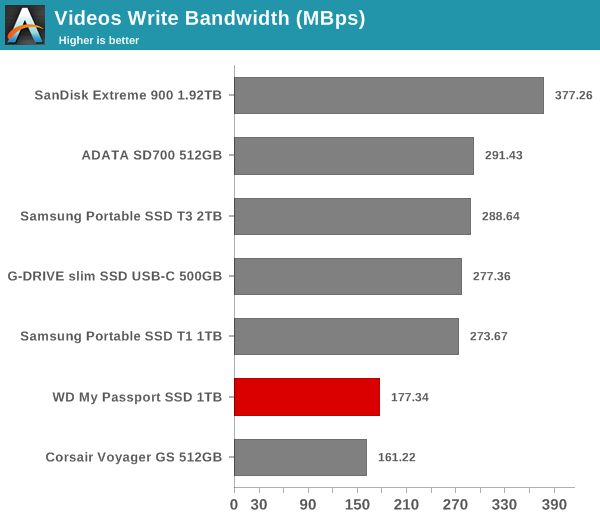

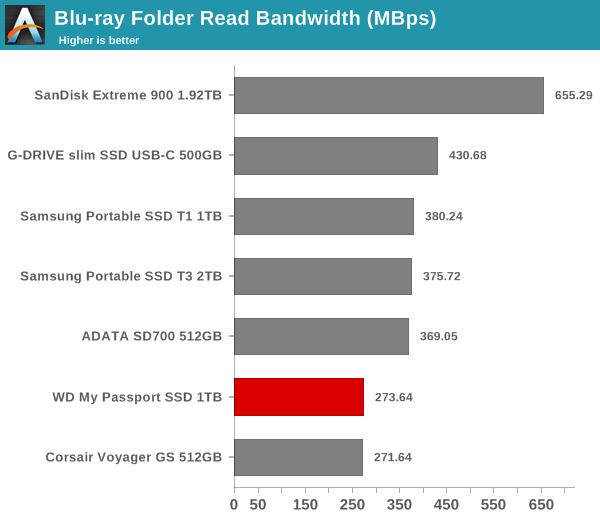

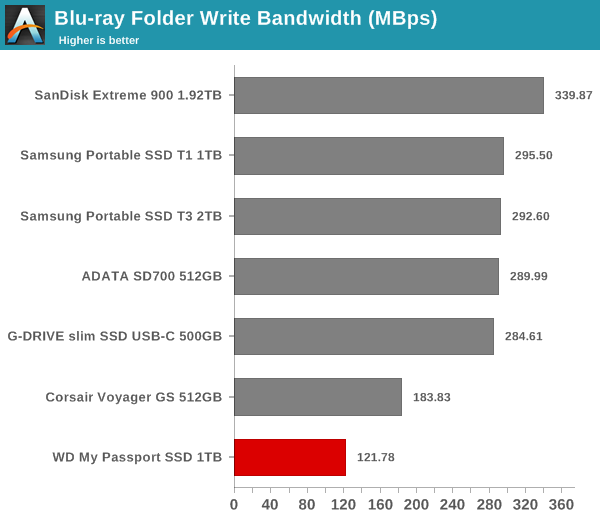

Our testing methodology for DAS units also takes into consideration the usual use-cases for such devices. The most common usage scenario is the transfer of large amounts of photos and videos to and from the unit. A less common scenario is importing files directly off the DAS into a multimedia editing program such as Adobe Photoshop.

In order to tackle the first use-case, we created three test folders with the following characteristics:

- Photos: 15.6 GB collection of 4320 photos (RAW as well as JPEGs) in 61 sub-folders

- Videos: 16.1 GB collection of 244 videos (MP4s as well as MOVs) in 6 sub-folders

- BR: 10.7 GB Blu-ray folder structure of the IDT Benchmark Blu-ray (the same that we use in our robocopy tests for NAS systems)
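robocopy does the real work in the suite. The Python sketch below illustrates what a folder-copy figure measures by timing a single tree copy and reporting the average rate. It is not AnandTech's harness: `shutil.copytree` stands in for robocopy, and `folder_size_bytes` and `timed_copy` are hypothetical helper names.

```python
import shutil
import time
from pathlib import Path


def folder_size_bytes(root: Path) -> int:
    """Total size of all regular files under root, recursively."""
    return sum(p.stat().st_size for p in root.rglob("*") if p.is_file())


def timed_copy(src: Path, dst: Path) -> float:
    """Copy the folder tree src to a new folder dst and return the average rate in MB/s.

    MB means 10**6 bytes. The wall-clock time includes per-file overhead,
    so a folder of many small files tends to report a lower rate than a
    folder of a few large files with the same total size.
    """
    total = folder_size_bytes(src)
    start = time.perf_counter()
    shutil.copytree(src, dst)  # dst must not exist yet
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
    return total / 1e6 / elapsed
```

As a usage example, timing a copy from a RAM-drive folder to the DAS gives the write rate for that folder. Hypothetical paths would be `timed_copy(Path("R:/Photos"), Path("E:/Photos"))`. Copying back to a fresh folder gives the read rate. OS write caching can let a copy return before all data reaches the drive, so small test sets may overstate write rates.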

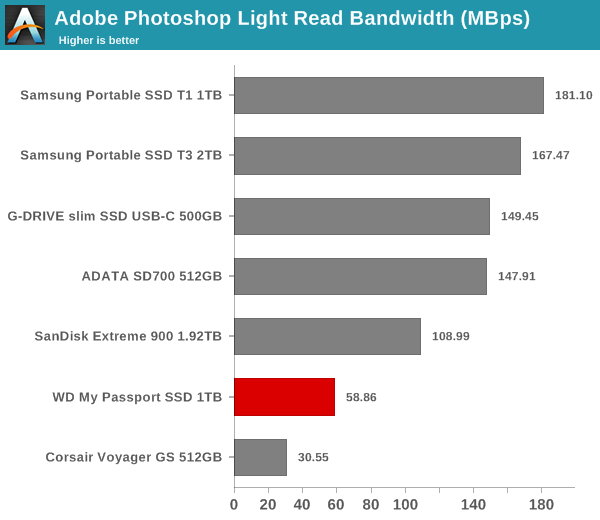

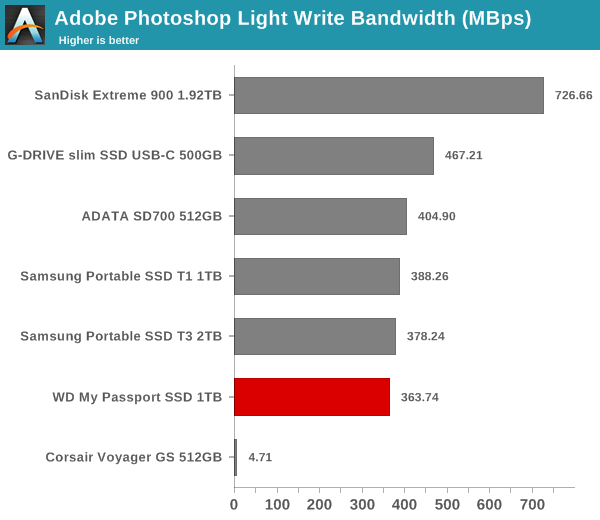

For the second use-case, we take advantage of PCMark 8's storage bench. The storage workload involves games as well as multimedia editing applications. The command-line version allows us to cherry-pick storage traces to run on a target drive. We chose the following traces:

- Adobe Photoshop (Light)

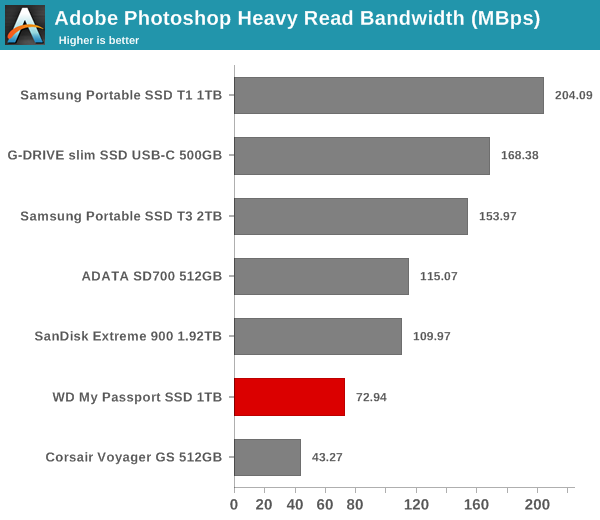

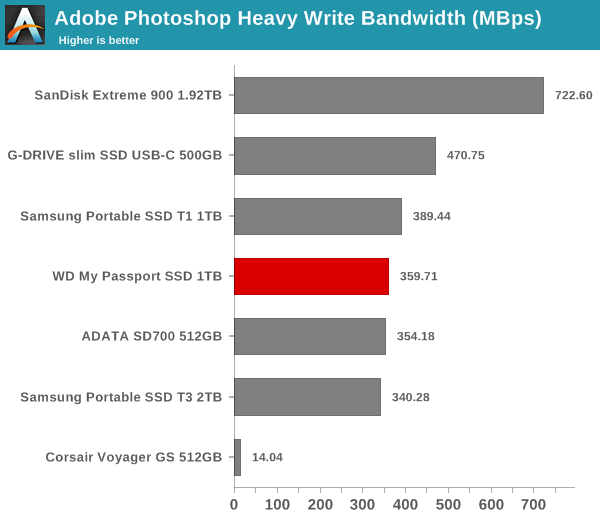

- Adobe Photoshop (Heavy)

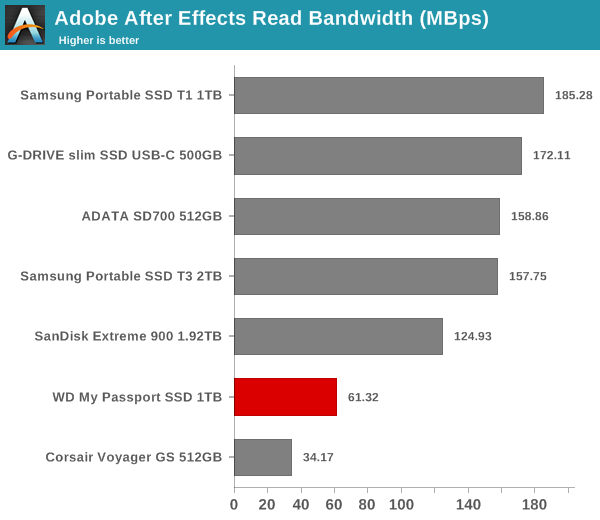

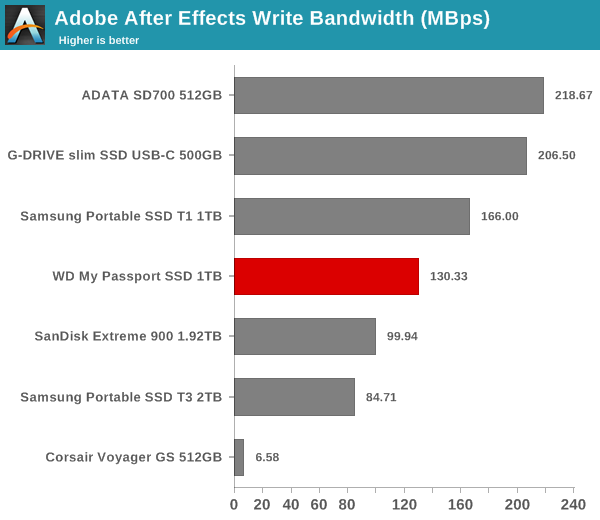

- Adobe After Effects

- Adobe Illustrator

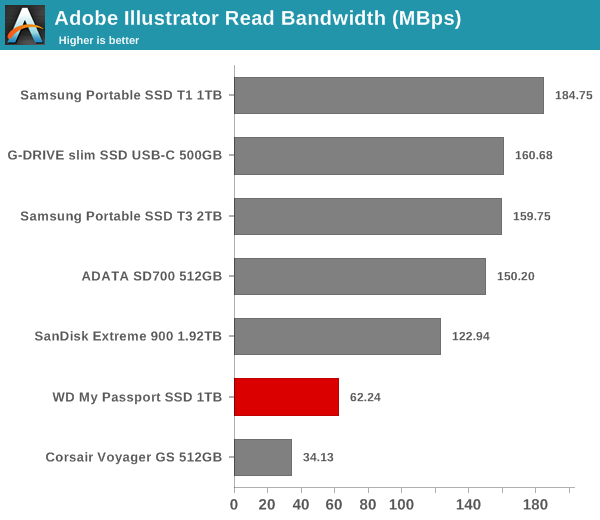

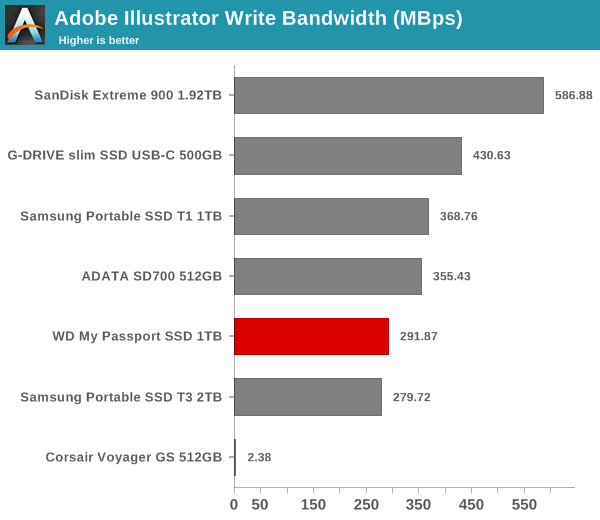

Usually, PCMark 8 reports the time taken to complete the trace, but the detailed log report has the read and write bandwidth figures, which we present in our performance graphs. Note that the bandwidth numbers reported in the results don't involve idle-time compression. The results might appear low, but that is part of the workload's characteristics. Since the same testbed is used for all DAS units, the numbers for each trace can be compared across different DAS units.
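The minimal model below shows why leaving idle time in makes the figures look low. It assumes that the reported bandwidth is total bytes divided by total trace time. The `TraceEvent` structure and the `idle_cap_s` parameter are hypothetical, and PCMark 8's actual idle-time handling is not modelled here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TraceEvent:
    """One burst of I/O in a replayed storage trace (hypothetical model)."""
    bytes_moved: int
    busy_s: float  # seconds the drive spent servicing the burst
    idle_s: float  # recorded idle (think) time that follows the burst


def bandwidth_mb_s(events: List[TraceEvent], idle_cap_s: Optional[float] = None) -> float:
    """Average bandwidth in MB/s (10**6 bytes) across a whole trace.

    idle_cap_s=None keeps every idle gap at full length (no idle-time
    compression). A number shortens any longer gap to that many seconds.
    """
    total_bytes = sum(e.bytes_moved for e in events)
    total_s = 0.0
    for e in events:
        idle = e.idle_s if idle_cap_s is None else min(e.idle_s, idle_cap_s)
        total_s += e.busy_s + idle
    return total_bytes / 1e6 / total_s
```

Consider two 100 MB bursts that each take 1 s, followed by 4 s and 0 s of idle time. `bandwidth_mb_s(events)` gives about 33 MB/s, while capping idle gaps at zero gives 100 MB/s. The drive is the same in both cases; only the accounting changes.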

We defer the analysis of these numbers to the performance consistency subsection.

Performance Consistency

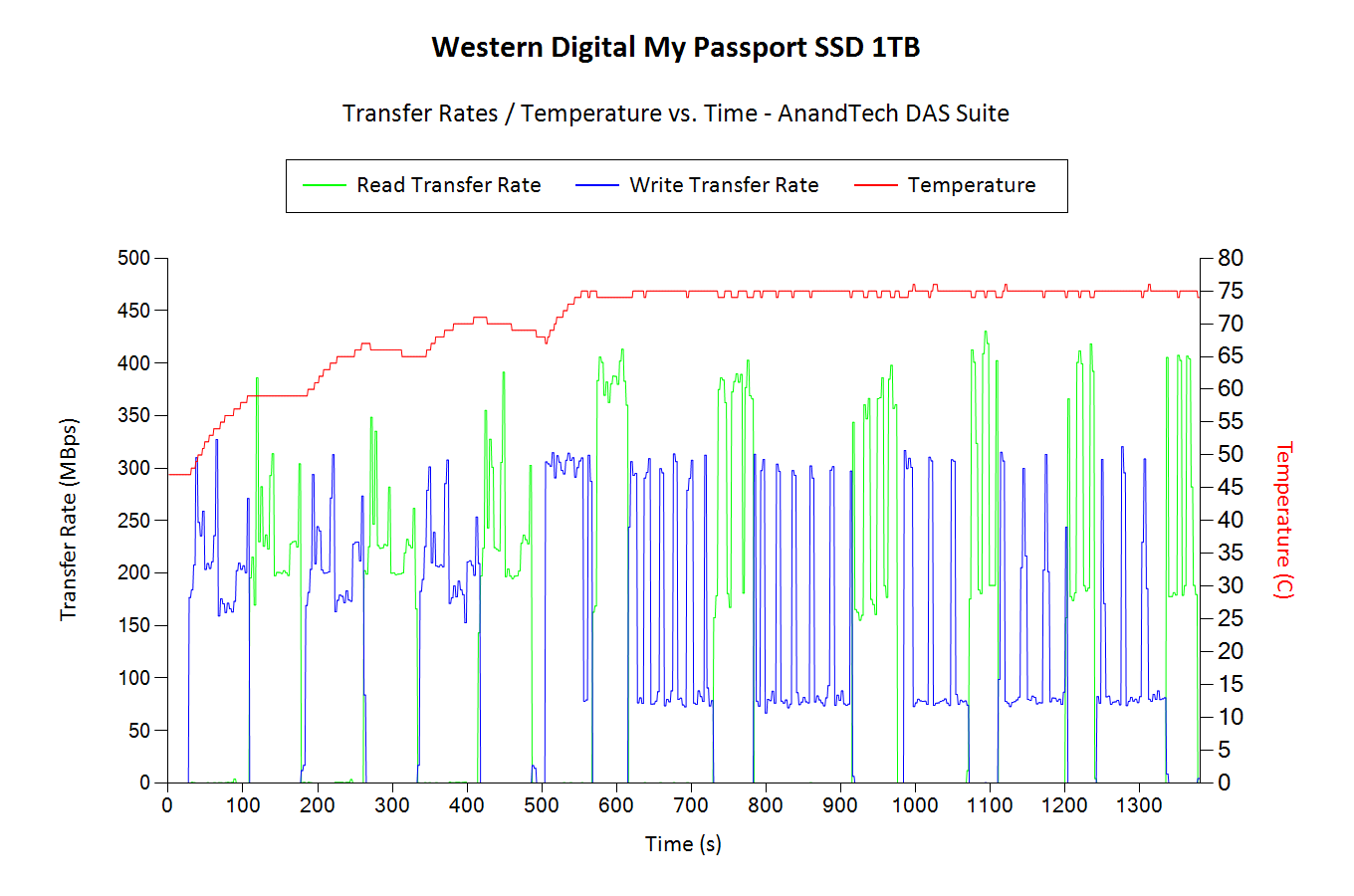

Yet another interesting aspect of these types of units is performance consistency. Factors that may influence this include thermal throttling and firmware caps on access rates to avoid overheating, among other similar scenarios. This aspect is an important one, as the last thing that users want to see when copying over, say, 100 GB of data to the drive is the transfer rate dropping to USB 2.0 speeds. In order to identify whether the drive under test suffers from this problem, we instrumented our robocopy DAS benchmark suite to record the drive's read and write transfer rates while the robocopy process took place in the background. For supported drives, we also recorded the internal temperature of the drive during the process. The graph above shows the speeds observed during our real-world DAS suite processing. The first three sets of writes and reads correspond to the photos suite. A small gap (for the transfer of the videos suite from the primary drive to the RAM drive) is followed by three sets for the videos suite. Another small RAM-drive transfer gap is followed by three sets for the Blu-ray folder. An important point to note here is that each of the first three blue and green areas corresponds to 15.6 GB of writes and reads, respectively.
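The instrumentation described above comes down to sampling the drive's cumulative byte counters at a fixed interval and taking the difference between successive samples. A minimal sketch of that step follows. The sample format and the 40 MB/s floor are assumptions, and the function names are hypothetical. The counters could come from a source such as psutil's `disk_io_counters(perdisk=True)`.

```python
from typing import List, Tuple

# (timestamp in seconds, cumulative bytes written to the drive so far)
Sample = Tuple[float, int]


def rates_mb_s(samples: List[Sample]) -> List[float]:
    """Convert cumulative byte-counter samples into per-interval rates in MB/s."""
    rates = []
    for (t0, b0), (t1, b1) in zip(samples, samples[1:]):
        rates.append((b1 - b0) / 1e6 / (t1 - t0))
    return rates


def slow_intervals(rates: List[float], floor_mb_s: float = 40.0) -> List[int]:
    """Indices of intervals whose rate fell below floor_mb_s.

    The 40 MB/s default is an assumed stand-in for "USB 2.0 speeds". The
    deliberate gaps between data sets (the RAM-drive transfers) also show
    up here, so flagged intervals must be checked against the test
    schedule before they are called throttling.
    """
    return [i for i, r in enumerate(rates) if r < floor_mb_s]
```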

In the case of the My Passport SSD, throttling doesn't activate until the temperature reaches 75C. This happens only after more than 100GB of data has been transferred in a sustained manner. For a mainstream device, Western Digital indicates that this is an acceptable limitation. Note that the drive starts out at 45C in this case, since we start the process right after the CrystalDiskMark benchmark is done. A drive that is plugged in fresh starts cooler and has more thermal headroom, so consumers can expect around 150GB of sustained transfers at the highest possible bandwidth.
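Reading the logged data this way amounts to finding the first sample at which the drive temperature reaches the 75C throttling point and noting how much data had moved by then. The small sketch below does this. It assumes a hypothetical log of (elapsed seconds, temperature, cumulative bytes) rows, and `gb_before_threshold` is an illustrative name.

```python
from typing import List, Optional, Tuple

# (elapsed seconds, drive temperature in degrees C, cumulative bytes transferred)
LogRow = Tuple[float, float, int]


def gb_before_threshold(log: List[LogRow], limit_c: float = 75.0) -> Optional[float]:
    """Return GB (10**9 bytes) moved when the temperature first reaches limit_c.

    Returns None if the log never reaches the limit.
    """
    for _elapsed_s, temp_c, total_bytes in log:
        if temp_c >= limit_c:
            return total_bytes / 1e9
    return None
```

A run that starts warm, at 45C, has less headroom before reaching the limit than a drive plugged in cool. This is why the warm-start run reaches 75C after a smaller transfer than the roughly 150GB expected from a fresh start.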

22 Comments


StevoLincolnite - Wednesday, June 28, 2017

Really not interested in SSDs for mass storage. Not until they are affordable at ~4 terabytes or larger.

Anyone know what they are like for archival purposes versus a mechanical disk?

azazel1024 - Wednesday, June 28, 2017

Retention errors are caused by charge leaking out of a flash cell over time. The rate varies, and at this point I assume all SSD controllers implement strategies and algorithms to reduce this source of errors. They cannot eliminate it, because a NAND cell will eventually leak its charge back toward the erased state; it just takes a very long time. Also, all modern SSD controllers do flash correct and refresh (FCR reads out the page, corrects any errors, and then refreshes the page).

BTW, I pulled this out of a white paper on flash memory retention and error correction strategies.

Also, as the number of writes to a cell accumulates over time, its retention ability drops off. That is one thing you don't see tested in SSD endurance benchmarks/tests. A 1TB SSD might be rated for 1000 P/E cycles, and after the first program cycle it might retain data for a year, with zero FCR or anything else done to preserve the data in the cells. By P/E cycle 980 it might only hold that data for a month. It might start accumulating some correctable page errors around cycle 1080, by which point it might be down to only a couple of weeks of retention.

Temperature also has an impact on retention age. 1080 days of retention at 20C is equivalent to 94 hours, basically 4 days, at 70C. So if you left a NAND flash cell at 70C for about 4 days, it would "age" the equivalent of almost 3 years at room temperature. DO NOT STORE NAND FLASH IN A HOT LOCATION. The table I found in the white paper lists the aging factor for each temperature, derived from Arrhenius' law.

So a hot car might "kill" the data on an SSD in a couple of months, compared to room temperature storage that might take a few years. At 50C the aging factor is 27.5. At 60C it is 90.2. Up at 90C it is 2143.6.

By the same token, storing it at very low temperatures will give you a fractional aging factor.
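The aging factors quoted above follow the Arrhenius equation, AF = exp[(Ea/k)(1/T_ref - 1/T)], with temperatures in kelvin. The comment does not give the white paper's parameters. The sketch below assumes an activation energy of 1.1 eV and a 25C reference, because these values reproduce the quoted numbers closely. With a 20C reference, the 70C equivalent of 1080 days would come out near 45 hours rather than 94. The function names are illustrative.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def acceleration_factor(t_stress_c: float, t_ref_c: float = 25.0, ea_ev: float = 1.1) -> float:
    """Arrhenius aging factor for storage at t_stress_c relative to t_ref_c.

    ea_ev is the activation energy. The 1.1 eV value and the 25 C reference
    are assumptions, chosen because they reproduce the quoted table closely.
    """
    t_stress_k = t_stress_c + 273.15
    t_ref_k = t_ref_c + 273.15
    return math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_ref_k - 1.0 / t_stress_k))


def equivalent_hours(ref_days: float, t_stress_c: float, **kwargs) -> float:
    """Hours at t_stress_c that age the flash as much as ref_days at the reference temperature."""
    return ref_days * 24.0 / acceleration_factor(t_stress_c, **kwargs)
```

With these assumptions, `acceleration_factor(50)`, `(60)` and `(90)` come out at roughly 27.4, 89.8 and 2130, and `equivalent_hours(1080, 70)` at roughly 94. `acceleration_factor(0)` is well below 1, which is the fractional aging factor for cold storage.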

Also, to cut to the chase: from what I could find in the white paper, 2xnm NAND flash has roughly a 1 year retention age, impacted somewhat by the different ECC and error correction strategies implemented by the controller. The white paper gets into deep details, but I can't suss out whether that 1 year retention is at P/E cycles = 1 or at the maximum. For this 2xnm NAND flash, the white paper looked at raw error rates related to retention age. It found that the raw error rate at P/E = 5000 was about 20-40x higher than at P/E = 1 (the scale of the graph is hard to read at the low end of P/E cycles). Down around P/E = 1000 it was only about 3-5x higher than at P/E = 0.

TLC is going to be worse than MLC, which is going to be worse than SLC. Of course, the endurance rating also differs between them, which probably takes some of that into account as well. There is also a difference between slow-leakage and fast-leakage cells. It is possible that "archival" SD cards and such are made with slow-leakage cells, which might have much longer retention.

Extremely long story, executive summary at the end ('cause I am a jerk): NAND flash sucks for long-term cold archival, unless you are storing it COLD. Probably not too many people put some stuff on an SSD, walk away, and don't come back until 10 years later, or even a year later. An archival SSD/flash memory card is also probably not going to sit on a shelf for a year plus without ever being accessed, having data added, whatever.

Modern SSD controllers (and possibly SD/uSD card controllers) also employ strategies to refresh the cells periodically so they don't "age out".

DO NOT STORE FLASH-BASED DEVICES IN A HOT CAR. A black SSD, phone, flash card, etc. left in a car with the windows up and the sun beating down on it can easily hit or exceed 70C. A couple of days of sitting in the sun like that could easily cause significant data corruption.

PS: that 1 year data retention figure might not be universal across the board. It just seems to be the design goal, and newer SSDs might be better or worse than that. Smaller cells are going to lose charge faster, which might again be part of why the P/E endurance is lower. Better controllers might be able to pull data with lower error rates from more decayed cells, or have better ECC strategies. Also, once that 1 year is exceeded, it doesn't mean all of the data is gone, just that statistically you are likely to start encountering some uncorrectable errors. It might be just 1 uncorrectable error across the entire disk, but that is data lost, and the uncorrectable errors are going to start skyrocketing.

DanNeely - Wednesday, June 28, 2017

Maybe not SSDs, but as they've gotten larger, I've seen people using thumb drives for backup instead of USB HDDs. That's going to be a mostly stale set of data, and in many cases the drive will only be powered infrequently, even if its controller is smart enough to refresh the data.

Glaring_Mistake - Wednesday, June 28, 2017

About the one-year data retention: according to JEDEC specs, a drive should be able to hold data for one year (under specific conditions, and for client SSDs, not enterprise drives) after using all of its specified P/E cycles.

So that figure applies after it has used those 1000 P/E cycles, not before you start to use it.

Anandtech had a pretty good explanation of how it works here: http://www.anandtech.com/show/9248/the-truth-about...

Like you said, a lot of drives rewrite data that is in bad shape. Silicon Motion even has a specific name for its function: StaticDataRefresh.

While smaller lithographies lose charge faster than larger lithographies, everything else being equal, it also depends on the construction of the NAND and on the controller, which can make a bit of a difference.

I've actually seen one 16nm MLC drive slow down before one 15nm TLC drive did.

Which may not be exactly what you would expect given that the second was at a disadvantage in terms of both type of NAND and lithography.

That was under specific conditions though, most of the time the MLC one would likely hold up better.

Xajel - Wednesday, June 28, 2017

Damn, it looks very good, but it doesn't perform very well. I hope some company will release a similarly designed enclosure with Type-C connectivity.

vailr - Wednesday, June 28, 2017

Samsung's T3 external SSD has both: USB 3.0 and USB Type-C. The included M.2 SSD is not physically compatible with desktop M.2 slots, however (for those wanting that option).

LordConrad - Wednesday, June 28, 2017

The Samsung T3 external SSD is not M.2; it is an mSATA drive inside the external case.

Samus - Thursday, June 29, 2017

I actually love that WD includes an adapter like that. It's a nice touch for compatibility when shuffling data around.

Also happy to see they support a full SMART array, with no proprietary BS preventing display of some information. SanDisk is pretty good about this, so no surprise.

Alas, this is an expensive way to shuffle data around, considering laptop HDDs can hit 200MB/sec sequentially for a fraction of the price.

VulkanMan - Wednesday, June 28, 2017

"WD Security allows the setting of a password (up to 25 characters) that activates the hardware encryption features on the drive."

AFAIK, unless they changed something, this unit always has hardware encryption enabled.

The software allows you to set a password, but, that does NOT change the hard-coded encryption key they installed at the factory.

If you move the drive into another unit, you can't read it, so if the controller dies, kiss your data goodbye.

In other words, say you wrote a 2GB file to this unit. If you take the SSD out and plug it into a SATA connection, you won't be able to decipher the data.

If you use the security software to add a password, it does NOT re-encrypt the whole drive using your password.

I would pass on all these hard-coded encryption key units.

ganeshts - Wednesday, June 28, 2017

Yes, that likely explains why setting/removing the password has no effect on performance.

The hardcoded encryption key is probably good enough for mainstream users. BTW, I believe the actual encryption key is a combination of the user password and the hardcoded one.
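Self-encrypting drives commonly handle this with key wrapping, which would fit both comments above. The data is always encrypted with a fixed data-encryption key (DEK), and the password only protects a wrapped copy of that key. Whether WD's bridge works this way is an assumption. The sketch below is a toy with a hypothetical XOR wrap and made-up helper names, not WD's design.

```python
import hashlib
import os
from typing import Tuple


def derive_kek(password: str, salt: bytes, iterations: int = 100_000) -> bytes:
    """Derive a 32-byte key-encryption key (KEK) from the user's password."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)


def wrap_dek(dek: bytes, password: str, salt: bytes) -> bytes:
    """TOY key wrap: XOR the 32-byte data-encryption key (DEK) with the KEK.

    A real drive would use a proper key-wrap cipher (e.g. AES Key Wrap,
    RFC 3394) plus a check value to reject wrong passwords. XOR keeps this
    sketch stdlib-only, and since XOR is its own inverse, the same call unwraps.
    """
    kek = derive_kek(password, salt)
    return bytes(d ^ k for d, k in zip(dek, kek))


unwrap_dek = wrap_dek


def factory_setup() -> Tuple[bytes, bytes, bytes]:
    """Random DEK and salt, with the DEK wrapped under a blank password.

    The DEK is returned only so the example can check round trips;
    a drive would never expose it.
    """
    salt = os.urandom(16)
    dek = os.urandom(32)
    return dek, salt, wrap_dek(dek, "", salt)


def change_password(wrapped: bytes, old_pw: str, new_pw: str, salt: bytes) -> bytes:
    """Re-wrap the same DEK under a new password; the user data is never re-encrypted."""
    return wrap_dek(unwrap_dek(wrapped, old_pw, salt), new_pw, salt)
```

In this model, changing the password touches only the 32-byte wrapped blob. That is consistent with setting or removing a password having no effect on performance. It also illustrates why the data stays unreadable without the original controller, which holds the wrapped DEK.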