Investigating Cavium's ThunderX: The First ARM Server SoC With Ambition

by Johan De Gelas on June 15, 2016 8:00 AM EST - Posted in SoCs, IT Computing, Enterprise, Enterprise CPUs, Microserver, Cavium

Energy Consumption

A large part of the server market, including the cloud vendors, is very sensitive to performance per watt. For a smaller part of the market, top performance matters more than the performance-per-watt ratio: for financial trading, big data analytics, databases, and some simulation servers, performance is the top priority. Energy consumption should not be outrageous, but it is not the main concern.

We measured power consumption at the wall over a one-minute period in several situations. The first is the point where the tested server performs best in MySQL: the highest throughput just before the response time rises significantly. Next is the point where throughput is highest, regardless of response time; this is the situation where the CPU is fully loaded. Finally, we compare with a workload where the floating-point units are working hard (C-ray).
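The "best throughput at lowest response time" point can be picked mechanically from a load sweep. A minimal sketch, assuming the measurements are ordered by increasing load and that "rises significantly" means the response time exceeds 1.5 times its low-load value; that threshold and the function names are our own illustration, not the exact criterion used in these tests:

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (throughput, response_time)


def best_throughput_point(points: List[Point], max_slowdown: float = 1.5) -> Point:
    """Highest throughput reached before response time rises significantly.

    points must be ordered by increasing load. max_slowdown is a
    hypothetical cut-off: we stop at the first point whose response time
    exceeds max_slowdown times the lowest-load response time.
    """
    if not points:
        raise ValueError("need at least one measurement")
    baseline = points[0][1]
    best = points[0]
    for throughput, response_time in points[1:]:
        if response_time > baseline * max_slowdown:
            break  # latency has climbed: later points no longer qualify
        if throughput > best[0]:
            best = (throughput, response_time)
    return best


def max_throughput_point(points: List[Point]) -> Point:
    """Highest throughput regardless of response time (CPU fully loaded)."""
    return max(points, key=lambda p: p[0])
```

Using a ratio rather than an absolute latency limit keeps the rule independent of the workload's baseline latency, but any cut-off value remains a judgment call.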

| SKU | TDP (spec) | Idle (W) | MySQL best throughput at lowest response time (W) | MySQL max throughput (W) | Peak vs idle (W) | Transactions per watt | C-ray (W) |
|---|---|---|---|---|---|---|---|
| Xeon D-1557 | 45 W | 54 | 99 | 100 | 46 | 73 | 99 |
| Xeon D-1581 | 65 W | 59 | 123 | 125 | 66 | 97 | 124 |
| Xeon E5-2640 v4 | 90 W | 76 | 135 | 143 | 67 | 71 | 138 |
| ThunderX | 120 W | 141 | 204 | 223 | 82 | 46 | 190 |
| Xeon E5-2690 v3 | 135 W | 84 | 249 | 254 | 170 | 47 | 241 |
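The "Peak vs idle" column is derived from two others: MySQL max-throughput wall power minus idle wall power. A small sketch that recomputes it from the readings (transcribed by hand from the table; the data structure and function name are ours, for illustration):

```python
from typing import Dict, Tuple

# (idle W, MySQL max throughput W), transcribed from the table above
WALL_POWER: Dict[str, Tuple[int, int]] = {
    "Xeon D-1557": (54, 100),
    "Xeon D-1581": (59, 125),
    "Xeon E5-2640 v4": (76, 143),
    "ThunderX": (141, 223),
    "Xeon E5-2690 v3": (84, 254),
}


def peak_vs_idle(readings: Dict[str, Tuple[int, int]]) -> Dict[str, int]:
    """Load-minus-idle wall power (W) for each SKU."""
    return {sku: peak - idle for sku, (idle, peak) in readings.items()}


if __name__ == "__main__":
    for sku, delta in peak_vs_idle(WALL_POWER).items():
        print(f"{sku:<16} {delta:>4} W")
```

The computed deltas match the published column, which is a quick check that the table was transcribed correctly.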

Intel allowed the Xeon E5 v3 ("Haswell") to consume quite a bit of power when turbo boost was on. There is a 170 W difference between idle and max throughput, and if you assume that the CPU consumes 15 W at idle, you get a total under load of 185 W. Some of that power must be attributed to PSU losses, memory activity (not much), and higher fan speed. Still, we think Intel allowed the Xeon E5 "Haswell" to consume more than its specified 135 W TDP. We have noticed the same behavior on the Xeon E5-2699 v3 and E5-2667 v3: Haswell EP consumes little at low load, but is relatively power hungry at peak load.
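The estimate above is simple arithmetic: the wall-power delta plus an assumed CPU idle draw, compared against TDP. A minimal sketch of it, where the 15 W default mirrors the assumption in the text and the function names are ours:

```python
def estimated_cpu_load_power(peak_minus_idle_w: float, assumed_cpu_idle_w: float = 15.0) -> float:
    """Rough CPU power at full load, derived from wall measurements.

    peak_minus_idle_w: wall power at max throughput minus wall power at idle.
    assumed_cpu_idle_w: the 15 W CPU idle figure assumed in the text.
    The delta still contains PSU losses and extra memory and fan power,
    so (given that assumption) this overstates what the CPU alone draws.
    """
    return peak_minus_idle_w + assumed_cpu_idle_w


def exceeds_tdp(peak_minus_idle_w: float, tdp_w: float) -> bool:
    """True if the rough load estimate is above the rated TDP."""
    return estimated_cpu_load_power(peak_minus_idle_w) > tdp_w


if __name__ == "__main__":
    # Xeon E5-2690 v3: 170 W peak-vs-idle delta, 135 W TDP
    print(estimated_cpu_load_power(170))  # 185.0
    print(exceeds_tdp(170, 135))          # True
```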

The 90 W TDP Xeon E5-2640 v4 consumes 67 W more at peak than at idle. Even if you add 15 W to that number, you get only 82 W. Considering that the 67 W is measured at the wall (and thus includes PSU losses), it is clear that Intel has been quite conservative with the "Broadwell" parts. We got the same impression when we tested the Xeon E5-2699 v4. This confirms our suspicion that with Broadwell EP, Intel prioritized performance per watt over throughput and single-threaded performance. The Xeon D, also based on Broadwell, is simply the performance-per-watt champion.
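The ranking behind that conclusion can be read straight from the "Transactions per watt" column. A small sketch that sorts the tabulated values (transcribed by hand from the table; the helper name is ours):

```python
from typing import Dict, List, Tuple

# "Transactions per watt" column, transcribed from the table above
TRANSACTIONS_PER_WATT: Dict[str, int] = {
    "Xeon D-1557": 73,
    "Xeon D-1581": 97,
    "Xeon E5-2640 v4": 71,
    "ThunderX": 46,
    "Xeon E5-2690 v3": 47,
}


def rank_by_efficiency(table: Dict[str, int]) -> List[Tuple[str, int]]:
    """SKUs sorted from most to least transactions per watt."""
    return sorted(table.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for sku, tpw in rank_by_efficiency(TRANSACTIONS_PER_WATT):
        print(f"{sku:<16} {tpw}")
```

Both Xeon D parts come out on top, and the ThunderX lands last, just behind the Xeon E5-2690 v3.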

The Cavium ThunderX does pretty badly here, and one of the reasons is that power management either did not work, or at least did not work very well. Changing the power governor was not possible: the cpufreq driver was not recognized. The difference between peak and idle (about 80 W) makes us suspect that the chip is consuming between 40 and 50 W at idle, as measured at the wall. Whether this is just a matter of software support or a real lack of good hardware power management is not clear. It is quite possibly both.
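On Linux, whether a cpufreq driver has claimed a core can be checked through sysfs: when one is loaded, `/sys/devices/system/cpu/cpuN/cpufreq/` typically holds `scaling_driver` and `scaling_governor`. A minimal sketch of that check (the helper name is ours); a `None` result corresponds to the situation described above, where there is no governor to change:

```python
from pathlib import Path
from typing import Optional, Tuple


def cpufreq_status(cpu: int = 0, sysfs_root: str = "/sys/devices/system/cpu") -> Optional[Tuple[str, str]]:
    """Return (driver, governor) for one CPU, or None if cpufreq is absent.

    Without a registered cpufreq driver the cpuN/cpufreq directory is
    missing, so reading it fails and we report None.
    """
    base = Path(sysfs_root) / f"cpu{cpu}" / "cpufreq"
    try:
        driver = (base / "scaling_driver").read_text().strip()
        governor = (base / "scaling_governor").read_text().strip()
    except FileNotFoundError:
        return None
    return driver, governor


if __name__ == "__main__":
    status = cpufreq_status()
    if status is None:
        print("No cpufreq driver loaded: the governor cannot be changed")
    else:
        print("driver=%s governor=%s" % status)
```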

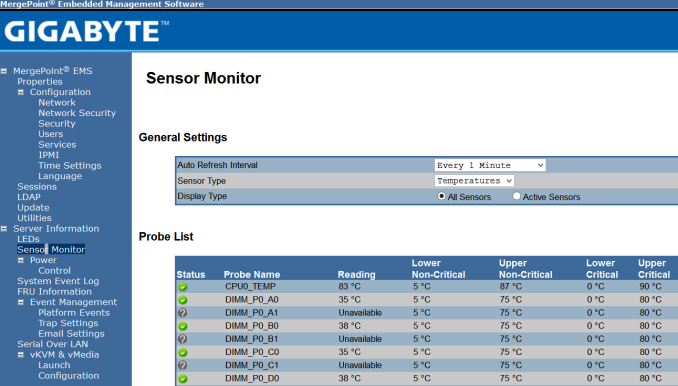

We would also advise Gigabyte to use a better-performing heatsink for the fastest ThunderX SKUs. At full load, the reported CPU temperature is 83 °C, which leaves little thermal headroom (90 °C is critical). When we stopped our CRAC cooling, the Gigabyte R120-T30 server forced a full shutdown after only a few minutes, while the Xeon D systems were still humming along.
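Watching that margin on a Linux host can be scripted against the kernel's thermal sysfs interface, which reports temperatures in millidegrees Celsius. A minimal sketch; which `thermal_zoneN` corresponds to the ThunderX die on this board is an assumption (we do not know how the reported temperature was obtained), and the 90 °C default simply mirrors the critical value quoted above:

```python
from pathlib import Path

CRITICAL_C = 90.0  # critical temperature quoted in the text


def read_zone_celsius(zone: int = 0, root: str = "/sys/class/thermal") -> float:
    """Read one thermal zone; Linux reports the value in millidegrees Celsius.

    Which zone maps to the CPU die is board-specific (assumption: zone 0).
    """
    raw = (Path(root) / f"thermal_zone{zone}" / "temp").read_text()
    return int(raw.strip()) / 1000.0


def headroom_c(current_c: float, critical_c: float = CRITICAL_C) -> float:
    """Degrees Celsius left before the critical threshold."""
    return critical_c - current_c


if __name__ == "__main__":
    print(headroom_c(83.0))  # 7.0: margin at the full-load reading above
```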

82 Comments


Spunjji - Wednesday, June 15, 2016

Well, this is certainly promising. Absent AMD, Intel need some healthy competition in this market, even if it is in something of a niche area.

niva - Wednesday, June 15, 2016

This is the area where profits are made, not "something of a niche area."

Shadow7037932 - Wednesday, June 15, 2016

Yeah, I mean getting some big customers like Facebook or Google would be rather profitable, I'd imagine.

JohanAnandtech - Thursday, June 16, 2016

More than 30% of Intel's revenue, and the most profitable area for years, and for years to come...

prisonerX - Wednesday, June 15, 2016

This is the future. Single-thread performance has reached a dead end and parallelism is the only way forward. Intel's legacy architecture is a millstone around its neck. ARM's open model and efficient implementation will deliver more cores and more performance as software adapts. The monopolists monopolise themselves into irrelevance yet again.

CajunArson - Wednesday, June 15, 2016

"Intel's legacy architecture is a millstone around its neck."

I wouldn't call those Xeon D parts, which put up excellent performance at lower prices and vastly lower power consumption, any kind of "millstone".

"ARM's open model and efficient implementation"

What's "open" about these Cavium chips exactly? They can only run a few specialized Linux flavors that don't even have the full range of standard PC software available to them.

What is efficient about a brand-new ARM chip from 2016 losing at performance per watt to the 4.5-year-old Sandy Bridge parts that you were insulting?

As for monopolies, ARM has monopolized the mobile market and brought us "open" ecosystems like the iPhone walled-garden and Android devices that literally never receive security updates. I'd take a plain x86 PC that I can slap Linux on any day of the week over the true monopoly that ARM has over locked-down smartphones.

shelbystripes - Wednesday, June 15, 2016

You're right to criticize the "millstone" comment; Intel has done quite well achieving both high performance and high performance per watt in their server designs.

But your comment about a "true monopoly" in the "locked-down smartphone" market is ridiculous. The openness (or lack thereof) that you're complaining about has nothing to do with the CPU architecture at all. An x86 smartphone or tablet can just as easily be locked down, and they are. I own a Dell Venue 8 7000, which is an Android tablet with an Intel Atom SoC inside. It's a great tablet with great hardware. But it's got a bunch of uninstallable crapware installed, Dell abandoned it after Android 5.1 (it's ridiculous that a tablet with a quad-core 2GHz SoC and 2GB RAM will never see Marshmallow), and the locked smartphone-esque bootloader means I can't repurpose it to a Linux distro even if one existed that supported all the hardware inside this thing.

On the flipside, the most popular open-source learning/development solution out there right now is the ARM-based Raspberry Pi. There are a number of Linux distros available for it, and everything is OSS, even the GPU driver.

TheLightbringer - Thursday, June 16, 2016

You haven't done your homework.

Some mobile devices did come with Intel chips. But like Microsoft, Intel entered the market too late, without offering any real value. The phrase "too little, too late" fits them both.

ARM didn't create a monopoly. They simply saw an opportunity and embraced it. In the early IBM clone days, Intel licensed their architecture to allow competition and a broad range of products. After the market was won, they got greedy, stopped licensing the architecture, and cut a lot of players out, leaving a need for a chip licensing scheme. And that's where ARM got in.

Google develops the Android OS, but it is up to phone vendors and carriers to deploy new versions. And they don't want to, for economic reasons. They prefer to sell you a new phone for $$$.

Intel and MS got in the mobile/car market exactly what they deserve, nothing else.

junky77 - Friday, June 17, 2016

They're all greedy. Some just play it smartly or have more luck in decision making.

But yeah, when you read about the way IBM behaved when things were fresh, it's quite amazing. They had much of the market and could do a lot of stuff, but they simply had a very narrow mindset.

soaringrocks - Wednesday, June 15, 2016

You make it sound like it's mostly a SW problem; I think it's more complex than that. Actual performance is very dependent on the type of workload: some tasks fit Intel CPUs nicely, and the performance per watt of ARM is lacking despite the hype about that architecture being uniquely qualified for low power. It will be fun to watch how the battle evolves, though.