AMD Reveals Polaris GPU Architecture: 4th Gen GCN to Arrive In Mid-2016

by Ryan Smith on January 4, 2016 9:00 AM EST

Polaris: Made For FinFET

The final aspect of RTG’s Polaris hardware presentation (and the bulk of their slide deck) is focused on the current generation FinFET manufacturing processes and what that means for Polaris.

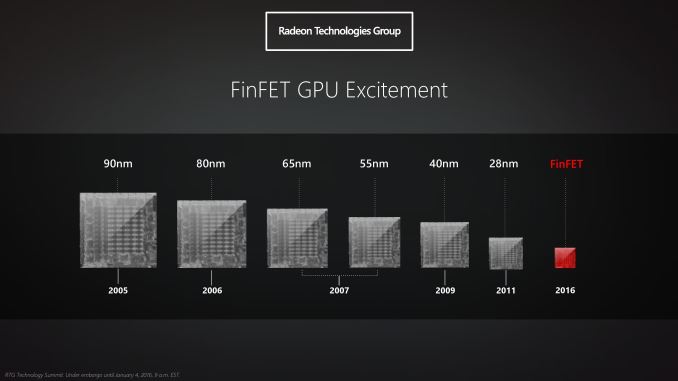

As RTG’s slide concisely and correctly notes, the regular march of progress in semiconductor fabrication has quickly tapered off over the last decade. What was once a yearly cadence of new manufacturing processes – a major new node every 2 years with a smaller step in the intermediate years – became a new process just once every two years. And even then, after the 20nm planar process proved unsuitable for GPUs due to leakage, we are now into our fifth year of 28nm planar as the leading manufacturing node for GPUs. The failure of 20nm has essentially stalled GPU manufacturing improvements, and in RTG’s case resulted in GPUs being canceled and features delayed to accommodate the unexpected stall at 28nm.

For their most recent generation of products both RTG and NVIDIA took steps to improve their architectural efficiency due to the lack of a new manufacturing process – with NVIDIA having more success at this than RTG – but ultimately both parties were held back from what they originally were planning back around 2010. So to say that the forthcoming move to FinFET for new GPUs is a welcome change is an understatement; after nearly half a decade of 28nm GPUs we finally will see the kind of true generational improvements that can only come from a new manufacturing node.

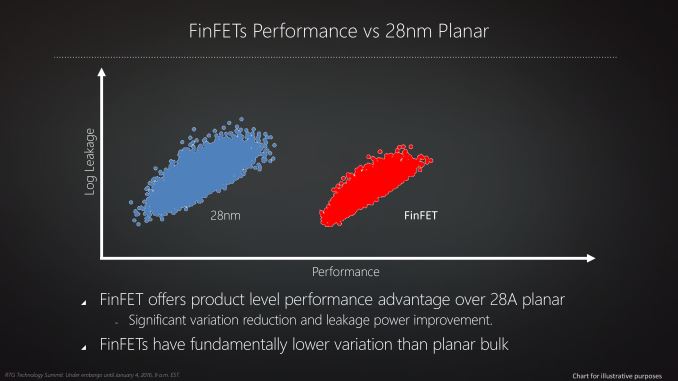

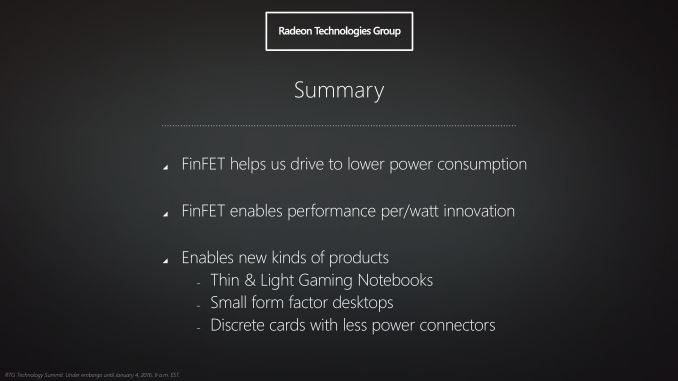

To no surprise then, RTG is aggressively targeting FinFET with Polaris and promoting the benefits thereof. With power efficiency essentially being the limiting factor to GPU performance these days, the greatest gains can only be reached by improving overall power efficiency, and for RTG FinFETs will be a big part of getting there. Polaris will be the first RTG architecture designed for FinFETs, and coupled with the architecture improvements discussed earlier, it should result in the largest overall increase in performance per watt for any Radeon GPU family.

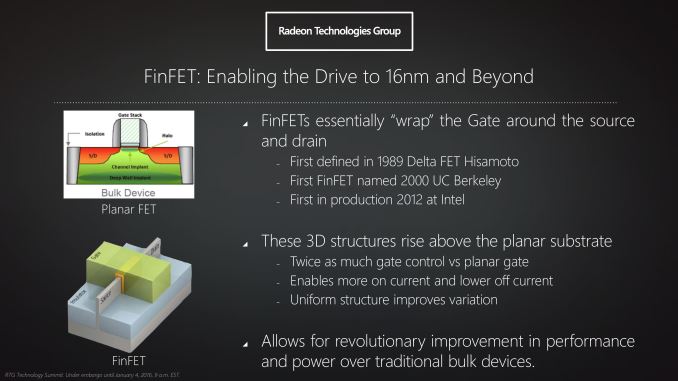

We’ve already covered the technical aspects of FinFET a number of times before, so I’m not going to go into too much depth here. But at the most basic level, FinFETs are the solution to the leakage problems that have made planar transistors impractical below 28nm (and ultimately killed 20nm for GPUs). By using multiple fins to essentially make a transistor 3D, it becomes possible to control leakage in a manner not possible with planar transistors, and that in turn will significantly improve energy efficiency by reducing the amount of energy a GPU wastes just to be turned on.
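To make the leakage point concrete, here is a minimal sketch using the standard first-order model of chip power: dynamic switching power plus static leakage power. The function name and every number below are hypothetical and chosen only for illustration; none of them come from RTG or from any real GPU.

```python
def total_power_w(c_eff_f, voltage_v, freq_hz, leakage_a):
    """First-order chip power: dynamic switching power plus static leakage power."""
    dynamic_w = c_eff_f * voltage_v ** 2 * freq_hz  # P_dyn = C * V^2 * f
    static_w = voltage_v * leakage_a                # P_leak = V * I_leak
    return dynamic_w + static_w


# Hypothetical figures: identical switching behavior, only the leakage current differs.
planar_w = total_power_w(c_eff_f=100e-9, voltage_v=1.0, freq_hz=1e9, leakage_a=40.0)
finfet_w = total_power_w(c_eff_f=100e-9, voltage_v=1.0, freq_hz=1e9, leakage_a=10.0)

print(f"planar-style leakage: {planar_w:.0f} W")
print(f"FinFET-style leakage: {finfet_w:.0f} W")
```

In this toy setup the switching work is the same in both cases, so the lower total for the second chip comes entirely from leakage, which is the energy spent "just to be turned on."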

With the introduction of FinFET manufacturing processes, GPU manufacturing can essentially get back on track after the issues at 20nm. FinFETs will be used for generations to come, and while the initial efficiency gain from adding FinFETs will likely be the single greatest gain, the technology solves the previous leakage problem and gives foundries a route to 10nm and beyond. At the same time, however, as far as the Polaris GPUs are concerned, it should be noted that the current generation of 16nm/14nm FinFET processes is not too far removed from 20nm with FinFETs. Which is to say that the move to FinFETs gets GPU manufacturing back on track, but it won’t make up for lost time. 14nm/16nm FinFET is essentially only one generation beyond 28nm by historical performance standards, and the gains we're expecting from the move to FinFET should be framed accordingly.
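As a rough illustration of why the node names can mislead, the sketch below counts how many "full node" steps a name change would imply if each step shrank linear dimensions by about 0.7x. That 0.7x factor is a common rule of thumb assumed here, not a figure from RTG, and the helper name is hypothetical.

```python
import math

# Assumption: a traditional full node shrinks linear dimensions by roughly 0.7x.
FULL_NODE_LINEAR_SCALE = 0.7


def nominal_node_steps(from_nm, to_nm, scale=FULL_NODE_LINEAR_SCALE):
    """Number of full-node steps implied by the node names alone."""
    return math.log(from_nm / to_nm) / math.log(1.0 / scale)


steps_28_to_20 = nominal_node_steps(28, 20)
steps_28_to_14 = nominal_node_steps(28, 14)

print(f"28nm -> 20nm: about {steps_28_to_20:.1f} nominal steps")
print(f"28nm -> 14nm: about {steps_28_to_14:.1f} nominal steps")
```

Going by the names alone, 14nm looks like roughly two steps past 28nm. The point made above is that in practice the 16nm/14nm FinFET processes sit close to 20nm with FinFETs added, so expectations should be set nearer to a single generation.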

As for RTG’s FinFET manufacturing plans, the fact that RTG only mentions “FinFET” and not a specific FinFET process (e.g. TSMC 16nm) is intentional. The group has confirmed that they will be utilizing both traditional partner TSMC’s 16nm process and AMD fab spin-off (and Samsung licensee) GlobalFoundries’ 14nm process, making this the first time that AMD’s graphics group has used more than a single fab. To be clear here, there’s no expectation that RTG will be dual-sourcing – having both fabs produce the same GPU – but rather the implication is that designs will be split between the two fabs. To that end, we know that the small Polaris GPU that RTG previewed will be produced by GlobalFoundries on their 14nm process. Meanwhile, it remains to be seen how the rest of RTG’s Polaris GPUs will be split between the fabs.

Unfortunately what’s not clear at this time is why RTG is splitting designs like this. Even without dual sourcing any specific GPU, RTG will still incur some extra costs to develop common logic blocks for both fabs. Meanwhile it's also not clear right now whether any single process/fab is better or worse for GPUs, and what die sizes are viable, so until RTG discloses more information about the split order, it's open to speculation what the technical reasons may be. However it should be noted that on the financial side of matters, as AMD continues to execute a wafer share agreement with GlobalFoundries, it’s likely that this split helps AMD to fulfill their wafer obligations by giving GlobalFoundries more of AMD's chip orders.

Closing Thoughts

And with that, we wrap up our initial look at RTG's Polaris architecture and our final article in this series on RTG's 2016 GPU plans. As a high level overview what we've seen so far really only scratches the surface of RTG's plans - and this is very much by design. But as the first occasion of RTG opening up their roadmaps and giving us a bit of a look into the future, it's a welcome change not only for developers, but for the press and public alike.

Backed by the first major node shrink for GPUs in over 4 years, RTG has laid out an aggressive plan for Polaris in 2016. At this point RTG needs to catch up and close the market share gap with NVIDIA - of this RTG is quite aware - and Polaris will be the means to do that. What needs to happen now is for RTG to fully execute on the plans they've laid out, and if they can do so then 2016 should turn out to be an interesting (and competitive) year in the GPU industry.

153 Comments

baii9 - Monday, January 4, 2016

All this efficiency talk, you guys must love Intel.

Sherlock - Tuesday, January 5, 2016

Off topic - Is it technically feasible to have a GPU with only USB 3 ports & other interfaces tunneled through it?

Strom- - Tuesday, January 5, 2016

Not really. The available bandwidth with USB 3.1 Gen 2 is 10 Gbit/s. DisplayPort 1.3 has 25.92 Gbit/s.

daddacool - Tuesday, January 5, 2016

The big question is whether we'll see Radeons with the same/slightly lower power consumption as current high end cards but with significant performance hikes. Getting the same/marginally better performance at a much lower power consumption isn't really that appealing to me :)

BrokenCrayons - Tuesday, January 5, 2016

Identical performance at reduced heat output would be appealing to me. I'd be a lot more interested in discrete graphics cards if AMD or NV can produce a GPU for laptops that can be passively cooled alongside a passively cooled CPU. If that doesn't happen soon, I'd rather continue using Intel's processor graphics and make do with whatever they're capable of handling. Computers with cooling fans aren't something I'm interested in dealing with.

TheinsanegamerN - Tuesday, January 5, 2016

Almost all laptops have fans. Only the Core M series can go fanless, and gaming on them is painful at best.

TheinsanegamerN - Tuesday, January 5, 2016

And AMD or NV making a 2-3 watt GPU would perform no better. Physically, it's impossible to make a decent GPU in a fanless laptop.

BrokenCrayons - Wednesday, January 6, 2016

The Celeron N3050 in the refreshed HP Stream 11 & 13 doesn't require a cooling fan. Under heavy gaming demands, the one I own only gets slightly warm to the touch. Based on my experiences with the Core M, I do think it's a decent processor but highly overpriced and simply not deserving of a purchase in light of Cherry Trail processors' 16 EU IGP being a very good performer. I do lament the idea of manufacturers capping system memory at 2GB on a single channel and storage at 32GB in the $200 price bracket since, at this point, it's unreasonable to penalize performance that way and force the system to burn up flash storage life by swapping. At least the storage problem is easy to fix with SD or a tiny plug-and-forget USB stick that doesn't protrude much from the case.

I think that a ~2 watt AMD GPU could easily be added to such a laptop to relieve the system's RAM of the responsibility of supporting the IGP and be placed somewhere in the chassis where it could work fine with just a copper heat plate without thermally overloading the design. Something like that would make a fantastic gaming laptop and I'd happily part with $300 to purchase one.

If that doesn't come to fruition soon, I think I'd rather just shift my gaming over to an Android. A good no contract phone can be had for about $30 and really only needs a bluetooth keyboard and controller pad to become a gaming platform. Plus you can make the occasional call with it if you want. I admit that it makes buying a laptop for gaming a hard sell with the only advantage being an 11 versus 4 inch screen. But I've traveled a lot with just a Blackberry for entertainment and later a 3.2 inch Android and it worked pretty well for a week or two in a hotel to keep me busy after finishing on-site. I have kicked around the idea of a 6 inch Kindle Fire though too....either way, AMD is staring down a lot of good options for gaming so it needs to get to work bringing wattage way down in order to compete with gaming platforms that can fit in a pocket.

Nagorak - Wednesday, January 6, 2016

Well, you're clearly not much of a gamer, since what the Intel IGP's are capable of is practically nothing.

I will agree that the space heaters we currently have in laptops are kind of annoying, but I don't see that improving any time soon.

doggface - Wednesday, January 6, 2016

I don't know about fanless. But if nVidia or AMD could get a meaningful CPU + GPU package in under ~30 watts tdp - that would be very interesting. I can see an ultrabook with a small fan @ 1080p/medium being a great buy for rocket league/lol fans.