The AMD A8-7670K APU Review: Aiming for Rocket League

by Ian Cutress on November 18, 2015 8:00 AM EST

The Testing

A number of factors about the A8-7670K processor suggest that this is "another release of the same sort of stuff," albeit with increased frequencies. Nevertheless, we put the processor through our regular tests, to see what would happen. Our bench suite this time had one omission and one addition. For whatever reason, Linux Bench refused to run, with Ubuntu 14.04 throwing a hissy fit and not willing to start. I’m not sure if this was a BIOS issue or something more fundamental with the software stack, but it was odd. The addition, as the title of the review alluded to, is a Rocket League benchmark. At this time, we haven’t run it on many systems, but the A8-7670K is the sort of APU that enables games like Rocket League. Rocket League is a good contender for our 2016 CPU/APU benchmark suite on the integrated graphics side of things, and this serves as a good tester in the wild.

All of our regular benchmark results can also be found in our benchmark engine, Bench. Rocket League will be added in the future with the 2016 updates.

Test Setup

| Test Setup | |
|---|---|
| Processor | AMD A8-7670K: 2 Modules, 4 Threads, 3.6 GHz (3.9 GHz Turbo); R7 Integrated Graphics, 384 SPs at 756 MHz |
| Motherboards | MSI A88X-G45 Gaming |
| Cooling | Cooler Master Nepton 140XL |
| Power Supply | OCZ 1250W Gold ZX Series; Corsair AX1200i Platinum PSU |
| Memory | G.Skill 2x8 GB DDR3-2133 1.5V |
| Memory Settings | JEDEC |
| Video Cards | ASUS GTX 980 Strix 4GB; MSI GTX 770 Lightning 2GB (1150/1202 Boost); ASUS R7 240 2GB |
| Hard Drive | Crucial MX200 1TB |
| Optical Drive | LG GH22NS50 |
| Case | Open Test Bed |
| Operating System | Windows 7 64-bit SP1 |

Many thanks to...

We must thank the following companies for kindly providing hardware for our test bed:

Thank you to AMD for providing us with the R9 290X 4GB GPUs.

Thank you to ASUS for providing us with GTX 980 Strix GPUs and the R7 240 DDR3 GPU.

Thank you to ASRock and ASUS for providing us with some IO testing kit.

Thank you to Cooler Master for providing us with Nepton 140XL CLCs.

Thank you to Corsair for providing us with an AX1200i PSU.

Thank you to Crucial for providing us with MX200 SSDs.

Thank you to G.Skill and Corsair for providing us with memory.

Thank you to MSI for providing us with the GTX 770 Lightning GPUs.

Thank you to OCZ for providing us with PSUs.

Thank you to Rosewill for providing us with PSUs and RK-9100 keyboards.

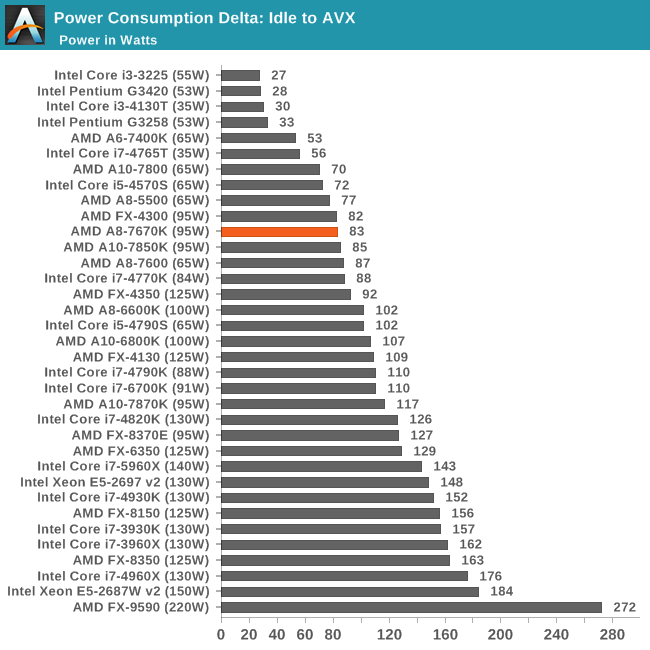

Load Delta Power Consumption

Power consumption was tested on the system while in a single GTX 770 configuration with a wall meter connected to the OCZ 1250W power supply. This power supply is Gold rated, and as I am in the U.K. on a 230-240 V supply, that leads to ~75% efficiency at greater than 50W, and 90%+ efficiency at 250W, suitable for both idle and multi-GPU loading. This method of power reading allows us to compare the power management of the UEFI and the board to supply components with power under load, and includes typical PSU losses due to efficiency.

The TDP for the A8-7670K is up at 95W, similar to many other AMD processors. However, at load, ours drew only an additional 83W, giving some headroom.
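As a rough illustration of how the wall-side figure relates to the TDP, the sketch below scales the 83W load delta by the two PSU efficiency figures quoted above. This is a back-of-the-envelope estimate only: the function name is hypothetical, applying a single efficiency to a delta between two wall readings is a simplification, and the delta also covers VRM losses and other components that ramp up under load, not just the APU.

```python
def dc_side_estimate(wall_delta_w: float, efficiency: float) -> float:
    """Rough power delivered after PSU losses for a given wall-side delta.

    Assumption: one efficiency value applies to the whole delta, which is
    a simplification of a real PSU efficiency curve.
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return wall_delta_w * efficiency


WALL_DELTA_W = 83.0  # load-minus-idle delta measured at the wall (from the review)
TDP_W = 95.0         # rated TDP of the A8-7670K

# Bracket the estimate with the two efficiency figures quoted for this PSU.
for eff in (0.75, 0.90):
    estimate = dc_side_estimate(WALL_DELTA_W, eff)
    print(f"at {eff:.0%} efficiency: ~{estimate:.1f} W delivered, "
          f"{TDP_W - estimate:.1f} W below TDP")
```

Under either assumption the estimate stays below the 95W rating, which is consistent with the headroom noted above.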

AMD A8-7670K Overclocking

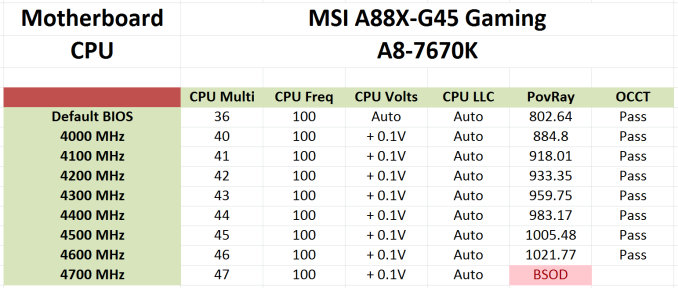

For this review, we even tried our hand at overclocking on the MSI A88X-G45 Gaming motherboard and managed to get 4.6 GHz stable.

Methodology

Our standard overclocking methodology is as follows. We select the automatic overclock options and test for stability with POV-Ray and OCCT to simulate high-end workloads. These stability tests aim to catch any immediate causes for memory or CPU errors.

For manual overclocks, based on the information gathered from previous testing, we start off at a nominal voltage and CPU multiplier, and the multiplier is increased until the stability tests fail. The CPU voltage is then increased gradually until the stability tests pass, and the process is repeated until the motherboard reduces the multiplier automatically (due to safety protocol) or the CPU temperature reaches a stupidly high level (100 °C+, or 212 °F+). Our test bed is not in a case, which should push overclocks higher with fresher (cooler) air.
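The manual procedure can be sketched as a simple search loop. The code below is a minimal sketch, not the tooling used for the review: `is_stable` and `temperature_c` are hypothetical callbacks standing in for the POV-Ray/OCCT runs and a temperature readout, the voltage cap stands in for the point where the board or the tester gives up, and the toy model at the bottom uses invented numbers purely to exercise the loop.

```python
from typing import Callable, Optional, Tuple


def find_max_overclock(
    is_stable: Callable[[int, int], bool],     # (multiplier, millivolts) -> passed tests?
    temperature_c: Callable[[int, int], int],  # (multiplier, millivolts) -> load temp
    start_multiplier: int,
    start_mv: int,
    step_mv: int = 25,      # assumed voltage increment
    max_mv: int = 1550,     # assumed safety cap, not a figure from the review
    max_temp_c: int = 100,  # the 100 C limit described in the methodology
) -> Optional[Tuple[int, int]]:
    """Return the last (multiplier, millivolts) pair that passed, or None."""
    best = None
    multiplier, mv = start_multiplier, start_mv
    while True:
        # Raise the voltage gradually until this multiplier passes.
        while not is_stable(multiplier, mv):
            mv += step_mv
            if mv > max_mv:
                return best
        # Stop once the thermal limit is reached.
        if temperature_c(multiplier, mv) >= max_temp_c:
            return best
        best = (multiplier, mv)
        multiplier += 1  # try the next multiplier at the current voltage


# Toy model with invented numbers: each multiplier step needs 50 mV more,
# and temperature rises 1 C for every 5 mV above 1200 mV.
def toy_stable(multiplier: int, mv: int) -> bool:
    return mv >= 1200 + 50 * (multiplier - 40)


def toy_temp(multiplier: int, mv: int) -> int:
    return 40 + (mv - 1200) // 5


print(find_max_overclock(toy_stable, toy_temp, start_multiplier=40, start_mv=1200))
```

Working in integer millivolts avoids floating-point drift when the voltage is stepped repeatedly.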

Overclock Results

MSI’s motherboard doesn’t allow fixed voltages to be set but prefers to rely on an offset system only. There is a problem here that we are also fighting a DVFS implementation, which will automatically raise the voltage when an overclock is applied, with an end result of stacking the overclock voltage offset on top of the DVFS voltage boost. On our cooling system, the processor passed quite easily up to 4.6 GHz without much issue, but 4.7 GHz produced an instant blue screen when a rendering workload was applied. Hitting 4.6 GHz on a midrange AMD processor is quite good, indicating our sample is some nice silicon, but your mileage might vary.
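To make the stacking problem concrete, here is a small sketch of how an offset-only scheme adds to an automatic DVFS boost. All three voltage values are invented for illustration and are not measurements from this board or processor; the function name is likewise hypothetical.

```python
def effective_vcore_mv(base_mv: int, dvfs_boost_mv: int, user_offset_mv: int) -> int:
    """Voltage the CPU ends up with when an offset stacks on a DVFS boost."""
    return base_mv + dvfs_boost_mv + user_offset_mv


# Illustrative values only (assumptions, not readings from the review).
BASE_MV = 1300        # stock voltage at the default multiplier
DVFS_BOOST_MV = 75    # extra voltage DVFS adds once an overclock is applied
USER_OFFSET_MV = 100  # offset dialed in by the user

intended = BASE_MV + USER_OFFSET_MV
actual = effective_vcore_mv(BASE_MV, DVFS_BOOST_MV, USER_OFFSET_MV)
print(f"intended {intended} mV, actual {actual} mV, "
      f"overshoot {actual - intended} mV")
```

The overshoot equals the DVFS boost, which is why an offset chosen without accounting for it lands higher than intended.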

154 Comments


Ian Cutress - Wednesday, November 18, 2015 - link

It's a 95W desktop part. It's not geared for laptops or NUCs. There are 65W desktop parts with TDP Down modes to 45W, and lower than that is the AM1 platform for socketed. Carrizo at 15W/35W is for soldered designs such as laptops and NUC-like devices.

Vesperan - Wednesday, November 18, 2015 - link

Apologies if I missed it - but what speed was the memory running at for the APUs?

The table near the start just said 'JEDEC' and linked to the G-skill/Corsair websites. This is important given these things are bandwidth constrained - the difference between 1600mhz and 2133mhz can be significant (over 20 percent).

tipoo - Wednesday, November 18, 2015 - link

2133mhz, page 2

Ian Cutress - Wednesday, November 18, 2015 - link

We typically run the CPUs at their maximum supported memory frequency (which is usually quoted as JEDEC specs with respect to sub-timings). So the table on the front page for AMD processors is relevant, and our previous reviews on Intel parts (usually DDR3-1600 C11 or DDR4-2133 C15) will state those.

A number of people disagree with this approach ('but it runs at 2666!' or 'no-one runs JEDEC!'). For most enthusiasts, that may be true. But next time you're at a BYOC LAN, go see how many people are buying high speed memory but not implementing XMP. You may be surprised - people just putting parts together and assuming they just work.

Also, consider that the CPU manufacturers would put the maximum supported frequency up if they felt that it should be validated at that speed. It's a question of silicon, yields, and DRAM markets. Companies like Kingston and Micron still sell masses of DDR3-1600. Other customers just care about the density of the memory, not the speed. It's an odd system, and by using max-at-JEDEC it keeps it fair between Intel, AMD or others: if a manufacturer wants a better result, they should release a part with a higher supported frequency.

I don't think we've done a DRAM scaling review on Kaveri or Kaveri Refresh, which is perhaps an oversight on my part. Our initial samples had issues with high speed memory - maybe I should put this one from 1600 up to 2666 if it will do it.

Oxford Guy - Wednesday, November 18, 2015 - link

Since you always overclock the processor, it makes little sense to hold back an APU with slow RAM.

Oxford Guy - Wednesday, November 18, 2015 - link

It's not just the bandwidth, either (like 2666) but the combination of that and latency. My FX runs faster in Aida benches, for the most part, at CAS 9-11-10-1T 2133 (DDR3) than at 2400, probably due to limitations of the board (which is rated for 20000). Don't just focus on high clocks.

Oxford Guy - Wednesday, November 18, 2015 - link

rated for 2000

Ian Cutress - Thursday, November 19, 2015 - link

Off the bat, that's a false equivalence - we only overclocked in this review to see how far it would go, not for the general benchmark set.

But to reiterate a variation on what I've already said to you before:

For DDR3, if I was to run AMD at 2666 and Intel at 1600, people would complain. If I was to run both at DDR3-2133, AMD users would complain because I'm comparing overclocked DRAM perf to stock perf.

Most users/SIs don't overclock - that's the reality.

If AMD or Intel wanted better performance, they'd rate the DRAM controller for higher and offer multiple SKUs.

They do it with CPUs all the time through binning and what you can actually buy.

e.g. 6700k and 6600k - they don't sell a 6600k at 2133 and 6600k at 2400 for example.

This is why we test out of the box for our main benchmark results.

If they did do separate SKUs with different memory controller specifications, we would update the dataset accordingly with both sets, or the most popular/important set at any rate.

Besides, anyone following CPU reviews at AT will know your opinion on the matter, you've made that abundantly clear in other reviews. We clearly disagree. But if you want to run the AIDA synthetics on your overclocked system, great - it totally translates into noticeable real-world performance gains for sure.

Vesperan - Thursday, November 19, 2015 - link

Thanks Ian - I missed that when quickly going through the story this morning prior to work. Yet somehow picked out the JEDEC bit!

I like the approach you've outlined, it makes sense to me. So - for what it's worth, you have support of at least one irrelevant person on the internet!

From what I saw from a few websites (Phoronix springs to mind) the gains from memory scaling decline rapidly after 2133mhz.

CaedenV - Wednesday, November 18, 2015 - link

I just don't understand the argument for buying AMD these days. Computers are not things you replace every 3-5 years anymore. In the post Core2 world systems last at least a good 7-10 years of usefulness, where simple updates of SSDs and GPUs can keep systems up to date and 'good enough' for all but the most pressing workloads. People need to stop sweating about how much the up-front cost of a system is, and start looking at what tier it performs at, and finding a way to get their budget to stretch to that level.

I don't mean starting with a $500 build and stretching your wallet (or worse, your credit card) to purchase a $1200 system. I'm not some elitist rich guy; I understand the need to stick to a budget. But the difference between AMD and Intel in price is not very much, while the Intel chip is going to run cooler, quieter, and faster. Spending the extra $50 for the Intel chip and compatible motherboard is not going to break the bank.

Because let's face it; pretty much everyone is going to fall in one of 2 camps.

1) you are not going to game much at all, and the integrated Intel graphics, while not stellar, are going to be 'good enough' to run solitaire, phone game ports, 4K video, and a few other things. In this case the system price is going to be essentially the same, the video performance is going to be more than adequate, and the i3 is going to knock the socks off of the A8 5+ years down the road.

2) You actually do play 'real' games on a regular basis, and the integrated A8 graphics are going to be a bonus to you for the first 2-6 months while you save up for a dGPU anyways... in which case the video performance is going to be nearly identical between the i3 and A8, while the i3 is going to be much more responsive in your day-to-day browsing, work, and media consumption. Or, you are going to find that you outgrow what an i3 or A8 can do, and you end up building a much faster i5 or i7 based system... in which case the i3 will either retain its resale value better, or will make a much better foundation for a home server, non-gaming HTPC, or some other use.

I really want to love AMD, but after cost of ownership and longevity of the system is taken into consideration, they just do not make sense to purchase even in the budget category. The only place where AMD makes sense is if you absolutely have to have the GPU horsepower, but cannot have a dGPU in the system for some reason. And even in that case, the bump up to an A10 is going to be well worth the extra few $$. There is almost no use in getting anything slower than an A10 on the AMD side.

But then again, AMD is working hard these days to reinvent themselves. Maybe 2 years from now this will all turn around and AMD will have more worthwhile products on the market that are useful for something.