Windows 10 Feature Focus: .NET Native

by Brett Howse on October 2, 2015 9:00 AM EST. Posted in: Software, Windows, Microsoft, Windows 10

Programming languages seem to be cyclical. Low level languages give developers the chance to have very fast code with the minimum of commands necessary, but the closer you code to hardware the more difficult it becomes, and the developer should have a good grasp of the hardware in order to get the best performance. In theory, everything could be written in assembly language but that has some limitations. Programming languages, over time, have been abstracted from the hardware they run on which gives advantages to developers in that they do not have to micro-manage their code, and the code itself can be compiled against different architectures.

In the end, the processor just executes machine code, and the job of moving from a developer’s mind to machine code can be done in several ways. Two of the most common are Ahead-of-Time (AOT) and Just-in-Time (JIT) compilation. Each has its own advantages, but AOT can yield better performance because the code is not being translated on the fly while the app runs. For desktops, this has not necessarily been a big issue since they generally have sufficient processing power anyway, but in the mobile space processors are much more limited in resources, especially power.
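To make the difference concrete, here is a toy sketch in Python. It is not how any real compiler or the .NET runtime works: the "intermediate code" is just a string expression, and `translate`, `build_aot`, and `JitRuntime` are hypothetical names invented for this illustration. It only shows where the translation work happens: all up front for AOT, or on first call while the program runs for JIT.

```python
# Toy model: each "function" is stored as a source expression over x.
PROGRAM = {"double": "x * 2", "square": "x * x", "negate": "-x"}

def translate(source, counter):
    """Pretend compilation step; counts how often translation happens."""
    counter["translations"] += 1
    code = compile(source, "<toy-il>", "eval")
    return lambda x: eval(code, {}, {"x": x})

def build_aot(program, counter):
    """AOT: translate every function before the program ever runs."""
    return {name: translate(src, counter) for name, src in program.items()}

class JitRuntime:
    """JIT: translate a function the first time it is called, then cache it."""
    def __init__(self, program, counter):
        self.program = program
        self.counter = counter
        self.cache = {}

    def call(self, name, x):
        if name not in self.cache:
            self.cache[name] = translate(self.program[name], self.counter)
        return self.cache[name](x)

# AOT: all translation cost is paid at build time, none at run time.
aot_build = {"translations": 0}
native = build_aot(PROGRAM, aot_build)
aot_results = [native["double"](21), native["square"](5), native["double"](4)]

# JIT: translation happens during execution, once per function actually used.
jit_run = {"translations": 0}
jit = JitRuntime(PROGRAM, jit_run)
jit_results = [jit.call("double", 21), jit.call("square", 5), jit.call("double", 4)]

print(aot_results, aot_build["translations"])
print(jit_results, jit_run["translations"])
```

Both approaches compute the same results. The AOT build translates all three functions ahead of time, while the JIT runtime translates only the two that are called, but does so while the program is running, which is the cost that matters on a power-limited device.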

We’ve seen Android moving to AOT with ART just last year, and the actual compilation of the code is done on the device after the app is downloaded from the store. With Windows, apps can be written in more than just one language, and apps in the Windows Store can be written in C++, which is compiled AOT, as well as C# which runs as JIT code using Microsoft’s .NET framework, or even HTML5, CSS, and JavaScript.

With .NET Native, Microsoft is now allowing C# code to be pre-compiled to native code, eliminating the need to fire up the majority of the .NET framework and runtime, which saves time at app launch. Visual Studio will now be able to do the compile to native code, but Microsoft is implementing quite a different system than Google has done with Android’s ART. Rather than having the developer do the compilation and upload to the cloud, and rather than have the code be compiled on the device, Microsoft will be doing the compilation themselves once the app is uploaded to the store. This will allow them to use their very well-known C++ compiler, and any tweaks they make to the compiler going forward will be able to be applied to all .NET Native apps in the store rather than having to get the developer to recompile. Microsoft's method is actually very similar to Apple's latest changes as well, since they will also be recompiling on their end if they make changes to their compiler.

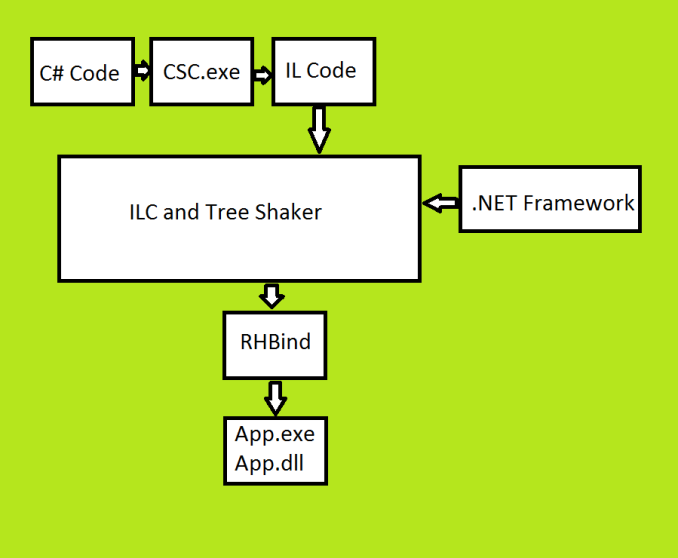

They have added some pretty interesting functionality to their toolkit to enable .NET Native. Let’s take a look at the overview.

Starting with C# code, this is compiled into IL code using the same compiler that they use for any C# code running on the .NET framework as JIT code. The resulting Intermediate Language code is actually the exact same result as someone would get if they wanted to run the code as JIT. If you were not going to compile to native, you would stop right here.

To move this IL code to native code, next the code is run through an Intermediate Language Compiler (ILC). Unlike a JIT compiler, which runs on the fly when the code is running and only sees a tiny portion of the code at a time, the ILC can see the entire program and can therefore make larger optimizations than the JIT compiler would be able to. ILC also has access to the .NET framework to add in the necessary code for standard functions. The ILC will actually create C# code for any WinRT calls made, to avoid the framework having to be invoked during execution. That C# code is then fed back into the toolchain as part of the overall project so that all of these calls can be static calls for native code. The ILC then does any transforms on the C# code that are required; C# code can rely on the framework for certain functions, so these need to be transformed since the framework will not be invoked once the app is compiled as native. The resulting output from the ILC is one file which contains all of the code, all of the optimizations, and any of the .NET framework necessary to run this app. Next, the single monolithic output is put through a Tree Shaker, which looks at the entire program, determines what code is being used and what is not, and expunges code that is never going to be called.
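The tree shaking idea can be sketched as a reachability walk over a call graph. The Python below is a minimal illustration under stated assumptions, not Microsoft's implementation: the function names in `call_graph` are made up, the graph is given by hand rather than extracted from IL, and a real tool would also have to deal with code that is only reached dynamically (through reflection, for example).

```python
from collections import deque

def shake(call_graph, roots):
    """Return the set of functions reachable from the entry points."""
    reachable = set()
    queue = deque(roots)
    while queue:
        name = queue.popleft()
        if name in reachable:
            continue
        reachable.add(name)
        # Follow every call this function makes.
        queue.extend(call_graph.get(name, ()))
    return reachable

# Hypothetical merged program: app code and framework code in one graph,
# mirroring the single monolithic output described above.
call_graph = {
    "App.Main": ["App.LoadScores", "Framework.Console.WriteLine"],
    "App.LoadScores": ["Framework.File.ReadAllText"],
    "App.UnusedHelper": ["Framework.Xml.Parse"],
    "Framework.Console.WriteLine": [],
    "Framework.File.ReadAllText": [],
    "Framework.Xml.Parse": [],
}

kept = shake(call_graph, ["App.Main"])
removed = sorted(set(call_graph) - kept)

print(sorted(kept))
print(removed)
```

Because the walk starts from the entry point and sees the whole program at once, `App.UnusedHelper` and the framework code only it depends on are dropped together. This is the kind of whole-program decision a JIT compiler, seeing one method at a time, is not in a position to make.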

The resultant output from ILC.exe is Machine Dependent Intermediate Language (MDIL), which is very close to native code but with a light abstraction on top of it. MDIL contains placeholders which must be replaced, by a process called binding, before the code can be run.

The binder, called rhbind.exe, changes the MDIL into a final set of outputs which results in a .exe file and a .dll file. This final output is run as native code, but it still relies on a minimal runtime to be active which contains the garbage collector.
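As a rough mental model of binding, think of it as filling in symbolic placeholders with concrete values once the final layout is known. The Python sketch below is purely illustrative: the instruction tuples, the `$name` placeholder syntax, the `layout` table, and the `bind` function are all invented for this example and do not reflect the real MDIL format or how rhbind.exe works internally.

```python
def bind(mdil_like, layout):
    """Replace symbolic placeholders with concrete values from the layout."""
    bound = []
    for op, operand in mdil_like:
        if isinstance(operand, str) and operand.startswith("$"):
            key = operand[1:]
            if key not in layout:
                # Code with an unresolved placeholder cannot be run.
                raise KeyError(f"unresolved placeholder: {operand}")
            operand = layout[key]
        bound.append((op, operand))
    return bound

# Hypothetical almost-native code: field offsets and call targets are
# still symbolic.
code = [
    ("load_field", "$Player.score"),
    ("add", 10),
    ("store_field", "$Player.score"),
    ("call", "$Player.Save"),
]

# Hypothetical final layout decided at bind time.
layout = {"Player.score": 8, "Player.Save": 0x4010}

bound = bind(code, layout)
print(bound)
```

After binding, every operand is a concrete number, so nothing is left to resolve when the code runs.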

The process might seem pretty complicated, with a lot of steps, but there is a method to the madness. By keeping the developer’s code as an IL and treating it just like it would be non-native code, the debugging process is much faster since the IDE can run the code in a virtual machine with JIT and avoid having to recompile all of the code to do any debugging.

The final compile will be done in the cloud for any apps going to the store, but the tools will be available locally as well to assist with testing the app before it gets deployed to ensure there are no unseen consequences of moving to native code.

All of this work is done for really one reason: performance. Microsoft’s numbers for .NET Native show up to 60% quicker cold startup times for apps, and 40% quicker launch with a warm startup. Memory usage will also be lower since the operating system will not need to keep the runtime active at the same time. All of this is important for any system, but especially for low powered tablets and phones.

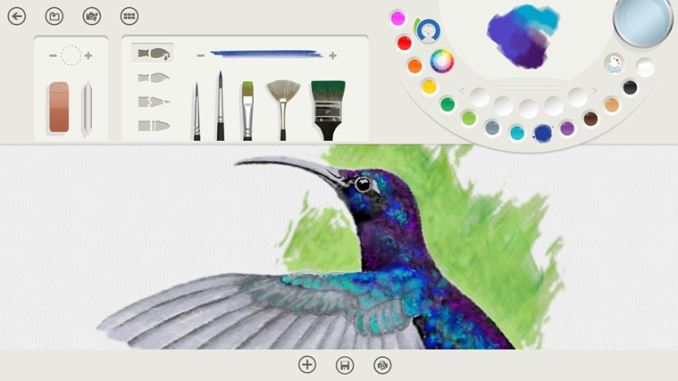

Microsoft has been testing .NET Native with two of their own apps. Both Wordament and Fresh Paint have been running as native code on Windows 8.1. This is the case for both the ARM and x86 versions of the apps, and the compilation in the cloud ensures that the downloading device gets the correct executable for its platform.

.NET Native has been officially released with Visual Studio 2015, and is available for use with the Universal Windows App platform and therefore apps in the store. At this time, it is not available for desktop apps, although that is certainly something that could come with a future update. It’s not surprising though, since they really want to move to the new app platform anyway.

There is a lot more under the covers to move from managed code to native code, but this is a high level overview of the work that has been done to provide developers with a method to get better performance without having to move away from C#.

Source: MSDN

30 Comments

Jaybus - Wednesday, October 7, 2015 - link

Well, that depends upon the programmer. I think you mean that [some] compiled languages have been as fast as hand written assembler for some time now. It is not possible to beat optimal assembly code.

whatacrock - Tuesday, October 6, 2015 - link

This is pure crap. Nobody cares about Microsoft's stupid store or Windows Phone. This will probably never be in desktop applications, which is the only thing people want. Microsoft would rather vainly attempt to capture a market they'll never have than keep the one they've already got.

MrSpadge - Thursday, October 8, 2015 - link

Yeah, nobody wants apps which are available and similar on all their devices and which take care of updating themselves. And the worst: on Win 10 they simply look and feel like other programs! How shall people know what to hate with all that unification?

Guspaz - Tuesday, October 6, 2015 - link

This is a pretty terrible decision. If your code may be compiled to native machine language, but you don't have any way of running that code yourself (because it's only generated when you send it to Microsoft), developers no longer have any way of accurately profiling their application, and most developers (who don't use the Windows Store) won't get to benefit from it.

IanHagen - Tuesday, October 6, 2015 - link

I can't wait for them to update the documentation with the apparent shift in focus from HTML5 to C# regarding "metro" apps that started recently. It's a bloody mess that bizarrely focuses heavily on half-baked JavaScript "universal" apps instead of their in-house C# language. I'd take Cocoa over it any day.

da_asmodai - Wednesday, October 7, 2015 - link

Compiling C# to native code is already available for Desktop apps. It's called NGEN (Native Image Generator) and it's been around since .Net 1.1 (2003?) at least.

asldkfli2n - Wednesday, October 7, 2015 - link

NGEN generates code that still runs on the .net framework (it's just been pre-jitted into native code). .Net Native produces native code that runs on no framework at all (except for a tiny GC that gets baked in).

da_asmodai - Thursday, October 8, 2015 - link

I didn't say they were EXACTLY the same. NGEN is native code though, as it's pre-jitted. So it makes no difference to the user at runtime. If you use NGEN on the install machine (similar to how Google works ART) then you have to have the framework there to do the NGEN anyway, so why would you statically link everything when you're going to need the framework anyway? The framework is pre-jitted as well, so it's all running native code at execution time for the user. They COULD make NGEN statically link, but then what would that solve? The whole point is to distribute platform independent code and then compile to native on any target device. If they linked in everything so no framework was required then they'd have tons of the same code over and over again. They'd have the copy of the IL version they use to NGEN, then they'd have a separate native code copy for each application that used that part of the framework. Instead they have one IL version they use for NGEN and ONE native code version that is shared by all applications that use that part of the framework.

aoshiryaev - Thursday, October 8, 2015 - link

What's a "complier"?

EWJ - Wednesday, November 4, 2015 - link

A complier is a new name for compiler. The core framework underpinning UWP for which .NET native works compiles to memory. There are no binaries at all on disk, only in Release mode. So that's why Microsoft tends to refer to compile as 'syntax check' as of late and hence the compiler can be called complier ... (yes, pun, true)

That said, NGEN creates a patching problem, where NGEN could take an hour or more in the Windows 7 era, e.g. if something that was inlined most of the time was patched. And because the GAC is global you'd have to go over all dependencies of all apps to fix one small issue. With .NET Native each app has its own dependency on a version of a dll, which would at the very least vary with when the app was published, so they won't all be vulnerable at the same time every time. And the whole framework is much more granular by design (NuGet everything), but even if it wasn't, it still has a fairly limited footprint compared to the full .NET Framework.

About Java, people noted it's almost 100% comparable, so it would benefit from this principle in much the same way. I leave it to others to compare versioning and compilation scope - I just don't know. But then Google doesn't seem to patch at all, so there's no argument to be made either for or against 'Java Native' compared to having it compile on install.

I don't really think it is a problem that it is 'under Microsoft control'. Actually I could challenge you to design a new (Windows 10) device with a new ISA for which you do not already have a world class C++ compiler. Then how are you going to create firmware?? But if you have it, why not let Microsoft (re)compile it - would it matter at all? Yes, you'd have to give Microsoft your brand new compiler, but I'd guess you'd want all of the world to have it - or who'd be making apps for your ISA. So Microsoft will produce the same binaries as on your own machine. I don't see how profiling is impacted. Rather, if you didn't have time, you can still submit an app for ARM even if you don't have a device that runs it on your desk.

But when it comes to checking for nasty security issues, malicious apps, and so forth, I'm happy to see Microsoft take responsibility. I have no issue with them using IL code that is easier to analyze than native binaries. Google, on the other hand, neither seems to have a watertight patching ecosystem, nor is it very effective at keeping users safe from malicious apps, seeing the reports about vulnerabilities and exploits of the last few years.

On top of this, expect the whole principle to work on Linux and iOS pretty soon too, all of it compiling from the same sources on Github essentially (although yes true UAP apps are slightly more of an odd beast than a DNX target for which this already holds albeit in beta).