Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

CPU Scaling

When it comes to how well a game scales with the processor, DirectX 12 is something of a mixed bag, for two reasons. On one hand, it allows GPU commands to be issued by each CPU core, removing the single-core performance limit that hindered a number of DX11 titles and helping configurations with fewer cores or lower clock speeds. On the other hand, because it allows every thread in a system to issue commands, it can pile on the work during heavy scenes, moving the cliff edge for high-powered cards further down the line or making the visual effects at the high end very impressive, which is perhaps something benchmarking like this won’t capture.

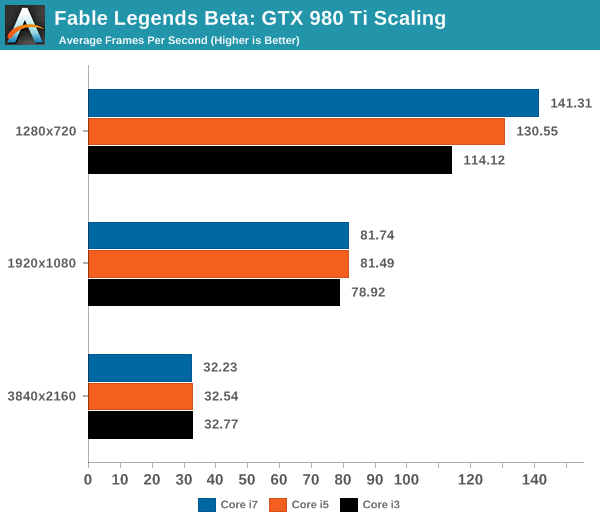

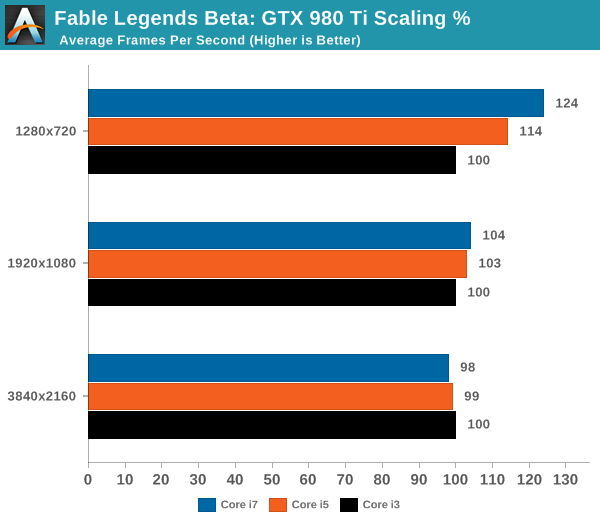

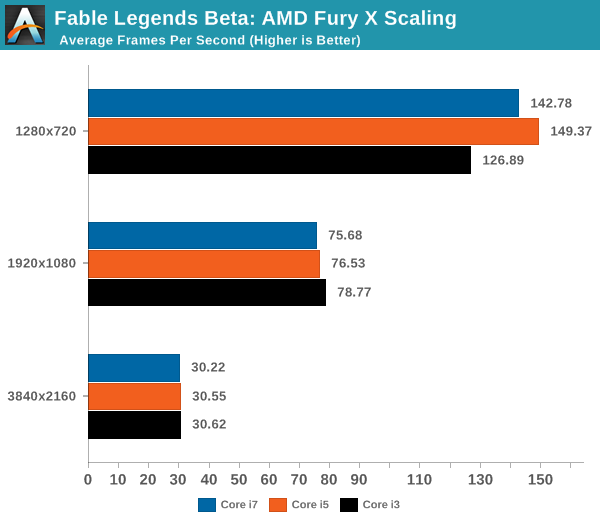

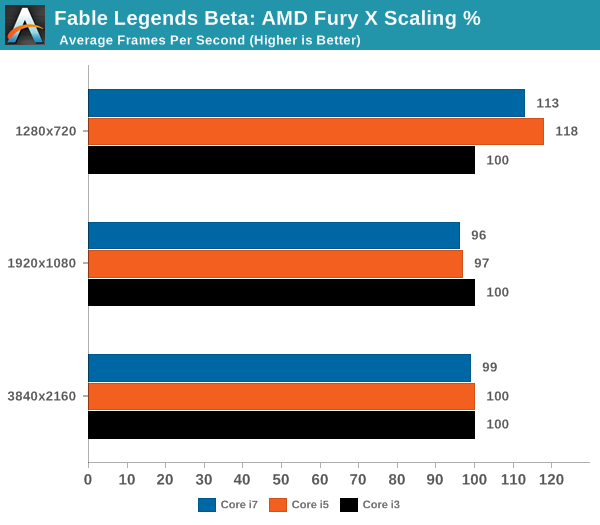

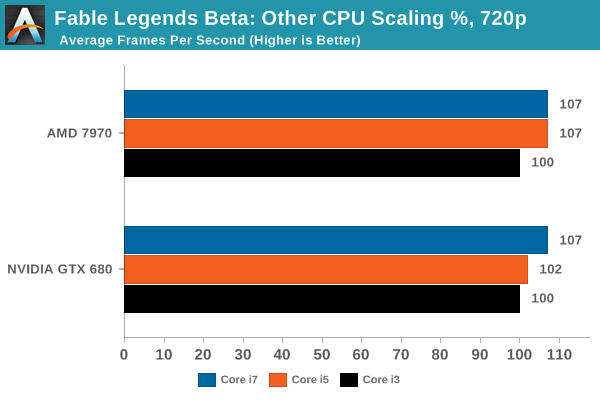

For our CPU scaling tests, we took the two high end cards tested and placed them in each of our Core i7 (6C/12T), Core i5 (4C/4T) and Core i3 (2C/4T) environments, at three different resolution/setting configurations similar to the previous page, and recorded the results.

Looking solely at the GTX 980 Ti to begin, we see that for now the Fable benchmark only scales with the CPU at the low resolution and graphics quality. Moving up to 1080p or 4K gives similar performance no matter what the processor; there is perhaps even a slight decrease at 4K, but this is well within a 2% variation.
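One way to make the "within 2%" judgement concrete is to compare the slowest and fastest CPU results and treat any spread inside a tolerance as run-to-run noise. The sketch below is only an illustration: the function name, the tolerance parameter and the frame rates are all assumptions, and the numbers are placeholders rather than results from our charts.

```python
def scaling_verdict(fps_by_cpu, tolerance=0.02):
    """Classify CPU scaling as 'scales' or 'flat'.

    fps_by_cpu: dict mapping a CPU label to average frames per second.
    tolerance:  relative spread treated as run-to-run noise (2% here).
    Returns the verdict and the measured relative spread.
    """
    slowest = min(fps_by_cpu.values())
    fastest = max(fps_by_cpu.values())
    spread = (fastest - slowest) / slowest
    verdict = "scales" if spread > tolerance else "flat"
    return verdict, spread


# Placeholder numbers for illustration only, NOT measured results.
example_4k = {"Core i3": 40.0, "Core i5": 40.3, "Core i7": 39.8}
verdict, spread = scaling_verdict(example_4k)
print(f"{verdict} ({spread:.1%} spread)")
```

A single tolerance is a blunt instrument; with several runs per configuration, comparing against the observed run-to-run variation would be a better guide.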

On the Fury X, the tale is similar and yet stranger. The Fable benchmark is canned, so it should be running the same data each time – but in all three circumstances the Core i7 trails behind the Core i5. Perhaps in this instance there are too many threads on the processor contending for bandwidth, giving some slight cache pressure (one wonders if some eDRAM might help). But again we see no real scaling improvement moving from Core i3 to Core i7 in our 1920x1080 and 3840x2160 tests.

As we’ve seen in previous reviews, the effects of CPU scaling with regard to resolution depend on both the CPU architecture and the GPU architecture, with each GPU manufacturer performing differently and two different models in the same silicon family also differing in scaling results. To that end, we actually see a boost at 1280x720 with the AMD 7970 and the GTX 680 when moving from the Core i3 to the Core i7.

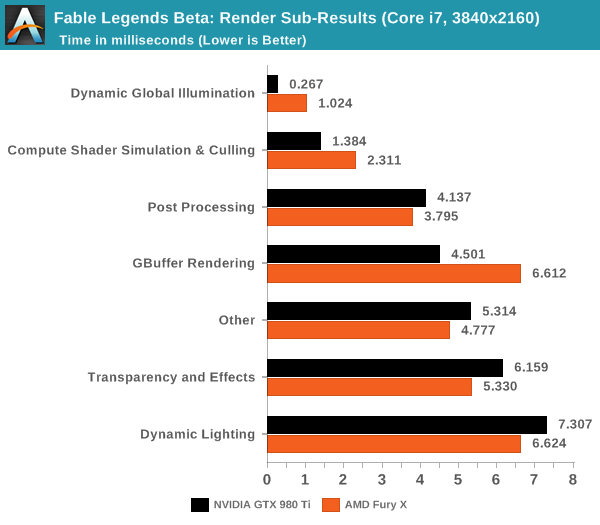

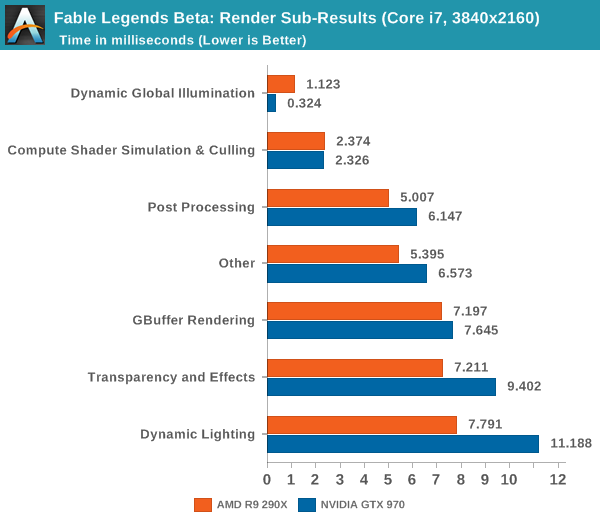

If we look at the rendering time breakdown between GPUs on high end configurations, we get the following data. Numbers here are listed in milliseconds, so lower is better:

Looking at the GTX 980 Ti and Fury X, we see that NVIDIA is significantly faster at GBuffer rendering, Dynamic Global Illumination, and Compute Shader Simulation & Culling. Meanwhile AMD pulls narrower leads in every other category, including the ambiguous 'other'.

Dropping down a couple of tiers to the GTX 970 and R9 290X, we see some minor variations. The R9 290X has good leads in dynamic lighting and 'other', with smaller leads in Compute Shader Simulation & Culling and Post Processing. The GTX 970 benefits significantly on dynamic global illumination.

What do these numbers mean? Overall it appears that NVIDIA has a strong hold on deferred rendering and global illumination and AMD has benefits with dynamic lighting and compute.
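Because the breakdown is given in milliseconds per frame, the per-pass times can be summed into a total frame time and converted to a frame rate, which helps show how much a lead in any one pass matters overall. The following is a minimal sketch under stated assumptions: the helper name is hypothetical, the timings are placeholders rather than values from our charts, and it assumes the passes run back to back with no overlap, which may not hold where work is executed concurrently on the GPU.

```python
def summarize_frame(pass_times_ms):
    """Sum per-pass GPU times (ms) and report each pass's share of the frame.

    Assumes passes execute serially, so the frame time is a plain sum.
    """
    total_ms = sum(pass_times_ms.values())
    shares = {name: ms / total_ms for name, ms in pass_times_ms.items()}
    fps = 1000.0 / total_ms  # one frame takes total_ms milliseconds
    return total_ms, fps, shares


# Placeholder timings for illustration only, NOT measured results.
example = {
    "GBuffer Rendering": 4.0,
    "Dynamic Lighting": 6.0,
    "Dynamic Global Illumination": 5.0,
    "Other": 10.0,
}
total_ms, fps, shares = summarize_frame(example)
print(f"{total_ms:.1f} ms per frame, about {fps:.0f} fps")
for name, share in shares.items():
    print(f"{name}: {share:.0%} of frame time")
```

Seen this way, a large relative lead in a short pass can matter less than a small lead in a long one.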

141 Comments

Nenad - Thursday, September 24, 2015 - link

I suggest using 2560x1440 as one of tested resolution for articles where important part are top cards, since that is currently sweet spot for top end cards like GTX 980Ti or AMD Fury. I know that, on steam survey, that resolution is not nearly represented as 1920x1080, but neither are cards like 980ti and FuryX.

Jtaylor1986 - Thursday, September 24, 2015 - link

The benchmark doesn't support this.

Peichen - Thursday, September 24, 2015 - link

Buy a Fury X if you want to play Fable at 720p. Buy a 980Ti if you want to play 4K. And remember, get a fast Intel CPU if you are playing at 720p otherwise your Fury X won't be able to do 120+fps.

DrKlahn - Thursday, September 24, 2015 - link

The Fury is 2fps less at 4k (though a heavily overclocked 980ti may increase this lead), so I'd say both are pretty comparable at 4K. Fury also scales better at lower resolutions with lower end CPU's so I'm not sure what point you're trying to make. Although to be fair none of the cards tested struggle to maintain playable frame rates at lower resolutions.

Asomething - Thursday, September 24, 2015 - link

Man what a strange world where amd has less driver overhead than the competition.

extide - Thursday, September 24, 2015 - link

Also, remember there are those newer AMD drivers that were not able to be used for this test. I could easily see a new driver gaining 2+ fps to match/beat the 980 ti in this game.

Gigaplex - Thursday, September 24, 2015 - link

If you're buying a card specifically for one game at 720p, you wouldn't be spending top dollar for a Fury X.

Jtaylor1986 - Thursday, September 24, 2015 - link

Kind of suprising you chose to publish this at all. Given how limited the testing options are in the benchmark this release seems a little to much like a pure marketing stunt for my tastes from Lionhead and Microsoft rather than a DX12 showcase. The fact that it doesn't include a DX11 option is a dead giveaway.

piiman - Saturday, September 26, 2015 - link

"The fact that it doesn't include a DX11 option is a dead giveaway." My thought also. What the point of this Benchmark if there are no Dx11 to compare them to?

Gotpaidmuch - Thursday, September 24, 2015 - link

How come GTX 980 was not included in the tests? Did it score that bad?