Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Discussing Percentiles and Minimum Frame Rates

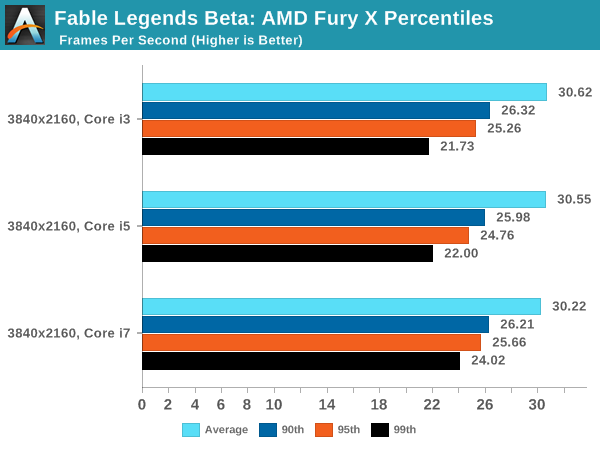

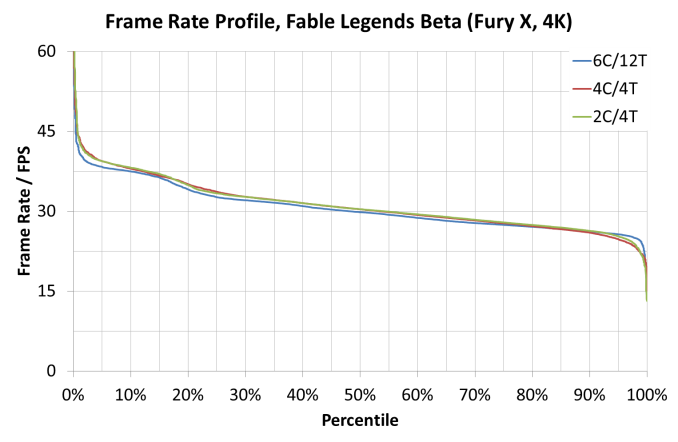

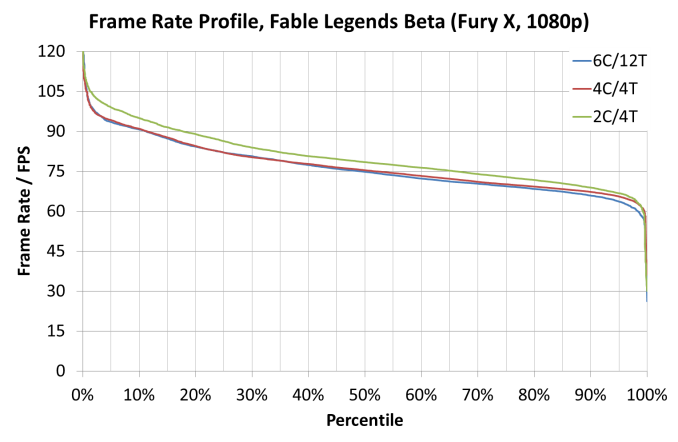

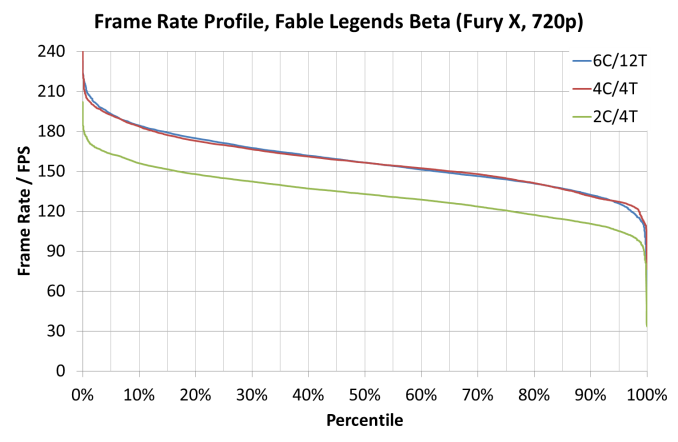

Continuing from the previous page, we performed a similar analysis on AMD's Fury X graphics card. The same rules apply: all three resolution/setting combinations are tested on all three system configurations. Results are given as frame rate profiles across percentiles, and we also pick out the 90th, 95th and 99th percentile values as an indication of minimum frame rates.
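As a rough illustration of how percentile frame rates like these can be derived, the sketch below takes a list of per-frame render times and reports the frame rate at the 90th, 95th and 99th percentiles. The article does not state how its percentiles were computed, so the nearest-rank method used here is an assumption, the function names are hypothetical, and the sample data is made up for illustration rather than taken from the benchmark.

```python
def percentile_nearest_rank(sorted_values, pct):
    """Nearest-rank percentile of an ascending-sorted list (pct is an integer 1..100)."""
    if not sorted_values:
        raise ValueError("need at least one sample")
    n = len(sorted_values)
    # Integer ceiling of pct * n / 100, which avoids floating-point rounding surprises.
    rank = max(1, -(-pct * n // 100))
    return sorted_values[rank - 1]


def percentile_frame_rates(frame_times_ms, percentiles=(90, 95, 99)):
    """Map each percentile to the frame rate (fps) at that percentile of frame time."""
    ordered = sorted(frame_times_ms)
    return {p: 1000.0 / percentile_nearest_rank(ordered, p) for p in percentiles}


# Hypothetical frame times in milliseconds (illustrative only, not measured data):
# 90 fast frames, 8 slower frames and 2 very slow frames.
sample_ms = [10.0] * 90 + [20.0] * 8 + [40.0] * 2
rates = percentile_frame_rates(sample_ms)
for p, fps in rates.items():
    print(f"{p}th percentile: {fps:.1f} fps")
```

Working from frame times rather than from an average frame rate is what lets the slowest few percent of frames show up: in this made-up sample the average is dominated by the fast frames, while the 99th percentile value reflects the two slow ones.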

Moving on to the Fury X at 4K, we see all three processor lineups performing similarly, an indication that we are more GPU limited here. The Core i7 line sits slightly below the others, though, giving slightly lower frame rates in easier scenes but a better frame rate when the going gets tough beyond the 95th percentile.

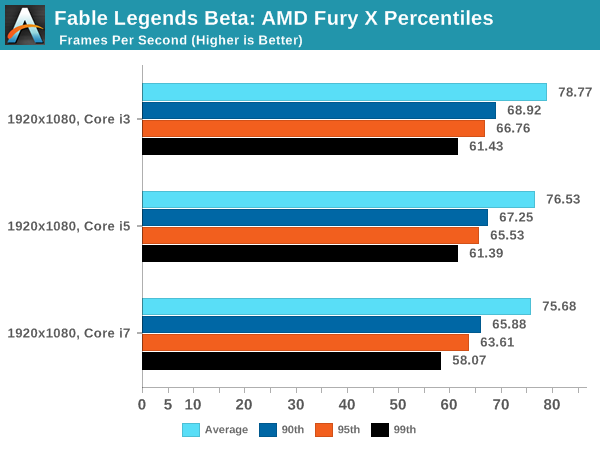

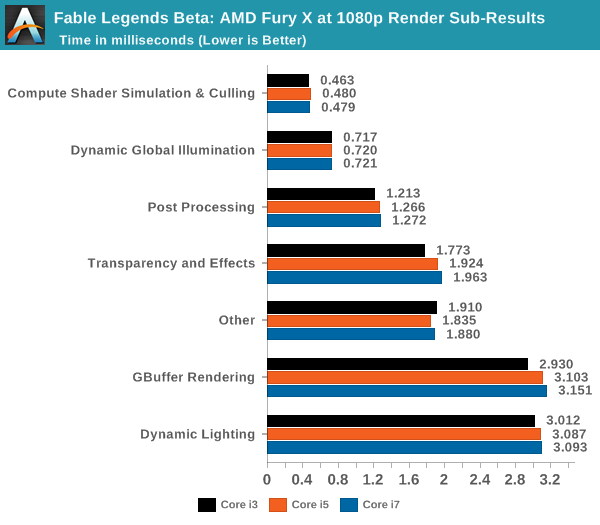

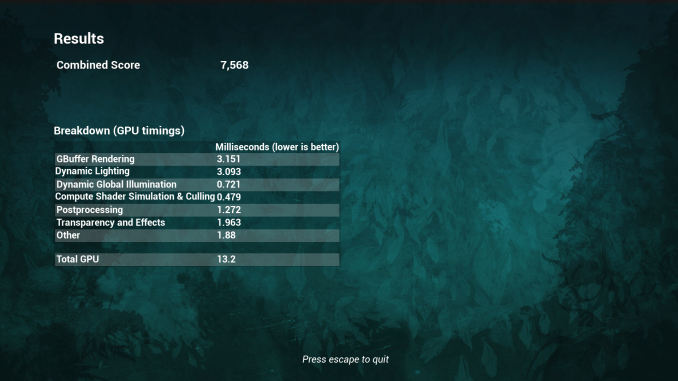

For 1080p, the results take a twist. It almost seems as if we have some form of reverse scaling, whereby more cores do more damage to the results. The in-game benchmark provides a breakdown of the time spent in each stage (given in milliseconds, so lower is better).

Three areas stand out as benefitting from fewer cores: Transparency and Effects, GBuffer Rendering and Dynamic Lighting. All three are related to illumination and how it interacts with its surroundings. One possible explanation springs to mind: with large core counts, too many threads are issuing work to the graphics card, causing thread contention in the cache or giving the thread scheduler a hard time depending on what comes in as high priority.

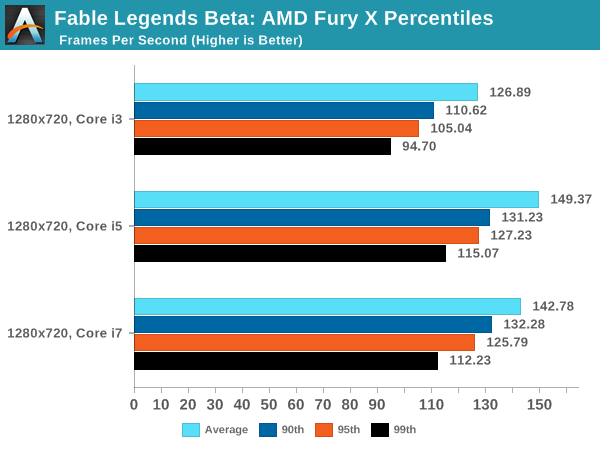

Nevertheless, the situation changes when we move down again to 720p:

Here the Core i3 takes a nose dive as we become CPU limited in pushing out frames.

141 Comments

HotBBQ - Friday, September 25, 2015 - link

Please avoid using green and red together for plots. It's nigh impossible to distinguish if you are red-green colorblind (the most common).

Crunchy005 - Friday, September 25, 2015 - link

So we have a 680, 970, 980ti. Why is there a 980 missing and no 700 series cards from nvidia? The 700s were the original cards to go against things like the 7970, 290, 290x. Would be nice see whether those cards are still relevant, although the lack of them showing in benchmarks says otherwise. Also the 980 missing is a bit concerning.

Daniel Williams - Friday, September 25, 2015 - link

It's mostly time constraints that limit our GPU selection. So many GPU's with not so many hours in the day. In this case Ryan got told about this benchmark just two days before leaving for the Samsung SSD global summit and just had time to bench the cards and hand the numbers to the rest of us for the writeup.

It surely would be great if we had time to test everything. :-)

Oxford Guy - Friday, September 25, 2015 - link

Didn't Ashes come out first?

Daniel Williams - Friday, September 25, 2015 - link

Yes but our schedule didn't work out. We will likely look at it at a later time, closer to launch.

Oxford Guy - Saturday, September 26, 2015 - link

So the benchmark that favors AMD is brushed to the side but this one fits right into the schedule.

This is the sort of stuff that makes people wonder about this site's neutrality.

Brett Howse - Saturday, September 26, 2015 - link

I think you are fishing a bit here. We didn't even have a chance to test Ashes because of when it came out (right at Intel's Developer Forum) so how would we even know it favored AMD? Regardless, now that Daniel is hired on hopefully this will alleviate the backlog on things like this. Unfortunately we are a very small team so we can't test everything we would like to, but that doesn't mean we don't want to test it.

Oxford Guy - Sunday, September 27, 2015 - link

Ashes came out before this benchmark, right? So, how does it make sense that this one was tested first? I guess you'd know by reading the ArsTechnica article that showed up to a 70% increase in performance for the 290X over DX11 as well as much better minimum frame rates.

Ananke - Friday, September 25, 2015 - link

Hmm, this review is kinda pathetic... NVidia has NO async scheduler in the GPU, the scheduler is in the driver, aka it needs CPU cycles. Then, async processors are one per compute cluster instead one per compute unit, i.e. lesser number.

So, once you run a Dx12 game with all AI inside, in a heavy scene it will be CPU constrained and the GPU driver will not have enough resource to schedule, and it will drop performance significantly. Unless, you somehow manage to prioritize the GPU driver, aka have dedicated CPU thread/core for it in the game engine...which is exactly what Dx12 was supposed to avoid - higher level of virtualization. That abstract layer of Dx11 is not there anymore.

So, yeah, NVidia is great in geometry calculations, it's always been, this review confirms it again.

The_Countess - Friday, September 25, 2015 - link

often the fury X GAINS FPS as the CPU speed goes down from i7 to i5 and i3.

3 FPS gained in the ultra benchmark going from the i7 to the i3, and 7 in the low benchmark between the i7 and the i5.